+## Overview

+### Introduction

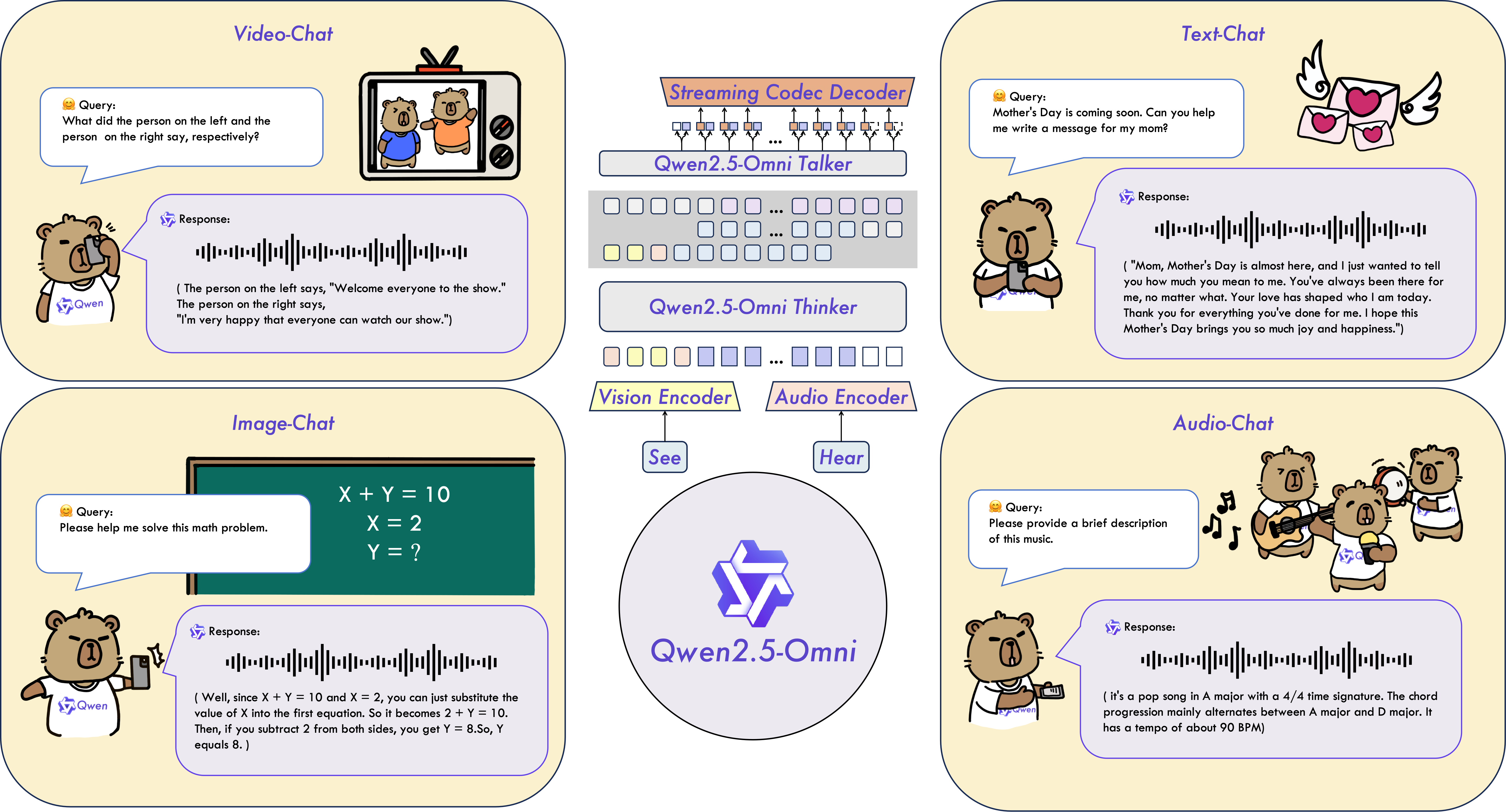

+Qwen2.5-Omni is an end-to-end multimodal model designed to perceive diverse modalities, including text, images, audio, and video, while simultaneously generating text and natural speech responses in a streaming manner.


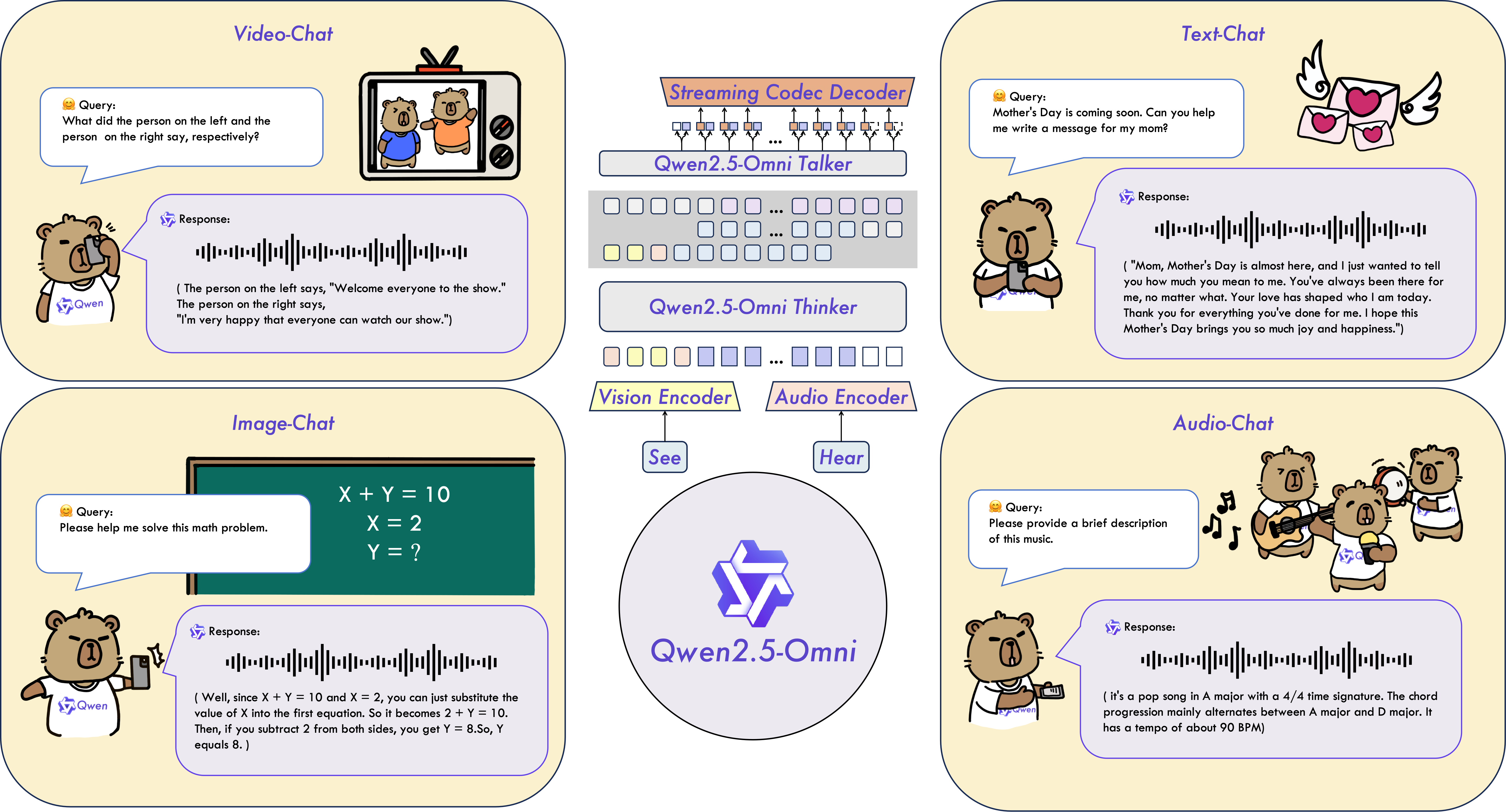

+### Key Features
+
+* **Omni and Novel Architecture**: We propose the Thinker-Talker architecture, an end-to-end multimodal design that perceives diverse modalities, including text, images, audio, and video, while simultaneously generating text and natural speech responses in a streaming manner. We also propose a novel position embedding, TMRoPE (Time-aligned Multimodal RoPE), to synchronize the timestamps of video inputs with audio.
+
+* **Real-Time Voice and Video Chat**: The architecture is designed for fully real-time interactions, supporting chunked input and immediate output.
+
+* **Natural and Robust Speech Generation**: Qwen2.5-Omni surpasses many existing streaming and non-streaming alternatives, demonstrating superior robustness and naturalness in speech generation.
+
+* **Strong Performance Across Modalities**: Qwen2.5-Omni exhibits exceptional performance across all modalities when benchmarked against similarly sized single-modality models. It outperforms the similarly sized Qwen2-Audio in audio capabilities and achieves performance comparable to Qwen2.5-VL-7B.
+
+* **Excellent End-to-End Speech Instruction Following**: Qwen2.5-Omni shows performance in end-to-end speech instruction following that rivals its effectiveness with text inputs, as evidenced by benchmarks such as MMLU and GSM8K.
+
+### Model Architecture
+

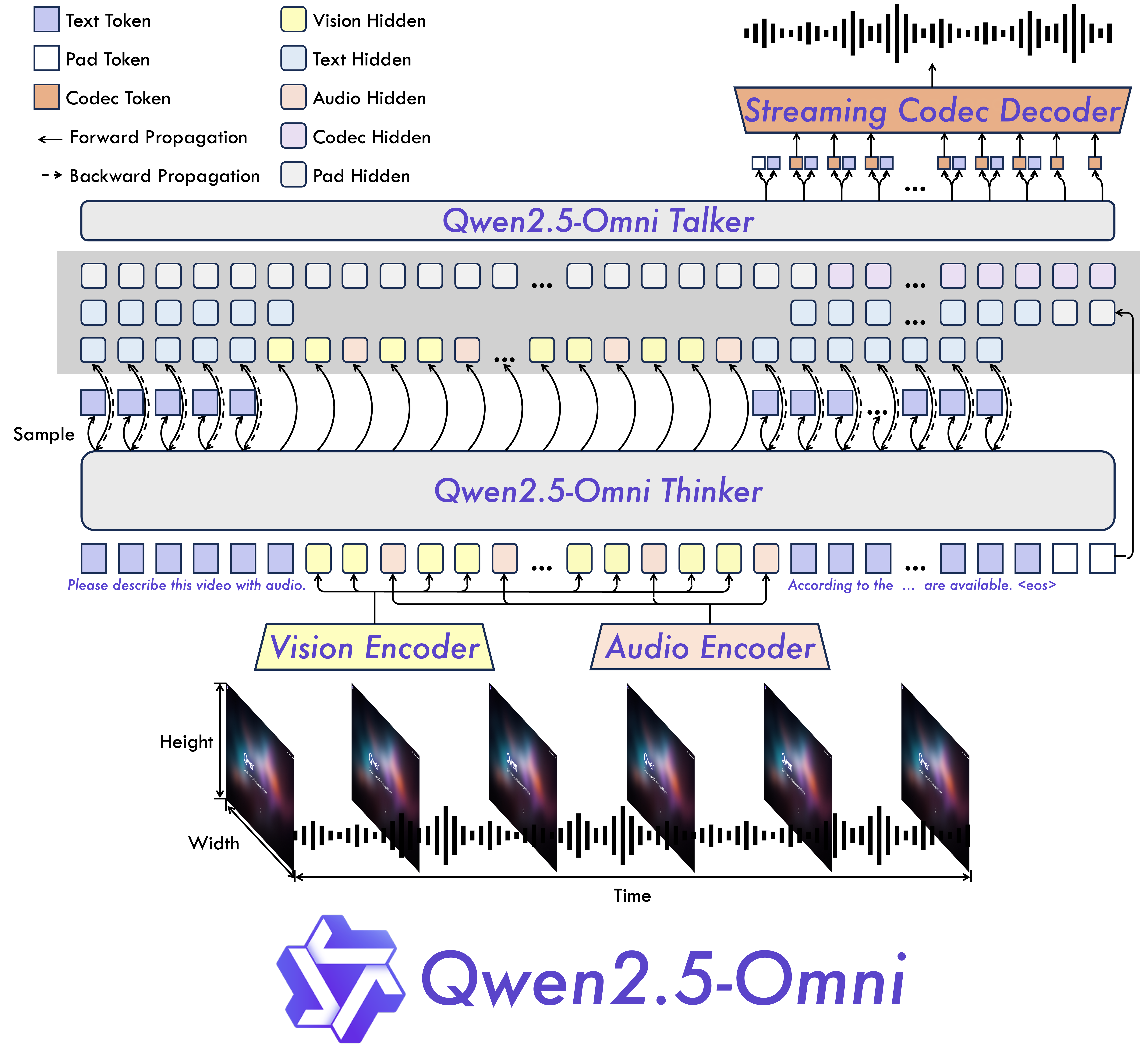

+### Performance
+
+We conducted a comprehensive evaluation of Qwen2.5-Omni, which demonstrates strong performance across all modalities when compared to similarly sized single-modality models and closed-source models like Qwen2.5-VL-7B, Qwen2-Audio, and Gemini-1.5-pro. In tasks requiring the integration of multiple modalities, such as OmniBench, Qwen2.5-Omni achieves state-of-the-art performance. Furthermore, in single-modality tasks, it excels in areas including speech recognition (Common Voice), translation (CoVoST2), audio understanding (MMAU), image reasoning (MMMU, MMStar), video understanding (MVBench), and speech generation (Seed-tts-eval and subjective naturalness).
+

+Multimodality -> Text

+

+

+

+

+

+

+| Dataset | Model | Speech | Sound Event | Music | Avg |
+|---|---|---|---|---|---|
+| OmniBench | Gemini-1.5-Pro | 42.67% | 42.26% | 46.23% | 42.91% |
+| OmniBench | MIO-Instruct | 36.96% | 33.58% | 11.32% | 33.80% |
+| OmniBench | AnyGPT (7B) | 17.77% | 20.75% | 13.21% | 18.04% |
+| OmniBench | video-SALMONN | 34.11% | 31.70% | 56.60% | 35.64% |
+| OmniBench | UnifiedIO2-xlarge | 39.56% | 36.98% | 29.25% | 38.00% |
+| OmniBench | UnifiedIO2-xxlarge | 34.24% | 36.98% | 24.53% | 33.98% |
+| OmniBench | MiniCPM-o | - | - | - | 40.50% |
+| OmniBench | Baichuan-Omni-1.5 | - | - | - | 42.90% |
+| OmniBench | Qwen2.5-Omni-3B | 52.14% | 52.08% | 52.83% | 52.19% |
+| OmniBench | Qwen2.5-Omni-7B | 55.25% | 60.00% | 52.83% | 56.13% |
+

+ Audio -> Text

+

+

+

+

+

+

+

+ASR: Librispeech
+
+| Model | dev-clean | dev-other | test-clean | test-other |
+|---|---|---|---|---|
+| SALMONN | - | - | 2.1 | 4.9 |
+| SpeechVerse | - | - | 2.1 | 4.4 |
+| Whisper-large-v3 | - | - | 1.8 | 3.6 |
+| Llama-3-8B | - | - | - | 3.4 |
+| Llama-3-70B | - | - | - | 3.1 |
+| Seed-ASR-Multilingual | - | - | 1.6 | 2.8 |
+| MiniCPM-o | - | - | 1.7 | - |
+| MinMo | - | - | 1.7 | 3.9 |
+| Qwen-Audio | 1.8 | 4.0 | 2.0 | 4.2 |
+| Qwen2-Audio | 1.3 | 3.4 | 1.6 | 3.6 |
+| Qwen2.5-Omni-3B | 2.0 | 4.1 | 2.2 | 4.5 |
+| Qwen2.5-Omni-7B | 1.6 | 3.5 | 1.8 | 3.4 |
+
+ASR: Common Voice 15
+
+| Model | en | zh | yue | fr |
+|---|---|---|---|---|
+| Whisper-large-v3 | 9.3 | 12.8 | 10.9 | 10.8 |
+| MinMo | 7.9 | 6.3 | 6.4 | 8.5 |
+| Qwen2-Audio | 8.6 | 6.9 | 5.9 | 9.6 |
+| Qwen2.5-Omni-3B | 9.1 | 6.0 | 11.6 | 9.6 |
+| Qwen2.5-Omni-7B | 7.6 | 5.2 | 7.3 | 7.5 |
+
+ASR: Fleurs
+
+| Model | zh | en |
+|---|---|---|
+| Whisper-large-v3 | 7.7 | 4.1 |
+| Seed-ASR-Multilingual | - | 3.4 |
+| Megrez-3B-Omni | 10.8 | - |
+| MiniCPM-o | 4.4 | - |
+| MinMo | 3.0 | 3.8 |
+| Qwen2-Audio | 7.5 | - |
+| Qwen2.5-Omni-3B | 3.2 | 5.4 |
+| Qwen2.5-Omni-7B | 3.0 | 4.1 |
+
+ASR: Wenetspeech
+
+| Model | test-net | test-meeting |
+|---|---|---|
+| Seed-ASR-Chinese | 4.7 | 5.7 |
+| Megrez-3B-Omni | - | 16.4 |
+| MiniCPM-o | 6.9 | - |
+| MinMo | 6.8 | 7.4 |
+| Qwen2.5-Omni-3B | 6.3 | 8.1 |
+| Qwen2.5-Omni-7B | 5.9 | 7.7 |
+
+ASR: Voxpopuli-V1.0-en
+
+| Model | Performance |
+|---|---|
+| Llama-3-8B | 6.2 |
+| Llama-3-70B | 5.7 |
+| Qwen2.5-Omni-3B | 6.6 |
+| Qwen2.5-Omni-7B | 5.8 |
+

+

+

+S2TT: CoVoST2
+
+| Model | en-de | de-en | en-zh | zh-en |
+|---|---|---|---|---|
+| SALMONN | 18.6 | - | 33.1 | - |
+| SpeechLLaMA | - | 27.1 | - | 12.3 |
+| BLSP | 14.1 | - | - | - |
+| MiniCPM-o | - | - | 48.2 | 27.2 |
+| MinMo | - | 39.9 | 46.7 | 26.0 |
+| Qwen-Audio | 25.1 | 33.9 | 41.5 | 15.7 |
+| Qwen2-Audio | 29.9 | 35.2 | 45.2 | 24.4 |
+| Qwen2.5-Omni-3B | 28.3 | 38.1 | 41.4 | 26.6 |
+| Qwen2.5-Omni-7B | 30.2 | 37.7 | 41.4 | 29.4 |
+

+

+

+SER: Meld
+
+| Model | Performance |
+|---|---|
+| WavLM-large | 0.542 |
+| MiniCPM-o | 0.524 |
+| Qwen-Audio | 0.557 |
+| Qwen2-Audio | 0.553 |
+| Qwen2.5-Omni-3B | 0.558 |
+| Qwen2.5-Omni-7B | 0.570 |
+

+

+

+VSC: VocalSound
+
+| Model | Performance |
+|---|---|
+| CLAP | 0.495 |
+| Pengi | 0.604 |
+| Qwen-Audio | 0.929 |
+| Qwen2-Audio | 0.939 |
+| Qwen2.5-Omni-3B | 0.936 |
+| Qwen2.5-Omni-7B | 0.939 |
+

+

+

+Music: GiantSteps Tempo
+
+| Model | Performance |
+|---|---|
+| Llark-7B | 0.86 |
+| Qwen2.5-Omni-3B | 0.88 |
+| Qwen2.5-Omni-7B | 0.88 |
+
+Music: MusicCaps
+
+| Model | Performance |
+|---|---|
+| LP-MusicCaps | 0.291 / 0.149 / 0.089 / 0.061 / 0.129 / 0.130 |
+| Qwen2.5-Omni-3B | 0.325 / 0.163 / 0.093 / 0.057 / 0.132 / 0.229 |
+| Qwen2.5-Omni-7B | 0.328 / 0.162 / 0.090 / 0.055 / 0.127 / 0.225 |
+

+

+

+Audio Reasoning: MMAU
+
+| Model | Sound | Music | Speech | Avg |
+|---|---|---|---|---|
+| Gemini-Pro-V1.5 | 56.75 | 49.40 | 58.55 | 54.90 |
+| Qwen2-Audio | 54.95 | 50.98 | 42.04 | 49.20 |
+| Qwen2.5-Omni-3B | 70.27 | 60.48 | 59.16 | 63.30 |
+| Qwen2.5-Omni-7B | 67.87 | 69.16 | 59.76 | 65.60 |
+

+

+

+Voice Chatting: VoiceBench
+
+| Model | AlpacaEval | CommonEval | SD-QA | MMSU | OpenBookQA | IFEval | AdvBench | Avg |
+|---|---|---|---|---|---|---|---|---|
+| Ultravox-v0.4.1-LLaMA-3.1-8B | 4.55 | 3.90 | 53.35 | 47.17 | 65.27 | 66.88 | 98.46 | 71.45 |
+| MERaLiON | 4.50 | 3.77 | 55.06 | 34.95 | 27.23 | 62.93 | 94.81 | 62.91 |
+| Megrez-3B-Omni | 3.50 | 2.95 | 25.95 | 27.03 | 28.35 | 25.71 | 87.69 | 46.25 |
+| Lyra-Base | 3.85 | 3.50 | 38.25 | 49.74 | 72.75 | 36.28 | 59.62 | 57.66 |
+| MiniCPM-o | 4.42 | 4.15 | 50.72 | 54.78 | 78.02 | 49.25 | 97.69 | 71.69 |
+| Baichuan-Omni-1.5 | 4.50 | 4.05 | 43.40 | 57.25 | 74.51 | 54.54 | 97.31 | 71.14 |
+| Qwen2-Audio | 3.74 | 3.43 | 35.71 | 35.72 | 49.45 | 26.33 | 96.73 | 55.35 |
+| Qwen2.5-Omni-3B | 4.32 | 4.00 | 49.37 | 50.23 | 74.73 | 42.10 | 98.85 | 68.81 |
+| Qwen2.5-Omni-7B | 4.49 | 3.93 | 55.71 | 61.32 | 81.10 | 52.87 | 99.42 | 74.12 |
+

+ Image -> Text

+

+| Dataset | Qwen2.5-Omni-7B | Qwen2.5-Omni-3B | Other Best | Qwen2.5-VL-7B | GPT-4o-mini |
+|---|---|---|---|---|---|
+| MMMU (val) | 59.2 | 53.1 | 53.9 | 58.6 | **60.0** |
+| MMMU-Pro (overall) | 36.6 | 29.7 | - | **38.3** | 37.6 |
+| MathVista (testmini) | 67.9 | 59.4 | **71.9** | 68.2 | 52.5 |
+| MathVision (full) | 25.0 | 20.8 | 23.1 | **25.1** | - |
+| MMBench-V1.1-EN (test) | 81.8 | 77.8 | 80.5 | **82.6** | 76.0 |
+| MMVet (turbo) | 66.8 | 62.1 | **67.5** | 67.1 | 66.9 |
+| MMStar | **64.0** | 55.7 | **64.0** | 63.9 | 54.8 |
+| MME (sum) | 2340 | 2117 | **2372** | 2347 | 2003 |
+| MuirBench | 59.2 | 48.0 | - | **59.2** | - |
+| CRPE (relation) | **76.5** | 73.7 | - | 76.4 | - |
+| RealWorldQA (avg) | 70.3 | 62.6 | **71.9** | 68.5 | - |
+| MME-RealWorld (en) | **61.6** | 55.6 | - | 57.4 | - |
+| MM-MT-Bench | 6.0 | 5.0 | - | **6.3** | - |
+| AI2D | 83.2 | 79.5 | **85.8** | 83.9 | - |
+| TextVQA (val) | 84.4 | 79.8 | 83.2 | **84.9** | - |
+| DocVQA (test) | 95.2 | 93.3 | 93.5 | **95.7** | - |
+| ChartQA (test Avg) | 85.3 | 82.8 | 84.9 | **87.3** | - |
+| OCRBench_V2 (en) | **57.8** | 51.7 | - | 56.3 | - |

+

+

+| Dataset | Qwen2.5-Omni-7B | Qwen2.5-Omni-3B | Qwen2.5-VL-7B | Grounding DINO | Gemini 1.5 Pro |
+|---|---|---|---|---|---|
+| Refcoco (val) | 90.5 | 88.7 | 90.0 | **90.6** | 73.2 |
+| Refcoco (testA) | **93.5** | 91.8 | 92.5 | 93.2 | 72.9 |
+| Refcoco (testB) | 86.6 | 84.0 | 85.4 | **88.2** | 74.6 |
+| Refcoco+ (val) | 85.4 | 81.1 | 84.2 | **88.2** | 62.5 |
+| Refcoco+ (testA) | **91.0** | 87.5 | 89.1 | 89.0 | 63.9 |
+| Refcoco+ (testB) | **79.3** | 73.2 | 76.9 | 75.9 | 65.0 |
+| Refcocog+ (val) | **87.4** | 85.0 | 87.2 | 86.1 | 75.2 |
+| Refcocog+ (test) | **87.9** | 85.1 | 87.2 | 87.0 | 76.2 |
+| ODinW | 42.4 | 39.2 | 37.3 | **55.0** | 36.7 |
+| PointGrounding | 66.5 | 46.2 | **67.3** | - | - |

+Video(without audio) -> Text

+

+| Dataset | Qwen2.5-Omni-7B | Qwen2.5-Omni-3B | Other Best | Qwen2.5-VL-7B | GPT-4o-mini |
+|---|---|---|---|---|---|
+| Video-MME (w/o sub) | 64.3 | 62.0 | 63.9 | **65.1** | 64.8 |
+| Video-MME (w sub) | **72.4** | 68.6 | 67.9 | 71.6 | - |
+| MVBench | **70.3** | 68.7 | 67.2 | 69.6 | - |
+| EgoSchema (test) | **68.6** | 61.4 | 63.2 | 65.0 | - |

+Zero-shot Speech Generation

+

+

+

+

+

+

+

+Content Consistency: SEED
+
+| Model | test-zh | test-en | test-hard |
+|---|---|---|---|
+| Seed-TTS_ICL | 1.11 | 2.24 | 7.58 |
+| Seed-TTS_RL | 1.00 | 1.94 | 6.42 |
+| MaskGCT | 2.27 | 2.62 | 10.27 |
+| E2_TTS | 1.97 | 2.19 | - |
+| F5-TTS | 1.56 | 1.83 | 8.67 |
+| CosyVoice 2 | 1.45 | 2.57 | 6.83 |
+| CosyVoice 2-S | 1.45 | 2.38 | 8.08 |
+| Qwen2.5-Omni-3B_ICL | 1.95 | 2.87 | 9.92 |
+| Qwen2.5-Omni-3B_RL | 1.58 | 2.51 | 7.86 |
+| Qwen2.5-Omni-7B_ICL | 1.70 | 2.72 | 7.97 |
+| Qwen2.5-Omni-7B_RL | 1.42 | 2.32 | 6.54 |
+
+Speaker Similarity: SEED
+
+| Model | test-zh | test-en | test-hard |
+|---|---|---|---|
+| Seed-TTS_ICL | 0.796 | 0.762 | 0.776 |
+| Seed-TTS_RL | 0.801 | 0.766 | 0.782 |
+| MaskGCT | 0.774 | 0.714 | 0.748 |
+| E2_TTS | 0.730 | 0.710 | - |
+| F5-TTS | 0.741 | 0.647 | 0.713 |
+| CosyVoice 2 | 0.748 | 0.652 | 0.724 |
+| CosyVoice 2-S | 0.753 | 0.654 | 0.732 |
+| Qwen2.5-Omni-3B_ICL | 0.741 | 0.635 | 0.748 |
+| Qwen2.5-Omni-3B_RL | 0.744 | 0.635 | 0.746 |
+| Qwen2.5-Omni-7B_ICL | 0.752 | 0.632 | 0.747 |
+| Qwen2.5-Omni-7B_RL | 0.754 | 0.641 | 0.752 |
+

+ Text -> Text

+

+| Dataset | Qwen2.5-Omni-7B | Qwen2.5-Omni-3B | Qwen2.5-7B | Qwen2.5-3B | Qwen2-7B | Llama3.1-8B | Gemma2-9B |
+|---|---|---|---|---|---|---|---|
+| MMLU-Pro | 47.0 | 40.4 | **56.3** | 43.7 | 44.1 | 48.3 | 52.1 |
+| MMLU-redux | 71.0 | 60.9 | **75.4** | 64.4 | 67.3 | 67.2 | 72.8 |
+| LiveBench (0831) | 29.6 | 22.3 | **35.9** | 26.8 | 29.2 | 26.7 | 30.6 |
+| GPQA | 30.8 | 34.3 | **36.4** | 30.3 | 34.3 | 32.8 | 32.8 |
+| MATH | 71.5 | 63.6 | **75.5** | 65.9 | 52.9 | 51.9 | 44.3 |
+| GSM8K | 88.7 | 82.6 | **91.6** | 86.7 | 85.7 | 84.5 | 76.7 |
+| HumanEval | 78.7 | 70.7 | **84.8** | 74.4 | 79.9 | 72.6 | 68.9 |
+| MBPP | 73.2 | 70.4 | **79.2** | 72.7 | 67.2 | 69.6 | 74.9 |
+| MultiPL-E | 65.8 | 57.6 | **70.4** | 60.2 | 59.1 | 50.7 | 53.4 |
+| LiveCodeBench (2305-2409) | 24.6 | 16.5 | **28.7** | 19.9 | 23.9 | 8.3 | 18.9 |

+Minimum GPU memory requirements

+

+| Model | Precision | 15 s Video | 30 s Video | 60 s Video |
+|---|---|---|---|---|
+| Qwen-Omni-3B | FP32 | 89.10 GB | Not Recommended | Not Recommended |
+| Qwen-Omni-3B | BF16 | 18.38 GB | 22.43 GB | 28.22 GB |
+| Qwen-Omni-7B | FP32 | 93.56 GB | Not Recommended | Not Recommended |
+| Qwen-Omni-7B | BF16 | 31.11 GB | 41.85 GB | 60.19 GB |
+
+Note: The table above presents the theoretical minimum memory requirements for inference with `transformers`; the `BF16` figures were tested with `attn_implementation="flash_attention_2"`. In practice, actual memory usage is typically at least 1.2 times higher. For more information, see the linked resource [here](https://huggingface.co/docs/accelerate/main/en/usage_guides/model_size_estimator).
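
The 1.2x rule of thumb from the note can be applied mechanically when choosing a GPU. The sketch below is illustrative only: the helper names `practical_memory_gb` and `fits_on_gpu` and the dictionary layout are assumptions, the figures are copied from the BF16 rows of the table above, and 1.2 is a lower bound on the headroom rather than a measured value.

```python
# Theoretical minimum memory (GB) for BF16 inference, copied from the table above.
# Keys are (model name, video length in seconds).
BF16_MIN_GB = {
    ("Qwen-Omni-3B", 15): 18.38,
    ("Qwen-Omni-3B", 30): 22.43,
    ("Qwen-Omni-3B", 60): 28.22,
    ("Qwen-Omni-7B", 15): 31.11,
    ("Qwen-Omni-7B", 30): 41.85,
    ("Qwen-Omni-7B", 60): 60.19,
}

# "At least 1.2 times higher" per the note; treat this as a lower bound.
HEADROOM = 1.2


def practical_memory_gb(model: str, video_seconds: int, headroom: float = HEADROOM) -> float:
    """Scale the tabulated minimum by the headroom factor (hypothetical helper)."""
    return round(BF16_MIN_GB[(model, video_seconds)] * headroom, 2)


def fits_on_gpu(model: str, video_seconds: int, gpu_gb: float) -> bool:
    """Return True if the scaled estimate does not exceed the available GPU memory."""
    return practical_memory_gb(model, video_seconds) <= gpu_gb


if __name__ == "__main__":
    for model, seconds in sorted(BF16_MIN_GB):
        estimate = practical_memory_gb(model, seconds)
        print(f"{model}, {seconds} s video: plan for at least {estimate} GB")
```

Because the factor is a floor, a configuration that only just passes `fits_on_gpu` may still run out of memory in practice.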

+Video URL resource usage

+

+Video URL compatibility depends largely on the version of the third-party library; the details are in the table below. If you prefer not to use the default backend, set `FORCE_QWENVL_VIDEO_READER=torchvision` or `FORCE_QWENVL_VIDEO_READER=decord`.

+

+| Backend | HTTP | HTTPS |

+|-------------|------|-------|

+| torchvision >= 0.19.0 | ✅ | ✅ |

+| torchvision < 0.19.0 | ❌ | ❌ |

+| decord | ✅ | ❌ |
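
One way to apply this setting from Python is sketched below. It assumes that `FORCE_QWENVL_VIDEO_READER` is read from the process environment by the video-loading utilities, so it should be set before those utilities are imported. The helper name `force_video_reader` is hypothetical and not part of any library.

```python
import os

# Backend names taken from the table above.
SUPPORTED_BACKENDS = ("torchvision", "decord")


def force_video_reader(backend: str) -> str:
    """Set FORCE_QWENVL_VIDEO_READER for the current process (hypothetical helper).

    Assumption: the video utilities read this environment variable, so call
    this before importing them.
    """
    if backend not in SUPPORTED_BACKENDS:
        raise ValueError(f"unsupported backend: {backend!r}")
    os.environ["FORCE_QWENVL_VIDEO_READER"] = backend
    return os.environ["FORCE_QWENVL_VIDEO_READER"]


if __name__ == "__main__":
    # Per the table, torchvision >= 0.19.0 handles both HTTP and HTTPS URLs.
    print(force_video_reader("torchvision"))
```

The same effect can be had by exporting the variable in the shell before launching the script.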

+Batch inference

+

+When `return_audio=False` is set, the model can batch inputs that mix samples of different types, such as text, images, audio, and videos. Here is an example.

+

+```python
+# Assumes `model` and `processor` are already loaded.
+# `process_mm_info` is assumed to come from the `qwen_omni_utils` package.
+from qwen_omni_utils import process_mm_info
+
+# System message shared by all sample conversations
+system_message = {
+    "role": "system",
+    "content": [
+        {"type": "text", "text": "You are Qwen, a virtual human developed by the Qwen Team, Alibaba Group, capable of perceiving auditory and visual inputs, as well as generating text and speech."}
+    ],
+}
+
+# Conversation with video only
+conversation1 = [
+    system_message,
+    {
+        "role": "user",
+        "content": [
+            {"type": "video", "video": "/path/to/video.mp4"},
+        ],
+    },
+]
+
+# Conversation with audio only
+conversation2 = [
+    system_message,
+    {
+        "role": "user",
+        "content": [
+            {"type": "audio", "audio": "/path/to/audio.wav"},
+        ],
+    },
+]
+
+# Conversation with pure text
+conversation3 = [
+    system_message,
+    {
+        "role": "user",
+        "content": "who are you?",
+    },
+]
+
+# Conversation with mixed media
+conversation4 = [
+    system_message,
+    {
+        "role": "user",
+        "content": [
+            {"type": "image", "image": "/path/to/image.jpg"},
+            {"type": "video", "video": "/path/to/video.mp4"},
+            {"type": "audio", "audio": "/path/to/audio.wav"},
+            {"type": "text", "text": "What elements can you see and hear in these media?"},
+        ],
+    },
+]
+
+# Combine the conversations for batch processing
+conversations = [conversation1, conversation2, conversation3, conversation4]
+
+# Whether to use the audio track of video inputs
+USE_AUDIO_IN_VIDEO = True
+
+# Preparation for batch inference
+text = processor.apply_chat_template(conversations, add_generation_prompt=True, tokenize=False)
+audios, images, videos = process_mm_info(conversations, use_audio_in_video=USE_AUDIO_IN_VIDEO)
+
+inputs = processor(text=text, audio=audios, images=images, videos=videos, return_tensors="pt", padding=True, use_audio_in_video=USE_AUDIO_IN_VIDEO)
+inputs = inputs.to(model.device).to(model.dtype)
+
+# Batch inference (text only, since return_audio=False)
+text_ids = model.generate(**inputs, use_audio_in_video=USE_AUDIO_IN_VIDEO, return_audio=False)
+text = processor.batch_decode(text_ids, skip_special_tokens=True, clean_up_tokenization_spaces=False)
+print(text)
+```
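
Before handing a mixed batch to the processor, it can help to check which modalities each conversation actually carries. The sketch below is not part of the Qwen2.5-Omni API: the helper name `summarize_modalities` is hypothetical, and it assumes only the message layout used in the example above (a `role`, plus a `content` that is either a plain string or a list of typed items).

```python
from collections import Counter


def summarize_modalities(conversation):
    """Count content types in the user turns of one conversation (hypothetical helper).

    Plain-string content is counted as a single "text" item.
    """
    counts = Counter()
    for message in conversation:
        if message["role"] != "user":
            continue  # skip system (and any other non-user) messages
        content = message["content"]
        if isinstance(content, str):
            counts["text"] += 1
        else:
            for item in content:
                counts[item["type"]] += 1
    return dict(counts)


if __name__ == "__main__":
    # Small stand-ins for the conversations in the example above.
    text_only = [{"role": "user", "content": "who are you?"}]
    mixed = [
        {"role": "system", "content": [{"type": "text", "text": "system prompt"}]},
        {
            "role": "user",
            "content": [
                {"type": "image", "image": "/path/to/image.jpg"},
                {"type": "audio", "audio": "/path/to/audio.wav"},
                {"type": "text", "text": "Describe these inputs."},
            ],
        },
    ]
    for name, conv in [("text_only", text_only), ("mixed", mixed)]:
        print(name, summarize_modalities(conv))
```

A summary like this makes it easy to spot a conversation whose media entry was mistyped or omitted before running a costly batch.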

+

diff --git a/mllm/models/qwen2_5omni/python_src_code/__init__.py b/mllm/models/qwen2_5omni/python_src_code/__init__.py

new file mode 100644

index 000000000..0d7ddae0d

--- /dev/null

+++ b/mllm/models/qwen2_5omni/python_src_code/__init__.py

@@ -0,0 +1,28 @@

+# Copyright 2025 The Qwen Team and The HuggingFace Inc. team. All rights reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+from typing import TYPE_CHECKING

+

+from ...utils import _LazyModule

+from ...utils.import_utils import define_import_structure

+

+

+if TYPE_CHECKING:

+ from .configuration_qwen2_5_omni import *

+ from .modeling_qwen2_5_omni import *

+ from .processing_qwen2_5_omni import *

+else:

+ import sys

+

+ _file = globals()["__file__"]

+ sys.modules[__name__] = _LazyModule(__name__, _file, define_import_structure(_file), module_spec=__spec__)

diff --git a/mllm/models/qwen2_5omni/python_src_code/added_tokens.json b/mllm/models/qwen2_5omni/python_src_code/added_tokens.json

new file mode 100644

index 000000000..456225635

--- /dev/null

+++ b/mllm/models/qwen2_5omni/python_src_code/added_tokens.json

@@ -0,0 +1,24 @@

+{

+ "": 151658,

+ "

+

+Ġع ÙĦÙī

+Ġm á»Ļt

+Ġv Ỽi

+Ġng Æ°á»Ŀi

+ĠØ¥ ÙĦÙī

+Ġnh ững

+Ġth á»ĥ

+Ġ×IJ ×ķ

+Ġ×¢ ×Ŀ

+ا Ùĭ

+Ġ à¹ģละ

+ĠÙĦ ا

+Ġnh Æ°

+ĠاÙĦت ÙĬ

+Ġ×Ķ ×ķ×IJ

+ĠÄij ến

+ĠØ£ ÙĪ

+Ġv á»ģ

+ĠlÃł m

+Ġs ẽ

+Ġc Å©ng

+Ġ ợ

+ĠÄij ó

+Ġnhi á»ģu

+Ġt ại

+Ġtr ên

+Ġ×Ĵ ×Ŀ

+Ġnh Ãł

+Ġ׼ ×Ļ

+Ġs á»±

+ĠÄij ầu

+Ġb á»ĭ

+ĠÙĩ ذا

+Ġnh ất

+Ġph ải

+Ġhi á»ĩn

+Ġdụ ng

+ĠÄij á»Ļng

+ĠاÙĦÙĦ Ùĩ

+ĠØ Į

+ĠÙĥ ÙĦ

+Ġvi á»ĩc

+Ġn Äĥm

+Ġth ì

+Ġh á»įc

+ĠÙĪ ت

+t é

+Ġا ÙĨ

+Ġt ôi

+Ġ×IJ ׳×Ļ

+Ġ׾ ×Ļ

+Ġ×ŀ ×ķ

+Ġng Ãły

+Ġn Æ°á»Ľc

+Ġ×Ķ ×Ļ×IJ

+Ġ×IJ ×Ļ

+Ġh Æ¡n

+ĠÙĩ Ø°Ùĩ

+ĠÙĪ ÙĬ

+ĠاÙĦ Ø°ÙĬ

+Ġ×ķ ×ŀ

+Ġgi á

+Ġnh ân

+Ġch ÃŃnh

+Ġm ình

+ĠÐĿ а

+Ġth ế

+Ġ×Ļ ×ķתר

+Ġ×IJ ×Ŀ

+Ġn ên

+Ġh ợ

+Ġhợ p

+Ġc òn

+ĠÙĩ ÙĪ

+Ġc Æ¡

+Ġr ất

+ĠVi á»ĩt

+Ġب عد

+Ġש ×Ļ

+Ġth á»Ŀi

+Ġc ách

+ĠÄij á»ĵng

+Ġн о

+Ġtr Æ°á»Ŀng

+Ø Ł

+ĠÄij á»ĭnh

+ĠÄiji á»ģu

+×Ļ ×Ļ×Ŀ

+Ġth á»±c

+n ın

+Ġh ình

+Ġn ói

+Ġc ùng

+Ġ×Ķ ×Ķ

+ĠØ¥ ÙĨ

+Ġ×IJ ×ij׾

+Ġnh Æ°ng

+Ġbi ết

+Ġж е

+Ġch úng

+ĠÄij ang

+ĠØ° ÙĦÙĥ

+Ġl ên

+Ġkh ách

+Ġn Ãło

+Ġs á»Ń

+Ġkh ác

+Ġë° ı

+Ġl ý

+×Ļ ×Ļ

+ĠÄij ây

+Ġ׾ ×ŀ

+Ġc ần

+Ġtr ình

+Ġph át

+ãģ« ãĤĤ

+п о

+Ġn Äĥng

+Ġb á»Ļ

+Ġv ụ

+ĠÄij á»Ļ

+Ñĩ е

+Ġnh áºŃn

+Ġtr Æ°á»Ľc

+Ġ×¢ ×ĵ

+Ġh Ãłnh

+ĠØ® ÙĦاÙĦ

+Ġl ượng

+Ġc ấp

+Ġtá» ±

+Ġv ì

+Ġt Æ°

+Ġch ất

+Ġ׼ ×ŀ×ķ

+Ġg ì

+Ġש ׳

+Ġt ế

+ת ×ķ

+Ġnghi á»ĩp

+Ġm ặt

+ĠÙĥ Ùħا

+Ġ×ij ×Ļף

+Ġר ק

+Ġth ấy

+Ġmá y

+ĠÙģ Ùī

+Ġd ân

+Ġ×IJ ×Ĺ×ĵ

+Ġt âm

+Ġ׼ ×ļ

+Ġ׾ ×ķ

+в о

+Ġt ác

+Ġto Ãłn

+ĠÙĪ Ùħ

+Ġk ết

+Ġ หรืà¸Ń

+ĠÙĪاÙĦ Ùħ

+ĠÄiji á»ĥm

+Ġ×ĸ ×ķ

+Ġ×ij ×ķ

+׼ ×ķת

+Ġh á»Ļi

+Ġb ằng

+ت Ùĩا

+Ġ׼ ×ĵ×Ļ

+Ġ×Ķ ×Ŀ

+Ġxu ất

+ĠÙĤ د

+Ġb ảo

+Ġt á»ijt

+Ġt ình

+ĠÙĩ ÙĬ

+ĠÄij á»iji

+Ġthi ết

+Ġhi á»ĩu

+Ġti ếp

+Ġt ạo

+ת ×Ķ

+Ġch ủ

+o ÅĽÄĩ

+Ġgi ú

+Ġgiú p

+ĠÃ ½

+Ġqu ả

+Ġlo ại

+Ġc ô

+ĠÃ ´

+Ġô ng

+Ġ×Ķ ×ķ

+ĠاÙĦÙĬ ÙĪÙħ

+ĠtÃŃ nh

+г а

+Ġph òng

+Ġ Äĥn

+Ġع اÙħ

+Ġv á»ĭ

+lar ını

+r ÃŃa

+Ġt Ỽi

+ĠÄij Æ°á»Ŀng

+Ġgi Ỽi

+Ġb ản

+Ġc ầu

+Ġnhi ên

+Ġb á»ĩnh

+Ġth Æ°á»Ŀng

+Ġ×IJ ×Ļף

+ĠÄij á»ģ

+Ġh á»ĩ

+Ġ×Ļש ר×IJ׾

+Ġqu á

+ĠÐĹ Ð°

+ãģ® ãģ§ãģĻãģĮ

+ĠÐŁ ÑĢи

+Ġph ần

+ĠÙĪ ÙĦا

+ĠlỼ n

+Ġtr á»ĭ

+Ġcả m

+Ġм о

+Ġd ùng

+ĠاÙĦ Ùī

+ĠعÙĦÙĬ Ùĩ

+ĠìŀĪ ìĬµëĭĪëĭ¤

+ÙĬ ÙĤ

+ĠÙĤ بÙĦ

+Ġho ặc

+ĠØŃ ÙĬØ«

+Ġ à¸Ĺีà¹Ī

+Ġغ ÙĬر

+ĠÄij ại

+Ġsá»ij ng

+нÑĭ ми

+Ġth ức

+Ġפ ×Ļ

+ĠÄiji á»ĩn

+ãģª ãģĭãģ£ãģŁ

+Ġgi ải

+Ġv ẫn

+Ġи Ñħ

+Ġö nce

+Ġv áºŃy

+Ġmu á»ijn

+Ġ ảnh

+à¹ĥà¸Ļ à¸ģาร

+ĠQu á»ijc

+Ġk ế

+׳ ×IJ

+Ġס ×Ļ

+Ġy êu

+ãģ® ãģĭ

+ĠÄij ẹ

+ĠÄijẹ p

+Ġch ức

+Ġy ıl

+ĠTür kiye

+d é

+ĠÙĤ اÙĦ

+Ġd á»ĭch

+ĠolduÄŁ u

+Ġch á»įn

+Ġت Ùħ

+หà¸Ļ ึà¹Īà¸ĩ

+ãģķãĤĮ ãģŁ

+Ġph áp

+ìĽ Ķ

+Ġti á»ģn

+ãģĹ ãģ¾ãģĹãģŁ

+Ġש ׾×IJ

+ÙĦ Ø©

+Ġ׾פ ׳×Ļ

+Ġ×ij ×Ļת

+ĠH Ãł

+ĠØŃ Øª

+ĠØŃت Ùī

+Ġ×¢ ×ķ×ĵ

+Ġn ó

+Ġth áng

+à¹Ģลืà¸Ń à¸ģ

+ר ×Ķ

+Ġt Äĥng

+Ġcá i

+Ġtri á»ĥn

+Ġ×IJ×ķת ×ķ

+ìłģ ìĿ¸

+ĠC ông

+Ġ׾×Ķ ×Ļ×ķת

+Ġг ода

+и Ñİ

+Ġب عض

+Ġ à¸ģาร

+èī¯ ãģĦ

+ÙĪ ت

+Ġli ên

+ĠÐĿ о

+ĠÐĿ е

+çļĦ ãģª

+ĠÙħ ت

+ĠÑĤак же

+ĠкоÑĤоÑĢ Ñĭе

+Ġ×Ļ ×ĵ×Ļ

+Ġtr á»įng

+ãĤµ ãĤ¤ãĥĪ

+ìłģ ìľ¼ë¡ľ

+Ġt áºŃp

+Ġש ׾×Ļ

+íķĺ ê²Į

+Ġt Ãłi

+ĠÐ ¯

+Ġr á»ĵi

+ا Ùĥ

+Ġth Æ°Æ¡ng

+Ġ×Ķ ×ĸ×Ķ

+ĠÙĪ ÙħÙĨ

+à¸Ĺีà¹Ī มี

+Ġcu á»Ļc

+Ġbü yük

+ãģ¨ ãģĭ

+Ġ×ij ×Ļ×ķתר

+Ġl ần

+Ġgö re

+Ġtr ợ

+Ġ×ĺ ×ķ×ij

+ÑĤÑĮ ÑģÑı

+Ġth á»ijng

+Ġ׼ ש

+Ġti êu

+Ġ×ŀ×IJ ×ķ×ĵ

+Ø Ľ

+k Äħ

+Ġ à¹ĥà¸Ļ

+Ġv ấn

+Ġש ׾×ķ

+ĠÄij á»ģu

+Ùģ ت

+Ġê²ĥ ìĿ´

+Ġh óa

+ĠاÙĦع اÙħ

+ĠÙĬ ÙĪÙħ

+к ой

+Ġbi á»ĩt

+ÑģÑĤ о

+Ġ×Ķ ×Ļ×ķ

+à¸Ĺีà¹Ī à¸Īะ

+Ġ×ĵ ×Ļ

+Ġ×IJ ×ļ

+Ġá n

+ص ÙĪر

+Ġtr ÃŃ

+ĠÐŁÑĢ о

+Ġl á»±c

+ãģĹãģ¦ ãģĦãģ¾ãģĻ

+Ġb Ãłi

+Ġ×ĸ ×IJת

+Ġb áo

+à¸ļ à¸Ļ

+ĠëĮĢ íķľ

+Ġti ế

+Ġtiế ng

+Ġb ên

+ãģķãĤĮ ãĤĭ

+s ión

+Ġt ìm

+×¢ ×ķ

+m é

+ни Ñı

+ãģ» ãģ©

+Ġà¹Ģà¸ŀ ราะ

+ب ة

+Ġë¶ Ħ

+Ġ×IJ ×ĸ

+à¸Ĺ à¹Īาà¸Ļ

+ת ×Ŀ

+Ġth êm

+Ġho ạt

+y ı

+×ĸ ×ķ

+Ġgi á»Ŀ

+Ġb án

+à¸Ĥ าย

+Ñĩ а

+Ġ à¹Ĩ

+ĠاÙĦÙħ ت

+ĠоÑĩ енÑĮ

+Ġb ất

+Ġtr ẻ

+ÑĤ ÑĢ

+ĠØ£ ÙĨÙĩ

+ĠØ« Ùħ

+Ġ׼ ×ŀ×Ķ

+Ġkh ó

+Ġr ằng

+ĠÙĪ ÙģÙĬ

+ни й

+Ġho Ãłn

+t ó

+Ġ×IJ שר

+ĠìĥĿ ê°ģ

+Ñģ а

+Ġ׼ ×ijר

+ĠÑįÑĤ ом

+lar ının

+Ġch Æ°a

+з и

+Ġd ẫn

+ĠÐļ ак

+ج ÙĪ

+ĠбÑĭ ло

+ĠÙĬ ت

+n ı

+ÅĤ am

+ĠÙĪÙĩ ÙĪ

+×ij ×ķ

+п и

+ר ת

+Ġqu á»ijc

+ж д

+ĠÄij Æ¡n

+Ùĥت ب

+Ġm ắt

+ระ à¸ļ

+ระà¸ļ à¸ļ

+ĠÙĥ اÙĨت

+Ġth ân

+สิà¸Ļ à¸Ħà¹īา

+×Ĵ ×Ļ

+Ġph Æ°Æ¡ng

+à¹Ħมà¹Ī à¹Ħà¸Ķà¹ī

+ĠìĦ ±

+ĠC ác

+Ġ×Ķ×ŀ ×ķ

+ĠÑĤ ем

+Ġ×ĵ ×ķ

+à¸Ńะ à¹Ħร

+Ġv Äĥn

+ãģª ãģ®ãģ§

+ĠN á»Ļi

+Ġ×¢ ×ķ

+ãĤīãĤĮ ãĤĭ

+Ġs áng

+Ġgö ster

+ãģĵãģ¨ ãĤĴ

+Ġtaraf ından

+Ġм а

+ĠпоÑģл е

+Ġ׳ ×Ļת

+Ġ׳×Ļת ף

+Ġл еÑĤ

+Ġ׾ ׳×ķ

+Ñģ Ñģ

+Ġ×Ļ ×ķ

+п е

+ĠÙĪ ÙĦÙĥ

+ĠÙĪÙĦÙĥ ÙĨ

+Ġngo Ãłi

+ĠÄij á»ĭa

+r zÄħd

+dz iaÅĤ

+ĠÙħ ر

+иÑĤÑĮ ÑģÑı

+Ġ×IJ×Ĺר ×Ļ

+Ġ׾ ׼׾

+à¸Ĥ à¹īà¸Ńม

+à¸Ĥà¹īà¸Ńม ูล

+Ġб ол

+Ġбол ее

+جÙħ ع

+л еÑĤ

+Ġl á»ĭch

+ĠÙħ Ø«ÙĦ

+Ġ그리 ê³ł

+Ġth ứ

+ĠdeÄŁ il

+ÙĪ ØŃ

+Ġש׾ ×ļ

+ĠÙħ ØŃÙħد

+Ġn ếu

+ĠÄij á»ķi

+Ġv ừa

+Ġm á»įi

+Ġо ни

+Ġl úc

+ĠÙĬ ÙĥÙĪÙĨ

+ì§ Ī

+Ġש׾ ׳×ķ

+ĠÐĶ о

+Ġש ׳×Ļ

+ล ิ

+×IJ פשר

+Ġs ức

+ê¶ Į

+Ġ ứng

+à¹Ħมà¹Ī มี

+Ø·ÙĦ ب

+ĠÑĩ ем

+Ġch uyên

+Ġth ÃŃch

+Ġ×ķ ×Ļ

+íķ ©

+ĠÙħ صر

+д о

+ĠÄij ất

+Ġch ế

+à¸Ĭ ืà¹Īà¸Ń

+Ġìĭ ł

+ĠØ¥ ذا

+Ġر ئÙĬس

+Ġש ×Ļש

+Ġgiả m

+Ñģ ка

+lar ında

+Ġs ợ

+ĠtÃŃ ch

+ĠÙĦ ÙĥÙĨ

+Ġب Ùħ

+×¢ ×ķ×ij

+×¢×ķ×ij ×ĵ

+ÅĤÄħ cz

+ları na

+Ġש ×Ŀ

+ĠÙĦ ت

+Ġש×Ķ ×ķ×IJ

+t ów

+Ġëĭ¤ 른

+ĠØ£ Ùĥثر

+ãģ® ãģ§ãģĻ

+׼ ×Ļ×Ŀ

+ĠolduÄŁ unu

+ãģĭ ãģª

+ãĤĤ ãģĨ

+ÙĬ ØŃ

+Ġnh ìn

+Ġngh á»ĩ

+ãģ«ãģª ãģ£ãģ¦

+п а

+Ġquy ết

+ÙĦ ÙĤ

+t á

+Ġlu ôn

+ĠÄij ặc

+Ġ×IJ ר

+Ġtu á»ķi

+s ão

+ìĻ ¸

+ر د

+ĠبÙĩ ا

+Ġ×Ķ×Ļ ×ķ×Ŀ

+×ķ ×ķ×Ļ

+ãģ§ãģĻ ãģŃ

+ĠÑĤ ого

+Ġth ủ

+ãģĹãģŁ ãģĦ

+ر ÙĤ

+Ġb ắt

+г Ñĥ

+Ġtá» Ń

+ÑĪ а

+Ġ à¸Ľà¸µ

+Ġ×Ķ×IJ ×Ŀ

+íı ¬

+ż a

+Ġ×IJת ×Ķ

+Ġn á»Ļi

+Ġph ÃŃ

+ĠÅŁek ilde

+Ġl á»Ŀi

+d ıģı

+Ġ׼×IJ ף

+Ġt üm

+Ġm ạnh

+ĠM ỹ

+ãģĿ ãĤĵãģª

+Ġnh á»ı

+ãģª ãģĮãĤī

+Ġb ình

+ı p

+à¸ŀ า

+ĠÄij ánh

+ĠÙĪ ÙĦ

+ר ×ķת

+Ġ×IJ ×Ļ×ļ

+Ġch uyá»ĥn

+Ùĥ ا

+ãĤĮ ãĤĭ

+à¹ģม à¹Ī

+ãĤĪ ãģı

+ĠÙĪ ÙĤد

+íĸ Īëĭ¤

+Ġn Æ¡i

+ãģ«ãĤĪ ãģ£ãģ¦

+Ġvi ết

+Ġà¹Ģà¸ŀ ืà¹Īà¸Ń

+ëIJĺ ëĬĶ

+اد ÙĬ

+ĠÙģ Ø¥ÙĨ

+ì¦ Ŀ

+ĠÄij ặt

+Ġh Æ°á»Ľng

+Ġx ã

+Ġönem li

+ãģł ãģ¨

+Ġm ẹ

+Ġ×ij ×Ļ

+Ġ×ĵ ×ijר

+Ġv áºŃt

+ĠÄij ạo

+Ġdá»± ng

+ĠÑĤ ом

+ĠÙģÙĬ Ùĩا

+Ġج ÙħÙĬع

+Ġthu áºŃt

+st ÄĻp

+Ġti ết

+Ø´ ÙĬ

+Ġе Ñīе

+ãģĻãĤĭ ãģ¨

+ĠmÃł u

+ĠÑįÑĤ ого

+Ġv ô

+ĠÐŃ ÑĤо

+Ġth áºŃt

+Ġn ữa

+Ġbi ến

+Ġn ữ

+Ġ׾ ׼×Ŀ

+×Ļ ×Ļף

+Ġس ت

+ĠÐŀ ÑĤ

+Ġph ụ

+ê¹Į ì§Ģ

+Ġ׾ ×ļ

+Ġk ỳ

+à¹ĥ à¸Ħร

+Ġg ây

+ĠÙĦ ÙĦÙħ

+Ġtụ c

+ت ÙĬÙĨ

+Ġtr ợ

+Ġ׾ פ×Ļ

+Ġb á»ij

+ĠÐļ а

+ĠÄij ình

+ow Äħ

+s ında

+Ġkhi ến

+s ız

+Ġк огда

+ס ׾

+ĠбÑĭ л

+à¸Ļ à¹īà¸Ńย

+обÑĢаР·

+Ġê²ĥ ìĿ´ëĭ¤

+ëĵ¤ ìĿĢ

+ãģ¸ ãģ®

+Ġà¹Ģม ืà¹Īà¸Ń

+Ġph ục

+Ġ׊׾ק

+Ġh ết

+ĠÄij a

+à¹Ģà¸Ķà¹ĩ à¸ģ

+íĺ ķ

+l ÃŃ

+ê¸ ī

+Ġع دد

+ĠÄij á»ĵ

+Ġg ần

+Ġ×Ļ ×ķ×Ŀ

+Ġs Ä©

+ÑĢ Ñıд

+Ġquy á»ģn

+Ġ×IJ ׾×IJ

+Ùĩ Ùħا

+׳ ×Ļ×Ķ

+׾ ×ķת

+Ġ×Ķר ×ij×Ķ

+Ġti ên

+Ġal ın

+Ġd á»ħ

+人 ãģĮ

+но Ñģ

+л ÑģÑı

+ĠÄij Æ°a

+ส าว

+иÑĢов ан

+Ġ×ŀס פר

+×Ĵ ף

+Ġki ến

+ĠÐ ¨

+p é

+б Ñĥ

+ов ой

+б а

+ĠØ¥ ÙĦا

+×IJ ׾×Ļ

+Ġx ây

+Ġb ợi

+Ġש ×ķ

+人 ãģ®

+ק ×Ļ×Ŀ

+à¹Ģà¸Ķ ืà¸Ńà¸Ļ

+Ġkh á

+Ġ×ķ ׾×Ķ

+×ĵ ×ķת

+Ġ×¢ ×ij×ķר

+Ġبش ÙĥÙĦ

+ĠÙĩÙĨا Ùĥ

+ÑĤ ÑĢа

+Ġ íķĺëĬĶ

+ร à¸Ńà¸ļ

+owa ÅĤ

+h é

+Ġdi á»ħn

+Ġ×Ķ ׼׾

+ĠØ£ س

+Ġch uyá»ĩn

+ระ à¸Ķัà¸ļ

+ĠNh ững

+Ġ×IJ ×Ĺת

+ĠØŃ ÙĪÙĦ

+л ов

+׳ ר

+Ġ×ķ ׳

+Ġch Æ¡i

+Ġiç inde

+ÑģÑĤв Ñĥ

+Ġph á»ij

+ĠÑģ Ñĥ

+ç§ģ ãģ¯

+Ġch ứng

+Ġv á»±c

+à¹ģ à¸Ń

+Ġl áºŃp

+Ġtừ ng

+å°ij ãģĹ

+ĠNg uy

+ĠNguy á»ħn

+ĠÙģÙĬ Ùĩ

+Ġб а

+×Ļ ×Ļת

+Ġ×ľ×¢ ש×ķת

+Ġ×ŀ ׼

+Ġnghi á»ĩm

+Ġм ного

+Ġе е

+ëIJĺ ìĸ´

+Ġl ợi

+Ġ׾ ׾×IJ

+Ġ׼ ף

+Ġch ÃŃ

+ãģ§ ãģ®

+×Ĺ ×ķ

+ש ×ķ×Ŀ

+Ġ×ŀ ר

+ĠÐĶ лÑı

+Å ģ

+Ġ׼×IJ שר

+ĠM á»Ļt

+ĠÙĪاÙĦ ت

+ĠìĿ´ 룰

+ÅŁ a

+Ġchi ến

+Ġaras ında

+Ġ×ij ×IJתר

+ãģķãĤĮ ãģ¦ãģĦãĤĭ

+Ø´ ÙĥÙĦ

+Ġt ượng

+Ġت ت

+ĠC ó

+Ġb á»ı

+Ġtá»ī nh

+Ġkh ÃŃ

+ĠпÑĢ оÑģÑĤ

+ĠпÑĢоÑģÑĤ о

+ĠÙĪ ÙĤاÙĦ

+Ġgi áo

+ĠN ếu

+×IJ ×ŀר

+×¢×ł×Ļ ×Ļף

+íİ ¸

+Ùĩد Ùģ

+ĠB á»Ļ

+Ġb Ãłn

+Ġng uyên

+Ġgü zel

+ส าย

+ì² ľ

+×ŀ ×ķר

+Ġph ân

+ס פק

+ק ×ij׾

+ĠاÙĦÙħ تØŃ

+ĠاÙĦÙħتØŃ Ø¯Ø©

+ائ د

+Ġ×IJ ×ŀר

+Ġki ÅŁi

+ì¤ Ģ

+Ġtr uyá»ģn

+ĠÙĦ Ùĩا

+ĠÐľ а

+à¸ļริ ษ

+à¸ļริษ ั

+à¸ļริษั à¸Ĺ

+Ġש ׳×Ļ×Ŀ

+Ġмен Ñı

+ÅŁ e

+Ġdi á»ĩn

+Ġ×IJ׳ ×Ĺ׳×ķ

+k ü

+Ġc á»ķ

+Ġm á»Ĺi

+w ä

+Ùħ ÙĬ

+Ġhi á»ĥu

+ëĭ ¬

+Ġ×Ķ ×Ĺ׾

+Ġt ên

+Ġki á»ĩn

+ÙĨ ÙĤÙĦ

+Ġv á»ĩ

+×ĵ ת

+ĠÐłÐ¾ÑģÑģ ии

+л Ñĥ

+ĠاÙĦع ربÙĬØ©

+ĠØ· رÙĬÙĤ

+Ġ×Ķ×ij ×Ļת

+Ñģ еÑĢ

+Ġм не

+ä u

+Ġtri á»ĩu

+ĠÄij ủ

+Ġר ×ij

+ت ÙĩÙħ

+à¸ĭ ี

+Ġì§Ģ ê¸Ī

+li ÅĽmy

+د عÙħ

+ãģł ãĤįãģĨ

+Ñģки е

+Ġh á»ıi

+Ġק ×ķ

+ÑĢÑĥ Ñģ

+ÙĨ ظر

+ãģ® ãĤĤ

+Ġ×Ķ ׼×Ļ

+ĠìĽ IJ

+ÙĪ Ùĩ

+ĠÙĪ Ùİ

+ĠB ạn

+п лаÑĤ

+Ġ×ŀ ×ŀש

+лÑİ Ð±

+ĠнÑĥж но

+Ġth Æ°

+ãģ µ

+ãģı ãĤīãģĦ

+ر ش

+ר ×ķ×Ĺ

+ĠÙĬ تÙħ

+Ġצר ×Ļ×ļ

+Ġph á

+ม à¸Ńà¸ĩ

+Ġ×ij×IJ ×ķפף

+Ġcả nh

+Ġíķľ ëĭ¤

+Ġ×Ķ×ŀ ת

+à¸ķà¹Īาà¸ĩ à¹Ĩ

+มี à¸ģาร

+Ñģки Ñħ

+ĠÐĴ Ñģе

+Ġا ÙĪ

+ج ÙĬ

+ãģĵãģ¨ ãģ¯

+Ġd Ãłi

+Ġh á»ĵ

+èĩªåĪĨ ãģ®

+à¹Ħ หà¸Ļ

+ëĵ¤ ìĿĦ

+ĠV Äĥn

+Ġд аж

+Ġдаж е

+Ñĭ ми

+лаÑģ ÑĮ

+ÙĬ ÙĪÙĨ

+ÙĨ ÙĪ

+c ó

+ãģĹãģ¦ ãģĦãģŁ

+ãģł ãģĭãĤī

+طاÙĦ ب

+Ġc á»Ńa

+п ÑĢоÑģ

+ãģªãģ© ãģ®

+รุ à¹Īà¸Ļ

+Ġchi ếc

+л Ñĭ

+ĠÑıвлÑı еÑĤÑģÑı

+Ġn á»ķi

+ãģ® ãģĬ

+Ġ×IJת ×Ŀ

+ĠëķĮ문 ìĹIJ

+à¸ģล าà¸ĩ

+ĠbaÅŁ ka

+ìĦ Ŀ

+ĠÑĨ ел

+Ùģ ÙĤ

+ãģ«ãĤĪ ãĤĭ

+ÙĤ ا

+Ġçı kar

+Ġcứ u

+ط ا

+Ġש ת

+à¹Ĥ à¸Ħ

+Ġ×ŀ ׾

+Ġ×Ķ פר

+Ġг де

+ĠØ® Ø·

+åīį ãģ«

+c jÄĻ

+Ġ׊ש×ķ×ij

+ר×Ĵ ×¢

+Ġkho ảng

+ĠÄij á»Ŀi

+ĠÐł е

+Ġо на

+Ġ×IJ ׳×ķ

+ãģ® ãģ«

+ĠاÙĦØ° ÙĬÙĨ

+кÑĥ п

+ãĤµ ãĥ¼ãĥ

+ãĤµãĥ¼ãĥ ĵ

+ãĤµãĥ¼ãĥĵ ãĤ¹

+в ал

+г е

+Ġgi ữa

+ĠKh ông

+ĠâĹ ĭ

+à¸ģล ุà¹Īม

+ĠÙħÙĨ Ø°

+à¸Ń à¹Īาà¸Ļ

+ĠÑģп оÑģоб

+ĠÄij á»Ļi

+Ġdi ÄŁer

+Ġ à¸ĸà¹īา

+Ùħ Ø«ÙĦ

+Ġ×Ķ×IJ ×Ļ

+Ġد ÙĪÙĨ

+ÙĬر اÙĨ

+Ñī и

+بÙĨ اء

+ĠØ¢ خر

+ظ Ùĩر

+Ġ×ij ׼

+ĠاÙĦÙħ ع

+ãĥ Ĵ

+Ġt ất

+Ġm ục

+ĠdoÄŁ ru

+ãģŁ ãĤī

+Ġס ×ķ

+Ġx ác

+ร à¸Ń

+ĠcÄĥ n

+Ġон л

+Ġонл айн

+Ġk ý

+Ġch ân

+Ġ à¹Ħมà¹Ī

+اØŃ Ø©

+r án

+׳×Ļ ×Ļ×Ŀ

+Ġ×ij ף

+ĠÐ ĸ

+à¸ķร à¸ĩ

+д Ñĭ

+Ġs ắc

+ÙĦ ت

+ãĥŃ ãĥ¼

+ĠÙĦ ÙĨ

+Ġר ×ķ

+Ġd Æ°á»Ľi

+à¹Ģ à¸ĺ

+à¹Ģà¸ĺ à¸Ń

+e ÄŁi

+Ġ×ķ ש

+ĠÙĦ Ø£

+Ġg ặp

+Ġc á»ij

+ãģ¨ ãģ¦ãĤĤ

+رÙĪ س

+Ġ׾×Ķ ×Ļ

+Ġë³ ¸

+ä¸Ĭ ãģĴ

+Ġm ức

+Ñħ а

+Ġìŀ ¬

+à¸ī ัà¸Ļ

+ÑĢÑĥ ж

+Ġaç ık

+ÙĪ اÙĦ

+Ġ×ĸ ×ŀף

+人 ãģ¯

+ع ÙĬÙĨ

+Ñı Ñħ

+Ġ×Ĵ×ĵ ×ķ׾

+ר ×ķ×ij

+g ó

+ëĿ¼ ê³ł

+Ġark adaÅŁ

+ÙĨ شر

+Ġгод Ñĥ

+ĠболÑĮ ÑĪе

+ãģ¡ãĤĩ ãģ£ãģ¨

+Ġcâ u

+Ġs át

+íĶ ¼

+Ġti ến

+íķ´ ìķ¼

+ĠÙĪ Ø£ÙĨ

+à¸Ļ าà¸Ļ

+Ġ×ij×IJ×ŀ צע

+Ġ×ij×IJ×ŀצע ×ķת

+Ġ׾ ר

+Ġqu ản

+ĠÙĪاÙĦ Ø£

+Ġ×IJ×ķת ×Ķ

+Ġìĸ´ëĸ ¤

+Ġê²ĥ ìĿĢ

+ØŃس ÙĨ

+Ġm ất

+à¸Ħ ูà¹Ī

+ãĥ¬ ãĥ¼

+ĠÐĶ а

+Ġol ması

+Ġthu á»Ļc

+׳ ×Ĺ

+íĨ ł

+Ġsö yle

+ãģĿãģĨ ãģ§ãģĻ

+Ġت ÙĥÙĪÙĨ

+л ÑĥÑĩ

+׾ ×Ļ×ļ

+ĠØ£ ØŃد

+ли ÑģÑĮ

+ĠвÑģ его

+Ġ×Ķר ×ij

+Ġëª »

+o ÄŁ

+oÄŁ lu

+ĠìĦ ł

+Ġк аÑĢ

+à¸łà¸² à¸Ħ

+e ÅĦ

+Ġ à¸ģà¹ĩ

+Ġa ynı

+Ġb Ãł

+ãģªãĤĵ ãģ¦

+Ġ모 ëĵł

+ÙĤر ار

+ãģĹãģª ãģĦ

+ĠÐĴ о

+ĠÙĪÙĩ ÙĬ

+ни ки

+ãĤĮ ãģŁ

+Ġchu ẩn

+ר ע

+Ùģ رÙĬÙĤ

+ãĤĴ åıĹãģij

+ĠÄij úng

+б е

+׼ ×ķ×Ĺ

+п Ñĥ

+Ġ×ķ ×Ĵ×Ŀ

+×ŀ ׳×Ļ

+íĸ ¥

+צ ×Ļ×Ŀ

+à¸ĭ ิ

+Ùĩ ÙĨ

+н ем

+Ġ×ij×ij ×Ļת

+ر ع

+Ġ ส

+ĠÄIJ Ãł

+íķĺ ëĭ¤

+Ġ ấy

+×Ĺ ×ķ×ĵ

+×Ĺ×ķ×ĵ ש

+ĠÑĩеÑĢ ез

+Ñĥ л

+ĠB ình

+Ġê²ĥ ìĿĦ

+Ġ×Ĵ ר

+ä»ĺ ãģij

+×Ĺ׾ ק

+Ġت ÙĦÙĥ

+à¹ĥส à¹Ī

+sz Äħ

+ÙĤ اÙħ

+د ÙĪر

+ĠÙģ ÙĤØ·

+Ġh ữu

+Ġмог ÑĥÑĤ

+Ġg á»įi

+Ġק ר

+à¸Īะ มี

+ت ÙĤدÙħ

+Ġع بر

+Ġ׾×Ķ ×Ŀ

+ĠÑģам о

+ס ×ĵר

+Ġc Ãłng

+r ÃŃ

+Ġìŀ ¥

+ëĵ¤ ìĿĺ

+ĠÙĦ Ùĥ

+п оÑĢÑĤ

+Ġkh ả

+ĠÑģеб Ñı

+׳ ף

+Ġد ÙĪر

+Ġm ợ

+Ġcâ y

+Ġf ark

+Ġfark lı

+а ÑİÑĤ

+Ġtr á»±c

+wiÄĻks z

+Ġthu á»ijc

+Ġت ØŃت

+ت ÙĦ

+ов Ñĭе

+ëĤ ł

+Ġв ам

+بÙĦ غ

+Ġê°Ļ ìĿĢ

+íĮ IJ

+ÙĦ ب

+Ġnas ıl

+Ġод ин

+м ан

+ĠعÙĦÙĬ Ùĩا

+б и

+Ġפ ש×ķ×ĺ

+×ijר ×Ļ

+Ġש ׳×Ķ

+Ġëı Ħ

+ĠÄIJ ại

+Ġ×IJ×ķת ×Ŀ

+ĠاÙĦØŃ Ø±

+Ġб о

+à¸Ī ุà¸Ķ

+Ġr õ

+ĠdeÄŁi ÅŁ

+Ġëĭ ¨

+ĠÑģлÑĥÑĩ а

+ĠÑģлÑĥÑĩа е

+Ġ×IJ׳ ש×Ļ×Ŀ

+×ĵ ×£

+ש×ij ת

+Ġש׾ ׼×Ŀ

+Ġch ú

+nik ów

+Ġtan ı

+Ġcá o

+ĠÄij á

+Ġ×IJ ×ĵ×Ŀ

+Ġê° ķ

+Ġnhi á»ĩm

+Ġ׾ ס

+Ġ×Ľ×ª ×ij

+Ġ×Ķס פר

+ĠÄij Äĥng

+Ġë ijIJ

+à¸ľ ิ

+à¸ľà¸´ ว

+ج ا

+Ġê° IJ

+ر أ

+ست خدÙħ

+ãģ«ãģªãĤĬ ãģ¾ãģĻ

+Ġtá» ·

+×ĺ ×ķר

+г овоÑĢ

+Ġв оÑģ

+ĠÙħÙĨ Ùĩا

+иÑĢов аÑĤÑĮ

+ĠÄij ầy

+׳ ×Ĵ

+ĠÙħ ÙĪ

+ĠÙħ ÙĪÙĤع

+ר׼ ×Ļ

+ت Ùı

+ëª ¨

+Ġת ×ķ

+ÙĬا Ùĭ

+à¹ĥ à¸Ķ

+ãĤĬ ãģ¾ãģĻ

+à¸Ńยูà¹Ī à¹ĥà¸Ļ

+ĠØ£ ÙĪÙĦ

+ĠØ£ خرÙī

+Ġc Æ°

+ص ار

+×ŀ׊ש×ij

+б ÑĢа

+ÅĦ ski

+б ÑĢ

+ĠÙĬ Ùı

+à¸ģ ิà¸Ļ

+Ġch á»ijng

+Ùħ Ùı

+Ġ à¸Ħืà¸Ń

+Ġت ÙĨ

+t ÃŃ

+y Äĩ

+Ġm ạng

+Ùģ ÙĪ

+Ġdü nya

+ק ר×IJ

+Ġק ׾

+ĠØŃ Ø§ÙĦ

+c ÃŃa

+Ġà¹Ģ รา

+Ġר ×ķצ×Ķ

+Ġá p

+ë° ķ

+ا ÙĤØ©

+ни Ñİ

+Ġ×IJ ׾×ķ

+Ġ×ŀס ×ķ

+ãģ§ãģ¯ ãģªãģı

+Ġtr ả

+Ġק שר

+mi ÅŁtir

+Ġl Æ°u

+Ġh á»Ĺ

+ĠбÑĭ ли

+Ġl ấy

+عÙĦ Ùħ

+Ġö zel

+æ°Ĺ ãģĮ

+Ġ×ĵ ר×ļ

+Ùħ د

+s ını

+׳ ×ķש×IJ

+r ów

+Ñĩ еÑĢ

+êµIJ ìľ¡

+ĠÐľ о

+л ег

+ĠV Ỽi

+วัà¸Ļ à¸Ļีà¹ī

+ÑİÑī ие

+ãģĬ ãģĻ

+ãģĬãģĻ ãģĻ

+ãģĬãģĻãģĻ ãĤģ

+ëı ħ

+Ġ×Ļ×Ķ ×Ļ×Ķ

+×ŀ ×ĺר

+Ñı ми

+Ġl á»±a

+ĠÄij ấu

+à¹Ģส ียà¸ĩ

+Ġt Æ°Æ¡ng

+ëĵ ±

+ĠÑģÑĤ аÑĢ

+à¹ĥ à¸ļ

+ว ัà¸Ķ

+ĠÄ° stanbul

+Ġ à¸Īะ

+à¸ķ ลาà¸Ķ

+Ġب ÙĬ

+à¹ģà¸Ļ ะ

+à¹ģà¸Ļะ à¸Ļำ

+س اعد

+Ġب Ø£

+Ġki á»ĥm

+ØŃ Ø³Ø¨

+à¸Ĭั à¹īà¸Ļ

+Ġ×ķ ×¢×ķ×ĵ

+ов ÑĭÑħ

+оÑģ нов

+Ġtr Æ°á»Łng

+צ ×ij×¢

+ĠÃŃ t

+Ġk ỹ

+cr é

+Ñı м

+êµ °

+ãģĮ ãģªãģĦ

+ÙĬÙĦ Ø©

+ãĥķ ãĤ£

+ر Ùī

+ĠÙĬ جب

+Ġ×IJ ×£

+Ġc á»±c

+ãĤīãĤĮ ãģŁ

+Ġ à¸ľà¸¹à¹ī

+Ġ à¸Ń

+lar ımız

+Ġkad ın

+Ġê·¸ ëŀĺ

+Ġê·¸ëŀĺ ìĦľ

+ĠëĺIJ ëĬĶ

+ĠÄij ả

+ĠÄijả m

+Ġ×IJ ×ķ×ŀר

+Ġy ếu

+ci Äħ

+ciÄħ g

+Ġt á»ij

+Ġש×IJ ׳×Ļ

+Ġdz iaÅĤa

+Ñī а

+ĠÄij Ãłn

+s ına

+ãģĵãĤĮ ãģ¯

+Ġ×ij ׾×Ļ

+Ġ×ij ×Ļשר×IJ׾

+л оÑģÑĮ

+Ġgi ữ

+ê° IJ

+ÑĢ он

+تج ار

+г лав

+в ин

+Ġh ạn

+Ġyapı lan

+ب س

+Ġ à¸ŀรà¹īà¸Ńม

+ê´Ģ 리

+mÄ±ÅŁ tır

+b ü

+r ück

+ĠBaÅŁkan ı

+ĠÙĦ ÙĬس

+Ġs Æ¡

+à¸Īัà¸ĩ หว

+à¸Īัà¸ĩหว ัà¸Ķ

+د اء

+Ġ×Ķ ׼

+v ÃŃ

+ש ×IJר

+Ġh Æ°á»Łng

+Ġb óng

+ĠCh ÃŃnh

+Äħ c

+à¹Ģà¸ģีà¹Īยว à¸ģัà¸ļ

+Ġtá» ©

+Ġtứ c

+ĠÑĨ веÑĤ

+Ġt á»iji

+ĠnghÄ© a

+ÙĦا عب

+د ÙĦ

+Ġפע ×Ŀ

+h ör

+à¸Ĭ ุà¸Ķ

+à¸ŀ ู

+à¸ŀู à¸Ķ

+п аÑģ

+ĠÅŁ u

+Ġt Æ°á»Łng

+خار ج

+Ġâ m

+ĠинÑĤеÑĢ еÑģ

+ен нÑĭÑħ

+×IJ ׳×Ļ

+بد أ

+ëĿ¼ ëĬĶ

+ì¹ ´

+æĸ¹ ãģĮ

+ли в

+Ġ à¸Ħà¸Ļ

+ער ×ļ

+à¸Ĥà¸Ńà¸ĩ à¸Ħุà¸ĵ

+п ад

+Ġc ạnh

+ĠëĤ ¨

+ĠÄij âu

+Ġbi á»ĥu

+ãĤĤ ãģĤãĤĭ

+׾ ×Ĵ

+Ġ สำหรัà¸ļ

+Ġxu á»ijng

+ס ×ķ

+ĠØ° ات

+ĠÐľ е

+ع اÙĦÙħ

+×IJ ס

+ب ÙĬØ©

+ش ا

+и ем

+ĠNg Æ°á»Ŀi

+íĺ ij

+Ñģл ов

+Ġп а

+Ġm ẫu

+ĠпÑĢоÑĨ еÑģÑģ

+ĠNh Ãł

+пÑĢо из

+пÑĢоиз вод

+à¸łà¸²à¸¢ à¹ĥà¸Ļ

+Ġ à¸ļาà¸Ĺ

+×ŀ ׳×ķ

+ĠоÑĢг ан

+רצ ×ķ

+×ķ×ŀ ×Ļ×Ŀ

+Ġyaz ı

+Ġd ù

+ãĥ¬ ãĥ³

+ÙĪÙĦ ÙĬ

+ย ู

+Ġtr ò

+à¹Ģà¸ŀ ลà¸ĩ

+Ġ×ŀ ׾×IJ

+à¸ķ ล

+à¸ķล à¸Ńà¸Ķ

+ĠÄij ạt

+Ġ×Ĺ×ĵ ש

+p óÅĤ

+Ġ×ŀ ×ĵ×Ļ

+ujÄħ c

+×ŀ׳×Ķ ׾

+Ġש×ij ×ķ

+Ġ×Ķ×ŀש פ×ĺ

+Ġ×IJ ׾×Ķ

+ĠÙĪ Ø°ÙĦÙĥ

+à¹Ģà¸ŀ ราะ

+ĠÄijo Ãłn

+Ġíķ¨ ê»ĺ

+Ġd ục

+ش ت

+Ġ ula

+Ġula ÅŁ

+Ġqu ý

+Ġ×Ķ ×Ĵ×ĵ×ķ׾

+à¸ķัà¹īà¸ĩ à¹ģà¸ķà¹Ī

+Ġש ר

+Ø´ Ùĩد

+׳ ש×Ļ×Ŀ

+à¸ŀ ล

+رÙĪ ا

+ãĤĮ ãģ¦

+Ġн иÑħ

+Ġдел а

+ãģ§ãģį ãģªãģĦ

+ÅĤo ż

+×IJ ×Ĺר

+ì ½Ķ

+ãĤ¢ ãĥĥãĥĹ

+د Ùģع

+Ġti á»ĩn

+Ġkh á»ı

+Ġkhá»ı e

+ĠاÙĦع اÙħØ©

+ãģ« ãģĤãĤĭ

+ĠÄij á»Ļc

+ì¡ ±

+Ġc ụ

+й ÑĤе

+Ġзак он

+ĠпÑĢо екÑĤ

+ìĸ ¸

+ÙĦ ØŃ

+ĠçalÄ±ÅŁ ma

+ãĤĴ ãģĻãĤĭ

+Ñħ и

+ع اد

+Ġ׳ ×ŀצ×IJ

+Ġר ×Ļ

+à¸Ńà¸Ńà¸ģ มา

+ĠT ôi

+Ġth ần

+ĠÙĬ ا

+ล าย

+Ġав ÑĤо

+Ġsı ra

+ĠÙĥ Ø«ÙĬر

+Ùħ ÙĬز

+ĠاÙĦع ÙĦÙħ

+æĸ¹ ãģ¯

+×ķ×¢ ×ĵ

+Ġобла ÑģÑĤи

+×Ļ׾ ×Ļ×Ŀ

+ãģĮ åĩº

+à¸ĺ ุ

+à¸ĺุ ร

+à¸ĺุร à¸ģิà¸Ī

+ÙĤت ÙĦ

+ר×IJ ×ķ

+Ġng u

+Ġngu á»ĵn

+Ġ มา

+Ġпл ан

+t ório

+Ġcu á»iji

+Ñģк ом

+ĠاÙĦÙħ اض

+ĠاÙĦÙħاض ÙĬ

+Ġ×ij×¢ ׾

+Ġר ×ij×Ļ×Ŀ

+Ġlu áºŃn

+Ùĥ ÙĪ

+à¸Ĺัà¹īà¸ĩ หมà¸Ķ

+в ан

+Ġtho ại

+à¹Ħ à¸Ń

+б иÑĢ

+ĠاÙĦ ض

+ت ا

+ĠÑĢ од

+ĠV Ãł

+×ŀ ×Ļף

+ĠбÑĭ ла

+к ами

+ĠÐĶ е

+t ık

+קר ×Ļ

+ĠeÄŁ itim

+ĠÙĥ بÙĬر

+ب Ùĥ

+ĠÙĦ ÙĪ

+в ой

+Ġ ãģĵãģ®

+ĠÑĤ ÑĢÑĥд

+my ÅĽl

+Ġs Æ°

+à¸ŀ ีà¹Ī

+Ġ à¹ģลà¹īว

+ע ק

+Ġ×Ĺ×ijר ת

+ระ หว

+ระหว à¹Īาà¸ĩ

+×Ļ ×Ļ×Ķ

+ĠاÙĦÙĨ اس

+ün ü

+Ġ׾ ×ŀ×Ķ

+Ġch Æ°Æ¡ng

+ĠH á»ĵ

+ار ت

+ãĤĪãģĨ ãģ§ãģĻ

+l á

+ק×Ļ ×Ļ×Ŀ

+æľ¬ å½ĵ

+æľ¬å½ĵ ãģ«

+ãģĵãĤĵ ãģª

+Ñģ ов

+Ġ×ķ ×Ĺ

+à¹Ģà¸ģ à¹ĩà¸ļ

+Ġк ÑĤо

+à¹Ĥร à¸Ħ

+ĠØ´ رÙĥØ©

+ع زÙĬ

+عزÙĬ ز

+Ø·ÙĦ ÙĤ

+п ÑĥÑģÑĤ

+Ùģ تØŃ

+ëŀ Ģ

+Ġhã y

+ض Ùħ

+ë¦ °

+åł´åIJĪ ãģ¯

+ãĤª ãĥ¼

+Ġh ắn

+Ġ×IJ ×ij×Ļ×ij

+Ġש׾×Ķ ×Ŀ

+Ġ×Ķ×Ļ ×Ļת×Ķ

+ĠاÙĦد ÙĪÙĦØ©

+ĠاÙĦ ÙĪÙĤ

+ĠاÙĦÙĪÙĤ ت

+ãģĤ ãģ¾ãĤĬ

+Ġta ÅŁÄ±

+Ä° N

+ע סק

+ãģ¦ ãģĦãģŁ

+Ġtá»ķ ng

+ĠاÙĦØ¥ ÙĨس

+ĠاÙĦØ¥ÙĨس اÙĨ

+ÑĢ еÑĪ

+Ġg ái

+ĠÑĨ ен

+ĠÙģ ÙĤد

+Ùħ ات

+ãģķãĤĵ ãģ®

+Ġph ù

+×ĺ ×Ķ

+ĠÙĪاÙĦ تÙĬ

+Ġب Ùĥ

+ìĿ´ ëĤĺ

+к Ñģ

+Ùħ ÙĬر

+Ġv ùng

+ĠاÙĦØ´ عب

+ĠNh Æ°ng

+ãĥĢ ãĥ¼

+Ġ×Ĺ×Ļ ×Ļ×Ŀ

+ĠØ´ خص

+ק ×ķ×ĵ

+ê² Ģ

+ע ש

+×¢ ×ķ׾×Ŀ

+צ ×ķר

+ع ÙĤد

+ĠiÅŁ lem

+Ġ×Ķ×ij ×IJ

+Ġd ưỡng

+à¸Ł รี

+Ġph ÃŃa

+ãģ®ä¸Ń ãģ§

+Ġп и

+Ġng Ãłnh

+ним а

+ĠÙĩ ÙĦ

+Ġ×ķ ×IJת

+ĠÄij áng

+é quipe

+ĠÑįÑĤ оÑĤ

+Ġgö rev

+ë§ ¤

+Ġqu ân

+å¼ķ ãģį

+æĻĤ ãģ«

+Ġب Ùħا

+×ŀ ×Ļת

+Ġü lke

+Ġ×ŀק ×ķ×Ŀ

+×ij ף

+æ°Ĺ æĮģãģ¡

+Ġë§İ ìĿĢ

+Ġyük sek

+ÑĨ енÑĤÑĢ

+ĠÙħ جÙĦس

+ç§ģ ãģ®

+ÙĤد ر

+Ġë¶Ģ ë¶Ħ

+Ġì° ¨

+خر ج

+ãģĭ ãģªãĤĬ

+ë³´ ëĭ¤

+Ġ×ŀ ×Ļ×ĵ×¢

+peÅĤ ni

+Ġx á»Ń

+ìĹIJìĦľ ëĬĶ

+ĠباÙĦ Ùħ

+ĠÙĪ Ùħا

+ĠÑįÑĤ ой

+ب ÙĬÙĨ

+n ü

+ØŃ Ø²

+ØŃز ب

+ĠÑĢабоÑĤ а

+ĠNh áºŃt

+ÙĦ اء

+Ġëĵ ¤

+Ġëĵ¤ ìĸ´

+ãĤĦãģĻ ãģĦ

+×Ĺ×ĸ ק

+Ġ×Ķ×Ĺ ×ijר×Ķ

+п иÑĤ

+ãģĭãĤī ãģ®

+Ġë§IJ ìĶĢ

+Ġפ ×ķ

+ÙĦ Ùİ

+à¹Ģà¸ķà¹ĩ ม

+ĠÐļ о

+Ġm ówi

+Ġt ÃŃn

+ר×Ĵ ש

+פר ק

+Ġtr ạng

+ĠÐŀ н

+×Ĺ ×ķ×¥

+ĠعÙĨد Ùħا

+Ġب ر

+使 ãģĦ

+Ġr á»Ļng

+ëĮĢ ë¡ľ

+íĪ ¬

+Ġktóry ch

+в ид

+ลูà¸ģ à¸Ħà¹īา

+Ġmog Äħ

+Ġש ×Ĺ

+×ij ×Ĺר

+ãĥĸ ãĥŃãĤ°

+ĠTh Ãłnh

+Ġ×Ķ ר×Ļ

+ĠÑģÑĤ аÑĤÑĮ

+ĠH á»Ļi

+à¸ļ à¹īาà¸ĩ

+çī¹ ãģ«

+ĠÄIJ ức

+èĢħ ãģ®

+×¢ ×ŀ×ķ×ĵ

+×ĺר ×Ķ

+Ð ¥

+ĠÙħ Ùħا

+Ġe ÅŁ

+ĠнеобÑħодим о

+ник ов

+Ġüzer inde

+a ÅĤa

+Ġchá»ĭ u

+ĠاÙĦ دÙĬÙĨ

+أخ بار

+ĠÄij au

+ãģĮ å¤ļãģĦ

+jÄħ cych

+د Ø®ÙĦ

+ları nd

+larınd an

+Ġs ẻ

+à¸ŀิ à¹Ģศ

+à¸ŀิà¹Ģศ ษ

+ת ף

+t ıģı

+Ġlu áºŃt

+ĠÅŀ e

+ãĤ« ãĥ¼

+ãģ® ãģĤãĤĭ

+Ġ×Ķ×IJ תר

+ĠاÙĦØ¢ ÙĨ

+ıld ı

+Ġá o

+ĠнаÑĩ ал

+Ġvi á»ĩn

+Ġ×ij×¢ ×ķ׾×Ŀ

+з наÑĩ

+×Ļ×ĺ ×Ķ

+к ам

+ĠÐĺ з

+à¹Ģà¸Ĥ ียà¸Ļ

+à¸Ļ à¹īà¸Ńà¸ĩ

+ÑĤ ÑĢо

+à¹Ģ à¸Ł

+Ġжиз ни

+Ġ สà¹Īวà¸Ļ

+Ġv áºŃn

+Ġê´Ģ 볨

+Ġl âu

+ס ×ĺר

+ק ש

+س ÙĬر

+Ġ×IJ×ķת ×Ļ

+Ġm ôi

+ائ ب

+Ġо ÑģÑĤа

+Ġm ón

+Ġ×ij ×ŀק×ķ×Ŀ

+Ġد اخÙĦ

+Ġ×IJ ×ķר

+Ġв аÑģ

+Ùĥ Ø´Ùģ

+ìĺ ¨

+à¸ĸ à¹Īาย

+Ġkullan ıl

+Ġt ô

+ãģ« ãĤĪãĤĬ

+ĠëĺIJ íķľ

+Ġ×¢×ij×ķ×ĵ ×Ķ

+Ġri ê

+Ġriê ng

+Ġyak ın

+ز ا

+Å »

+×IJ ×ķ׼׾

+شار Ùĥ

+Ġб еÑģ

+× ´

+Ġا بÙĨ

+ĠTá»ķ ng

+ÙĨ ظ

+ÅĽwi ad

+ãĤµ ãĥ¼

+ห าย

+ĠG ün

+Ġhakk ında

+à¹Ģà¸Ĥà¹īา มา

+ز ÙĨ

+ĠÐł о

+Ġbi á»ĥn

+ãģ© ãģĵ

+Ùģ عÙĦ

+ز ع

+פר ×ĺ

+Ġ×Ķ ף

+Ø£ ÙĩÙĦ

+Ġth ất

+ØŃ ÙħÙĦ

+Ñĩ Ñĥ

+ĠìĤ¬ ìĭ¤

+ì° ¸

+ĠìľĦ íķ´

+ÙĪ ظ

+ĠÐŁ од

+Ġkho ản

+ÑĤ ен

+ĠÙģ اÙĦ

+Ñģ ад

+à¸Ļ à¸Ńà¸Ļ

+ĠاÙĦسعÙĪد ÙĬØ©

+" ØĮ

+ĠاÙĦ ÙĴ

+ãĤī ãģļ

+Ġto án

+Ġch ắc

+׼ ×Ļר

+m éd

+méd ia

+ز ÙĪ

+Ġyan ı

+פ ׳×Ļ×Ŀ

+ØŃ Ø¸

+Ġб еÑģп

+ĠбеÑģп лаÑĤ

+ĠбеÑģплаÑĤ но

+ĠØ£ ÙħاÙħ

+à¸Ń าย

+à¸Ńาย ุ

+ר שת

+Ġg á»ĵ

+Ġgá»ĵ m

+Ġu á»ijng

+ص ب

+k ır

+ãĥij ãĥ¼

+Ġ׾×ĵ עת

+Ġк ÑĥпиÑĤÑĮ

+׾ ×ķ×Ĺ

+ÙĪض ع

+ÙĤÙĬ Ùħ

+à¸Ľ า

+ж ив

+à¸Ķ ิà¸Ļ

+×IJ ×ķפ

+à¹Ģล à¹ĩà¸ģ

+ãĥĥ ãĥī

+иÑĩеÑģки Ñħ

+ĠCh ủ

+кÑĢ аÑģ

+ÙĪ صÙĦ

+p ÅĤat

+м оÑĢ

+Ġ×Ķ×IJ ×ķ

+à¸Ń ิà¸Ļ

+Ġíķľ êµŃ

+гÑĢ е

+Ġìłľ ê³µ

+ì° ½

+Ġê°ľìĿ¸ ìłķë³´

+Ġngh á»ĭ

+à¸ĭ า

+ØŃس اب

+Ġby ÅĤa

+ÙħÙĦ Ùĥ

+иÑĩеÑģки е

+Ġb ác

+ض ØŃ

+ê¸ ¸

+ש ×ŀ×¢

+Ġìĸ´ëĸ »

+Ġìĸ´ëĸ» ê²Į

+ìĽ Į

+ات Ùĩ

+à¹Ĥรà¸ĩ à¹ģ

+à¹Ĥรà¸ĩà¹ģ รม

+خد ÙħØ©

+ĠÐł а

+׼×ķ׾ ×Ŀ

+×ŀש ×Ĺק

+ĠÙĪ ÙĥاÙĨ

+ס ×ķ×£

+ĠاÙĦØŃÙĥÙĪÙħ Ø©

+Ġ×ij ×ĺ

+Ġtr áºŃn

+Ġ×Ķ×¢ ×ķ׾×Ŀ

+ĠÃŃ ch

+t Äħ

+ש×ŀ ×ķ

+Ġ×Ķר×IJש ×ķף

+Ġíķĺ ê³ł

+ãģķ ãĤī

+ãģķãĤī ãģ«

+ãģ« ãģĹãģ¦

+Ġ à¸ľà¸¡

+ãģ® ãĤĪãģĨãģª

+ĠÙĪ ÙĤت

+ãĥį ãĥĥãĥĪ

+ÙĦ عب

+ÙĪ Ø´

+ìĺ ¬

+Ġ หาà¸ģ

+Ġm iaÅĤ

+à¸Ĺ à¸Ńà¸ĩ

+иÑĤ а

+ا صر

+ил ÑģÑı

+з е

+à¸Ľà¸£à¸° มาà¸ĵ

+ãģĿãĤĮ ãģ¯

+Ġb ır

+Ġbır ak

+صÙĨ اع

+Ð ®

+ش عر

+Ġ׳ ×Ĵ×ĵ

+Ġب سبب

+ãĥĿ ãĤ¤

+ãĥĿãĤ¤ ãĥ³ãĥĪ

+ĠاÙĦج ÙĪ

+ĠнеÑģк олÑĮко

+Ġki ếm

+Ùģ Ùİ

+Ġض د

+×ij×Ļ×ĺ ×ķ×Ĺ

+تاب ع

+ÙĨ ز

+ĠB ản

+Ġaç ıkl

+Ġaçıkl ama

+Ġ à¸Ħุà¸ĵ

+à¸Ĺ า

+ÅĤ ów

+ط ب

+ÙĨ ØŃÙĨ

+Ġ×ŀק ×ķר

+ĠÄ° s

+Ġдом а

+Ġ วัà¸Ļ

+Ġd Ãłnh

+Ñı н

+ми ÑĢ

+Ġm ô

+ĠvÃł ng

+ص اب

+s ının

+à¸Ħ ืà¸Ļ

+خ بر

+×ĸ׼ ×ķ

+Ġ×ŀ ש×Ķ×ķ

+m ü

+Ġкомпани и

+Ġ×Ķ×¢ ×Ļר

+ĠÙĥ ÙĪ

+ÙĤÙĦ ب

+ĠlỼ p

+и ки

+׳ ×ij

+à¹Ĥ à¸Ħร

+à¹Ĥà¸Ħร à¸ĩ

+à¹Ĥà¸Ħรà¸ĩ à¸ģาร

+×ŀ×ķ×¢ ×ĵ

+ÑıÑĤ ÑģÑı

+หลัà¸ĩ à¸Īาà¸ģ

+ени Ñİ

+Ġש ×¢

+Ġb Æ°á»Ľc

+ãĥ¡ ãĥ¼ãĥ«

+ãĤĦ ãĤĬ

+Ġ×Ļ×ķ×ĵ ×¢

+Ġê´Ģ íķľ

+ĠاÙĦØ£ Ùħر

+Ġböl ge

+ĠÑģв ой

+ÙĦ س

+Ġ×ŀ×Ļ ×ķ×Ĺ×ĵ

+ĠëĤ´ ìļ©

+ĠØ£ جÙĦ

+ĠÄIJ ông

+Ġ×ŀ ×ł×ª

+Ġìĭľ ê°Ħ

+Ùĥ Ùİ

+ãģ¨ãģĦãģĨ ãģ®ãģ¯

+Ġnale ży

+تÙĨظ ÙĬÙħ

+ĠÑģозд а

+Ġph é

+Ġphé p

+ãģ§ãģį ãģ¾ãģĻ

+Ġع ÙĦÙħ

+大ãģį ãģª

+ãĤ² ãĥ¼ãĥł

+í ħĮ

+Ġ׼×ķ׾ ׾

+ĠинÑĤеÑĢ неÑĤ

+ĠT ừ

+ãģ¨ ãģªãĤĭ

+ز اÙĦ

+Ġktóry m

+Ġnh é

+ìĪ ľ

+н ев

+д еÑĢ

+ãĤ¢ ãĥĹãĥª

+i á»ĩu

+×ij ×Ļ׾

+Ġت س

+ĠÄIJ ây

+ĠاÙĦØ® اصة

+Ġà¹Ģ à¸Ĭ

+Ġà¹Ģà¸Ĭ à¹Īà¸Ļ

+ص اد

+Ġd ạng

+س عر

+Ġש ×Ļ×ŀ×ķש

+×Ĵ ×Ļ×Ŀ

+ãģĮãģĤ ãģ£ãģŁ

+п ÑĢов

+пÑĢов од

+Ġ×IJ ×Ļ׳×ķ

+Ġ׾ ר×IJ

+Ġ׾ר×IJ ×ķת

+ĠØ£ ÙģضÙĦ

+ĠØŃ ÙĦ

+ĠØ£ بÙĪ

+ê° ķ

+Ġì§ ij

+ãģ® ãĤĪãģĨãģ«

+Ġפ ׳×Ļ

+ס ×Ļ×Ŀ

+ĠÙĪÙĩ ذا

+Ġka ç

+Ġé én

+Ġê± ´

+ë° Ķ

+Ñĥ з

+à¸Ĥà¸Ńà¸ĩ à¹Ģรา

+i ÅĤ

+ĠÐľ Ñĭ

+Ġch ết

+ĠاÙĦØ« اÙĨÙĬ

+×IJ ק

+Ġ×ķ ×¢×ľ

+ĠاÙĦØ· ب

+×ij×ĺ ×Ĺ

+Ġج دÙĬدة

+Ġع دÙħ

+ع ز

+สิà¹Īà¸ĩ à¸Ĺีà¹Ī

+ãģĻ ãĤĮãģ°

+ĠÄij ô

+ì£ ł

+د ÙĤ

+н омÑĥ

+Ġk á»ĥ

+ãĤ¢ ãĥ³

+å¤ļãģı ãģ®

+à¸Ľà¸£à¸° à¸ģ

+à¸Ľà¸£à¸°à¸ģ à¸Ńà¸ļ

+פע×Ļ׾ ×ķת

+ĠÑģÑĤ ол

+may ı

+ãģ¤ ãģĦ

+Ġyılı nda

+Ġ à¸Īึà¸ĩ

+koÅĦ cz

+ĠTh ông

+Ġак ÑĤив

+н ÑģÑĤ

+нÑģÑĤ ÑĢÑĥ

+ĠÃĸ z

+Ġת ×ŀ×Ļ×ĵ

+ĠÙĥ ÙĨت

+Ñģ иÑģÑĤем

+pr és

+prés ent

+Ġn â

+Ġnâ ng

+gÅĤ os

+ĠÙĪز ÙĬر

+ØŃ ØµÙĦ

+Ġиме еÑĤ

+ØŃ Ø±ÙĥØ©

+à¸ŀ à¹Īà¸Ń

+ãĤĴ ãģĬ

+Ġاست خداÙħ

+×IJ×Ļר ×ķ×¢

+ä»ĸ ãģ®

+Ġש×Ķ ×Ŀ

+ãģĹãģŁ ãĤī

+ש×ŀ ×Ļ

+Ñģ ла

+m ı

+Ġbaz ı

+Ġíķĺ ì§Ģë§Į

+×ĵ ׾

+Ġyapt ıģı

+ãĥĬ ãĥ¼

+׾ ×Ļ׾×Ķ

+ãģ¨ãģĦ ãģ£ãģŁ

+änd ig

+ĠÅŁ a

+ĠÙģÙĬ Ùħا

+иÑĤ елÑı

+×ŀ ×ķש

+à¸Ĥ à¸Ńà¸ļ

+l ük

+Ġh á»ĵi

+Ġëª ħ

+ĠاÙĦÙĥ Ø«ÙĬر

+צ ×IJ

+Ġhaz ır

+طر Ùģ

+ا ÙĬا

+ĠÄij ôi

+ен д

+ÙĦ غ

+×Ĺ ×ĸ×ķר

+ĠвÑģ ег

+ĠвÑģег да

+ëIJĺ ê³ł

+×ĵ ×ķ×ĵ

+ан а

+د ÙĪÙĦØ©

+Ġho ạch

+ع ÙĦا

+عÙĦا ج

+Ġ×ķ ×¢×ĵ

+×Ķ ×Ŀ

+ки й

+ÙĦ ÙIJ

+Ġ×¢ ׾×Ļ×ķ

+ÑİÑī ий

+Ġng ủ

+صÙĨ ع

+ĠاÙĦع راÙĤ

+à¸ķà¹Īà¸Ń à¹Ħà¸Ľ

+ãģŁãģı ãģķãĤĵ

+Ġph ạm

+ÙĦ اÙĨ

+ات Ùĩا

+Ġbö yle

+تÙĨ ÙģÙĬ

+تÙĨÙģÙĬ Ø°

+Ġש×Ķ ×Ļ×IJ

+Ñģ Ñĥ

+ย าว

+Ġש ×ķ׳×Ļ×Ŀ

+Ġ×ŀ ×ķ׾

+ĠÑģ ил

+Ġ×IJ×Ĺר ×Ļ×Ŀ

+Ġph ủ

+ÙĤØ· ع

+ĠTh ủ

+à¸Ľà¸£à¸°à¹Ģà¸Ĺศ à¹Ħà¸Ĺย

+ÙĨ ÙĤ

+ĠÄijo ạn

+Ġب Ø¥

+п ÑĢедел

+×ķת ×ķ

+Ġy arı

+пÑĢ е

+ĠczÄĻ ÅĽci

+ØŃ ÙĥÙħ

+×ķ׳ ×Ļת

+פע ׾

+ãĤĴ ãģĹãģ¦

+Ġktó rzy

+׾ ×Ŀ

+ĠÄIJi á»ģu

+ĠкоÑĤоÑĢ аÑı

+ĠìĿ´ ìĥģ

+ãģĤ ãģ£ãģŁ

+Ġ×ŀ×ĵ ×ķ×ijר

+פ ×ķ×¢×ľ

+d ım

+éĢļ ãĤĬ

+ĠбÑĥд ÑĥÑĤ

+à¹Ģวà¹ĩà¸ļ à¹Ħà¸ĭ

+à¹Ģวà¹ĩà¸ļà¹Ħà¸ĭ à¸ķà¹Į

+ا خر

+×Ĺ ×Ļ׾

+Ġ×Ļ ׾

+Ġ×Ļ׾ ×ĵ×Ļ×Ŀ

+×Ĺ ×Ļפ

+×Ĺ×Ļפ ×ķש

+Ġd òng

+Ġש ×ĸ×Ķ

+ÑĮ е

+ãģĤ ãģ¨

+ìŀIJ ê°Ģ

+×IJ ×ĵ

+Ġü z

+Ġüz ere

+ظ ÙĦ

+Ġ×IJ ×ķ׾×Ļ

+Ġ×ij ×Ļ×ķ×Ŀ

+ÙĦ ات

+Ġm ê

+ì¹ ¨

+تØŃ Ø¯

+تØŃد Ø«

+ĠØ® اصة

+Ġب رÙĨ

+ĠبرÙĨ اÙħج

+ĠH Ãłn

+×Ĺ ×¡

+ĠÙĪ ÙĦÙħ

+×¢ ×Ŀ

+Ġm ı

+à¸Ł ัà¸ĩ

+ש ×¢×Ķ

+ÙĪÙģ ÙĤ

+ס ×ij×Ļר

+алÑĮ нÑĭй

+×Ĺש ×ķ×ij

+Ġn Ãłng

+ë³ ¼

+ĠкоÑĤоÑĢ ÑĭÑħ

+Ġ×Ĺ ×ķק

+t ör

+ĠлÑĥÑĩ ÑĪе

+ãĥij ãĥ³

+ลà¹Īา สุà¸Ķ

+Ġج دÙĬد

+ÙĬد Ø©

+à¸Ĺ รà¸ĩ

+ãĤĪãĤĬ ãĤĤ

+ÙĦ ÙĦ

+ãĤĤ ãģ£ãģ¨

+ש×ĺ ×Ĺ

+Ġ×ķ ×IJ×Ļ

+Ġgi á»ijng

+Ø¥ ضاÙģ

+ק ת

+ë§ Ŀ

+Ġzosta ÅĤ

+ÑĢ оз

+×Ļפ ×Ļ×Ŀ

+Ġ׼׾ ׾

+ת×ķ׼ ף

+dıģ ını

+ÙĤ سÙħ

+ĠÑģ ÑĩиÑĤ

+ĠÑģÑĩиÑĤ а

+×ĺ ×ķת

+Ġ Æ°u

+ĠØ¢ ÙĦ

+Ġм ом

+Ġмом енÑĤ

+ĠاÙĦتع ÙĦÙĬÙħ

+×¢×ľ ×ķת

+Ġch ữa

+Ġy ön

+Ġtr Ãł

+ĠØŃ ÙĬÙĨ

+à¸ĭ ั

+ĠC á

+×¢ ×ĸ

+ĠاÙĦØ£ ÙħÙĨ

+c ÃŃ

+Ġv á»ijn

+Ġ à¸Ļาย

+об ÑĢа

+ק ×IJ

+Ġthi ếu

+ãĥŀ ãĥ¼

+ส วà¸Ļ

+Ġg á»Ń

+Ġgá»Ń i

+Ġê ¹

+Ġê¹ Ģ

+Ġthi á»ĩn

+ÙĤ ع

+w ÄĻ

+Ġн ам

+ÑĤ ол

+Ġs ân

+ס ×ķ×Ĵ

+Ġgeç ir

+ÑĤ он

+ев а

+ĠÙĪ ضع

+Ġع شر

+Ñģ ло

+à¸Ī ัà¸ļ

+ãĤ· ãĥ¼

+ãĤĤ ãģĤãĤĬãģ¾ãģĻ

+Ġv ẻ

+ĠÄIJ á»ĥ

+ر Ùģع

+ĠاÙĦØ£ÙĪÙĦ Ùī

+ÑĤ аÑĢ

+ãģªãģı ãģ¦

+Ùħ Ùİ

+qu ÃŃ

+×¢×ł×Ļ ×Ļ׳

+г ен

+Ġh ôm

+à¸Ī า

+Ġnh Ỽ

+ĠاÙĦع ربÙĬ

+×IJ ף

+Ġl á»Ļ

+Ġje ÅĽli

+à¹Ģà¸Ĺà¹Īา à¸Ļัà¹īà¸Ļ

+ĠØ£ÙĨ Ùĩا

+Ġt uy

+Ġtuy á»ĩt

+Ġت ص

+Ġتص ÙĨÙĬ

+ĠتصÙĨÙĬ Ùģ

+Ġê·¸ëŁ¬ ëĤĺ

+о ÑĨен

+à¸ģิà¸Ī à¸ģรรม

+ãĤĦ ãģ£ãģ¦

+Ġkh á»ıi

+Ġl á»ĩ

+ĠاÙĦÙħج تÙħع

+à¸Ńาà¸Ī à¸Īะ

+à¸Īะ à¹Ģà¸Ľà¹ĩà¸Ļ

+ов Ñĭй

+ר ×Ŀ

+ร à¹īà¸Ńà¸Ļ

+ש ×ŀש

+人 ãģ«

+Ġüzer ine

+פר ×Ļ

+du ÄŁu

+Ñĩ ик

+Ġmù a

+Ġ×ŀת ×ķ×ļ

+Ġc áºŃp

+Ġت ارÙĬØ®

+×ij׾ ת×Ļ

+Ġì¢ Ģ

+ÙĦ ع

+ب اÙĨ

+Ġch út

+Ġ×Ķ×ĸ ×ŀף

+n ée

+ĠLi ên

+ĠÙĦÙĦ Ø£

+ØŃد ÙĪد

+Ġ×¢ ׼ש×Ļ×ķ

+в оз

+Ġyapt ı

+Ġоб о

+à¹ĥหà¹ī à¸ģัà¸ļ

+Ġ×ij×Ķ ×Ŀ

+ãģı ãģ¦

+ر أس

+ĠÑģÑĢед ÑģÑĤв

+ĠB Ãłi

+ãģĵãģ¨ ãģ«

+ĠìĤ¬ íļĮ

+Ġ모 ëijIJ

+×ij ×IJ

+Ġtr ắng

+ĠاÙĦبÙĦ د

+ĠHo Ãłng

+ли бо

+ĠдÑĢÑĥг иÑħ

+Ä° R

+Ñĥм а

+ĠJe ÅĽli

+ãĤĤ ãģĹ

+Ġv òng

+Ġ×IJתר ×Ļ×Ŀ

+ĠÄij á»įc

+Ġв оÑĤ

+ãģł ãģĮ

+ë° °

+à¸Ķู à¹ģล

+Ġ×ŀ ׼׾

+ìĹIJ ëıĦ

+г аз

+Ġ׳×ķס פ×Ļ×Ŀ

+ãģĵãģ¨ ãģ§

+Ġت ÙĪ

+ãģ§ ãģĤãĤĬ

+à¸Ļั à¹Īà¸ĩ

+ĠможеÑĤ е

+sz ÄĻ

+ãģ® ãģł

+ĠÙħÙĨ Ùĩ

+Ġb á»ķ

+Ġb üt

+Ġbüt ün

+ë³´ ê³ł

+Ġch á»ĵng

+à¹ģà¸Ī à¹īà¸ĩ

+ĠV ì

+ĠØŃ Ø±

+Ġgi ản

+ĠÙħ دÙĬÙĨØ©

+تط بÙĬÙĤ

+à¸Ī ิ

+æĹ¥ ãģ®

+б ил

+à¸ģ à¸Ńà¸ĩ

+ê³ ³

+ĠØ£ Ùħا

+ìĨ IJ

+Ġtr ái

+ĠвÑģ ем

+Ġس ÙĨØ©

+ĠÑģай ÑĤ

+Ġг оÑĤов

+п Ñĭ

+ĠëIJ ł

+ĠاÙĦØ® Ø·

+ĠاÙĦرئÙĬس ÙĬØ©

+Ġíķ ©ëĭĪëĭ¤

+ĠìķĦëĭĪ ëĿ¼

+ĠìĿ´ ëłĩ

+ĠìĿ´ëłĩ ê²Į

+) ØĮ

+h ält

+ĠØ£ Ùħر

+Ġع Ùħر

+à¸ģà¹ĩ à¸Īะ

+Ġ à¸Ĺำà¹ĥหà¹ī

+Ġc ân

+Ġ×ij ׾

+Ġ×ij׾ ×ij×ĵ

+פ סק

+ĠÙĬ ÙĤÙĪÙĦ

+н ÑĥÑĤÑĮ

+à¹ģ à¸Ħ

+Ġק צת

+Ġn ằm

+Ġh òa

+bilit Ãł

+ĠìĹĨ ëĭ¤

+Ġ׼ פ×Ļ

+ÑĢ ож

+лаг а

+Ġ×Ķש ×Ļ

+ĠNgo Ãłi

+ĠÙĪ ج

+ĠÙĪج ÙĪد

+ĠìľĦ íķľ

+Ġus ÅĤug

+Ġtu ần

+d ź

+×ŀ ×ķף

+ĠاÙĦع دÙĬد

+Ġch ẳng

+สุà¸Ĥ à¸łà¸²à¸ŀ

+Ġ×ij ×ĵר×ļ

+ĠÑģеб е

+ĠìŀĪ ìĿĦ

+ĠاÙĦØŃ Ø§ÙĦ

+Ġd á

+Ġc Æ°á»Ŀi

+Ġnghi ên

+ie ÅĦ

+ĠD Æ°Æ¡ng

+ï¼ ħ

+ش د

+ãģĦãģ¤ ãĤĤ

+ĠвÑĭб оÑĢ

+Ġc á»Ļng

+ש ×Ļ׳×ķ×Ļ

+Ġch ạy

+Ġ×ij×¢ ׾×Ļ

+اخ بار

+íķĺ ë©°

+ż Äħ

+ج از

+Ġ׳ ר×IJ×Ķ

+ศ ู

+ศู à¸Ļ

+ศูà¸Ļ ยà¹Į

+×Ĵ ×¢

+Ġ×¢ ×ĵ×Ļ

+Ġ×¢×ĵ×Ļ ×Ļף

+بر ا

+ÑĨи й

+ĠÄIJ á»ĵng

+ÙĤ اÙĨÙĪÙĨ

+ĠÄij ứng

+ãģĹãģŁ ãĤĬ

+Ġ×Ĺ×Ļ ×Ļ

+Ġë IJľ

+ĠëIJľ ëĭ¤

+Ġм еждÑĥ

+à¸ŀวà¸ģ à¹Ģà¸Ĥา

+ĠB ắc

+ล ำ

+ë° ±

+ĠíĻ ķ

+มาà¸ģ ม

+มาà¸ģม าย

+бан к

+à¸Ńา à¸ģาร

+Ġh Ãł

+Ġ׾ ׳

+à¸Ń à¸Ń

+Ġë°Ķ ë¡ľ

+л ом

+m ática

+ĠØŃ Ø¯

+اب ت

+à¸Ĺีà¹Ī à¸Ļีà¹Ī

+Ġco ÅĽ

+ÙģÙĬ دÙĬ

+ÙģÙĬدÙĬ ÙĪ

+ĠмеÑģÑĤ о

+Ġph út

+มาà¸ģ à¸ģวà¹Īา

+×IJ פ

+ب ÙIJ

+ĠPh ú

+ì± Ħ

+ĠÙĪ سÙĦÙħ

+à¸Īี à¸Ļ

+поÑĤ ÑĢеб

+Ġ×Ĺ×ĵ ש×ķת

+Ø´ ÙĪ

+Ġעצ ×ŀ×ķ

+ĠعÙħÙĦ ÙĬØ©

+à¸Ħุà¸ĵ à¸łà¸²à¸ŀ

+ãģ¾ãģĻ ãģĮ

+دع ÙĪ

+طر ÙĤ

+à¹Ħมà¹Ī à¸ķà¹īà¸Ńà¸ĩ

+ë² Ķ

+ìĬ ¹

+Ġk ÃŃch

+ĠìĹĨ ëĬĶ

+ĠÑĤ ам

+ĠÙĨ ØŃÙĪ

+ĠاÙĦÙĤ اÙĨÙĪÙĨ

+×Ĺ ×ķ×Ŀ

+Ġk ız

+Ġ×ĵ ×Ļף

+ĠвÑĢем ени

+ãģ£ãģŁ ãĤĬ

+ĠØ´ Ùĩر

+ĠìĦľ ë¹ĦìĬ¤

+×¢ ש×Ķ

+Ġgi ác

+ĠاÙĦسÙĦ اÙħ

+Ġ×IJ ש

+ĠполÑĥÑĩ а

+à¸Īัà¸Ķ à¸ģาร

+к оÑĢ

+Ġ×Ķ×ĺ ×ķ×ij

+ราย à¸ģาร

+주 ìĿĺ

+à¹ģà¸ķà¹Ī ละ

+Ġê·¸ëŁ° ëį°

+à¸Ĺีà¹Ī à¹Ģà¸Ľà¹ĩà¸Ļ

+Ġת ×ķ×ļ

+بÙĬ اÙĨ

+Ð Ļ

+oÅĽci Äħ

+ÑĤ ок

+ĠÃ Ķ

+ĠÃĶ ng

+à¹Ħมà¹Ī à¹ĥà¸Ĭà¹Ī

+ãģ¿ ãģ¦

+ÐŁ о

+ĠЧ ÑĤо

+íĻ ©

+×ĺ ×ij×¢

+меÑĤ ÑĢ

+Ġ×ij ×ŀ×Ķ

+Ġ×ij×ŀ×Ķ ׾

+Ġ×ij×ŀ×Ķ׾ ×ļ

+Ñĩ ÑĮ

+ק ש×Ķ

+з нак

+знак ом

+uj ÄĻ

+×Ļצ ר

+ĠاÙĦÙħ ÙĦÙĥ

+ı yla

+×IJ×ŀ ת

+à¸Ľ ิà¸Ķ

+×IJ ×Ĺ×ĵ

+ر اد

+Ġm áºŃt

+ëĭ¤ ëĬĶ

+Ġl ạnh

+ש׾ ×ķש

+ØŃ Ø¯ÙĬØ«

+ت ز

+å¹´ ãģ®

+Ġк ваÑĢ

+ĠкваÑĢ ÑĤиÑĢ

+ä½ľ ãĤĬ

+رÙĪ ب

+ов ан

+ĠТ е

+à¸Īำ à¸ģ

+à¸Īำà¸ģ ัà¸Ķ

+ب اط

+×Ĵ ת

+Ġм аÑĪ

+ĠмаÑĪ ин

+×Ļצ ×Ķ

+ãģ» ãģ¨

+ãģ»ãģ¨ ãĤĵãģ©

+ÃŃ do

+ĠÑı зÑĭк

+à¸ļ ิà¸Ļ

+สà¸ĸาà¸Ļ à¸Ĺีà¹Ī

+ĠìĹ ´

+ãĤ¦ ãĤ§

+Ġc Ãł

+п ан

+åı£ ãĤ³ãĥŁ

+Ġر د

+اÙĤ ت

+ĠÙĥ ب

+ĠÙĥب ÙĬرة

+ÑģÑĤ ал

+ש×ŀ ×Ĺ

+pos ición

+ĠÙħÙĦÙĬ ÙĪÙĨ

+ĠìĿ´ ìķ¼

+ĠìĿ´ìķ¼ 기

+Ġh út

+ĠÅĽw iat

+Ġë°© ë²ķ

+ĠÑģв еÑĤ

+Ġвиде о

+ĠاÙĦÙĨ ظاÙħ

+Ġtr á»Ŀi

+ĠëĮĢ íķ´ìĦľ

+ר ×ŀת

+ت داÙĪÙĦ

+×ķר ×ĵ

+ת ×ŀ

+ת×ŀ ×ķ׳×ķת

+Ġ×ŀ ף

+Ġдв а

+Ġ×Ķק ×ķ

+æĹ¥ ãģ«

+Ġ×Ķ×Ĵ ×Ļ×¢

+à¹Ģà¸ŀิà¹Īม à¹Ģà¸ķิม

+Ùħار س

+Ġê²ĥ ìŀħëĭĪëĭ¤

+ãģªãģĦ ãģ¨

+Ġnhi á»ĩt

+ëIJ ©ëĭĪëĭ¤

+Ġ×ij׳ ×ķש×IJ

+Ġê°Ģ ìŀ¥

+Ġv ợ

+ĠÄij óng

+צ×Ļ׾ ×ķ×Ŀ

+ê´Ģ ê³Ħ

+в аÑı

+×IJ ×Ļ×ĸ

+×IJ×Ļ×ĸ ×Ķ

+ĠÙĨ ظاÙħ

+ÙħØŃ Ø§Ùģظ

+Ġt ải

+기 ëıĦ

+à¸Ľà¸±à¸Ī à¸Īุ

+à¸Ľà¸±à¸Īà¸Īุ à¸ļัà¸Ļ

+׼ ×ĵ×ķר

+ĠìķĦ ìĿ´

+׼׳ ×Ļס

+à¹Ģ à¸ķร

+à¹Ģà¸ķร ียม

+Ġngo ại

+ĠدÙĪÙĦ ار

+Ġr ẻ

+Ġkh Äĥn

+عد د

+ش عب

+czy Äĩ

+ĠاÙĦ Ùĥر

+ĠÑĩеловек а

+ĠÙĪ Ø¥ÙĨ

+×IJ ×ĺ

+Ġth Æ¡

+ĠاÙĦ رÙĬاض

+оп ÑĢедел

+опÑĢедел ен

+×Ķ ×ŀש×ļ

+ĠÐĿ ово

+з Ñĭва

+ĠاÙĦدÙĪÙĦ ÙĬ

+ĠÄij áp

+Ġк ÑĢед

+ĠкÑĢед иÑĤ

+ов ого

+Ġm ôn

+à¸Ľà¸£à¸° à¹Ĥย

+à¸Ľà¸£à¸°à¹Ĥย à¸Ĭà¸Ļ

+à¸Ľà¸£à¸°à¹Ĥยà¸Ĭà¸Ļ à¹Į

+ÑģÑĤ е

+ĠTh á»ĭ

+د ÙĬØ©

+×ŀצ ×ķ

+Ùģ ات

+ק ×ĵ×Ŀ

+ìĿ´ëĿ¼ ê³ł

+ÙĪ Ø®

+Ġ×Ĺ ×ĸ

+ĠÑĦоÑĤ о

+׾ ×Ļת

+ت Ùİ

+ÙĪ بر

+й ÑĤи

+ĠÃ¶ÄŁ ren

+Ġ×Ķ×ĸ ×ķ

+Ġv á»įng

+ÙĤÙĪ Ø©

+ĠT ây

+ĠÐĿ и

+Ġש ×ķ×ij

+ãģ¨è¨Ģ ãĤıãĤĮ

+ãģ© ãĤĵãģª

+׊צ×Ļ

+ï½ ľ

+Ġ×ķ×Ķ ×ķ×IJ

+ä¸Ģ ãģ¤

+ĠÑģÑĤо иÑĤ

+ni Äħ

+×ĺר ×Ļ

+ĠдеÑĤ ей

+нÑı ÑĤÑĮ

+ĠÑģдел аÑĤÑĮ

+Ġë§İ ìĿ´

+ä½ķ ãģĭ

+ãģĽ ãĤĭ

+à¹Ħ หม

+à¸ķิà¸Ķ à¸ķà¹Īà¸Ń

+Ġ×ij ת×Ĺ

+Ġ×ijת×Ĺ ×ķ×Ŀ

+ìĻ Ħ

+ì§Ģ ëĬĶ

+ÑģÑĤ аÑĤ

+ÑıÑģ н

+ü b

+Ġth ả

+Ġ×ij×IJ×ŀ ת

+Ġt uyến

+×ĵ ×Ļר×Ķ

+Ġ×IJ ×Ļש×Ļ

+×ĸ׼ ר

+ãģ° ãģĭãĤĬ

+Ġx ét

+׼ ×Ļ×ķ

+׼×Ļ×ķ ×ķף

+diÄŁ ini

+ĠاÙĦÙħ ÙĪضÙĪع

+Ġh áºŃu

+à¸Īาà¸ģ à¸ģาร

+×ijס ×Ļס

+Ġ×ŀ×Ĵ ×Ļ×¢

+×ij ×Ļ×¢

+ĠÙĪ جÙĩ

+à¹ģà¸Ķ à¸ĩ

+à¸Ļ าà¸ĩ

+ĠÅŀ a

+ì ¡´

+ë¡ Ģ

+à¸ķ ะ

+Ġ×Ķ×Ĺ×Ļ ×Ļ×Ŀ

+Ùģ ÙĬد

+ãģ§ãģĻ ãģĭãĤī

+ê· ľ

+ź ni

+ĠлÑİ Ð´ÐµÐ¹

+Ġyüz de

+ıy orum

+ĠاÙĦ بØŃر

+e ño

+п аÑĢ

+ÙĬ ÙĤØ©

+об ÑĢ

+ר ×ķ×ļ

+ت ÙĪÙĤع

+ĠاÙĦØ´ ÙĬØ®

+åĪĿ ãĤģãģ¦

+ĠÑĤ елеÑĦ

+ĠÑĤелеÑĦ он

+Ġth ôi

+Ġ×Ļ׼×ķ׾ ×Ļ×Ŀ

+ĠÅŁ irk

+ĠÅŁirk et

+Ġìļ°ë¦¬ ê°Ģ

+ĠÄij ông

+Ġת ×ķ×ĵ×Ķ

+ÑģмоÑĤÑĢ еÑĤÑĮ

+ĠÙĦ ÙĩÙħ

+Ġ׾ ׼

+ĠN ó

+ĠØŃ Ø§ÙĦØ©

+ãģĦ ãģij

+קר ×ķ

+az ı

+ãĤ³ ãĥ¼

+ĠÙĦÙĦ ت

+s ınız

+ĠH ải

+기 ìĪł

+ยัà¸ĩ à¹Ħมà¹Ī

+ëĭ¤ ê³ł

+פ ×Ĺ

+Ġ׾×Ĵ ×ij×Ļ

+Ġع ÙĨÙĩ

+Ġк аз

+Ġказ ино

+ب ÙĪر

+ÑĦ еÑĢ

+Ġê°Ļ ìĿ´

+تس جÙĬÙĦ

+ĠاÙĦÙħ رÙĥز

+ĠTh ái

+д аÑĤÑĮ

+×ŀ×Ļ ×Ļ׾

+Ġpay laÅŁ

+ãģ¤ ãģ®

+à¹Ģร ืà¸Ń

+n ça

+׳ ×ķ×Ĺ

+Ġ×IJ פ×Ļ׾×ķ

+ãģ¨ èĢĥãģĪ

+ãģ¨ãģĹãģ¦ ãģ¯

+à¹Ģà¸Ī à¸Ń

+×ŀ פ

+Ġg iriÅŁ

+л иÑĤ

+ÑĤ елÑı

+Ñij н

+æ°Ĺ ãģ«

+Ġg ó

+Ġgó p

+åĪĩ ãĤĬ

+Ġ×Ķ ×Ĺ×ĵש

+ж ал

+Ġ×ĵ עת

+éģķ ãģĨ

+à¹Ģà¸Ĥà¹īา à¹Ħà¸Ľ

+Ġס ר×ĺ

+e ña

+æĸ° ãģĹãģĦ

+ر Ùİ

+ĠÐIJ ÑĢ

+Ġph ản

+à¸Īะ à¹Ħà¸Ķà¹ī

+Ġ×ijצ ×ķר×Ķ

+Ø´ اÙĩ

+شاÙĩ د

+ÙĪر د

+à¹Ģà¸Ļืà¹Īà¸Ńà¸ĩ à¸Īาà¸ģ

+или ÑģÑĮ

+à¹ģละ à¸ģาร

+Ġ×Ķ ×ĸ׼

+Ġ×Ķ×ĸ׼ ×ķ×Ļ×ķת

+ei ÃŁ

+ãĥ ¨

+ìĥ Ī

+ĠÃĩ a

+Æ ¯

+ש ×Ĵ

+ÙĬÙĨ Ø©

+ร à¹īà¸Ńà¸ĩ

+ãĤµ ãĥ³

+ÑĢоÑģÑģ ий

+ÑĢоÑģÑģий Ñģк

+a ÄŁa

+ĠнаÑĩ ина

+Ġص ÙĦÙī

+à¸Ĺุà¸ģ à¸Ħà¸Ļ

+íļĮ ìĤ¬

+Ġли ÑĨ

+Ø´ ÙĬر

+ĠØ´ÙĬ Ø¡

+ÙĬÙĨ ا

+Ġפ ×Ĺ×ķת

+Ġiçer is

+Ġiçeris inde

+ĠØ£ ØŃÙħد

+Ġże by

+ì´ Ŀ

+Ġп оказ

+Ġи менно

+หà¸Ļัà¸ĩ ส

+หà¸Ļัà¸ĩส ืà¸Ń

+ĠÑĤÑĢ е

+สัà¸ĩ à¸Ħม

+Ø¥ ÙIJ

+ãģĮ å¿ħè¦ģ

+ÙĬÙij Ø©

+פ צ

+íĭ °

+ĠÙħ جاÙĦ

+׳ פש

+к ан

+×Ĺ ×ķפ

+×Ĺ×ķפ ש

+ì²ĺ ëŁ¼

+ов аÑı

+з ов

+Ġh ạ

+Ġdzi ÄĻki

+×Ļר ×ķ

+Ġ׾ ×ŀצ

+Ġ׾×ŀצ ×ķ×IJ

+×Ļ×ĵ ×ķ

+Ġs ợ

+Ġ׾×Ķ ×Ĵ×Ļ×¢

+ק ×ij×¢

+Ġchi á»ģu

+ãĥŀ ãĤ¤

+Ġd Ãłng

+à¹ģà¸Ł à¸Ļ

+Ġü ye

+×Ļ׳ ×Ĵ

+à¹Ģรีย à¸ģ

+ç§ģ ãģĮ

+th é

+ĠÑĦ илÑĮ

+ĠÑĦилÑĮ м

+ĠNg Ãły

+Ġж ен

+Ġжен Ñīин

+ج ÙĬد

+n ç

+à¸Ľ รา

+×Ļ×ŀ ×ķ

+Ġn á»ģn

+×IJ ×ķ׾×Ŀ

+Ġвозмож ноÑģÑĤÑĮ

+Ġëĭ¤ ìĭľ

+è¦ĭ ãģŁ

+à¸ĸ à¸Ļ

+à¸ĸà¸Ļ à¸Ļ

+mız ı

+ĠÙħ جÙħÙĪعة

+c jÄħ

+ĠÐł Ф

+à¸ģำ หà¸Ļ

+à¸ģำหà¸Ļ à¸Ķ

+ĠìŬ 기

+land ı

+ни ÑĨ

+ÑģÑĤв е

+Ġ×ĵ ×ijר×Ļ×Ŀ

+Ġsk ÅĤad

+ãĤĬ ãģ¾ãģĹãģŁ

+ĠоÑĤ кÑĢÑĭÑĤ

+нÑı ÑĤ

+ĠÑģво ей

+à¸Ī ิà¸ķ

+ĠкаÑĩеÑģÑĤв е

+Ġet tiÄŁi

+ìĤ¬ íķŃ

+ĠاÙĦÙĬ ÙħÙĨ

+иÑĩеÑģки й

+ë¸ Į

+Ġ×ij×IJר ×¥

+Ġا سÙħ

+Ġиз веÑģÑĤ

+r ão

+Ġatt ivitÃł

+à¹Ģà¸Ľà¹ĩà¸Ļ à¸ģาร

+ĠاÙĦد Ùĥت

+ĠاÙĦدÙĥت ÙĪر

+ĠÙĪاØŃد Ø©

+ĠÑģ ÑĩеÑĤ

+ĠпÑĢ иÑĩ

+ĠпÑĢиÑĩ ин

+ĠÙĪز ارة

+Ġh uyá»ĩn

+ĠÙĥ تاب

+à¹ģà¸Ļ à¹Īà¸Ļ

+à¹ģà¸Ļà¹Īà¸Ļ à¸Ńà¸Ļ

+Ġgün ü

+г ÑĢÑĥз

+ĠاÙĦØ® اص

+Ġgör ül

+׾ ×ŀ×ĵ

+Ġìłķ ëıĦ

+×ķ×ij ×Ļ׾

+Ġ×ŀק צ×ķ×¢×Ļ

+ĠоÑģоб енно

+à¸Ľà¸£à¸° à¸ģา

+à¸Ľà¸£à¸°à¸ģา ศ

+aca ģını

+ë¶ ģ

+à¸łà¸¹ มิ

+ĠÑį лекÑĤ

+ĠÑįлекÑĤ ÑĢо

+Ġק ש×Ķ

+سÙĦ Ø·

+à¸Ĭà¸Ļ ะ

+×¢ ×Ļ׾

+ĠЧ е

+à¹ģà¸Ļ à¹Ī

+lı ģ

+lıģ ın

+Ġ×ŀ×¢ ×¨×Ľ×ª

+好ãģį ãģª

+มาà¸ģ à¸Ĥึà¹īà¸Ļ

+×ŀ×¢ ×ijר

+ĠاÙĦÙħ غرب

+ĠпеÑĢ и

+ĠпеÑĢи од

+Ġnh ạc

+ا ÙĪÙĬ

+ĠÙĪ عÙĦÙī

+أخ ذ

+ĠC ô

+תר ×ij×ķת

+×Ĵ ×Ķ

+Ġktóre j

+×IJ ×Ļת

+×ij ×ķ×IJ

+д елÑĮ

+รี วิ

+รีวิ ว

+ж Ñĥ

+Ġ×ij×Ĺ ×ķ

+еÑĪ ÑĮ

+ĠØ£ ÙĦÙģ

+ĠاÙĦÙĪ Ø·ÙĨÙĬ

+ĠاÙĦÙħÙĨ Ø·ÙĤØ©

+nÄħ Äĩ

+Ġthi ên

+иÑĩеÑģк ой

+ĠاÙĦÙħ ÙĦ

+Ġع Ùħ

+ס פר

+Ġnh óm

+ÙĪص Ùģ

+ĠCh úng

+Ġر ÙĤÙħ

+ãģ¾ãģĹãģŁ ãģĮ

+al ité

+ล ม

+ĠëĤ´ ê°Ģ

+׾ק ×ķ×Ĺ

+ĠS Æ¡n

+pos ição

+mi ÄĻ

+Ġtr ánh

+ĠÄIJ á»Ļ

+׼ ×Ĺ

+ãģĤ ãģ£ãģ¦

+à¸Ńย à¹Īา

+Ġ×ŀ×Ĺ ×Ļר

+Ġ×Ķ ×Ļת×Ķ

+à¸Ľ à¹Īา

+à¸Ńืà¹Īà¸Ļ à¹Ĩ

+Ø´ ÙĤ

+×ł×¡ ×Ļ

+ë¦ ¼

+ãģ¦ãģĹãģ¾ ãģĨ

+Ġ×ŀ צ×ij

+ãģ« åĩº

+ÙħÙĪا Ø·ÙĨ

+ยัà¸ĩ มี

+алÑĮ нÑĭе

+san ız

+Ø¥ سرائÙĬÙĦ

+ĠvÃł i

+ì¤ Ħ

+ãģ¨æĢĿ ãģ£ãģ¦

+×Ļ ×ķ׳×Ļ

+çĶŁ ãģį

+Ġs âu

+Ñĩ иÑģÑĤ

+Ġl á»ħ

+ĠGi á

+à¸Ńุ à¸Ľ

+à¸Ńà¸¸à¸Ľ à¸ģร

+à¸Ńà¸¸à¸Ľà¸ģร à¸ĵà¹Į

+Ġnh ẹ

+r ö

+ס ×ĺ×Ļ

+ãģķãĤĵ ãģĮ

+Ġd ầu

+ع Ùİ

+ت را

+×Ĵ×ĵ ׾

+Ġtécn ica

+׼ ׳×Ļ×Ŀ

+תק ש

+תקש ×ķרת

+Ġн его

+ét ait

+Ġm á»ģm

+Ñģ еÑĤ

+Ġnh áºŃt

+Ġ×ŀ ×¢×ľ

+Ġ×Ķ×¢ ×ij×ķ×ĵ

+Ġ×Ķ×¢×ij×ķ×ĵ ×Ķ

+Ġ×Ĵ ×Ļ׾

+ãģ¯ ãģªãģĦ

+ائ ØŃ

+Ġз деÑģÑĮ

+×IJ ×Ļ׳×ĺר

+Ùħ ÙIJ

+Ġ×Ļ ×Ĺ×ĵ

+ر اÙģ

+ì²ĺ 리

+×ĵ ×¢×ķת

+ì¹ ľ

+ĠТ о

+ĠTh ế

+ì¶ ©

+Ġ׳׼ ×ķף

+عÙĬ Ø´

+ни з

+Ġج اÙĨب

+×ŀק צ×ķ×¢

+à¹Ĥ à¸ĭ

+Ñģ ÑĥÑĤ

+ìĸ´ ìļĶ

+ãĤĴè¦ĭ ãģ¦

+ار د

+Ġaç ıl

+ĠاÙĦØŃ ÙĬاة

+à¸ģà¹ĩ à¹Ħà¸Ķà¹ī

+ãģĿãĤĮ ãĤĴ

+عض ÙĪ

+Ġг ÑĢаж

+ĠгÑĢаж дан

+à¸Īะ à¸ķà¹īà¸Ńà¸ĩ

+ĠìĿ´ 룬

+ĠìĿ´ëŁ¬ íķľ

+Ġtr ách

+ÙĨ Ùİ

+Ġkı sa

+Ã Ķ

+ÑĪ ка

+ãģ® 人

+ĠÐŁ оÑģ

+ĠÐŁÐ¾Ñģ ле

+Ñĥ лÑĮ

+ÙĪا جÙĩ

+ÙĤ رب

+à¸Ľà¸ıิ à¸ļัà¸ķิ

+ê° Ļ

+Ġ×ŀ ׳

+ĠÑģво и

+بر اÙħج

+Ġر ÙĪ

+пÑĢ од

+пÑĢод аж

+Ġby ÅĤy

+วั ย

+Ġgör ün

+ĠÃ Ī

+ÑİÑī им

+ĠÑĤак ой

+Ùģ ÙĪر

+ĠÙģ عÙĦ

+Ġб ел

+ëIJ ł

+er ÃŃa

+ĠÑģво Ñİ

+Ġl ã

+Ġlã nh

+à¹Ģà¸ŀืà¹Īà¸Ń à¹ĥหà¹ī

+ÙĤ ÙĨ

+تط ÙĪÙĬر

+Ġsay ı

+ĠÑģ ейÑĩаÑģ

+Ġ×IJ×Ĺר ת

+ק ×ķפ×Ķ

+ק×ķר ס

+Ġس Ùħ

+Ġ×ĺ ×Ļפ×ķ׾

+ìĿ´ëĿ¼ ëĬĶ

+دراس ة

+èµ· ãģĵ

+×Ĺ ×Ļ׳

+×Ĺ×Ļ׳ ×ķ×ļ

+×ĵ ק

+Ġë§ ŀ

+Ġком анд

+ĠÐij о

+Ġиг ÑĢÑĭ

+à¸ļ ี

+ĠØ£ Ùİ

+в ен

+ĠاÙĦج دÙĬد

+ĠÙĦ Ø¥

+Ġ×ķ×IJ ׳×Ļ

+Ġ×Ķס ×Ļ

+иÑĩеÑģк ого

+رÙĪ ØŃ

+à¸ģาร ศึà¸ģษา

+ĠTr Æ°á»Ŀng

+иг ÑĢа

+ıl ması

+Ġм аÑģÑģ

+ãģ¨ãģį ãģ«

+à¸Ĺีà¹Ī à¸ľà¹Īาà¸Ļ

+à¸Ĺีà¹Īà¸ľà¹Īาà¸Ļ มา

+ĠاÙĦساب ÙĤ

+Ġ×ŀ×¢ ×ĺ

+в аÑĤÑĮ

+m Ã¼ÅŁ

+Ġ׾ ׼×ļ

+Ġt á»ĭch

+Ùģ ÙĩÙħ

+تد رÙĬب

+Ø´ Ùĥ

+Ġ×ij ×ŀ×Ļ

+Ġ×ij×ŀ×Ļ ×ķ×Ĺ×ĵ

+ÙĤØ· اع

+ãģª ãģĹ

+×ķצ ×Ļ×IJ

+ĠÙĪ سÙĬ

+з Ñĥ

+Ġy at

+Ġyat ırım

+ë§ İ

+Ġth ắng

+ãģĬ 客

+ãģĬ客 æ§ĺ

+ĠThi ên

+ãģ«å¯¾ ãģĹãģ¦

+ÑĢ иÑģ

+ÙĨت ائ

+ÙĨتائ ج

+Ġ×ŀ שר

+Ġ×ŀשר ×ĵ

+Ġتع اÙĦ

+ĠتعاÙĦ Ùī

+ש ׳×Ļ

+Ùĩ اÙħ

+×IJ׳ ש×Ļ×Ŀ

+Ġżyc ia

+ĠÑĢÑĥб лей

+ÙĬ ض

+Ġkat ıl

+ĠÙħ ÙĪضÙĪع

+Ġvard ır

+ĠÙħÙĨ Ø·ÙĤØ©

+ĠTr ần

+Ġв еÑģ

+ü p

+Ùħ ÙĪÙĨ

+ÑĪ ли

+Ġn óng

+Ø® ÙĦÙģ

+ĠС ÑĤа

+Ġд оÑĢ

+ĠдоÑĢ ог

+ĠwÅĤa ÅĽnie

+eÄŁ in

+Ġhi á»ĥm

+ĠС ам

+ê»ĺ ìĦľ

+ĠÑĦ а

+ãģ» ãģĨ

+ãģ»ãģĨ ãģĮ

+×ķפ ×Ļ×¢

+ê° Ī

+د ÙĪÙĦ

+Ġthu ê

+Ġch á»Ĺ

+Ġëĭ¹ ìĭł

+ãģij ãĤĮ

+ãģijãĤĮ ãģ©

+ë³´ íĺ¸

+ãģķãĤĮ ãģ¦ãģĦãģ¾ãģĻ

+Ġнад о

+ĠìĤ¬ëŀĮ ëĵ¤

+à¹Ģà¸Ĥ à¸ķ

+สม ัย

+z ÅĤ

+ت ÙĪر

+Ġש ת×Ļ

+v ê

+Ġ×ijת ×ķ×ļ

+à¸Ĭ ัย

+ãģĦ ãģ£ãģŁ

+ìĿ ij

+Ġt ầ

+Ġtầ ng

+ש ׼ר

+Ġê¸ Ģ

+Ġ×Ķש ׳×Ķ

+Ġا ÙĨÙĩ

+ç«ĭ ãģ¡

+r és

+füh ren

+ر ØŃÙħ

+ê· ¹

+ĠâĢ «

+Ġsu ất

+à¸Ł ิ

+ÙĬ Ùĩا

+ĠاÙĦ اتØŃاد

+Ġt uyá»ĥn

+ãģ¾ ãĤĭ

+Ġm ại

+Ġng ân

+ãĤ° ãĥ©

+欲 ãģĹãģĦ

+س ار

+ãĤĤãģ® ãģ§ãģĻ

+ки е

+Ġseç im

+åħ¥ ãĤĬ

+ãģªãģ© ãĤĴ

+ÑĤ ÑĢи

+ĠÑģп еÑĨ

+ĠØ£ د

+Ġод но

+ÑĪ ел

+ãĥĩ ãĥ¼ãĤ¿

+ãĤ· ãĤ¹ãĥĨ

+ãĤ·ãĤ¹ãĥĨ ãĥł

+è¡Į ãģį

+ãģ¨æĢĿ ãģ£ãģŁ

+à¹Ģà¸ģิà¸Ķ à¸Ĥึà¹īà¸Ļ

+ĠÑĤ ож

+ĠÑĤож е

+Ġs ạch

+ĠÑģ ÑĢок

+Ġкли енÑĤ

+ĠÙħØ´ رÙĪع

+Ġalt ında

+Ġì ·¨

+ä¸Ń ãģ®

+ãģķãģĽ ãĤĭ

+ãģĻ ãģ¹

+ãģĻãģ¹ ãģ¦

+ê°ľ ë°ľ

+ĠÄij êm

+ãģªãģĦ ãģ®ãģ§

+ì² ł

+×¢ ×ij×ĵ

+Ġd ấu

+à¸Ħà¸Ļ à¸Ĺีà¹Ī

+ĠC ách

+تع ÙĦÙĬÙħ

+Ġh ại

+ãĤ» ãĥķãĥ¬

+ĠÙĨÙģس Ùĩ

+ĠíĨµ íķ´

+ÑĪ ло

+Ġнап ÑĢав

+ĠнапÑĢав лен

+ÑĢÑĥ Ñĩ

+íĶ Į

+Ġ×ijר ×Ļ×IJ

+ãģ® ãģ¿

+ãģ«ãģĬ ãģĦãģ¦

+×ij ׳ק

+ãĤ¨ ãĥ³

+Ø«ÙĦ اث

+Ġm ỹ

+ĠÑģай ÑĤе

+Ġе мÑĥ

+ت غÙĬ

+تغÙĬ ÙĬر

+خص ÙĪص

+ÑĤе ли

+Ġ×ķ׾ ׼ף

+פע ×Ŀ

+Ġпо ÑįÑĤомÑĥ

+ر اÙĨ

+иÑĤел ей

+пиÑģ ан

+×¢ ×¥

+ĠìĤ¬ ìĹħ

+Ùħ ز

+جÙħ ÙĬع

+ë©´ ìĦľ

+à¸ľà¸¥à¸´à¸ķ à¸łà¸±

+à¸ľà¸¥à¸´à¸ķà¸łà¸± à¸ĵ

+à¸ľà¸¥à¸´à¸ķà¸łà¸±à¸ĵ à¸ij

+à¸ľà¸¥à¸´à¸ķà¸łà¸±à¸ĵà¸ij à¹Į

+ĠпÑĢ имеÑĢ

+ãĤŃ ãĥ¼

+l â

+Ġch Äĥm

+缮 ãģ®

+ãģĦ ãģĭ

+ãģ¨è¨Ģ ãģĨ

+×ĸ ×ķ×Ĵ

+Ġ×ij ×ĵ×Ļ

+Ġ×ij×ĵ×Ļ ×ķק

+ãģĬ åºĹ

+à¸ķà¸Ńà¸Ļ à¸Ļีà¹ī

+Ġph á»iji

+п ÑĤ

+สà¸Ļ าม

+Ø· ÙĪ

+ص اØŃ

+صاØŃ Ø¨

+ĠD ü

+ĠDü nya

+Ġп ока

+п ал

+ĠÄij ảo

+ĠاÙĦÙģ ÙĪر

+ĠاÙĦÙģÙĪر Ùĥس

+Ġmá u

+кÑĢ еп

+ĠاÙĦس اعة

+ĠгоÑĢ ода

+Ùģ صÙĦ

+ай ÑĤе

+Ġд ог

+Ġдог овоÑĢ

+ĠØ¥ Ø°

+Ġ×ij׼׾ ׾

+ÙĬ تÙĩ

+×Ĵ ×ijר

+Ġbir ç

+Ġbirç ok

+문 íĻĶ

+ãģĿãģĨ ãģª

+را ØŃ

+ĠÙħ رة

+ĠденÑĮ ги

+f ä

+à¸Ĥà¹īา ว

+ĠÑģов ÑĢем

+ĠÑģовÑĢем енн

+׾×Ĺ ×¥

+èī¯ ãģı

+ĠÙģ Ø£

+Ġ×ķ ×ĸ×Ķ

+Ġз ани

+Ġзани ма

+Ġê°Ģì§Ģ ê³ł

+Ġh Æ¡i

+ãģªãģ® ãģĭ

+ãĥĨ ãĥ¬ãĥĵ

+Ġר ×ij×ķת

+à¸ķ ี

+Ġ×ijש ×ł×ª

+ĠT ại

+Ġthu áºŃn

+Ñģ ел

+Ñij м

+dzi Äĩ

+ĠÑģ ка

+ĠÑģка Ñĩ

+ĠÑģкаÑĩ аÑĤÑĮ

+×ķ×ŀ ×ķ

+г ла

+Ġмин ÑĥÑĤ

+åĩº ãģĻ

+Ġ×Ĺ×Ļ ×Ļ×ij

+Ġת ×Ĵ×ķ×ij×Ķ

+à¸£à¸¹à¸Ľ à¹ģà¸ļà¸ļ

+ни ÑĨа

+ĠÄ° n

+ĠØ£ ع

+Ġض ÙħÙĨ

+Ùħ ثاÙĦ

+ĠyaÅŁ an

+ĠìĹ° 구

+ĠL ê

+ש׾ ×Ĺ

+ãģı ãģªãĤĭ

+ìĹĨ ìĿ´

+ĠÑĤ ÑĢи

+ĠÑĩаÑģÑĤ о

+Ġоб ÑĢаÑĤ

+п ло

+د خ

+دخ ÙĪÙĦ

+س Ùĩ

+à¸Ń าà¸ģ

+à¸Ńาà¸ģ าศ

+Ġ׼ ×ĸ×Ķ

+Ġ×Ķ×¢ סק

+ĠاÙĦØ£ ÙĨ

+å¹´ ãģ«

+×¢ ש×ķ

+Ġש ×¢×ķת

+Ġm Ãłn

+×IJר ×Ļ

+sı yla

+Ùģر ÙĤ

+ни Ñħ

+Ġت ست

+è¦ĭ ãģ¦

+ØŃا ÙĪÙĦ

+×IJ ×Ļ׼×ķת

+ĠbaÅŁ ladı

+st Äħ

+stÄħ pi

+à¸Ĺีà¹Ī à¹Ģรา

+ÙĤر ر

+ج اب

+Ġ×ijר ×ķר

+à¹Ģà¸Ĥà¹īา à¹ĥà¸Ī

+×ŀ׊קר

+al ım

+Ġס ×Ļפ×ķר

+ãģ§ãģĤ ãĤĮãģ°

+Ġש×ŀ ×ķר×ķת

+Ġ×ķ ×ŀ×Ķ

+ãģĵ ãģĿ

+id ée

+ä¸ĭ ãģķãģĦ

+تÙĨا ÙĪÙĦ

+Ġ ลà¹īาà¸Ļ

+Ġìļ°ë¦¬ ëĬĶ

+اÙĨ ا

+ÑģÑĤ ой

+б оÑĤ

+ĠyaÅŁ am

+kö y

+Ø¥ ÙĦ

+ÑĢ Ñĭв

+기 ìĹħ

+Ġ×Ķ×ŀ ×ĵ

+Ġ×Ķ×ŀ×ĵ ×Ļ׳×Ķ

+د ب

+×¢ ×Ļ׳×Ļ

+×ŀ ת×Ĺ

+Ġפ ר×Ļ

+ãĥĭ ãĥ¼

+اÙħ ÙĬ

+Ġnh ằm

+ãĤĮ ãģªãģĦ

+ت عرÙģ

+Ġë§Ī ìĿĮ

+ìĵ °

+Ġh ấp

+ר×Ĵ ×Ļ׾

+ب Ùİ

+Ġr Äĥng

+gl Äħd

+ĠÑģиÑģÑĤем Ñĭ

+Ġkh óa

+ãģ§ãģĻ ãĤĪãģŃ

+大ãģį ãģı

+기 를

+Ġké o

+ÙĪ Ø¡

+ج اÙħ

+جاÙħ ع

+Ġ×¢ ×Ļצ×ķ×ij

+t éri

+Ġת ש

+Ġ×IJ ×ij×Ļ

+ĠCh Æ°Æ¡ng

+à¸ļริ à¹Ģว

+à¸ļริà¹Ģว à¸ĵ

+ãģ¤ ãģı

+Ġ×Ĺ ×ķ׾

+עת ×Ļ×ĵ

+ש ×Ļ×ŀ×Ķ

+ëĤ ¨

+Ġש×IJ ×Ļף

+ĠÙĪاÙĦ Ø¥

+ÑĦ а

+Ġkh ám

+Ġ×ĺ ×ķ×ij×Ķ

+ĠвÑĭ Ñģ

+ĠвÑĭÑģ око

+ĠاÙĦØŃ Ø¯ÙĬØ«

+人 ãĤĤ

+d Ã¼ÄŁÃ¼

+×Ļ×Ĺ ×ķ×ĵ

+تع ÙĦÙĬ

+تعÙĦÙĬ ÙĤ

+l ö

+تØŃ Ø¯ÙĬد

+н его

+ĠÑĥд об

+Ġ׾ ×ŀ×Ļ

+Ġר ×ķצ×Ļ×Ŀ

+Ġج اء

+Ġ×ij ×ĸ×ŀף

+à¸Ľà¸ģ à¸ķิ

+é«ĺ ãģı

+à¸Ľà¸¥ า

+Ġart ık

+Ġbug ün

+ק ׳×Ļ

+Ġkho á

+ĠÙħ رÙĥز

+ĠìŀIJ 기

+در جة

+×ŀש ר×ĵ

+Ġgi ấy

+Ġch óng

+ק פ

+ÙĬب Ø©

+ĠczÄĻ sto

+в али

+Ùĥ ب

+ìŁ ģ

+ส à¸ļาย

+à¸Ľà¸£à¸°à¸Ĭา à¸Ĭà¸Ļ

+×Ĵ ×ķ×£

+ëŁ ī

+ãģ® ãģĵãģ¨

+ล à¸Ń

+Ġngh á»ī

+åŃIJ ãģ©

+åŃIJãģ© ãĤĤ

+à¹Ħà¸Ķ à¹īà¸Ńย

+à¹Ħà¸Ķà¹īà¸Ńย à¹Īาà¸ĩ

+×ĵ ×¢

+ĠاÙĦت Ùī