diff --git a/_toc.yml b/_toc.yml

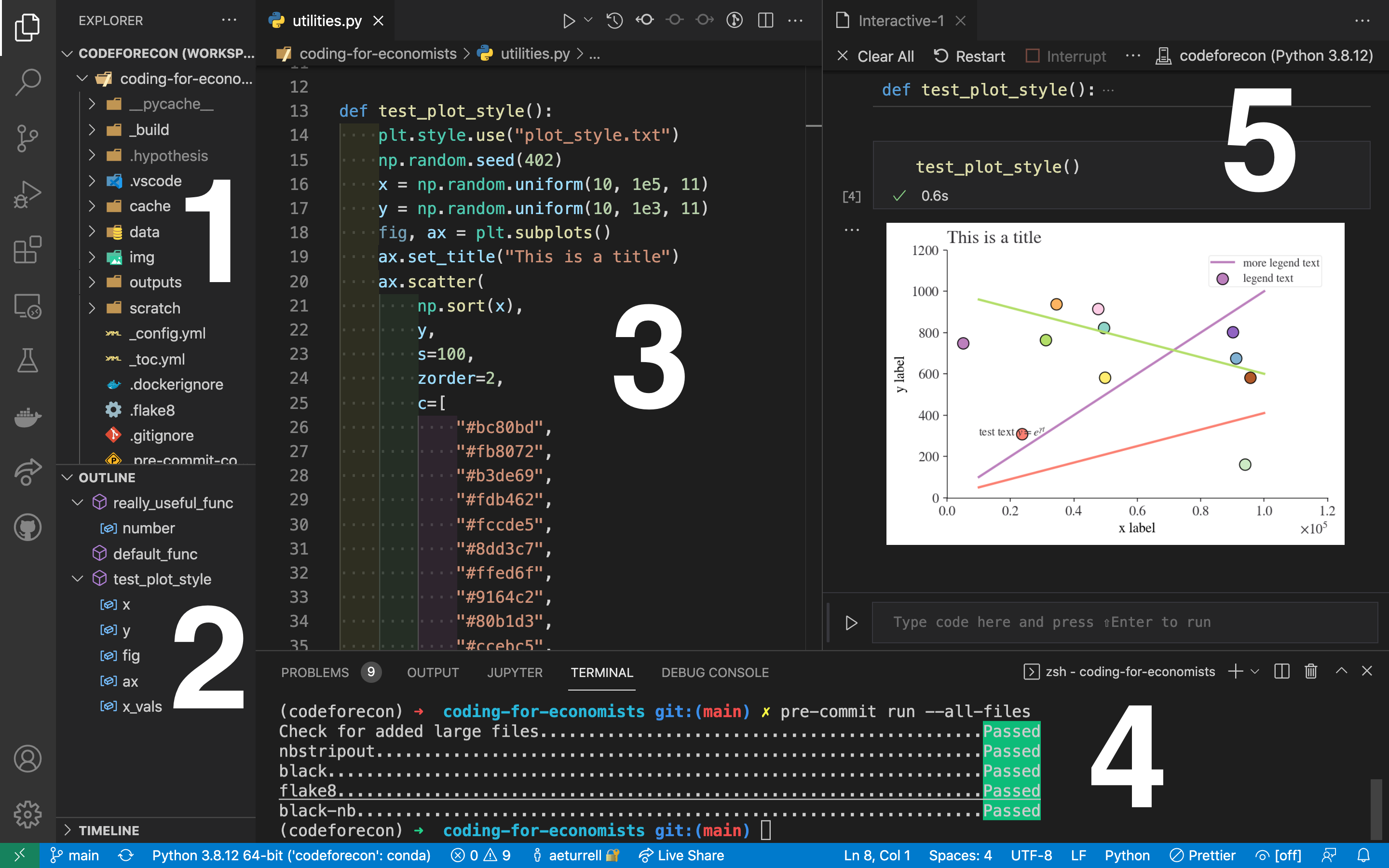

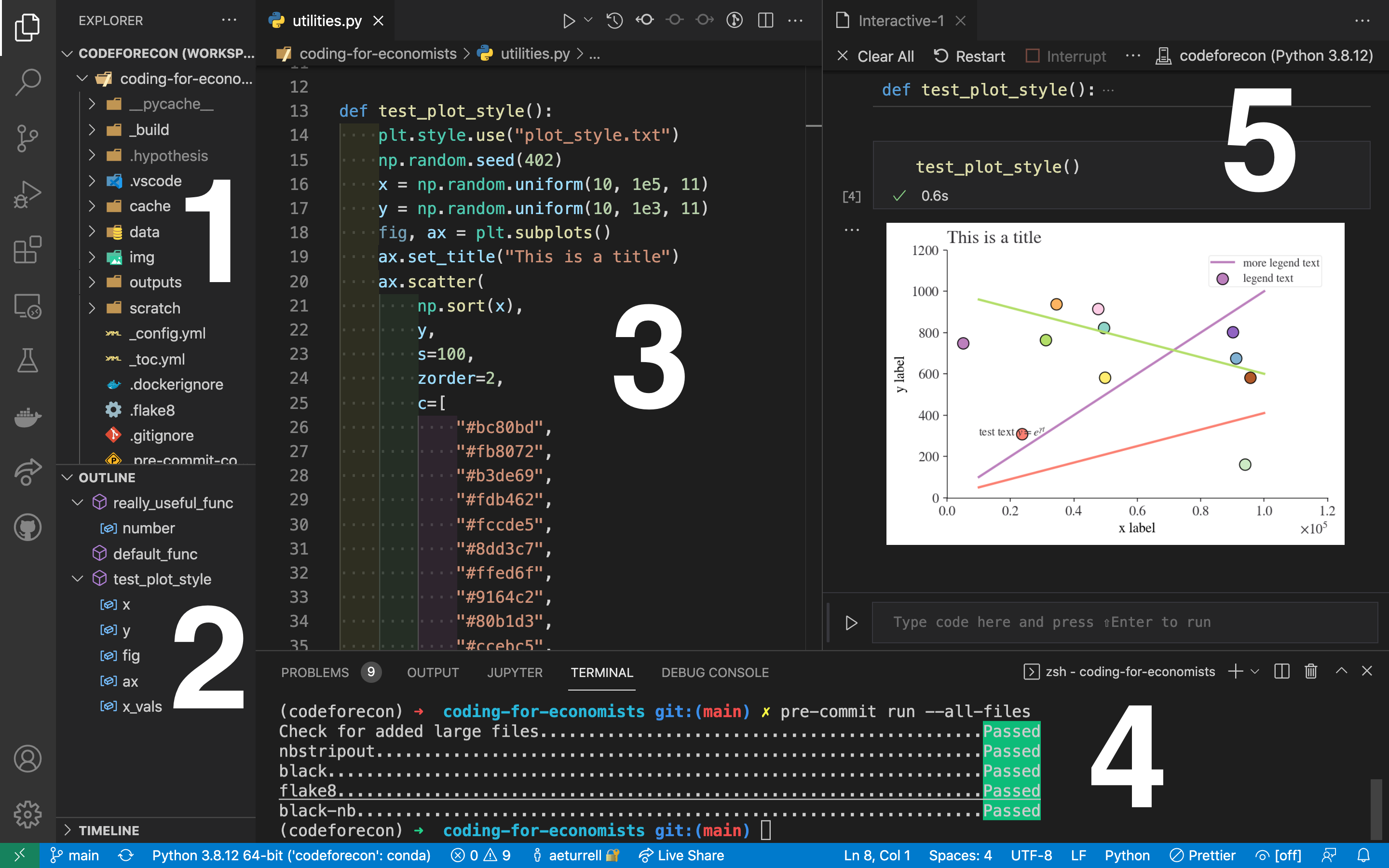

index 121f6ba..99d5015 100644

--- a/_toc.yml

+++ b/_toc.yml

@@ -1,5 +1,4 @@

# Table of contents

-# Learn more at https://jupyterbook.org/customize/toc.html

format: jb-book

root: welcome

@@ -7,7 +6,8 @@ parts:

- caption: Introduction

chapters:

- file: introduction

-- caption: Getting Started

+ - file: prerequisites

+- caption: Quick Start

numbered: true

chapters:

- file: whole-game

@@ -23,12 +23,13 @@ parts:

- caption: Visualise

numbered: true

chapters:

+ - file: visualise

+ - file: vis-layers

- file: exploratory-data-analysis

- file: communicate-plots

- caption: Transform

numbered: true

chapters:

- - file: joins

- file: boolean-data

- file: numbers

- file: strings

@@ -36,13 +37,14 @@ parts:

- file: categorical-data

- file: dates-and-times

- file: missing-values

+ - file: joins

- caption: Import

numbered: true

chapters:

- file: spreadsheets

- - file: webscraping-and-apis

- - file: rectangling

- file: databases

+ - file: rectangling

+ - file: webscraping-and-apis

- caption: Programme

numbered: true

chapters:

diff --git a/categorical-data.ipynb b/categorical-data.ipynb

index e74ea07..40e9265 100644

--- a/categorical-data.ipynb

+++ b/categorical-data.ipynb

@@ -223,18 +223,17 @@

"source": [

"### Renaming Categories\n",

"\n",

- "Renaming categories is done by assigning new values to the `.cat.categories` property or by using the `rename_categories()` method (which works with a list or a dictionary)."

+ "Renaming categories is done via the `rename_categories()` method (which works with a list or a dictionary)."

]

},

{

"cell_type": "code",

"execution_count": null,

- "id": "aedcf10f",

+ "id": "097171b8",

"metadata": {},

"outputs": [],

"source": [

- "df[\"cat_type\"].cat.categories = [\"alpha\", \"beta\", \"gamma\"]\n",

- "df"

+ "df[\"cat_type\"] = df[\"cat_type\"].cat.rename_categories([\"alpha\", \"beta\", \"gamma\"])"

]

},

{

@@ -380,7 +379,7 @@

"name": "python",

"nbconvert_exporter": "python",

"pygments_lexer": "ipython3",

- "version": "3.9.12"

+ "version": "3.10.12"

},

"toc-showtags": true

},
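
Note on the `categorical-data.ipynb` change above: a minimal sketch of the `rename_categories()` call that the new cell relies on, shown with both a list and a dictionary. The three-row data frame and its starting categories (`a`, `b`, `c`) are assumptions made up for illustration; they are not taken from the notebook.

```python
import pandas as pd

# Hypothetical stand-in for the notebook's data frame (values are assumptions).
df = pd.DataFrame({"cat_type": pd.Categorical(["a", "b", "c"])})

# List form: new names are matched to the existing categories by position.
renamed_by_list = df["cat_type"].cat.rename_categories(["alpha", "beta", "gamma"])

# Dictionary form: only the categories named as keys are renamed.
renamed_by_dict = df["cat_type"].cat.rename_categories({"a": "alpha"})

print(list(renamed_by_list.cat.categories))
print(list(renamed_by_dict.cat.categories))
```

`rename_categories()` returns a new Series rather than modifying the column in place, which is why the updated cell assigns the result back to `df["cat_type"]`.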

diff --git a/communicate-plots.ipynb b/communicate-plots.ipynb

index d351233..695ee37 100644

--- a/communicate-plots.ipynb

+++ b/communicate-plots.ipynb

@@ -12,26 +12,17 @@

"\n",

"In this chapter, you'll learn about using visualisation to communicate.\n",

"\n",

- "There are a plethora of options (and packages) for data visualisation using code. First, though a note about the different philosophies of data visualisation. There are broadly two categories of approach to using code to create data visualisations: imperative, where you build what you want, and declarative, where you say what you want. Choosing which to use involves a trade-off: imperative libraries offer you flexibility but at the cost of some verbosity; declarative libraries offer you a quick way to plot your data, but only if it’s in the right format to begin with, and customisation may be more difficult.\n",

- "\n",

- "There are also different purposes of data visualisation. It can be useful to bear in mind the three broad categories of visualisation that are out there:\n",

- "\n",

- "- exploratory\n",

- "\n",

- "- scientific\n",

- "\n",

- "- narrative\n",

- "\n",

- "Python has packages that cover all three of these.\n",

- "\n",

"In {ref}`exploratory-data-analysis`, you learned how to use plots as tools for *exploration*.\n",

- "When you make exploratory plots, you know---even before looking---which variables the plot will display.\n",

- "You made each plot for a purpose, could quickly look at it, and then move on to the next plot.\n",

- "In the course of most analyses, you'll produce tens or hundreds of plots, most of which are immediately thrown away. Exploratory visualisation is usually quick and dirty, and flexible too.\n",

+ "When you make exploratory plots, you know—even before looking—which variables the plot will display.\n",

+ "You made each plot for a purpose, quickly looked at it, and then moved on to the next plot.\n",

+ "In the course of most analyses, you'll produce tens or hundreds of plots, most of which are immediately thrown away.\n",

"\n",

- "The second kind, *scientific visualisation*, is the prime cut of your exploratory visualisation. It’s the kind of plot you might include in a more technical paper, the picture that says a thousand words. The first image of a black hole {cite}`akiyama2019first` is a prime example of this. You can get away with having a high density of information in a scientific plot because it's designed for specialists. Ensuring that important values can be accurately read from the plot is especially important in these kinds of charts. But they can also be the kind of plot that presents the killer results in a study; they might not be exciting to people who don’t look at charts for a living, but they might be exciting and, just as importantly, understandable by your peers.\n",

+ "Now that you understand your data, you need to *communicate* your understanding to others.\n",

+ "Your audience will likely not share your background knowledge and will not be deeply invested in the data. To help others quickly build up a good mental model of the data, you will need to invest considerable effort in making your plots as self-explanatory as possible. In this chapter, you'll learn some of the tools that **lets-plot** provides to make charts tell a story.\n",

"\n",

- "The third and final kind is *narrative visualisation*, and it is the focus of this chapter—though we'll only scratch the surface. This is the one that requires the most thought in the step where you go from the first view to the end product because your audience will likely not share your background knowledge and will not be deeply invested in the data. It’s a visualisation that doesn’t just show a picture, but gives an insight. These are the kind of visualisations that you might see in the Financial Times, The Economist, or on the BBC News website. They come with aids that help the viewer focus on the aspects that the creator wanted them to (you can think of these aids or focuses as doing for visualisation what bold font does for text). They’re well worth using in your work, especially if you’re trying to communicate a particular narrative, and especially if the people you’re communicating with don’t have deep knowledge of the topic. You might use them in a paper that you hope will have a wide readership, in a blog post summarising your work, or in a report intended for a policymaker."

+ "### Prerequisites\n",

+ "\n",

+ "As ever, there are a plethora of options (and packages) for data visualisation using code. We're focusing on the declarative, \"grammar of graphics\" approach using **lets-plot** here, but advanced users looking for more complex graphics might wish to use an imperative library such as the excellent **matplotlib**. You should have both **lets-plot** and **pandas** installed. Once you have them installed, import them like so:"

]

},

{

@@ -54,24 +45,6 @@

"matplotlib_inline.backend_inline.set_matplotlib_formats(\"svg\")"

]

},

- {

- "cell_type": "markdown",

- "id": "17575f3a",

- "metadata": {},

- "source": [

- "### Prerequisites\n",

- "\n",

- "As well as **pandas**, you will need to install the declarative visualisation package **seaborn** for this chapter. This chapter uses the next generation version of **seaborn**, which can be installed by running the following on the command line (aka in the terminal): \n",

- "\n",

- "```bash\n",

- "pip install --pre seaborn\n",

- "```\n",

- "\n",

- "Although it will get installed when you install **seaborn**, we'll also be using the powerful imperative visualisation library that **seaborn** builds on, **matplotlib**.\n",

- "\n",

- "You'll need to import the **seaborn** and **pandas** libraries into your session using"

- ]

- },

{

"cell_type": "code",

"execution_count": null,

@@ -79,8 +52,12 @@

"metadata": {},

"outputs": [],

"source": [

- "import seaborn.objects as so\n",

- "import pandas as pd"

+ "from lets_plot import *\n",

+ "from lets_plot.mapping import as_discrete\n",

+ "import pandas as pd\n",

+ "import numpy as np\n",

+ "\n",

+ "LetsPlot.setup_html()"

]

},

{

@@ -88,9 +65,9 @@

"id": "a0dc9c10",

"metadata": {},

"source": [

- "## Labels and Titles\n",

+ "## Labels, titles, and other contextual information\n",

"\n",

- "The easiest place to start when turning an exploratory graphic into an expository graphic is with good labels. This example plot axis labels:"

+ "The easiest place to start when turning an exploratory graphic into an expository graphic is with good labels. Let's look at an example using the MPG (miles per gallon) data, which covers the fuel economy for 38 popular models of cars from 1999 to 2008."

]

},

{

@@ -111,21 +88,17 @@

"id": "1813ab08",

"metadata": {},

"source": [

- "Now let's do the plot with a title by passing the `title=` keyword argument into the `label` property."

+ "We want to show how fuel efficiency on the highway changes with engine displacement, in litres. The most basic chart we can do with these variables is:"

]

},

{

"cell_type": "code",

"execution_count": null,

- "id": "40b9bfcb",

+ "id": "c7574bc6",

"metadata": {},

"outputs": [],

"source": [

- "(\n",

- " so.Plot(mpg, x=\"displ\", y=\"hwy\")\n",

- " .add(so.Dot())\n",

- " .label(title=\"Fuel efficiency generally decreases with engine size\")\n",

- ")"

+ "(ggplot(mpg, aes(x=\"displ\", y=\"hwy\")) + geom_point())"

]

},

{

@@ -133,53 +106,114 @@

"id": "ff5ed0d4",

"metadata": {},

"source": [

- "The purpose of a plot title is to summarise the main finding. Avoid titles that just describe what the plot is, e.g. \"A scatterplot of engine displacement vs. fuel economy\".\n",

+ "Now we're going to add lots of extra useful information that will make the chart better. The purpose of a plot title is to summarize the main finding.\n",

+ "Avoid titles that just describe what the plot is, e.g., \"A scatterplot of engine displacement vs. fuel economy\".\n",

"\n",

- "If you need to add more text, there are two other useful labels that you can use:\n",

+ "We're going to:\n",

"\n",

- "- `subtitle` adds additional detail in a smaller font beneath the title.\n",

+ "- add a title that summarises the main finding you'd like the viewer to take away (as opposed to one just describing the obvious!)\n",

+ "- add a subtitle that provides more info on the y-axis, and make the x-label more understandable\n",

+ "- remove the y-axis label that is at an awkward viewing angle\n",

+ "- add a caption with the source of the data\n",

"\n",

- "- `caption` adds text at the bottom right of the plot, often used to describe the source of the data.\n"

+ "Putting this all in, we get:"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "24b3513e",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "(\n",

+ " ggplot(mpg, aes(x=\"displ\", y=\"hwy\"))\n",

+ " + geom_point(aes(colour=\"class\"))\n",

+ " + geom_smooth(se=False, method=\"loess\", size=1)\n",

+ " + labs(\n",

+ " title=\"Fuel efficiency generally decreases with engine size\",\n",

+ " subtitle=\"Highway fuel efficiency (miles per gallon)\",\n",

+ " caption=\"Source: fueleconomy.gov\",\n",

+ " y=\"\",\n",

+ " x=\"Engine displacement (litres)\",\n",

+ " )\n",

+ ")"

]

},

{

"cell_type": "markdown",

- "id": "061f528b",

+ "id": "2e28877a",

"metadata": {},

"source": [

- "You can use `.label` to replace the axis and legend titles. It's usually a good idea to replace short variable names with more detailed descriptions, and to include the units."

+ "This is much clearer. It's easier to read, we know where the data come from, and we can see *why* we're being shown it too.\n",

+ "\n",

+ "But maybe we want a different message? You can flex depending on your needs, and some people prefer to have a rotated y-axis so that the subtitle can provide even more context:"

]

},

{

"cell_type": "code",

"execution_count": null,

- "id": "b748ba52",

+ "id": "6489a6bf",

"metadata": {},

"outputs": [],

"source": [

"(\n",

- " so.Plot(mpg, x=\"displ\", y=\"hwy\")\n",

- " .add(so.Dot())\n",

- " .label(x=\"Engine displacement (L)\", y=\"Highway fuel economy (mpg)\")\n",

+ " ggplot(mpg, aes(x=\"displ\", y=\"hwy\"))\n",

+ " + geom_point(aes(colour=\"class\"))\n",

+ " + geom_smooth(se=False, method=\"loess\", size=1)\n",

+ " + labs(\n",

+ " x=\"Engine displacement (L)\",\n",

+ " y=\"Highway fuel economy (mpg)\",\n",

+ " colour=\"Car type\",\n",

+ " title=\"Fuel efficiency generally decreases with engine size\",\n",

+ " subtitle=\"Two seaters (sports cars) are an exception because of their light weight\",\n",

+ " caption=\"Source: fueleconomy.gov\",\n",

+ " )\n",

")"

]

},

{

"cell_type": "markdown",

- "id": "2e28877a",

+ "id": "9d88f188",

"metadata": {},

"source": [

- "It's possible to use mathematical equations and functions instead of text strings:"

+ "### Exercises\n",

+ "\n",

+ "1. Create one plot on the fuel economy data with customized `title`, `subtitle`, `caption`, `x`, `y`, and `color` labels.\n",

+ "\n",

+ "2. Recreate the following plot using the fuel economy data.\n",

+ " Note that both the colours and shapes of points vary by type of drive train."

]

},

{

"cell_type": "code",

"execution_count": null,

- "id": "ffc13eab",

- "metadata": {},

+ "id": "683d547c",

+ "metadata": {

+ "tags": [

+ "remove-cell"

+ ]

+ },

"outputs": [],

"source": [

- "(so.Plot(mpg, x=\"displ\", y=\"hwy\").add(so.Dot()).label(y=str.capitalize, x=r\"$x^{y-z}$\"))"

+ "(\n",

+ " ggplot(mpg, aes(x=\"cty\", y=\"hwy\", color=\"drv\", shape=\"drv\"))\n",

+ " + geom_point()\n",

+ " + labs(\n",

+ " x=\"City MPG\",\n",

+ " y=\"Highway MPG\",\n",

+ " shape=\"Type of\\ndrive train\",\n",

+ " color=\"Type of\\ndrive train\",\n",

+ " )\n",

+ ")"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "e10cdbd9",

+ "metadata": {},

+ "source": [

+ "3. Take an exploratory graphic that you've created in the last month, and add informative titles to make it easier for others to understand."

]

},

{

@@ -189,65 +223,242 @@

"source": [

"## Annotations\n",

"\n",

- "[TODO]"

+ "In addition to labelling major components of your plot, it's often useful to label individual observations or groups of observations.\n",

+ "The first tool you have at your disposal is `geom_text()`.\n",

+ "`geom_text()` is similar to `geom_point()`, but it has an additional aesthetic: `label`.\n",

+ "This makes it possible to add textual labels to your plots.\n",

+ "\n",

+ "There are two possible sources of labels: ones that are part of the data, which we'll add with `geom_text`; and ones that we add directly and manually as annotations using `geom_label`.\n",

+ "\n",

+ "In the first case, you might have a dataframe that contains labels.\n",

+ "In the following code, we compute the mean highway fuel economy and mean engine size for each drive type and save the result as a new data frame called `label_info`. We use the mean values of \"hwy\" and \"displ\" by \"drv\" as the points to label, but we could use any aggregation we feel would work well on the chart."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "60826a32",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "mapping = {\n",

+ " \"4\": \"4-wheel drive\",\n",

+ " \"f\": \"front-wheel drive\",\n",

+ " \"r\": \"rear-wheel drive\",\n",

+ "}\n",

+ "label_info = (\n",

+ " mpg.groupby(\"drv\")\n",

+ " .agg({\"hwy\": \"mean\", \"displ\": \"mean\"})\n",

+ " .reset_index()\n",

+ " .assign(drive_type=lambda x: x[\"drv\"].map(mapping))\n",

+ " .round(2)\n",

+ ")\n",

+ "label_info"

]

},

{

"cell_type": "markdown",

- "id": "1e245691",

+ "id": "93a136fe",

"metadata": {},

"source": [

- "## Scales\n",

- "\n",

- "The third way you can make your plot better for communication is to adjust the scales.\n",

- "Scales control the mapping from data values to things that you can perceive.\n",

- "Normally, **seaborn** automatically adds scales for you.\n",

- "For example, when you type:"

+ "Then, we use this new data frame to label the three groups directly on the plot, replacing the legend. Using the `fontface` and `size` arguments we can customize the look of the text labels. They’re larger than the rest of the text on the plot and bolded. (`theme(legend_position=\"none\")` turns all the legends off; we’ll talk about it more shortly.)"

]

},

{

"cell_type": "code",

"execution_count": null,

- "id": "012d8c4d",

+ "id": "6f90c2aa",

"metadata": {},

"outputs": [],

"source": [

- "(so.Plot(mpg, x=\"displ\", y=\"hwy\", color=\"class\").add(so.Dot()))"

+ "(\n",

+ " ggplot(mpg, aes(x=\"displ\", y=\"hwy\", color=\"drv\"))\n",

+ " + geom_point(alpha=0.5)\n",

+ " + geom_smooth(se=False, method=\"loess\")\n",

+ " + geom_text(\n",

+ " aes(x=\"displ\", y=\"hwy\", label=\"drive_type\"),\n",

+ " data=label_info,\n",

+ " fontface=\"bold\",\n",

+ " size=8,\n",

+ " hjust=\"left\",\n",

+ " vjust=\"bottom\",\n",

+ " )\n",

+ " + theme(legend_position=\"none\")\n",

+ ")"

]

},

{

"cell_type": "markdown",

- "id": "fae05382",

+ "id": "98c17829",

"metadata": {},

"source": [

- "**seaborn** automatically adds default scales behind the scenes:"

+ "Note the use of `hjust` (horizontal justification) and `vjust` (vertical justification) to control the alignment of the label.\n",

+ "\n",

+ "\n",

+ "The second of the two methods we're looking at is `geom_label`. This has two modes: in the first, it works like `geom_text` but with a box around the text, like so:"

]

},

{

"cell_type": "code",

"execution_count": null,

- "id": "f1b10368",

+ "id": "bdcd79bb",

"metadata": {},

"outputs": [],

"source": [

+ "potential_outliers = mpg.query(\"hwy > 40 | (hwy > 20 & displ > 5)\")\n",

"(\n",

- " so.Plot(mpg, x=\"displ\", y=\"hwy\", color=\"class\")\n",

- " .add(so.Dot())\n",

- " .scale(\n",

- " x=so.Continuous(),\n",

- " y=so.Continuous(),\n",

- " color=so.Nominal(),\n",

+ " ggplot(mpg, aes(x=\"displ\", y=\"hwy\"))\n",

+ " + geom_point(color=\"black\")\n",

+ " + geom_smooth(se=False, method=\"loess\", color=\"black\")\n",

+ " + geom_point(\n",

+ " data=potential_outliers,\n",

+ " color=\"red\",\n",

" )\n",

+ " + geom_label(\n",

+ " aes(label=\"model\"),\n",

+ " data=potential_outliers,\n",

+ " color=\"red\",\n",

+ " position=position_jitter(),\n",

+ " fontface=\"bold\",\n",

+ " size=5,\n",

+ " hjust=\"left\",\n",

+ " vjust=\"bottom\",\n",

+ " )\n",

+ " + theme(legend_position=\"none\")\n",

+ ")"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "38b69dcf",

+ "metadata": {},

+ "source": [

+ "In its second mode, `geom_label` takes the position and text directly as arguments, which is generally useful for adding one or a few annotations to a plot, like so:"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "d1e2cc3a",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "import textwrap\n",

+ "\n",

+ "# wrap the text so it is over multiple lines:\n",

+ "trend_text = textwrap.fill(\"Larger engine sizes tend to have lower fuel economy.\", 30)\n",

+ "trend_text"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "e8c09f57",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "(\n",

+ " ggplot(mpg, aes(x=\"displ\", y=\"hwy\"))\n",

+ " + geom_point()\n",

+ " + geom_label(x=3.5, y=38, label=trend_text, hjust=\"left\", color=\"red\")\n",

+ " + geom_segment(x=2, y=40, xend=5, yend=25, arrow=arrow(type=\"closed\"), color=\"red\")\n",

")"

]

},

{

"cell_type": "markdown",

- "id": "03b4d70c",

+ "id": "0720e7eb",

+ "metadata": {},

+ "source": [

+ "Annotation is a powerful tool for communicating main takeaways and interesting features of your visualisations. The only limit is your imagination (and your patience with positioning annotations to be aesthetically pleasing)!"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "9c00a0fd",

+ "metadata": {},

+ "source": [

+ "Remember, in addition to `geom_text()` and `geom_label()`, you have many other geoms in **lets-plot** available to help annotate your plot.\n",

+ "A couple of ideas:\n",

+ "\n",

+ "- Use `geom_hline()` and `geom_vline()` to add reference lines.\n",

+ " We often make them thick (`size=2`) and grey (`color=\"gray\"`), and draw them underneath the primary data layer.\n",

+ " That makes them easy to see, without drawing attention away from the data.\n",

+ "\n",

+ "- Use `geom_rect()` to draw a rectangle around points of interest.\n",

+ " The boundaries of the rectangle are defined by aesthetics `xmin`, `xmax`, `ymin`, `ymax`.\n",

+ "\n",

+ "- You already saw the use of `geom_segment()` with the `arrow` argument to draw attention to a point with an arrow.\n",

+ " Use aesthetics `x` and `y` to define the starting location, and `xend` and `yend` to define the end location.\n"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "730162e6",

+ "metadata": {},

+ "source": [

+ "### Exercises\n",

+ "\n",

+ "1. Use `geom_text()` with infinite positions to place text at the four corners of the plot.\n",

+ "\n",

+ "2. Use `geom_label()` to add a point geom in the middle of your last plot without having to create a dataframe.\n",

+ " Customise the shape, size, or colour of the point.\n",

+ "\n",

+ "3. How do labels with `geom_text()` interact with faceting?\n",

+ " How can you add a label to a single facet?\n",

+ " How can you put a different label in each facet?\n",

+ " (Hint: Think about the dataset that is being passed to `geom_text()`.)\n",

+ "\n",

+ "4. What arguments to `geom_label()` control the appearance of the background box?\n",

+ "\n",

+ "5. What are the four arguments to `arrow()`?\n",

+ " How do they work?\n",

+ " Create a series of plots that demonstrate the most important options.\n"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "2f665492",

+ "metadata": {},

+ "source": [

+ "## Scales\n",

+ "\n",

+ "Another way you can make your plot better for communication is to adjust the scales.\n",

+ "Scales control how the aesthetic mappings manifest visually.\n",

+ "\n",

+ "### Default scales\n",

+ "\n",

+ "Normally, **lets-plot** automatically adds scales for you and you don't need to worry about them. For example, when you type:\n",

+ "\n",

+ "```python\n",

+ "(\n",

+ " ggplot(mpg, aes(x=\"displ\", y=\"hwy\")) +\n",

+ " geom_point(aes(color=\"class\"))\n",

+ ")\n",

+ "```\n",

+ "\n",

+ "**lets-plot** is automatically doing this behind the scenes:\n",

+ "\n",

+ "```python\n",

+ "(\n",

+ " ggplot(mpg, aes(x=\"displ\", y=\"hwy\")) +\n",

+ " geom_point(aes(color=\"class\")) +\n",

+ " scale_x_continuous() +\n",

+ " scale_y_continuous() +\n",

+ " scale_color_discrete()\n",

+ ")\n",

+ "```"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "39332b3b",

"metadata": {},

"source": [

- "Note the naming scheme for scales: `.scale` followed by the name of the dimension, then `=so.`, then the name of the scale.\n",

- "The default scales are named according to the type of variable they align with: continuous, nominal, and so on.\n",

+ "Note the naming scheme for scales: `scale_` followed by the name of the aesthetic, then `_`, then the name of the scale.\n",

+ "The default scales are named according to the type of variable they align with: continuous, discrete, datetime, or date.\n",

+ "`scale_x_continuous()` puts the numeric values from `displ` on a continuous number line on the x-axis, `scale_color_discrete()` chooses a colour for each `class` of car, etc.\n",

+ "There are lots of non-default scales which you'll learn about below.\n",

"\n",

"The default scales have been carefully chosen to do a good job for a wide range of inputs.\n",

"Nevertheless, you might want to override the defaults for two reasons:\n",

@@ -256,195 +467,843 @@

" This allows you to do things like change the breaks on the axes, or the key labels on the legend.\n",

"\n",

"- You might want to replace the scale altogether, and use a completely different algorithm.\n",

- " Often you can do better than the default because you know more about the data.\n",

+ " Often you can do better than the default because you know more about the data.\n"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "c645247f",

+ "metadata": {},

+ "source": [

+ "### Axis ticks and legend keys\n",

"\n",

- "```{admonition} Exercise\n",

- "Try a plot with a scale setting of `x=\"log\"`.\n",

- "```"

+ "Collectively axes and legends get the somewhat confusing name **guides** in **lets-plot**. Axes are used for x and y aesthetics; legends are used for everything else.\n",

+ "\n",

+ "There are two primary arguments that affect the appearance of the ticks on the axes and the keys on the legend: `breaks` and `labels`.\n",

+ "Breaks controls the position of the ticks, or the values associated with the keys. If you like, the breaks *are* the ticks.\n",

+ "Labels controls the text label associated with each tick/key. We might more accurately call these *tick labels*.\n",

+ "The most common use of `breaks` is to override the default choice:\n"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "a95604d8",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "(\n",

+ " ggplot(mpg, aes(x=\"displ\", y=\"hwy\", color=\"drv\"))\n",

+ " + geom_point()\n",

+ " + scale_y_continuous(breaks=np.arange(15, 40, step=5))\n",

+ ")"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "bd1113b7",

+ "metadata": {},

+ "source": [

+ "You can use `labels` in the same way (i.e., pass in an array or list of strings the same length as `breaks`). To remove them altogether, though, you would have to use a theme, a topic we'll return to later.\n",

+ "You can also use `breaks` and `labels` to control the appearance of legends.\n",

+ "For discrete scales for categorical variables, `labels` can be a named list of the existing level names and the desired labels for them.\n"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "1a852304",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "(\n",

+ " ggplot(mpg, aes(x=\"displ\", y=\"hwy\", color=\"drv\"))\n",

+ " + geom_point()\n",

+ " + scale_color_discrete(labels=[\"4-wheel\", \"front\", \"rear\"])\n",

+ ")"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "184dfb30",

+ "metadata": {},

+ "source": [

+ "To change the formatting of the tick labels, use the `format=` keyword argument. This is useful to render currencies, percentages, and so on—though it's often easier for the reader to just see this symbol once in the axis label.\n",

+ "\n",

+ "In the example below, we read in the `diamonds` dataset and then format the tick labels on the price axis with `format=\"$.2s\"`; let's break this down:\n",

+ "\n",

+ "- the dollar sign says put a dollar sign in front of every number\n",

+ "- the .2 says use two significant digits\n",

+ "- the s says to use Système International (SI) prefixes, such as k for thousands\n",

+ "\n",

+ "There are a wealth of alternative options for formatting—it's best to use the [helpful page on formatting](https://lets-plot.org/pages/formats.html) in the documentation of **lets-plot** to find out more."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "40ac230e",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "diamonds = pd.read_csv(\n",

+ " \"https://vincentarelbundock.github.io/Rdatasets/csv/ggplot2/diamonds.csv\",\n",

+ " index_col=0,\n",

+ ")\n",

+ "diamonds[\"cut\"] = diamonds[\"cut\"].astype(\n",

+ " pd.CategoricalDtype(\n",

+ " categories=[\"Fair\", \"Good\", \"Very Good\", \"Premium\", \"Ideal\"], ordered=True\n",

+ " )\n",

+ ")\n",

+ "diamonds[\"color\"] = diamonds[\"color\"].astype(\n",

+ " pd.CategoricalDtype(categories=[\"D\", \"E\", \"F\", \"G\", \"H\", \"I\", \"J\"], ordered=True)\n",

+ ")"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "1520bb3c",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "(\n",

+ " ggplot(diamonds, aes(x=\"cut\", y=\"price\"))\n",

+ " + geom_boxplot()\n",

+ " + coord_flip()\n",

+ " + scale_y_continuous(format=\"$.2s\", breaks=np.arange(0, 19000, step=6000))\n",

+ ")"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "6f2edc1b",

+ "metadata": {},

+ "source": [

+ "Another use of breaks is when you have relatively few data points and want to highlight exactly where the observations occur. For example, take this plot that shows when each US president started and ended their term."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "9d1f993a",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "presidential = pd.read_csv(\n",

+ " \"https://vincentarelbundock.github.io/Rdatasets/csv/ggplot2/presidential.csv\",\n",

+ " index_col=0,\n",

+ ")\n",

+ "presidential = presidential.astype({\"start\": \"datetime64[ns]\", \"end\": \"datetime64[ns]\"})\n",

+ "presidential[\"id\"] = 33 + presidential.index\n",

+ "presidential.head()"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "cd2cc430",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "# (\n",

+ "# ggplot(presidential, aes(x=\"start\", y=\"id\")) +\n",

+ "# geom_point() +\n",

+ "# geom_segment(aes(xend=\"end\", yend=\"id\")) +\n",

+ "# scale_x_datetime(breaks=presidential[\"start\"])\n",

+ "# )"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "a2d0b3f1",

+ "metadata": {},

+ "source": [

+ "Note that this example isn't currently working; we have [raised an issue on the **lets-plot** GitHub page](https://github.com/JetBrains/lets-plot/issues/346) to get it fixed.\n"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "8b451c76",

+ "metadata": {},

+ "source": [

+ "### Legend layout\n",

+ "\n",

+ "You will most often use `breaks` and `labels` to tweak the axes.\n",

+ "While they both also work for legends, there are a few other techniques you are more likely to use.\n",

+ "\n",

+ "To control the overall position of the legend, you need to use a `theme()` setting.\n",

+ "We'll come back to themes at the end of the chapter, but in brief, they control the non-data parts of the plot.\n",

+ "The theme setting `legend_position` controls where the legend is drawn, and to demonstrate this we'll use `gggrid` to arrange all of the plots."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "52d6e86a",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "base = ggplot(mpg, aes(x=\"displ\", y=\"hwy\")) + geom_point(aes(color=\"class\"))\n",

+ "\n",

+ "p1 = base + theme(legend_position=\"right\") # the default\n",

+ "p2 = base + theme(legend_position=\"left\")\n",

+ "p3 = base + theme(legend_position=\"top\") + guides(color=guide_legend(nrow=3))\n",

+ "p4 = base + theme(legend_position=\"bottom\") + guides(color=guide_legend(nrow=3))\n",

+ "\n",

+ "gggrid([p1, p2, p3, p4], ncol=2)"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "7ce2507b",

+ "metadata": {},

+ "source": [

+ "If your plot is short and wide, place the legend at the top or bottom, and if it's tall and narrow, place the legend at the left or right. You can also use `legend_position = \"none\"` to suppress the display of the legend altogether.\n",

+ "\n",

+ "To control the display of individual legends, use `guides()` along with `guide_legend()` or `guide_colorbar()`."

]

},

{

"cell_type": "markdown",

- "id": "6845649b",

+ "id": "f27913c7",

"metadata": {},

"source": [

- "### Axis Ticks\n",

"\n",

- "You can specify axis ticks directly using the `tick` property on the `Scale` parameter:"

+ "### Replacing a scale\n",

+ "\n",

+ "Instead of just tweaking the details a little, you can instead replace the scale altogether.\n",

+ "There are two types of scales you're most likely to want to switch out: continuous position scales and colour scales.\n",

+ "Fortunately, the same principles apply to all the other aesthetics, so once you've mastered position and colour, you'll be able to quickly pick up other scale replacements.\n",

+ "\n",

+ "It's very useful to plot transformations of your variable.\n",

+ "For example, it's easier to see the precise relationship between `carat` and `price` if we log transform them. The way to do this is by using an `apply` function on the data that gets sent to `ggplot`:"

]

},

{

"cell_type": "code",

"execution_count": null,

- "id": "dcd15c76",

+ "id": "2c1d3f8d",

"metadata": {},

"outputs": [],

"source": [

"(\n",

- " so.Plot(mpg, x=\"displ\", y=\"hwy\", color=\"class\")\n",

- " .add(so.Dot())\n",

- " .scale(\n",

- " x=so.Continuous(),\n",

- " y=so.Continuous().tick(at=[0, 10, 20, 30, 40]),\n",

- " color=so.Nominal(),\n",

+ " ggplot(\n",

+ " diamonds.apply({\"carat\": np.log10, \"price\": np.log10}),\n",

+ " aes(x=\"carat\", y=\"price\"),\n",

" )\n",

+ " + geom_bin2d()\n",

+ ")"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "f19dbbec",

+ "metadata": {},

+ "source": [

+ "However, the disadvantage of this transformation is that the axes are now labelled with the transformed values, making it hard to interpret the plot. Instead of transforming the data before plotting, we can do the transformation with the scale. This is visually identical, except the axes are labelled on the original data scale."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "39b4ef8d",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "(\n",

+ " ggplot(diamonds, aes(x=\"carat\", y=\"price\"))\n",

+ " + geom_bin2d()\n",

+ " + scale_x_log10()\n",

+ " + scale_y_log10()\n",

+ ")"

+ ]

+ },
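To see why the two approaches place points identically but label the axes differently, here is a minimal standard-library sketch. The carat values are made up for illustration and are not taken from `diamonds`; the sketch only mimics what a log scale does and does not call **lets-plot**.

```python
import math

# Illustrative carat values (made up for this sketch, not taken from `diamonds`).
carats = [0.1, 0.5, 1.0, 10.0]

# Approach 1: transform the data. An axis drawn from these numbers
# would show log10 values such as -1, 0 and 1.
log_carats = [math.log10(c) for c in carats]

# Approach 2: keep the data as-is and let the scale do the transform.
# The positions are the same, but each tick is labelled in original units.
tick_positions = [-1, 0, 1]
tick_labels = [10**t for t in tick_positions]

print(log_carats)
print(tick_labels)
```

A tick at position `0` on the log axis is labelled `1` on the original scale, which is usually what a reader wants to see.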

+ {

+ "cell_type": "markdown",

+ "id": "4402c4de",

+ "metadata": {},

+ "source": [

+ "Another scale that is frequently customised is colour. The default categorical scale picks colours that are evenly spaced around the colour wheel. Useful alternatives are the ColorBrewer scales, which have been hand tuned to work better for people with common types of colour blindness. The two plots below look similar, but there is enough difference in the shades of red and green that the dots in the second plot can be distinguished even by people with red-green colour blindness."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "f06d7e40",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "(ggplot(mpg, aes(x=\"displ\", y=\"hwy\")) + geom_point(aes(color=\"drv\")))"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "6186b520",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "(\n",

+ " ggplot(mpg, aes(x=\"displ\", y=\"hwy\"))\n",

+ " + geom_point(aes(color=\"drv\"))\n",

+ " + scale_color_brewer(palette=\"Set1\")\n",

")"

]

},

{

"cell_type": "markdown",

- "id": "f56d0216",

+ "id": "f19af6ad",

"metadata": {},

"source": [

- "### Legend Keys"

+ "Don't forget simpler techniques for improving accessibility.\n",

+ "If there are just a few colors, you can add a redundant shape mapping.\n",

+ "This will also help ensure your plot is interpretable in black and white."

]

},

{

"cell_type": "markdown",

- "id": "d3631634",

+ "id": "253af5a4",

"metadata": {},

"source": [

- "### Legend Layout\n",

+ "The ColorBrewer scales are documented online. The sequential (top) and diverging (bottom) palettes are particularly useful if your categorical values are ordered, or have a \"middle\". This often arises if you've used `pd.cut()` to make a continuous variable into a categorical variable."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "bd347524",

+ "metadata": {

+ "tags": [

+ "remove-input"

+ ]

+ },

+ "outputs": [],

+ "source": [

+ "# remove-input\n",

+ "cmaps = [\n",

+ " (\n",

+ " \"Perceptually Uniform Sequential\",\n",

+ " [\"viridis\", \"plasma\", \"inferno\", \"magma\", \"cividis\"],\n",

+ " ),\n",

+ " (\n",

+ " \"Sequential\",\n",

+ " [\n",

+ " \"Blues\",\n",

+ " \"BuGn\",\n",

+ " \"BuPu\",\n",

+ " \"GnBu\",\n",

+ " \"Greens\",\n",

+ " \"Greys\",\n",

+ " \"Oranges\",\n",

+ " \"OrRd\",\n",

+ " \"PuBu\",\n",

+ " \"PuBuGn\",\n",

+ " \"PuRd\",\n",

+ " \"Purples\",\n",

+ " \"RdPu\",\n",

+ " \"Reds\",\n",

+ " \"YlGn\",\n",

+ " \"YlGnBu\",\n",

+ " \"YlOrBr\",\n",

+ " \"YlOrRd\",\n",

+ " ],\n",

+ " ),\n",

+ " (\n",

+ " \"Diverging\",\n",

+ " [\n",

+ " \"BrBG\",\n",

+ " \"PiYG\",\n",

+ " \"PRGn\",\n",

+ " \"PuOr\",\n",

+ " \"RdBu\",\n",

+ " \"RdGy\",\n",

+ " \"RdYlBu\",\n",

+ " \"RdYlGn\",\n",

+ " ],\n",

+ " ),\n",

+ " (\n",

+ " \"Qualitative\",\n",

+ " [\n",

+ " \"Pastel1\",\n",

+ " \"Pastel2\",\n",

+ " \"Paired\",\n",

+ " \"Accent\",\n",

+ " \"Dark2\",\n",

+ " \"Set1\",\n",

+ " \"Set2\",\n",

+ " \"Set3\",\n",

+ " \"tab10\",\n",

+ " \"tab20\",\n",

+ " \"tab20b\",\n",

+ " \"tab20c\",\n",

+ " ],\n",

+ " ),\n",

+ "]\n",

+ "\n",

+ "\n",

+ "gradient = np.linspace(0, 1, 256)\n",

+ "gradient = np.vstack((gradient, gradient))\n",

"\n",

- "[TODO]"

+ "\n",

+ "def plot_color_gradients(cmap_category, cmap_list):\n",

+ " # Create figure and adjust figure height to number of colourmaps\n",

+ " nrows = len(cmap_list)\n",

+ " figh = 0.35 + 0.15 + (nrows + (nrows - 1) * 0.1) * 0.22\n",

+ " fig, axs = plt.subplots(nrows=nrows, figsize=(6.4, figh))\n",

+ " fig.subplots_adjust(top=1 - 0.35 / figh, bottom=0.15 / figh, left=0.2, right=0.99)\n",

+ "\n",

+ " axs[0].set_title(cmap_category + \" colormaps\", fontsize=14)\n",

+ "\n",

+ " for ax, name in zip(axs, cmap_list):\n",

+ " ax.imshow(gradient, aspect=\"auto\", cmap=plt.get_cmap(name))\n",

+ " ax.text(\n",

+ " -0.01,\n",

+ " 0.5,\n",

+ " name,\n",

+ " va=\"center\",\n",

+ " ha=\"right\",\n",

+ " fontsize=10,\n",

+ " transform=ax.transAxes,\n",

+ " )\n",

+ "\n",

+ " # Turn off *all* ticks & spines, not just the ones with colourmaps.\n",

+ " for ax in axs:\n",

+ " ax.set_axis_off()\n",

+ "\n",

+ "\n",

+ "for cmap_category, cmap_list in cmaps[1:2]:\n",

+ " plot_color_gradients(cmap_category, cmap_list)\n",

+ "\n",

+ "plt.show()"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "d6350c71",

+ "metadata": {

+ "tags": [

+ "remove-input"

+ ]

+ },

+ "outputs": [],

+ "source": [

+ "# remove input\n",

+ "for cmap_category, cmap_list in cmaps[3:4]:\n",

+ " plot_color_gradients(cmap_category, cmap_list)"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "0063a574",

+ "metadata": {

+ "tags": [

+ "remove-input"

+ ]

+ },

+ "outputs": [],

+ "source": [

+ "# remove input\n",

+ "for cmap_category, cmap_list in cmaps[2:3]:\n",

+ " plot_color_gradients(cmap_category, cmap_list)"

]

},

{

"cell_type": "markdown",

- "id": "57d59667",

+ "id": "c32c2237",

+ "metadata": {},

+ "source": [

+ "When you have a predefined mapping between values and colours, use `scale_color_manual()`. For example, if we map presidential party to colour, we want to use the standard mapping of red for Republicans and blue for Democrats. One approach for assigning these colors is using hex colour codes:"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "9751058d",

"metadata": {},

+ "outputs": [],

"source": [

- "### Limits, aka 'zooming'\n",

+ "mini_presid = presidential.iloc[5:, :]\n",

"\n",

- "There are two ways to control the plot limits:\n",

+ "(\n",

+ " ggplot(mini_presid, aes(x=\"start\", y=\"id\", color=\"party\"))\n",

+ " + geom_point(size=3)\n",

+ " + geom_segment(aes(xend=\"end\", yend=\"id\"), size=1)\n",

+ " + scale_x_datetime(breaks=mini_presid[\"start\"], format=\"%Y\")\n",

+ " + scale_color_manual(values=[\"#00AEF3\", \"#E81B23\"], name=\"party\")\n",

+ ")"

+ ]

+ },
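When colours are passed as a plain list, it is easy to pair the wrong colour with the wrong category. The sketch below is one hedged way to keep the pairing explicit, using only the standard library. The party labels, the `party_colours` lookup, and the assumption that colours are assigned in order of first appearance are all illustrative and not confirmed by the **lets-plot** documentation.

```python
# Hypothetical lookup table: party label -> hex colour (labels assumed for this sketch).
party_colours = {"Democratic": "#00AEF3", "Republican": "#E81B23"}

# Illustrative party column, in row order (made up, not the real `presidential` data).
parties = ["Democratic", "Republican", "Republican", "Democratic", "Republican"]

# Categories in order of first appearance, without duplicates.
categories = list(dict.fromkeys(parties))

# Colour list aligned with that category order.
values = [party_colours[p] for p in categories]

print(categories)
print(values)
```

Building `values` from a dictionary means that reordering the rows changes the list automatically, rather than silently swapping red and blue.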

+ {

+ "cell_type": "markdown",

+ "id": "6be370b4",

+ "metadata": {},

+ "source": [

+ "You can also use typical colour names such as \"red\" and \"blue\".\n",

+ "\n",

+ "For continuous colour, you can use the built-in `scale_color_gradient()` or `scale_fill_gradient()`.\n",

+ "If you have a diverging scale, you can use `scale_color_gradient2()`. That allows you to give, for example, positive and negative values different colors. That's sometimes also useful if you want to distinguish points above or below the mean.\n",

"\n",

- "1. Adjusting what data are plotted\n",

- "2. Setting the limits in each scale\n",

+ "Another option is to use the viridis, magma, inferno, and plasma color scales developed for the extremely powerful imperative Python plotting package **[matplotlib](https://matplotlib.org/)**. The designers, Nathaniel Smith and Stéfan van der Walt, carefully tailored continuous color schemes that are perceptible to people with various forms of color blindness as well as perceptually uniform in both color and black and white. These scales are available as palettes in *lets-plot*. Here's an example using the continuous version of viridis (we'll generate some random data first):"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "644fd814",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "prng = np.random.default_rng(1837) # prng=pseudo-random number generator\n",

+ "df_rnd = pd.DataFrame(prng.standard_normal((1000, 2)), columns=[\"x\", \"y\"])\n",

+ "(\n",

+ " ggplot(df_rnd, aes(x=\"x\", y=\"y\"))\n",

+ " + geom_bin2d()\n",

+ " + coord_fixed()\n",

+ " + scale_fill_viridis(option=\"plasma\")\n",

+ " + labs(title=\"Plasma, continuous\")\n",

+ ")"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "e7cf0931",

+ "metadata": {},

+ "source": [

+ "### Zooming\n",

+ "\n",

+ "There are three ways to control the plot limits:\n",

+ "\n",

+ "1. Adjusting what data are plotted.\n",

+ "2. Setting the limits in each scale.\n",

+ "3. Setting `xlim` and `ylim` in `coord_cartesian()`.\n",

+ "\n",

+ "We'll demonstrate these options in a series of plots.\n",

+ "The first plot shows the relationship between engine size and fuel efficiency, coloured by type of drive train.\n",

+ "The second plot shows the same variables, but subsets the data that are plotted.\n",

+ "Subsetting the data has affected the x and y scales as well as the smooth curve.\n"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "25a29f38",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "(\n",

+ " ggplot(mpg, aes(x=\"displ\", y=\"hwy\"))\n",

+ " + geom_point(aes(color=\"drv\"))\n",

+ " + geom_smooth(method=\"loess\")\n",

+ ")"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "42318a59",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "mpg_condition = (\n",

+ " (mpg[\"displ\"] >= 5) & (mpg[\"displ\"] <= 6) & (mpg[\"hwy\"] >= 10) & (mpg[\"hwy\"] <= 25)\n",

+ ")\n",

"\n",

- "Here is the same plot done according to 1 and 2 respectively."

+ "(\n",

+ " ggplot(mpg.loc[mpg_condition], aes(x=\"displ\", y=\"hwy\"))\n",

+ " + geom_point(aes(color=\"drv\"))\n",

+ " + geom_smooth(method=\"loess\")\n",

+ ")"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "ec4c07d0",

+ "metadata": {},

+ "source": [

+ "Let's compare these to the two plots below where the first plot sets the `limits` on individual scales and the second plot sets them in `coord_cartesian()`.\n",

+ "We can see that reducing the limits is equivalent to subsetting the data.\n",

+ "Therefore, to zoom in on a region of the plot, it's generally best to use `coord_cartesian()`."

]

},

{

"cell_type": "code",

"execution_count": null,

- "id": "013471ab",

+ "id": "03001d5e",

"metadata": {},

"outputs": [],

"source": [

"(\n",

- " so.Plot(mpg, x=\"displ\", y=\"hwy\", color=\"class\")\n",

- " .add(so.Dot())\n",

- " .limit(x=(5, 7), y=(10, 30))\n",

+ " ggplot(mpg, aes(x=\"displ\", y=\"hwy\"))\n",

+ " + geom_point(aes(color=\"drv\"))\n",

+ " + geom_smooth(method=\"loess\")\n",

+ " + scale_x_continuous(limits=(5, 6))\n",

+ " + scale_y_continuous(limits=(10, 25))\n",

")"

]

},

{

"cell_type": "code",

"execution_count": null,

- "id": "1734b4f4",

+ "id": "dc3bb833",

"metadata": {},

"outputs": [],

"source": [

"(\n",

- " so.Plot(\n",

- " mpg.query(\"displ >= 5 & displ <= 7 & hwy >= 10 & hwy <= 30\"),\n",

- " x=\"displ\",\n",

- " y=\"hwy\",\n",

- " color=\"class\",\n",

- " ).add(so.Dot())\n",

+ " ggplot(mpg, aes(x=\"displ\", y=\"hwy\"))\n",

+ " + geom_point(aes(color=\"drv\"))\n",

+ " + geom_smooth(method=\"loess\")\n",

+ " + coord_cartesian(xlim=(5, 6), ylim=(10, 25))\n",

")"

]

},
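The difference between dropping data and merely cropping the view can be illustrated without any plotting. In this standard-library sketch, a simple mean stands in for the smooth curve, and the `(displ, hwy)` pairs are made up for illustration.

```python
from statistics import mean

# Illustrative (displ, hwy) pairs, made up for this sketch.
cars = [(1.8, 29), (2.5, 26), (4.0, 20), (5.3, 17), (5.7, 15), (6.5, 14)]


def in_window(displ, lo=5, hi=6):
    """Return True if the engine size falls inside the zoom window."""
    return lo <= displ <= hi


# Scale limits or subsetting: out-of-range rows are dropped *before* any summary.
subset_mean = mean(hwy for displ, hwy in cars if in_window(displ))

# coord_cartesian-style zoom: the summary uses all rows; only the view is cropped.
full_mean = mean(hwy for _, hwy in cars)
visible = [(d, h) for d, h in cars if in_window(d)]

print(subset_mean, full_mean, visible)
```

The two summaries differ because the first one never sees the rows outside the window, which is the same reason the smooth curve changes when limits are set on the scales.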

{

"cell_type": "markdown",

- "id": "89ad530b",

+ "id": "5d1fc3ee",

+ "metadata": {},

+ "source": [

+ "On the other hand, setting the `limits` on individual scales is generally more useful if you want to *expand* the limits, e.g., to match scales across different plots.\n",

+ "For example, if we extract two classes of cars and plot them separately, it's difficult to compare the plots because all three scales (the x-axis, the y-axis, and the colour aesthetic) have different ranges."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "aee538a8",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "suv = mpg.loc[mpg[\"class\"] == \"suv\"]\n",

+ "compact = mpg.loc[mpg[\"class\"] == \"compact\"]\n",

+ "(ggplot(suv, aes(x=\"displ\", y=\"hwy\", color=\"drv\")) + geom_point())"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "a82c8c23",

"metadata": {},

+ "outputs": [],

"source": [

- "While they convey the same information, the former looks better."

+ "(ggplot(compact, aes(x=\"displ\", y=\"hwy\", color=\"drv\")) + geom_point())"

]

},

{

"cell_type": "markdown",

- "id": "eeaa7fde",

+ "id": "be777179",

+ "metadata": {},

+ "source": [

+ "One way to overcome this problem is to share scales across multiple plots, training the scales with the `limits` of the full data.\n"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "db6fce43",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "x_scale = scale_x_continuous(limits=mpg[\"displ\"].agg([\"min\", \"max\"]).tolist())\n",

+ "y_scale = scale_y_continuous(limits=mpg[\"hwy\"].agg([\"min\", \"max\"]).tolist())\n",

+ "col_scale = scale_color_discrete(limits=mpg[\"drv\"].unique())"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "dd9e6606",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "(\n",

+ " ggplot(suv, aes(x=\"displ\", y=\"hwy\", color=\"drv\"))\n",

+ " + geom_point()\n",

+ " + x_scale\n",

+ " + y_scale\n",

+ " + col_scale\n",

+ ")"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "bdd8b2c5",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "(\n",

+ " ggplot(compact, aes(x=\"displ\", y=\"hwy\", color=\"drv\"))\n",

+ " + geom_point()\n",

+ " + x_scale\n",

+ " + y_scale\n",

+ " + col_scale\n",

+ ")"

+ ]

+ },
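The idea behind shared scales is simply to compute the limits once, from the full data, and reuse them for every subset. Here is a minimal standard-library sketch. The rows are made up for illustration, and `limits` is a hypothetical helper, not part of **lets-plot**.

```python
# Illustrative rows, made up for this sketch: (class, displ, hwy).
rows = [
    ("suv", 4.0, 17),
    ("suv", 5.7, 15),
    ("suv", 6.5, 14),
    ("compact", 1.8, 29),
    ("compact", 2.0, 31),
    ("compact", 2.8, 26),
]


def limits(values):
    """Return (lower, upper) so the pair can be passed as axis limits."""
    values = list(values)
    return (min(values), max(values))


# Limits computed per subset differ, which makes separate plots hard to compare.
suv_x = limits(d for c, d, _ in rows if c == "suv")
compact_x = limits(d for c, d, _ in rows if c == "compact")

# Limits computed once from the full data can be reused for every plot.
shared_x = limits(d for _, d, _ in rows)
shared_y = limits(h for _, _, h in rows)

print(suv_x, compact_x, shared_x, shared_y)
```

Returning the pair as `(lower, upper)` keeps the order unambiguous when the result is handed to a `limits=` argument.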

+ {

+ "cell_type": "markdown",

+ "id": "577d8648",

+ "metadata": {},

+ "source": [

+ "In this particular case, you could have simply used faceting, but this technique is more generally useful if, for instance, you want to spread plots over multiple pages of a report.\n"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "4094830b",

+ "metadata": {},

+ "source": [

+ "### Exercises\n",

+ "\n",

+ "1. What is the first argument to every scale?\n",

+ " How does it compare to `labs()`?\n",

+ "\n",

+ "2. Change the display of the presidential terms by:\n",

+ "\n",

+ " a. Combining the two variants that customize colors and x axis breaks.\n",

+ " b. Improving the display of the y axis.\n",

+ " c. Labelling each term with the name of the president.\n",

+ " d. Adding informative plot labels.\n",

+ " e. Placing breaks every 4 years (this is trickier than it seems!).\n"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "8b574471",

"metadata": {},

"source": [

"## Themes\n",

"\n",

- "Seaborn comes with several built-in themes that you can switch between by using"

+ "Finally, you can customise the non-data elements of your plot with a theme:\n"

]

},

{

"cell_type": "code",

"execution_count": null,

- "id": "8749bccd",

+ "id": "0b2364ca",

"metadata": {},

"outputs": [],

"source": [

- "import seaborn as sns\n",

- "\n",

- "sns.set_theme(style=\"darkgrid\", palette=\"dark\")\n",

+ "(\n",

+ " ggplot(mpg, aes(x=\"displ\", y=\"hwy\"))\n",

+ " + geom_point(aes(color=\"class\"))\n",

+ " + geom_smooth(se=False)\n",

+ " + theme_grey()\n",

+ ")"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "7814bb4d",

+ "metadata": {},

+ "source": [

+ "**lets-plot** includes several built-in themes that you can find [here](https://lets-plot.org/pages/api.html#predefined-themes). You can also create your own themes, if you are trying to match a particular corporate or journal style.\n",

"\n",

- "(so.Plot(mpg, x=\"displ\", y=\"hwy\", color=\"class\").add(so.Dot()))"

+ "Here's an example of changing multiple `theme()` settings:"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "67bfa9c8",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "(\n",

+ " ggplot(mpg, aes(x=\"displ\", color=\"drv\"))\n",

+ " + geom_density(size=2)\n",

+ " + ggtitle(\"Density of drives\")\n",

+ " + theme(\n",

+ " axis_line=element_line(size=4),\n",

+ " axis_ticks_length=10,\n",

+ " axis_title_y=\"blank\",\n",

+ " legend_position=[1, 1],\n",

+ " legend_justification=[1, 1],\n",

+ " panel_background=element_rect(color=\"black\", fill=\"#eeeeee\", size=2),\n",

+ " panel_grid=element_line(color=\"black\", size=1),\n",

+ " )\n",

+ ")"

]

},

{

"cell_type": "markdown",

- "id": "b09cf2b2",

+ "id": "5b05b5da",

"metadata": {},

"source": [

- "Note that you can also create your own themes using **matplotlib**, the library that sits under **seaborn** (this book uses a custom theme).\n"

+ "### Exercises\n",

+ "\n",

+ "1. Make the axis labels of your plot blue and bolded.\n"

]

},

{

"cell_type": "markdown",

- "id": "310e4b73",

+ "id": "a56216db",

"metadata": {},

"source": [

- "## Saving Plots\n",

+ "## Layout\n",

"\n",

- "There are lots of output options to choose from to save your file to. Remember that, for graphics, *vector formats* are generally better than *raster formats*. In practice, this means saving plots in svg or pdf formats over jpg or png file formats. The svg format works in a lot of contexts (including Microsoft Word) and is a good default. To choose between formats, just supply the file extension and the file type will change automatically, eg \"chart.svg\" for svg or \"chart.png\" for png (thought note that raster formats often have extra options, like how many dots per inch to use)."

+ "So far we've talked about how to create and modify a single plot.\n",

+ "What if you have multiple plots you want to lay out in a certain way? You can do that. To place two plots next to each other, you can simply put them in a list and call `gggrid` on the list. Note that you first need to create the plots and save them as objects (in the following example they're called `p1` and `p2`).\n"

]

},

{

"cell_type": "code",

"execution_count": null,

- "id": "d1492b5f",

+ "id": "a8081df4",

"metadata": {},

"outputs": [],

"source": [

- "(so.Plot(mpg, x=\"displ\", y=\"hwy\", color=\"class\").add(so.Dot()).save(\"output_chart.svg\"))"

+ "p1 = ggplot(mpg, aes(x=\"displ\", y=\"hwy\")) + geom_point() + labs(title=\"Plot 1\")\n",

+ "p2 = ggplot(mpg, aes(x=\"drv\", y=\"hwy\")) + geom_boxplot() + labs(title=\"Plot 2\")\n",

+ "gggrid([p1, p2])"

]

},

{

"cell_type": "markdown",

- "id": "6ca1b42b",

+ "id": "b0773270",

"metadata": {},

"source": [

- "To double check this works, let's use the terminal. We'll try the command `ls`, which lists everything in directory, and `grep *.svg` to pull out any files that end in `.svg` from what is returned by `ls`. These are strung together as commands by a `|`. (Note that the leading exclamation mark below just tells the software that builds this book to use the terminal.)"

+ "## Saving plots to file\n",

+ "\n",

+ "There are lots of output formats to choose from when saving a plot. Remember that, for graphics, *vector formats* are generally better than *raster formats*. In practice, this means saving plots in svg or pdf formats over jpg or png file formats. The svg format works in a lot of contexts (including Microsoft Word) and is a good default. To choose between formats, just supply the file extension and the file type will change automatically, e.g. \"chart.svg\" for svg or \"chart.png\" for png (though note that raster formats often have extra options, like how many dots per inch to use).\n",

+ "\n",

+ "Let's try this out using the figure we made in the previous section, `p1`. `path=\".\"` just drops the file in the current directory."

]

},

{

"cell_type": "code",

"execution_count": null,

- "id": "8ffc45b8",

+ "id": "710a6a4f",

"metadata": {},

"outputs": [],

"source": [

- "!ls | grep *.svg"

+ "ggsave(p1, \"chart.svg\", path=\".\")"

]

},

{

"cell_type": "markdown",

- "id": "549e2576",

+ "id": "7781794a",

"metadata": {},

"source": [

- "Great! It looks like our file saved successfully."

+ "To double check this has worked, let's use the terminal. We'll try the command `ls`, which lists everything in the current directory, and `grep *.svg` to pull out any files that end in `.svg` from what is returned by `ls`. These are strung together as commands by a `|`. (Note that the leading exclamation mark below just tells the software that builds this book to use the terminal.)"

]

},

{

"cell_type": "code",

"execution_count": null,

- "id": "e7cf90a9",

+ "id": "bc831b1b",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "!ls | grep *.svg"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "9cc10ab7",

"metadata": {

"tags": [

"remove-cell"

@@ -455,7 +1314,22 @@

"# remove-cell\n",

"import os\n",

"\n",

- "os.remove(\"output_chart.svg\")"

+ "os.remove(\"chart.svg\")"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "793f4a04",

+ "metadata": {},

+ "source": [

+ "## Summary\n",

+ "\n",

+ "In this chapter you've learned about adding plot labels such as title, subtitle, caption as well as modifying default axis labels, using annotation to add informational text to your plot or to highlight specific data points, customising the axis scales, and changing the theme of your plot.\n",

+ "You've also learned about combining multiple plots in a single graph using both simple and complex plot layouts.\n",

+ "\n",

+ "While you've so far learned about how to make many different types of plots and how to customise them using a variety of techniques, we've barely scratched the surface of what you can create with **lets-plot**.\n",

+ "\n",

+ "The best place to go for further information is the [**lets-plot** documentation](https://lets-plot.org/)."

]

}

],

@@ -484,7 +1358,7 @@

"name": "python",

"nbconvert_exporter": "python",

"pygments_lexer": "ipython3",

- "version": "3.9.12"

+ "version": "3.10.12"

},

"toc-showtags": true

},

diff --git a/data-transform.ipynb b/data-transform.ipynb

index afa8864..2468c80 100644

--- a/data-transform.ipynb

+++ b/data-transform.ipynb

@@ -135,6 +135,16 @@

"We would like to work with the `\"time_hour\"` variable in the form of a datetime; fortunately, **pandas** makes it easy to perform that conversion on that specific column"

]

},

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "ffb275b0",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "flights[\"time_hour\"]"

+ ]

+ },

{

"cell_type": "code",

"execution_count": null,

@@ -142,7 +152,7 @@

"metadata": {},

"outputs": [],

"source": [

- "flights[\"time_hour\"] = pd.to_datetime(flights[\"time_hour\"], format=\"%Y-%m-%d %H:%M:%S\")"

+ "flights[\"time_hour\"] = pd.to_datetime(flights[\"time_hour\"], format=\"%Y-%m-%dT%H:%M:%SZ\")"

]

},
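The format string passed to `pd.to_datetime` uses the same strptime-style directives as Python's standard library, so the effect of the two layouts can be checked in isolation. This is a minimal sketch with made-up timestamp strings, not values read from `flights`.

```python
from datetime import datetime

# Illustrative timestamp strings in the two layouts (made up for this sketch).
iso_style = "2013-01-01T05:00:00Z"
space_style = "2013-01-01 05:00:00"

# Each layout parses with its matching format string.
parsed_iso = datetime.strptime(iso_style, "%Y-%m-%dT%H:%M:%SZ")
parsed_space = datetime.strptime(space_style, "%Y-%m-%d %H:%M:%S")

# The wrong format for a given layout raises ValueError rather than guessing.
try:
    datetime.strptime(iso_style, "%Y-%m-%d %H:%M:%S")
    mismatch_raises = False
except ValueError:
    mismatch_raises = True

print(parsed_iso, parsed_space, mismatch_raises)
```

Here the literal `T` and `Z` in the format string match those characters in the input; they are not directives, so the parsed value carries no time zone information.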

{

@@ -1199,7 +1209,7 @@

"name": "python",

"nbconvert_exporter": "python",

"pygments_lexer": "ipython3",

- "version": "3.9.12"

+ "version": "3.10.12"

},

"toc-showtags": true

},

diff --git a/data-visualise.ipynb b/data-visualise.ipynb

index 4dab122..f2d890d 100644

--- a/data-visualise.ipynb

+++ b/data-visualise.ipynb

@@ -12,31 +12,13 @@

"\n",

"> \"The simple graph has brought more information to the data analyst's mind than any other device.\" --- John Tukey\n",

"\n",

- "This chapter will teach you how to visualise your data using the **seaborn** package.\n",

+ "This chapter will teach you how to visualise your data using **[lets-plot](https://lets-plot.org/)**.\n",

"\n",

- "There are a plethora of other options (and packages) for data visualisation using code. There are broadly two categories of approach to using code to create data visualisations: imperative, where you build what you want, and declarative, where you say what you want. Choosing which to use involves a trade-off: imperative libraries offer you flexibility but at the cost of some verbosity; declarative libraries offer you a quick way to plot your data, but only if it’s in the right format to begin with, and customisation may be more difficult.\n",

+ "There are broadly two categories of approach to using code to create data visualisations: imperative, where you build what you want, and declarative, where you say what you want. Choosing which to use involves a trade-off: imperative libraries offer you flexibility but at the cost of some verbosity; declarative libraries offer you a quick way to plot your data, but only if it’s in the right format to begin with, and customisation to special chart types is more difficult. Python has many excellent plotting packages, including perhaps the most powerful imperative plotting package around, **matplotlib**.\n",

"\n",

- "**seaborn** is a declarative visualisation package, and these can be easier to get started with. But it's built on top of an imperative package, the incredibly powerful **matplotlib**, so you can always dig further and tweak details if you need to. However, in this chapter, we'll focus on using **seaborn** declaratively."

- ]

- },

- {

- "cell_type": "code",

- "execution_count": null,

- "id": "51a55374",

- "metadata": {

- "tags": [

- "remove-cell"

- ]

- },

- "outputs": [],

- "source": [

- "# remove cell\n",

- "import matplotlib_inline.backend_inline\n",

- "import matplotlib.pyplot as plt\n",

+ "However, we'll get further faster by learning one system and applying it in many places, and the beauty of declarative plotting is that it covers lots of standard charts simply and well. **lets-plot** implements the so-called **grammar of graphics**, a coherent declarative system for describing and building graphs.\n",

"\n",

- "# Plot settings\n",

- "plt.style.use(\"https://github.com/aeturrell/python4DS/raw/main/plot_style.txt\")\n",

- "matplotlib_inline.backend_inline.set_matplotlib_formats(\"svg\")"

+ "We will start by creating a simple scatterplot and use that to introduce aesthetic mappings and geometric objects, the fundamental building blocks of **lets-plot**. We will then walk you through visualising distributions of single variables as well as visualising relationships between two or more variables. We’ll finish off with saving your plots and troubleshooting tips."

]

},

{

@@ -46,17 +28,19 @@

"source": [

"### Prerequisites\n",

"\n",

- "You will need to install the **seaborn** package for this chapter (`pip install seaborn`). Once you've done this, you'll need to import the **seaborn** library into your session using"

+ "You will need to install the **lets-plot** package for this chapter. To do this, open up the command line of your computer, type in `pip install lets-plot`, and hit enter."

]

},

{

- "cell_type": "code",

- "execution_count": null,

- "id": "ae4a818a",

+ "cell_type": "markdown",

+ "id": "792902c7",

"metadata": {},

- "outputs": [],

"source": [

- "import seaborn.objects as so"

+ "```{note}\n",

+ "The command line can be opened within Visual Studio Code and Codespaces by going to View -> Terminal.\n",

+ "```\n",

+ "\n",

+ "Note that you only need to install a package once in each Python environment."

]

},

{

@@ -64,805 +48,1056 @@

"id": "e0ad70c8",

"metadata": {},

"source": [

- "The second import brings in the plotting part of **seaborn**.\n",

- "\n",

- "## First Steps\n",

+ "We'll also need to have the **pandas** package installed—this package, which we'll be seeing a lot of, is for data. You can similarly install it by running `pip install pandas` on the command line.\n",

"\n",

- "Let's use our first graph to answer a question: Do cars with big engines use more fuel than cars with small engines? You probably already have an answer, but try to make your answer precise. What does the relationship between engine size and fuel efficiency look like? Is it positive? Negative? Linear? Non-linear?\n",

- "\n",

- "### The `mpg` data frame\n",

- "\n",

- "You can test your answer with the `mpg` data frame found in **seaborn** and obtained from the internet using the **pandas** package.\n",

- "\n",

- "A data frame is a rectangular collection of variables (in the columns) and observations (in the rows). `mpg` contains observations collected by the US Environmental Protection Agency on 38 car models."

+ "Finally, we'll also need some data (you can't science without data). We'll be using the Palmer penguins dataset. Unusually, this can also be installed as a package: normally you would load data from a file, but these data are so popular for tutorials that they've found their way into an installable package. Run `pip install palmerpenguins` to get these data."

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "8852373a",

+ "metadata": {},

+ "source": [

+ "Our next task is to load these into our Python session, either in a Python notebook cell within a Jupyter Notebook, by writing it in a script that we then send to the interactive window, or by typing it directly into the interactive window and hitting shift and enter. Here's the code:"

]

},

{

"cell_type": "code",

"execution_count": null,

- "id": "0cf986aa",

+ "id": "a86fb211",

"metadata": {},

"outputs": [],

"source": [

"import pandas as pd\n",

+ "from palmerpenguins import load_penguins\n",

+ "from lets_plot import *\n",

"\n",

- "mpg = pd.read_csv(\n",

- " \"https://vincentarelbundock.github.io/Rdatasets/csv/ggplot2/mpg.csv\", index_col=0\n",

- ")\n",

- "mpg"

+ "LetsPlot.setup_html()"

]

},

{

"cell_type": "markdown",

- "id": "cc310b4f",

+ "id": "4443f4dd",

"metadata": {},

"source": [

- "Among the variables in `mpg` are:\n",

- "\n",

- "1. `displ`, a car's engine size, in litres.\n",

+ "These lines import the **pandas** package, the `load_penguins` function from **palmerpenguins**, and all (`*`) of the functions of the **lets-plot** package. The final line allows charts to display in HTML."

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "4bc87ab8",

+ "metadata": {},

+ "source": [

+ "## First Steps\n",

"\n",

- "2. `hwy`, a car's fuel efficiency on the highway, in miles per gallon (mpg). A car with a low fuel efficiency consumes more fuel than a car with a high fuel efficiency when they travel the same distance."

+ "Do penguins with longer flippers weigh more or less than penguins with shorter flippers? You probably already have an answer, but try to make your answer precise. What does the relationship between flipper length and body mass look like? Is it positive? Negative? Linear? Nonlinear? Does the relationship vary by the species of the penguin? How about by the island where the penguin lives? Let’s create visualisations that we can use to answer these questions."

]

},

{

"cell_type": "markdown",

- "id": "339966d7",

+ "id": "e4eb9c4f",

"metadata": {},

"source": [

- "### Creating a Plot\n",

+ "### The `penguins` data frame\n",

+ "\n",

+ "You can test your answers to those questions with the `penguins` data frame found in **palmerpenguins** (loaded via `from palmerpenguins import load_penguins`). A data frame is a rectangular collection of variables (in the columns) and observations (in the rows). `penguins` contains 344 observations collected and made available by Dr. Kristen Gorman and the Palmer Station, Antarctica LTER {cite:p}`horst2020palmerpenguins`.\n",

+ "\n",

+ "To make the discussion easier, let's define some terms:\n",

+ "\n",

+ "- A **variable** is a quantity, quality, or property that you can measure.\n",

+ "\n",

+ "- A **value** is the state of a variable when you measure it.\n",

+ " The value of a variable may change from measurement to measurement.\n",

+ "\n",

+ "- An **observation** is a set of measurements made under similar conditions (you usually make all of the measurements in an observation at the same time and on the same object).\n",

+ " An observation will contain several values, each associated with a different variable.\n",

+ " We'll sometimes refer to an observation as a data point.\n",

+ "\n",

+ "- **Tabular data** is a set of values, each associated with a variable and an observation.\n",

+ " Tabular data is *tidy* if each value is placed in its own \"cell\", each variable in its own column, and each observation in its own row.\n",

+ "\n",

+ "In this context, a variable refers to an attribute of all the penguins, and an observation refers to all the attributes of a single penguin.\n",

"\n",

- "To plot `mpg`, run this code to put `displ` on the x-axis and `hwy` on the y-axis:"

+ "Type the name of the data frame in the interactive window and Python will print a preview of its contents.\n",

+ "Note the dimensions reported alongside this preview: that's the shape of your data (344 rows, 8 columns), which is also available as `penguins.shape`."

]

},

{

"cell_type": "code",

"execution_count": null,

- "id": "1b12b0ca",

+ "id": "0cf986aa",

"metadata": {},

"outputs": [],

"source": [

- "so.Plot(mpg, x=\"displ\", y=\"hwy\").add(so.Dot())"

+ "penguins = load_penguins()\n",

+ "penguins"

]

},

{

"cell_type": "markdown",

- "id": "7272e621",

+ "id": "cc310b4f",

"metadata": {},

"source": [

- "The plot shows a negative relationship between engine size (`displacement`) and fuel efficiency (`mpg`). In other words, cars with smaller engine sizes have higher fuel efficiency and, in general, as engine size increases, fuel efficiency decreases. Does this confirm or refute your hypothesis about fuel efficiency and engine size?\n",

- "\n",

- "With **seaborn**, you begin a plot with the function `so.Plot()`. **seaborn** creates a coordinate system that you can add layers to. The first argument of `so.Plot()` is the dataset to use in the graph. So `so.Plot(mpg)` creates an empty graph, but it's not very interesting so I'm not going to show it here.\n",

- "\n",

- "You complete your graph by adding one or more layers to the plot. The function `.add(so.Dot())` adds a layer of points to your plot, creating a scatterplot. You can choose between telling `so.Plot` what the x and y axis variables are or passing it directly to `.add`.\n",

- "\n",

- "**seaborn** comes with many functions that each add a different type of layer to a plot. You'll learn a whole bunch of them throughout this chapter."

+ "For an alternative view, where you can see just the first few observations of each variable, use `penguins.head()`, which returns the first five rows by default."

]

},

{

- "cell_type": "markdown",

- "id": "c5e295b2",

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "23c75ba7",

"metadata": {},

+ "outputs": [],

"source": [

- "### A graphing template\n",

- "\n",

- "Let's turn this code into a reusable template for making graphs with **seaborn**. To make a graph, replace the bracketed sections in the code below with a dataset, a geom function, or a collection of mappings.\n",

- "\n",

- "```python\n",

- "so.Plot(, x=, y=).add(so.)\n",

- "```\n",

- "\n",

- "The rest of this chapter will show you how to complete and extend this template to make different types of graphs."

+ "penguins.head()"

]

},

{

"cell_type": "markdown",

- "id": "351b59e2",

+ "id": "c3eb1881",

"metadata": {},

"source": [

- "### Exercises\n",

+ "Among the variables in `penguins` are:\n",

"\n",

- "1. Run `so.Plot(mpg)`.\n",

- " What do you see?\n",

+ "1. `species`: a penguin's species (Adelie, Chinstrap, or Gentoo).\n",

"\n",

- "2. How many rows are in `mpg` (the data frame)?\n",

- " How many columns?\n",

+ "2. `flipper_length_mm`: length of a penguin's flipper, in millimetres.\n",

"\n",

- "3. Make a scatterplot of `mpg` vs `cylinders`.\n",

+ "3. `body_mass_g`: body mass of a penguin, in grams.\n",

"\n",

- "4. What happens if you make a scatterplot of `class` vs `drv`? Why is the plot not useful?"

+ "To learn more about `penguins`, open the help page of its data-loading function by running `help(load_penguins)`.\n"

]

},

{

"cell_type": "markdown",

- "id": "e5867e3f",

+ "id": "caf04bde",

"metadata": {},

"source": [

- "## Aesthetic mappings\n",

+ "### Ultimate Goal\n",

"\n",

- "> \"The greatest value of a picture is when it forces us to notice what we never expected to see.\" --- John Tukey\n",

- "\n",

- "In the plot below, one group of points (highlighted in red) seems to fall outside of the linear trend. These cars have a higher mileage than you might expect. How can you explain these cars?\n"

+ "Our ultimate goal in this chapter is to recreate the following visualisation displaying the relationship between flipper lengths and body masses of these penguins, taking into consideration the species of the penguin."

]

},

{

"cell_type": "code",

"execution_count": null,

- "id": "11877e4c",

+ "id": "574fe39f",

"metadata": {

"tags": [

- "remove-input"

+ "remove-cell"

]

},

"outputs": [],

"source": [

- "# remove input\n",

- "so.Plot(mpg, x=\"displ\", y=\"hwy\").add(so.Dot()).add(\n",