diff --git a/colossalai/booster/plugin/hybrid_parallel_plugin.py b/colossalai/booster/plugin/hybrid_parallel_plugin.py

index 35a88d1e8980..42942aaeb89d 100644

--- a/colossalai/booster/plugin/hybrid_parallel_plugin.py

+++ b/colossalai/booster/plugin/hybrid_parallel_plugin.py

@@ -37,7 +37,8 @@ def __init__(self, module: Module, precision: str, shard_config: ShardConfig, dp

self.shared_param_process_groups = []

for shared_param in self.shared_params:

if len(shared_param) > 0:

- self.stage_manager.init_process_group_by_stages(list(shared_param.keys()))

+ self.shared_param_process_groups.append(

+ self.stage_manager.init_process_group_by_stages(list(shared_param.keys())))

if precision == 'fp16':

module = module.half().cuda()

elif precision == 'bf16':

@@ -49,8 +50,10 @@ def __init__(self, module: Module, precision: str, shard_config: ShardConfig, dp

def sync_shared_params(self):

for shared_param, group in zip(self.shared_params, self.shared_param_process_groups):

- param = shared_param[self.stage_manager.stage]

- dist.all_reduce(param.grad, group=group)

+ if self.stage_manager.stage in shared_param:

+ param = shared_param[self.stage_manager.stage]

+ dist.all_reduce(param.grad, group=group)

+ dist.barrier()

def no_sync(self) -> Iterator[None]:

# no sync grads across data parallel

diff --git a/colossalai/kernel/cuda_native/flash_attention.py b/colossalai/kernel/cuda_native/flash_attention.py

index 3db7374509a0..91bef0908dbb 100644

--- a/colossalai/kernel/cuda_native/flash_attention.py

+++ b/colossalai/kernel/cuda_native/flash_attention.py

@@ -6,6 +6,7 @@

import math

import os

import subprocess

+import warnings

import torch

@@ -14,7 +15,7 @@

HAS_MEM_EFF_ATTN = True

except ImportError:

HAS_MEM_EFF_ATTN = False

- print('please install xformers from https://github.com/facebookresearch/xformers')

+ warnings.warn(f'please install xformers from https://github.com/facebookresearch/xformers')

if HAS_MEM_EFF_ATTN:

@@ -22,7 +23,12 @@

from einops import rearrange

from xformers.ops.fmha import MemoryEfficientAttentionCutlassOp

- from xformers.ops.fmha.attn_bias import BlockDiagonalMask, LowerTriangularMask, LowerTriangularMaskWithTensorBias

+ from xformers.ops.fmha.attn_bias import (

+ BlockDiagonalCausalMask,

+ BlockDiagonalMask,

+ LowerTriangularMask,

+ LowerTriangularMaskWithTensorBias,

+ )

from .scaled_softmax import AttnMaskType

@@ -86,11 +92,14 @@ def backward(ctx, grad_output):

class ColoAttention(torch.nn.Module):

- def __init__(self, embed_dim: int, num_heads: int, dropout: float = 0.0):

+ def __init__(self, embed_dim: int, num_heads: int, dropout: float = 0.0, scale=None):

super().__init__()

assert embed_dim % num_heads == 0, \

f"the embed dim ({embed_dim}) is not divisible by the number of attention heads ({num_heads})."

- self.scale = 1 / math.sqrt(embed_dim // num_heads)

+ if scale is not None:

+ self.scale = scale

+ else:

+ self.scale = 1 / math.sqrt(embed_dim // num_heads)

self.dropout = dropout

@staticmethod

@@ -116,7 +125,7 @@ def forward(self,

bias: Optional[torch.Tensor] = None):

batch_size, tgt_len, src_len = query.shape[0], query.shape[1], key.shape[1]

attn_bias = None

- if attn_mask_type == AttnMaskType.padding: # bert style

+ if attn_mask_type and attn_mask_type.value % 2 == 1: # bert style

assert attn_mask is not None, \

f"attention mask {attn_mask} is not valid for attention mask type {attn_mask_type}."

assert attn_mask.dim() == 2, \

@@ -134,7 +143,10 @@ def forward(self,

if batch_size > 1:

query = rearrange(query, "b s ... -> c (b s) ...", c=1)

key, value = self.unpad(torch.stack([query, key, value], dim=2), kv_indices).unbind(dim=2)

- attn_bias = BlockDiagonalMask.from_seqlens(q_seqlen, kv_seqlen)

+ if attn_mask_type == AttnMaskType.padding:

+ attn_bias = BlockDiagonalMask.from_seqlens(q_seqlen, kv_seqlen)

+ elif attn_mask_type == AttnMaskType.paddedcausal:

+ attn_bias = BlockDiagonalCausalMask.from_seqlens(q_seqlen, kv_seqlen)

elif attn_mask_type == AttnMaskType.causal: # gpt style

attn_bias = LowerTriangularMask()

@@ -146,7 +158,7 @@ def forward(self,

out = memory_efficient_attention(query, key, value, attn_bias=attn_bias, p=self.dropout, scale=self.scale)

- if attn_mask_type == AttnMaskType.padding and batch_size > 1:

+ if attn_mask_type and attn_mask_type.value % 2 == 1 and batch_size > 1:

out = self.repad(out, q_indices, batch_size, tgt_len)

out = rearrange(out, 'b s h d -> b s (h d)')

diff --git a/colossalai/kernel/cuda_native/scaled_softmax.py b/colossalai/kernel/cuda_native/scaled_softmax.py

index 24e458bb3ea5..41cd4b20faa1 100644

--- a/colossalai/kernel/cuda_native/scaled_softmax.py

+++ b/colossalai/kernel/cuda_native/scaled_softmax.py

@@ -19,6 +19,7 @@

class AttnMaskType(enum.Enum):

padding = 1

causal = 2

+ paddedcausal = 3

class ScaledUpperTriangMaskedSoftmax(torch.autograd.Function):

@@ -139,7 +140,7 @@ def is_kernel_available(self, mask, b, np, sq, sk):

if 0 <= sk <= 2048:

batch_per_block = self.get_batch_per_block(sq, sk, b, np)

- if self.attn_mask_type == AttnMaskType.causal:

+ if self.attn_mask_type.value > 1:

if attn_batches % batch_per_block == 0:

return True

else:

@@ -151,7 +152,7 @@ def forward_fused_softmax(self, input, mask):

b, np, sq, sk = input.size()

scale = self.scale if self.scale is not None else 1.0

- if self.attn_mask_type == AttnMaskType.causal:

+ if self.attn_mask_type.value > 1:

assert sq == sk, "causal mask is only for self attention"

# input is 3D tensor (attn_batches, sq, sk)

diff --git a/colossalai/pipeline/p2p.py b/colossalai/pipeline/p2p.py

index f741b8363f13..af7a00b5c720 100644

--- a/colossalai/pipeline/p2p.py

+++ b/colossalai/pipeline/p2p.py

@@ -3,6 +3,7 @@

import io

import pickle

+import re

from typing import Any, List, Optional, Union

import torch

@@ -31,7 +32,10 @@ def _cuda_safe_tensor_to_object(tensor: torch.Tensor, tensor_size: torch.Size) -

if b'cuda' in buf:

buf_array = bytearray(buf)

device_index = torch.cuda.current_device()

- buf_array[buf_array.find(b'cuda') + 5] = 48 + device_index

+ # There might be more than one output tensors during forward

+ for cuda_str in re.finditer(b'cuda', buf_array):

+ pos = cuda_str.start()

+ buf_array[pos + 5] = 48 + device_index

buf = bytes(buf_array)

io_bytes = io.BytesIO(buf)

diff --git a/colossalai/pipeline/policy/__init__.py b/colossalai/pipeline/policy/__init__.py

new file mode 100644

index 000000000000..fd9e6e04588e

--- /dev/null

+++ b/colossalai/pipeline/policy/__init__.py

@@ -0,0 +1,22 @@

+from typing import Any, Dict, List, Optional, Tuple, Type

+

+from torch import Tensor

+from torch.nn import Module, Parameter

+

+from colossalai.pipeline.stage_manager import PipelineStageManager

+

+from .base import Policy

+from .bert import BertModel, BertModelPolicy

+

+POLICY_MAP: Dict[Type[Module], Type[Policy]] = {

+ BertModel: BertModelPolicy,

+}

+

+

+def pipeline_parallelize(

+ model: Module,

+ stage_manager: PipelineStageManager) -> Tuple[Dict[str, Parameter], Dict[str, Tensor], List[Dict[int, Tensor]]]:

+ if type(model) not in POLICY_MAP:

+ raise NotImplementedError(f"Policy for {type(model)} not implemented")

+ policy = POLICY_MAP[type(model)](stage_manager)

+ return policy.parallelize_model(model)

diff --git a/colossalai/pipeline/policy/base.py b/colossalai/pipeline/policy/base.py

new file mode 100644

index 000000000000..9736f1004fe4

--- /dev/null

+++ b/colossalai/pipeline/policy/base.py

@@ -0,0 +1,141 @@

+from typing import Any, Dict, List, Optional, Tuple

+

+import numpy as np

+from torch import Tensor

+from torch.nn import Module, Parameter

+

+from colossalai.lazy import LazyTensor

+from colossalai.pipeline.stage_manager import PipelineStageManager

+

+

+class Policy:

+

+ def __init__(self, stage_manager: PipelineStageManager) -> None:

+ self.stage_manager = stage_manager

+

+ def setup_model(self, module: Module) -> Tuple[Dict[str, Parameter], Dict[str, Tensor]]:

+ """Setup model for pipeline parallel

+

+ Args:

+ module (Module): Module to be setup

+

+ Returns:

+ Tuple[Dict[str, Parameter], Dict[str, Tensor]]: Hold parameters and buffers

+ """

+ hold_params = set()

+ hold_buffers = set()

+

+ def init_layer(layer: Module):

+ for p in layer.parameters():

+ if isinstance(p, LazyTensor):

+ p.materialize()

+ p.data = p.cuda()

+ hold_params.add(p)

+ for b in layer.buffers():

+ if isinstance(b, LazyTensor):

+ b.materialize()

+ b.data = b.cuda()

+ hold_buffers.add(b)

+

+ hold_layers = self.get_hold_layers(module)

+

+ for layer in hold_layers:

+ init_layer(layer)

+

+ hold_params_dict = {}

+ hold_buffers_dict = {}

+

+ # release other tensors

+ for n, p in module.named_parameters():

+ if p in hold_params:

+ hold_params_dict[n] = p

+ else:

+ if isinstance(p, LazyTensor):

+ p.materialize()

+ p.data = p.cuda()

+ p.storage().resize_(0)

+ for n, b in module.named_buffers():

+ if b in hold_buffers:

+ hold_buffers_dict[n] = b

+ else:

+ if isinstance(b, LazyTensor):

+ b.materialize()

+ b.data = b.cuda()

+ # FIXME(ver217): use meta tensor may be better

+ b.storage().resize_(0)

+ return hold_params_dict, hold_buffers_dict

+

+ def replace_forward(self, module: Module) -> None:

+ """Replace module forward in place. This method should be implemented by subclass. The output of internal layers must be a dict

+

+ Args:

+ module (Module): _description_

+ """

+ raise NotImplementedError

+

+ def get_hold_layers(self, module: Module) -> List[Module]:

+ """Get layers that should be hold in current stage. This method should be implemented by subclass.

+

+ Args:

+ module (Module): Module to be setup

+

+ Returns:

+ List[Module]: List of layers that should be hold in current stage

+ """

+ raise NotImplementedError

+

+ def get_shared_params(self, module: Module) -> List[Dict[int, Tensor]]:

+ """Get parameters that should be shared across stages. This method should be implemented by subclass.

+

+ Args:

+ module (Module): Module to be setup

+

+ Returns:

+ List[Module]: List of parameters that should be shared across stages. E.g. [{0: module.model.embed_tokens.weight, 3: module.lm_head.weight}]

+ """

+ raise NotImplementedError

+

+ def parallelize_model(self,

+ module: Module) -> Tuple[Dict[str, Parameter], Dict[str, Tensor], List[Dict[int, Tensor]]]:

+ """Parallelize model for pipeline parallel

+

+ Args:

+ module (Module): Module to be setup

+

+ Returns:

+ Tuple[Dict[str, Parameter], Dict[str, Tensor], List[Dict[int, Tensor]]]: Hold parameters, buffers and shared parameters

+ """

+ hold_params, hold_buffers = self.setup_model(module)

+ self.replace_forward(module)

+ shared_params = self.get_shared_params(module)

+ return hold_params, hold_buffers, shared_params

+

+ @staticmethod

+ def distribute_layers(num_layers: int, num_stages: int) -> List[int]:

+ """

+ divide layers into stages

+ """

+ quotient = num_layers // num_stages

+ remainder = num_layers % num_stages

+

+ # calculate the num_layers per stage

+ layers_per_stage = [quotient] * num_stages

+

+ # deal with the rest layers

+ if remainder > 0:

+ start_position = num_layers // 2 - remainder // 2

+ for i in range(start_position, start_position + remainder):

+ layers_per_stage[i] += 1

+ return layers_per_stage

+

+ @staticmethod

+ def get_stage_index(layers_per_stage: List[int], stage: int) -> List[int]:

+ """

+ get the start index and end index of layers for each stage.

+ """

+ num_layers_per_stage_accumulated = np.insert(np.cumsum(layers_per_stage), 0, 0)

+

+ start_idx = num_layers_per_stage_accumulated[stage]

+ end_idx = num_layers_per_stage_accumulated[stage + 1]

+

+ return [start_idx, end_idx]

diff --git a/colossalai/pipeline/policy/bert.py b/colossalai/pipeline/policy/bert.py

new file mode 100644

index 000000000000..abce504e9d61

--- /dev/null

+++ b/colossalai/pipeline/policy/bert.py

@@ -0,0 +1,523 @@

+from functools import partial

+from types import MethodType

+from typing import Dict, List, Optional, Tuple, Union

+

+import numpy as np

+import torch

+from torch import Tensor

+from torch.nn import CrossEntropyLoss, Module

+from transformers.modeling_outputs import (

+ BaseModelOutputWithPast,

+ BaseModelOutputWithPastAndCrossAttentions,

+ BaseModelOutputWithPoolingAndCrossAttentions,

+ CausalLMOutputWithCrossAttentions,

+)

+from transformers.models.bert.modeling_bert import (

+ BertForPreTraining,

+ BertForPreTrainingOutput,

+ BertLMHeadModel,

+ BertModel,

+)

+from transformers.utils import ModelOutput, logging

+

+from colossalai.pipeline.stage_manager import PipelineStageManager

+

+from .base import Policy

+

+logger = logging.get_logger(__name__)

+

+

+def bert_model_forward(

+ self: BertModel,

+ input_ids: Optional[torch.Tensor] = None,

+ attention_mask: Optional[torch.Tensor] = None,

+ token_type_ids: Optional[torch.Tensor] = None,

+ position_ids: Optional[torch.Tensor] = None,

+ head_mask: Optional[torch.Tensor] = None,

+ inputs_embeds: Optional[torch.Tensor] = None,

+ encoder_hidden_states: Optional[torch.Tensor] = None,

+ encoder_attention_mask: Optional[torch.Tensor] = None,

+ past_key_values: Optional[List[torch.FloatTensor]] = None,

+ # labels: Optional[torch.LongTensor] = None,

+ use_cache: Optional[bool] = None,

+ output_attentions: Optional[bool] = None,

+ output_hidden_states: Optional[bool] = None,

+ return_dict: Optional[bool] = None,

+ stage_manager: Optional[PipelineStageManager] = None,

+ hidden_states: Optional[torch.FloatTensor] = None, # this is from the previous stage

+):

+ # TODO: add explaination of the output here.

+ r"""

+ encoder_hidden_states (`torch.FloatTensor` of shape `(batch_size, sequence_length, hidden_size)`, *optional*):

+ Sequence of hidden-states at the output of the last layer of the encoder. Used in the cross-attention if

+ the model is configured as a decoder.

+ encoder_attention_mask (`torch.FloatTensor` of shape `(batch_size, sequence_length)`, *optional*):

+ Mask to avoid performing attention on the padding token indices of the encoder input. This mask is used in

+ the cross-attention if the model is configured as a decoder. Mask values selected in `[0, 1]`:

+

+ - 1 for tokens that are **not masked**,

+ - 0 for tokens that are **masked**.

+ past_key_values (`tuple(tuple(torch.FloatTensor))` of length `config.n_layers` with each tuple having 4 tensors of shape `(batch_size, num_heads, sequence_length - 1, embed_size_per_head)`):

+ Contains precomputed key and value hidden states of the attention blocks. Can be used to speed up decoding.

+

+ If `past_key_values` are used, the user can optionally input only the last `decoder_input_ids` (those that

+ don't have their past key value states given to this model) of shape `(batch_size, 1)` instead of all

+ `decoder_input_ids` of shape `(batch_size, sequence_length)`.

+ use_cache (`bool`, *optional*):

+ If set to `True`, `past_key_values` key value states are returned and can be used to speed up decoding (see

+ `past_key_values`).

+ """

+ # debugging

+ # preprocess:

+ output_attentions = output_attentions if output_attentions is not None else self.config.output_attentions

+ output_hidden_states = (output_hidden_states

+ if output_hidden_states is not None else self.config.output_hidden_states)

+ return_dict = return_dict if return_dict is not None else self.config.use_return_dict

+

+ if self.config.is_decoder:

+ use_cache = use_cache if use_cache is not None else self.config.use_cache

+ else:

+ use_cache = False

+

+ if stage_manager.is_first_stage():

+ if input_ids is not None and inputs_embeds is not None:

+ raise ValueError("You cannot specify both input_ids and inputs_embeds at the same time")

+ elif input_ids is not None:

+ input_shape = input_ids.size()

+ elif inputs_embeds is not None:

+ input_shape = inputs_embeds.size()[:-1]

+ else:

+ raise ValueError("You have to specify either input_ids or inputs_embeds")

+ batch_size, seq_length = input_shape

+ device = input_ids.device if input_ids is not None else inputs_embeds.device

+ else:

+ input_shape = hidden_states.size()[:-1]

+ batch_size, seq_length = input_shape

+ device = hidden_states.device

+

+ # TODO: left the recording kv-value tensors as () or None type, this feature may be added in the future.

+ if output_attentions:

+ logger.warning_once('output_attentions=True is not supported for pipeline models at the moment.')

+ output_attentions = False

+ if output_hidden_states:

+ logger.warning_once('output_hidden_states=True is not supported for pipeline models at the moment.')

+ output_hidden_states = False

+ if use_cache:

+ logger.warning_once('use_cache=True is not supported for pipeline models at the moment.')

+ use_cache = False

+

+ # past_key_values_length

+ past_key_values_length = past_key_values[0][0].shape[2] if past_key_values is not None else 0

+

+ if attention_mask is None:

+ attention_mask = torch.ones(((batch_size, seq_length + past_key_values_length)), device=device)

+

+ if token_type_ids is None:

+ if hasattr(self.embeddings, "token_type_ids"):

+ buffered_token_type_ids = self.embeddings.token_type_ids[:, :seq_length]

+ buffered_token_type_ids_expanded = buffered_token_type_ids.expand(batch_size, seq_length)

+ token_type_ids = buffered_token_type_ids_expanded

+ else:

+ token_type_ids = torch.zeros(input_shape, dtype=torch.long, device=device)

+

+ # We can provide a self-attention mask of dimensions [batch_size, from_seq_length, to_seq_length]

+ # ourselves in which case we just need to make it broadcastable to all heads.

+ extended_attention_mask: torch.Tensor = self.get_extended_attention_mask(attention_mask, input_shape)

+ attention_mask = extended_attention_mask

+ # If a 2D or 3D attention mask is provided for the cross-attention

+ # we need to make broadcastable to [batch_size, num_heads, seq_length, seq_length]

+ if self.config.is_decoder and encoder_hidden_states is not None:

+ encoder_batch_size, encoder_sequence_length, _ = encoder_hidden_states.size()

+ encoder_hidden_shape = (encoder_batch_size, encoder_sequence_length)

+ if encoder_attention_mask is None:

+ encoder_attention_mask = torch.ones(encoder_hidden_shape, device=device)

+ encoder_extended_attention_mask = self.invert_attention_mask(encoder_attention_mask)

+ else:

+ encoder_extended_attention_mask = None

+

+ # Prepare head mask if needed

+ # 1.0 in head_mask indicate we keep the head

+ # attention_probs has shape bsz x n_heads x N x N

+ # input head_mask has shape [num_heads] or [num_hidden_layers x num_heads]

+ # and head_mask is converted to shape [num_hidden_layers x batch x num_heads x seq_length x seq_length]

+ head_mask = self.get_head_mask(head_mask, self.config.num_hidden_layers)

+ hidden_states = hidden_states if hidden_states is not None else None

+

+ if stage_manager.is_first_stage():

+ hidden_states = self.embeddings(

+ input_ids=input_ids,

+ position_ids=position_ids,

+ token_type_ids=token_type_ids,

+ inputs_embeds=inputs_embeds,

+ past_key_values_length=past_key_values_length,

+ )

+

+ # inherit from bert_layer,this should be changed when we add the feature to record hidden_states

+ all_hidden_states = () if output_hidden_states else None

+ all_self_attentions = () if output_attentions else None

+ all_cross_attentions = () if output_attentions and self.config.add_cross_attention else None

+

+ if self.encoder.gradient_checkpointing and self.encoder.training:

+ if use_cache:

+ logger.warning_once(

+ "`use_cache=True` is incompatible with gradient checkpointing. Setting `use_cache=False`...")

+ use_cache = False

+ next_decoder_cache = () if use_cache else None

+

+ # calculate the num_layers

+ num_layers_per_stage = len(self.encoder.layer) // stage_manager.num_stages

+ start_layer = stage_manager.stage * num_layers_per_stage

+ end_layer = (stage_manager.stage + 1) * num_layers_per_stage

+

+ # layer_outputs

+ layer_outputs = hidden_states if hidden_states is not None else None

+ for idx, encoder_layer in enumerate(self.encoder.layer[start_layer:end_layer], start=start_layer):

+ if stage_manager.is_first_stage() and idx == 0:

+ encoder_attention_mask = encoder_extended_attention_mask

+

+ if output_hidden_states:

+ all_hidden_states = all_hidden_states + (hidden_states,)

+

+ layer_head_mask = head_mask[idx] if head_mask is not None else None

+ past_key_value = past_key_values[idx] if past_key_values is not None else None

+

+ if self.encoder.gradient_checkpointing and self.encoder.training:

+

+ def create_custom_forward(module):

+

+ def custom_forward(*inputs):

+ return module(*inputs, past_key_value, output_attentions)

+

+ return custom_forward

+

+ layer_outputs = torch.utils.checkpoint.checkpoint(

+ create_custom_forward(encoder_layer),

+ hidden_states,

+ attention_mask,

+ layer_head_mask,

+ encoder_hidden_states,

+ encoder_attention_mask,

+ )

+ else:

+ layer_outputs = encoder_layer(

+ hidden_states,

+ attention_mask,

+ layer_head_mask,

+ encoder_hidden_states,

+ encoder_attention_mask,

+ past_key_value,

+ output_attentions,

+ )

+ hidden_states = layer_outputs[0]

+ if use_cache:

+ next_decoder_cache += (layer_outputs[-1],)

+ if output_attentions:

+ all_self_attentions = all_self_attentions + (layer_outputs[1],)

+ if self.config.add_cross_attention:

+ all_cross_attentions = all_cross_attentions + \

+ (layer_outputs[2],)

+

+ if output_hidden_states:

+ all_hidden_states = all_hidden_states + (hidden_states,)

+

+ # end of a stage loop

+ sequence_output = layer_outputs[0] if layer_outputs is not None else None

+

+ if stage_manager.is_last_stage():

+ pooled_output = self.pooler(sequence_output) if self.pooler is not None else None

+ if not return_dict:

+ return (sequence_output, pooled_output) + layer_outputs[1:]

+ # return dict is not supported at this moment

+ else:

+ return BaseModelOutputWithPastAndCrossAttentions(

+ last_hidden_state=hidden_states,

+ past_key_values=next_decoder_cache,

+ hidden_states=all_hidden_states,

+ attentions=all_self_attentions,

+ cross_attentions=all_cross_attentions,

+ )

+

+ # output of non-first and non-last stages: must be a dict

+ else:

+ # intermediate stage always return dict

+ return {

+ 'hidden_states': hidden_states,

+ }

+

+

+# The layer partition policy for bertmodel

+class BertModelPolicy(Policy):

+

+ def __init__(

+ self,

+ stage_manager: PipelineStageManager,

+ num_layers: int,

+ ):

+ super().__init__(stage_manager=stage_manager)

+ self.stage_manager = stage_manager

+ self.layers_per_stage = self.distribute_layers(num_layers, stage_manager.num_stages)

+

+ def get_hold_layers(self, module: BertModel) -> List[Module]:

+ """

+ get pipeline layers for current stage

+ """

+ hold_layers = []

+ if self.stage_manager.is_first_stage():

+ hold_layers.append(module.embeddings)

+ start_idx, end_idx = self.get_stage_index(self.layers_per_stage, self.stage_manager.stage)

+ hold_layers.extend(module.encoder.layer[start_idx:end_idx])

+ if self.stage_manager.is_last_stage():

+ hold_layers.append(module.pooler)

+

+ return hold_layers

+

+ def get_shared_params(self, module: BertModel) -> List[Dict[int, Tensor]]:

+ '''no shared params in bertmodel'''

+ return []

+

+ def replace_forward(self, module: Module) -> None:

+ module.forward = MethodType(partial(bert_model_forward, stage_manager=self.stage_manager), module)

+

+

+def bert_for_pretraining_forward(

+ self: BertForPreTraining,

+ input_ids: Optional[torch.Tensor] = None,

+ attention_mask: Optional[torch.Tensor] = None,

+ token_type_ids: Optional[torch.Tensor] = None,

+ position_ids: Optional[torch.Tensor] = None,

+ head_mask: Optional[torch.Tensor] = None,

+ inputs_embeds: Optional[torch.Tensor] = None,

+ labels: Optional[torch.Tensor] = None,

+ next_sentence_label: Optional[torch.Tensor] = None,

+ output_attentions: Optional[bool] = None,

+ output_hidden_states: Optional[bool] = None,

+ return_dict: Optional[bool] = None,

+ hidden_states: Optional[torch.FloatTensor] = None,

+ stage_manager: Optional[PipelineStageManager] = None,

+):

+ return_dict = return_dict if return_dict is not None else self.config.use_return_dict

+ # TODO: left the recording kv-value tensors as () or None type, this feature may be added in the future.

+ if output_attentions:

+ logger.warning_once('output_attentions=True is not supported for pipeline models at the moment.')

+ output_attentions = False

+ if output_hidden_states:

+ logger.warning_once('output_hidden_states=True is not supported for pipeline models at the moment.')

+ output_hidden_states = False

+ if return_dict:

+ logger.warning_once('return_dict is not supported for pipeline models at the moment')

+ return_dict = False

+

+ outputs = bert_model_forward(self.bert,

+ input_ids,

+ attention_mask=attention_mask,

+ token_type_ids=token_type_ids,

+ position_ids=position_ids,

+ head_mask=head_mask,

+ inputs_embeds=inputs_embeds,

+ output_attentions=output_attentions,

+ output_hidden_states=output_hidden_states,

+ return_dict=return_dict,

+ stage_manager=stage_manager,

+ hidden_states=hidden_states if hidden_states is not None else None)

+ past_key_values = None

+ all_hidden_states = None

+ all_self_attentions = None

+ all_cross_attentions = None

+ if stage_manager.is_last_stage():

+ sequence_output, pooled_output = outputs[:2]

+ prediction_scores, seq_relationship_score = self.cls(sequence_output, pooled_output)

+ # the last stage for pretraining model

+ total_loss = None

+ if labels is not None and next_sentence_label is not None:

+ loss_fct = CrossEntropyLoss()

+ masked_lm_loss = loss_fct(prediction_scores.view(-1, self.config.vocab_size), labels.view(-1))

+ next_sentence_loss = loss_fct(seq_relationship_score.view(-1, 2), next_sentence_label.view(-1))

+ total_loss = masked_lm_loss + next_sentence_loss

+

+ if not return_dict:

+ output = (prediction_scores, seq_relationship_score) + outputs[2:]

+ return ((total_loss,) + output) if total_loss is not None else output

+

+ return BertForPreTrainingOutput(

+ loss=total_loss,

+ prediction_logits=prediction_scores,

+ seq_relationship_logits=seq_relationship_score,

+ hidden_states=outputs.hidden_states,

+ attentions=outputs.attentions,

+ )

+ else:

+ hidden_states = outputs.get('hidden_states')

+

+ # intermediate stage always return dict

+ return {

+ 'hidden_states': hidden_states,

+ }

+

+

+class BertForPreTrainingPolicy(Policy):

+

+ def __init__(self, stage_manager: PipelineStageManager, num_layers: int):

+ super().__init__(stage_manager=stage_manager)

+ self.stage_manager = stage_manager

+ self.layers_per_stage = self.distribute_layers(num_layers, stage_manager.num_stages)

+

+ def get_hold_layers(self, module: BertForPreTraining) -> List[Module]:

+ """

+ get pipeline layers for current stage

+ """

+ hold_layers = []

+ if self.stage_manager.is_first_stage():

+ hold_layers.append(module.bert.embeddings)

+

+ start_idx, end_idx = self.get_stage_index(self.layers_per_stage, self.stage_manager.stage)

+ hold_layers.extend(module.bert.encoder.layer[start_idx:end_idx])

+

+ if self.stage_manager.is_last_stage():

+ hold_layers.append(module.bert.pooler)

+ hold_layers.append(module.cls)

+

+ return hold_layers

+

+ def get_shared_params(self, module: BertForPreTraining) -> List[Dict[int, Tensor]]:

+ '''no shared params in bertmodel'''

+ return []

+

+ def replace_forward(self, module: Module) -> None:

+ module.forward = MethodType(partial(bert_for_pretraining_forward, stage_manager=self.stage_manager),

+ module.forward)

+

+

+def bert_lmhead_forward(self: BertLMHeadModel,

+ input_ids: Optional[torch.Tensor] = None,

+ attention_mask: Optional[torch.Tensor] = None,

+ token_type_ids: Optional[torch.Tensor] = None,

+ position_ids: Optional[torch.Tensor] = None,

+ head_mask: Optional[torch.Tensor] = None,

+ inputs_embeds: Optional[torch.Tensor] = None,

+ encoder_hidden_states: Optional[torch.Tensor] = None,

+ encoder_attention_mask: Optional[torch.Tensor] = None,

+ labels: Optional[torch.Tensor] = None,

+ past_key_values: Optional[List[torch.Tensor]] = None,

+ use_cache: Optional[bool] = None,

+ output_attentions: Optional[bool] = None,

+ output_hidden_states: Optional[bool] = None,

+ return_dict: Optional[bool] = None,

+ hidden_states: Optional[torch.FloatTensor] = None,

+ stage_manager: Optional[PipelineStageManager] = None):

+ r"""

+ encoder_hidden_states (`torch.FloatTensor` of shape `(batch_size, sequence_length, hidden_size)`, *optional*):

+ Sequence of hidden-states at the output of the last layer of the encoder. Used in the cross-attention if

+ the model is configured as a decoder.

+ encoder_attention_mask (`torch.FloatTensor` of shape `(batch_size, sequence_length)`, *optional*):

+ Mask to avoid performing attention on the padding token indices of the encoder input. This mask is used in

+ the cross-attention if the model is configured as a decoder. Mask values selected in `[0, 1]`:

+

+ - 1 for tokens that are **not masked**,

+ - 0 for tokens that are **masked**.

+ labels (`torch.LongTensor` of shape `(batch_size, sequence_length)`, *optional*):

+ Labels for computing the left-to-right language modeling loss (next word prediction). Indices should be in

+ `[-100, 0, ..., config.vocab_size]` (see `input_ids` docstring) Tokens with indices set to `-100` are

+ ignored (masked), the loss is only computed for the tokens with labels n `[0, ..., config.vocab_size]`

+ past_key_values (`tuple(tuple(torch.FloatTensor))` of length `config.n_layers` with each tuple having 4 tensors of shape `(batch_size, num_heads, sequence_length - 1, embed_size_per_head)`):

+ Contains precomputed key and value hidden states of the attention blocks. Can be used to speed up decoding.

+

+ If `past_key_values` are used, the user can optionally input only the last `decoder_input_ids` (those that

+ don't have their past key value states given to this model) of shape `(batch_size, 1)` instead of all

+ `decoder_input_ids` of shape `(batch_size, sequence_length)`.

+ use_cache (`bool`, *optional*):

+ If set to `True`, `past_key_values` key value states are returned and can be used to speed up decoding (see

+ `past_key_values`).

+ """

+ return_dict = return_dict if return_dict is not None else self.config.use_return_dict

+

+ if labels is not None:

+ use_cache = False

+ if output_attentions:

+ logger.warning_once('output_attentions=True is not supported for pipeline models at the moment.')

+ output_attentions = False

+ if output_hidden_states:

+ logger.warning_once('output_hidden_states=True is not supported for pipeline models at the moment.')

+ output_hidden_states = False

+ if return_dict:

+ logger.warning_once('return_dict is not supported for pipeline models at the moment')

+ return_dict = False

+

+ outputs = bert_model_forward(self.bert,

+ input_ids,

+ attention_mask=attention_mask,

+ token_type_ids=token_type_ids,

+ position_ids=position_ids,

+ head_mask=head_mask,

+ inputs_embeds=inputs_embeds,

+ encoder_hidden_states=encoder_hidden_states,

+ encoder_attention_mask=encoder_attention_mask,

+ past_key_values=past_key_values,

+ use_cache=use_cache,

+ output_attentions=output_attentions,

+ output_hidden_states=output_hidden_states,

+ return_dict=return_dict,

+ stage_manager=stage_manager,

+ hidden_states=hidden_states if hidden_states is not None else None)

+ past_key_values = None

+ all_hidden_states = None

+ all_self_attentions = None

+ all_cross_attentions = None

+

+ if stage_manager.is_last_stage():

+ sequence_output = outputs[0]

+ prediction_scores = self.cls(sequence_output)

+

+ lm_loss = None

+ if labels is not None:

+ # we are doing next-token prediction; shift prediction scores and input ids by one

+ shifted_prediction_scores = prediction_scores[:, :-1, :].contiguous()

+ labels = labels[:, 1:].contiguous()

+ loss_fct = CrossEntropyLoss()

+ lm_loss = loss_fct(shifted_prediction_scores.view(-1, self.config.vocab_size), labels.view(-1))

+

+ if not return_dict:

+ output = (prediction_scores,) + outputs[2:]

+ return ((lm_loss,) + output) if lm_loss is not None else output

+

+ return CausalLMOutputWithCrossAttentions(

+ loss=lm_loss,

+ logits=prediction_scores,

+ past_key_values=outputs.past_key_values,

+ hidden_states=outputs.hidden_states,

+ attentions=outputs.attentions,

+ cross_attentions=outputs.cross_attentions,

+ )

+ else:

+ hidden_states = outputs.get('hidden_states')

+ # intermediate stage always return dict

+ return {'hidden_states': hidden_states}

+

+

+class BertLMHeadModelPolicy(Policy):

+

+ def __init__(self, stage_manager: PipelineStageManager, num_layers: int):

+ super().__init__(stage_manager=stage_manager)

+ self.stage_manager = stage_manager

+ self.layers_per_stage = self.distribute_layers(num_layers, stage_manager.num_stages)

+

+ def get_hold_layers(self, module: BertLMHeadModel) -> List[Module]:

+ """

+ get pipeline layers for current stage

+ """

+ hold_layers = []

+ if self.stage_manager.is_first_stage():

+ hold_layers.append(module.bert.embeddings)

+ start_idx, end_idx = self.get_stage_index(self.layers_per_stage, self.stage_manager.stage)

+ hold_layers.extend(module.bert.encoder.layer[start_idx:end_idx])

+ if self.stage_manager.is_last_stage():

+ hold_layers.append(module.bert.pooler)

+ hold_layers.append(module.cls)

+

+ return hold_layers

+

+ def get_shared_params(self, module: BertLMHeadModel) -> List[Dict[int, Tensor]]:

+ '''no shared params in bertmodel'''

+ return []

+

+ def replace_forward(self, module: Module) -> None:

+ module.forward = MethodType(partial(bert_lmhead_forward, stage_manager=self.stage_manager), module)

diff --git a/colossalai/pipeline/policy/bloom.py b/colossalai/pipeline/policy/bloom.py

new file mode 100644

index 000000000000..cf5592ea2f4e

--- /dev/null

+++ b/colossalai/pipeline/policy/bloom.py

@@ -0,0 +1,220 @@

+import warnings

+from functools import partial

+from types import MethodType

+from typing import Dict, List, Optional, Tuple, Union

+

+import numpy as np

+import torch

+from torch import Tensor

+from torch.nn import CrossEntropyLoss, Module

+from transformers.modeling_outputs import BaseModelOutputWithPastAndCrossAttentions

+from transformers.models.bloom.modeling_bloom import BloomModel

+from transformers.utils import logging

+

+from colossalai.pipeline.stage_manager import PipelineStageManager

+

+from .base import Policy

+

+logger = logging.get_logger(__name__)

+

+

+def bloom_model_forward(

+ self: BloomModel,

+ input_ids: Optional[torch.LongTensor] = None,

+ past_key_values: Optional[Tuple[Tuple[torch.Tensor, torch.Tensor], ...]] = None,

+ attention_mask: Optional[torch.Tensor] = None,

+ head_mask: Optional[torch.LongTensor] = None,

+ inputs_embeds: Optional[torch.LongTensor] = None,

+ use_cache: Optional[bool] = None,

+ output_attentions: Optional[bool] = None,

+ output_hidden_states: Optional[bool] = None,

+ return_dict: Optional[bool] = None,

+ stage_manager: Optional[PipelineStageManager] = None,

+ hidden_states: Optional[torch.FloatTensor] = None,

+ **deprecated_arguments,

+) -> Union[Tuple[torch.Tensor, ...], BaseModelOutputWithPastAndCrossAttentions]:

+ if deprecated_arguments.pop("position_ids", False) is not False:

+ # `position_ids` could have been `torch.Tensor` or `None` so defaulting pop to `False` allows to detect if users were passing explicitly `None`

+ warnings.warn(

+ "`position_ids` have no functionality in BLOOM and will be removed in v5.0.0. You can safely ignore"

+ " passing `position_ids`.",

+ FutureWarning,

+ )

+ if len(deprecated_arguments) > 0:

+ raise ValueError(f"Got unexpected arguments: {deprecated_arguments}")

+

+ output_attentions = output_attentions if output_attentions is not None else self.config.output_attentions

+ output_hidden_states = (output_hidden_states

+ if output_hidden_states is not None else self.config.output_hidden_states)

+ use_cache = use_cache if use_cache is not None else self.config.use_cache

+ return_dict = return_dict if return_dict is not None else self.config.use_return_dict

+

+ # add warnings here

+ if output_attentions:

+ logger.warning_once('output_attentions=True is not supported for pipeline models at the moment.')

+ output_attentions = False

+ if output_hidden_states:

+ logger.warning_once('output_hidden_states=True is not supported for pipeline models at the moment.')

+ output_hidden_states = False

+ if use_cache:

+ logger.warning_once('use_cache=True is not supported for pipeline models at the moment.')

+ use_cache = False

+ # Prepare head mask if needed

+ # 1.0 in head_mask indicate we keep the head

+ # attention_probs has shape batch_size x num_heads x N x N

+

+ # head_mask has shape n_layer x batch x num_heads x N x N

+ head_mask = self.get_head_mask(head_mask, self.config.n_layer)

+

+ # case: First stage of training

+ if stage_manager.is_first_stage():

+ # check input_ids and inputs_embeds

+ if input_ids is not None and inputs_embeds is not None:

+ raise ValueError("You cannot specify both input_ids and inputs_embeds at the same time")

+ elif input_ids is not None:

+ batch_size, seq_length = input_ids.shape

+ elif inputs_embeds is not None:

+ batch_size, seq_length, _ = inputs_embeds.shape

+ else:

+ raise ValueError("You have to specify either input_ids or inputs_embeds")

+

+ if inputs_embeds is None:

+ inputs_embeds = self.word_embeddings(input_ids)

+

+ hidden_states = self.word_embeddings_layernorm(inputs_embeds)

+ # initialize in the first stage and then pass to the next stage

+ else:

+ input_shape = hidden_states.shape[:-1]

+ batch_size, seq_length = input_shape

+

+ # extra recording tensor should be generated in the first stage

+ presents = () if use_cache else None

+ all_self_attentions = () if output_attentions else None

+ all_hidden_states = () if output_hidden_states else None

+

+ if self.gradient_checkpointing and self.training:

+ if use_cache:

+ logger.warning_once(

+ "`use_cache=True` is incompatible with gradient checkpointing. Setting `use_cache=False`...")

+ use_cache = False

+

+ if past_key_values is None:

+ past_key_values = tuple([None] * len(self.h))

+ # Compute alibi tensor: check build_alibi_tensor documentation,build for every stage

+ seq_length_with_past = seq_length

+ past_key_values_length = 0

+ if past_key_values[0] is not None:

+ past_key_values_length = past_key_values[0][0].shape[2] # source_len

+ seq_length_with_past = seq_length_with_past + past_key_values_length

+ if attention_mask is None:

+ attention_mask = torch.ones((batch_size, seq_length_with_past), device=hidden_states.device)

+ else:

+ attention_mask = attention_mask.to(hidden_states.device)

+

+ alibi = self.build_alibi_tensor(attention_mask, self.num_heads, dtype=hidden_states.dtype)

+

+ # causal_mask is constructed every stage and its input is passed through different stages

+ causal_mask = self._prepare_attn_mask(

+ attention_mask,

+ input_shape=(batch_size, seq_length),

+ past_key_values_length=past_key_values_length,

+ )

+

+ # calculate the num_layers

+ num_layers_per_stage = len(self.h) // stage_manager.num_stages

+ start_layer = stage_manager.stage * num_layers_per_stage

+ end_layer = (stage_manager.stage + 1) * num_layers_per_stage

+

+ for i, (block, layer_past) in enumerate(zip(self.h[start_layer:end_layer], past_key_values[start_layer:end_layer])):

+ if output_hidden_states:

+ all_hidden_states = all_hidden_states + (hidden_states,)

+

+ if self.gradient_checkpointing and self.training:

+

+ def create_custom_forward(module):

+

+ def custom_forward(*inputs):

+ # None for past_key_value

+ return module(*inputs, use_cache=use_cache, output_attentions=output_attentions)

+

+ return custom_forward

+

+ outputs = torch.utils.checkpoint.checkpoint(

+ create_custom_forward(block),

+ hidden_states,

+ alibi,

+ causal_mask,

+ layer_past,

+ head_mask[i],

+ )

+ else:

+ outputs = block(

+ hidden_states,

+ layer_past=layer_past,

+ attention_mask=causal_mask,

+ head_mask=head_mask[i],

+ use_cache=use_cache,

+ output_attentions=output_attentions,

+ alibi=alibi,

+ )

+

+ hidden_states = outputs[0]

+

+ if use_cache is True:

+ presents = presents + (outputs[1],)

+

+ if output_attentions:

+ all_self_attentions = all_self_attentions + \

+ (outputs[2 if use_cache else 1],)

+

+ if stage_manager.is_last_stage():

+ # Add last hidden state

+ hidden_states = self.ln_f(hidden_states)

+

+ # TODO: deal with all_hidden_states, all_self_attentions, presents

+ if output_hidden_states:

+ all_hidden_states = all_hidden_states + (hidden_states,)

+

+ if not return_dict:

+ return tuple(v for v in [hidden_states, presents, all_hidden_states, all_self_attentions] if v is not None)

+

+ # attention_mask is not returned ; presents = past_key_values

+

+ return BaseModelOutputWithPastAndCrossAttentions(

+ last_hidden_state=hidden_states,

+ past_key_values=presents,

+ hidden_states=all_hidden_states,

+ attentions=all_self_attentions,

+ )

+

+

+class BloomModelPolicy(Policy):

+

+ def __init__(self, stage_manager: PipelineStageManager, num_layers: int, num_stages: int):

+ super().__init__(stage_manager=stage_manager)

+ self.stage_manager = stage_manager

+ self.layers_per_stage = self.distribute_layers(num_layers, num_stages)

+

+ def get_hold_layers(self, module: BloomModel) -> List[Module]:

+ """

+ get pipeline layers for current stage

+ """

+ hold_layers = []

+ if self.stage_manager.is_first_stage():

+ hold_layers.append(module.word_embeddings)

+ hold_layers.append(module.word_embeddings_layernorm)

+

+ start_idx, end_idx = self.get_stage_index(self.layers_per_stage, self.stage_manager.stage)

+ hold_layers.extend(module.h[start_idx:end_idx])

+

+ if self.stage_manager.is_last_stage():

+ hold_layers.append(module.ln_f)

+

+ return hold_layers

+

+ def get_shared_params(self, module: BloomModel) -> List[Dict[int, Tensor]]:

+ '''no shared params in bloommodel'''

+ pass

+

+ def replace_forward(self, module: Module) -> None:

+ module.forward = MethodType(partial(bloom_model_forward, stage_manager=self.stage_manager), module.model)

diff --git a/colossalai/pipeline/schedule/_utils.py b/colossalai/pipeline/schedule/_utils.py

index 045c86e40e63..3ed9239272f1 100644

--- a/colossalai/pipeline/schedule/_utils.py

+++ b/colossalai/pipeline/schedule/_utils.py

@@ -86,7 +86,7 @@ def retain_grad(x: Any) -> None:

Args:

x (Any): Object to be called.

"""

- if isinstance(x, torch.Tensor):

+ if isinstance(x, torch.Tensor) and x.requires_grad:

x.retain_grad()

diff --git a/colossalai/pipeline/schedule/one_f_one_b.py b/colossalai/pipeline/schedule/one_f_one_b.py

index d907d53edcde..ade3cf456fe3 100644

--- a/colossalai/pipeline/schedule/one_f_one_b.py

+++ b/colossalai/pipeline/schedule/one_f_one_b.py

@@ -107,8 +107,15 @@ def backward_step(self, optimizer: OptimizerWrapper, input_obj: Optional[dict],

if output_obj_grad is None:

optimizer.backward(output_obj)

else:

- for k, grad in output_obj_grad.items():

- optimizer.backward_by_grad(output_obj[k], grad)

+ if "backward_tensor_keys" not in output_obj:

+ for k, grad in output_obj_grad.items():

+ optimizer.backward_by_grad(output_obj[k], grad)

+ else:

+ for k, grad in output_obj_grad.items():

+ output_obj[k].grad = grad

+ for k in output_obj["backward_tensor_keys"]:

+ tensor_to_backward = output_obj[k]

+ optimizer.backward_by_grad(tensor_to_backward, tensor_to_backward.grad)

# Collect the grad of the input_obj.

input_obj_grad = None

diff --git a/colossalai/shardformer/README.md b/colossalai/shardformer/README.md

index 357e8ac3397e..1c11b4b85444 100644

--- a/colossalai/shardformer/README.md

+++ b/colossalai/shardformer/README.md

@@ -31,7 +31,7 @@

### Quick Start

-The sample API usage is given below:

+The sample API usage is given below(If you enable the use of flash attention, please install xformers.):

``` python

from colossalai.shardformer import ShardConfig, Shard

@@ -106,6 +106,20 @@ We will follow this roadmap to develop Shardformer:

- [ ] Multi-modal

- [x] SAM

- [x] BLIP-2

+- [ ] Flash Attention Support

+ - [ ] NLP

+ - [x] BERT

+ - [x] T5

+ - [x] LlaMa

+ - [x] GPT2

+ - [x] OPT

+ - [x] BLOOM

+ - [ ] GLM

+ - [ ] RoBERTa

+ - [ ] ALBERT

+ - [ ] ERNIE

+ - [ ] GPT Neo

+ - [ ] GPT-J

## 💡 API Design

@@ -378,11 +392,49 @@ pytest tests/test_shardformer

### System Performance

-To be added.

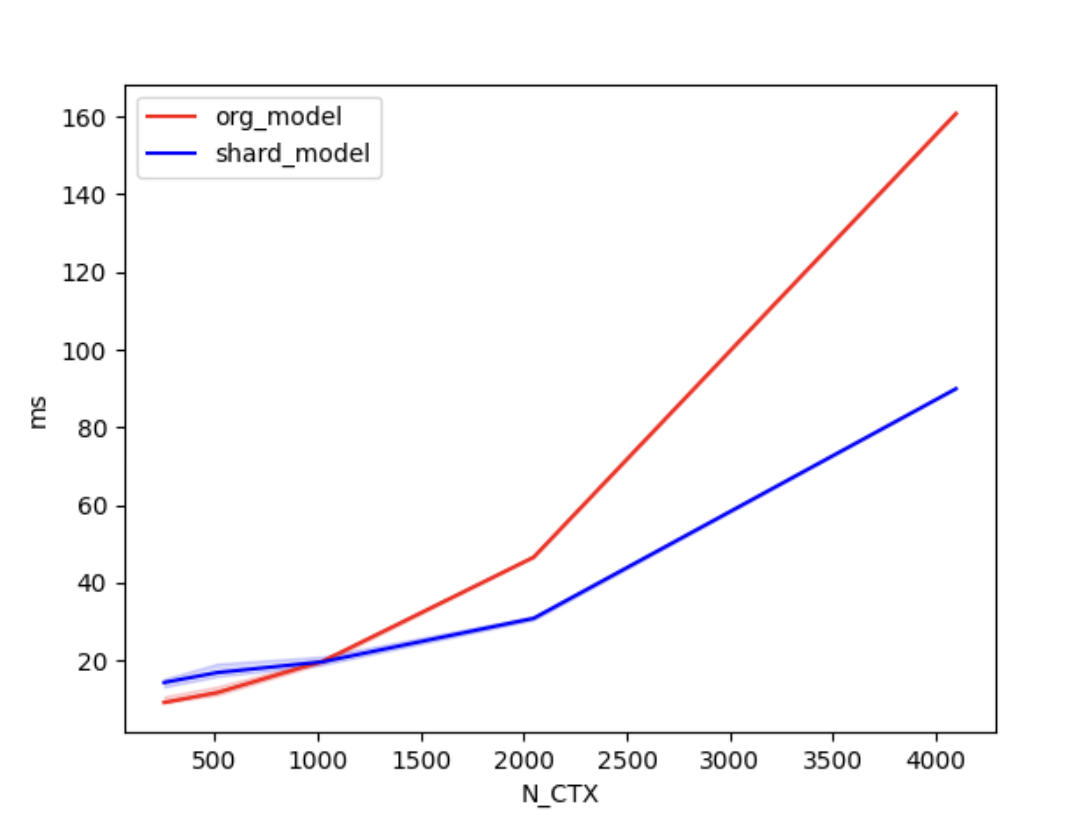

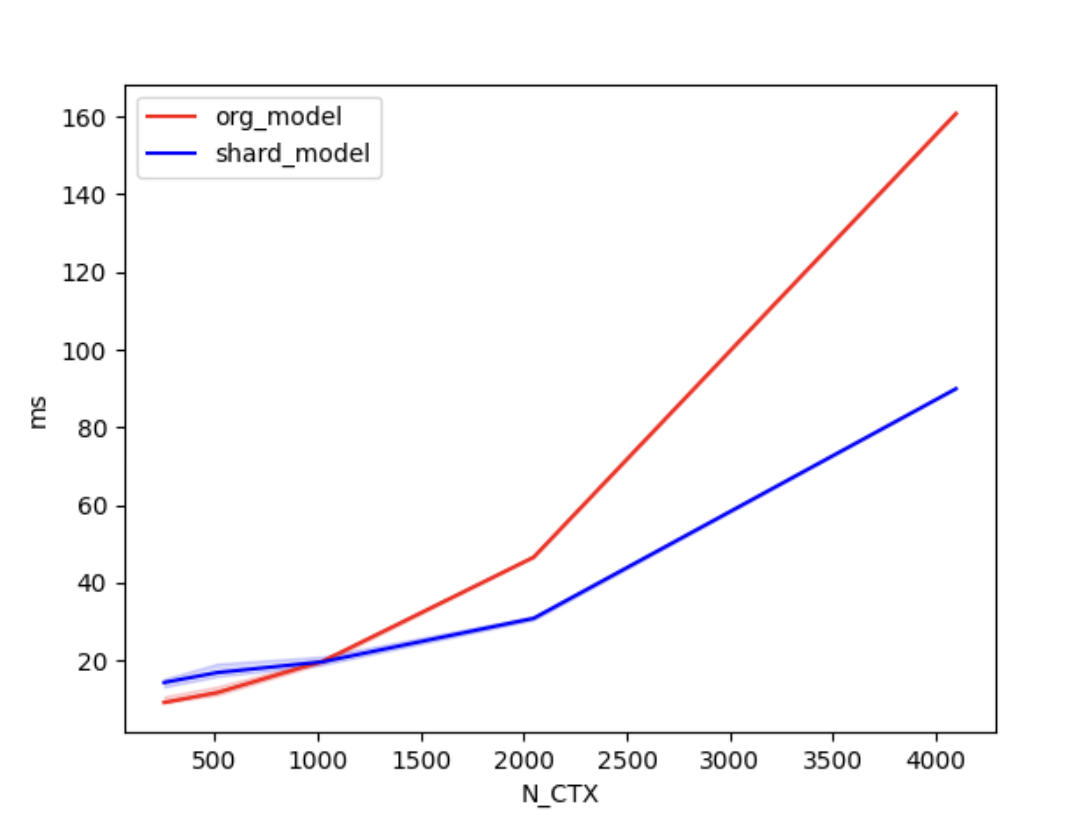

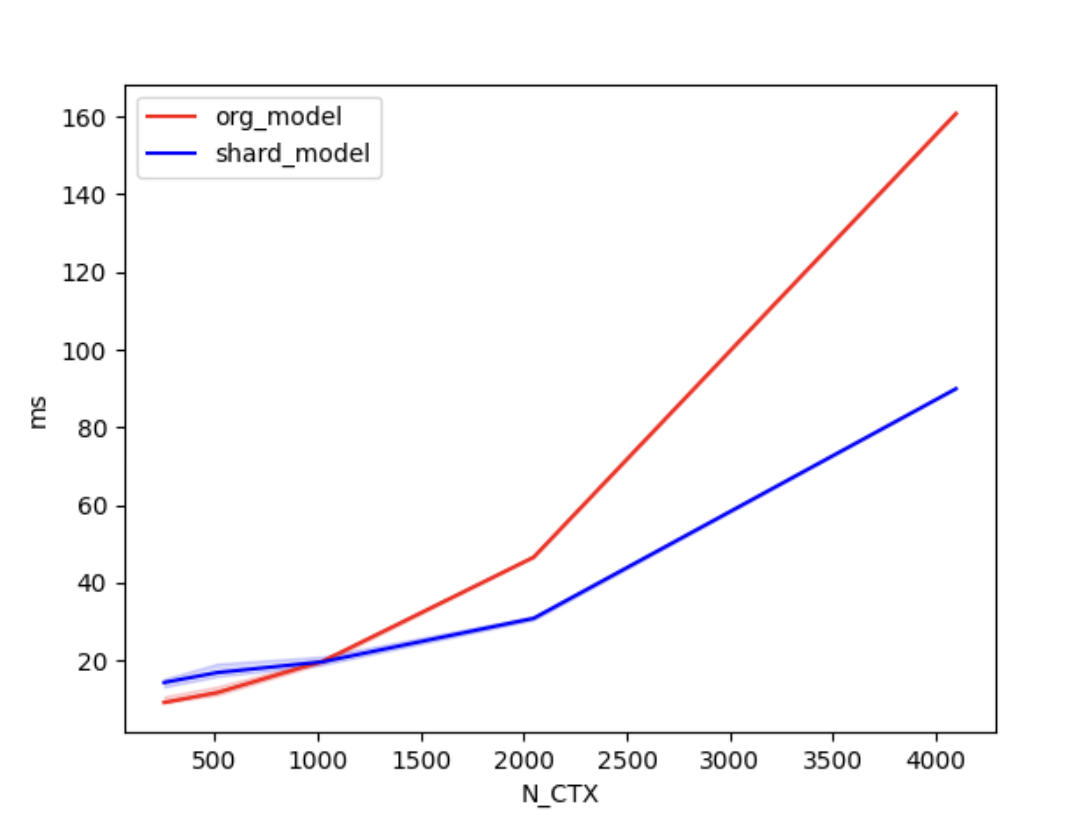

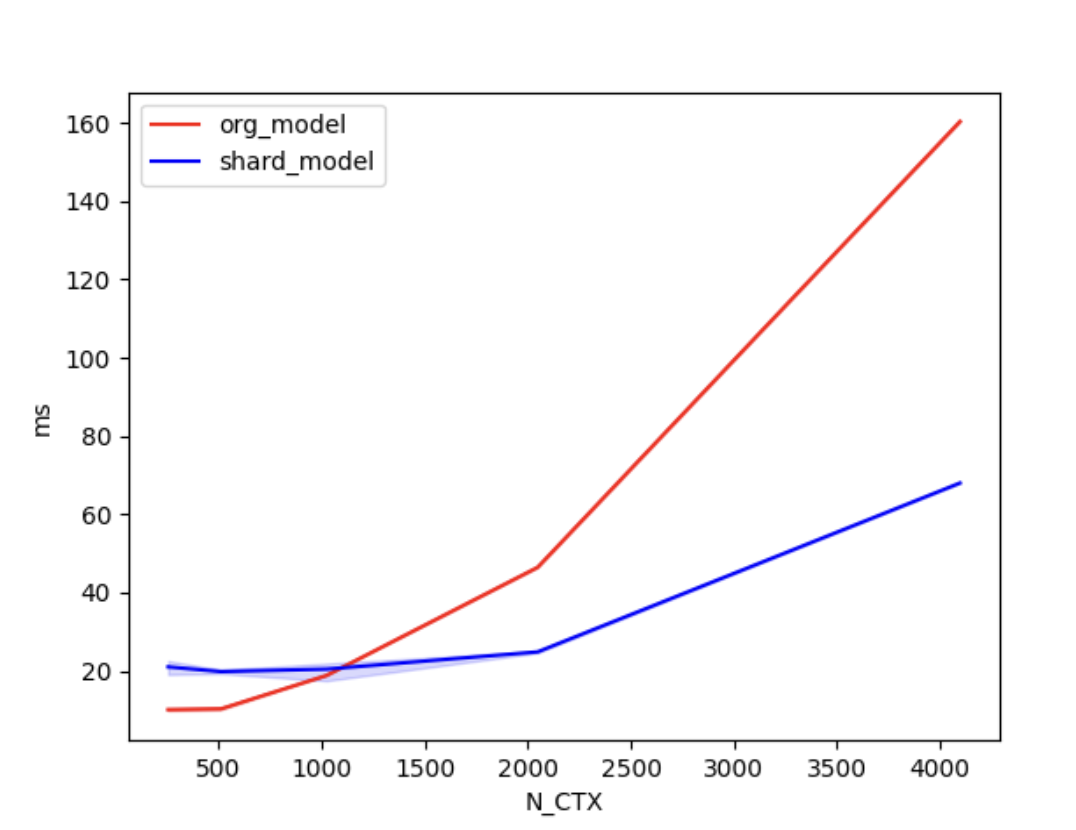

+We conducted [benchmark tests](./examples/performance_benchmark.py) to evaluate the performance improvement of Shardformer. We compared the training time between the original model and the shard model.

+

+We set the batch size to 4, the number of attention heads to 8, and the head dimension to 64. 'N_CTX' refers to the sequence length.

+

+In the case of using 2 GPUs, the training times are as follows.

+| N_CTX | org_model | shard_model |

+| :------: | :-----: | :-----: |

+| 256 | 11.2ms | 17.2ms |

+| 512 | 9.8ms | 19.5ms |

+| 1024 | 19.6ms | 18.9ms |

+| 2048 | 46.6ms | 30.8ms |

+| 4096 | 160.5ms | 90.4ms |

+

+

+

+  +

+

+

+

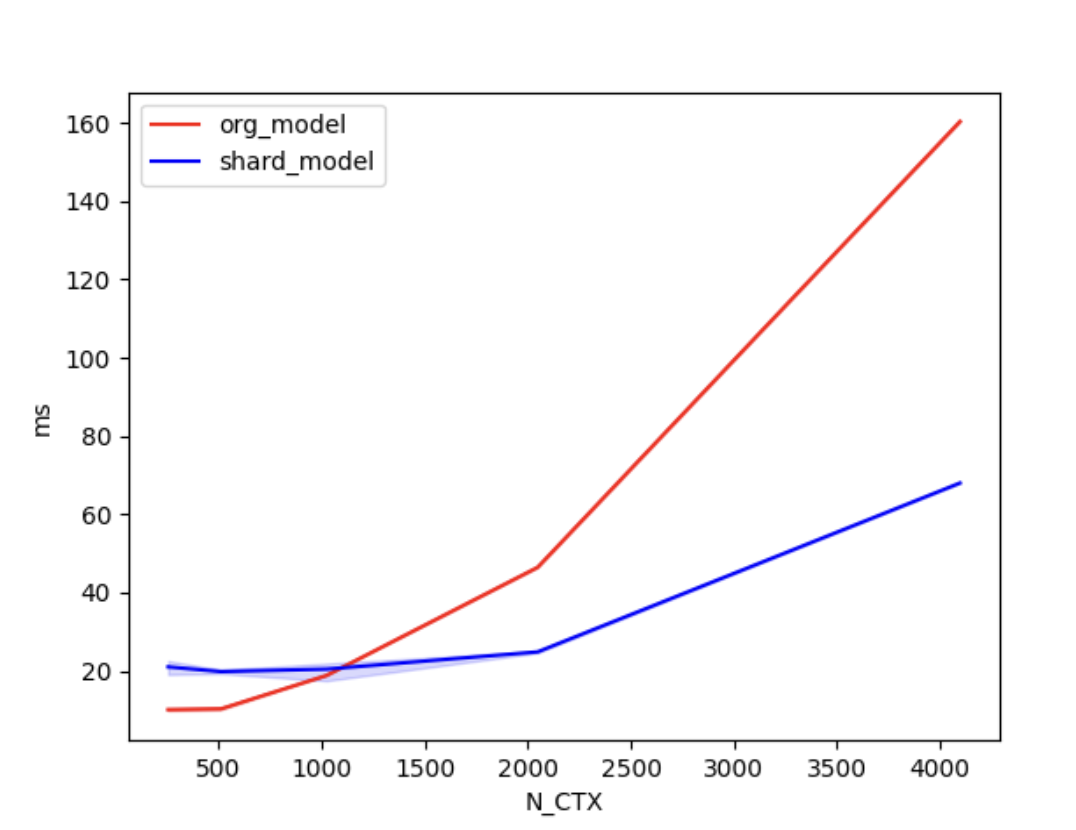

+In the case of using 4 GPUs, the training times are as follows.

+

+| N_CTX | org_model | shard_model |

+| :------: | :-----: | :-----: |

+| 256 | 10.0ms | 21.1ms |

+| 512 | 11.5ms | 20.2ms |

+| 1024 | 22.1ms | 20.6ms |

+| 2048 | 46.9ms | 24.8ms |

+| 4096 | 160.4ms | 68.0ms |

+

+

+

+

+  +

+

+

+

+

+As shown in the figures above, when the sequence length is around 1000 or greater, the parallel optimization of Shardformer for long sequences starts to become evident.

### Convergence

-To validate that training the model using shardformers does not impact its convergence. We [fine-tuned the BERT model](./examples/shardformer_benchmark.py) using both shardformer and non-shardformer approaches. We compared the accuracy, loss, F1 score of the training results.

+

+To validate that training the model using shardformers does not impact its convergence. We [fine-tuned the BERT model](./examples/convergence_benchmark.py) using both shardformer and non-shardformer approaches. We compared the accuracy, loss, F1 score of the training results.

| accuracy | f1 | loss | GPU number | model shard |

| :------: | :-----: | :-----: | :--------: | :---------: |

diff --git a/colossalai/shardformer/examples/shardformer_benchmark.py b/colossalai/shardformer/examples/convergence_benchmark.py

similarity index 100%

rename from colossalai/shardformer/examples/shardformer_benchmark.py

rename to colossalai/shardformer/examples/convergence_benchmark.py

diff --git a/colossalai/shardformer/examples/shardformer_benchmark.sh b/colossalai/shardformer/examples/convergence_benchmark.sh

similarity index 76%

rename from colossalai/shardformer/examples/shardformer_benchmark.sh

rename to colossalai/shardformer/examples/convergence_benchmark.sh

index f42b19a32d35..1c281abcda6d 100644

--- a/colossalai/shardformer/examples/shardformer_benchmark.sh

+++ b/colossalai/shardformer/examples/convergence_benchmark.sh

@@ -1,4 +1,4 @@

-torchrun --standalone --nproc_per_node=4 shardformer_benchmark.py \

+torchrun --standalone --nproc_per_node=4 convergence_benchmark.py \

--model "bert" \

--pretrain "bert-base-uncased" \

--max_epochs 1 \

diff --git a/colossalai/shardformer/examples/performance_benchmark.py b/colossalai/shardformer/examples/performance_benchmark.py

new file mode 100644

index 000000000000..9c7b76bcf0a6

--- /dev/null

+++ b/colossalai/shardformer/examples/performance_benchmark.py

@@ -0,0 +1,86 @@

+"""

+Shardformer Benchmark

+"""

+import torch

+import torch.distributed as dist

+import transformers

+import triton

+

+import colossalai

+from colossalai.shardformer import ShardConfig, ShardFormer

+

+

+def data_gen(batch_size, seq_length):

+ input_ids = torch.randint(0, seq_length, (batch_size, seq_length), dtype=torch.long)

+ attention_mask = torch.ones((batch_size, seq_length), dtype=torch.long)

+ return dict(input_ids=input_ids, attention_mask=attention_mask)

+

+

+def data_gen_for_sequence_classification(batch_size, seq_length):

+ # LM data gen

+ # the `labels` of LM is the token of the output, cause no padding, use `input_ids` as `labels`

+ data = data_gen(batch_size, seq_length)

+ data['labels'] = torch.ones((batch_size), dtype=torch.long)

+ return data

+

+

+MODEL_CONFIG = transformers.LlamaConfig(num_hidden_layers=4,

+ hidden_size=128,

+ intermediate_size=256,

+ num_attention_heads=4,

+ max_position_embeddings=128,

+ num_labels=16)

+BATCH, N_HEADS, N_CTX, D_HEAD = 4, 8, 4096, 64

+model_func = lambda: transformers.LlamaForSequenceClassification(MODEL_CONFIG)

+

+# vary seq length for fixed head and batch=4

+configs = [

+ triton.testing.Benchmark(x_names=['N_CTX'],

+ x_vals=[2**i for i in range(8, 13)],

+ line_arg='provider',

+ line_vals=['org_model', 'shard_model'],

+ line_names=['org_model', 'shard_model'],

+ styles=[('red', '-'), ('blue', '-')],

+ ylabel='ms',

+ plot_name=f'lama_for_sequence_classification-batch-{BATCH}',

+ args={

+ 'BATCH': BATCH,

+ 'dtype': torch.float16,

+ 'model_func': model_func

+ })

+]

+

+

+def train(model, data):

+ output = model(**data)

+ loss = output.logits.mean()

+ loss.backward()

+

+

+@triton.testing.perf_report(configs)

+def bench_shardformer(BATCH, N_CTX, provider, model_func, dtype=torch.float32, device="cuda"):

+ warmup = 10

+ rep = 100

+ # prepare data

+ data = data_gen_for_sequence_classification(BATCH, N_CTX)

+ data = {k: v.cuda() for k, v in data.items()}

+ model = model_func().to(device)

+ model.train()

+ if provider == "org_model":

+ fn = lambda: train(model, data)

+ ms = triton.testing.do_bench(fn, warmup=warmup, rep=rep)

+ return ms

+ if provider == "shard_model":

+ shard_config = ShardConfig(enable_fused_normalization=True, enable_tensor_parallelism=True)

+ shard_former = ShardFormer(shard_config=shard_config)

+ sharded_model = shard_former.optimize(model).cuda()

+ fn = lambda: train(sharded_model, data)

+ ms = triton.testing.do_bench(fn, warmup=warmup, rep=rep)

+ return ms

+

+

+# start benchmark, command:

+# torchrun --standalone --nproc_per_node=2 performance_benchmark.py

+if __name__ == "__main__":

+ colossalai.launch_from_torch({})

+ bench_shardformer.run(save_path='.', print_data=dist.get_rank() == 0)

diff --git a/colossalai/shardformer/layer/utils.py b/colossalai/shardformer/layer/utils.py

index f2ac6563c46f..09cb7bfe1407 100644

--- a/colossalai/shardformer/layer/utils.py

+++ b/colossalai/shardformer/layer/utils.py

@@ -122,6 +122,13 @@ def increment_index():

"""

Randomizer._INDEX += 1

+ @staticmethod

+ def reset_index():

+ """

+ Reset the index to zero.

+ """

+ Randomizer._INDEX = 0

+

@staticmethod

def is_randomizer_index_synchronized(process_group: ProcessGroup = None):

"""

diff --git a/colossalai/shardformer/modeling/bert.py b/colossalai/shardformer/modeling/bert.py

index 1b3c14d9d1c9..b9d4b5fda7af 100644

--- a/colossalai/shardformer/modeling/bert.py

+++ b/colossalai/shardformer/modeling/bert.py

@@ -1,5 +1,6 @@

+import math

import warnings

-from typing import Any, Dict, List, Optional, Tuple

+from typing import Dict, List, Optional, Tuple

import torch

from torch.nn import BCEWithLogitsLoss, CrossEntropyLoss, MSELoss

@@ -962,3 +963,138 @@ def bert_for_question_answering_forward(

else:

hidden_states = outputs.get('hidden_states')

return {'hidden_states': hidden_states}

+

+

+def get_bert_flash_attention_forward():

+

+ try:

+ from xformers.ops import memory_efficient_attention as me_attention

+ except:

+ raise ImportError("Error: xformers module is not installed. Please install it to use flash attention.")

+ from transformers.models.bert.modeling_bert import BertAttention

+

+ def forward(

+ self: BertAttention,

+ hidden_states: torch.Tensor,

+ attention_mask: Optional[torch.FloatTensor] = None,

+ head_mask: Optional[torch.FloatTensor] = None,

+ encoder_hidden_states: Optional[torch.FloatTensor] = None,

+ encoder_attention_mask: Optional[torch.FloatTensor] = None,

+ past_key_value: Optional[Tuple[Tuple[torch.FloatTensor]]] = None,

+ output_attentions: Optional[bool] = False,

+ ) -> Tuple[torch.Tensor]:

+ mixed_query_layer = self.query(hidden_states)

+

+ # If this is instantiated as a cross-attention module, the keys

+ # and values come from an encoder; the attention mask needs to be

+ # such that the encoder's padding tokens are not attended to.

+ is_cross_attention = encoder_hidden_states is not None

+

+ if is_cross_attention and past_key_value is not None:

+ # reuse k,v, cross_attentions

+ key_layer = past_key_value[0]

+ value_layer = past_key_value[1]

+ attention_mask = encoder_attention_mask

+ elif is_cross_attention:

+ key_layer = self.transpose_for_scores(self.key(encoder_hidden_states))

+ value_layer = self.transpose_for_scores(self.value(encoder_hidden_states))

+ attention_mask = encoder_attention_mask

+ elif past_key_value is not None:

+ key_layer = self.transpose_for_scores(self.key(hidden_states))

+ value_layer = self.transpose_for_scores(self.value(hidden_states))

+ key_layer = torch.cat([past_key_value[0], key_layer], dim=2)

+ value_layer = torch.cat([past_key_value[1], value_layer], dim=2)

+ else:

+ key_layer = self.transpose_for_scores(self.key(hidden_states))

+ value_layer = self.transpose_for_scores(self.value(hidden_states))

+

+ query_layer = self.transpose_for_scores(mixed_query_layer)

+

+ use_cache = past_key_value is not None

+ if self.is_decoder:

+ # if cross_attention save Tuple(torch.Tensor, torch.Tensor) of all cross attention key/value_states.

+ # Further calls to cross_attention layer can then reuse all cross-attention

+ # key/value_states (first "if" case)

+ # if uni-directional self-attention (decoder) save Tuple(torch.Tensor, torch.Tensor) of

+ # all previous decoder key/value_states. Further calls to uni-directional self-attention

+ # can concat previous decoder key/value_states to current projected key/value_states (third "elif" case)

+ # if encoder bi-directional self-attention `past_key_value` is always `None`

+ past_key_value = (key_layer, value_layer)

+

+ final_attention_mask = None

+ if self.position_embedding_type == "relative_key" or self.position_embedding_type == "relative_key_query":

+ query_length, key_length = query_layer.shape[2], key_layer.shape[2]

+ if use_cache:

+ position_ids_l = torch.tensor(key_length - 1, dtype=torch.long, device=hidden_states.device).view(-1, 1)

+ else:

+ position_ids_l = torch.arange(query_length, dtype=torch.long, device=hidden_states.device).view(-1, 1)

+ position_ids_r = torch.arange(key_length, dtype=torch.long, device=hidden_states.device).view(1, -1)

+ distance = position_ids_l - position_ids_r

+

+ positional_embedding = self.distance_embedding(distance + self.max_position_embeddings - 1)

+ positional_embedding = positional_embedding.to(dtype=query_layer.dtype) # fp16 compatibility

+

+ if self.position_embedding_type == "relative_key":

+ relative_position_scores = torch.einsum("bhld,lrd->bhlr", query_layer, positional_embedding)

+ final_attention_mask = relative_position_scores

+ elif self.position_embedding_type == "relative_key_query":

+ relative_position_scores_query = torch.einsum("bhld,lrd->bhlr", query_layer, positional_embedding)

+ relative_position_scores_key = torch.einsum("bhrd,lrd->bhlr", key_layer, positional_embedding)

+ final_attention_mask = relative_position_scores_query + relative_position_scores_key

+

+ scale = 1 / math.sqrt(self.attention_head_size)

+ if attention_mask is not None:

+ if final_attention_mask != None:

+ final_attention_mask = final_attention_mask * scale + attention_mask

+ else:

+ final_attention_mask = attention_mask

+ batch_size, src_len = query_layer.size()[0], query_layer.size()[2]

+ tgt_len = key_layer.size()[2]

+ final_attention_mask = final_attention_mask.expand(batch_size, self.num_attention_heads, src_len, tgt_len)

+

+ query_layer = query_layer.permute(0, 2, 1, 3).contiguous()

+ key_layer = key_layer.permute(0, 2, 1, 3).contiguous()

+ value_layer = value_layer.permute(0, 2, 1, 3).contiguous()

+

+ context_layer = me_attention(query_layer,

+ key_layer,

+ value_layer,

+ attn_bias=final_attention_mask,

+ p=self.dropout.p,

+ scale=scale)

+ new_context_layer_shape = context_layer.size()[:-2] + (self.all_head_size,)

+ context_layer = context_layer.view(new_context_layer_shape)

+

+ outputs = (context_layer, None)

+

+ if self.is_decoder:

+ outputs = outputs + (past_key_value,)

+ return outputs

+

+ return forward

+

+

+def get_jit_fused_bert_self_output_forward():

+

+ from transformers.models.bert.modeling_bert import BertSelfOutput

+

+ def forward(self: BertSelfOutput, hidden_states: torch.Tensor, input_tensor: torch.Tensor) -> torch.Tensor:

+ hidden_states = self.dense(hidden_states)

+ hidden_states = self.dropout_add(hidden_states, input_tensor, self.dropout.p, self.dropout.training)

+ hidden_states = self.LayerNorm(hidden_states)

+ return hidden_states

+

+ return forward

+

+

+def get_jit_fused_bert_output_forward():

+

+ from transformers.models.bert.modeling_bert import BertOutput

+

+ def forward(self: BertOutput, hidden_states: torch.Tensor, input_tensor: torch.Tensor) -> torch.Tensor:

+ hidden_states = self.dense(hidden_states)

+ hidden_states = self.dropout_add(hidden_states, input_tensor, self.dropout.p, self.dropout.training)

+ hidden_states = self.LayerNorm(hidden_states)

+ return hidden_states

+

+ return forward

diff --git a/colossalai/shardformer/modeling/blip2.py b/colossalai/shardformer/modeling/blip2.py

index b7945423ae83..c5c6b14ba993 100644

--- a/colossalai/shardformer/modeling/blip2.py

+++ b/colossalai/shardformer/modeling/blip2.py

@@ -1,3 +1,4 @@

+import math

from typing import Optional, Tuple, Union

import torch

@@ -58,3 +59,62 @@ def forward(

return outputs

return forward

+

+

+def get_blip2_flash_attention_forward():

+

+ from transformers.models.blip_2.modeling_blip_2 import Blip2Attention

+

+ from colossalai.kernel.cuda_native.flash_attention import AttnMaskType, ColoAttention

+

+ def forward(

+ self: Blip2Attention,

+ hidden_states: torch.Tensor,

+ head_mask: Optional[torch.Tensor] = None,

+ output_attentions: Optional[bool] = False,

+ ) -> Tuple[torch.Tensor, Optional[torch.Tensor], Optional[Tuple[torch.Tensor]]]:

+ """Input shape: Batch x Time x Channel"""

+

+ bsz, tgt_len, embed_dim = hidden_states.size()

+ mixed_qkv = self.qkv(hidden_states)

+ mixed_qkv = mixed_qkv.reshape(bsz, tgt_len, 3, self.num_heads, -1).permute(2, 0, 1, 3, 4)

+ query_states, key_states, value_states = mixed_qkv[0], mixed_qkv[1], mixed_qkv[2]

+

+ attention = ColoAttention(embed_dim=self.embed_dim,

+ num_heads=self.num_heads,

+ dropout=self.dropout.p,

+ scale=self.scale)

+ context_layer = attention(query_states, key_states, value_states)

+

+ output = self.projection(context_layer)

+ outputs = (output, None)

+

+ return outputs

+

+ return forward

+

+

+def get_jit_fused_blip2_QFormer_self_output_forward():

+

+ from transformers.models.blip_2.modeling_blip_2 import Blip2QFormerSelfOutput

+

+ def forward(self: Blip2QFormerSelfOutput, hidden_states: torch.Tensor, input_tensor: torch.Tensor) -> torch.Tensor:

+ hidden_states = self.dense(hidden_states)

+ hidden_states = self.dropout_add(hidden_states, input_tensor, self.dropout.p, self.dropout.training)

+ hidden_states = self.LayerNorm(hidden_states)

+ return hidden_states

+

+ return forward

+

+

+def get_jit_fused_blip2_QFormer_output_forward():

+

+ from transformers.models.blip_2.modeling_blip_2 import Blip2QFormerOutput

+

+ def forward(self: Blip2QFormerOutput, hidden_states: torch.Tensor, input_tensor: torch.Tensor) -> torch.Tensor:

+ hidden_states = self.dense(hidden_states)

+ hidden_states = self.dropout_add(hidden_states, input_tensor, self.dropout.p, self.dropout.training)

+ hidden_states = self.LayerNorm(hidden_states)

+ return hidden_states

+

+ return forward

diff --git a/colossalai/shardformer/modeling/bloom.py b/colossalai/shardformer/modeling/bloom.py

index 76948fc70439..57c45bc6adfa 100644

--- a/colossalai/shardformer/modeling/bloom.py

+++ b/colossalai/shardformer/modeling/bloom.py

@@ -5,6 +5,7 @@

import torch.distributed as dist

from torch.distributed import ProcessGroup

from torch.nn import BCEWithLogitsLoss, CrossEntropyLoss, MSELoss

+from torch.nn import functional as F

from transformers.modeling_outputs import (

BaseModelOutputWithPastAndCrossAttentions,

CausalLMOutputWithCrossAttentions,

@@ -675,3 +676,223 @@ def bloom_for_question_answering_forward(

else:

hidden_states = outputs.get('hidden_states')

return {'hidden_states': hidden_states}

+

+

+def get_bloom_flash_attention_forward(enabel_jit_fused=False):

+

+ try:

+ from xformers.ops import memory_efficient_attention as me_attention

+ except:

+ raise ImportError("Error: xformers module is not installed. Please install it to use flash attention.")

+ from transformers.models.bloom.modeling_bloom import BloomAttention

+

+ def forward(

+ self: BloomAttention,

+ hidden_states: torch.Tensor,

+ residual: torch.Tensor,

+ alibi: torch.Tensor,

+ attention_mask: torch.Tensor,

+ layer_past: Optional[Tuple[torch.Tensor, torch.Tensor]] = None,

+ head_mask: Optional[torch.Tensor] = None,

+ use_cache: bool = False,

+ output_attentions: bool = False,

+ ):

+

+ fused_qkv = self.query_key_value(hidden_states)

+ (query_layer, key_layer, value_layer) = self._split_heads(fused_qkv)

+ batch_size, tgt_len, _ = hidden_states.size()

+ assert tgt_len % 4 == 0, "Flash Attention Error: The sequence length should be a multiple of 4."

+

+ _, kv_length, _, _ = key_layer.size()

+

+ proj_shape = (batch_size, tgt_len, self.num_heads, self.head_dim)

+ query_layer = query_layer.contiguous().view(*proj_shape)

+ key_layer = key_layer.contiguous().view(*proj_shape)

+ value_layer = value_layer.contiguous().view(*proj_shape)

+

+ if layer_past is not None:

+ past_key, past_value = layer_past

+ # concatenate along seq_length dimension:

+ # - key: [batch_size * self.num_heads, head_dim, kv_length]

+ # - value: [batch_size * self.num_heads, kv_length, head_dim]

+ key_layer = torch.cat((past_key, key_layer), dim=1)

+ value_layer = torch.cat((past_value, value_layer), dim=1)

+

+ if use_cache is True:

+ present = (key_layer, value_layer)

+ else:

+ present = None

+

+ tgt_len = key_layer.size()[1]

+

+ attention_numerical_mask = torch.zeros((batch_size, self.num_heads, tgt_len, kv_length),

+ dtype=torch.float32,

+ device=query_layer.device,

+ requires_grad=True)

+ attention_numerical_mask = attention_numerical_mask + alibi.view(batch_size, self.num_heads, 1,

+ kv_length) * self.beta

+ attention_numerical_mask = torch.masked_fill(attention_numerical_mask, attention_mask,

+ torch.finfo(torch.float32).min)

+

+ context_layer = me_attention(query_layer,

+ key_layer,

+ value_layer,

+ attn_bias=attention_numerical_mask,

+ scale=self.inv_norm_factor,

+ p=self.attention_dropout.p)

+ context_layer = context_layer.reshape(-1, kv_length, self.hidden_size)

+ if self.pretraining_tp > 1 and self.slow_but_exact:

+ slices = self.hidden_size / self.pretraining_tp

+ output_tensor = torch.zeros_like(context_layer)

+ for i in range(self.pretraining_tp):

+ output_tensor = output_tensor + F.linear(

+ context_layer[:, :, int(i * slices):int((i + 1) * slices)],

+ self.dense.weight[:, int(i * slices):int((i + 1) * slices)],

+ )

+ else:

+ output_tensor = self.dense(context_layer)

+

+ # TODO to replace with the bias_dropout_add function in jit

+ output_tensor = self.dropout_add(output_tensor, residual, self.hidden_dropout, self.training)

+ outputs = (output_tensor, present, None)

+

+ return outputs

+

+ return forward

+

+

+def get_jit_fused_bloom_attention_forward():

+

+ from transformers.models.bloom.modeling_bloom import BloomAttention

+

+ def forward(

+ self: BloomAttention,

+ hidden_states: torch.Tensor,

+ residual: torch.Tensor,

+ alibi: torch.Tensor,

+ attention_mask: torch.Tensor,

+ layer_past: Optional[Tuple[torch.Tensor, torch.Tensor]] = None,

+ head_mask: Optional[torch.Tensor] = None,

+ use_cache: bool = False,

+ output_attentions: bool = False,

+ ):

+ fused_qkv = self.query_key_value(hidden_states) # [batch_size, seq_length, 3 x hidden_size]

+

+ # 3 x [batch_size, seq_length, num_heads, head_dim]

+ (query_layer, key_layer, value_layer) = self._split_heads(fused_qkv)

+

+ batch_size, q_length, _, _ = query_layer.shape

+

+ query_layer = query_layer.transpose(1, 2).reshape(batch_size * self.num_heads, q_length, self.head_dim)

+ key_layer = key_layer.permute(0, 2, 3, 1).reshape(batch_size * self.num_heads, self.head_dim, q_length)

+ value_layer = value_layer.transpose(1, 2).reshape(batch_size * self.num_heads, q_length, self.head_dim)

+ if layer_past is not None:

+ past_key, past_value = layer_past

+ # concatenate along seq_length dimension:

+ # - key: [batch_size * self.num_heads, head_dim, kv_length]

+ # - value: [batch_size * self.num_heads, kv_length, head_dim]

+ key_layer = torch.cat((past_key, key_layer), dim=2)

+ value_layer = torch.cat((past_value, value_layer), dim=1)

+

+ _, _, kv_length = key_layer.shape

+

+ if use_cache is True:

+ present = (key_layer, value_layer)

+ else:

+ present = None

+

+ # [batch_size * num_heads, q_length, kv_length]

+ # we use `torch.Tensor.baddbmm` instead of `torch.baddbmm` as the latter isn't supported by TorchScript v1.11

+ matmul_result = alibi.baddbmm(

+ batch1=query_layer,

+ batch2=key_layer,

+ beta=self.beta,

+ alpha=self.inv_norm_factor,

+ )

+

+ # change view to [batch_size, num_heads, q_length, kv_length]

+ attention_scores = matmul_result.view(batch_size, self.num_heads, q_length, kv_length)

+

+ # cast attention scores to fp32, compute scaled softmax and cast back to initial dtype - [batch_size, num_heads, q_length, kv_length]

+ input_dtype = attention_scores.dtype

+ # `float16` has a minimum value of -65504.0, whereas `bfloat16` and `float32` have a minimum value of `-3.4e+38`

+ if input_dtype == torch.float16:

+ attention_scores = attention_scores.to(torch.float)

+ attn_weights = torch.masked_fill(attention_scores, attention_mask, torch.finfo(attention_scores.dtype).min)

+ attention_probs = F.softmax(attn_weights, dim=-1, dtype=torch.float32).to(input_dtype)

+

+ # [batch_size, num_heads, q_length, kv_length]

+ attention_probs = self.attention_dropout(attention_probs)

+

+ if head_mask is not None:

+ attention_probs = attention_probs * head_mask

+

+ # change view [batch_size x num_heads, q_length, kv_length]

+ attention_probs_reshaped = attention_probs.view(batch_size * self.num_heads, q_length, kv_length)

+

+ # matmul: [batch_size * num_heads, q_length, head_dim]

+ context_layer = torch.bmm(attention_probs_reshaped, value_layer)

+

+ # change view [batch_size, num_heads, q_length, head_dim]

+ context_layer = self._merge_heads(context_layer)

+

+ # aggregate results across tp ranks. See here: https://github.com/pytorch/pytorch/issues/76232

+ if self.pretraining_tp > 1 and self.slow_but_exact:

+ slices = self.hidden_size / self.pretraining_tp

+ output_tensor = torch.zeros_like(context_layer)

+ for i in range(self.pretraining_tp):

+ output_tensor = output_tensor + F.linear(

+ context_layer[:, :, int(i * slices):int((i + 1) * slices)],

+ self.dense.weight[:, int(i * slices):int((i + 1) * slices)],

+ )

+ else:

+ output_tensor = self.dense(context_layer)

+

+ output_tensor = self.dropout_add(output_tensor, residual, self.hidden_dropout, self.training)

+

+ outputs = (output_tensor, present)

+ if output_attentions:

+ outputs += (attention_probs,)

+

+ return outputs

+

+ return forward

+

+

+def get_jit_fused_bloom_mlp_forward():

+

+ from transformers.models.bloom.modeling_bloom import BloomMLP

+

+ def forward(self: BloomMLP, hidden_states: torch.Tensor, residual: torch.Tensor) -> torch.Tensor:

+ hidden_states = self.gelu_impl(self.dense_h_to_4h(hidden_states))

+

+ if self.pretraining_tp > 1 and self.slow_but_exact:

+ intermediate_output = torch.zeros_like(residual)

+ slices = self.dense_4h_to_h.weight.shape[-1] / self.pretraining_tp

+ for i in range(self.pretraining_tp):

+ intermediate_output = intermediate_output + F.linear(

+ hidden_states[:, :, int(i * slices):int((i + 1) * slices)],

+ self.dense_4h_to_h.weight[:, int(i * slices):int((i + 1) * slices)],

+ )

+ else:

+ intermediate_output = self.dense_4h_to_h(hidden_states)

+ output = self.dropout_add(intermediate_output, residual, self.hidden_dropout, self.training)

+ return output

+

+ return forward

+

+

+def get_jit_fused_bloom_gelu_forward():

+

+ from transformers.models.bloom.modeling_bloom import BloomGelu

+

+ from colossalai.kernel.jit.bias_gelu import GeLUFunction as JitGeLUFunction

+

+ def forward(self: BloomGelu, x: torch.Tensor) -> torch.Tensor:

+ bias = torch.zeros_like(x)

+ if self.training:

+ return JitGeLUFunction.apply(x, bias)

+ else:

+ return self.bloom_gelu_forward(x, bias)

+

+ return forward

diff --git a/colossalai/shardformer/modeling/chatglm.py b/colossalai/shardformer/modeling/chatglm.py

new file mode 100644

index 000000000000..3d453c3bd6db

--- /dev/null

+++ b/colossalai/shardformer/modeling/chatglm.py

@@ -0,0 +1,299 @@

+""" PyTorch ChatGLM model. """

+from typing import Any, Dict, List, Optional, Tuple, Union

+

+import torch

+import torch.nn.functional as F

+import torch.utils.checkpoint

+from torch.nn import CrossEntropyLoss, LayerNorm

+from transformers.modeling_outputs import BaseModelOutputWithPast, CausalLMOutputWithPast

+from transformers.utils import logging

+

+from colossalai.pipeline.stage_manager import PipelineStageManager

+from colossalai.shardformer.modeling.chatglm2_6b.configuration_chatglm import ChatGLMConfig

+from colossalai.shardformer.modeling.chatglm2_6b.modeling_chatglm import (

+ ChatGLMForConditionalGeneration,

+ ChatGLMModel,

+ GLMBlock,

+)

+

+