logging — Logging facility for Python¶

+Source code: Lib/logging/__init__.py

+ ++

This module defines functions and classes which implement a flexible event +logging system for applications and libraries.

+The key benefit of having the logging API provided by a standard library module +is that all Python modules can participate in logging, so your application log +can include your own messages integrated with messages from third-party +modules.

+The simplest example:

+>>> import logging

+>>> logging.warning('Watch out!')

+WARNING:root:Watch out!

+The module provides a lot of functionality and flexibility. If you are +unfamiliar with logging, the best way to get to grips with it is to view the +tutorials (see the links above and on the right).

+The basic classes defined by the module, together with their functions, are +listed below.

+-

+

Loggers expose the interface that application code directly uses.

+Handlers send the log records (created by loggers) to the appropriate +destination.

+Filters provide a finer grained facility for determining which log records +to output.

+Formatters specify the layout of log records in the final output.

+

Logger Objects¶

+Loggers have the following attributes and methods. Note that Loggers should

+NEVER be instantiated directly, but always through the module-level function

+logging.getLogger(name). Multiple calls to getLogger() with the same

+name will always return a reference to the same Logger object.

The name is potentially a period-separated hierarchical value, like

+foo.bar.baz (though it could also be just plain foo, for example).

+Loggers that are further down in the hierarchical list are children of loggers

+higher up in the list. For example, given a logger with a name of foo,

+loggers with names of foo.bar, foo.bar.baz, and foo.bam are all

+descendants of foo. The logger name hierarchy is analogous to the Python

+package hierarchy, and identical to it if you organise your loggers on a

+per-module basis using the recommended construction

+logging.getLogger(__name__). That’s because in a module, __name__

+is the module’s name in the Python package namespace.

-

+

- +class logging.Logger¶ +

-

+

- +propagate¶ +

If this attribute evaluates to true, events logged to this logger will be +passed to the handlers of higher level (ancestor) loggers, in addition to +any handlers attached to this logger. Messages are passed directly to the +ancestor loggers’ handlers - neither the level nor filters of the ancestor +loggers in question are considered.

+If this evaluates to false, logging messages are not passed to the handlers +of ancestor loggers.

+Spelling it out with an example: If the propagate attribute of the logger named +

+A.B.Cevaluates to true, any event logged toA.B.Cvia a method call such as +logging.getLogger('A.B.C').error(...)will [subject to passing that logger’s +level and filter settings] be passed in turn to any handlers attached to loggers +namedA.B,Aand the root logger, after first being passed to any handlers +attached toA.B.C. If any logger in the chainA.B.C,A.B,Ahas its +propagateattribute set to false, then that is the last logger whose handlers +are offered the event to handle, and propagation stops at that point.The constructor sets this attribute to

+True.++Note

+If you attach a handler to a logger and one or more of its +ancestors, it may emit the same record multiple times. In general, you +should not need to attach a handler to more than one logger - if you just +attach it to the appropriate logger which is highest in the logger +hierarchy, then it will see all events logged by all descendant loggers, +provided that their propagate setting is left set to

+True. A common +scenario is to attach handlers only to the root logger, and to let +propagation take care of the rest.

-

+

- +setLevel(level)¶ +

Sets the threshold for this logger to level. Logging messages which are less +severe than level will be ignored; logging messages which have severity level +or higher will be emitted by whichever handler or handlers service this logger, +unless a handler’s level has been set to a higher severity level than level.

+When a logger is created, the level is set to

+NOTSET(which causes +all messages to be processed when the logger is the root logger, or delegation +to the parent when the logger is a non-root logger). Note that the root logger +is created with levelWARNING.The term ‘delegation to the parent’ means that if a logger has a level of +NOTSET, its chain of ancestor loggers is traversed until either an ancestor with +a level other than NOTSET is found, or the root is reached.

+If an ancestor is found with a level other than NOTSET, then that ancestor’s +level is treated as the effective level of the logger where the ancestor search +began, and is used to determine how a logging event is handled.

+If the root is reached, and it has a level of NOTSET, then all messages will be +processed. Otherwise, the root’s level will be used as the effective level.

+See Logging Levels for a list of levels.

+++Changed in version 3.2: The level parameter now accepts a string representation of the +level such as ‘INFO’ as an alternative to the integer constants +such as

+INFO. Note, however, that levels are internally stored +as integers, and methods such as e.g.getEffectiveLevel()and +isEnabledFor()will return/expect to be passed integers.

-

+

- +isEnabledFor(level)¶ +

Indicates if a message of severity level would be processed by this logger. +This method checks first the module-level level set by +

+logging.disable(level)and then the logger’s effective level as determined +bygetEffectiveLevel().

-

+

- +getEffectiveLevel()¶ +

Indicates the effective level for this logger. If a value other than +

+NOTSEThas been set usingsetLevel(), it is returned. Otherwise, +the hierarchy is traversed towards the root until a value other than +NOTSETis found, and that value is returned. The value returned is +an integer, typically one oflogging.DEBUG,logging.INFO+etc.

-

+

- +getChild(suffix)¶ +

Returns a logger which is a descendant to this logger, as determined by the suffix. +Thus,

+logging.getLogger('abc').getChild('def.ghi')would return the same +logger as would be returned bylogging.getLogger('abc.def.ghi'). This is a +convenience method, useful when the parent logger is named using e.g.__name__+rather than a literal string.++New in version 3.2.

+

-

+

- +debug(msg, *args, **kwargs)¶ +

Logs a message with level

+DEBUGon this logger. The msg is the +message format string, and the args are the arguments which are merged into +msg using the string formatting operator. (Note that this means that you can +use keywords in the format string, together with a single dictionary argument.) +No % formatting operation is performed on msg when no args are supplied.There are four keyword arguments in kwargs which are inspected: +exc_info, stack_info, stacklevel and extra.

+If exc_info does not evaluate as false, it causes exception information to be +added to the logging message. If an exception tuple (in the format returned by +

+sys.exc_info()) or an exception instance is provided, it is used; +otherwise,sys.exc_info()is called to get the exception information.The second optional keyword argument is stack_info, which defaults to +

+False. If true, stack information is added to the logging +message, including the actual logging call. Note that this is not the same +stack information as that displayed through specifying exc_info: The +former is stack frames from the bottom of the stack up to the logging call +in the current thread, whereas the latter is information about stack frames +which have been unwound, following an exception, while searching for +exception handlers.You can specify stack_info independently of exc_info, e.g. to just show +how you got to a certain point in your code, even when no exceptions were +raised. The stack frames are printed following a header line which says:

+++Stack (most recent call last): +

This mimics the

+Traceback (most recent call last):which is used when +displaying exception frames.The third optional keyword argument is stacklevel, which defaults to

+1. +If greater than 1, the corresponding number of stack frames are skipped +when computing the line number and function name set in theLogRecord+created for the logging event. This can be used in logging helpers so that +the function name, filename and line number recorded are not the information +for the helper function/method, but rather its caller. The name of this +parameter mirrors the equivalent one in thewarningsmodule.The fourth keyword argument is extra which can be used to pass a +dictionary which is used to populate the __dict__ of the

+LogRecord+created for the logging event with user-defined attributes. These custom +attributes can then be used as you like. For example, they could be +incorporated into logged messages. For example:++FORMAT = '%(asctime)s %(clientip)-15s %(user)-8s %(message)s' +logging.basicConfig(format=FORMAT) +d = {'clientip': '192.168.0.1', 'user': 'fbloggs'} +logger = logging.getLogger('tcpserver') +logger.warning('Protocol problem: %s', 'connection reset', extra=d) +

would print something like

+++2006-02-08 22:20:02,165 192.168.0.1 fbloggs Protocol problem: connection reset +

The keys in the dictionary passed in extra should not clash with the keys used +by the logging system. (See the section on LogRecord attributes for more +information on which keys are used by the logging system.)

+If you choose to use these attributes in logged messages, you need to exercise +some care. In the above example, for instance, the

+Formatterhas been +set up with a format string which expects ‘clientip’ and ‘user’ in the attribute +dictionary of theLogRecord. If these are missing, the message will +not be logged because a string formatting exception will occur. So in this case, +you always need to pass the extra dictionary with these keys.While this might be annoying, this feature is intended for use in specialized +circumstances, such as multi-threaded servers where the same code executes in +many contexts, and interesting conditions which arise are dependent on this +context (such as remote client IP address and authenticated user name, in the +above example). In such circumstances, it is likely that specialized +

+Formatters would be used with particularHandlers.If no handler is attached to this logger (or any of its ancestors, +taking into account the relevant

+Logger.propagateattributes), +the message will be sent to the handler set onlastResort.++Changed in version 3.2: The stack_info parameter was added.

+++Changed in version 3.5: The exc_info parameter can now accept exception instances.

+++Changed in version 3.8: The stacklevel parameter was added.

+

-

+

- +info(msg, *args, **kwargs)¶ +

Logs a message with level

+INFOon this logger. The arguments are +interpreted as fordebug().

-

+

- +warning(msg, *args, **kwargs)¶ +

Logs a message with level

+WARNINGon this logger. The arguments are +interpreted as fordebug().++Note

+There is an obsolete method

+warnwhich is functionally +identical towarning. Aswarnis deprecated, please do not use +it - usewarninginstead.

-

+

- +error(msg, *args, **kwargs)¶ +

Logs a message with level

+ERRORon this logger. The arguments are +interpreted as fordebug().

-

+

- +critical(msg, *args, **kwargs)¶ +

Logs a message with level

+CRITICALon this logger. The arguments are +interpreted as fordebug().

-

+

- +log(level, msg, *args, **kwargs)¶ +

Logs a message with integer level level on this logger. The other arguments are +interpreted as for

+debug().

-

+

- +exception(msg, *args, **kwargs)¶ +

Logs a message with level

+ERRORon this logger. The arguments are +interpreted as fordebug(). Exception info is added to the logging +message. This method should only be called from an exception handler.

-

+

- +addFilter(filter)¶ +

Adds the specified filter filter to this logger.

+

-

+

- +removeFilter(filter)¶ +

Removes the specified filter filter from this logger.

+

-

+

- +filter(record)¶ +

Apply this logger’s filters to the record and return

+Trueif the +record is to be processed. The filters are consulted in turn, until one of +them returns a false value. If none of them return a false value, the record +will be processed (passed to handlers). If one returns a false value, no +further processing of the record occurs.

-

+

- +addHandler(hdlr)¶ +

Adds the specified handler hdlr to this logger.

+

-

+

- +removeHandler(hdlr)¶ +

Removes the specified handler hdlr from this logger.

+

-

+

- +findCaller(stack_info=False, stacklevel=1)¶ +

Finds the caller’s source filename and line number. Returns the filename, line +number, function name and stack information as a 4-element tuple. The stack +information is returned as

+Noneunless stack_info isTrue.The stacklevel parameter is passed from code calling the

+debug()+and other APIs. If greater than 1, the excess is used to skip stack frames +before determining the values to be returned. This will generally be useful +when calling logging APIs from helper/wrapper code, so that the information +in the event log refers not to the helper/wrapper code, but to the code that +calls it.

-

+

- +handle(record)¶ +

Handles a record by passing it to all handlers associated with this logger and +its ancestors (until a false value of propagate is found). This method is used +for unpickled records received from a socket, as well as those created locally. +Logger-level filtering is applied using

+filter().

-

+

- +makeRecord(name, level, fn, lno, msg, args, exc_info, func=None, extra=None, sinfo=None)¶ +

This is a factory method which can be overridden in subclasses to create +specialized

+LogRecordinstances.

-

+

- +hasHandlers()¶ +

Checks to see if this logger has any handlers configured. This is done by +looking for handlers in this logger and its parents in the logger hierarchy. +Returns

+Trueif a handler was found, elseFalse. The method stops searching +up the hierarchy whenever a logger with the ‘propagate’ attribute set to +false is found - that will be the last logger which is checked for the +existence of handlers.++New in version 3.2.

+

++Changed in version 3.7: Loggers can now be pickled and unpickled.

+

Logging Levels¶

+The numeric values of logging levels are given in the following table. These are +primarily of interest if you want to define your own levels, and need them to +have specific values relative to the predefined levels. If you define a level +with the same numeric value, it overwrites the predefined value; the predefined +name is lost.

+Level |

+Numeric value |

+What it means / When to use it |

+

|---|---|---|

|

+0 |

+When set on a logger, indicates that

+ancestor loggers are to be consulted

+to determine the effective level.

+If that still resolves to

+ |

+

|

+10 |

+Detailed information, typically only +of interest to a developer trying to +diagnose a problem. |

+

|

+20 |

+Confirmation that things are working +as expected. |

+

|

+30 |

+An indication that something +unexpected happened, or that a +problem might occur in the near +future (e.g. ‘disk space low’). The +software is still working as +expected. |

+

|

+40 |

+Due to a more serious problem, the +software has not been able to +perform some function. |

+

|

+50 |

+A serious error, indicating that the +program itself may be unable to +continue running. |

+

Handler Objects¶

+Handlers have the following attributes and methods. Note that Handler

+is never instantiated directly; this class acts as a base for more useful

+subclasses. However, the __init__() method in subclasses needs to call

+Handler.__init__().

-

+

- +class logging.Handler¶ +

-

+

- +__init__(level=NOTSET)¶ +

Initializes the

+Handlerinstance by setting its level, setting the list +of filters to the empty list and creating a lock (usingcreateLock()) for +serializing access to an I/O mechanism.

-

+

- +createLock()¶ +

Initializes a thread lock which can be used to serialize access to underlying +I/O functionality which may not be threadsafe.

+

-

+

- +acquire()¶ +

Acquires the thread lock created with

+createLock().

-

+

- +setLevel(level)¶ +

Sets the threshold for this handler to level. Logging messages which are +less severe than level will be ignored. When a handler is created, the +level is set to

+NOTSET(which causes all messages to be +processed).See Logging Levels for a list of levels.

+++Changed in version 3.2: The level parameter now accepts a string representation of the +level such as ‘INFO’ as an alternative to the integer constants +such as

+INFO.

-

+

- +addFilter(filter)¶ +

Adds the specified filter filter to this handler.

+

-

+

- +removeFilter(filter)¶ +

Removes the specified filter filter from this handler.

+

-

+

- +filter(record)¶ +

Apply this handler’s filters to the record and return

+Trueif the +record is to be processed. The filters are consulted in turn, until one of +them returns a false value. If none of them return a false value, the record +will be emitted. If one returns a false value, the handler will not emit the +record.

-

+

- +flush()¶ +

Ensure all logging output has been flushed. This version does nothing and is +intended to be implemented by subclasses.

+

-

+

- +close()¶ +

Tidy up any resources used by the handler. This version does no output but +removes the handler from an internal list of handlers which is closed when +

+shutdown()is called. Subclasses should ensure that this gets called +from overriddenclose()methods.

-

+

- +handle(record)¶ +

Conditionally emits the specified logging record, depending on filters which may +have been added to the handler. Wraps the actual emission of the record with +acquisition/release of the I/O thread lock.

+

-

+

- +handleError(record)¶ +

This method should be called from handlers when an exception is encountered +during an

+emit()call. If the module-level attribute +raiseExceptionsisFalse, exceptions get silently ignored. This is +what is mostly wanted for a logging system - most users will not care about +errors in the logging system, they are more interested in application +errors. You could, however, replace this with a custom handler if you wish. +The specified record is the one which was being processed when the exception +occurred. (The default value ofraiseExceptionsisTrue, as that is +more useful during development).

-

+

- +format(record)¶ +

Do formatting for a record - if a formatter is set, use it. Otherwise, use the +default formatter for the module.

+

-

+

- +emit(record)¶ +

Do whatever it takes to actually log the specified logging record. This version +is intended to be implemented by subclasses and so raises a +

+NotImplementedError.++Warning

+This method is called after a handler-level lock is acquired, which +is released after this method returns. When you override this method, note +that you should be careful when calling anything that invokes other parts of +the logging API which might do locking, because that might result in a +deadlock. Specifically:

+-

+

Logging configuration APIs acquire the module-level lock, and then +individual handler-level locks as those handlers are configured.

+Many logging APIs lock the module-level lock. If such an API is called +from this method, it could cause a deadlock if a configuration call is +made on another thread, because that thread will try to acquire the +module-level lock before the handler-level lock, whereas this thread +tries to acquire the module-level lock after the handler-level lock +(because in this method, the handler-level lock has already been acquired).

+

For a list of handlers included as standard, see logging.handlers.

Formatter Objects¶

+Formatter objects have the following attributes and methods. They are

+responsible for converting a LogRecord to (usually) a string which can

+be interpreted by either a human or an external system. The base

+Formatter allows a formatting string to be specified. If none is

+supplied, the default value of '%(message)s' is used, which just includes

+the message in the logging call. To have additional items of information in the

+formatted output (such as a timestamp), keep reading.

A Formatter can be initialized with a format string which makes use of knowledge

+of the LogRecord attributes - such as the default value mentioned above

+making use of the fact that the user’s message and arguments are pre-formatted

+into a LogRecord’s message attribute. This format string contains

+standard Python %-style mapping keys. See section printf-style String Formatting

+for more information on string formatting.

The useful mapping keys in a LogRecord are given in the section on

+LogRecord attributes.

-

+

- +class logging.Formatter(fmt=None, datefmt=None, style='%', validate=True, *, defaults=None)¶ +

Returns a new instance of the

+Formatterclass. The instance is +initialized with a format string for the message as a whole, as well as a +format string for the date/time portion of a message. If no fmt is +specified,'%(message)s'is used. If no datefmt is specified, a format +is used which is described in theformatTime()documentation.The style parameter can be one of ‘%’, ‘{’ or ‘$’ and determines how +the format string will be merged with its data: using one of %-formatting, +

+str.format()orstring.Template. This only applies to the +format string fmt (e.g.'%(message)s'or{message}), not to the +actual log messages passed toLogger.debugetc; see +Using particular formatting styles throughout your application for more information on using {- and $-formatting +for log messages.The defaults parameter can be a dictionary with default values to use in +custom fields. For example: +

+logging.Formatter('%(ip)s %(message)s', defaults={"ip": None})++Changed in version 3.2: The style parameter was added.

+++Changed in version 3.8: The validate parameter was added. Incorrect or mismatched style and fmt +will raise a

+ValueError. +For example:logging.Formatter('%(asctime)s - %(message)s', style='{').++Changed in version 3.10: The defaults parameter was added.

+-

+

- +format(record)¶ +

The record’s attribute dictionary is used as the operand to a string +formatting operation. Returns the resulting string. Before formatting the +dictionary, a couple of preparatory steps are carried out. The message +attribute of the record is computed using msg % args. If the +formatting string contains

+'(asctime)',formatTime()is called +to format the event time. If there is exception information, it is +formatted usingformatException()and appended to the message. Note +that the formatted exception information is cached in attribute +exc_text. This is useful because the exception information can be +pickled and sent across the wire, but you should be careful if you have +more than oneFormattersubclass which customizes the formatting +of exception information. In this case, you will have to clear the cached +value (by setting the exc_text attribute toNone) after a formatter +has done its formatting, so that the next formatter to handle the event +doesn’t use the cached value, but recalculates it afresh.If stack information is available, it’s appended after the exception +information, using

+formatStack()to transform it if necessary.

-

+

- +formatTime(record, datefmt=None)¶ +

This method should be called from

+format()by a formatter which +wants to make use of a formatted time. This method can be overridden in +formatters to provide for any specific requirement, but the basic behavior +is as follows: if datefmt (a string) is specified, it is used with +time.strftime()to format the creation time of the +record. Otherwise, the format ‘%Y-%m-%d %H:%M:%S,uuu’ is used, where the +uuu part is a millisecond value and the other letters are as per the +time.strftime()documentation. An example time in this format is +2003-01-23 00:29:50,411. The resulting string is returned.This function uses a user-configurable function to convert the creation +time to a tuple. By default,

+time.localtime()is used; to change +this for a particular formatter instance, set theconverterattribute +to a function with the same signature astime.localtime()or +time.gmtime(). To change it for all formatters, for example if you +want all logging times to be shown in GMT, set theconverter+attribute in theFormatterclass.++Changed in version 3.3: Previously, the default format was hard-coded as in this example: +

+2010-09-06 22:38:15,292where the part before the comma is +handled by a strptime format string ('%Y-%m-%d %H:%M:%S'), and the +part after the comma is a millisecond value. Because strptime does not +have a format placeholder for milliseconds, the millisecond value is +appended using another format string,'%s,%03d'— and both of these +format strings have been hardcoded into this method. With the change, +these strings are defined as class-level attributes which can be +overridden at the instance level when desired. The names of the +attributes aredefault_time_format(for the strptime format string) +anddefault_msec_format(for appending the millisecond value).++Changed in version 3.9: The

+default_msec_formatcan beNone.

-

+

- +formatException(exc_info)¶ +

Formats the specified exception information (a standard exception tuple as +returned by

+sys.exc_info()) as a string. This default implementation +just usestraceback.print_exception(). The resulting string is +returned.

-

+

- +formatStack(stack_info)¶ +

Formats the specified stack information (a string as returned by +

+traceback.print_stack(), but with the last newline removed) as a +string. This default implementation just returns the input value.

-

+

- +class logging.BufferingFormatter(linefmt=None)¶ +

A base formatter class suitable for subclassing when you want to format a +number of records. You can pass a

+Formatterinstance which you want +to use to format each line (that corresponds to a single record). If not +specified, the default formatter (which just outputs the event message) is +used as the line formatter.-

+

- +formatHeader(records)¶ +

Return a header for a list of records. The base implementation just +returns the empty string. You will need to override this method if you +want specific behaviour, e.g. to show the count of records, a title or a +separator line.

+

-

+

+

Return a footer for a list of records. The base implementation just +returns the empty string. You will need to override this method if you +want specific behaviour, e.g. to show the count of records or a separator +line.

+

-

+

- +format(records)¶ +

Return formatted text for a list of records. The base implementation +just returns the empty string if there are no records; otherwise, it +returns the concatenation of the header, each record formatted with the +line formatter, and the footer.

+

Filter Objects¶

+Filters can be used by Handlers and Loggers for more sophisticated

+filtering than is provided by levels. The base filter class only allows events

+which are below a certain point in the logger hierarchy. For example, a filter

+initialized with ‘A.B’ will allow events logged by loggers ‘A.B’, ‘A.B.C’,

+‘A.B.C.D’, ‘A.B.D’ etc. but not ‘A.BB’, ‘B.A.B’ etc. If initialized with the

+empty string, all events are passed.

-

+

- +class logging.Filter(name='')¶ +

Returns an instance of the

+Filterclass. If name is specified, it +names a logger which, together with its children, will have its events allowed +through the filter. If name is the empty string, allows every event.-

+

- +filter(record)¶ +

Is the specified record to be logged? Returns zero for no, nonzero for +yes. If deemed appropriate, the record may be modified in-place by this +method.

+

Note that filters attached to handlers are consulted before an event is

+emitted by the handler, whereas filters attached to loggers are consulted

+whenever an event is logged (using debug(), info(),

+etc.), before sending an event to handlers. This means that events which have

+been generated by descendant loggers will not be filtered by a logger’s filter

+setting, unless the filter has also been applied to those descendant loggers.

You don’t actually need to subclass Filter: you can pass any instance

+which has a filter method with the same semantics.

Changed in version 3.2: You don’t need to create specialized Filter classes, or use other

+classes with a filter method: you can use a function (or other

+callable) as a filter. The filtering logic will check to see if the filter

+object has a filter attribute: if it does, it’s assumed to be a

+Filter and its filter() method is called. Otherwise, it’s

+assumed to be a callable and called with the record as the single

+parameter. The returned value should conform to that returned by

+filter().

Although filters are used primarily to filter records based on more

+sophisticated criteria than levels, they get to see every record which is

+processed by the handler or logger they’re attached to: this can be useful if

+you want to do things like counting how many records were processed by a

+particular logger or handler, or adding, changing or removing attributes in

+the LogRecord being processed. Obviously changing the LogRecord needs

+to be done with some care, but it does allow the injection of contextual

+information into logs (see Using Filters to impart contextual information).

LogRecord Objects¶

+LogRecord instances are created automatically by the Logger

+every time something is logged, and can be created manually via

+makeLogRecord() (for example, from a pickled event received over the

+wire).

-

+

- +class logging.LogRecord(name, level, pathname, lineno, msg, args, exc_info, func=None, sinfo=None)¶ +

Contains all the information pertinent to the event being logged.

+The primary information is passed in msg and args, +which are combined using

+msg % argsto create +themessageattribute of the record.-

+

- Parameters +

-

+

name (str) – The name of the logger used to log the event +represented by this

LogRecord. +Note that the logger name in theLogRecord+will always have this value, +even though it may be emitted by a handler +attached to a different (ancestor) logger.

+level (int) – The numeric level of the logging event +(such as

10forDEBUG,20forINFO, etc). +Note that this is converted to two attributes of the LogRecord: +levelnofor the numeric value +andlevelnamefor the corresponding level name.

+pathname (str) – The full string path of the source file +where the logging call was made.

+lineno (int) – The line number in the source file +where the logging call was made.

+msg (Any) – The event description message, +which can be a %-format string with placeholders for variable data, +or an arbitrary object (see Using arbitrary objects as messages).

+args (tuple | dict[str, Any]) – Variable data to merge into the msg argument +to obtain the event description.

+exc_info (tuple[type[BaseException], BaseException, types.TracebackType] | None) – An exception tuple with the current exception information, +as returned by

sys.exc_info(), +orNoneif no exception information is available.

+func (str | None) – The name of the function or method +from which the logging call was invoked.

+sinfo (str | None) – A text string representing stack information +from the base of the stack in the current thread, +up to the logging call.

+

+

-

+

- +getMessage()¶ +

Returns the message for this

+LogRecordinstance after merging any +user-supplied arguments with the message. If the user-supplied message +argument to the logging call is not a string,str()is called on it to +convert it to a string. This allows use of user-defined classes as +messages, whose__str__method can return the actual format string to +be used.

++Changed in version 3.2: The creation of a

+LogRecordhas been made more configurable by +providing a factory which is used to create the record. The factory can be +set usinggetLogRecordFactory()andsetLogRecordFactory()+(see this for the factory’s signature).This functionality can be used to inject your own values into a +

+LogRecordat creation time. You can use the following pattern:++old_factory = logging.getLogRecordFactory() + +def record_factory(*args, **kwargs): + record = old_factory(*args, **kwargs) + record.custom_attribute = 0xdecafbad + return record + +logging.setLogRecordFactory(record_factory) +

With this pattern, multiple factories could be chained, and as long +as they don’t overwrite each other’s attributes or unintentionally +overwrite the standard attributes listed above, there should be no +surprises.

+

LogRecord attributes¶

+The LogRecord has a number of attributes, most of which are derived from the +parameters to the constructor. (Note that the names do not always correspond +exactly between the LogRecord constructor parameters and the LogRecord +attributes.) These attributes can be used to merge data from the record into +the format string. The following table lists (in alphabetical order) the +attribute names, their meanings and the corresponding placeholder in a %-style +format string.

+If you are using {}-formatting (str.format()), you can use

+{attrname} as the placeholder in the format string. If you are using

+$-formatting (string.Template), use the form ${attrname}. In

+both cases, of course, replace attrname with the actual attribute name

+you want to use.

In the case of {}-formatting, you can specify formatting flags by placing them

+after the attribute name, separated from it with a colon. For example: a

+placeholder of {msecs:03d} would format a millisecond value of 4 as

+004. Refer to the str.format() documentation for full details on

+the options available to you.

Attribute name |

+Format |

+Description |

+

|---|---|---|

args |

+You shouldn’t need to +format this yourself. |

+The tuple of arguments merged into |

+

asctime |

+

|

+Human-readable time when the

+ |

+

created |

+

|

+Time when the |

+

exc_info |

+You shouldn’t need to +format this yourself. |

+Exception tuple (à la |

+

filename |

+

|

+Filename portion of |

+

funcName |

+

|

+Name of function containing the logging call. |

+

levelname |

+

|

+Text logging level for the message

+( |

+

levelno |

+

|

+Numeric logging level for the message

+( |

+

lineno |

+

|

+Source line number where the logging call was +issued (if available). |

+

message |

+

|

+The logged message, computed as |

+

module |

+

|

+Module (name portion of |

+

msecs |

+

|

+Millisecond portion of the time when the

+ |

+

msg |

+You shouldn’t need to +format this yourself. |

+The format string passed in the original

+logging call. Merged with |

+

name |

+

|

+Name of the logger used to log the call. |

+

pathname |

+

|

+Full pathname of the source file where the +logging call was issued (if available). |

+

process |

+

|

+Process ID (if available). |

+

processName |

+

|

+Process name (if available). |

+

relativeCreated |

+

|

+Time in milliseconds when the LogRecord was +created, relative to the time the logging +module was loaded. |

+

stack_info |

+You shouldn’t need to +format this yourself. |

+Stack frame information (where available) +from the bottom of the stack in the current +thread, up to and including the stack frame +of the logging call which resulted in the +creation of this record. |

+

thread |

+

|

+Thread ID (if available). |

+

threadName |

+

|

+Thread name (if available). |

+

Changed in version 3.1: processName was added.

+LoggerAdapter Objects¶

+LoggerAdapter instances are used to conveniently pass contextual

+information into logging calls. For a usage example, see the section on

+adding contextual information to your logging output.

-

+

- +class logging.LoggerAdapter(logger, extra)¶ +

Returns an instance of

+LoggerAdapterinitialized with an +underlyingLoggerinstance and a dict-like object.-

+

- +process(msg, kwargs)¶ +

Modifies the message and/or keyword arguments passed to a logging call in +order to insert contextual information. This implementation takes the object +passed as extra to the constructor and adds it to kwargs using key +‘extra’. The return value is a (msg, kwargs) tuple which has the +(possibly modified) versions of the arguments passed in.

+

-

+

- +manager¶ +

Delegates to the underlying

+manager`on logger.

-

+

- +_log¶ +

Delegates to the underlying

+_log`()method on logger.

In addition to the above,

+LoggerAdaptersupports the following +methods ofLogger:debug(),info(), +warning(),error(),exception(), +critical(),log(),isEnabledFor(), +getEffectiveLevel(),setLevel()and +hasHandlers(). These methods have the same signatures as their +counterparts inLogger, so you can use the two types of instances +interchangeably.++Changed in version 3.2: The

+isEnabledFor(),getEffectiveLevel(), +setLevel()andhasHandlers()methods were added +toLoggerAdapter. These methods delegate to the underlying logger.++Changed in version 3.6: Attribute

+managerand method_log()were added, which +delegate to the underlying logger and allow adapters to be nested.

Thread Safety¶

+The logging module is intended to be thread-safe without any special work +needing to be done by its clients. It achieves this though using threading +locks; there is one lock to serialize access to the module’s shared data, and +each handler also creates a lock to serialize access to its underlying I/O.

+If you are implementing asynchronous signal handlers using the signal

+module, you may not be able to use logging from within such handlers. This is

+because lock implementations in the threading module are not always

+re-entrant, and so cannot be invoked from such signal handlers.

Module-Level Functions¶

+In addition to the classes described above, there are a number of module-level +functions.

+-

+

- +logging.getLogger(name=None)¶ +

Return a logger with the specified name or, if name is

+None, return a +logger which is the root logger of the hierarchy. If specified, the name is +typically a dot-separated hierarchical name like ‘a’, ‘a.b’ or ‘a.b.c.d’. +Choice of these names is entirely up to the developer who is using logging.All calls to this function with a given name return the same logger instance. +This means that logger instances never need to be passed between different parts +of an application.

+

-

+

- +logging.getLoggerClass()¶ +

Return either the standard

+Loggerclass, or the last class passed to +setLoggerClass(). This function may be called from within a new class +definition, to ensure that installing a customizedLoggerclass will +not undo customizations already applied by other code. For example:++class MyLogger(logging.getLoggerClass()): + # ... override behaviour here +

-

+

- +logging.getLogRecordFactory()¶ +

Return a callable which is used to create a

+LogRecord.++New in version 3.2: This function has been provided, along with

+setLogRecordFactory(), +to allow developers more control over how theLogRecord+representing a logging event is constructed.See

+setLogRecordFactory()for more information about the how the +factory is called.

-

+

- +logging.debug(msg, *args, **kwargs)¶ +

Logs a message with level

+DEBUGon the root logger. The msg is the +message format string, and the args are the arguments which are merged into +msg using the string formatting operator. (Note that this means that you can +use keywords in the format string, together with a single dictionary argument.)There are three keyword arguments in kwargs which are inspected: exc_info +which, if it does not evaluate as false, causes exception information to be +added to the logging message. If an exception tuple (in the format returned by +

+sys.exc_info()) or an exception instance is provided, it is used; +otherwise,sys.exc_info()is called to get the exception information.The second optional keyword argument is stack_info, which defaults to +

+False. If true, stack information is added to the logging +message, including the actual logging call. Note that this is not the same +stack information as that displayed through specifying exc_info: The +former is stack frames from the bottom of the stack up to the logging call +in the current thread, whereas the latter is information about stack frames +which have been unwound, following an exception, while searching for +exception handlers.You can specify stack_info independently of exc_info, e.g. to just show +how you got to a certain point in your code, even when no exceptions were +raised. The stack frames are printed following a header line which says:

+++Stack (most recent call last): +

This mimics the

+Traceback (most recent call last):which is used when +displaying exception frames.The third optional keyword argument is extra which can be used to pass a +dictionary which is used to populate the __dict__ of the LogRecord created for +the logging event with user-defined attributes. These custom attributes can then +be used as you like. For example, they could be incorporated into logged +messages. For example:

+++FORMAT = '%(asctime)s %(clientip)-15s %(user)-8s %(message)s' +logging.basicConfig(format=FORMAT) +d = {'clientip': '192.168.0.1', 'user': 'fbloggs'} +logging.warning('Protocol problem: %s', 'connection reset', extra=d) +

would print something like:

+++2006-02-08 22:20:02,165 192.168.0.1 fbloggs Protocol problem: connection reset +

The keys in the dictionary passed in extra should not clash with the keys used +by the logging system. (See the

+Formatterdocumentation for more +information on which keys are used by the logging system.)If you choose to use these attributes in logged messages, you need to exercise +some care. In the above example, for instance, the

+Formatterhas been +set up with a format string which expects ‘clientip’ and ‘user’ in the attribute +dictionary of the LogRecord. If these are missing, the message will not be +logged because a string formatting exception will occur. So in this case, you +always need to pass the extra dictionary with these keys.While this might be annoying, this feature is intended for use in specialized +circumstances, such as multi-threaded servers where the same code executes in +many contexts, and interesting conditions which arise are dependent on this +context (such as remote client IP address and authenticated user name, in the +above example). In such circumstances, it is likely that specialized +

+Formatters would be used with particularHandlers.This function (as well as

+info(),warning(),error()and +critical()) will callbasicConfig()if the root logger doesn’t +have any handler attached.++Changed in version 3.2: The stack_info parameter was added.

+

-

+

- +logging.info(msg, *args, **kwargs)¶ +

Logs a message with level

+INFOon the root logger. The arguments are +interpreted as fordebug().

-

+

- +logging.warning(msg, *args, **kwargs)¶ +

Logs a message with level

+WARNINGon the root logger. The arguments +are interpreted as fordebug().++Note

+There is an obsolete function

+warnwhich is functionally +identical towarning. Aswarnis deprecated, please do not use +it - usewarninginstead.

-

+

- +logging.error(msg, *args, **kwargs)¶ +

Logs a message with level

+ERRORon the root logger. The arguments are +interpreted as fordebug().

-

+

- +logging.critical(msg, *args, **kwargs)¶ +

Logs a message with level

+CRITICALon the root logger. The arguments +are interpreted as fordebug().

-

+

- +logging.exception(msg, *args, **kwargs)¶ +

Logs a message with level

+ERRORon the root logger. The arguments are +interpreted as fordebug(). Exception info is added to the logging +message. This function should only be called from an exception handler.

-

+

- +logging.log(level, msg, *args, **kwargs)¶ +

Logs a message with level level on the root logger. The other arguments are +interpreted as for

+debug().

-

+

- +logging.disable(level=CRITICAL)¶ +

Provides an overriding level level for all loggers which takes precedence over +the logger’s own level. When the need arises to temporarily throttle logging +output down across the whole application, this function can be useful. Its +effect is to disable all logging calls of severity level and below, so that +if you call it with a value of INFO, then all INFO and DEBUG events would be +discarded, whereas those of severity WARNING and above would be processed +according to the logger’s effective level. If +

+logging.disable(logging.NOTSET)is called, it effectively removes this +overriding level, so that logging output again depends on the effective +levels of individual loggers.Note that if you have defined any custom logging level higher than +

+CRITICAL(this is not recommended), you won’t be able to rely on the +default value for the level parameter, but will have to explicitly supply a +suitable value.++Changed in version 3.7: The level parameter was defaulted to level

+CRITICAL. See +bpo-28524 for more information about this change.

-

+

- +logging.addLevelName(level, levelName)¶ +

Associates level level with text levelName in an internal dictionary, which is +used to map numeric levels to a textual representation, for example when a +

+Formatterformats a message. This function can also be used to define +your own levels. The only constraints are that all levels used must be +registered using this function, levels should be positive integers and they +should increase in increasing order of severity.++Note

+If you are thinking of defining your own levels, please see the +section on Custom Levels.

+

-

+

- +logging.getLevelNamesMapping()¶ +

Returns a mapping from level names to their corresponding logging levels. For example, the +string “CRITICAL” maps to

+CRITICAL. The returned mapping is copied from an internal +mapping on each call to this function.++New in version 3.11.

+

-

+

- +logging.getLevelName(level)¶ +

Returns the textual or numeric representation of logging level level.

+If level is one of the predefined levels

+CRITICAL,ERROR, +WARNING,INFOorDEBUGthen you get the +corresponding string. If you have associated levels with names using +addLevelName()then the name you have associated with level is +returned. If a numeric value corresponding to one of the defined levels is +passed in, the corresponding string representation is returned.The level parameter also accepts a string representation of the level such +as ‘INFO’. In such cases, this functions returns the corresponding numeric +value of the level.

+If no matching numeric or string value is passed in, the string +‘Level %s’ % level is returned.

+++Note

+Levels are internally integers (as they need to be compared in the +logging logic). This function is used to convert between an integer level +and the level name displayed in the formatted log output by means of the +

+%(levelname)sformat specifier (see LogRecord attributes), and +vice versa.++Changed in version 3.4: In Python versions earlier than 3.4, this function could also be passed a +text level, and would return the corresponding numeric value of the level. +This undocumented behaviour was considered a mistake, and was removed in +Python 3.4, but reinstated in 3.4.2 due to retain backward compatibility.

+

-

+

- +logging.makeLogRecord(attrdict)¶ +

Creates and returns a new

+LogRecordinstance whose attributes are +defined by attrdict. This function is useful for taking a pickled +LogRecordattribute dictionary, sent over a socket, and reconstituting +it as aLogRecordinstance at the receiving end.

-

+

- +logging.basicConfig(**kwargs)¶ +

Does basic configuration for the logging system by creating a +

+StreamHandlerwith a defaultFormatterand adding it to the +root logger. The functionsdebug(),info(),warning(), +error()andcritical()will callbasicConfig()automatically +if no handlers are defined for the root logger.This function does nothing if the root logger already has handlers +configured, unless the keyword argument force is set to

+True.++Note

+This function should be called from the main thread +before other threads are started. In versions of Python prior to +2.7.1 and 3.2, if this function is called from multiple threads, +it is possible (in rare circumstances) that a handler will be added +to the root logger more than once, leading to unexpected results +such as messages being duplicated in the log.

+The following keyword arguments are supported.

+++

+ + ++ + Format

Description

filename

Specifies that a

FileHandlerbe +created, using the specified filename, +rather than aStreamHandler.filemode

If filename is specified, open the file +in this mode. Defaults +to

'a'.format

Use the specified format string for the +handler. Defaults to attributes +

levelname,nameandmessage+separated by colons.datefmt

Use the specified date/time format, as +accepted by

time.strftime().style

If format is specified, use this style +for the format string. One of

'%', +'{'or'$'for printf-style, +str.format()or +string.Templaterespectively. +Defaults to'%'.level

Set the root logger level to the specified +level.

stream

Use the specified stream to initialize the +

StreamHandler. Note that this +argument is incompatible with filename - +if both are present, aValueErroris +raised.handlers

If specified, this should be an iterable of +already created handlers to add to the root +logger. Any handlers which don’t already +have a formatter set will be assigned the +default formatter created in this function. +Note that this argument is incompatible +with filename or stream - if both +are present, a

ValueErroris raised.force

If this keyword argument is specified as +true, any existing handlers attached to the +root logger are removed and closed, before +carrying out the configuration as specified +by the other arguments.

encoding

If this keyword argument is specified along +with filename, its value is used when the +

FileHandleris created, and thus +used when opening the output file.errors

If this keyword argument is specified along +with filename, its value is used when the +

FileHandleris created, and thus +used when opening the output file. If not +specified, the value ‘backslashreplace’ is +used. Note that ifNoneis specified, +it will be passed as such toopen(), +which means that it will be treated the +same as passing ‘errors’.++Changed in version 3.2: The style argument was added.

+++Changed in version 3.3: The handlers argument was added. Additional checks were added to +catch situations where incompatible arguments are specified (e.g. +handlers together with stream or filename, or stream +together with filename).

+++Changed in version 3.8: The force argument was added.

+++Changed in version 3.9: The encoding and errors arguments were added.

+

-

+

- +logging.shutdown()¶ +

Informs the logging system to perform an orderly shutdown by flushing and +closing all handlers. This should be called at application exit and no +further use of the logging system should be made after this call.

+When the logging module is imported, it registers this function as an exit +handler (see

+atexit), so normally there’s no need to do that +manually.

-

+

- +logging.setLoggerClass(klass)¶ +

Tells the logging system to use the class klass when instantiating a logger. +The class should define

+__init__()such that only a name argument is +required, and the__init__()should callLogger.__init__(). This +function is typically called before any loggers are instantiated by applications +which need to use custom logger behavior. After this call, as at any other +time, do not instantiate loggers directly using the subclass: continue to use +thelogging.getLogger()API to get your loggers.

-

+

- +logging.setLogRecordFactory(factory)¶ +

Set a callable which is used to create a

+LogRecord.-

+

- Parameters +

factory – The factory callable to be used to instantiate a log record.

+

+

++New in version 3.2: This function has been provided, along with

+getLogRecordFactory(), to +allow developers more control over how theLogRecordrepresenting +a logging event is constructed.The factory has the following signature:

+

+factory(name, level, fn, lno, msg, args, exc_info, func=None, sinfo=None, **kwargs)+

+-

+

- name +

The logger name.

+

+- level +

The logging level (numeric).

+

+- fn +

The full pathname of the file where the logging call was made.

+

+- lno +

The line number in the file where the logging call was made.

+

+- msg +

The logging message.

+

+- args +

The arguments for the logging message.

+

+- exc_info +

An exception tuple, or

+None.

+- func +

The name of the function or method which invoked the logging +call.

+

+- sinfo +

A stack traceback such as is provided by +

+traceback.print_stack(), showing the call hierarchy.

+- kwargs +

Additional keyword arguments.

+

+

Module-Level Attributes¶

+-

+

- +logging.lastResort¶ +

A “handler of last resort” is available through this attribute. This +is a

+StreamHandlerwriting tosys.stderrwith a level of +WARNING, and is used to handle logging events in the absence of any +logging configuration. The end result is to just print the message to +sys.stderr. This replaces the earlier error message saying that +“no handlers could be found for logger XYZ”. If you need the earlier +behaviour for some reason,lastResortcan be set toNone.++New in version 3.2.

+

Integration with the warnings module¶

+The captureWarnings() function can be used to integrate logging

+with the warnings module.

-

+

- +logging.captureWarnings(capture)¶ +

This function is used to turn the capture of warnings by logging on and +off.

+If capture is

+True, warnings issued by thewarningsmodule will +be redirected to the logging system. Specifically, a warning will be +formatted usingwarnings.formatwarning()and the resulting string +logged to a logger named'py.warnings'with a severity ofWARNING.If capture is

+False, the redirection of warnings to the logging system +will stop, and warnings will be redirected to their original destinations +(i.e. those in effect beforecaptureWarnings(True)was called).

See also

+-

+

- Module

logging.config Configuration API for the logging module.

+

+- Module

logging.handlers Useful handlers included with the logging module.

+

+- PEP 282 - A Logging System

The proposal which described this feature for inclusion in the Python standard +library.

+

+- Original Python logging package

This is the original source for the

+loggingpackage. The version of the +package available from this site is suitable for use with Python 1.5.2, 2.1.x +and 2.2.x, which do not include theloggingpackage in the standard +library.

+

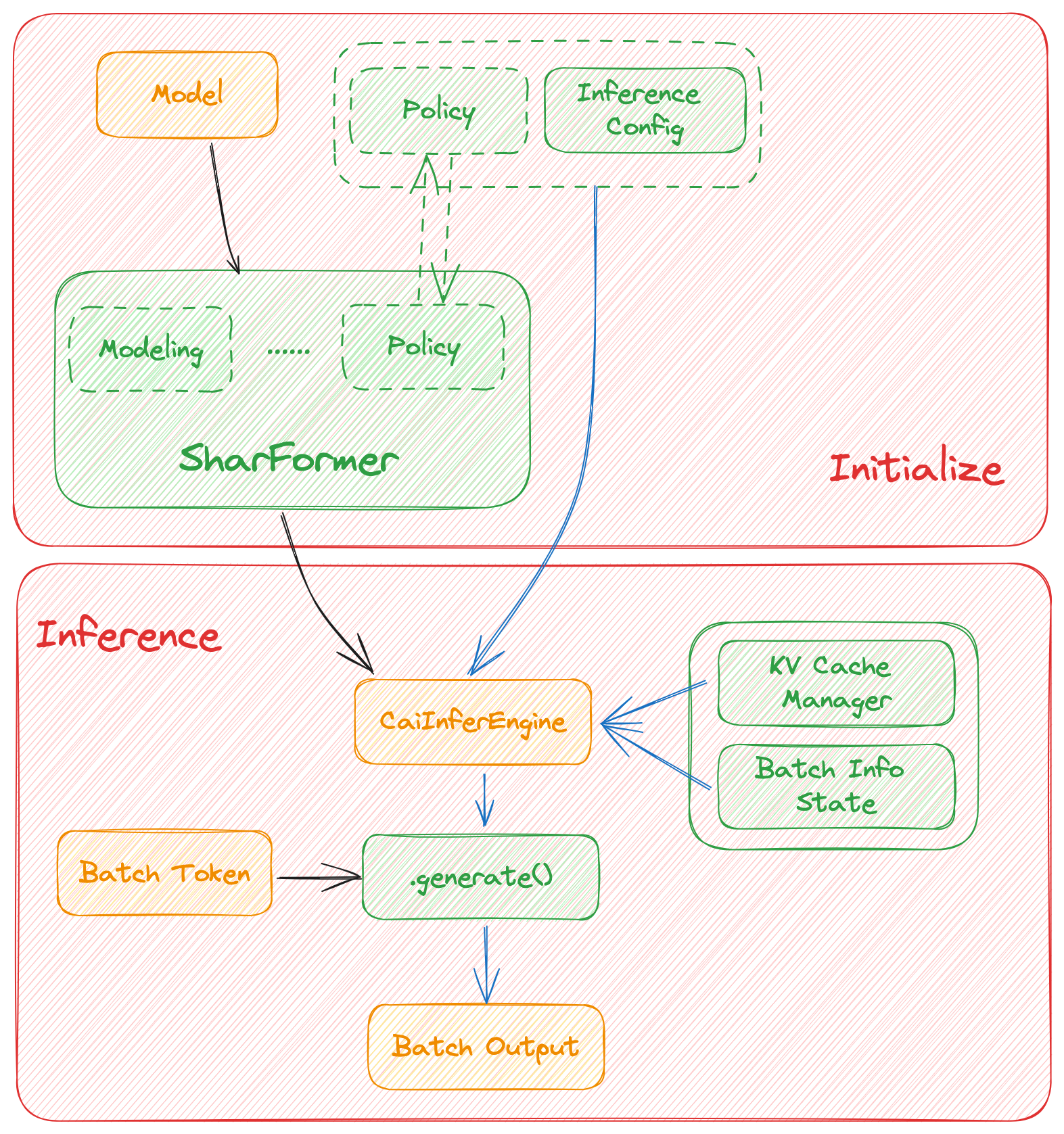

## Roadmap of our implementation

@@ -34,11 +43,15 @@ In this section we discuss how the colossal inference works and integrates with

- [x] policy

- [x] context forward

- [x] token forward

-- [ ] Replace the kernels with `faster-transformer` in token-forward stage

-- [ ] Support all models

+ - [x] support flash-decoding

+- [x] Support all models

- [x] Llama

+ - [x] Llama-2

- [x] Bloom

- - [ ] Chatglm2

+ - [x] Chatglm2

+- [x] Quantization

+ - [x] GPTQ

+ - [x] SmoothQuant

- [ ] Benchmarking for all models

## Get started

@@ -51,23 +64,19 @@ pip install -e .

### Requirements

-dependencies

+Install dependencies.

```bash

-pytorch= 1.13.1 (gpu)

-cuda>= 11.6

-transformers= 4.30.2

-triton==2.0.0.dev20221202

-# for install vllm, please use this branch to install https://github.com/tiandiao123/vllm/tree/setup_branch

-vllm

-# for install flash-attention, please use commit hash: 67ae6fd74b4bc99c36b2ce524cf139c35663793c

-flash-attention

-

-# install lightllm since we depend on lightllm triton kernels

-git clone https://github.com/ModelTC/lightllm

-git checkout 28c1267cfca536b7b4f28e921e03de735b003039

-cd lightllm

-pip3 install -e .

+pip install -r requirements/requirements-infer.txt

+

+# if you want use smoothquant quantization, please install torch-int

+git clone --recurse-submodules https://github.com/Guangxuan-Xiao/torch-int.git

+cd torch-int

+git checkout 65266db1eadba5ca78941b789803929e6e6c6856

+pip install -r requirements.txt

+source environment.sh

+bash build_cutlass.sh

+python setup.py install

```

### Docker

@@ -83,22 +92,60 @@ docker run -it --gpus all --name ANY_NAME -v $PWD:/workspace -w /workspace hpcai

cd /path/to/CollossalAI

pip install -e .

-# install lightllm

-git clone https://github.com/ModelTC/lightllm

-git checkout 28c1267cfca536b7b4f28e921e03de735b003039

-cd lightllm

-pip3 install -e .

-

-

```

-### Dive into fast-inference!

+## Usage

+### Quick start

example files are in

```bash

-cd colossalai.examples

-python xx

+cd ColossalAI/examples

+python hybrid_llama.py --path /path/to/model --tp_size 2 --pp_size 2 --batch_size 4 --max_input_size 32 --max_out_len 16 --micro_batch_size 2

+```

+

+

+

+### Example

+```python

+# import module

+from colossalai.inference import CaiInferEngine

+import colossalai

+from transformers import LlamaForCausalLM, LlamaTokenizer

+

+#launch distributed environment

+colossalai.launch_from_torch(config={})

+

+# load original model and tokenizer

+model = LlamaForCausalLM.from_pretrained("/path/to/model")

+tokenizer = LlamaTokenizer.from_pretrained("/path/to/model")

+

+# generate token ids

+input = ["Introduce a landmark in London","Introduce a landmark in Singapore"]

+data = tokenizer(input, return_tensors='pt')

+

+# set parallel parameters

+tp_size=2

+pp_size=2

+max_output_len=32

+micro_batch_size=1

+

+# initial inference engine

+engine = CaiInferEngine(

+ tp_size=tp_size,

+ pp_size=pp_size,

+ model=model,

+ max_output_len=max_output_len,

+ micro_batch_size=micro_batch_size,

+)

+

+# inference

+output = engine.generate(data)

+

+# get results

+if dist.get_rank() == 0:

+ assert len(output[0]) == max_output_len, f"{len(output)}, {max_output_len}"

+

```

## Performance

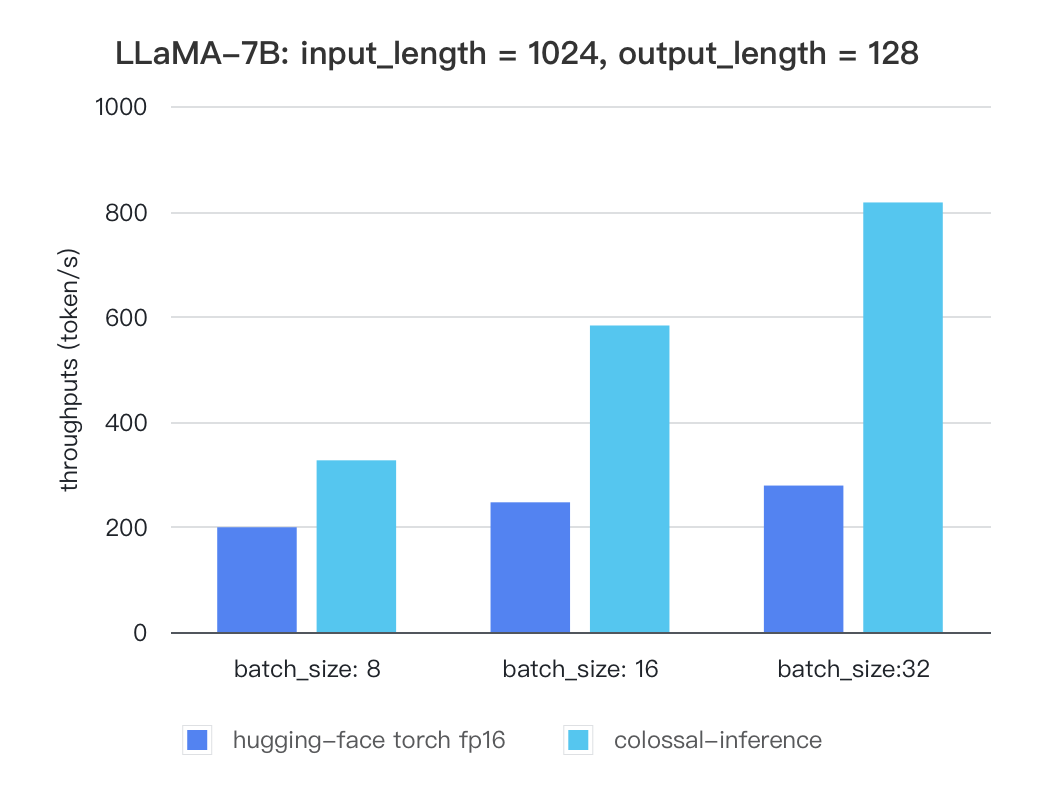

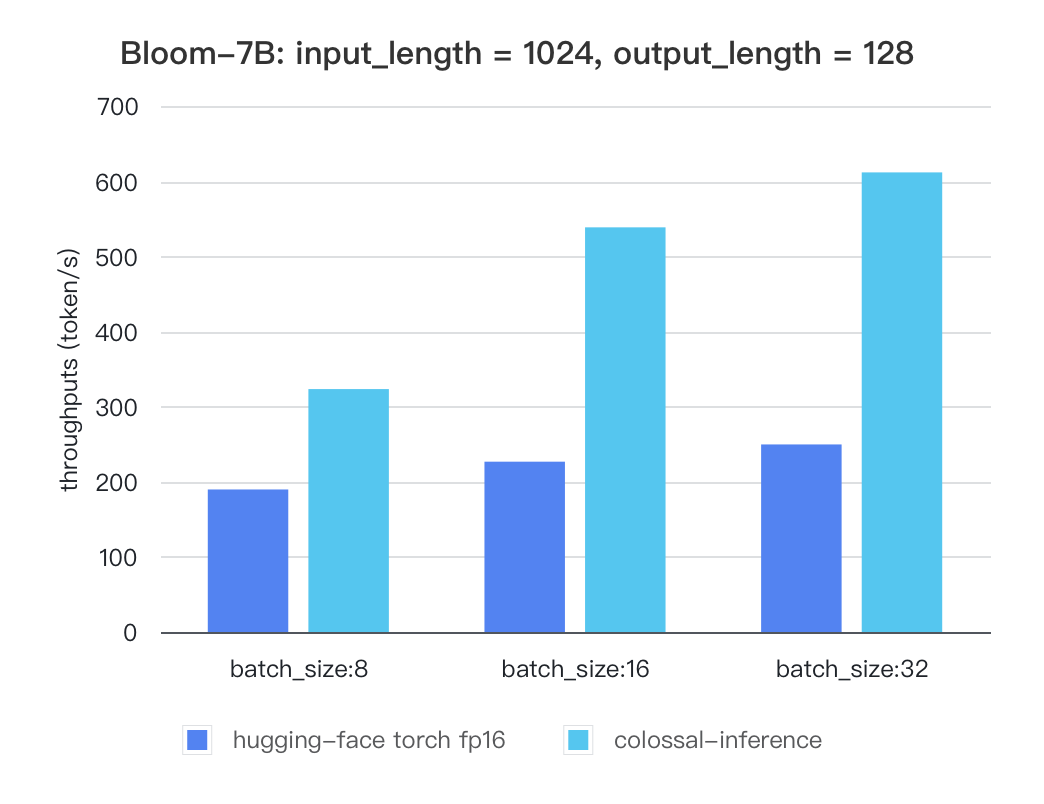

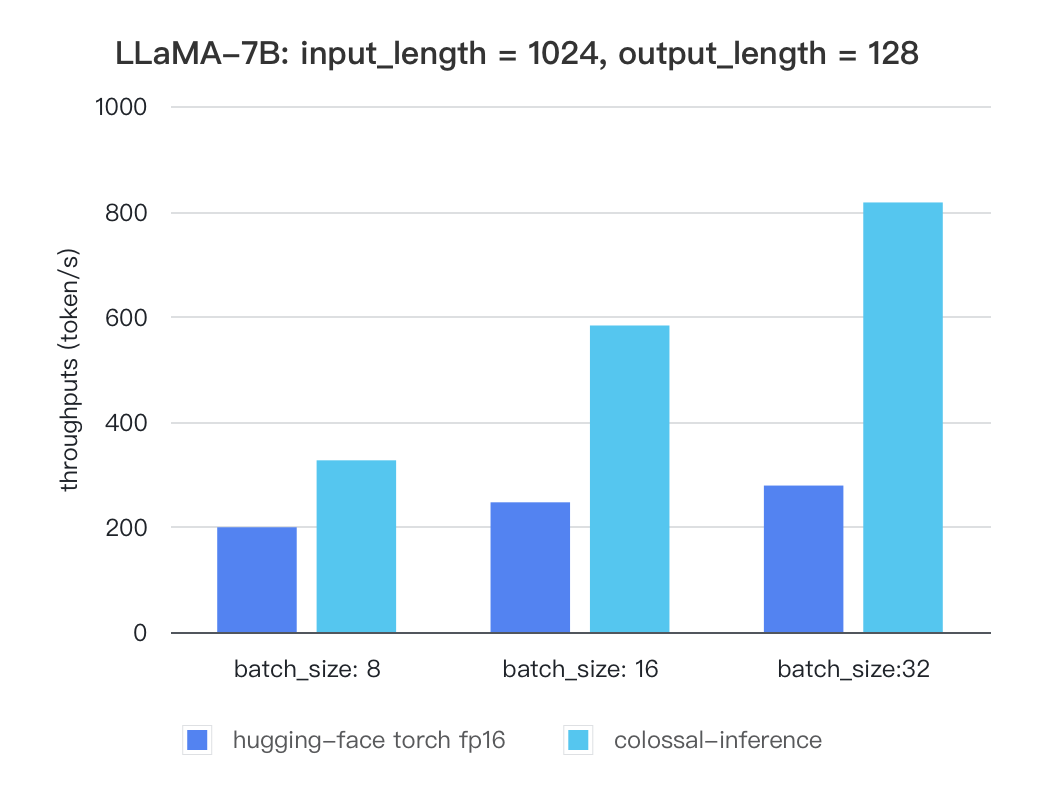

@@ -113,7 +160,9 @@ For various models, experiments were conducted using multiple batch sizes under

Currently the stats below are calculated based on A100 (single GPU), and we calculate token latency based on average values of context-forward and decoding forward process, which means we combine both of processes to calculate token generation times. We are actively developing new features and methods to further optimize the performance of LLM models. Please stay tuned.

-#### Llama

+### Tensor Parallelism Inference

+

+##### Llama

| batch_size | 8 | 16 | 32 |

| :---------------------: | :----: | :----: | :----: |

@@ -122,7 +171,7 @@ Currently the stats below are calculated based on A100 (single GPU), and we calc

-### Bloom

+#### Bloom

| batch_size | 8 | 16 | 32 |

| :---------------------: | :----: | :----: | :----: |

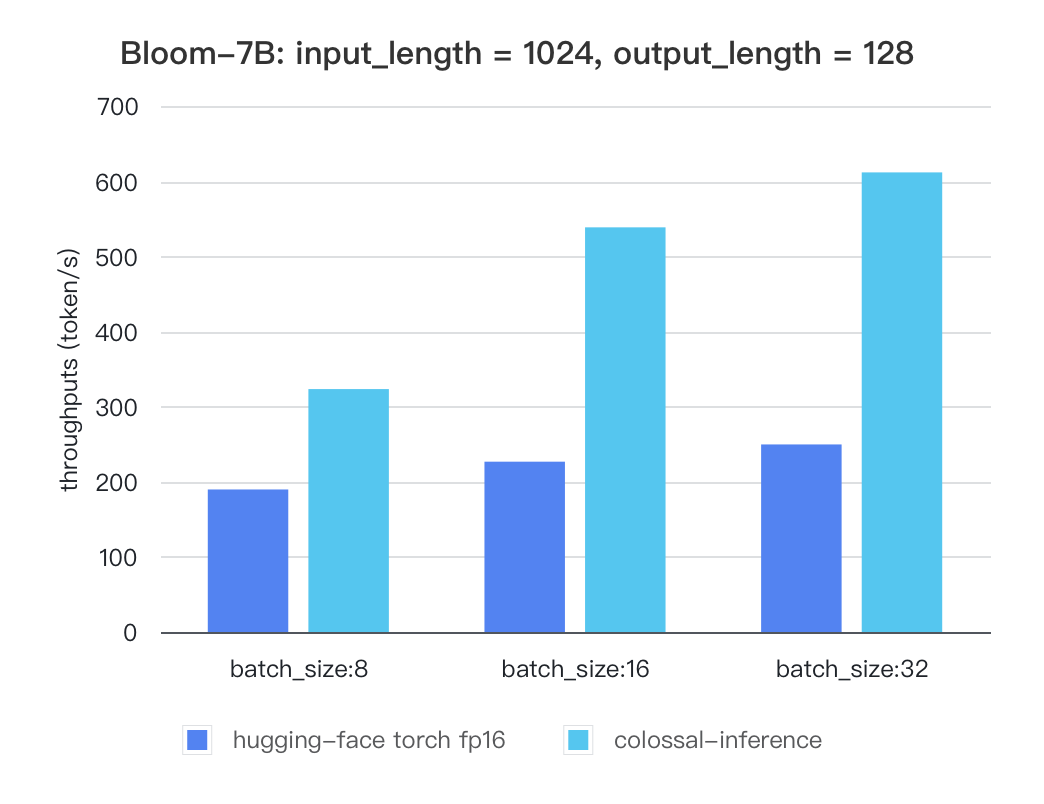

@@ -131,4 +180,50 @@ Currently the stats below are calculated based on A100 (single GPU), and we calc

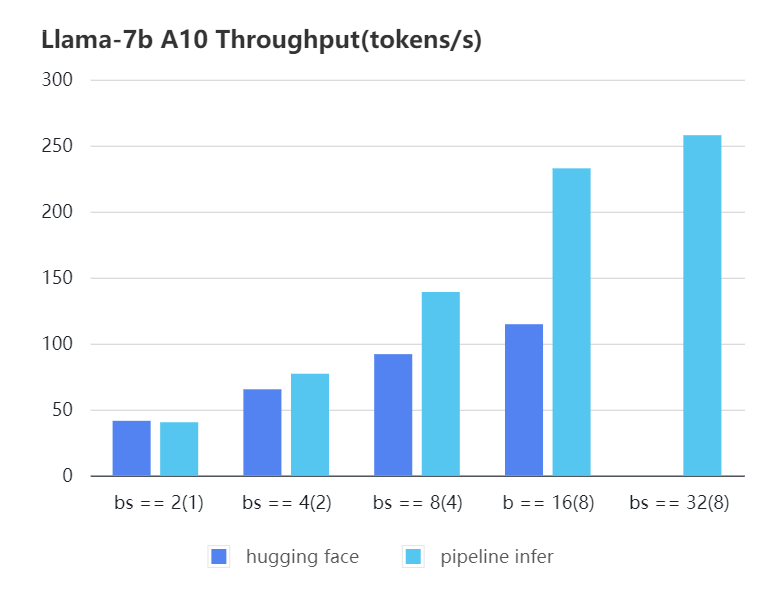

+

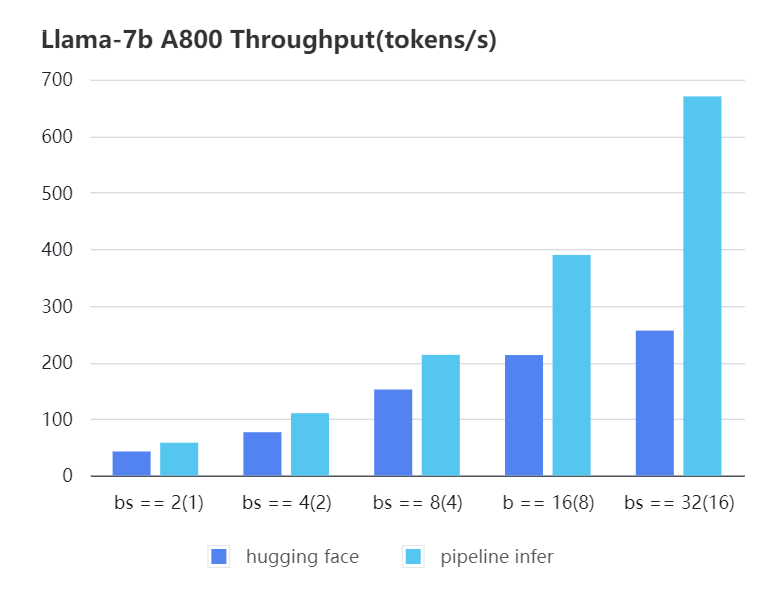

+### Pipline Parallelism Inference

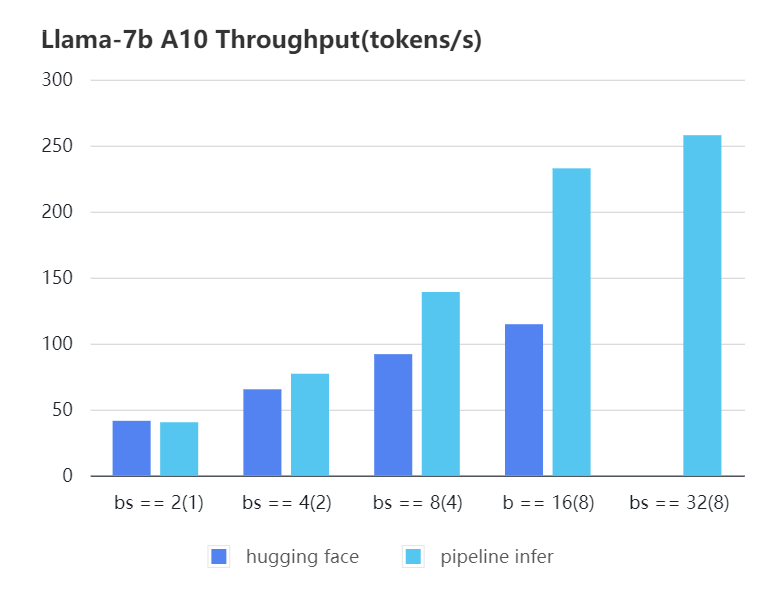

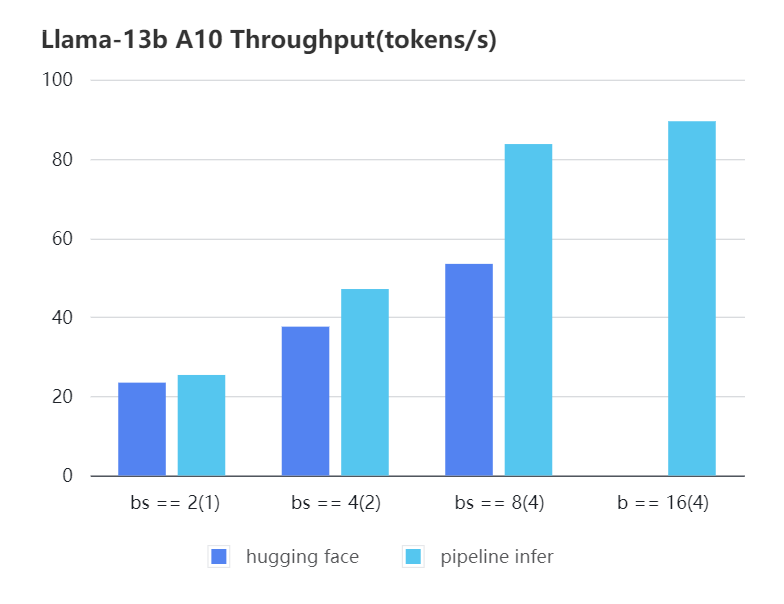

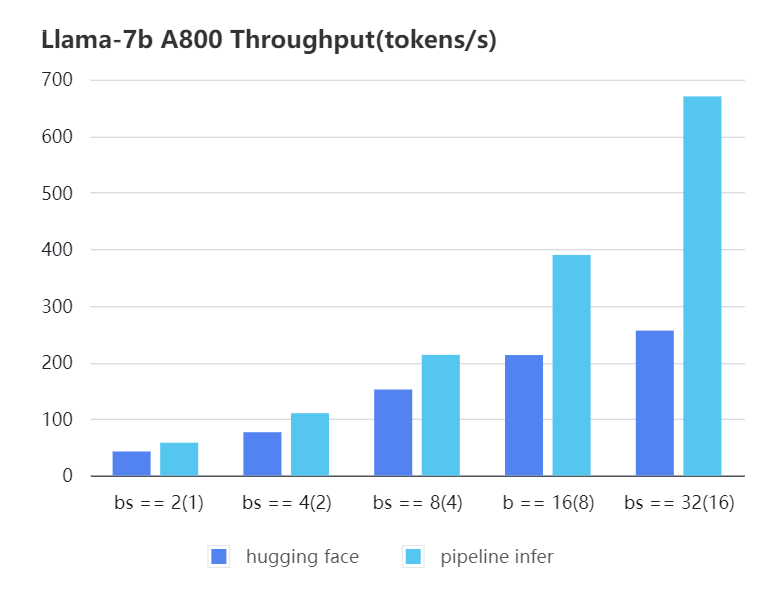

+We conducted multiple benchmark tests to evaluate the performance. We compared the inference `latency` and `throughputs` between `Pipeline Inference` and `hugging face` pipeline. The test environment is 2 * A10, 20G / 2 * A800, 80G. We set input length=1024, output length=128.

+

+

+#### A10 7b, fp16

+

+| batch_size(micro_batch size)| 2(1) | 4(2) | 8(4) | 16(8) | 32(8) | 32(16)|

+| :-------------------------: | :---: | :---:| :---: | :---: | :---: | :---: |

+| Pipeline Inference | 40.35 | 77.10| 139.03| 232.70| 257.81| OOM |

+| Hugging Face | 41.43 | 65.30| 91.93 | 114.62| OOM | OOM |

+

+

+

+

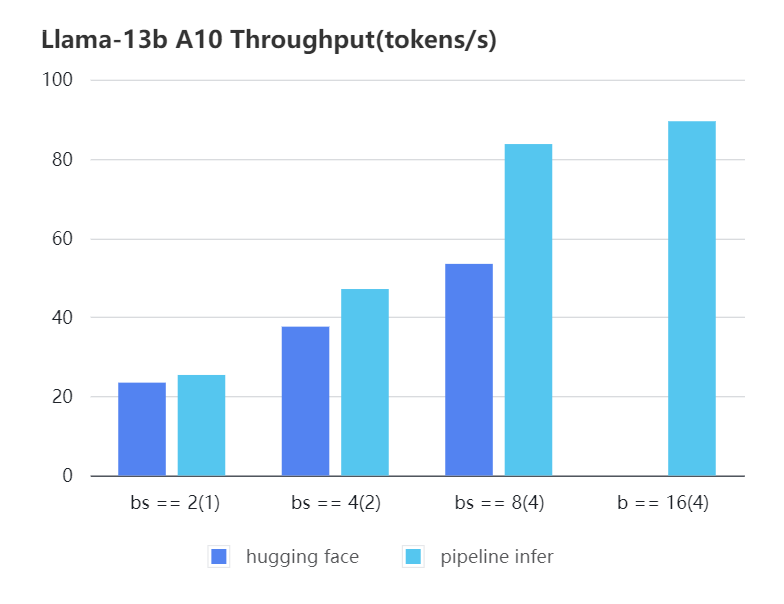

+#### A10 13b, fp16

+

+| batch_size(micro_batch size)| 2(1) | 4(2) | 8(4) | 16(4) |

+| :---: | :---: | :---: | :---: | :---: |

+| Pipeline Inference | 25.39 | 47.09 | 83.7 | 89.46 |

+| Hugging Face | 23.48 | 37.59 | 53.44 | OOM |

+

+

+

+

+#### A800 7b, fp16

+

+| batch_size(micro_batch size) | 2(1) | 4(2) | 8(4) | 16(8) | 32(16) |

+| :---: | :---: | :---: | :---: | :---: | :---: |

+| Pipeline Inference| 57.97 | 110.13 | 213.33 | 389.86 | 670.12 |

+| Hugging Face | 42.44 | 76.5 | 151.97 | 212.88 | 256.13 |

+

+

+

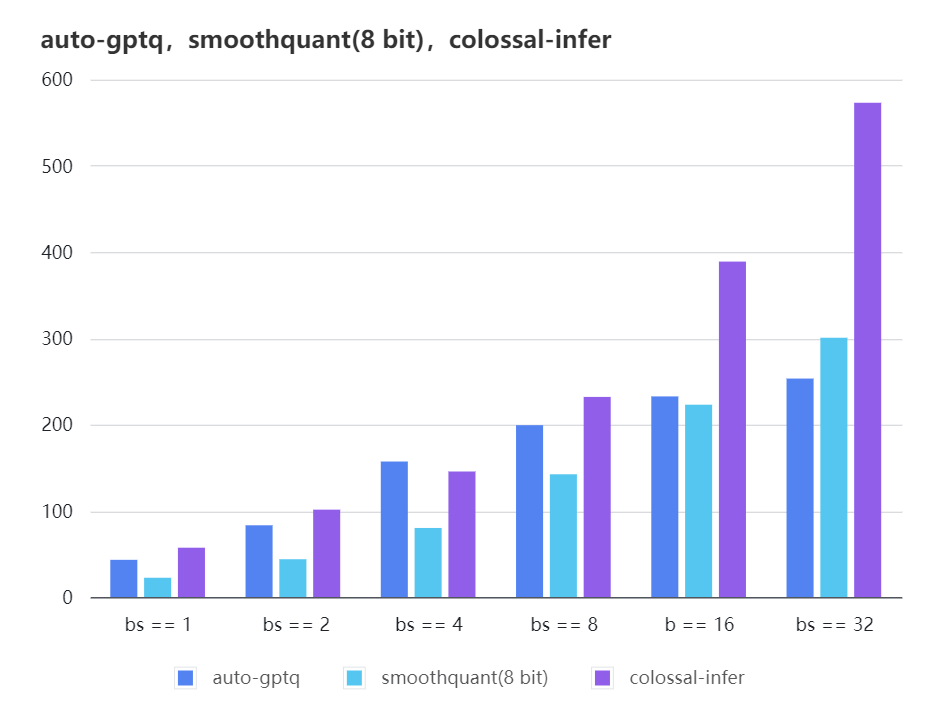

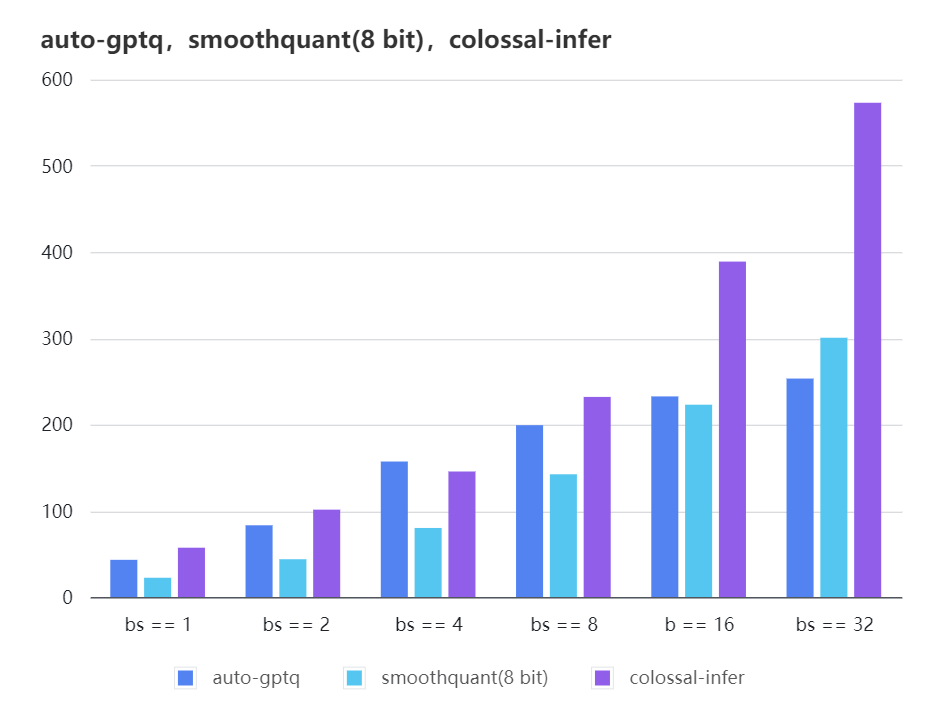

+### Quantization LLama

+

+| batch_size | 8 | 16 | 32 |

+| :---------------------: | :----: | :----: | :----: |

+| auto-gptq | 199.20 | 232.56 | 253.26 |

+| smooth-quant | 142.28 | 222.96 | 300.59 |

+| colossal-gptq | 231.98 | 388.87 | 573.03 |

+

+

+

+

+

The results of more models are coming soon!

diff --git a/colossalai/inference/__init__.py b/colossalai/inference/__init__.py

index 35891307e754..a95205efaa78 100644

--- a/colossalai/inference/__init__.py

+++ b/colossalai/inference/__init__.py

@@ -1,3 +1,4 @@

-from .pipeline import PPInferEngine

+from .engine import InferenceEngine

+from .engine.policies import BloomModelInferPolicy, ChatGLM2InferPolicy, LlamaModelInferPolicy

-__all__ = ["PPInferEngine"]

+__all__ = ["InferenceEngine", "LlamaModelInferPolicy", "BloomModelInferPolicy", "ChatGLM2InferPolicy"]

diff --git a/colossalai/inference/engine/__init__.py b/colossalai/inference/engine/__init__.py

new file mode 100644

index 000000000000..6e60da695a22

--- /dev/null

+++ b/colossalai/inference/engine/__init__.py

@@ -0,0 +1,3 @@

+from .engine import InferenceEngine

+

+__all__ = ["InferenceEngine"]

diff --git a/colossalai/inference/engine/engine.py b/colossalai/inference/engine/engine.py

new file mode 100644

index 000000000000..61da5858aa86

--- /dev/null

+++ b/colossalai/inference/engine/engine.py

@@ -0,0 +1,195 @@

+from typing import Union

+

+import torch

+import torch.distributed as dist

+import torch.nn as nn

+from transformers.utils import logging

+

+from colossalai.cluster import ProcessGroupMesh

+from colossalai.pipeline.schedule.generate import GenerateSchedule

+from colossalai.pipeline.stage_manager import PipelineStageManager

+from colossalai.shardformer import ShardConfig, ShardFormer

+from colossalai.shardformer.policies.base_policy import Policy

+

+from ..kv_cache import MemoryManager

+from .microbatch_manager import MicroBatchManager

+from .policies import model_policy_map

+

+PP_AXIS, TP_AXIS = 0, 1

+

+_supported_models = [

+ "LlamaForCausalLM",

+ "BloomForCausalLM",

+ "LlamaGPTQForCausalLM",

+ "SmoothLlamaForCausalLM",

+ "ChatGLMForConditionalGeneration",

+]

+

+

+class InferenceEngine:

+ """

+ InferenceEngine is a class that handles the pipeline parallel inference.

+

+ Args:

+ tp_size (int): the size of tensor parallelism.

+ pp_size (int): the size of pipeline parallelism.

+ dtype (str): the data type of the model, should be one of 'fp16', 'fp32', 'bf16'.

+ model (`nn.Module`): the model not in pipeline style, and will be modified with `ShardFormer`.

+ model_policy (`colossalai.shardformer.policies.base_policy.Policy`): the policy to shardformer model. It will be determined by the model type if not provided.

+ micro_batch_size (int): the micro batch size. Only useful when `pp_size` > 1.

+ micro_batch_buffer_size (int): the buffer size for micro batch. Normally, it should be the same as the number of pipeline stages.

+ max_batch_size (int): the maximum batch size.

+ max_input_len (int): the maximum input length.

+ max_output_len (int): the maximum output length.

+ quant (str): the quantization method, should be one of 'smoothquant', 'gptq', None.

+ verbose (bool): whether to return the time cost of each step.

+

+ """

+

+ def __init__(

+ self,

+ tp_size: int = 1,

+ pp_size: int = 1,

+ dtype: str = "fp16",

+ model: nn.Module = None,

+ model_policy: Policy = None,

+ micro_batch_size: int = 1,

+ micro_batch_buffer_size: int = None,

+ max_batch_size: int = 4,

+ max_input_len: int = 32,

+ max_output_len: int = 32,

+ quant: str = None,

+ verbose: bool = False,

+ # TODO: implement early_stopping, and various gerneration options

+ early_stopping: bool = False,

+ do_sample: bool = False,

+ num_beams: int = 1,

+ ) -> None:

+ if quant == "gptq":

+ from ..quant.gptq import GPTQManager

+

+ self.gptq_manager = GPTQManager(model.quantize_config, max_input_len=max_input_len)

+ model = model.model

+ elif quant == "smoothquant":

+ model = model.model

+

+ assert model.__class__.__name__ in _supported_models, f"Model {model.__class__.__name__} is not supported."

+ assert (

+ tp_size * pp_size == dist.get_world_size()

+ ), f"TP size({tp_size}) * PP size({pp_size}) should be equal to the global world size ({dist.get_world_size()})"

+ assert model, "Model should be provided."

+ assert dtype in ["fp16", "fp32", "bf16"], "dtype should be one of 'fp16', 'fp32', 'bf16'"

+

+ assert max_batch_size <= 64, "Max batch size exceeds the constraint"

+ assert max_input_len + max_output_len <= 4096, "Max length exceeds the constraint"

+ assert quant in ["smoothquant", "gptq", None], "quant should be one of 'smoothquant', 'gptq'"

+ self.pp_size = pp_size

+ self.tp_size = tp_size

+ self.quant = quant

+

+ logger = logging.get_logger(__name__)

+ if quant == "smoothquant" and dtype != "fp32":

+ dtype = "fp32"

+ logger.warning_once("Warning: smoothquant only support fp32 and int8 mix precision. set dtype to fp32")

+

+ if dtype == "fp16":

+ self.dtype = torch.float16

+ model.half()

+ elif dtype == "bf16":

+ self.dtype = torch.bfloat16

+ model.to(torch.bfloat16)

+ else:

+ self.dtype = torch.float32

+

+ if model_policy is None:

+ model_policy = model_policy_map[model.config.model_type]()

+

+ # Init pg mesh

+ pg_mesh = ProcessGroupMesh(pp_size, tp_size)

+

+ stage_manager = PipelineStageManager(pg_mesh, PP_AXIS, True if pp_size * tp_size > 1 else False)

+ self.cache_manager_list = [

+ self._init_manager(model, max_batch_size, max_input_len, max_output_len)

+ for _ in range(micro_batch_buffer_size or pp_size)

+ ]

+ self.mb_manager = MicroBatchManager(

+ stage_manager.stage,

+ micro_batch_size,

+ micro_batch_buffer_size or pp_size,

+ max_input_len,

+ max_output_len,

+ self.cache_manager_list,

+ )

+ self.verbose = verbose

+ self.schedule = GenerateSchedule(stage_manager, self.mb_manager, verbose)

+

+ self.model = self._shardformer(

+ model, model_policy, stage_manager, pg_mesh.get_group_along_axis(TP_AXIS) if pp_size * tp_size > 1 else None

+ )

+ if quant == "gptq":

+ self.gptq_manager.post_init_gptq_buffer(self.model)

+

+ def generate(self, input_list: Union[list, dict]):

+ """

+ Args:

+ input_list (list): a list of input data, each element is a `BatchEncoding` or `dict`.

+

+ Returns:

+ out (list): a list of output data, each element is a list of token.

+ timestamp (float): the time cost of the inference, only return when verbose is `True`.

+ """

+

+ out, timestamp = self.schedule.generate_step(self.model, iter([input_list]))

+ if self.verbose:

+ return out, timestamp

+ else:

+ return out

+

+ def _shardformer(self, model, model_policy, stage_manager, tp_group):

+ shardconfig = ShardConfig(

+ tensor_parallel_process_group=tp_group,

+ pipeline_stage_manager=stage_manager,

+ enable_tensor_parallelism=(self.tp_size > 1),

+ enable_fused_normalization=False,

+ enable_all_optimization=False,

+ enable_flash_attention=False,

+ enable_jit_fused=False,

+ enable_sequence_parallelism=False,

+ extra_kwargs={"quant": self.quant},

+ )

+ shardformer = ShardFormer(shard_config=shardconfig)

+ shard_model, _ = shardformer.optimize(model, model_policy)

+ return shard_model.cuda()

+

+ def _init_manager(self, model, max_batch_size: int, max_input_len: int, max_output_len: int) -> None:

+ max_total_token_num = max_batch_size * (max_input_len + max_output_len)

+ if model.config.model_type == "llama":

+ head_dim = model.config.hidden_size // model.config.num_attention_heads

+ head_num = model.config.num_key_value_heads // self.tp_size

+ num_hidden_layers = (

+ model.config.num_hidden_layers

+ if hasattr(model.config, "num_hidden_layers")

+ else model.config.num_layers

+ )

+ layer_num = num_hidden_layers // self.pp_size

+ elif model.config.model_type == "bloom":

+ head_dim = model.config.hidden_size // model.config.n_head

+ head_num = model.config.n_head // self.tp_size

+ num_hidden_layers = model.config.n_layer

+ layer_num = num_hidden_layers // self.pp_size

+ elif model.config.model_type == "chatglm":

+ head_dim = model.config.hidden_size // model.config.num_attention_heads

+ if model.config.multi_query_attention:

+ head_num = model.config.multi_query_group_num // self.tp_size

+ else:

+ head_num = model.config.num_attention_heads // self.tp_size

+ num_hidden_layers = model.config.num_layers

+ layer_num = num_hidden_layers // self.pp_size

+ else:

+ raise NotImplementedError("Only support llama, bloom and chatglm model.")

+

+ if self.quant == "smoothquant":

+ cache_manager = MemoryManager(max_total_token_num, torch.int8, head_num, head_dim, layer_num)

+ else:

+ cache_manager = MemoryManager(max_total_token_num, self.dtype, head_num, head_dim, layer_num)

+ return cache_manager

diff --git a/colossalai/inference/pipeline/microbatch_manager.py b/colossalai/inference/engine/microbatch_manager.py

similarity index 72%

rename from colossalai/inference/pipeline/microbatch_manager.py

rename to colossalai/inference/engine/microbatch_manager.py

index 49d1bf3f42cb..d698c89f9936 100644

--- a/colossalai/inference/pipeline/microbatch_manager.py

+++ b/colossalai/inference/engine/microbatch_manager.py

@@ -1,8 +1,10 @@

from enum import Enum

-from typing import Dict, Tuple

+from typing import Dict

import torch

+from ..kv_cache import BatchInferState, MemoryManager

+

__all__ = "MicroBatchManager"

@@ -27,21 +29,19 @@ class MicroBatchDescription:

def __init__(

self,

inputs_dict: Dict[str, torch.Tensor],

- output_dict: Dict[str, torch.Tensor],

- new_length: int,

+ max_input_len: int,

+ max_output_len: int,

+ cache_manager: MemoryManager,

) -> None:

- assert output_dict.get("hidden_states") is not None

- self.mb_length = output_dict["hidden_states"].shape[-2]

- self.target_length = self.mb_length + new_length

- self.kv_cache = ()

-

- def update(self, output_dict: Dict[str, torch.Tensor] = None, new_token: torch.Tensor = None):

- if output_dict is not None:

- self._update_kvcache(output_dict["past_key_values"])

+ self.mb_length = inputs_dict["input_ids"].shape[-1]

+ self.target_length = self.mb_length + max_output_len

+ self.infer_state = BatchInferState.init_from_batch(

+ batch=inputs_dict, max_input_len=max_input_len, max_output_len=max_output_len, cache_manager=cache_manager

+ )

+ # print(f"[init] {inputs_dict}, {max_input_len}, {max_output_len}, {cache_manager}, {self.infer_state}")

- def _update_kvcache(self, kv_cache: Tuple):

- assert type(kv_cache) == tuple

- self.kv_cache = kv_cache

+ def update(self, *args, **kwargs):

+ pass

@property

def state(self):

@@ -75,22 +75,24 @@ class HeadMicroBatchDescription(MicroBatchDescription):

Args:

inputs_dict (Dict[str, torch.Tensor]): the inputs of current stage. The key should have `input_ids` and `attention_mask`.

output_dict (Dict[str, torch.Tensor]): the outputs of previous stage. The key should have `hidden_states` and `past_key_values`.

- new_length (int): the new length of the input sequence.

"""

def __init__(

- self, inputs_dict: Dict[str, torch.Tensor], output_dict: Dict[str, torch.Tensor], new_length: int

+ self,

+ inputs_dict: Dict[str, torch.Tensor],

+ max_input_len: int,

+ max_output_len: int,

+ cache_manager: MemoryManager,

) -> None:

- super().__init__(inputs_dict, output_dict, new_length)

+ super().__init__(inputs_dict, max_input_len, max_output_len, cache_manager)

assert inputs_dict is not None

assert inputs_dict.get("input_ids") is not None and inputs_dict.get("attention_mask") is not None

self.input_ids = inputs_dict["input_ids"]

self.attn_mask = inputs_dict["attention_mask"]

self.new_tokens = None

- def update(self, output_dict: Dict[str, torch.Tensor] = None, new_token: torch.Tensor = None):

- super().update(output_dict, new_token)

+ def update(self, new_token: torch.Tensor = None):

if new_token is not None:

self._update_newtokens(new_token)

if self.state is not Status.DONE and new_token is not None:

@@ -125,16 +127,16 @@ class BodyMicroBatchDescription(MicroBatchDescription):

Args:

inputs_dict (Dict[str, torch.Tensor]): will always be `None`. Other stages only receive hiddenstates from previous stage.

- output_dict (Dict[str, torch.Tensor]): the outputs of previous stage. The key should have `hidden_states` and `past_key_values`.

"""

def __init__(

- self, inputs_dict: Dict[str, torch.Tensor], output_dict: Dict[str, torch.Tensor], new_length: int

+ self,

+ inputs_dict: Dict[str, torch.Tensor],

+ max_input_len: int,

+ max_output_len: int,

+ cache_manager: MemoryManager,

) -> None:

- super().__init__(inputs_dict, output_dict, new_length)

-

- def update(self, output_dict: Dict[str, torch.Tensor] = None, new_token: torch.Tensor = None):