diff --git a/.github/workflows/README.md b/.github/workflows/README.md

index 9634b84b8ff8..a46d8b1c24d0 100644

--- a/.github/workflows/README.md

+++ b/.github/workflows/README.md

@@ -30,7 +30,7 @@ In the section below, we will dive into the details of different workflows avail

Refer to this [documentation](https://docs.github.com/en/actions/managing-workflow-runs/manually-running-a-workflow) on how to manually trigger a workflow.

I will provide the details of each workflow below.

-**A PR which changes the `version.txt` is considered as a release PR in the following coontext.**

+**A PR which changes the `version.txt` is considered as a release PR in the following context.**

### Code Style Check

@@ -58,15 +58,15 @@ I will provide the details of each workflow below.

#### Example Test on Dispatch

This workflow is triggered by manually dispatching the workflow. It has the following input parameters:

-- `example_directory`: the example directory to test. Multiple directories are supported and must be separated b$$y comma. For example, language/gpt, images/vit. Simply input language or simply gpt does not work.

+- `example_directory`: the example directory to test. Multiple directories are supported and must be separated by comma. For example, language/gpt, images/vit. Simply input language or simply gpt does not work.

### Compatibility Test

| Workflow Name | File name | Description |

| -------------------------------- | ------------------------------------ | -------------------------------------------------------------------------------------------------------------------- |

-| `Compatibility Test on PR` | `compatibility_test_on_pr.yml` | Check Colossal-AI's compatiblity when `version.txt` is changed in a PR. |

-| `Compatibility Test on Schedule` | `compatibility_test_on_schedule.yml` | This workflow will check the compatiblity of Colossal-AI against PyTorch specified in `.compatibility` every Sunday. |

-| `Compatiblity Test on Dispatch` | `compatibility_test_on_dispatch.yml` | Test PyTorch Compatibility manually. |

+| `Compatibility Test on PR` | `compatibility_test_on_pr.yml` | Check Colossal-AI's compatibility when `version.txt` is changed in a PR. |

+| `Compatibility Test on Schedule` | `compatibility_test_on_schedule.yml` | This workflow will check the compatibility of Colossal-AI against PyTorch specified in `.compatibility` every Sunday. |

+| `Compatibility Test on Dispatch` | `compatibility_test_on_dispatch.yml` | Test PyTorch Compatibility manually. |

#### Compatibility Test on Dispatch

@@ -74,7 +74,7 @@ This workflow is triggered by manually dispatching the workflow. It has the foll

- `torch version`: torch version to test against, multiple versions are supported but must be separated by comma. The default value is all, which will test all available torch versions listed in this [repository](https://github.com/hpcaitech/public_assets/tree/main/colossalai/torch_build/torch_wheels).

- `cuda version`: cuda versions to test against, multiple versions are supported but must be separated by comma. The CUDA versions must be present in our [DockerHub repository](https://hub.docker.com/r/hpcaitech/cuda-conda).

-> It only test the compatiblity of the main branch

+> It only tests the compatibility of the main branch.

### Release

@@ -113,7 +113,7 @@ This `.compatibility` file is to tell GitHub Actions which PyTorch and CUDA vers

2. `.cuda_ext.json`

-This file controls which CUDA versions will be checked against CUDA extenson built. You can add a new entry according to the json schema below to check the AOT build of PyTorch extensions before release.

+This file controls which CUDA versions will be checked against CUDA extension built. You can add a new entry according to the json schema below to check the AOT build of PyTorch extensions before release.

```json

{

@@ -144,7 +144,7 @@ This file controls which CUDA versions will be checked against CUDA extenson bui

- [x] check on PR

- [x] regular check

- [x] manual dispatch

-- [x] compatiblity check

+- [x] compatibility check

- [x] check on PR

- [x] manual dispatch

- [x] auto test when release

diff --git a/.github/workflows/run_chatgpt_examples.yml b/.github/workflows/run_chatgpt_examples.yml

index 51bb9d074644..1d8240ad4631 100644

--- a/.github/workflows/run_chatgpt_examples.yml

+++ b/.github/workflows/run_chatgpt_examples.yml

@@ -4,10 +4,10 @@ on:

pull_request:

types: [synchronize, opened, reopened]

paths:

- - 'applications/ChatGPT/chatgpt/**'

- - 'applications/ChatGPT/requirements.txt'

- - 'applications/ChatGPT/setup.py'

- - 'applications/ChatGPT/examples/**'

+ - 'applications/Chat/coati/**'

+ - 'applications/Chat/requirements.txt'

+ - 'applications/Chat/setup.py'

+ - 'applications/Chat/examples/**'

jobs:

@@ -16,7 +16,7 @@ jobs:

runs-on: [self-hosted, gpu]

container:

image: hpcaitech/pytorch-cuda:1.12.0-11.3.0

- options: --gpus all --rm -v /data/scratch/chatgpt:/data/scratch/chatgpt

+ options: --gpus all --rm -v /data/scratch/github_actions/chat:/data/scratch/github_actions/chat

timeout-minutes: 30

defaults:

run:

@@ -27,17 +27,26 @@ jobs:

- name: Install ColossalAI and ChatGPT

run: |

- pip install -v .

- cd applications/ChatGPT

+ pip install -e .

+ cd applications/Chat

pip install -v .

pip install -r examples/requirements.txt

+ - name: Install Transformers

+ run: |

+ cd applications/Chat

+ git clone https://github.com/hpcaitech/transformers

+ cd transformers

+ pip install -v .

+

- name: Execute Examples

run: |

- cd applications/ChatGPT

+ cd applications/Chat

rm -rf ~/.cache/colossalai

./examples/test_ci.sh

env:

NCCL_SHM_DISABLE: 1

MAX_JOBS: 8

- PROMPT_PATH: /data/scratch/chatgpt/prompts.csv

+ SFT_DATASET: /data/scratch/github_actions/chat/data.json

+ PROMPT_PATH: /data/scratch/github_actions/chat/prompts_en.jsonl

+ PRETRAIN_DATASET: /data/scratch/github_actions/chat/alpaca_data.json

diff --git a/applications/Chat/.gitignore b/applications/Chat/.gitignore

index 1ec5f53a8b8d..2b9b4f345d0f 100644

--- a/applications/Chat/.gitignore

+++ b/applications/Chat/.gitignore

@@ -144,3 +144,5 @@ docs/.build

# wandb log

example/wandb/

+

+examples/awesome-chatgpt-prompts/

\ No newline at end of file

diff --git a/applications/Chat/README.md b/applications/Chat/README.md

index 8f22084953ba..dea562c4d2ad 100644

--- a/applications/Chat/README.md

+++ b/applications/Chat/README.md

@@ -15,19 +15,18 @@

- [Install the Transformers](#install-the-transformers)

- [How to use?](#how-to-use)

- [Supervised datasets collection](#supervised-datasets-collection)

- - [Stage1 - Supervised instructs tuning](#stage1---supervised-instructs-tuning)

- - [Stage2 - Training reward model](#stage2---training-reward-model)

- - [Stage3 - Training model with reinforcement learning by human feedback](#stage3---training-model-with-reinforcement-learning-by-human-feedback)

- - [Inference - After Training](#inference---after-training)

- - [8-bit setup](#8-bit-setup)

- - [4-bit setup](#4-bit-setup)

+ - [RLHF Training Stage1 - Supervised instructs tuning](#RLHF-training-stage1---supervised-instructs-tuning)

+ - [RLHF Training Stage2 - Training reward model](#RLHF-training-stage2---training-reward-model)

+ - [RLHF Training Stage3 - Training model with reinforcement learning by human feedback](#RLHF-training-stage3---training-model-with-reinforcement-learning-by-human-feedback)

+ - [Inference Quantization and Serving - After Training](#inference-quantization-and-serving---after-training)

- [Coati7B examples](#coati7b-examples)

- [Generation](#generation)

- [Open QA](#open-qa)

- - [Limitation for LLaMA-finetuned models](#limitation-for-llama-finetuned-models)

- - [Limitation of dataset](#limitation-of-dataset)

+ - [Limitation for LLaMA-finetuned models](#limitation)

+ - [Limitation of dataset](#limitation)

- [FAQ](#faq)

- - [How to save/load checkpoint](#how-to-saveload-checkpoint)

+ - [How to save/load checkpoint](#faq)

+ - [How to train with limited resources](#faq)

- [The Plan](#the-plan)

- [Real-time progress](#real-time-progress)

- [Invitation to open-source contribution](#invitation-to-open-source-contribution)

@@ -82,6 +81,8 @@ Due to resource constraints, we will only provide this service from 29th Mar 202

```shell

conda create -n coati

conda activate coati

+git clone https://github.com/hpcaitech/ColossalAI.git

+cd ColossalAI/applications/Chat

pip install .

```

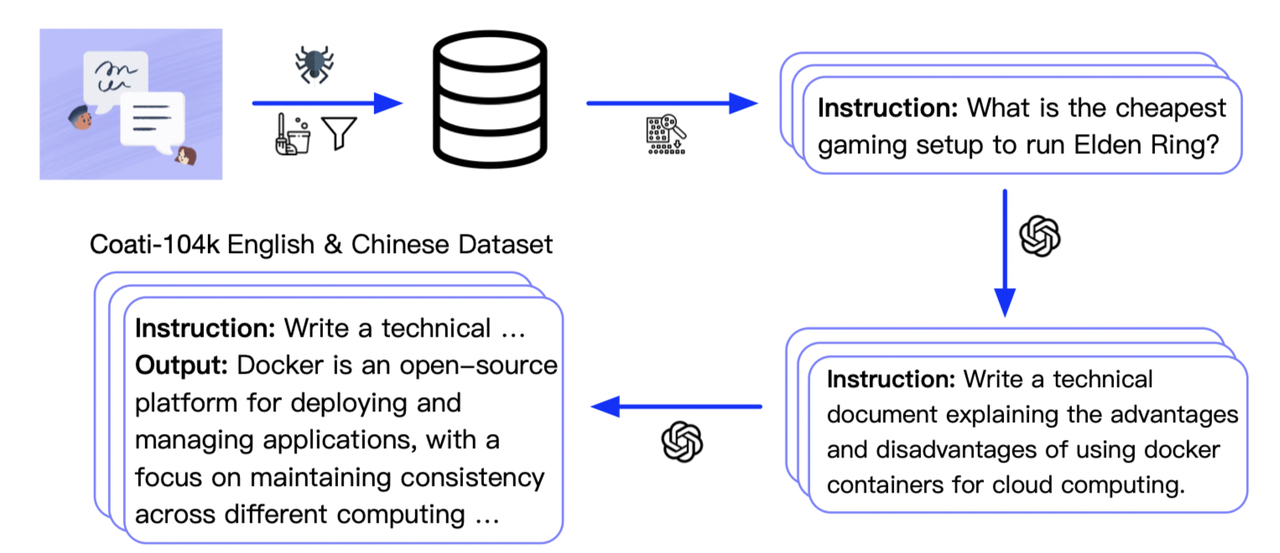

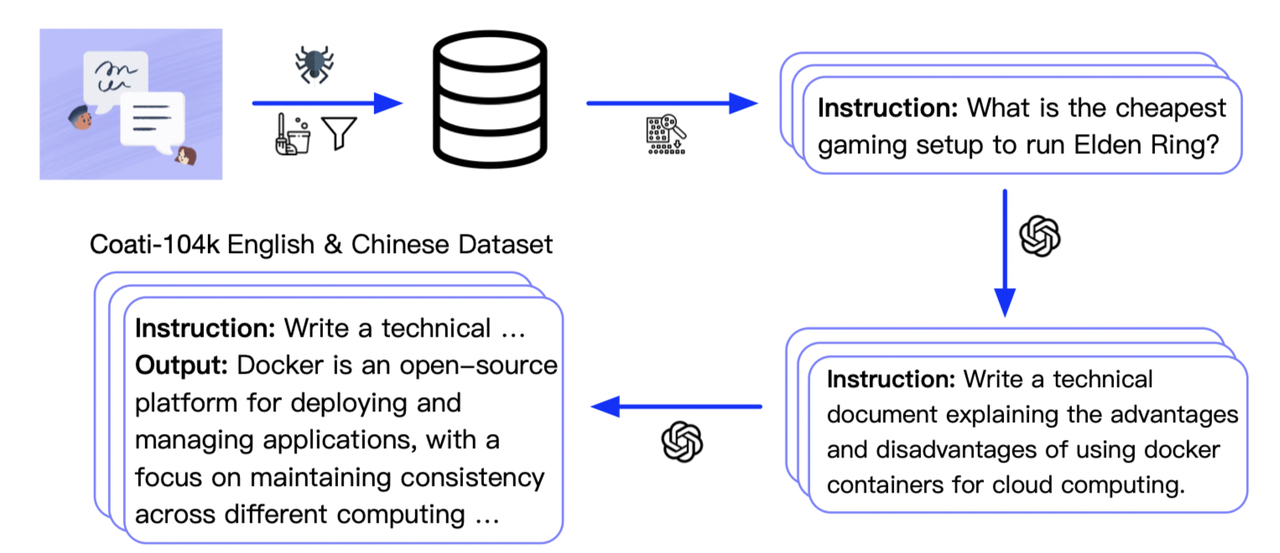

@@ -106,43 +107,19 @@ Here is how we collected the data

-### Stage1 - Supervised instructs tuning

+### RLHF Training Stage1 - Supervised instructs tuning

-Stage1 is supervised instructs fine-tuning, which uses the datasets mentioned earlier to fine-tune the model

+Stage1 is supervised instructs fine-tuning, which uses the datasets mentioned earlier to fine-tune the model.

-you can run the `examples/train_sft.sh` to start a supervised instructs fine-tuning

+You can run the `examples/train_sft.sh` to start a supervised instructs fine-tuning.

-```

-torchrun --standalone --nproc_per_node=4 train_sft.py \

- --pretrain "/path/to/LLaMa-7B/" \

- --model 'llama' \

- --strategy colossalai_zero2 \

- --log_interval 10 \

- --save_path /path/to/Coati-7B \

- --dataset /path/to/data.json \

- --batch_size 4 \

- --accimulation_steps 8 \

- --lr 2e-5 \

- --max_datasets_size 512 \

- --max_epochs 1 \

-```

-

-### Stage2 - Training reward model

+### RLHF Training Stage2 - Training reward model

Stage2 trains a reward model, which obtains corresponding scores by manually ranking different outputs for the same prompt and supervises the training of the reward model

-you can run the `examples/train_rm.sh` to start a reward model training

+You can run the `examples/train_rm.sh` to start a reward model training.

-```

-torchrun --standalone --nproc_per_node=4 train_reward_model.py

- --pretrain "/path/to/LLaMa-7B/" \

- --model 'llama' \

- --strategy colossalai_zero2 \

- --loss_fn 'log_exp'\

- --save_path 'rmstatic.pt' \

-```

-

-### Stage3 - Training model with reinforcement learning by human feedback

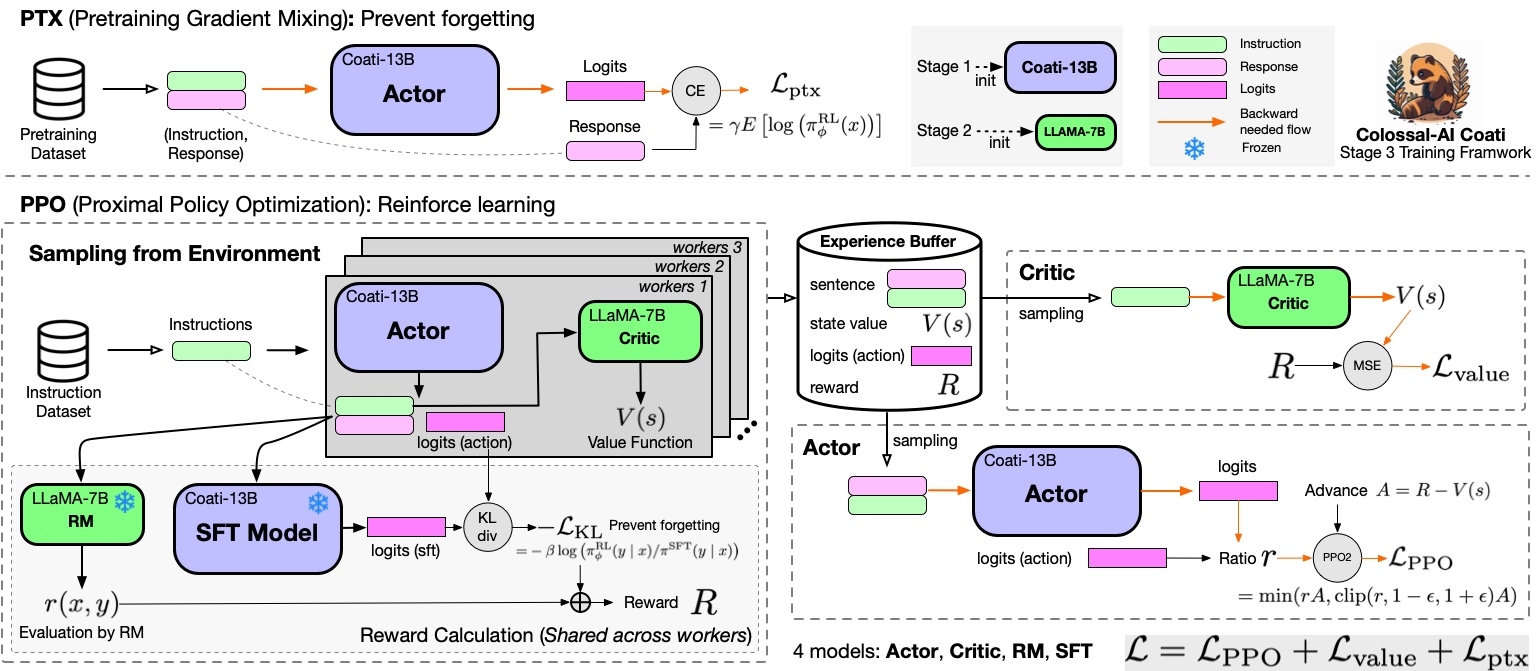

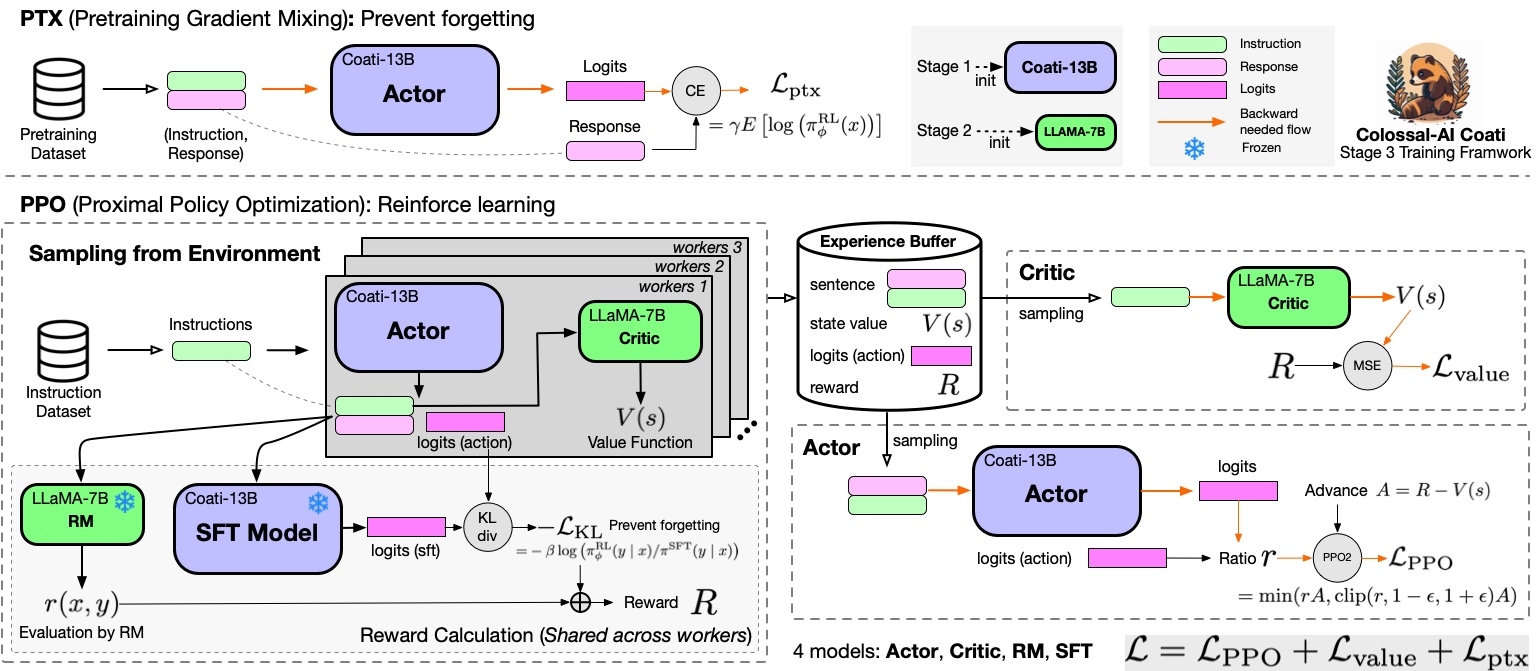

+### RLHF Training Stage3 - Training model with reinforcement learning by human feedback

Stage3 uses reinforcement learning algorithm, which is the most complex part of the training process:

@@ -150,63 +127,16 @@ Stage3 uses reinforcement learning algorithm, which is the most complex part of

-you can run the `examples/train_prompts.sh` to start training PPO with human feedback

-

-```

-torchrun --standalone --nproc_per_node=4 train_prompts.py \

- --pretrain "/path/to/LLaMa-7B/" \

- --model 'llama' \

- --strategy colossalai_zero2 \

- --prompt_path /path/to/your/prompt_dataset \

- --pretrain_dataset /path/to/your/pretrain_dataset \

- --rm_pretrain /your/pretrain/rm/defination \

- --rm_path /your/rm/model/path

-```

+You can run the `examples/train_prompts.sh` to start training PPO with human feedback.

For more details, see [`examples/`](https://github.com/hpcaitech/ColossalAI/tree/main/applications/Chat/examples).

-### Inference - After Training

-#### 8-bit setup

-

-8-bit quantization is originally supported by the latest [transformers](https://github.com/huggingface/transformers). Please install it from source.

+### Inference Quantization and Serving - After Training

-Please ensure you have downloaded HF-format model weights of LLaMA models.

+We provide an online inference server and a benchmark. We aim to run inference on single GPU, so quantization is essential when using large models.

-Usage:

-

-```python

-from transformers import LlamaForCausalLM

-USE_8BIT = True # use 8-bit quantization; otherwise, use fp16

-model = LlamaForCausalLM.from_pretrained(

- "pretrained/path",

- load_in_8bit=USE_8BIT,

- torch_dtype=torch.float16,

- device_map="auto",

- )

-if not USE_8BIT:

- model.half() # use fp16

-model.eval()

-```

-

-**Troubleshooting**: if you get errors indicating your CUDA-related libraries are not found when loading the 8-bit model, you can check whether your `LD_LIBRARY_PATH` is correct.

-

-E.g. you can set `export LD_LIBRARY_PATH=$CUDA_HOME/lib64:$LD_LIBRARY_PATH`.

-

-#### 4-bit setup

-

-Please ensure you have downloaded the HF-format model weights of LLaMA models first.

-

-Then you can follow [GPTQ-for-LLaMa](https://github.com/qwopqwop200/GPTQ-for-LLaMa). This lib provides efficient CUDA kernels and weight conversion scripts.

-

-After installing this lib, we may convert the original HF-format LLaMA model weights to a 4-bit version.

-

-```shell

-CUDA_VISIBLE_DEVICES=0 python llama.py /path/to/pretrained/llama-7b c4 --wbits 4 --groupsize 128 --save llama7b-4bit.pt

-```

-

-Run this command in your cloned `GPTQ-for-LLaMa` directory, then you will get a 4-bit weight file `llama7b-4bit-128g.pt`.

-

-**Troubleshooting**: if you get errors about `position_ids`, you can checkout to commit `50287c3b9ae4a3b66f6b5127c643ec39b769b155`(`GPTQ-for-LLaMa` repo).

+We support 8-bit quantization (RTN), 4-bit quantization (GPTQ), and FP16 inference.

+Online inference server scripts can help you deploy your own services.

For more details, see [`inference/`](https://github.com/hpcaitech/ColossalAI/tree/main/applications/Chat/inference).

@@ -282,24 +212,27 @@ For more details, see [`inference/`](https://github.com/hpcaitech/ColossalAI/tre

You can find more examples in this [repo](https://github.com/XueFuzhao/InstructionWild/blob/main/comparison.md).

-### Limitation for LLaMA-finetuned models

+### Limitation

+

+Limitation for LLaMA-finetuned models

- Both Alpaca and ColossalChat are based on LLaMA. It is hard to compensate for the missing knowledge in the pre-training stage.

- Lack of counting ability: Cannot count the number of items in a list.

- Lack of Logics (reasoning and calculation)

- Tend to repeat the last sentence (fail to produce the end token).

- Poor multilingual results: LLaMA is mainly trained on English datasets (Generation performs better than QA).

+Limitation of dataset

- Lack of summarization ability: No such instructions in finetune datasets.

- Lack of multi-turn chat: No such instructions in finetune datasets

- Lack of self-recognition: No such instructions in finetune datasets

- Lack of Safety:

- When the input contains fake facts, the model makes up false facts and explanations.

- Cannot abide by OpenAI's policy: When generating prompts from OpenAI API, it always abides by its policy. So no violation case is in the datasets.

+How to save/load checkpoint

We have integrated the Transformers save and load pipeline, allowing users to freely call Hugging Face's language models and save them in the HF format.

@@ -324,6 +257,63 @@ trainer.fit()

trainer.save_model(path=args.save_path, only_rank0=True, tokenizer=tokenizer)

```

+How to train with limited resources

+

+Here are some examples that can allow you to train a 7B model on a single or multiple consumer-grade GPUs.

+

+If you only have a single 24G GPU, you can use the following script. `batch_size` and `lora_rank` are the most important parameters to successfully train the model.

+```

+torchrun --standalone --nproc_per_node=1 train_sft.py \

+ --pretrain "/path/to/LLaMa-7B/" \

+ --model 'llama' \

+ --strategy naive \

+ --log_interval 10 \

+ --save_path /path/to/Coati-7B \

+ --dataset /path/to/data.json \

+ --batch_size 1 \

+ --accimulation_steps 8 \

+ --lr 2e-5 \

+ --max_datasets_size 512 \

+ --max_epochs 1 \

+ --lora_rank 16 \

+```

+

+`colossalai_gemini` strategy can enable a single 24G GPU to train the whole model without using LoRA if you have sufficient CPU memory. You can use the following script.

+```

+torchrun --standalone --nproc_per_node=1 train_sft.py \

+ --pretrain "/path/to/LLaMa-7B/" \

+ --model 'llama' \

+ --strategy colossalai_gemini \

+ --log_interval 10 \

+ --save_path /path/to/Coati-7B \

+ --dataset /path/to/data.json \

+ --batch_size 1 \

+ --accimulation_steps 8 \

+ --lr 2e-5 \

+ --max_datasets_size 512 \

+ --max_epochs 1 \

+```

+

+If you have 4x32 GB GPUs, you can even train the whole 7B model using our `colossalai_zero2_cpu` strategy! The script is given as follows.

+```

+torchrun --standalone --nproc_per_node=4 train_sft.py \

+ --pretrain "/path/to/LLaMa-7B/" \

+ --model 'llama' \

+ --strategy colossalai_zero2_cpu \

+ --log_interval 10 \

+ --save_path /path/to/Coati-7B \

+ --dataset /path/to/data.json \

+ --batch_size 1 \

+ --accimulation_steps 8 \

+ --lr 2e-5 \

+ --max_datasets_size 512 \

+ --max_epochs 1 \

+```

+

@@ -375,6 +373,13 @@ Thanks so much to all of our amazing contributors!

- Increase the capacity of the fine-tuning model by up to 3.7 times on a single GPU

- Keep in a sufficiently high running speed

+| Model Pair | Alpaca-7B ⚔ Coati-7B | Coati-7B ⚔ Alpaca-7B |

+| :-----------: | :------------------: | :------------------: |

+| Better Cases | 38 ⚔ **41** | **45** ⚔ 33 |

+| Win Rate | 48% ⚔ **52%** | **58%** ⚔ 42% |

+| Average Score | 7.06 ⚔ **7.13** | **7.31** ⚔ 6.82 |

+- Our Coati-7B model performs better than Alpaca-7B when using GPT-4 to evaluate model performance. The Coati-7B model we evaluate is an old version we trained a few weeks ago and the new version is around the corner.

+

## Authors

Coati is developed by ColossalAI Team:
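
The win rates in the GPT-4 comparison table added above appear consistent with dividing each model's "Better Cases" count by the sum of both counts, with ties left out. That formula is an assumption inferred from the numbers, not something the README states. A minimal sketch that reproduces the reported percentages under that assumption (`win_rates` is a hypothetical helper name):

```python
from typing import Tuple


def win_rates(better_a: int, better_b: int) -> Tuple[int, int]:
    """Return rounded win-rate percentages, ignoring tied cases (assumed formula)."""
    total = better_a + better_b
    return round(100 * better_a / total), round(100 * better_b / total)


# Alpaca-7B vs Coati-7B: 38 and 41 better cases
first_order = win_rates(38, 41)    # (48, 52)
# Coati-7B vs Alpaca-7B: 45 and 33 better cases
second_order = win_rates(45, 33)   # (58, 42)
print(first_order, second_order)
```

Both orderings match the 48% / 52% and 58% / 42% rows of the table.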

diff --git a/applications/Chat/benchmarks/benchmark_gpt_dummy.py b/applications/Chat/benchmarks/benchmark_gpt_dummy.py

index c0d8b1c377aa..e41ef239d378 100644

--- a/applications/Chat/benchmarks/benchmark_gpt_dummy.py

+++ b/applications/Chat/benchmarks/benchmark_gpt_dummy.py

@@ -156,8 +156,10 @@ def main(args):

eos_token_id=tokenizer.eos_token_id,

callbacks=[performance_evaluator])

- random_prompts = torch.randint(tokenizer.vocab_size, (1000, 400), device=torch.cuda.current_device())

- trainer.fit(random_prompts,

+ random_prompts = torch.randint(tokenizer.vocab_size, (1000, 1, 400), device=torch.cuda.current_device())

+ random_attention_mask = torch.randint(1, (1000, 1, 400), device=torch.cuda.current_device()).to(torch.bool)

+ random_pretrain = [{'input_ids':random_prompts[i], 'labels':random_prompts[i], 'attention_mask':random_attention_mask[i]} for i in range(1000)]

+ trainer.fit(random_prompts, random_pretrain,

num_episodes=args.num_episodes,

max_timesteps=args.max_timesteps,

update_timesteps=args.update_timesteps)

diff --git a/applications/Chat/benchmarks/benchmark_opt_lora_dummy.py b/applications/Chat/benchmarks/benchmark_opt_lora_dummy.py

index 42df2e1f28cb..c79435ec63c5 100644

--- a/applications/Chat/benchmarks/benchmark_opt_lora_dummy.py

+++ b/applications/Chat/benchmarks/benchmark_opt_lora_dummy.py

@@ -149,8 +149,10 @@ def main(args):

eos_token_id=tokenizer.eos_token_id,

callbacks=[performance_evaluator])

- random_prompts = torch.randint(tokenizer.vocab_size, (1000, 400), device=torch.cuda.current_device())

- trainer.fit(random_prompts,

+ random_prompts = torch.randint(tokenizer.vocab_size, (1000, 1, 400), device=torch.cuda.current_device())

+ random_attention_mask = torch.randint(1, (1000, 1, 400), device=torch.cuda.current_device()).to(torch.bool)

+ random_pretrain = [{'input_ids':random_prompts[i], 'labels':random_prompts[i], 'attention_mask':random_attention_mask[i]} for i in range(1000)]

+ trainer.fit(random_prompts, random_pretrain,

num_episodes=args.num_episodes,

max_timesteps=args.max_timesteps,

update_timesteps=args.update_timesteps)

diff --git a/applications/Chat/coati/dataset/sft_dataset.py b/applications/Chat/coati/dataset/sft_dataset.py

index 91e38f06daba..3e2453468bbc 100644

--- a/applications/Chat/coati/dataset/sft_dataset.py

+++ b/applications/Chat/coati/dataset/sft_dataset.py

@@ -53,29 +53,25 @@ class SFTDataset(Dataset):

def __init__(self, dataset, tokenizer: Callable, max_length: int = 512) -> None:

super().__init__()

- # self.prompts = []

self.input_ids = []

for data in tqdm(dataset, disable=not is_rank_0()):

- prompt = data['prompt'] + data['completion'] + "<|endoftext|>"

+ prompt = data['prompt'] + data['completion'] + tokenizer.eos_token

prompt_token = tokenizer(prompt,

max_length=max_length,

padding="max_length",

truncation=True,

return_tensors="pt")

- # self.prompts.append(prompt_token)s

- self.input_ids.append(prompt_token)

- self.labels = copy.deepcopy(self.input_ids)

+ self.input_ids.append(prompt_token['input_ids'][0])

+ self.labels = copy.deepcopy(self.input_ids)

def __len__(self):

- length = len(self.prompts)

+ length = len(self.input_ids)

return length

def __getitem__(self, idx):

- # dict(input_ids=self.input_ids[i], labels=self.labels[i])

return dict(input_ids=self.input_ids[idx], labels=self.labels[idx])

- # return dict(self.prompts[idx], self.prompts[idx])

def _tokenize_fn(strings: Sequence[str], tokenizer: transformers.PreTrainedTokenizer, max_length: int) -> Dict:
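
The `SFTDataset` changes above can be illustrated with a small stand-alone sketch: store one row of token ids per sample, append the tokenizer's own EOS token, and measure length from `input_ids`. `ToyTokenizer` and `ToySFTDataset` are hypothetical stand-ins that use plain lists instead of tensors; they are not coati or transformers classes. Where `self.labels` is assigned relative to the loop cannot be recovered from the text above, so this sketch assumes it is copied once after the loop.

```python
import copy
from typing import Dict, List


class ToyTokenizer:
    """Hypothetical character-level tokenizer, not a transformers class."""
    eos_token = "\n"

    def __call__(self, text: str, max_length: int) -> Dict[str, List[List[int]]]:
        ids = [ord(ch) for ch in text][:max_length]   # truncate to max_length
        ids += [0] * (max_length - len(ids))          # pad with 0 up to max_length
        return {"input_ids": [ids]}                   # batch dimension of size 1


class ToySFTDataset:
    def __init__(self, dataset, tokenizer, max_length: int = 16) -> None:
        self.input_ids = []
        for data in dataset:
            prompt = data["prompt"] + data["completion"] + tokenizer.eos_token
            encoded = tokenizer(prompt, max_length=max_length)
            # Keep only the single row, dropping the batch dimension.
            self.input_ids.append(encoded["input_ids"][0])
        # Assumption: labels are copied once, after the loop has finished.
        self.labels = copy.deepcopy(self.input_ids)

    def __len__(self) -> int:
        return len(self.input_ids)

    def __getitem__(self, idx: int) -> dict:
        return dict(input_ids=self.input_ids[idx], labels=self.labels[idx])


samples = [
    {"prompt": "Q: 1+1? ", "completion": "A: 2"},
    {"prompt": "Hi ", "completion": "there"},
]
ds = ToySFTDataset(samples, ToyTokenizer())
print(len(ds), len(ds[0]["input_ids"]))
```

Storing the row rather than the whole tokenizer output means `__getitem__` returns flat sequences that a default collate function can stack into a batch.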

diff --git a/applications/Chat/coati/experience_maker/base.py b/applications/Chat/coati/experience_maker/base.py

index 61fd4f6744dc..ff75852576c8 100644

--- a/applications/Chat/coati/experience_maker/base.py

+++ b/applications/Chat/coati/experience_maker/base.py

@@ -18,7 +18,7 @@ class Experience:

action_log_probs: (B, A)

values: (B)

reward: (B)

- advatanges: (B)

+ advantages: (B)

attention_mask: (B, S)

action_mask: (B, A)

diff --git a/applications/Chat/coati/models/gpt/gpt_actor.py b/applications/Chat/coati/models/gpt/gpt_actor.py

index 6a53ad40b817..ae9d669f1f56 100644

--- a/applications/Chat/coati/models/gpt/gpt_actor.py

+++ b/applications/Chat/coati/models/gpt/gpt_actor.py

@@ -23,7 +23,8 @@ def __init__(self,

config: Optional[GPT2Config] = None,

checkpoint: bool = False,

lora_rank: int = 0,

- lora_train_bias: str = 'none') -> None:

+ lora_train_bias: str = 'none',

+ **kwargs) -> None:

if pretrained is not None:

model = GPT2LMHeadModel.from_pretrained(pretrained)

elif config is not None:

@@ -32,4 +33,4 @@ def __init__(self,

model = GPT2LMHeadModel(GPT2Config())

if checkpoint:

model.gradient_checkpointing_enable()

- super().__init__(model, lora_rank, lora_train_bias)

+ super().__init__(model, lora_rank, lora_train_bias, **kwargs)

diff --git a/applications/Chat/coati/models/gpt/gpt_critic.py b/applications/Chat/coati/models/gpt/gpt_critic.py

index 25bb1ed94de4..2e70f5f1fc96 100644

--- a/applications/Chat/coati/models/gpt/gpt_critic.py

+++ b/applications/Chat/coati/models/gpt/gpt_critic.py

@@ -24,7 +24,8 @@ def __init__(self,

config: Optional[GPT2Config] = None,

checkpoint: bool = False,

lora_rank: int = 0,

- lora_train_bias: str = 'none') -> None:

+ lora_train_bias: str = 'none',

+ **kwargs) -> None:

if pretrained is not None:

model = GPT2Model.from_pretrained(pretrained)

elif config is not None:

@@ -34,4 +35,4 @@ def __init__(self,

if checkpoint:

model.gradient_checkpointing_enable()

value_head = nn.Linear(model.config.n_embd, 1)

- super().__init__(model, value_head, lora_rank, lora_train_bias)

+ super().__init__(model, value_head, lora_rank, lora_train_bias, **kwargs)
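
The `**kwargs` pass-through added to `GPTActor` and `GPTCritic` above follows a common pattern: the subclass accepts extra keyword arguments it does not use and forwards them to its base class. A minimal sketch of that pattern, using hypothetical `BaseActor` and `ToyGPTActor` classes (the real base-class signature is not shown in this diff, so the one below is an assumption):

```python
from typing import Any, Dict, Optional


class BaseActor:
    """Hypothetical stand-in for the shared base class, not the real coati Actor."""

    def __init__(self, model: str, lora_rank: int = 0, lora_train_bias: str = "none", **kwargs: Any) -> None:
        self.model = model
        self.lora_rank = lora_rank
        self.lora_train_bias = lora_train_bias
        # Anything the subclass did not consume ends up here.
        self.extra: Dict[str, Any] = dict(kwargs)


class ToyGPTActor(BaseActor):
    def __init__(self,
                 pretrained: Optional[str] = None,
                 lora_rank: int = 0,
                 lora_train_bias: str = "none",
                 **kwargs: Any) -> None:
        model = pretrained if pretrained is not None else "default-config"
        # Forward unknown keyword arguments instead of raising TypeError.
        super().__init__(model, lora_rank, lora_train_bias, **kwargs)


actor = ToyGPTActor(pretrained="gpt2", lora_rank=8, some_option=True)
print(actor.extra)
```

Without the `**kwargs` parameter, passing `some_option=True` would raise a `TypeError`, which is presumably why a shared caller that hands the same options to every model class needs the pass-through.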

diff --git a/applications/Chat/coati/models/lora.py b/applications/Chat/coati/models/lora.py

index f8f7a1cb5d81..7f6eb73262fa 100644

--- a/applications/Chat/coati/models/lora.py

+++ b/applications/Chat/coati/models/lora.py

@@ -108,7 +108,7 @@ def convert_to_lora_recursively(module: nn.Module, lora_rank: int) -> None:

class LoRAModule(nn.Module):

"""A LoRA module base class. All derived classes should call `convert_to_lora()` at the bottom of `__init__()`.

- This calss will convert all torch.nn.Linear layer to LoraLinear layer.

+ This class will convert all torch.nn.Linear layer to LoraLinear layer.

Args:

lora_rank (int, optional): LoRA rank. 0 means LoRA is not applied. Defaults to 0.

diff --git a/applications/Chat/coati/ray/__init__.py b/applications/Chat/coati/ray/__init__.py

new file mode 100644

index 000000000000..5802c05bc03f

--- /dev/null

+++ b/applications/Chat/coati/ray/__init__.py

@@ -0,0 +1,2 @@

+from .src.detached_replay_buffer import DetachedReplayBuffer

+from .src.detached_trainer_ppo import DetachedPPOTrainer

diff --git a/applications/Chat/coati/ray/example/1m1t.py b/applications/Chat/coati/ray/example/1m1t.py

new file mode 100644

index 000000000000..a6527370505b

--- /dev/null

+++ b/applications/Chat/coati/ray/example/1m1t.py

@@ -0,0 +1,153 @@

+import argparse

+from copy import deepcopy

+

+import pandas as pd

+import torch

+from coati.trainer import PPOTrainer

+

+

+from coati.ray.src.experience_maker_holder import ExperienceMakerHolder

+from coati.ray.src.detached_trainer_ppo import DetachedPPOTrainer

+

+from coati.trainer.strategies import ColossalAIStrategy, DDPStrategy, NaiveStrategy

+from coati.experience_maker import NaiveExperienceMaker

+from torch.optim import Adam

+from transformers import AutoTokenizer, BloomTokenizerFast

+from transformers.models.gpt2.tokenization_gpt2 import GPT2Tokenizer

+

+from colossalai.nn.optimizer import HybridAdam

+

+import ray

+import os

+import socket

+

+def get_free_port():

+ with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:

+ s.bind(('', 0))

+ return s.getsockname()[1]

+

+

+def get_local_ip():

+ with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as s:

+ s.connect(('8.8.8.8', 80))

+ return s.getsockname()[0]

+

+def main(args):

+ master_addr = str(get_local_ip())

+ # trainer_env_info

+ trainer_port = str(get_free_port())

+ env_info_trainer = {'local_rank' : '0',

+ 'rank' : '0',

+ 'world_size' : '1',

+ 'master_port' : trainer_port,

+ 'master_addr' : master_addr}

+

+ # maker_env_info

+ maker_port = str(get_free_port())

+ env_info_maker = {'local_rank' : '0',

+ 'rank' : '0',

+ 'world_size' : '1',

+ 'master_port' : maker_port,

+ 'master_addr' : master_addr}

+

+ # configure tokenizer

+ if args.model == 'gpt2':

+ tokenizer = GPT2Tokenizer.from_pretrained('gpt2')

+ tokenizer.pad_token = tokenizer.eos_token

+ elif args.model == 'bloom':

+ tokenizer = BloomTokenizerFast.from_pretrained(args.pretrain)

+ tokenizer.pad_token = tokenizer.eos_token

+ elif args.model == 'opt':

+ tokenizer = AutoTokenizer.from_pretrained("facebook/opt-350m")

+ else:

+ raise ValueError(f'Unsupported model "{args.model}"')

+

+ # configure Trainer

+ trainer_ref = DetachedPPOTrainer.options(name="trainer1", num_gpus=1, max_concurrency=2).remote(

+ experience_maker_holder_name_list=["maker1"],

+ strategy=args.trainer_strategy,

+ model=args.model,

+ env_info = env_info_trainer,

+ pretrained=args.pretrain,

+ lora_rank=args.lora_rank,

+ train_batch_size=args.train_batch_size,

+ buffer_limit=16,

+ experience_batch_size=args.experience_batch_size,

+ max_epochs=args.max_epochs,

+ #kwargs:

+ max_length=128,

+ do_sample=True,

+ temperature=1.0,

+ top_k=50,

+ pad_token_id=tokenizer.pad_token_id,

+ eos_token_id=tokenizer.eos_token_id,

+ debug=args.debug,

+ )

+

+ # configure Experience Maker

+ experience_holder_ref = ExperienceMakerHolder.options(name="maker1", num_gpus=1, max_concurrency=2).remote(

+ detached_trainer_name_list=["trainer1"],

+ strategy=args.maker_strategy,

+ env_info = env_info_maker,

+ experience_batch_size=args.experience_batch_size,

+ kl_coef=0.1,

+ #kwargs:

+ max_length=128,

+ do_sample=True,

+ temperature=1.0,

+ top_k=50,

+ pad_token_id=tokenizer.pad_token_id,

+ eos_token_id=tokenizer.eos_token_id,

+ debug=args.debug,

+ )

+

+ # the trainer sends its actor and critic to the experience holders.

+ ray.get(trainer_ref.initialize_remote_makers.remote())

+

+ # configure sampler

+ dataset = pd.read_csv(args.prompt_path)['prompt']

+

+ def tokenize_fn(texts):

+ # MUST pad to max length to ensure inputs of all ranks have the same length

+ # Different lengths may lead to a hang when using gemini, because ranks would run different numbers of generation steps

+ batch = tokenizer(texts, return_tensors='pt', max_length=96, padding='max_length', truncation=True)

+ return {k: v.cuda() for k, v in batch.items()}

+

+ trainer_done_ref = trainer_ref.fit.remote(num_episodes=args.num_episodes, max_timesteps=args.max_timesteps, update_timesteps=args.update_timesteps)

+ num_exp_per_maker = args.num_episodes * args.max_timesteps // args.update_timesteps * args.max_epochs + 3 # +3 for fault tolerance

+ maker_done_ref = experience_holder_ref.workingloop.remote(dataset, tokenize_fn, times=num_exp_per_maker)

+

+ ray.get([trainer_done_ref, maker_done_ref])

+

+ # save model checkpoint after fitting; ray.get blocks until the remote save has finished

+ ray.get(trainer_ref.strategy_save_actor.remote(args.save_path, only_rank0=True))

+ # save optimizer checkpoint on all ranks

+ if args.need_optim_ckpt:

+ ray.get(trainer_ref.strategy_save_actor_optim.remote('actor_optim_checkpoint_prompts_%d.pt' % (torch.cuda.current_device()),

+ only_rank0=False))

+

+if __name__ == '__main__':

+ parser = argparse.ArgumentParser()

+ parser.add_argument('prompt_path')

+ parser.add_argument('--trainer_strategy',

+ choices=['naive', 'ddp', 'colossalai_gemini', 'colossalai_zero2'],

+ default='naive')

+ parser.add_argument('--maker_strategy',

+ choices=['naive', 'ddp', 'colossalai_gemini', 'colossalai_zero2'],

+ default='naive')

+ parser.add_argument('--model', default='gpt2', choices=['gpt2', 'bloom', 'opt'])

+ parser.add_argument('--pretrain', type=str, default=None)

+ parser.add_argument('--save_path', type=str, default='actor_checkpoint_prompts.pt')

+ parser.add_argument('--need_optim_ckpt', action='store_true')

+ parser.add_argument('--num_episodes', type=int, default=10)

+ parser.add_argument('--max_timesteps', type=int, default=10)

+ parser.add_argument('--update_timesteps', type=int, default=10)

+ parser.add_argument('--max_epochs', type=int, default=5)

+ parser.add_argument('--train_batch_size', type=int, default=8)

+ parser.add_argument('--experience_batch_size', type=int, default=8)

+ parser.add_argument('--lora_rank', type=int, default=0, help="low-rank adaptation matrices rank")

+

+ parser.add_argument('--debug', action='store_true')

+ args = parser.parse_args()

+ ray.init(namespace=os.environ["RAY_NAMESPACE"])

+ main(args)
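
Reviewer note: the `env_info_*` dictionaries in these example scripts all follow the same pattern (string values, one entry per rank, a shared master address and port per group). A minimal sketch of a helper that builds them is shown below. `build_env_infos` is a hypothetical name, not part of this PR, and `local_rank` is fixed to `'0'` on the assumption that every Ray actor sees exactly one GPU, as in the scripts.

```python
import socket
from typing import Dict, List


def get_free_port() -> int:
    """Ask the OS for a currently unused TCP port by binding to port 0."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
        s.bind(('', 0))
        return s.getsockname()[1]


def build_env_infos(world_size: int, master_addr: str, master_port: int) -> List[Dict[str, str]]:
    """Hypothetical helper: build one env_info dict per rank, all values as strings."""
    return [{
        'local_rank': '0',    # assumption: one visible GPU per Ray actor
        'rank': str(rank),
        'world_size': str(world_size),
        'master_port': str(master_port),
        'master_addr': master_addr,
    } for rank in range(world_size)]


if __name__ == '__main__':
    # two trainers sharing one process group on the local machine
    for info in build_env_infos(2, '127.0.0.1', get_free_port()):
        print(info)
```

Note that `get_free_port` releases the port as soon as the socket is closed, so another process could in principle claim it before the training group binds to it.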

diff --git a/applications/Chat/coati/ray/example/1m1t.sh b/applications/Chat/coati/ray/example/1m1t.sh

new file mode 100644

index 000000000000..f7c5054c800e

--- /dev/null

+++ b/applications/Chat/coati/ray/example/1m1t.sh

@@ -0,0 +1,23 @@

+set_n_least_used_CUDA_VISIBLE_DEVICES() {

+ local n=${1:-"9999"}

+ echo "GPU Memory Usage:"

+ local FIRST_N_GPU_IDS=$(nvidia-smi --query-gpu=memory.used --format=csv \

+ | tail -n +2 \

+ | nl -v 0 \

+ | tee /dev/tty \

+ | sort -g -k 2 \

+ | awk '{print $1}' \

+ | head -n $n)

+ export CUDA_VISIBLE_DEVICES=$(echo $FIRST_N_GPU_IDS | sed 's/ /,/g')

+ echo "Now CUDA_VISIBLE_DEVICES is set to:"

+ echo "CUDA_VISIBLE_DEVICES=$CUDA_VISIBLE_DEVICES"

+}

+

+set_n_least_used_CUDA_VISIBLE_DEVICES 2

+

+export RAY_NAMESPACE="admin"

+

+python 1m1t.py "/path/to/prompts.csv" \

+ --trainer_strategy colossalai_zero2 --maker_strategy naive --lora_rank 2 --pretrain "facebook/opt-350m" --model 'opt' \

+ --num_episodes 10 --max_timesteps 10 --update_timesteps 10 \

+ --max_epochs 10 --debug
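
Reviewer note: the `set_n_least_used_CUDA_VISIBLE_DEVICES` shell function picks the `n` GPUs with the least memory in use. The same selection can be sketched in Python as below. The function name `least_used_gpu_ids` is hypothetical, and the sample text is made-up data in the format that `nvidia-smi --query-gpu=memory.used --format=csv` is assumed to print (a header line followed by one `<number> MiB` line per GPU).

```python
from typing import List


def least_used_gpu_ids(csv_text: str, n: int) -> List[int]:
    """Return the indices of the n GPUs with the least memory in use."""
    rows = csv_text.strip().splitlines()[1:]    # drop the header, like `tail -n +2`
    used = [float(row.split()[0]) for row in rows]    # numeric part of "1234 MiB"
    # sort GPU indices by used memory; sorted() is stable, so ties keep GPU order
    order = sorted(range(len(used)), key=lambda i: used[i])
    return order[:n]    # like `head -n $n`


# made-up sample output for four GPUs
sample = "memory.used [MiB]\n9000 MiB\n12 MiB\n300 MiB\n0 MiB\n"
ids = least_used_gpu_ids(sample, 2)
print(','.join(str(i) for i in ids))    # value one could export as CUDA_VISIBLE_DEVICES
```

The number of GPUs requested should match the number of Ray actors that ask for `num_gpus=1`, otherwise the remaining actors cannot be scheduled.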

diff --git a/applications/Chat/coati/ray/example/1m2t.py b/applications/Chat/coati/ray/example/1m2t.py

new file mode 100644

index 000000000000..3883c364a8e0

--- /dev/null

+++ b/applications/Chat/coati/ray/example/1m2t.py

@@ -0,0 +1,186 @@

+import argparse

+from copy import deepcopy

+

+import pandas as pd

+import torch

+from coati.trainer import PPOTrainer

+

+

+from coati.ray.src.experience_maker_holder import ExperienceMakerHolder

+from coati.ray.src.detached_trainer_ppo import DetachedPPOTrainer

+

+from coati.trainer.strategies import ColossalAIStrategy, DDPStrategy, NaiveStrategy

+from coati.experience_maker import NaiveExperienceMaker

+from torch.optim import Adam

+from transformers import AutoTokenizer, BloomTokenizerFast

+from transformers.models.gpt2.tokenization_gpt2 import GPT2Tokenizer

+

+from colossalai.nn.optimizer import HybridAdam

+

+import ray

+import os

+import socket

+

+

+def get_free_port():

+ with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:

+ s.bind(('', 0))

+ return s.getsockname()[1]

+

+

+def get_local_ip():

+ with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as s:

+ s.connect(('8.8.8.8', 80))

+ return s.getsockname()[0]

+

+def main(args):

+ master_addr = str(get_local_ip())

+ # trainer_env_info

+ trainer_port = str(get_free_port())

+ env_info_trainer_1 = {'local_rank' : '0',

+ 'rank' : '0',

+ 'world_size' : '2',

+ 'master_port' : trainer_port,

+ 'master_addr' : master_addr}

+ env_info_trainer_2 = {'local_rank' : '0',

+ 'rank' : '1',

+ 'world_size' : '2',

+ 'master_port' : trainer_port,

+ 'master_addr' : master_addr}

+ # maker_env_info

+ maker_port = str(get_free_port())

+ env_info_maker_1 = {'local_rank' : '0',

+ 'rank' : '0',

+ 'world_size' : '1',

+ 'master_port' : maker_port,

+ 'master_addr' : master_addr}

+ print([env_info_trainer_1,

+ env_info_trainer_2,

+ env_info_maker_1])

+ ray.init(dashboard_port = 1145)

+ # configure tokenizer

+ if args.model == 'gpt2':

+ tokenizer = GPT2Tokenizer.from_pretrained('gpt2')

+ tokenizer.pad_token = tokenizer.eos_token

+ elif args.model == 'bloom':

+ tokenizer = BloomTokenizerFast.from_pretrained(args.pretrain)

+ tokenizer.pad_token = tokenizer.eos_token

+ elif args.model == 'opt':

+ tokenizer = AutoTokenizer.from_pretrained("facebook/opt-350m")

+ else:

+ raise ValueError(f'Unsupported model "{args.model}"')

+

+ # configure Trainer

+ trainer_1_ref = DetachedPPOTrainer.options(name="trainer1", namespace=os.environ["RAY_NAMESPACE"], num_gpus=1, max_concurrency=2).remote(

+ experience_maker_holder_name_list=["maker1"],

+ strategy=args.trainer_strategy,

+ model=args.model,

+ env_info=env_info_trainer_1,

+ pretrained=args.pretrain,

+ lora_rank=args.lora_rank,

+ train_batch_size=args.train_batch_size,

+ buffer_limit=16,

+ experience_batch_size=args.experience_batch_size,

+ max_epochs=args.max_epochs,

+ #kwargs:

+ max_length=128,

+ do_sample=True,

+ temperature=1.0,

+ top_k=50,

+ pad_token_id=tokenizer.pad_token_id,

+ eos_token_id=tokenizer.eos_token_id,

+ debug=args.debug,

+ )

+

+ trainer_2_ref = DetachedPPOTrainer.options(name="trainer2", namespace=os.environ["RAY_NAMESPACE"], num_gpus=1, max_concurrency=2).remote(

+ experience_maker_holder_name_list=["maker1"],

+ strategy=args.trainer_strategy,

+ model=args.model,

+ env_info=env_info_trainer_2,

+ pretrained=args.pretrain,

+ lora_rank=args.lora_rank,

+ train_batch_size=args.train_batch_size,

+ buffer_limit=16,

+ experience_batch_size=args.experience_batch_size,

+ max_epochs=args.max_epochs,

+ #kwargs:

+ max_length=128,

+ do_sample=True,

+ temperature=1.0,

+ top_k=50,

+ pad_token_id=tokenizer.pad_token_id,

+ eos_token_id=tokenizer.eos_token_id,

+ debug= args.debug,

+ )

+

+ # configure Experience Maker

+ experience_holder_1_ref = ExperienceMakerHolder.options(name="maker1", namespace=os.environ["RAY_NAMESPACE"], num_gpus=1, max_concurrency=2).remote(

+ detached_trainer_name_list=["trainer1", "trainer2"],

+ strategy=args.maker_strategy,

+ env_info=env_info_maker_1,

+ experience_batch_size=args.experience_batch_size,

+ kl_coef=0.1,

+ #kwargs:

+ max_length=128,

+ do_sample=True,

+ temperature=1.0,

+ top_k=50,

+ pad_token_id=tokenizer.pad_token_id,

+ eos_token_id=tokenizer.eos_token_id,

+ debug=args.debug,

+ )

+

+ # the trainer sends its actor and critic to the experience holders.

+ # TODO: balance duty

+ ray.get(trainer_1_ref.initialize_remote_makers.remote())

+

+ # configure sampler

+ dataset = pd.read_csv(args.prompt_path)['prompt']

+

+ def tokenize_fn(texts):

+ # MUST pad to max length to ensure inputs of all ranks have the same length

+ # Different lengths may lead to a hang when using gemini, because ranks would run different numbers of generation steps

+ batch = tokenizer(texts, return_tensors='pt', max_length=96, padding='max_length', truncation=True)

+ return {k: v.cuda() for k, v in batch.items()}

+

+ trainer_1_done_ref = trainer_1_ref.fit.remote(num_episodes=args.num_episodes, max_timesteps=args.max_timesteps, update_timesteps=args.update_timesteps)

+ trainer_2_done_ref = trainer_2_ref.fit.remote(num_episodes=args.num_episodes, max_timesteps=args.max_timesteps, update_timesteps=args.update_timesteps)

+ num_exp_per_maker = args.num_episodes * args.max_timesteps // args.update_timesteps * args.max_epochs * 2 + 3 # +3 for fault tolerance

+ maker_1_done_ref = experience_holder_1_ref.workingloop.remote(dataset, tokenize_fn, times=num_exp_per_maker)

+

+ ray.get([trainer_1_done_ref, trainer_2_done_ref, maker_1_done_ref])

+ # save model checkpoint after fitting; ray.get blocks until the remote saves have finished

+ ray.get(trainer_1_ref.strategy_save_actor.remote(args.save_path, only_rank0=True))

+ ray.get(trainer_2_ref.strategy_save_actor.remote(args.save_path, only_rank0=True))

+ # save optimizer checkpoint on all ranks

+ if args.need_optim_ckpt:

+ ray.get(trainer_1_ref.strategy_save_actor_optim.remote('actor_optim_checkpoint_prompts_%d.pt' % (torch.cuda.current_device()),

+ only_rank0=False))

+ ray.get(trainer_2_ref.strategy_save_actor_optim.remote('actor_optim_checkpoint_prompts_%d.pt' % (torch.cuda.current_device()),

+ only_rank0=False))

+

+

+if __name__ == '__main__':

+ parser = argparse.ArgumentParser()

+ parser.add_argument('prompt_path')

+ parser.add_argument('--trainer_strategy',

+ choices=['naive', 'ddp', 'colossalai_gemini', 'colossalai_zero2'],

+ default='naive')

+ parser.add_argument('--maker_strategy',

+ choices=['naive', 'ddp', 'colossalai_gemini', 'colossalai_zero2'],

+ default='naive')

+ parser.add_argument('--model', default='gpt2', choices=['gpt2', 'bloom', 'opt'])

+ parser.add_argument('--pretrain', type=str, default=None)

+ parser.add_argument('--save_path', type=str, default='actor_checkpoint_prompts.pt')

+ parser.add_argument('--need_optim_ckpt', action='store_true')

+ parser.add_argument('--num_episodes', type=int, default=10)

+ parser.add_argument('--max_timesteps', type=int, default=10)

+ parser.add_argument('--update_timesteps', type=int, default=10)

+ parser.add_argument('--max_epochs', type=int, default=5)

+ parser.add_argument('--train_batch_size', type=int, default=8)

+ parser.add_argument('--experience_batch_size', type=int, default=8)

+ parser.add_argument('--lora_rank', type=int, default=0, help="low-rank adaptation matrices rank")

+

+ parser.add_argument('--debug', action='store_true')

+ args = parser.parse_args()

+ main(args)
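
Reviewer note: boolean options such as `--need_optim_ckpt` need some care with `argparse`. The sketch below uses two hypothetical flag names to contrast `type=bool` with `action='store_true'`: the former converts any non-empty string, including `'False'`, to `True`, while the latter treats the mere presence of the flag as `True`.

```python
import argparse

parser = argparse.ArgumentParser()
# type=bool calls bool() on the raw string, and any non-empty string is truthy
parser.add_argument('--as_type_bool', type=bool, default=False)
# store_true takes no value: the flag's presence means True
parser.add_argument('--as_store_true', action='store_true')

args = parser.parse_args(['--as_type_bool', 'False'])
print(args.as_type_bool)     # True, because bool('False') is True
print(args.as_store_true)    # False, the flag was not given

args = parser.parse_args(['--as_store_true'])
print(args.as_store_true)    # True
```

With `type=bool`, the only way to get `False` from the command line is to omit the option or pass an empty string, which is rarely what a user expects.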

diff --git a/applications/Chat/coati/ray/example/1m2t.sh b/applications/Chat/coati/ray/example/1m2t.sh

new file mode 100644

index 000000000000..669f4141026c

--- /dev/null

+++ b/applications/Chat/coati/ray/example/1m2t.sh

@@ -0,0 +1,23 @@

+set_n_least_used_CUDA_VISIBLE_DEVICES() {

+ local n=${1:-"9999"}

+ echo "GPU Memory Usage:"

+ local FIRST_N_GPU_IDS=$(nvidia-smi --query-gpu=memory.used --format=csv \

+ | tail -n +2 \

+ | nl -v 0 \

+ | tee /dev/tty \

+ | sort -g -k 2 \

+ | awk '{print $1}' \

+ | head -n $n)

+ export CUDA_VISIBLE_DEVICES=$(echo $FIRST_N_GPU_IDS | sed 's/ /,/g')

+ echo "Now CUDA_VISIBLE_DEVICES is set to:"

+ echo "CUDA_VISIBLE_DEVICES=$CUDA_VISIBLE_DEVICES"

+}

+

+set_n_least_used_CUDA_VISIBLE_DEVICES 3

+

+export RAY_NAMESPACE="admin"

+

+python 1m2t.py "/path/to/prompts.csv" --model gpt2 \

+ --maker_strategy naive --trainer_strategy ddp --lora_rank 2 \

+ --num_episodes 10 --max_timesteps 10 --update_timesteps 10 \

+ --max_epochs 10 #--debug

\ No newline at end of file

diff --git a/applications/Chat/coati/ray/example/2m1t.py b/applications/Chat/coati/ray/example/2m1t.py

new file mode 100644

index 000000000000..b655de1ab1fa

--- /dev/null

+++ b/applications/Chat/coati/ray/example/2m1t.py

@@ -0,0 +1,140 @@

+import argparse

+from copy import deepcopy

+

+import pandas as pd

+import torch

+from coati.trainer import PPOTrainer

+

+

+from coati.ray.src.experience_maker_holder import ExperienceMakerHolder

+from coati.ray.src.detached_trainer_ppo import DetachedPPOTrainer

+

+from coati.trainer.strategies import ColossalAIStrategy, DDPStrategy, NaiveStrategy

+from coati.experience_maker import NaiveExperienceMaker

+from torch.optim import Adam

+from transformers import AutoTokenizer, BloomTokenizerFast

+from transformers.models.gpt2.tokenization_gpt2 import GPT2Tokenizer

+

+from colossalai.nn.optimizer import HybridAdam

+

+import ray

+import os

+import socket

+

+

+def main(args):

+ # configure tokenizer

+ if args.model == 'gpt2':

+ tokenizer = GPT2Tokenizer.from_pretrained('gpt2')

+ tokenizer.pad_token = tokenizer.eos_token

+ elif args.model == 'bloom':

+ tokenizer = BloomTokenizerFast.from_pretrained(args.pretrain)

+ tokenizer.pad_token = tokenizer.eos_token

+ elif args.model == 'opt':

+ tokenizer = AutoTokenizer.from_pretrained("facebook/opt-350m")

+ else:

+ raise ValueError(f'Unsupported model "{args.model}"')

+

+ # configure Trainer

+ trainer_ref = DetachedPPOTrainer.options(name="trainer1", num_gpus=1, max_concurrency=2).remote(

+ experience_maker_holder_name_list=["maker1", "maker2"],

+ strategy=args.trainer_strategy,

+ model=args.model,

+ pretrained=args.pretrain,

+ lora_rank=args.lora_rank,

+ train_batch_size=args.train_batch_size,

+ buffer_limit=16,

+ experience_batch_size=args.experience_batch_size,

+ max_epochs=args.max_epochs,

+ #kwargs:

+ max_length=128,

+ do_sample=True,

+ temperature=1.0,

+ top_k=50,

+ pad_token_id=tokenizer.pad_token_id,

+ eos_token_id=tokenizer.eos_token_id,

+ debug=args.debug,

+ )

+

+ # configure Experience Maker

+ experience_holder_1_ref = ExperienceMakerHolder.options(name="maker1", num_gpus=1, max_concurrency=2).remote(

+ detached_trainer_name_list=["trainer1"],

+ strategy=args.maker_strategy,

+ experience_batch_size=args.experience_batch_size,

+ kl_coef=0.1,

+ #kwargs:

+ max_length=128,

+ do_sample=True,

+ temperature=1.0,

+ top_k=50,

+ pad_token_id=tokenizer.pad_token_id,

+ eos_token_id=tokenizer.eos_token_id,

+ debug=args.debug,

+ )

+

+ experience_holder_2_ref = ExperienceMakerHolder.options(name="maker2", num_gpus=1, max_concurrency=2).remote(

+ detached_trainer_name_list=["trainer1"],

+ strategy=args.maker_strategy,

+ experience_batch_size=args.experience_batch_size,

+ kl_coef=0.1,

+ #kwargs:

+ max_length=128,

+ do_sample=True,

+ temperature=1.0,

+ top_k=50,

+ pad_token_id=tokenizer.pad_token_id,

+ eos_token_id=tokenizer.eos_token_id,

+ debug=args.debug,

+ )

+

+ # the trainer sends its actor and critic to the experience holders.

+ ray.get(trainer_ref.initialize_remote_makers.remote())

+

+ # configure sampler

+ dataset = pd.read_csv(args.prompt_path)['prompt']

+

+ def tokenize_fn(texts):

+ # MUST pad to max length to ensure inputs of all ranks have the same length

+ # Different lengths may lead to a hang when using gemini, because ranks would run different numbers of generation steps

+ batch = tokenizer(texts, return_tensors='pt', max_length=96, padding='max_length', truncation=True)

+ return {k: v.cuda() for k, v in batch.items()}

+

+ trainer_done_ref = trainer_ref.fit.remote(num_episodes=args.num_episodes, max_timesteps=args.max_timesteps, update_timesteps=args.update_timesteps)

+ num_exp_per_maker = args.num_episodes * args.max_timesteps // args.update_timesteps * args.max_epochs // 2 + 3 # +3 for fault tolerance

+ maker_1_done_ref = experience_holder_1_ref.workingloop.remote(dataset, tokenize_fn, times=num_exp_per_maker)

+ maker_2_done_ref = experience_holder_2_ref.workingloop.remote(dataset, tokenize_fn, times=num_exp_per_maker)

+

+ ray.get([trainer_done_ref, maker_1_done_ref, maker_2_done_ref])

+

+ # save model checkpoint after fitting; ray.get blocks until the remote save has finished

+ ray.get(trainer_ref.strategy_save_actor.remote(args.save_path, only_rank0=True))

+ # save optimizer checkpoint on all ranks

+ if args.need_optim_ckpt:

+ ray.get(trainer_ref.strategy_save_actor_optim.remote('actor_optim_checkpoint_prompts_%d.pt' % (torch.cuda.current_device()),

+ only_rank0=False))

+

+if __name__ == '__main__':

+ parser = argparse.ArgumentParser()

+ parser.add_argument('prompt_path')

+ parser.add_argument('--trainer_strategy',

+ choices=['naive', 'ddp', 'colossalai_gemini', 'colossalai_zero2'],

+ default='naive')

+ parser.add_argument('--maker_strategy',

+ choices=['naive', 'ddp', 'colossalai_gemini', 'colossalai_zero2'],

+ default='naive')

+ parser.add_argument('--model', default='gpt2', choices=['gpt2', 'bloom', 'opt'])

+ parser.add_argument('--pretrain', type=str, default=None)

+ parser.add_argument('--save_path', type=str, default='actor_checkpoint_prompts.pt')

+ parser.add_argument('--need_optim_ckpt', action='store_true')

+ parser.add_argument('--num_episodes', type=int, default=10)

+ parser.add_argument('--max_timesteps', type=int, default=10)

+ parser.add_argument('--update_timesteps', type=int, default=10)

+ parser.add_argument('--max_epochs', type=int, default=5)

+ parser.add_argument('--train_batch_size', type=int, default=8)

+ parser.add_argument('--experience_batch_size', type=int, default=8)

+ parser.add_argument('--lora_rank', type=int, default=0, help="low-rank adaptation matrices rank")

+

+ parser.add_argument('--debug', action='store_true')

+ args = parser.parse_args()

+ ray.init(namespace=os.environ["RAY_NAMESPACE"])

+ main(args)

diff --git a/applications/Chat/coati/ray/example/2m1t.sh b/applications/Chat/coati/ray/example/2m1t.sh

new file mode 100644

index 000000000000..a207d4118d60

--- /dev/null

+++ b/applications/Chat/coati/ray/example/2m1t.sh

@@ -0,0 +1,23 @@

+set_n_least_used_CUDA_VISIBLE_DEVICES() {

+ local n=${1:-"9999"}

+ echo "GPU Memory Usage:"

+ local FIRST_N_GPU_IDS=$(nvidia-smi --query-gpu=memory.used --format=csv \

+ | tail -n +2 \

+ | nl -v 0 \

+ | tee /dev/tty \

+ | sort -g -k 2 \

+ | awk '{print $1}' \

+ | head -n $n)

+ export CUDA_VISIBLE_DEVICES=$(echo $FIRST_N_GPU_IDS | sed 's/ /,/g')

+ echo "Now CUDA_VISIBLE_DEVICES is set to:"

+ echo "CUDA_VISIBLE_DEVICES=$CUDA_VISIBLE_DEVICES"

+}

+

+set_n_least_used_CUDA_VISIBLE_DEVICES 3

+

+export RAY_NAMESPACE="admin"

+

+python 2m1t.py "/path/to/prompts.csv" \

+ --trainer_strategy naive --maker_strategy naive --lora_rank 2 --pretrain "facebook/opt-350m" --model 'opt' \

+ --num_episodes 10 --max_timesteps 10 --update_timesteps 10 \

+ --max_epochs 10 # --debug

diff --git a/applications/Chat/coati/ray/example/2m2t.py b/applications/Chat/coati/ray/example/2m2t.py

new file mode 100644

index 000000000000..435c71915fc2

--- /dev/null

+++ b/applications/Chat/coati/ray/example/2m2t.py

@@ -0,0 +1,209 @@

+import argparse

+from copy import deepcopy

+

+import pandas as pd

+import torch

+from coati.trainer import PPOTrainer

+

+

+from coati.ray.src.experience_maker_holder import ExperienceMakerHolder

+from coati.ray.src.detached_trainer_ppo import DetachedPPOTrainer

+

+from coati.trainer.strategies import ColossalAIStrategy, DDPStrategy, NaiveStrategy

+from coati.experience_maker import NaiveExperienceMaker

+from torch.optim import Adam

+from transformers import AutoTokenizer, BloomTokenizerFast

+from transformers.models.gpt2.tokenization_gpt2 import GPT2Tokenizer

+

+from colossalai.nn.optimizer import HybridAdam

+

+import ray

+import os

+import socket

+

+

+def get_free_port():

+ with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:

+ s.bind(('', 0))

+ return s.getsockname()[1]

+

+

+def get_local_ip():

+ with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as s:

+ s.connect(('8.8.8.8', 80))

+ return s.getsockname()[0]

+

+def main(args):

+ master_addr = str(get_local_ip())

+ # trainer_env_info

+ trainer_port = str(get_free_port())

+ env_info_trainer_1 = {'local_rank' : '0',

+ 'rank' : '0',

+ 'world_size' : '2',

+ 'master_port' : trainer_port,

+ 'master_addr' : master_addr}

+ env_info_trainer_2 = {'local_rank' : '0',

+ 'rank' : '1',

+ 'world_size' : '2',

+ 'master_port' : trainer_port,

+ 'master_addr' : master_addr}

+ # maker_env_info

+ maker_port = str(get_free_port())

+ env_info_maker_1 = {'local_rank' : '0',

+ 'rank' : '0',

+ 'world_size' : '2',

+ 'master_port' : maker_port,

+ 'master_addr' : master_addr}

+ env_info_maker_2 = {'local_rank' : '0',

+ 'rank' : '1',

+ 'world_size' : '2',

+ 'master_port': maker_port,

+ 'master_addr' : master_addr}

+ print([env_info_trainer_1,

+ env_info_trainer_2,

+ env_info_maker_1,

+ env_info_maker_2])

+ ray.init()

+ # configure tokenizer

+ if args.model == 'gpt2':

+ tokenizer = GPT2Tokenizer.from_pretrained('gpt2')

+ tokenizer.pad_token = tokenizer.eos_token

+ elif args.model == 'bloom':

+ tokenizer = BloomTokenizerFast.from_pretrained(args.pretrain)

+ tokenizer.pad_token = tokenizer.eos_token

+ elif args.model == 'opt':

+ tokenizer = AutoTokenizer.from_pretrained("facebook/opt-350m")

+ else:

+ raise ValueError(f'Unsupported model "{args.model}"')

+

+ # configure Trainer

+ trainer_1_ref = DetachedPPOTrainer.options(name="trainer1", namespace=os.environ["RAY_NAMESPACE"], num_gpus=1, max_concurrency=2).remote(

+ experience_maker_holder_name_list=["maker1", "maker2"],

+ strategy=args.trainer_strategy,

+ model=args.model,

+ env_info=env_info_trainer_1,

+ pretrained=args.pretrain,

+ lora_rank=args.lora_rank,

+ train_batch_size=args.train_batch_size,

+ buffer_limit=16,

+ experience_batch_size=args.experience_batch_size,

+ max_epochs=args.max_epochs,

+ #kwargs:

+ max_length=128,

+ do_sample=True,

+ temperature=1.0,

+ top_k=50,

+ pad_token_id=tokenizer.pad_token_id,

+ eos_token_id=tokenizer.eos_token_id,

+ debug=args.debug,

+ )

+

+ trainer_2_ref = DetachedPPOTrainer.options(name="trainer2", namespace=os.environ["RAY_NAMESPACE"], num_gpus=1, max_concurrency=2).remote(

+ experience_maker_holder_name_list=["maker1", "maker2"],

+ strategy=args.trainer_strategy,

+ model=args.model,

+ env_info=env_info_trainer_2,

+ pretrained=args.pretrain,

+ lora_rank=args.lora_rank,

+ train_batch_size=args.train_batch_size,

+ buffer_limit=16,

+ experience_batch_size=args.experience_batch_size,

+ max_epochs=args.max_epochs,

+ #kwargs:

+ max_length=128,

+ do_sample=True,

+ temperature=1.0,

+ top_k=50,

+ pad_token_id=tokenizer.pad_token_id,

+ eos_token_id=tokenizer.eos_token_id,

+ debug=args.debug,

+ )

+

+ # configure Experience Maker

+ experience_holder_1_ref = ExperienceMakerHolder.options(name="maker1", namespace=os.environ["RAY_NAMESPACE"], num_gpus=1, max_concurrency=2).remote(

+ detached_trainer_name_list=["trainer1", "trainer2"],

+ strategy=args.maker_strategy,

+ env_info=env_info_maker_1,

+ experience_batch_size=args.experience_batch_size,

+ kl_coef=0.1,

+ #kwargs:

+ max_length=128,

+ do_sample=True,

+ temperature=1.0,

+ top_k=50,

+ pad_token_id=tokenizer.pad_token_id,

+ eos_token_id=tokenizer.eos_token_id,

+ debug=args.debug,

+ )

+

+ experience_holder_2_ref = ExperienceMakerHolder.options(name="maker2", namespace=os.environ["RAY_NAMESPACE"], num_gpus=1, max_concurrency=2).remote(

+ detached_trainer_name_list=["trainer1", "trainer2"],

+ strategy=args.maker_strategy,

+ env_info=env_info_maker_2,

+ experience_batch_size=args.experience_batch_size,

+ kl_coef=0.1,

+ #kwargs:

+ max_length=128,

+ do_sample=True,

+ temperature=1.0,

+ top_k=50,

+ pad_token_id=tokenizer.pad_token_id,

+ eos_token_id=tokenizer.eos_token_id,

+ debug=args.debug,

+ )

+

+ # the trainer sends its actor and critic to the experience holders.

+ # TODO: balance duty

+ ray.get(trainer_1_ref.initialize_remote_makers.remote())

+

+ # configure sampler

+ dataset = pd.read_csv(args.prompt_path)['prompt']

+

+ def tokenize_fn(texts):

+ # MUST pad to max length to ensure inputs of all ranks have the same length

+ # Different lengths may lead to a hang when using gemini, because ranks would run different numbers of generation steps

+ batch = tokenizer(texts, return_tensors='pt', max_length=96, padding='max_length', truncation=True)

+ return {k: v.cuda() for k, v in batch.items()}

+

+ trainer_1_done_ref = trainer_1_ref.fit.remote(num_episodes=args.num_episodes, max_timesteps=args.max_timesteps, update_timesteps=args.update_timesteps)

+ trainer_2_done_ref = trainer_2_ref.fit.remote(num_episodes=args.num_episodes, max_timesteps=args.max_timesteps, update_timesteps=args.update_timesteps)

+ num_exp_per_maker = args.num_episodes * args.max_timesteps // args.update_timesteps * args.max_epochs + 3 # +3 for fault tolerance

+ maker_1_done_ref = experience_holder_1_ref.workingloop.remote(dataset, tokenize_fn, times=num_exp_per_maker)

+ maker_2_done_ref = experience_holder_2_ref.workingloop.remote(dataset, tokenize_fn, times=num_exp_per_maker)

+

+ ray.get([trainer_1_done_ref, trainer_2_done_ref, maker_1_done_ref, maker_2_done_ref])

+ # save model checkpoint after fitting; ray.get blocks until the remote saves have finished

+ ray.get(trainer_1_ref.strategy_save_actor.remote(args.save_path, only_rank0=True))

+ ray.get(trainer_2_ref.strategy_save_actor.remote(args.save_path, only_rank0=True))

+ # save optimizer checkpoint on all ranks

+ if args.need_optim_ckpt:

+ ray.get(trainer_1_ref.strategy_save_actor_optim.remote('actor_optim_checkpoint_prompts_%d.pt' % (torch.cuda.current_device()),

+ only_rank0=False))

+ ray.get(trainer_2_ref.strategy_save_actor_optim.remote('actor_optim_checkpoint_prompts_%d.pt' % (torch.cuda.current_device()),

+ only_rank0=False))

+

+

+if __name__ == '__main__':

+ parser = argparse.ArgumentParser()

+ parser.add_argument('prompt_path')

+ parser.add_argument('--trainer_strategy',

+ choices=['naive', 'ddp', 'colossalai_gemini', 'colossalai_zero2'],

+ default='naive')

+ parser.add_argument('--maker_strategy',

+ choices=['naive', 'ddp', 'colossalai_gemini', 'colossalai_zero2'],

+ default='naive')

+ parser.add_argument('--model', default='gpt2', choices=['gpt2', 'bloom', 'opt'])

+ parser.add_argument('--pretrain', type=str, default=None)

+ parser.add_argument('--save_path', type=str, default='actor_checkpoint_prompts.pt')

+ parser.add_argument('--need_optim_ckpt', action='store_true')

+ parser.add_argument('--num_episodes', type=int, default=10)

+ parser.add_argument('--max_timesteps', type=int, default=10)

+ parser.add_argument('--update_timesteps', type=int, default=10)

+ parser.add_argument('--max_epochs', type=int, default=5)

+ parser.add_argument('--train_batch_size', type=int, default=8)

+ parser.add_argument('--experience_batch_size', type=int, default=8)

+ parser.add_argument('--lora_rank', type=int, default=0, help="low-rank adaptation matrices rank")

+

+ parser.add_argument('--debug', action='store_true')

+ args = parser.parse_args()

+ main(args)
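
Reviewer note: the four example scripts compute `num_exp_per_maker` with slightly different formulas (`* 2` for one maker and two trainers, `// 2` for two makers and one trainer, no factor otherwise). The sketch below reproduces those four cases with a single hypothetical function. The generalisation to arbitrary trainer and maker counts is an extrapolation from the four scripts, not something the PR states.

```python
def num_exp_per_maker(num_episodes: int,
                      max_timesteps: int,
                      update_timesteps: int,
                      max_epochs: int,
                      n_trainers: int = 1,
                      n_makers: int = 1,
                      slack: int = 3) -> int:
    """Number of experience batches each maker produces, plus a small slack."""
    # same left-to-right evaluation as in the scripts
    base = num_episodes * max_timesteps // update_timesteps * max_epochs
    # demand scales with the number of trainers and is shared among the makers
    return base * n_trainers // n_makers + slack


# argparse defaults from the scripts: 10 episodes, 10 timesteps, update every 10, 5 epochs
defaults = dict(num_episodes=10, max_timesteps=10, update_timesteps=10, max_epochs=5)
print(num_exp_per_maker(**defaults, n_trainers=1, n_makers=1))    # 1m1t
print(num_exp_per_maker(**defaults, n_trainers=2, n_makers=1))    # 1m2t
print(num_exp_per_maker(**defaults, n_trainers=1, n_makers=2))    # 2m1t
print(num_exp_per_maker(**defaults, n_trainers=2, n_makers=2))    # 2m2t
```

With these defaults the four calls evaluate to 53, 103, 28 and 53 respectively, matching what the individual scripts compute.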

diff --git a/applications/Chat/coati/ray/example/2m2t.sh b/applications/Chat/coati/ray/example/2m2t.sh

new file mode 100644

index 000000000000..fb4024766c54

--- /dev/null

+++ b/applications/Chat/coati/ray/example/2m2t.sh

@@ -0,0 +1,23 @@

+set_n_least_used_CUDA_VISIBLE_DEVICES() {

+ local n=${1:-"9999"}

+ echo "GPU Memory Usage:"

+ local FIRST_N_GPU_IDS=$(nvidia-smi --query-gpu=memory.used --format=csv \

+ | tail -n +2 \

+ | nl -v 0 \

+ | tee /dev/tty \

+ | sort -g -k 2 \

+ | awk '{print $1}' \

+ | head -n $n)

+ export CUDA_VISIBLE_DEVICES=$(echo $FIRST_N_GPU_IDS | sed 's/ /,/g')

+ echo "Now CUDA_VISIBLE_DEVICES is set to:"

+ echo "CUDA_VISIBLE_DEVICES=$CUDA_VISIBLE_DEVICES"

+}

+

+set_n_least_used_CUDA_VISIBLE_DEVICES 4

+

+export RAY_NAMESPACE="admin"

+

+python 2m2t.py "/path/to/prompts.csv" \

+ --maker_strategy naive --trainer_strategy colossalai_zero2 --lora_rank 2 \

+ --num_episodes 10 --max_timesteps 10 --update_timesteps 10 \

+ --max_epochs 10 --debug

\ No newline at end of file

diff --git a/applications/Chat/coati/ray/src/__init__.py b/applications/Chat/coati/ray/src/__init__.py

new file mode 100644

index 000000000000..e69de29bb2d1

diff --git a/applications/Chat/coati/ray/src/detached_replay_buffer.py b/applications/Chat/coati/ray/src/detached_replay_buffer.py

new file mode 100644

index 000000000000..855eee48c5a5

--- /dev/null

+++ b/applications/Chat/coati/ray/src/detached_replay_buffer.py

@@ -0,0 +1,88 @@

+import torch

+import random

+from typing import List, Any

+# from torch.multiprocessing import Queue

+from ray.util.queue import Queue

+import ray

+import asyncio

+from coati.experience_maker.base import Experience

+from coati.replay_buffer.utils import BufferItem, make_experience_batch, split_experience_batch

+from coati.replay_buffer import ReplayBuffer

+from threading import Lock

+import copy

+

+class DetachedReplayBuffer:

+ '''

+ Detached replay buffer. Shares experience across workers on the same node,

+ so a trainer node is expected to hold only one instance.

+ The ExperienceMakerHolder is responsible for calling append(exp) remotely.

+

+ Args:

+ sample_batch_size: Batch size when sampling. Experiences are not enqueued until they form a full batch.

+ tp_world_size: Number of workers in the same tp group.

+ limit: Maximum number of experience batches held in the queue. A number <= 0 means unlimited. Defaults to 0.

+ cpu_offload: Whether to move experience to the CPU when it is appended. Defaults to True.

+ '''

+

+ def __init__(self, sample_batch_size: int, tp_world_size: int = 1, limit : int = 0, cpu_offload: bool = True) -> None:

+ self.cpu_offload = cpu_offload

+ self.sample_batch_size = sample_batch_size

+ self.limit = limit

+ self.items = Queue(self.limit, actor_options={"num_cpus":1})

+ self.batch_collector : List[BufferItem] = []

+

+ '''

+ Workers in the same tp group share this buffer and need the same sample for one step.

+ Therefore a held_sample should be returned tp_world_size times before it can be dropped.

+ worker_state records whether each worker has received the held_sample.

+ '''

+ self.tp_world_size = tp_world_size

+ self.worker_state = [False] * self.tp_world_size

+ self.held_sample = None

+ self._worker_state_lock = Lock()

+

+ @torch.no_grad()

+ def append(self, experience: Experience) -> None:

+ '''

+ Expected to be called remotely.

+ '''

+ if self.cpu_offload:

+ experience.to_device(torch.device('cpu'))

+ items = split_experience_batch(experience)

+ self.batch_collector.extend(items)

+ while len(self.batch_collector) >= self.sample_batch_size:

+ items = self.batch_collector[:self.sample_batch_size]

+ experience = make_experience_batch(items)

+ self.items.put(experience, block=True)

+ self.batch_collector = self.batch_collector[self.sample_batch_size:]

+

+ def clear(self) -> None:

+ # self.items.close()

+ self.items.shutdown()

+ self.items = Queue(self.limit, actor_options={"num_cpus":1})

+ self.worker_state = [False] * self.tp_world_size

+ self.batch_collector = []

+

+ @torch.no_grad()

+ def sample(self, worker_rank = 0, to_device = "cpu") -> Experience:

+ self._worker_state_lock.acquire()

+ if not any(self.worker_state):

+ self.held_sample = self._sample_and_erase()

+ self.worker_state[worker_rank] = True

+ if all(self.worker_state):

+ self.worker_state = [False] * self.tp_world_size

+ ret = self.held_sample

+ else:

+ ret = copy.deepcopy(self.held_sample)

+ self._worker_state_lock.release()

+ ret.to_device(to_device)

+ return ret

+

+ @torch.no_grad()

+ def _sample_and_erase(self) -> Experience:

+ ret = self.items.get(block=True)

+ return ret

+

+ def get_length(self) -> int:

+ ret = self.items.qsize()

+ return ret

\ No newline at end of file

diff --git a/applications/Chat/coati/ray/src/detached_trainer_base.py b/applications/Chat/coati/ray/src/detached_trainer_base.py

new file mode 100644

index 000000000000..f1ed1ec71499

--- /dev/null

+++ b/applications/Chat/coati/ray/src/detached_trainer_base.py

@@ -0,0 +1,121 @@

+from abc import ABC, abstractmethod

+from typing import Any, Callable, Dict, List, Optional, Union

+from tqdm import tqdm

+from coati.trainer.callbacks import Callback

+from coati.experience_maker import Experience

+import ray

+import os

+

+from .detached_replay_buffer import DetachedReplayBuffer

+from .utils import is_rank_0

+

+class DetachedTrainer(ABC):

+ '''

+ Base class for detached RLHF trainers.

+ 'Detached' means that the experience maker is separated from the trainer, unlike in a normal Trainer.

+ Please set name attribute during init:

+ >>> trainer = DetachedTrainer.options(..., name = "xxx", ...).remote()

+ So an ExperienceMakerHolder can reach the detached_replay_buffer by Actor's name.

+ Args:

+ experience_maker_holder_name_list (List[str]): the names of the ExperienceMakerHolder actors to look up

+ train_batch_size (int, defaults to 8): the batch size to use for training

+ buffer_limit (int, defaults to 0): the max_size limitation of replay buffer

+ buffer_cpu_offload (bool, defaults to True): whether to offload the replay buffer to cpu

+ experience_batch_size (int, defaults to 8): the batch size to use for experience generation

+ max_epochs (int, defaults to 1): the number of epochs of training process

+ dataloader_pin_memory (bool, defaults to True): whether to pin memory for data loader

+ callbacks (List[Callback], defaults to []): the callbacks to call during training process

+ generate_kwargs (dict, optional): the kwargs to use while model generating

+ '''

+

+ def __init__(self,

+ experience_maker_holder_name_list: List[str],

+ train_batch_size: int = 8,

+ buffer_limit: int = 0,

+ buffer_cpu_offload: bool = True,

+ experience_batch_size: int = 8,

+ max_epochs: int = 1,

+ dataloader_pin_memory: bool = True,

+ callbacks: List[Callback] = [],

+ **generate_kwargs) -> None:

+ super().__init__()

+ self.detached_replay_buffer = DetachedReplayBuffer(train_batch_size, limit=buffer_limit, cpu_offload=buffer_cpu_offload)

+ self.experience_batch_size = experience_batch_size

+ self.max_epochs = max_epochs

+ self.dataloader_pin_memory = dataloader_pin_memory

+ self.callbacks = callbacks

+ self.generate_kwargs = generate_kwargs

+ self.target_holder_name_list = experience_maker_holder_name_list

+ self.target_holder_list = []

+

+ def update_target_holder_list(self, experience_maker_holder_name_list):

+ self.target_holder_name_list = experience_maker_holder_name_list

+ self.target_holder_list = []

+ for name in self.target_holder_name_list:

+ self.target_holder_list.append(ray.get_actor(name, namespace=os.environ["RAY_NAMESPACE"]))

+

+ @abstractmethod

+ def _update_remote_makers(self):

+ pass

+

+ @abstractmethod

+ def training_step(self, experience: Experience) -> Dict[str, Any]:

+ pass

+

+ def _learn(self):

+ pbar = tqdm(range(self.max_epochs), desc='Train epoch', disable=not is_rank_0())

+ for _ in pbar:

+ if 'debug' in self.generate_kwargs and self.generate_kwargs['debug'] == True:

+ print("[trainer] sampling exp")

+ experience = self._buffer_sample()

+ if 'debug' in self.generate_kwargs and self.generate_kwargs['debug'] == True:

+ print("[trainer] training step")

+ metrics = self.training_step(experience)

+ if 'debug' in self.generate_kwargs and self.generate_kwargs['debug'] == True:

+ print("[trainer] step over")

+ pbar.set_postfix(metrics)

+

+ def fit(self, num_episodes: int = 50000, max_timesteps: int = 500, update_timesteps: int = 5000) -> None:

+ self._on_fit_start()

+ for episode in range(num_episodes):

+ self._on_episode_start(episode)

+ for timestep in tqdm(range(max_timesteps // update_timesteps),

+ desc=f'Episode [{episode+1}/{num_episodes}]',

+ disable=not is_rank_0()):

+ self._learn()

+ self._update_remote_makers()

+ self._on_episode_end(episode)

+ self._on_fit_end()

+

+ @ray.method(concurrency_group="buffer_length")

+ def buffer_get_length(self):

+ # called by ExperienceMakerHolder

+ if 'debug' in self.generate_kwargs and self.generate_kwargs['debug'] == True:

+ print("[trainer] telling length")

+ return self.detached_replay_buffer.get_length()

+

+ @ray.method(concurrency_group="buffer_append")

+ def buffer_append(self, experience: Experience):

+ # called by ExperienceMakerHolder

+ if 'debug' in self.generate_kwargs and self.generate_kwargs['debug'] == True:

+ # print(f"[trainer] receiving exp. Current buffer length: {self.detached_replay_buffer.get_length()}")

+ print("[trainer] receiving exp.")

+ self.detached_replay_buffer.append(experience)

+

+ @ray.method(concurrency_group="buffer_sample")

+ def _buffer_sample(self):

+ return self.detached_replay_buffer.sample()

+

+ def _on_fit_start(self) -> None:

+ for callback in self.callbacks:

+ callback.on_fit_start()

+

+ def _on_fit_end(self) -> None:

+ for callback in self.callbacks:

+ callback.on_fit_end()

+

+ def _on_episode_start(self, episode: int) -> None:

+ for callback in self.callbacks:

+ callback.on_episode_start(episode)

+

+ def _on_episode_end(self, episode: int) -> None:

+ for callback in self.callbacks:

+ callback.on_episode_end(episode)

diff --git a/applications/Chat/coati/ray/src/detached_trainer_ppo.py b/applications/Chat/coati/ray/src/detached_trainer_ppo.py

new file mode 100644

index 000000000000..838e82d07f4a

--- /dev/null

+++ b/applications/Chat/coati/ray/src/detached_trainer_ppo.py

@@ -0,0 +1,192 @@

+from typing import Any, Callable, Dict, List, Optional

+import torch

+from torch.optim import Adam

+

+from coati.experience_maker import Experience, NaiveExperienceMaker

+from coati.models.base import Actor, Critic

+from coati.models.generation_utils import update_model_kwargs_fn

+from coati.models.loss import PolicyLoss, ValueLoss

+from coati.trainer.strategies import ColossalAIStrategy, DDPStrategy, NaiveStrategy, Strategy

+from coati.trainer.callbacks import Callback

+

+from colossalai.nn.optimizer import HybridAdam

+

+import ray

+

+

+from .utils import is_rank_0, get_cuda_actor_critic_from_args, get_strategy_from_args, set_dist_env

+from .detached_trainer_base import DetachedTrainer

+

+

+@ray.remote(concurrency_groups={"buffer_length": 1, "buffer_append": 1, "buffer_sample": 1, "model_io": 1, "compute": 1})

+class DetachedPPOTrainer(DetachedTrainer):

+ '''

+ Detached Trainer for PPO algorithm

+ Args:

+ strategy (Strategy): the strategy to use for training

+ model (str) : for actor / critic init

+ pretrained (str) : for actor / critic init

+ lora_rank (int) : for actor / critic init

+ train_batch_size (int, defaults to 8): the batch size to use for training

+ buffer_limit (int, defaults to 0): the max_size limitation of replay buffer