- [](https://github.com/hpcaitech/ColossalAI/actions/workflows/build.yml)

+ [](https://github.com/hpcaitech/ColossalAI/stargazers)

+ [](https://github.com/hpcaitech/ColossalAI/actions/workflows/build_on_schedule.yml)

[](https://colossalai.readthedocs.io/en/latest/?badge=latest)

[](https://www.codefactor.io/repository/github/hpcaitech/colossalai)

[](https://huggingface.co/hpcai-tech)

@@ -19,15 +20,16 @@

[](https://raw.githubusercontent.com/hpcaitech/public_assets/main/colossalai/img/WeChat.png)

- | [English](README.md) | [中文](README-zh-Hans.md) |

+ | [English](README.md) | [中文](docs/README-zh-Hans.md) |

## Latest News

-* [2023/01] [Hardware Savings Up to 46 Times for AIGC and Automatic Parallelism](https://www.hpc-ai.tech/blog/colossal-ai-0-2-0)

+* [2023/03] [AWS and Google Fund Colossal-AI with Startup Cloud Programs](https://www.hpc-ai.tech/blog/aws-and-google-fund-colossal-ai-with-startup-cloud-programs)

+* [2023/02] [Open source solution replicates ChatGPT training process! Ready to go with only 1.6GB GPU memory](https://www.hpc-ai.tech/blog/colossal-ai-chatgpt)

+* [2023/01] [Hardware Savings Up to 46 Times for AIGC and Automatic Parallelism](https://medium.com/pytorch/latest-colossal-ai-boasts-novel-automatic-parallelism-and-offers-savings-up-to-46x-for-stable-1453b48f3f02)

* [2022/11] [Diffusion Pretraining and Hardware Fine-Tuning Can Be Almost 7X Cheaper](https://www.hpc-ai.tech/blog/diffusion-pretraining-and-hardware-fine-tuning-can-be-almost-7x-cheaper)

* [2022/10] [Use a Laptop to Analyze 90% of Proteins, With a Single-GPU Inference Sequence Exceeding 10,000](https://www.hpc-ai.tech/blog/use-a-laptop-to-analyze-90-of-proteins-with-a-single-gpu-inference-sequence-exceeding)

-* [2022/10] [Embedding Training With 1% GPU Memory and 100 Times Less Budget for Super-Large Recommendation Model](https://www.hpc-ai.tech/blog/embedding-training-with-1-gpu-memory-and-10-times-less-budget-an-open-source-solution-for)

* [2022/09] [HPC-AI Tech Completes $6 Million Seed and Angel Round Fundraising](https://www.hpc-ai.tech/blog/hpc-ai-tech-completes-6-million-seed-and-angel-round-fundraising-led-by-bluerun-ventures-in-the)

## Table of Contents

@@ -58,12 +60,13 @@

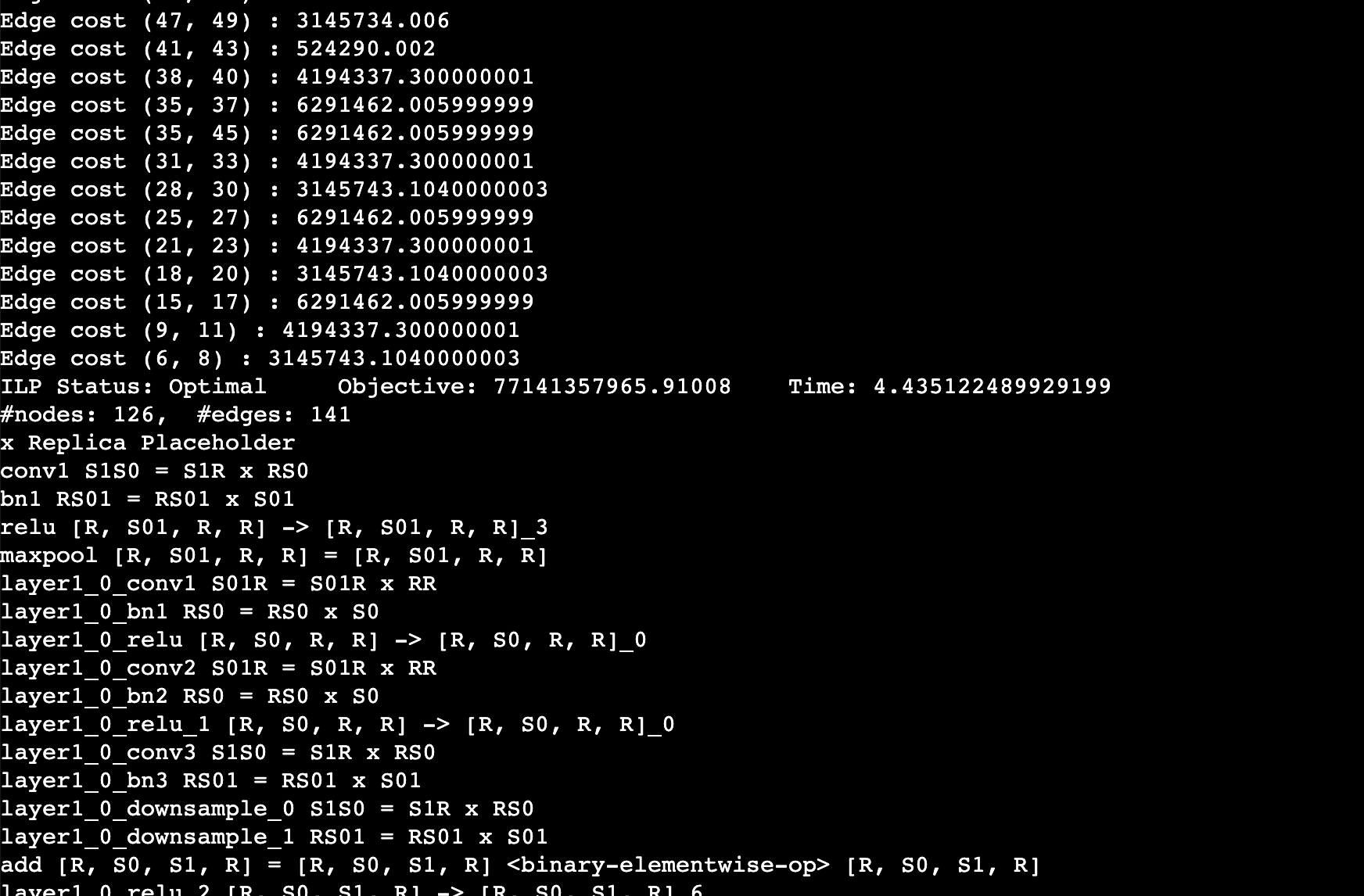

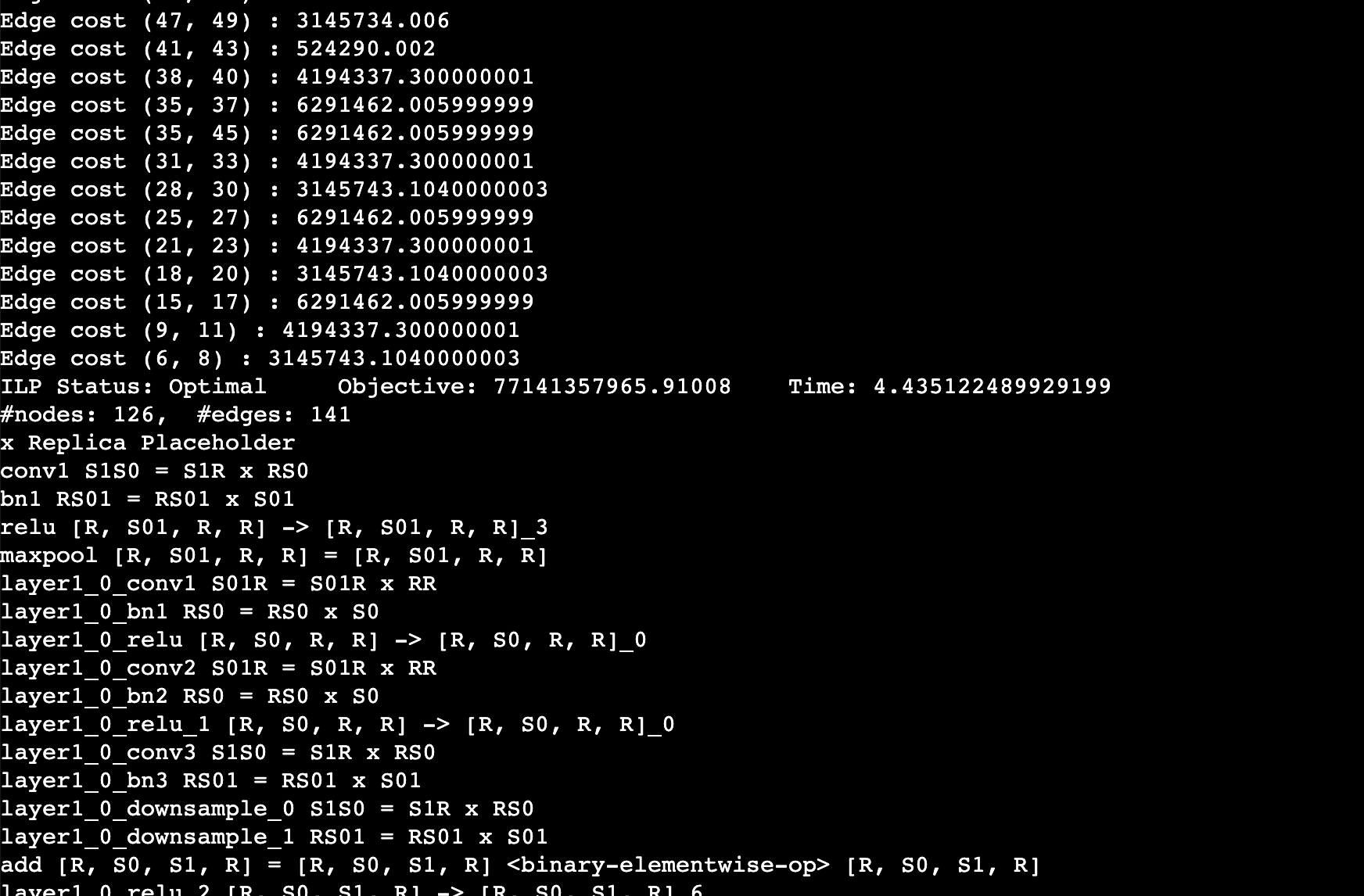

@@ -104,7 +107,7 @@ distributed training and inference in a few lines.

- 1D, [2D](https://arxiv.org/abs/2104.05343), [2.5D](https://arxiv.org/abs/2105.14500), [3D](https://arxiv.org/abs/2105.14450) Tensor Parallelism

- [Sequence Parallelism](https://arxiv.org/abs/2105.13120)

- [Zero Redundancy Optimizer (ZeRO)](https://arxiv.org/abs/1910.02054)

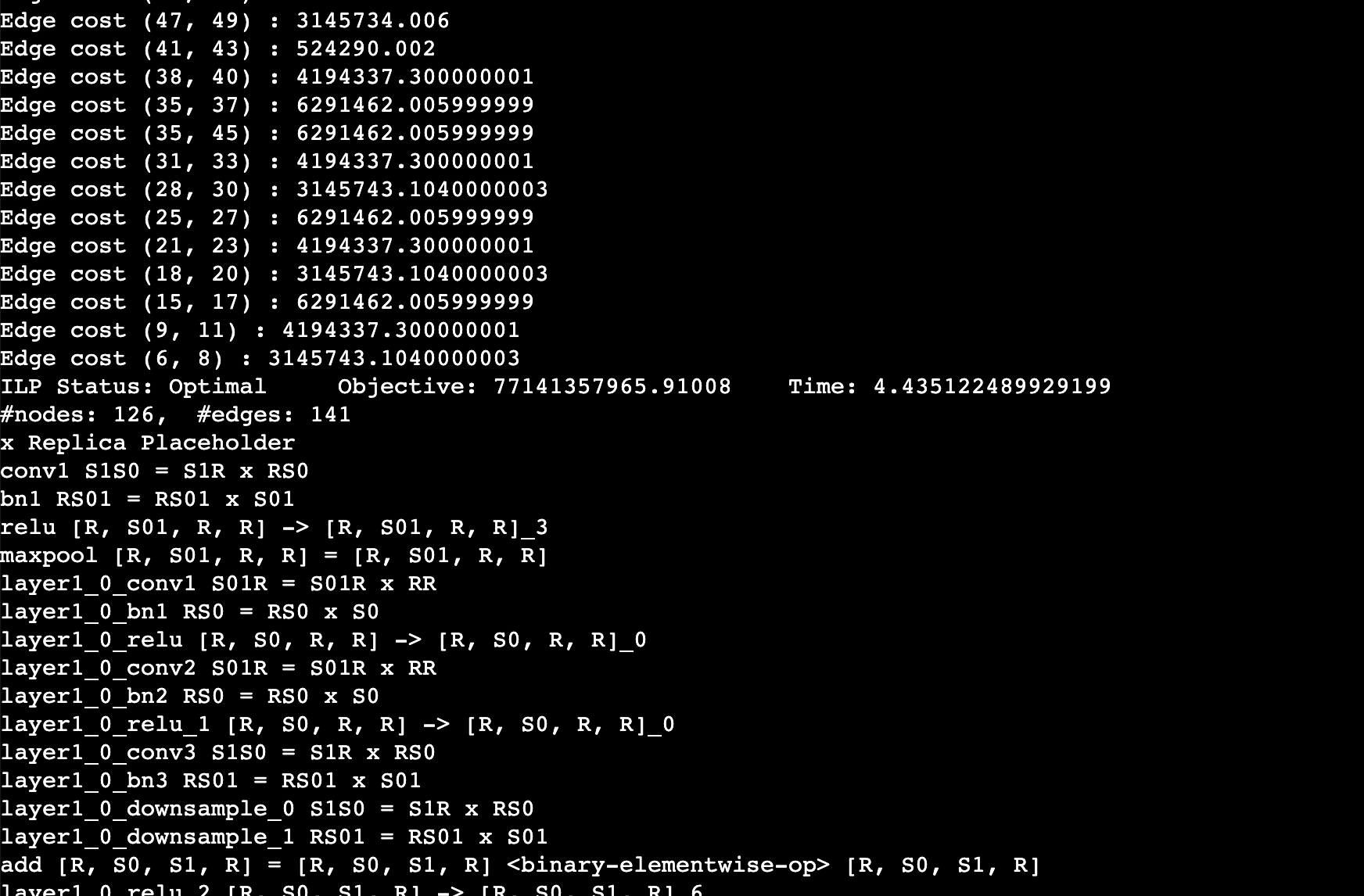

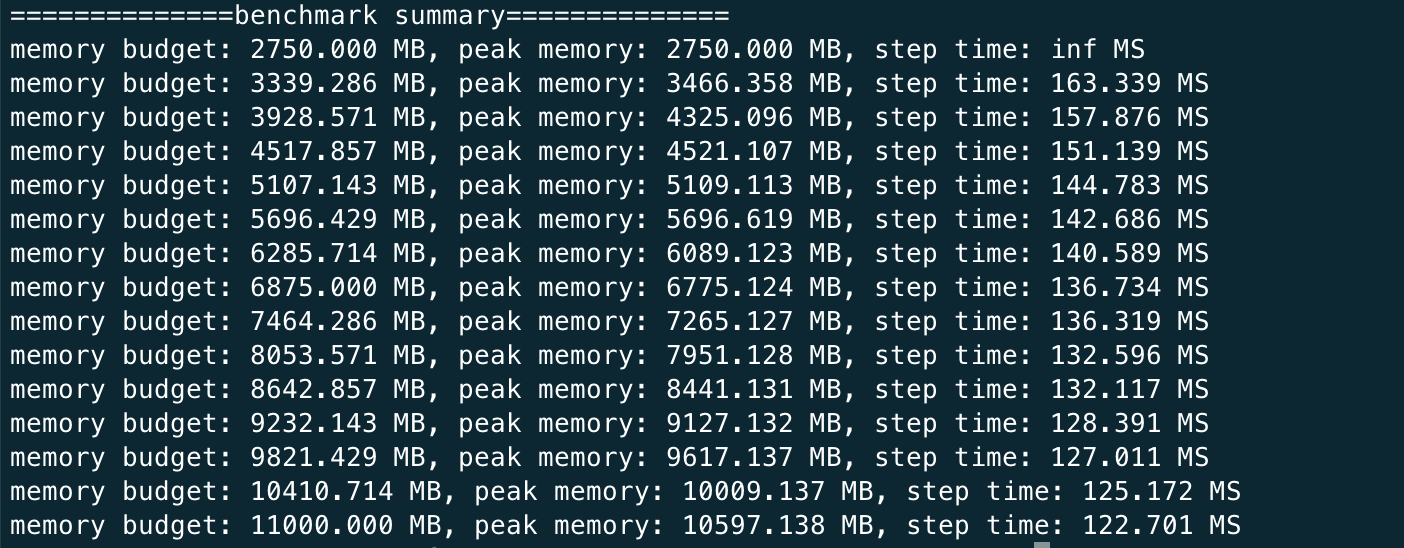

- - [Auto-Parallelism](https://github.com/hpcaitech/ColossalAI/tree/main/examples/language/gpt/auto_parallel_with_gpt)

+ - [Auto-Parallelism](https://arxiv.org/abs/2302.02599)

- Heterogeneous Memory Management

- [PatrickStar](https://arxiv.org/abs/2108.05818)

@@ -115,8 +118,6 @@ distributed training and inference in a few lines.

- Inference

- [Energon-AI](https://github.com/hpcaitech/EnergonAI)

-- Colossal-AI in the Real World

- - Biomedicine: [FastFold](https://github.com/hpcaitech/FastFold) accelerates training and inference of AlphaFold protein structure

## Parallel Training Demo

@@ -149,9 +150,9 @@ distributed training and inference in a few lines.

- [Open Pretrained Transformer (OPT)](https://github.com/facebookresearch/metaseq), a 175-billion-parameter AI language model released by Meta, whose publicly available pretrained weights enable AI programmers to perform various downstream tasks and application deployments.

-- 45% speedup fine-tuning OPT at low cost in lines. [[Example]](https://github.com/hpcaitech/ColossalAI-Examples/tree/main/language/opt) [[Online Serving]](https://service.colossalai.org/opt)

+- 45% speedup when fine-tuning OPT at low cost, in a few lines of code. [[Example]](https://github.com/hpcaitech/ColossalAI/tree/main/examples/language/opt) [[Online Serving]](https://colossalai.org/docs/advanced_tutorials/opt_service)

-Please visit our [documentation](https://www.colossalai.org/) and [examples](https://github.com/hpcaitech/ColossalAI-Examples) for more details.

+Please visit our [documentation](https://www.colossalai.org/) and [examples](https://github.com/hpcaitech/ColossalAI/tree/main/examples) for more details.

### ViT

@@ -199,20 +200,44 @@ Please visit our [documentation](https://www.colossalai.org/) and [examples](htt

- [Energon-AI](https://github.com/hpcaitech/EnergonAI): 50% inference acceleration on the same hardware

-

+

-- [OPT Serving](https://service.colossalai.org/opt): Try 175-billion-parameter OPT online services for free, without any registration whatsoever.

+- [OPT Serving](https://colossalai.org/docs/advanced_tutorials/opt_service): Try 175-billion-parameter OPT online services

-- [BLOOM](https://github.com/hpcaitech/EnergonAI/tree/main/examples/bloom): Reduce hardware deployment costs of 175-billion-parameter BLOOM by more than 10 times.

+- [BLOOM](https://github.com/hpcaitech/EnergonAI/tree/main/examples/bloom): Reduce hardware deployment costs of 176-billion-parameter BLOOM by more than 10 times.

## Colossal-AI in the Real World

+### ChatGPT

+A low-cost [ChatGPT](https://openai.com/blog/chatgpt/) equivalent implementation process. [[code]](https://github.com/hpcaitech/ColossalAI/tree/main/applications/ChatGPT) [[blog]](https://www.hpc-ai.tech/blog/colossal-ai-chatgpt)

+

+

+

+

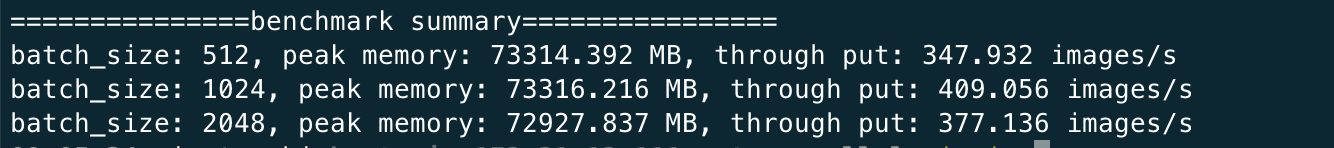

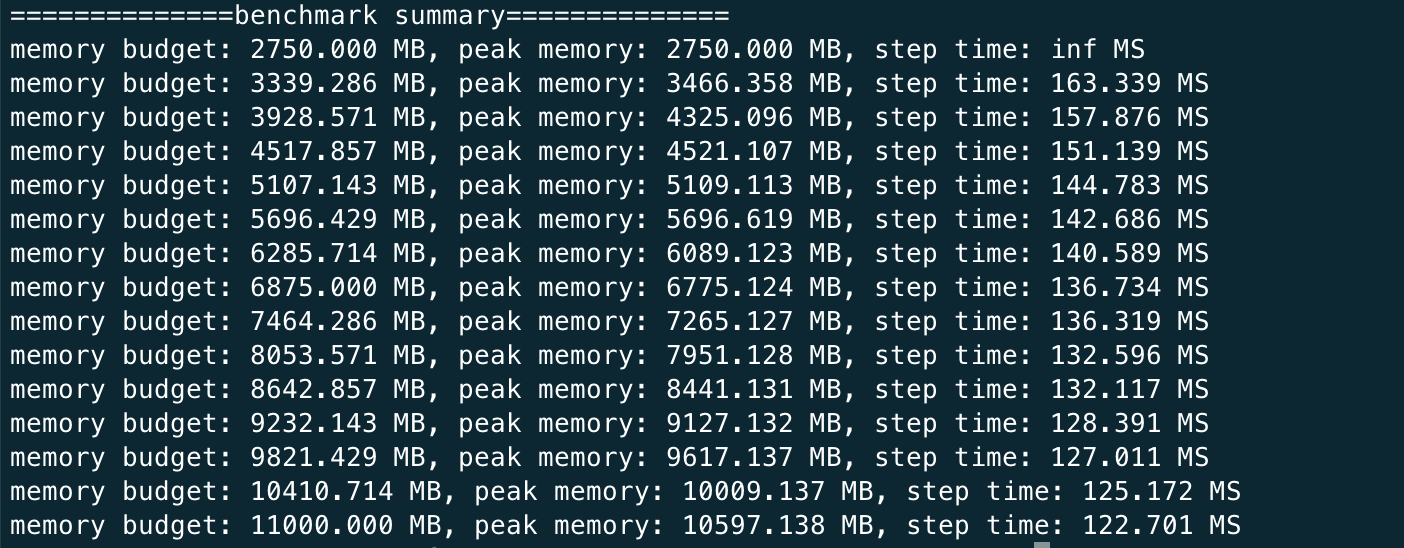

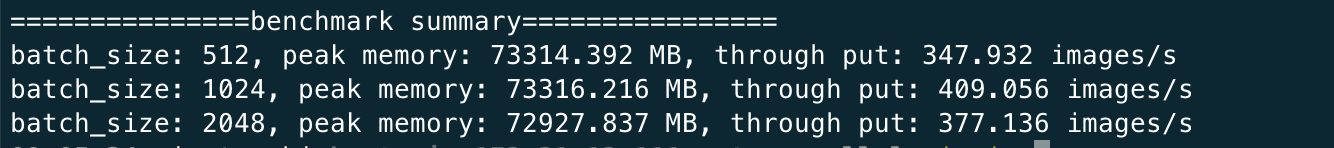

+- Up to 7.73 times faster for single server training and 1.42 times faster for single-GPU inference

+

+

+

+

+

+- Up to 10.3x growth in model capacity on one GPU

+- A mini demo training process requires only 1.62GB of GPU memory (any consumer-grade GPU)

+

+

+

+

+

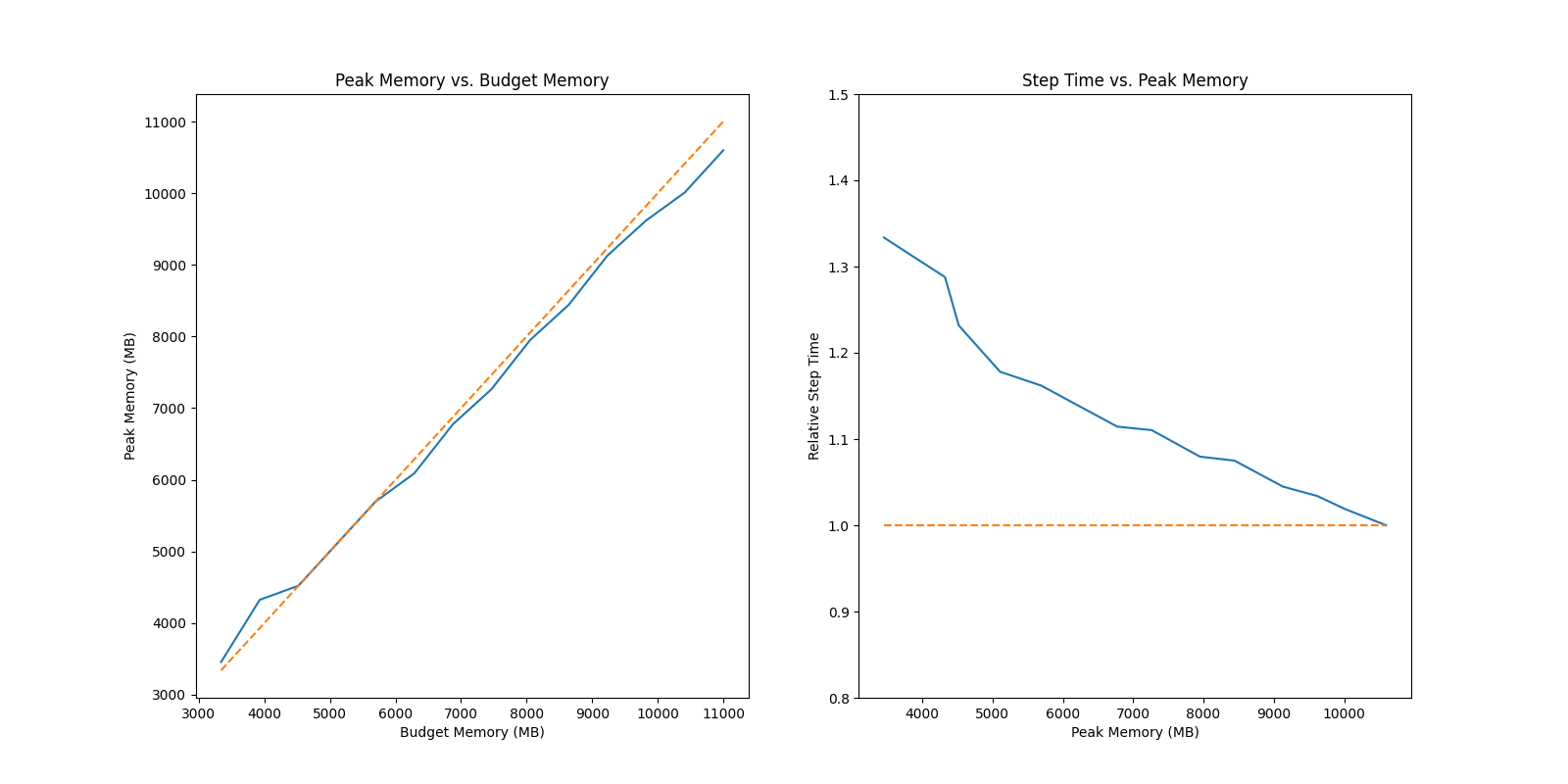

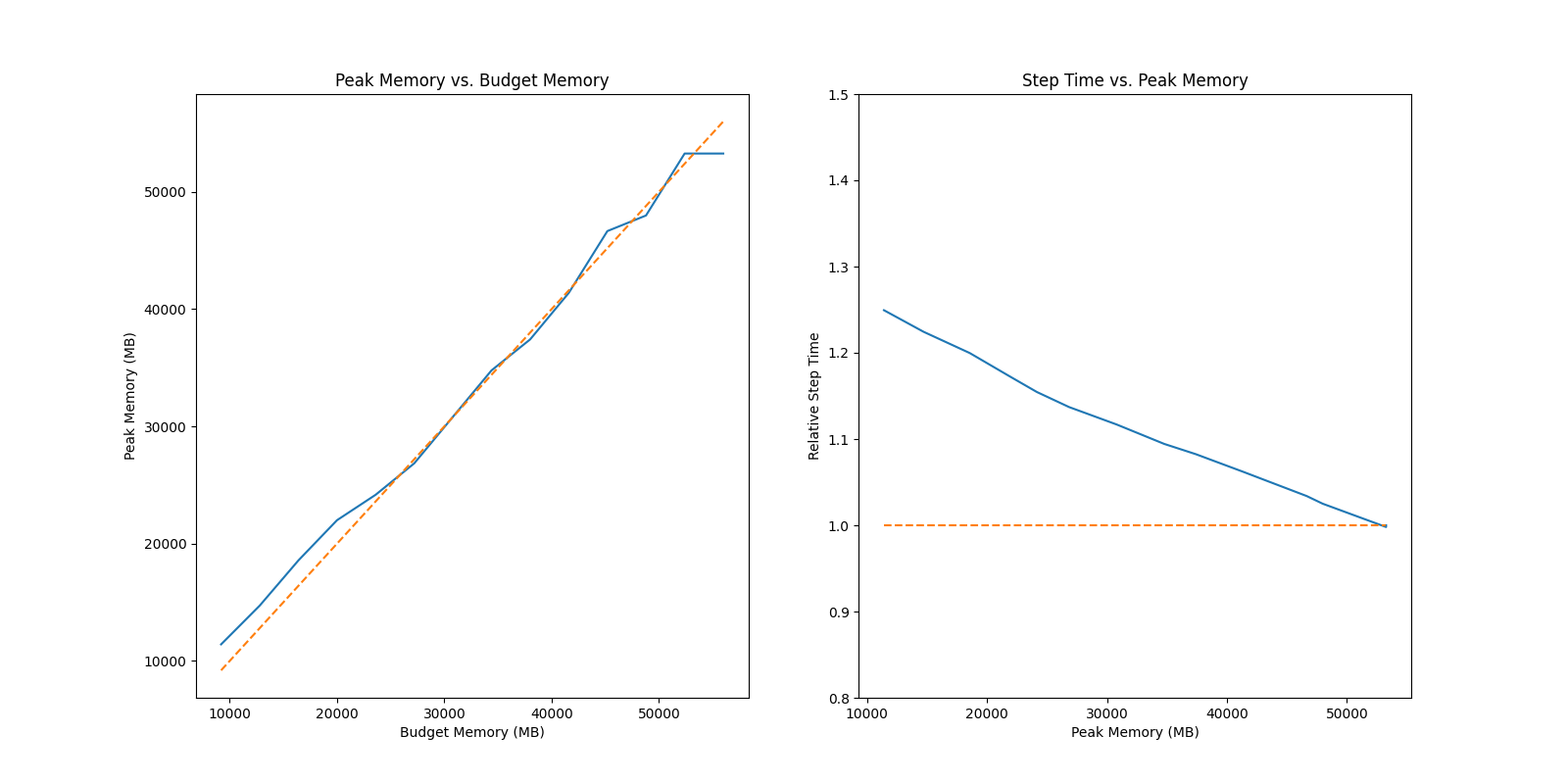

+- Increase the capacity of the fine-tuning model by up to 3.7 times on a single GPU

+- Maintain a sufficiently high running speed

+

+

+

### AIGC

Acceleration of AIGC (AI-Generated Content) models such as [Stable Diffusion v1](https://github.com/CompVis/stable-diffusion) and [Stable Diffusion v2](https://github.com/Stability-AI/stablediffusion).

@@ -244,7 +269,13 @@ Acceleration of [AlphaFold Protein Structure](https://alphafold.ebi.ac.uk/)

-- [FastFold](https://github.com/hpcaitech/FastFold): accelerating training and inference on GPU Clusters, faster data processing, inference sequence containing more than 10000 residues.

+- [FastFold](https://github.com/hpcaitech/FastFold): Accelerating training and inference on GPU Clusters, faster data processing, inference sequence containing more than 10000 residues.

+

+

+

+

+

+- [FastFold with Intel](https://github.com/hpcaitech/FastFold): 3x inference acceleration and 39% cost reduction.

@@ -257,10 +288,37 @@ Acceleration of [AlphaFold Protein Structure](https://alphafold.ebi.ac.uk/)

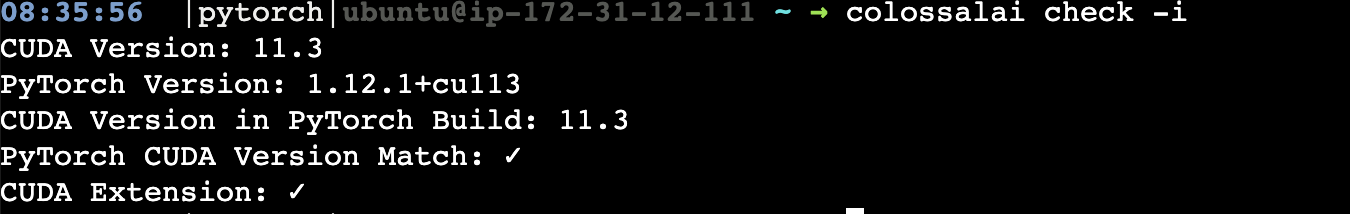

## Installation

-### Download From Official Releases

+Requirements:

+- PyTorch >= 1.11 (PyTorch 2.x in progress)

+- Python >= 3.7

+- CUDA >= 11.0
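The minimum versions listed above can be checked before installing. The following is a minimal sketch rather than anything shipped with Colossal-AI: the helper name `meets_minimum` and the sample version strings are assumptions made for illustration.

```python
import re
import sys


def meets_minimum(version: str, minimum: tuple) -> bool:
    """Return True if a dotted version string is at least `minimum`.

    Only the leading numeric components are compared, so local suffixes
    such as "+cu117" are ignored. (Hypothetical helper for illustration.)
    """
    numbers = re.findall(r"\d+", version.split("+")[0])
    parsed = tuple(int(n) for n in numbers[:len(minimum)])
    # Pad short versions ("2" -> (2, 0)) so the tuple comparison is well defined.
    parsed += (0,) * (len(minimum) - len(parsed))
    return parsed >= minimum


# Python itself can be checked directly from the running interpreter.
python_ok = sys.version_info >= (3, 7)

# The PyTorch and CUDA versions are passed in as sample strings here; in
# practice they could be read from torch.__version__ and torch.version.cuda.
pytorch_ok = meets_minimum("1.13.1+cu117", (1, 11))
cuda_ok = meets_minimum("11.7", (11, 0))
print(python_ok, pytorch_ok, cuda_ok)
```

Comparing integer tuples instead of raw strings avoids the common mistake of treating `1.9` as newer than `1.11`.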

+

+If you encounter any problems with installation, you may want to raise an [issue](https://github.com/hpcaitech/ColossalAI/issues/new/choose) in this repository.

+

+### Install from PyPI

+

+You can easily install Colossal-AI with the following command. **By default, we do not build PyTorch extensions during installation.**

-You can visit the [Download](https://www.colossalai.org/download) page to download Colossal-AI with pre-built CUDA extensions.

+```bash

+pip install colossalai

+```

+

+**Note: only Linux is supported for now.**

+

+However, if you want to build the PyTorch extensions during installation, you can set `CUDA_EXT=1`.

+

+```bash

+CUDA_EXT=1 pip install colossalai

+```

+

+**Otherwise, CUDA kernels will be built at runtime when you actually need them.**
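How an install-time switch such as `CUDA_EXT=1` might be read can be sketched as follows. This is an illustration under assumptions, not the project's actual `setup.py`; the function name `should_build_cuda_ext` is hypothetical.

```python
import os


def should_build_cuda_ext(environ=None) -> bool:
    """Return True only when CUDA_EXT is set to "1".

    Hypothetical helper: `environ` defaults to the process environment and
    can be replaced with a plain dict for testing.
    """
    env = os.environ if environ is None else environ
    return env.get("CUDA_EXT", "0").strip() == "1"


if __name__ == "__main__":
    # Unset or any value other than "1" means the kernels are not built
    # during installation.
    print("build CUDA extensions at install time:", should_build_cuda_ext())
```

Treating an unset variable as "off" matches the default described above, where extensions are not built during installation.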

+

+We also release a nightly version to PyPI on a weekly basis. This gives you access to unreleased features and bug fixes in the main branch.

+You can install it with:

+```bash

+pip install colossalai-nightly

+```

### Download From Source

@@ -270,9 +328,6 @@ You can visit the [Download](https://www.colossalai.org/download) page to downlo

git clone https://github.com/hpcaitech/ColossalAI.git

cd ColossalAI

-# install dependency

-pip install -r requirements/requirements.txt

-

# install colossalai

pip install .

```

@@ -318,11 +373,15 @@ docker run -ti --gpus all --rm --ipc=host colossalai bash

Join the Colossal-AI community on [Forum](https://github.com/hpcaitech/ColossalAI/discussions),

[Slack](https://join.slack.com/t/colossalaiworkspace/shared_invite/zt-z7b26eeb-CBp7jouvu~r0~lcFzX832w),

-and [WeChat](https://raw.githubusercontent.com/hpcaitech/public_assets/main/colossalai/img/WeChat.png "qrcode") to share your suggestions, feedback, and questions with our engineering team.

+and [WeChat(微信)](https://raw.githubusercontent.com/hpcaitech/public_assets/main/colossalai/img/WeChat.png "qrcode") to share your suggestions, feedback, and questions with our engineering team.

-## Contributing

+## Invitation to open-source contribution

+Following the successful examples of [BLOOM](https://bigscience.huggingface.co/) and [Stable Diffusion](https://en.wikipedia.org/wiki/Stable_Diffusion), all developers and partners with computing power, datasets, or models are welcome to join and build the Colossal-AI community, working towards the era of big AI models!

-If you wish to contribute to this project, please follow the guideline in [Contributing](./CONTRIBUTING.md).

+You may contact us or participate in the following ways:

+1. [Leave a star ⭐](https://github.com/hpcaitech/ColossalAI/stargazers) to show your support. Thanks!

+2. Post an [issue](https://github.com/hpcaitech/ColossalAI/issues/new/choose) or submit a PR on GitHub, following the guidelines in [Contributing](https://github.com/hpcaitech/ColossalAI/blob/main/CONTRIBUTING.md).

+3. Send your official proposal by email to contact@hpcaitech.com.

Thanks so much to all of our amazing contributors!

@@ -333,8 +392,17 @@ Thanks so much to all of our amazing contributors!

+## CI/CD

+

+We leverage the power of [GitHub Actions](https://github.com/features/actions) to automate our development, release and deployment workflows. Please check out this [documentation](.github/workflows/README.md) on how the automated workflows are operated.

+

+

## Cite Us

+This project is inspired by some related projects (some by our team and some by other organizations). We would like to credit these amazing projects as listed in the [Reference List](./docs/REFERENCE.md).

+

+To cite this project, you can use the following BibTeX citation.

+

```

@article{bian2021colossal,

title={Colossal-AI: A Unified Deep Learning System For Large-Scale Parallel Training},

@@ -344,4 +412,6 @@ Thanks so much to all of our amazing contributors!

}

```

+Colossal-AI has been accepted as an official tutorial by top conferences including [SC](https://sc22.supercomputing.org/), [AAAI](https://aaai.org/Conferences/AAAI-23/), [PPoPP](https://ppopp23.sigplan.org/), [CVPR](https://cvpr2023.thecvf.com/), and [ISC](https://www.isc-hpc.com/).

+

diff --git a/applications/ChatGPT/.gitignore b/applications/ChatGPT/.gitignore

new file mode 100644

index 000000000000..40f3f6debeee

--- /dev/null

+++ b/applications/ChatGPT/.gitignore

@@ -0,0 +1,146 @@

+# Byte-compiled / optimized / DLL files

+__pycache__/

+*.py[cod]

+*$py.class

+

+# C extensions

+*.so

+

+# Distribution / packaging

+.Python

+build/

+develop-eggs/

+dist/

+downloads/

+eggs/

+.eggs/

+lib/

+lib64/

+parts/

+sdist/

+var/

+wheels/

+pip-wheel-metadata/

+share/python-wheels/

+*.egg-info/

+.installed.cfg

+*.egg

+MANIFEST

+

+# PyInstaller

+# Usually these files are written by a python script from a template

+# before PyInstaller builds the exe, so as to inject date/other infos into it.

+*.manifest

+*.spec

+

+# Installer logs

+pip-log.txt

+pip-delete-this-directory.txt

+

+# Unit test / coverage reports

+htmlcov/

+.tox/

+.nox/

+.coverage

+.coverage.*

+.cache

+nosetests.xml

+coverage.xml

+*.cover

+*.py,cover

+.hypothesis/

+.pytest_cache/

+

+# Translations

+*.mo

+*.pot

+

+# Django stuff:

+*.log

+local_settings.py

+db.sqlite3

+db.sqlite3-journal

+

+# Flask stuff:

+instance/

+.webassets-cache

+

+# Scrapy stuff:

+.scrapy

+

+# Sphinx documentation

+docs/_build/

+docs/.build/

+

+# PyBuilder

+target/

+

+# Jupyter Notebook

+.ipynb_checkpoints

+

+# IPython

+profile_default/

+ipython_config.py

+

+# pyenv

+.python-version

+

+# pipenv

+# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

+# However, in case of collaboration, if having platform-specific dependencies or dependencies

+# having no cross-platform support, pipenv may install dependencies that don't work, or not

+# install all needed dependencies.

+#Pipfile.lock

+

+# PEP 582; used by e.g. github.com/David-OConnor/pyflow

+__pypackages__/

+

+# Celery stuff

+celerybeat-schedule

+celerybeat.pid

+

+# SageMath parsed files

+*.sage.py

+

+# Environments

+.env

+.venv

+env/

+venv/

+ENV/

+env.bak/

+venv.bak/

+

+# Spyder project settings

+.spyderproject

+.spyproject

+

+# Rope project settings

+.ropeproject

+

+# mkdocs documentation

+/site

+

+# mypy

+.mypy_cache/

+.dmypy.json

+dmypy.json

+

+# Pyre type checker

+.pyre/

+

+# IDE

+.idea/

+.vscode/

+

+# macos

+*.DS_Store

+#data/

+

+docs/.build

+

+# pytorch checkpoint

+*.pt

+

+# ignore version.py generated by setup.py

+colossalai/version.py

diff --git a/applications/ChatGPT/LICENSE b/applications/ChatGPT/LICENSE

new file mode 100644

index 000000000000..0528c89ea9ec

--- /dev/null

+++ b/applications/ChatGPT/LICENSE

@@ -0,0 +1,202 @@

+Copyright 2021- HPC-AI Technology Inc. All rights reserved.

+ Apache License

+ Version 2.0, January 2004

+ http://www.apache.org/licenses/

+

+ TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

+

+ 1. Definitions.

+

+ "License" shall mean the terms and conditions for use, reproduction,

+ and distribution as defined by Sections 1 through 9 of this document.

+

+ "Licensor" shall mean the copyright owner or entity authorized by

+ the copyright owner that is granting the License.

+

+ "Legal Entity" shall mean the union of the acting entity and all

+ other entities that control, are controlled by, or are under common

+ control with that entity. For the purposes of this definition,

+ "control" means (i) the power, direct or indirect, to cause the

+ direction or management of such entity, whether by contract or

+ otherwise, or (ii) ownership of fifty percent (50%) or more of the

+ outstanding shares, or (iii) beneficial ownership of such entity.

+

+ "You" (or "Your") shall mean an individual or Legal Entity

+ exercising permissions granted by this License.

+

+ "Source" form shall mean the preferred form for making modifications,

+ including but not limited to software source code, documentation

+ source, and configuration files.

+

+ "Object" form shall mean any form resulting from mechanical

+ transformation or translation of a Source form, including but

+ not limited to compiled object code, generated documentation,

+ and conversions to other media types.

+

+ "Work" shall mean the work of authorship, whether in Source or

+ Object form, made available under the License, as indicated by a

+ copyright notice that is included in or attached to the work

+ (an example is provided in the Appendix below).

+

+ "Derivative Works" shall mean any work, whether in Source or Object

+ form, that is based on (or derived from) the Work and for which the

+ editorial revisions, annotations, elaborations, or other modifications

+ represent, as a whole, an original work of authorship. For the purposes

+ of this License, Derivative Works shall not include works that remain

+ separable from, or merely link (or bind by name) to the interfaces of,

+ the Work and Derivative Works thereof.

+

+ "Contribution" shall mean any work of authorship, including

+ the original version of the Work and any modifications or additions

+ to that Work or Derivative Works thereof, that is intentionally

+ submitted to Licensor for inclusion in the Work by the copyright owner

+ or by an individual or Legal Entity authorized to submit on behalf of

+ the copyright owner. For the purposes of this definition, "submitted"

+ means any form of electronic, verbal, or written communication sent

+ to the Licensor or its representatives, including but not limited to

+ communication on electronic mailing lists, source code control systems,

+ and issue tracking systems that are managed by, or on behalf of, the

+ Licensor for the purpose of discussing and improving the Work, but

+ excluding communication that is conspicuously marked or otherwise

+ designated in writing by the copyright owner as "Not a Contribution."

+

+ "Contributor" shall mean Licensor and any individual or Legal Entity

+ on behalf of whom a Contribution has been received by Licensor and

+ subsequently incorporated within the Work.

+

+ 2. Grant of Copyright License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ copyright license to reproduce, prepare Derivative Works of,

+ publicly display, publicly perform, sublicense, and distribute the

+ Work and such Derivative Works in Source or Object form.

+

+ 3. Grant of Patent License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ (except as stated in this section) patent license to make, have made,

+ use, offer to sell, sell, import, and otherwise transfer the Work,

+ where such license applies only to those patent claims licensable

+ by such Contributor that are necessarily infringed by their

+ Contribution(s) alone or by combination of their Contribution(s)

+ with the Work to which such Contribution(s) was submitted. If You

+ institute patent litigation against any entity (including a

+ cross-claim or counterclaim in a lawsuit) alleging that the Work

+ or a Contribution incorporated within the Work constitutes direct

+ or contributory patent infringement, then any patent licenses

+ granted to You under this License for that Work shall terminate

+ as of the date such litigation is filed.

+

+ 4. Redistribution. You may reproduce and distribute copies of the

+ Work or Derivative Works thereof in any medium, with or without

+ modifications, and in Source or Object form, provided that You

+ meet the following conditions:

+

+ (a) You must give any other recipients of the Work or

+ Derivative Works a copy of this License; and

+

+ (b) You must cause any modified files to carry prominent notices

+ stating that You changed the files; and

+

+ (c) You must retain, in the Source form of any Derivative Works

+ that You distribute, all copyright, patent, trademark, and

+ attribution notices from the Source form of the Work,

+ excluding those notices that do not pertain to any part of

+ the Derivative Works; and

+

+ (d) If the Work includes a "NOTICE" text file as part of its

+ distribution, then any Derivative Works that You distribute must

+ include a readable copy of the attribution notices contained

+ within such NOTICE file, excluding those notices that do not

+ pertain to any part of the Derivative Works, in at least one

+ of the following places: within a NOTICE text file distributed

+ as part of the Derivative Works; within the Source form or

+ documentation, if provided along with the Derivative Works; or,

+ within a display generated by the Derivative Works, if and

+ wherever such third-party notices normally appear. The contents

+ of the NOTICE file are for informational purposes only and

+ do not modify the License. You may add Your own attribution

+ notices within Derivative Works that You distribute, alongside

+ or as an addendum to the NOTICE text from the Work, provided

+ that such additional attribution notices cannot be construed

+ as modifying the License.

+

+ You may add Your own copyright statement to Your modifications and

+ may provide additional or different license terms and conditions

+ for use, reproduction, or distribution of Your modifications, or

+ for any such Derivative Works as a whole, provided Your use,

+ reproduction, and distribution of the Work otherwise complies with

+ the conditions stated in this License.

+

+ 5. Submission of Contributions. Unless You explicitly state otherwise,

+ any Contribution intentionally submitted for inclusion in the Work

+ by You to the Licensor shall be under the terms and conditions of

+ this License, without any additional terms or conditions.

+ Notwithstanding the above, nothing herein shall supersede or modify

+ the terms of any separate license agreement you may have executed

+ with Licensor regarding such Contributions.

+

+ 6. Trademarks. This License does not grant permission to use the trade

+ names, trademarks, service marks, or product names of the Licensor,

+ except as required for reasonable and customary use in describing the

+ origin of the Work and reproducing the content of the NOTICE file.

+

+ 7. Disclaimer of Warranty. Unless required by applicable law or

+ agreed to in writing, Licensor provides the Work (and each

+ Contributor provides its Contributions) on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

+ implied, including, without limitation, any warranties or conditions

+ of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

+ PARTICULAR PURPOSE. You are solely responsible for determining the

+ appropriateness of using or redistributing the Work and assume any

+ risks associated with Your exercise of permissions under this License.

+

+ 8. Limitation of Liability. In no event and under no legal theory,

+ whether in tort (including negligence), contract, or otherwise,

+ unless required by applicable law (such as deliberate and grossly

+ negligent acts) or agreed to in writing, shall any Contributor be

+ liable to You for damages, including any direct, indirect, special,

+ incidental, or consequential damages of any character arising as a

+ result of this License or out of the use or inability to use the

+ Work (including but not limited to damages for loss of goodwill,

+ work stoppage, computer failure or malfunction, or any and all

+ other commercial damages or losses), even if such Contributor

+ has been advised of the possibility of such damages.

+

+ 9. Accepting Warranty or Additional Liability. While redistributing

+ the Work or Derivative Works thereof, You may choose to offer,

+ and charge a fee for, acceptance of support, warranty, indemnity,

+ or other liability obligations and/or rights consistent with this

+ License. However, in accepting such obligations, You may act only

+ on Your own behalf and on Your sole responsibility, not on behalf

+ of any other Contributor, and only if You agree to indemnify,

+ defend, and hold each Contributor harmless for any liability

+ incurred by, or claims asserted against, such Contributor by reason

+ of your accepting any such warranty or additional liability.

+

+ END OF TERMS AND CONDITIONS

+

+ APPENDIX: How to apply the Apache License to your work.

+

+ To apply the Apache License to your work, attach the following

+ boilerplate notice, with the fields enclosed by brackets "[]"

+ replaced with your own identifying information. (Don't include

+ the brackets!) The text should be enclosed in the appropriate

+ comment syntax for the file format. We also recommend that a

+ file or class name and description of purpose be included on the

+ same "printed page" as the copyright notice for easier

+ identification within third-party archives.

+

+ Copyright 2021- HPC-AI Technology Inc.

+

+ Licensed under the Apache License, Version 2.0 (the "License");

+ you may not use this file except in compliance with the License.

+ You may obtain a copy of the License at

+

+ http://www.apache.org/licenses/LICENSE-2.0

+

+ Unless required by applicable law or agreed to in writing, software

+ distributed under the License is distributed on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ See the License for the specific language governing permissions and

+ limitations under the License.

diff --git a/applications/ChatGPT/README.md b/applications/ChatGPT/README.md

new file mode 100644

index 000000000000..206ede5f1843

--- /dev/null

+++ b/applications/ChatGPT/README.md

@@ -0,0 +1,209 @@

+# RLHF - Colossal-AI

+

+## Table of Contents

+

+- [What is RLHF - Colossal-AI?](#intro)

+- [How to Install?](#install)

+- [The Plan](#the-plan)

+- [How can you participate in open source?](#invitation-to-open-source-contribution)

+---

+## Intro

+Implementation of RLHF (Reinforcement Learning from Human Feedback) powered by Colossal-AI. It supports distributed training and offloading, which makes it possible to fit extremely large models. More details can be found in the [blog](https://www.hpc-ai.tech/blog/colossal-ai-chatgpt).

+

+

+

+

+

+## Training process (step 3)

+

+

+

+

+

+

+

+

+## Install

+```shell

+pip install .

+```

+

+## Usage

+

+The main entrypoint is `Trainer`. Only the PPO trainer is supported for now. Several training strategies are available:

+

+- NaiveStrategy: the simplest strategy. Trains on a single GPU.

+- DDPStrategy: uses `torch.nn.parallel.DistributedDataParallel`. Trains on multiple GPUs.

+- ColossalAIStrategy: uses Gemini and ZeRO from ColossalAI. It eliminates model duplication on each GPU and supports offloading, which is very useful when training large models on multiple GPUs.
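One way to read the list above as a decision rule is sketched below. The function `suggest_strategy` and its two inputs are hypothetical and not part of the `chatgpt` package. The returned strings are the strategy class names from the list. The single-GPU fallback to `ColossalAIStrategy` is an assumption based on its offload support.

```python
def suggest_strategy(num_gpus: int, model_fits_on_one_gpu: bool) -> str:
    """Suggest a strategy name from the GPU count and model size.

    Hypothetical helper: it encodes one possible reading of the list,
    not a rule defined by the library.
    """
    if num_gpus < 1:
        raise ValueError("at least one GPU is required")
    if model_fits_on_one_gpu:
        # Small model: no sharding or offload needed.
        return "NaiveStrategy" if num_gpus == 1 else "DDPStrategy"
    # Large model: rely on sharding and/or offload.
    return "ColossalAIStrategy"


print(suggest_strategy(1, True))    # NaiveStrategy
print(suggest_strategy(8, True))    # DDPStrategy
print(suggest_strategy(8, False))   # ColossalAIStrategy
```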

+

+Simplest usage:

+

+```python

+from chatgpt.trainer import PPOTrainer

+from chatgpt.trainer.strategies import ColossalAIStrategy

+from chatgpt.models.gpt import GPTActor, GPTCritic

+from chatgpt.models.base import RewardModel

+from copy import deepcopy

+from colossalai.nn.optimizer import HybridAdam

+

+strategy = ColossalAIStrategy()

+

+with strategy.model_init_context():

+    # init your model here

+    # load pretrained gpt2

+    actor = GPTActor(pretrained='gpt2')

+    critic = GPTCritic()

+    initial_model = deepcopy(actor).cuda()

+    reward_model = RewardModel(deepcopy(critic.model), deepcopy(critic.value_head)).cuda()

+

+actor_optim = HybridAdam(actor.parameters(), lr=5e-6)

+critic_optim = HybridAdam(critic.parameters(), lr=5e-6)

+

+# prepare models and optimizers

+(actor, actor_optim), (critic, critic_optim), reward_model, initial_model = strategy.prepare(

+    (actor, actor_optim), (critic, critic_optim), reward_model, initial_model)

+

+# load saved model checkpoint after preparing

+strategy.load_model(actor, 'actor_checkpoint.pt', strict=False)

+# load saved optimizer checkpoint after preparing

+strategy.load_optimizer(actor_optim, 'actor_optim_checkpoint.pt')

+

+trainer = PPOTrainer(strategy,

+                     actor,

+                     critic,

+                     reward_model,

+                     initial_model,

+                     actor_optim,

+                     critic_optim,

+                     ...)

+

+trainer.fit(dataset, ...)

+

+# save model checkpoint after fitting on only rank0

+strategy.save_model(actor, 'actor_checkpoint.pt', only_rank0=True)

+# save optimizer checkpoint on all ranks

+strategy.save_optimizer(actor_optim, 'actor_optim_checkpoint.pt', only_rank0=False)

+```

+

+For more details, see `examples/`.

+

+We also support training the reward model with real-world data. See `examples/train_reward_model.py`.

+

+## FAQ

+

+### How to save/load checkpoint

+

+To load a pretrained model, you can simply use Hugging Face pretrained models:

+

+```python

+# load OPT-350m pretrained model

+actor = OPTActor(pretrained='facebook/opt-350m')

+```

+

+To save model checkpoint:

+

+```python

+# save model checkpoint on only rank0

+strategy.save_model(actor, 'actor_checkpoint.pt', only_rank0=True)

+```

+

+This function must be called after `strategy.prepare()`.

+

+With the DDP strategy, model weights are replicated on all ranks. With the ColossalAI strategy, model weights may be sharded, but an all-gather is applied before the state dict is returned. In both cases you can set `only_rank0=True`, which saves the checkpoint on rank 0 only and reduces disk usage. The checkpoint is stored in float32.

+

+To save optimizer checkpoint:

+

+```python

+# save optimizer checkpoint on all ranks

+strategy.save_optimizer(actor_optim, 'actor_optim_checkpoint.pt', only_rank0=False)

+```

+

+With the DDP strategy, optimizer states are replicated on all ranks, so you can set `only_rank0=True`. With the ColossalAI strategy, however, optimizer states are sharded over all ranks and no all-gather is applied, so you can only set `only_rank0=False`. In other words, each rank saves its own checkpoint, and when loading, each rank should load its corresponding part.

+

+Note that different strategies may produce optimizer checkpoints with different shapes.
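Because each rank writes its own optimizer shard, it can help to give every rank a distinct file name. The sketch below shows one way to derive such names. The helper `per_rank_checkpoint_path` is hypothetical, and the README does not say whether the strategy already separates files per rank. The `RANK` environment variable is the one set by `torchrun`.

```python
import os


def per_rank_checkpoint_path(base_path: str, rank=None) -> str:
    """Insert the rank into a checkpoint file name (hypothetical helper).

    If `rank` is not given, it is read from the RANK environment variable
    and defaults to 0 for single-process runs.
    """
    if rank is None:
        rank = int(os.environ.get("RANK", "0"))
    root, ext = os.path.splitext(base_path)
    return f"{root}_rank{rank}{ext}"


print(per_rank_checkpoint_path("actor_optim_checkpoint.pt", rank=3))
# prints: actor_optim_checkpoint_rank3.pt
```

The same path could then be passed to both `strategy.save_optimizer(..., only_rank0=False)` and `strategy.load_optimizer(...)`, so that each rank reloads the shard it wrote.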

+

+To load model checkpoint:

+

+```python

+# load saved model checkpoint after preparing

+strategy.load_model(actor, 'actor_checkpoint.pt', strict=False)

+```

+

+To load optimizer checkpoint:

+

+```python

+# load saved optimizer checkpoint after preparing

+strategy.load_optimizer(actor_optim, 'actor_optim_checkpoint.pt')

+```

+

+## The Plan

+

+- [x] implement PPO fine-tuning

+- [x] implement training reward model

+- [x] support LoRA

+- [x] support inference

+- [ ] open source the reward model weight

+- [ ] support llama from [facebook](https://github.com/facebookresearch/llama)

+- [ ] support BoN (best-of-N sampling)

+- [ ] implement PPO-ptx fine-tuning

+- [ ] integrate with Ray

+- [ ] support more RL paradigms, like Implicit Language Q-Learning (ILQL)

+- [ ] support chain of thought by [langchain](https://github.com/hwchase17/langchain)

+

+### Real-time progress

+You can follow our progress on the GitHub project board:

+

+[Open ChatGPT](https://github.com/orgs/hpcaitech/projects/17/views/1)

+

+## Invitation to open-source contribution

+Following the successful examples of [BLOOM](https://bigscience.huggingface.co/) and [Stable Diffusion](https://en.wikipedia.org/wiki/Stable_Diffusion), all developers and partners with computing power, datasets, or models are welcome to join and build the Colossal-AI community, working towards the era of big AI models, starting with replicating ChatGPT!

+

+You may contact us or participate in the following ways:

+1. [Leave a star ⭐](https://github.com/hpcaitech/ColossalAI/stargazers) to show your support. Thanks!

+2. Post an [issue](https://github.com/hpcaitech/ColossalAI/issues/new/choose) or submit a PR on GitHub, following the guidelines in [Contributing](https://github.com/hpcaitech/ColossalAI/blob/main/CONTRIBUTING.md).

+3. Join the Colossal-AI community on [Slack](https://join.slack.com/t/colossalaiworkspace/shared_invite/zt-z7b26eeb-CBp7jouvu~r0~lcFzX832w) and [WeChat(微信)](https://raw.githubusercontent.com/hpcaitech/public_assets/main/colossalai/img/WeChat.png "qrcode") to share your ideas.

+4. Send your official proposal by email to contact@hpcaitech.com.

+

+Thanks so much to all of our amazing contributors!

+

+## Quick Preview

+

+

+

+

+- Up to 7.73 times faster for single server training and 1.42 times faster for single-GPU inference

+

+

+

+

+

+- Up to 10.3x growth in model capacity on one GPU

+- A mini demo training process requires only 1.62GB of GPU memory (any consumer-grade GPU)

+

+

+

+

+

+- Increase the capacity of the fine-tuning model by up to 3.7 times on a single GPU

+- Maintain a sufficiently high running speed

+

+## Citations

+

+```bibtex

+@article{Hu2021LoRALA,

+ title = {LoRA: Low-Rank Adaptation of Large Language Models},

+ author = {Edward J. Hu and Yelong Shen and Phillip Wallis and Zeyuan Allen-Zhu and Yuanzhi Li and Shean Wang and Weizhu Chen},

+ journal = {ArXiv},

+ year = {2021},

+ volume = {abs/2106.09685}

+}

+

+@article{ouyang2022training,

+ title={Training language models to follow instructions with human feedback},

+ author={Ouyang, Long and Wu, Jeff and Jiang, Xu and Almeida, Diogo and Wainwright, Carroll L and Mishkin, Pamela and Zhang, Chong and Agarwal, Sandhini and Slama, Katarina and Ray, Alex and others},

+ journal={arXiv preprint arXiv:2203.02155},

+ year={2022}

+}

+```

diff --git a/applications/ChatGPT/benchmarks/README.md b/applications/ChatGPT/benchmarks/README.md

new file mode 100644

index 000000000000..b4e28ba1d764

--- /dev/null

+++ b/applications/ChatGPT/benchmarks/README.md

@@ -0,0 +1,94 @@

+# Benchmarks

+

+## Benchmark GPT on dummy prompt data

+

+We provide various GPT models (string in parentheses is the corresponding model name used in this script):

+

+- GPT2-S (s)

+- GPT2-M (m)

+- GPT2-L (l)

+- GPT2-XL (xl)

+- GPT2-4B (4b)

+- GPT2-6B (6b)

+- GPT2-8B (8b)

+- GPT2-10B (10b)

+- GPT2-12B (12b)

+- GPT2-15B (15b)

+- GPT2-18B (18b)

+- GPT2-20B (20b)

+- GPT2-24B (24b)

+- GPT2-28B (28b)

+- GPT2-32B (32b)

+- GPT2-36B (36b)

+- GPT2-40B (40b)

+- GPT3 (175b)

+

+We also provide various training strategies:

+

+- ddp: torch DDP

+- colossalai_gemini: ColossalAI GeminiDDP with `placement_policy="cuda"`, like zero3

+- colossalai_gemini_cpu: ColossalAI GeminiDDP with `placement_policy="cpu"`, like zero3-offload

+- colossalai_zero2: ColossalAI zero2

+- colossalai_zero2_cpu: ColossalAI zero2-offload

+- colossalai_zero1: ColossalAI zero1

+- colossalai_zero1_cpu: ColossalAI zero1-offload

+

+Currently, only `torchrun` is supported as the launcher. For example:

+

+```shell

+# run GPT2-S on single-node single-GPU with min batch size

+torchrun --standalone --nproc_per_node 1 benchmark_gpt_dummy.py --model s --strategy ddp --experience_batch_size 1 --train_batch_size 1

+# run GPT2-XL on single-node 4-GPU

+torchrun --standalone --nproc_per_node 4 benchmark_gpt_dummy.py --model xl --strategy colossalai_zero2

+# run GPT3 on 8-node 8-GPU

+torchrun --nnodes 8 --nproc_per_node 8 \

+ --rdzv_id=$JOB_ID --rdzv_backend=c10d --rdzv_endpoint=$HOST_NODE_ADDR \

+ benchmark_gpt_dummy.py --model 175b --strategy colossalai_gemini

+```

+

+> ⚠ Batch sizes in CLI args and the reported throughput/TFLOPS are all per-GPU values.

+

+In this benchmark, we assume the model architectures/sizes of actor and critic are the same for simplicity. But in practice, to reduce training cost, we may use a smaller critic.

+

+We also provide a simple shell script to run a set of benchmarks. It only supports single-node benchmarks, but it is easy to run on multiple nodes by modifying the launch command in the script.

+

+Usage:

+

+```shell

+# run for GPUS=(1 2 4 8) x strategy=("ddp" "colossalai_zero2" "colossalai_gemini" "colossalai_zero2_cpu" "colossalai_gemini_cpu") x model=("s" "m" "l" "xl" "2b" "4b" "6b" "8b" "10b") x batch_size=(1 2 4 8 16 32 64 128 256)

+./benchmark_gpt_dummy.sh

+# run for GPUS=2 x strategy=("ddp" "colossalai_zero2" "colossalai_gemini" "colossalai_zero2_cpu" "colossalai_gemini_cpu") x model=("s" "m" "l" "xl" "2b" "4b" "6b" "8b" "10b") x batch_size=(1 2 4 8 16 32 64 128 256)

+./benchmark_gpt_dummy.sh 2

+# run for GPUS=2 x strategy=ddp x model=("s" "m" "l" "xl" "2b" "4b" "6b" "8b" "10b") x batch_size=(1 2 4 8 16 32 64 128 256)

+./benchmark_gpt_dummy.sh 2 ddp

+# run for GPUS=2 x strategy=ddp x model=l x batch_size=(1 2 4 8 16 32 64 128 256)

+./benchmark_gpt_dummy.sh 2 ddp l

+```

+

+## Benchmark OPT with LoRA on dummy prompt data

+

+We provide various OPT models (string in parentheses is the corresponding model name used in this script):

+

+- OPT-125M (125m)

+- OPT-350M (350m)

+- OPT-700M (700m)

+- OPT-1.3B (1.3b)

+- OPT-2.7B (2.7b)

+- OPT-3.5B (3.5b)

+- OPT-5.5B (5.5b)

+- OPT-6.7B (6.7b)

+- OPT-10B (10b)

+- OPT-13B (13b)

+

+Currently, only `torchrun` is supported as the launcher. For example:

+

+```shell

+# run OPT-125M with no lora (lora_rank=0) on single-node single-GPU with min batch size

+torchrun --standalone --nproc_per_node 1 benchmark_opt_lora_dummy.py --model 125m --strategy ddp --experience_batch_size 1 --train_batch_size 1 --lora_rank 0

+# run OPT-350M with lora_rank=4 on single-node 4-GPU

+torchrun --standalone --nproc_per_node 4 benchmark_opt_lora_dummy.py --model 350m --strategy colossalai_zero2 --lora_rank 4

+```

+

+> ⚠ Batch sizes in CLI args and the reported throughput/TFLOPS are all per-GPU values.

+

+In this benchmark, we assume the model architectures/sizes of actor and critic are the same for simplicity. But in practice, to reduce training cost, we may use a smaller critic.
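
Because batch sizes and throughput are reported per GPU, job-wide figures have to be derived by multiplying by the number of launched processes. A minimal sketch of that arithmetic, treating the reported per-GPU throughput as an average across ranks (the helper name `global_figures` and the sample numbers are hypothetical and not part of the benchmark scripts):

```python
def global_figures(per_gpu_batch_size: int, per_gpu_throughput: float,
                   nnodes: int, nproc_per_node: int) -> dict:
    """Scale per-GPU benchmark figures to the whole job."""
    # torchrun starts nproc_per_node processes on each of nnodes nodes.
    world_size = nnodes * nproc_per_node
    return {
        "world_size": world_size,
        "global_batch_size": per_gpu_batch_size * world_size,
        "global_throughput": per_gpu_throughput * world_size,
    }


# Example: the 8-node, 8-GPU launch with a per-GPU batch size of 8
# and an assumed per-GPU throughput of 2.5 samples/s.
figures = global_figures(8, 2.5, nnodes=8, nproc_per_node=8)
print(figures)
```

The same multiplication applies to `--experience_batch_size` and `--train_batch_size`, since both are per-GPU arguments.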

diff --git a/applications/ChatGPT/benchmarks/benchmark_gpt_dummy.py b/applications/ChatGPT/benchmarks/benchmark_gpt_dummy.py

new file mode 100644

index 000000000000..5ee65763b936

--- /dev/null

+++ b/applications/ChatGPT/benchmarks/benchmark_gpt_dummy.py

@@ -0,0 +1,184 @@

+import argparse

+from copy import deepcopy

+

+import torch

+import torch.distributed as dist

+import torch.nn as nn

+from chatgpt.models.base import RewardModel

+from chatgpt.models.gpt import GPTActor, GPTCritic

+from chatgpt.trainer import PPOTrainer

+from chatgpt.trainer.callbacks import PerformanceEvaluator

+from chatgpt.trainer.strategies import ColossalAIStrategy, DDPStrategy, Strategy

+from torch.optim import Adam

+from transformers.models.gpt2.configuration_gpt2 import GPT2Config

+from transformers.models.gpt2.tokenization_gpt2 import GPT2Tokenizer

+

+from colossalai.nn.optimizer import HybridAdam

+

+

+def get_model_numel(model: nn.Module, strategy: Strategy) -> int:

+ numel = sum(p.numel() for p in model.parameters())

+ if isinstance(strategy, ColossalAIStrategy) and strategy.stage == 3 and strategy.shard_init:

+ numel *= dist.get_world_size()

+ return numel

+

+

+def preprocess_batch(samples) -> dict:

+ input_ids = torch.stack(samples)

+ attention_mask = torch.ones_like(input_ids, dtype=torch.long)

+ return {'input_ids': input_ids, 'attention_mask': attention_mask}

+

+

+def print_rank_0(*args, **kwargs) -> None:

+ if dist.get_rank() == 0:

+ print(*args, **kwargs)

+

+

+def print_model_numel(model_dict: dict) -> None:

+ B = 1024**3

+ M = 1024**2

+ K = 1024

+ outputs = ''

+ for name, numel in model_dict.items():

+ outputs += f'{name}: '

+ if numel >= B:

+ outputs += f'{numel / B:.2f} B\n'

+ elif numel >= M:

+ outputs += f'{numel / M:.2f} M\n'

+ elif numel >= K:

+ outputs += f'{numel / K:.2f} K\n'

+ else:

+ outputs += f'{numel}\n'

+ print_rank_0(outputs)

+

+

+def get_gpt_config(model_name: str) -> GPT2Config:

+ model_map = {

+ 's': GPT2Config(),

+ 'm': GPT2Config(n_embd=1024, n_layer=24, n_head=16),

+ 'l': GPT2Config(n_embd=1280, n_layer=36, n_head=20),

+ 'xl': GPT2Config(n_embd=1600, n_layer=48, n_head=25),

+ '2b': GPT2Config(n_embd=2048, n_layer=40, n_head=16),

+ '4b': GPT2Config(n_embd=2304, n_layer=64, n_head=16),

+ '6b': GPT2Config(n_embd=4096, n_layer=30, n_head=16),

+ '8b': GPT2Config(n_embd=4096, n_layer=40, n_head=16),

+ '10b': GPT2Config(n_embd=4096, n_layer=50, n_head=16),

+ '12b': GPT2Config(n_embd=4096, n_layer=60, n_head=16),

+ '15b': GPT2Config(n_embd=4096, n_layer=78, n_head=16),

+ '18b': GPT2Config(n_embd=4096, n_layer=90, n_head=16),

+ '20b': GPT2Config(n_embd=8192, n_layer=25, n_head=16),

+ '24b': GPT2Config(n_embd=8192, n_layer=30, n_head=16),

+ '28b': GPT2Config(n_embd=8192, n_layer=35, n_head=16),

+ '32b': GPT2Config(n_embd=8192, n_layer=40, n_head=16),

+ '36b': GPT2Config(n_embd=8192, n_layer=45, n_head=16),

+ '40b': GPT2Config(n_embd=8192, n_layer=50, n_head=16),

+ '175b': GPT2Config(n_positions=2048, n_embd=12288, n_layer=96, n_head=96),

+ }

+ try:

+ return model_map[model_name]

+ except KeyError:

+ raise ValueError(f'Unknown model "{model_name}"')

+

+

+def main(args):

+ if args.strategy == 'ddp':

+ strategy = DDPStrategy()

+ elif args.strategy == 'colossalai_gemini':

+ strategy = ColossalAIStrategy(stage=3, placement_policy='cuda', initial_scale=2**5)

+ elif args.strategy == 'colossalai_gemini_cpu':

+ strategy = ColossalAIStrategy(stage=3, placement_policy='cpu', initial_scale=2**5)

+ elif args.strategy == 'colossalai_zero2':

+ strategy = ColossalAIStrategy(stage=2, placement_policy='cuda')

+ elif args.strategy == 'colossalai_zero2_cpu':

+ strategy = ColossalAIStrategy(stage=2, placement_policy='cpu')

+ elif args.strategy == 'colossalai_zero1':

+ strategy = ColossalAIStrategy(stage=1, placement_policy='cuda')

+ elif args.strategy == 'colossalai_zero1_cpu':

+ strategy = ColossalAIStrategy(stage=1, placement_policy='cpu')

+ else:

+ raise ValueError(f'Unsupported strategy "{args.strategy}"')

+

+ model_config = get_gpt_config(args.model)

+

+ with strategy.model_init_context():

+ actor = GPTActor(config=model_config).cuda()

+ critic = GPTCritic(config=model_config).cuda()

+

+ initial_model = deepcopy(actor).cuda()

+ reward_model = RewardModel(deepcopy(critic.model), deepcopy(critic.value_head)).cuda()

+

+ actor_numel = get_model_numel(actor, strategy)

+ critic_numel = get_model_numel(critic, strategy)

+ initial_model_numel = get_model_numel(initial_model, strategy)

+ reward_model_numel = get_model_numel(reward_model, strategy)

+ print_model_numel({

+ 'Actor': actor_numel,

+ 'Critic': critic_numel,

+ 'Initial model': initial_model_numel,

+ 'Reward model': reward_model_numel

+ })

+ performance_evaluator = PerformanceEvaluator(actor_numel,

+ critic_numel,

+ initial_model_numel,

+ reward_model_numel,

+ enable_grad_checkpoint=False,

+ ignore_episodes=1)

+

+ if args.strategy.startswith('colossalai'):

+ actor_optim = HybridAdam(actor.parameters(), lr=5e-6)

+ critic_optim = HybridAdam(critic.parameters(), lr=5e-6)

+ else:

+ actor_optim = Adam(actor.parameters(), lr=5e-6)

+ critic_optim = Adam(critic.parameters(), lr=5e-6)

+

+ tokenizer = GPT2Tokenizer.from_pretrained('gpt2')

+ tokenizer.pad_token = tokenizer.eos_token

+

+ (actor, actor_optim), (critic, critic_optim), reward_model, initial_model = strategy.prepare(

+ (actor, actor_optim), (critic, critic_optim), reward_model, initial_model)

+

+ trainer = PPOTrainer(strategy,

+ actor,

+ critic,

+ reward_model,

+ initial_model,

+ actor_optim,

+ critic_optim,

+ max_epochs=args.max_epochs,

+ train_batch_size=args.train_batch_size,

+ experience_batch_size=args.experience_batch_size,

+ tokenizer=preprocess_batch,

+ max_length=512,

+ do_sample=True,

+ temperature=1.0,

+ top_k=50,

+ pad_token_id=tokenizer.pad_token_id,

+ eos_token_id=tokenizer.eos_token_id,

+ callbacks=[performance_evaluator])

+

+ random_prompts = torch.randint(tokenizer.vocab_size, (1000, 400), device=torch.cuda.current_device())

+ trainer.fit(random_prompts,

+ num_episodes=args.num_episodes,

+ max_timesteps=args.max_timesteps,

+ update_timesteps=args.update_timesteps)

+

+ print_rank_0(f'Peak CUDA mem: {torch.cuda.max_memory_allocated()/1024**3:.2f} GB')

+

+

+if __name__ == '__main__':

+ parser = argparse.ArgumentParser()

+ parser.add_argument('--model', default='s')

+ parser.add_argument('--strategy',

+ choices=[

+ 'ddp', 'colossalai_gemini', 'colossalai_gemini_cpu', 'colossalai_zero2',

+ 'colossalai_zero2_cpu', 'colossalai_zero1', 'colossalai_zero1_cpu'

+ ],

+ default='ddp')

+ parser.add_argument('--num_episodes', type=int, default=3)

+ parser.add_argument('--max_timesteps', type=int, default=8)

+ parser.add_argument('--update_timesteps', type=int, default=8)

+ parser.add_argument('--max_epochs', type=int, default=3)

+ parser.add_argument('--train_batch_size', type=int, default=8)

+ parser.add_argument('--experience_batch_size', type=int, default=8)

+ args = parser.parse_args()

+ main(args)
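
The size suffixes used as keys in `get_gpt_config` can be sanity-checked with the common rule of thumb that a GPT-style transformer block holds roughly `12 * n_embd**2` parameters. The sketch below is only an approximation under that assumption: it ignores embeddings, biases, and layer norms, and `approx_block_params` is a hypothetical helper, not part of the benchmark.

```python
def approx_block_params(n_embd: int, n_layer: int) -> int:
    """Approximate transformer-block parameters as 12 * n_layer * n_embd**2.

    Per block: about 4 * d^2 for attention (Q, K, V and output projection)
    plus about 8 * d^2 for an MLP with a 4x-wide hidden layer.
    """
    return 12 * n_layer * n_embd ** 2


# (n_embd, n_layer) pairs copied from the model map in the benchmark script.
configs = {"6b": (4096, 30), "10b": (4096, 50), "175b": (12288, 96)}
for name, (n_embd, n_layer) in configs.items():
    print(f"{name}: {approx_block_params(n_embd, n_layer) / 1e9:.2f}B")
```

Note that `print_model_numel` in the script divides by powers of 1024 rather than powers of 1000, so the numbers it prints will be somewhat smaller than these decimal estimates.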

diff --git a/applications/ChatGPT/benchmarks/benchmark_gpt_dummy.sh b/applications/ChatGPT/benchmarks/benchmark_gpt_dummy.sh

new file mode 100755

index 000000000000..d70f8872570a

--- /dev/null

+++ b/applications/ChatGPT/benchmarks/benchmark_gpt_dummy.sh

@@ -0,0 +1,45 @@

+#!/usr/bin/env bash

+# Usage: $0 [num_gpus] [strategy] [model]

+set -xu

+

+BASE=$(realpath "$(dirname "$0")")

+

+

+PY_SCRIPT=${BASE}/benchmark_gpt_dummy.py

+export OMP_NUM_THREADS=8

+

+function tune_batch_size() {

+ # we found when experience batch size is equal to train batch size

+ # peak CUDA memory usage of making experience phase is less than or equal to that of training phase

+ # thus, experience batch size can be larger than or equal to train batch size

+ for bs in 1 2 4 8 16 32 64 128 256; do

+ torchrun --standalone --nproc_per_node $1 $PY_SCRIPT --model $2 --strategy $3 --experience_batch_size $bs --train_batch_size $bs || return 1

+ done

+}

+

+if [ $# -eq 0 ]; then

+ num_gpus=(1 2 4 8)

+else

+ num_gpus=($1)

+fi

+

+if [ $# -le 1 ]; then

+ strategies=("ddp" "colossalai_zero2" "colossalai_gemini" "colossalai_zero2_cpu" "colossalai_gemini_cpu")

+else

+ strategies=($2)

+fi

+

+if [ $# -le 2 ]; then

+ models=("s" "m" "l" "xl" "2b" "4b" "6b" "8b" "10b")

+else

+ models=($3)

+fi

+

+

+for num_gpu in ${num_gpus[@]}; do

+ for strategy in ${strategies[@]}; do

+ for model in ${models[@]}; do

+ tune_batch_size $num_gpu $model $strategy || break

+ done

+ done

+done
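
The sweep logic in `tune_batch_size` can be restated in Python for readers less familiar with shell control flow: batch sizes are tried in increasing order and the sweep stops at the first failing run. This is a sketch only; the `run` callable is a hypothetical stand-in for the `torchrun` invocation and returns `True` on success.

```python
from typing import Callable, List, Sequence


def tune_batch_size(run: Callable[[int], bool],
                    batch_sizes: Sequence[int] = (1, 2, 4, 8, 16, 32, 64, 128, 256)) -> List[int]:
    """Try batch sizes in increasing order and stop at the first failing run."""
    succeeded = []
    for bs in batch_sizes:
        if not run(bs):
            # Mirrors `|| return 1` in the shell function.
            break
        succeeded.append(bs)
    return succeeded


# Hypothetical run that fails (for example, runs out of memory) above batch size 8.
fits = tune_batch_size(lambda bs: bs <= 8)
print(fits)
```

In the shell script, a failure additionally breaks out of the inner model loop (`|| break`), so the remaining models are skipped for that strategy and GPU count.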

diff --git a/applications/ChatGPT/benchmarks/benchmark_opt_lora_dummy.py b/applications/ChatGPT/benchmarks/benchmark_opt_lora_dummy.py

new file mode 100644

index 000000000000..207edbca94b5

--- /dev/null

+++ b/applications/ChatGPT/benchmarks/benchmark_opt_lora_dummy.py

@@ -0,0 +1,179 @@

+import argparse

+from copy import deepcopy

+

+import torch

+import torch.distributed as dist

+import torch.nn as nn

+from chatgpt.models.base import RewardModel

+from chatgpt.models.opt import OPTActor, OPTCritic

+from chatgpt.trainer import PPOTrainer

+from chatgpt.trainer.callbacks import PerformanceEvaluator

+from chatgpt.trainer.strategies import ColossalAIStrategy, DDPStrategy, Strategy

+from torch.optim import Adam

+from transformers import AutoTokenizer

+from transformers.models.opt.configuration_opt import OPTConfig

+

+from colossalai.nn.optimizer import HybridAdam

+

+

+def get_model_numel(model: nn.Module, strategy: Strategy) -> int:

+ numel = sum(p.numel() for p in model.parameters())

+ if isinstance(strategy, ColossalAIStrategy) and strategy.stage == 3 and strategy.shard_init:

+ numel *= dist.get_world_size()

+ return numel

+

+

+def preprocess_batch(samples) -> dict:

+ input_ids = torch.stack(samples)

+ attention_mask = torch.ones_like(input_ids, dtype=torch.long)

+ return {'input_ids': input_ids, 'attention_mask': attention_mask}

+

+

+def print_rank_0(*args, **kwargs) -> None:

+ if dist.get_rank() == 0:

+ print(*args, **kwargs)

+

+

+def print_model_numel(model_dict: dict) -> None:

+ B = 1024**3

+ M = 1024**2

+ K = 1024

+ outputs = ''

+ for name, numel in model_dict.items():

+ outputs += f'{name}: '

+ if numel >= B:

+ outputs += f'{numel / B:.2f} B\n'

+ elif numel >= M:

+ outputs += f'{numel / M:.2f} M\n'

+ elif numel >= K:

+ outputs += f'{numel / K:.2f} K\n'

+ else:

+ outputs += f'{numel}\n'

+ print_rank_0(outputs)

+

+

+def get_gpt_config(model_name: str) -> OPTConfig:

+ model_map = {

+ '125m': OPTConfig.from_pretrained('facebook/opt-125m'),

+ '350m': OPTConfig(hidden_size=1024, ffn_dim=4096, num_hidden_layers=24, num_attention_heads=16),

+ '700m': OPTConfig(hidden_size=1280, ffn_dim=5120, num_hidden_layers=36, num_attention_heads=20),

+ '1.3b': OPTConfig.from_pretrained('facebook/opt-1.3b'),

+ '2.7b': OPTConfig.from_pretrained('facebook/opt-2.7b'),

+ '3.5b': OPTConfig(hidden_size=3072, ffn_dim=12288, num_hidden_layers=32, num_attention_heads=32),

+ '5.5b': OPTConfig(hidden_size=3840, ffn_dim=15360, num_hidden_layers=32, num_attention_heads=32),

+ '6.7b': OPTConfig.from_pretrained('facebook/opt-6.7b'),

+ '10b': OPTConfig(hidden_size=5120, ffn_dim=20480, num_hidden_layers=32, num_attention_heads=32),

+ '13b': OPTConfig.from_pretrained('facebook/opt-13b'),

+ }

+ try:

+ return model_map[model_name]

+ except KeyError:

+ raise ValueError(f'Unknown model "{model_name}"')

+

+

+def main(args):

+ if args.strategy == 'ddp':

+ strategy = DDPStrategy()

+ elif args.strategy == 'colossalai_gemini':

+ strategy = ColossalAIStrategy(stage=3, placement_policy='cuda', initial_scale=2**5)

+ elif args.strategy == 'colossalai_gemini_cpu':

+ strategy = ColossalAIStrategy(stage=3, placement_policy='cpu', initial_scale=2**5)

+ elif args.strategy == 'colossalai_zero2':

+ strategy = ColossalAIStrategy(stage=2, placement_policy='cuda')

+ elif args.strategy == 'colossalai_zero2_cpu':

+ strategy = ColossalAIStrategy(stage=2, placement_policy='cpu')

+ elif args.strategy == 'colossalai_zero1':

+ strategy = ColossalAIStrategy(stage=1, placement_policy='cuda')

+ elif args.strategy == 'colossalai_zero1_cpu':

+ strategy = ColossalAIStrategy(stage=1, placement_policy='cpu')

+ else:

+ raise ValueError(f'Unsupported strategy "{args.strategy}"')

+

+ torch.cuda.set_per_process_memory_fraction(args.cuda_mem_frac)

+

+ model_config = get_gpt_config(args.model)

+

+ with strategy.model_init_context():

+ actor = OPTActor(config=model_config, lora_rank=args.lora_rank).cuda()

+ critic = OPTCritic(config=model_config, lora_rank=args.lora_rank).cuda()

+

+ initial_model = deepcopy(actor).cuda()

+ reward_model = RewardModel(deepcopy(critic.model), deepcopy(critic.value_head)).cuda()

+

+ actor_numel = get_model_numel(actor, strategy)

+ critic_numel = get_model_numel(critic, strategy)

+ initial_model_numel = get_model_numel(initial_model, strategy)

+ reward_model_numel = get_model_numel(reward_model, strategy)

+ print_model_numel({

+ 'Actor': actor_numel,

+ 'Critic': critic_numel,

+ 'Initial model': initial_model_numel,

+ 'Reward model': reward_model_numel

+ })

+ performance_evaluator = PerformanceEvaluator(actor_numel,

+ critic_numel,

+ initial_model_numel,

+ reward_model_numel,

+ enable_grad_checkpoint=False,

+ ignore_episodes=1)

+

+ if args.strategy.startswith('colossalai'):

+ actor_optim = HybridAdam(actor.parameters(), lr=5e-6)

+ critic_optim = HybridAdam(critic.parameters(), lr=5e-6)

+ else:

+ actor_optim = Adam(actor.parameters(), lr=5e-6)

+ critic_optim = Adam(critic.parameters(), lr=5e-6)

+

+ tokenizer = AutoTokenizer.from_pretrained('facebook/opt-350m')

+ tokenizer.pad_token = tokenizer.eos_token

+

+ (actor, actor_optim), (critic, critic_optim), reward_model, initial_model = strategy.prepare(

+ (actor, actor_optim), (critic, critic_optim), reward_model, initial_model)

+

+ trainer = PPOTrainer(strategy,

+ actor,

+ critic,

+ reward_model,

+ initial_model,

+ actor_optim,

+ critic_optim,

+ max_epochs=args.max_epochs,

+ train_batch_size=args.train_batch_size,

+ experience_batch_size=args.experience_batch_size,

+ tokenizer=preprocess_batch,

+ max_length=512,

+ do_sample=True,

+ temperature=1.0,

+ top_k=50,

+ pad_token_id=tokenizer.pad_token_id,

+ eos_token_id=tokenizer.eos_token_id,

+ callbacks=[performance_evaluator])

+

+ random_prompts = torch.randint(tokenizer.vocab_size, (1000, 400), device=torch.cuda.current_device())

+ trainer.fit(random_prompts,

+ num_episodes=args.num_episodes,

+ max_timesteps=args.max_timesteps,

+ update_timesteps=args.update_timesteps)

+

+ print_rank_0(f'Peak CUDA mem: {torch.cuda.max_memory_allocated()/1024**3:.2f} GB')

+

+

+if __name__ == '__main__':

+ parser = argparse.ArgumentParser()

+ parser.add_argument('--model', default='125m')

+ parser.add_argument('--strategy',

+ choices=[

+ 'ddp', 'colossalai_gemini', 'colossalai_gemini_cpu', 'colossalai_zero2',

+ 'colossalai_zero2_cpu', 'colossalai_zero1', 'colossalai_zero1_cpu'

+ ],

+ default='ddp')

+ parser.add_argument('--num_episodes', type=int, default=3)

+ parser.add_argument('--max_timesteps', type=int, default=8)

+ parser.add_argument('--update_timesteps', type=int, default=8)

+ parser.add_argument('--max_epochs', type=int, default=3)

+ parser.add_argument('--train_batch_size', type=int, default=8)

+ parser.add_argument('--experience_batch_size', type=int, default=8)

+ parser.add_argument('--lora_rank', type=int, default=4)

+ parser.add_argument('--cuda_mem_frac', type=float, default=1.0)

+ args = parser.parse_args()

+ main(args)

diff --git a/examples/tutorial/stable_diffusion/ldm/data/__init__.py b/applications/ChatGPT/chatgpt/__init__.py

similarity index 100%

rename from examples/tutorial/stable_diffusion/ldm/data/__init__.py

rename to applications/ChatGPT/chatgpt/__init__.py

diff --git a/applications/ChatGPT/chatgpt/dataset/__init__.py b/applications/ChatGPT/chatgpt/dataset/__init__.py

new file mode 100644

index 000000000000..df484f46d24c

--- /dev/null

+++ b/applications/ChatGPT/chatgpt/dataset/__init__.py

@@ -0,0 +1,5 @@

+from .reward_dataset import RmStaticDataset, HhRlhfDataset

+from .utils import is_rank_0

+from .sft_dataset import SFTDataset, AlpacaDataset, AlpacaDataCollator

+

+__all__ = ['RmStaticDataset', 'HhRlhfDataset', 'is_rank_0', 'SFTDataset', 'AlpacaDataset', 'AlpacaDataCollator']

diff --git a/applications/ChatGPT/chatgpt/dataset/reward_dataset.py b/applications/ChatGPT/chatgpt/dataset/reward_dataset.py

new file mode 100644

index 000000000000..9ee13490b893

--- /dev/null

+++ b/applications/ChatGPT/chatgpt/dataset/reward_dataset.py

@@ -0,0 +1,109 @@

+from typing import Callable

+

+from torch.utils.data import Dataset

+from tqdm import tqdm

+

+from .utils import is_rank_0

+

+# Dahaos/rm-static

+class RmStaticDataset(Dataset):

+ """

+ Dataset for reward model

+

+ Args:

+ dataset: dataset for reward model

+ tokenizer: tokenizer for reward model

+ max_length: max length of input

+ special_token: special token at the end of sentence

+ """

+

+ def __init__(self, dataset, tokenizer: Callable, max_length: int, special_token=None) -> None:

+ super().__init__()

+ self.chosen = []

+ self.reject = []

+ if special_token is None:

+ self.end_token = tokenizer.eos_token

+ else:

+ self.end_token = special_token

+ for data in tqdm(dataset, disable=not is_rank_0()):

+ prompt = data['prompt']

+

+ chosen = prompt + data['chosen'] + self.end_token

+ chosen_token = tokenizer(chosen,

+ max_length=max_length,

+ padding="max_length",

+ truncation=True,

+ return_tensors="pt")

+ self.chosen.append({

+ "input_ids": chosen_token['input_ids'],

+ "attention_mask": chosen_token['attention_mask']

+ })

+

+ reject = prompt + data['rejected'] + self.end_token

+ reject_token = tokenizer(reject,

+ max_length=max_length,

+ padding="max_length",

+ truncation=True,

+ return_tensors="pt")

+ self.reject.append({

+ "input_ids": reject_token['input_ids'],

+ "attention_mask": reject_token['attention_mask']

+ })

+

+ def __len__(self):

+ length = len(self.chosen)

+ return length

+

+ def __getitem__(self, idx):

+ return self.chosen[idx]["input_ids"], self.chosen[idx]["attention_mask"], self.reject[idx][

+ "input_ids"], self.reject[idx]["attention_mask"]

+

+# Anthropic/hh-rlhf

+class HhRlhfDataset(Dataset):

+ """

+ Dataset for reward model

+

+ Args:

+ dataset: dataset for reward model

+ tokenizer: tokenizer for reward model

+ max_length: max length of input

+ special_token: special token at the end of sentence

+ """

+ def __init__(self, dataset, tokenizer: Callable, max_length: int, special_token=None) -> None:

+ super().__init__()

+ self.chosen = []

+ self.reject = []

+ if special_token is None:

+ self.end_token = tokenizer.eos_token

+ else:

+ self.end_token = special_token

+ for data in tqdm(dataset, disable=not is_rank_0()):

+ chosen = data['chosen'] + self.end_token

+ chosen_token = tokenizer(chosen,

+ max_length=max_length,

+ padding="max_length",

+ truncation=True,

+ return_tensors="pt")

+ self.chosen.append({

+ "input_ids": chosen_token['input_ids'],

+ "attention_mask": chosen_token['attention_mask']

+ })

+

+ reject = data['rejected'] + self.end_token

+ reject_token = tokenizer(reject,

+ max_length=max_length,

+ padding="max_length",

+ truncation=True,

+ return_tensors="pt")

+ self.reject.append({

+ "input_ids": reject_token['input_ids'],

+ "attention_mask": reject_token['attention_mask']

+ })

+

+ def __len__(self):

+ length = len(self.chosen)

+ return length

+

+ def __getitem__(self, idx):

+ return self.chosen[idx]["input_ids"], self.chosen[idx]["attention_mask"], self.reject[idx][

+ "input_ids"], self.reject[idx]["attention_mask"]

diff --git a/applications/ChatGPT/chatgpt/dataset/sft_dataset.py b/applications/ChatGPT/chatgpt/dataset/sft_dataset.py

new file mode 100644

index 000000000000..11ec61908aef

--- /dev/null

+++ b/applications/ChatGPT/chatgpt/dataset/sft_dataset.py

@@ -0,0 +1,163 @@

+# Copyright 2023 Rohan Taori, Ishaan Gulrajani, Tianyi Zhang, Yann Dubois, Xuechen Li

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+import copy

+from dataclasses import dataclass, field

+from typing import Callable, Dict, Sequence

+import random

+from torch.utils.data import Dataset

+import torch.distributed as dist

+from tqdm import tqdm

+import torch

+

+from .utils import is_rank_0, jload

+

+import transformers

+from colossalai.logging import get_dist_logger

+

+logger = get_dist_logger()

+

+IGNORE_INDEX = -100

+PROMPT_DICT = {

+ "prompt_input": (

+ "Below is an instruction that describes a task, paired with an input that provides further context. "

+ "Write a response that appropriately completes the request.\n\n"

+ "### Instruction:\n{instruction}\n\n### Input:\n{input}\n\n### Response:"

+ ),

+ "prompt_no_input": (

+ "Below is an instruction that describes a task. "

+ "Write a response that appropriately completes the request.\n\n"

+ "### Instruction:\n{instruction}\n\n### Response:"

+ ),

+}

+

+class SFTDataset(Dataset):

+ """

+ Dataset for sft model

+

+ Args:

+ dataset: dataset for supervised model

+ tokenizer: tokenizer for supervised model

+ max_length: max length of input

+ """

+

+ def __init__(self, dataset, tokenizer: Callable, max_length: int=512) -> None:

+ super().__init__()

+ # self.prompts = []

+ self.input_ids = []

+

+ for data in tqdm(dataset, disable=not is_rank_0()):

+ prompt = data['prompt'] + data['completion'] + "<|endoftext|>"

+ prompt_token = tokenizer(prompt,

+ max_length=max_length,

+ padding="max_length",

+ truncation=True,

+ return_tensors="pt")

+

+ # keep only the token ids of the single encoded sample, not the whole tokenizer output

+ self.input_ids.append(prompt_token['input_ids'][0])

+ self.labels = copy.deepcopy(self.input_ids)

+

+ def __len__(self):

+ length = len(self.input_ids)

+ return length

+

+ def __getitem__(self, idx):

+ # dict(input_ids=self.input_ids[i], labels=self.labels[i])

+ return dict(input_ids=self.input_ids[idx], labels=self.labels[idx])

+ # return dict(self.prompts[idx], self.prompts[idx])

+

+

+def _tokenize_fn(strings: Sequence[str], tokenizer: transformers.PreTrainedTokenizer) -> Dict:

+ """Tokenize a list of strings."""

+ tokenized_list = [

+ tokenizer(

+ text,

+ return_tensors="pt",

+ padding="longest",

+ max_length=tokenizer.model_max_length,

+ truncation=True,

+ )

+ for text in strings

+ ]

+ input_ids = labels = [tokenized.input_ids[0] for tokenized in tokenized_list]

+ input_ids_lens = labels_lens = [

+ tokenized.input_ids.ne(tokenizer.pad_token_id).sum().item() for tokenized in tokenized_list

+ ]

+ return dict(

+ input_ids=input_ids,

+ labels=labels,

+ input_ids_lens=input_ids_lens,

+ labels_lens=labels_lens,

+ )

+

+def preprocess(

+ sources: Sequence[str],

+ targets: Sequence[str],

+ tokenizer: transformers.PreTrainedTokenizer,

+) -> Dict:

+ """Preprocess the data by tokenizing."""

+ examples = [s + t for s, t in zip(sources, targets)]

+ examples_tokenized, sources_tokenized = [_tokenize_fn(strings, tokenizer) for strings in (examples, sources)]

+ input_ids = examples_tokenized["input_ids"]

+ labels = copy.deepcopy(input_ids)

+ for label, source_len in zip(labels, sources_tokenized["input_ids_lens"]):

+ label[:source_len] = IGNORE_INDEX

+ return dict(input_ids=input_ids, labels=labels)

+

+class AlpacaDataset(Dataset):

+ """Dataset for supervised fine-tuning."""

+

+ def __init__(self, data_path: str, tokenizer: transformers.PreTrainedTokenizer):

+ super(AlpacaDataset, self).__init__()

+ logger.info("Loading data...")

+ list_data_dict = jload(data_path)

+

+ logger.info("Formatting inputs...")

+ prompt_input, prompt_no_input = PROMPT_DICT["prompt_input"], PROMPT_DICT["prompt_no_input"]

+ sources = [

+ prompt_input.format_map(example) if example.get("input", "") != "" else prompt_no_input.format_map(example)

+ for example in list_data_dict

+ ]

+ targets = [f"{example['output']}{tokenizer.eos_token}" for example in list_data_dict]

+

+ logger.info("Tokenizing inputs... This may take some time...")

+ data_dict = preprocess(sources, targets, tokenizer)

+

+ self.input_ids = data_dict["input_ids"]

+ self.labels = data_dict["labels"]

+

+ def __len__(self):

+ return len(self.input_ids)

+

+ def __getitem__(self, i) -> Dict[str, torch.Tensor]:

+ return dict(input_ids=self.input_ids[i], labels=self.labels[i])

+

+@dataclass

+class AlpacaDataCollator(object):

+ """Collate examples for supervised fine-tuning."""

+

+ tokenizer: transformers.PreTrainedTokenizer

+

+ def __call__(self, instances: Sequence[Dict]) -> Dict[str, torch.Tensor]:

+ input_ids, labels = tuple([instance[key] for instance in instances] for key in ("input_ids", "labels"))

+ input_ids = torch.nn.utils.rnn.pad_sequence(

+ input_ids, batch_first=True, padding_value=self.tokenizer.pad_token_id

+ )

+ labels = torch.nn.utils.rnn.pad_sequence(labels, batch_first=True, padding_value=IGNORE_INDEX)

+ return dict(

+ input_ids=input_ids,

+ labels=labels,

+ attention_mask=input_ids.ne(self.tokenizer.pad_token_id),

+ )
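
The label handling in `preprocess` and `AlpacaDataCollator` comes down to two steps: prompt tokens are overwritten with `IGNORE_INDEX` so they are excluded from the loss, and sequences in a batch are right-padded to the longest one. A minimal sketch with plain Python lists instead of tensors (`mask_prompt` and `pad_batch` are hypothetical helpers used only for illustration):

```python
from typing import List

IGNORE_INDEX = -100  # same sentinel value as in sft_dataset.py


def mask_prompt(input_ids: List[int], source_len: int) -> List[int]:
    """Copy input_ids and hide the first source_len (prompt) tokens from the loss."""
    labels = list(input_ids)
    n = min(source_len, len(labels))
    labels[:n] = [IGNORE_INDEX] * n
    return labels


def pad_batch(seqs: List[List[int]], pad_value: int) -> List[List[int]]:
    """Right-pad every sequence to the length of the longest one."""
    longest = max(len(s) for s in seqs)
    return [s + [pad_value] * (longest - len(s)) for s in seqs]


# Three prompt tokens followed by two response tokens.
labels = mask_prompt([5, 6, 7, 8, 9], source_len=3)
batch_labels = pad_batch([labels, [IGNORE_INDEX, 4]], pad_value=IGNORE_INDEX)
print(labels)
print(batch_labels)
```

Padding labels with `IGNORE_INDEX` rather than the tokenizer's pad id keeps padded positions out of the loss as well, which matches what the collator does with `padding_value=IGNORE_INDEX`.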

diff --git a/applications/ChatGPT/chatgpt/dataset/utils.py b/applications/ChatGPT/chatgpt/dataset/utils.py

new file mode 100644

index 000000000000..0e88cc8c39b4

--- /dev/null

+++ b/applications/ChatGPT/chatgpt/dataset/utils.py

@@ -0,0 +1,20 @@

+import io

+import json

+

+import torch.distributed as dist

+

+

+def is_rank_0() -> bool:

+ return not dist.is_initialized() or dist.get_rank() == 0

+

+def _make_r_io_base(f, mode: str):

+ if not isinstance(f, io.IOBase):

+ f = open(f, mode=mode)

+ return f

+

+def jload(f, mode="r"):

+ """Load a .json file into a dictionary."""

+ f = _make_r_io_base(f, mode)

+ jdict = json.load(f)

+ f.close()

+ return jdict

\ No newline at end of file

diff --git a/applications/ChatGPT/chatgpt/experience_maker/__init__.py b/applications/ChatGPT/chatgpt/experience_maker/__init__.py

new file mode 100644

index 000000000000..39ca7576b227

--- /dev/null

+++ b/applications/ChatGPT/chatgpt/experience_maker/__init__.py

@@ -0,0 +1,4 @@

+from .base import Experience, ExperienceMaker

+from .naive import NaiveExperienceMaker

+

+__all__ = ['Experience', 'ExperienceMaker', 'NaiveExperienceMaker']

diff --git a/applications/ChatGPT/chatgpt/experience_maker/base.py b/applications/ChatGPT/chatgpt/experience_maker/base.py

new file mode 100644

index 000000000000..f3640fc1e496

--- /dev/null

+++ b/applications/ChatGPT/chatgpt/experience_maker/base.py

@@ -0,0 +1,77 @@

+from abc import ABC, abstractmethod

+from dataclasses import dataclass

+from typing import Optional

+

+import torch

+import torch.nn as nn

+from chatgpt.models.base import Actor

+

+

+@dataclass

+class Experience:

+ """Experience is a batch of data.

+ These data should have the same sequence length and number of actions.

+ Left padding for sequences is applied.

+

+ Shapes of each tensor:

+ sequences: (B, S)

+ action_log_probs: (B, A)

+ values: (B)

+ reward: (B)

+ advantages: (B)

+ attention_mask: (B, S)

+ action_mask: (B, A)

+

+ "A" is the number of actions.

+ """

+ sequences: torch.Tensor

+ action_log_probs: torch.Tensor

+ values: torch.Tensor

+ reward: torch.Tensor

+ advantages: torch.Tensor

+ attention_mask: Optional[torch.LongTensor]

+ action_mask: Optional[torch.BoolTensor]

+

+ @torch.no_grad()

+ def to_device(self, device: torch.device) -> None:

+ self.sequences = self.sequences.to(device)

+ self.action_log_probs = self.action_log_probs.to(device)

+ self.values = self.values.to(device)

+ self.reward = self.reward.to(device)

+ self.advantages = self.advantages.to(device)

+ if self.attention_mask is not None:

+ self.attention_mask = self.attention_mask.to(device)

+ if self.action_mask is not None:

+ self.action_mask = self.action_mask.to(device)

+

+ def pin_memory(self):

+ self.sequences = self.sequences.pin_memory()

+ self.action_log_probs = self.action_log_probs.pin_memory()

+ self.values = self.values.pin_memory()

+ self.reward = self.reward.pin_memory()

+ self.advantages = self.advantages.pin_memory()

+ if self.attention_mask is not None:

+ self.attention_mask = self.attention_mask.pin_memory()

+ if self.action_mask is not None:

+ self.action_mask = self.action_mask.pin_memory()

+ return self

+

+

+class ExperienceMaker(ABC):

+

+ def __init__(self,

+ actor: Actor,

+ critic: nn.Module,

+ reward_model: nn.Module,

+ initial_model: Actor,

+ kl_coef: float = 0.1) -> None:

+ super().__init__()

+ self.actor = actor

+ self.critic = critic

+ self.reward_model = reward_model

+ self.initial_model = initial_model

+ self.kl_coef = kl_coef

+

+ @abstractmethod

+ def make_experience(self, input_ids: torch.Tensor, **generate_kwargs) -> Experience:

+ pass

diff --git a/applications/ChatGPT/chatgpt/experience_maker/naive.py b/applications/ChatGPT/chatgpt/experience_maker/naive.py

new file mode 100644

index 000000000000..64835cfa1918

--- /dev/null

+++ b/applications/ChatGPT/chatgpt/experience_maker/naive.py

@@ -0,0 +1,36 @@

+import torch

+from chatgpt.models.utils import compute_reward, normalize

+

+from .base import Experience, ExperienceMaker

+

+

+class NaiveExperienceMaker(ExperienceMaker):

+ """

+ Naive experience maker.

+ """

+

+ @torch.no_grad()

+ def make_experience(self, input_ids: torch.Tensor, **generate_kwargs) -> Experience:

+ self.actor.eval()

+ self.critic.eval()

+ self.initial_model.eval()

+ self.reward_model.eval()

+

+ sequences, attention_mask, action_mask = self.actor.generate(input_ids,

+ return_action_mask=True,

+ **generate_kwargs)

+ num_actions = action_mask.size(1)

+

+ action_log_probs = self.actor(sequences, num_actions, attention_mask)

+ base_action_log_probs = self.initial_model(sequences, num_actions, attention_mask)

+ value = self.critic(sequences, action_mask, attention_mask)

+ r = self.reward_model(sequences, attention_mask)

+

+ reward = compute_reward(r, self.kl_coef, action_log_probs, base_action_log_probs, action_mask=action_mask)

+

+ advantage = reward - value

+ # TODO(ver217): maybe normalize adv

+ if advantage.ndim == 1:

+ advantage = advantage.unsqueeze(-1)

+

+ return Experience(sequences, action_log_probs, value, reward, advantage, attention_mask, action_mask)
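
`make_experience` combines the reward-model score with the actor and initial-model log-probabilities through `compute_reward`, then subtracts the critic's value to get the advantage. The body of `compute_reward` is not part of this hunk, so the following is only a sketch of one common KL-penalized formulation, written on plain Python lists for a single sample. The name `kl_penalized_reward` and the masked-mean estimate of the KL term are assumptions, not the library's implementation.

```python
from typing import List


def kl_penalized_reward(r: float, kl_coef: float, log_probs: List[float],
                        base_log_probs: List[float], action_mask: List[bool]) -> float:
    """Reward-model score minus kl_coef times an approximate KL term.

    The KL term is estimated as the mean of (log_prob - base_log_prob)
    over the positions where action_mask is True (an assumed estimator).
    """
    diffs = [lp - blp for lp, blp, m in zip(log_probs, base_log_probs, action_mask) if m]
    approx_kl = sum(diffs) / len(diffs) if diffs else 0.0
    return r - kl_coef * approx_kl


# One sample with three action positions; the last one is masked out.
reward = kl_penalized_reward(
    r=1.0,
    kl_coef=0.1,
    log_probs=[-1.0, -2.0, -3.0],
    base_log_probs=[-1.5, -2.5, -9.0],
    action_mask=[True, True, False],
)
value = 0.5                 # hypothetical critic output
advantage = reward - value  # same subtraction as in make_experience
```

With these numbers the two unmasked differences are both 0.5, so the penalty is 0.1 × 0.5 = 0.05, giving a reward of about 0.95 and an advantage of about 0.45.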

diff --git a/applications/ChatGPT/chatgpt/models/__init__.py b/applications/ChatGPT/chatgpt/models/__init__.py

new file mode 100644

index 000000000000..b274188a21df

--- /dev/null

+++ b/applications/ChatGPT/chatgpt/models/__init__.py

@@ -0,0 +1,4 @@

+from .base import Actor, Critic, RewardModel

+from .loss import PolicyLoss, PPOPtxActorLoss, ValueLoss, LogSigLoss, LogExpLoss

+

+__all__ = ['Actor', 'Critic', 'RewardModel', 'PolicyLoss', 'ValueLoss', 'PPOPtxActorLoss', 'LogSigLoss', 'LogExpLoss']

diff --git a/applications/ChatGPT/chatgpt/models/base/__init__.py b/applications/ChatGPT/chatgpt/models/base/__init__.py

new file mode 100644

index 000000000000..7c7b1ceba257

--- /dev/null

+++ b/applications/ChatGPT/chatgpt/models/base/__init__.py

@@ -0,0 +1,6 @@

+from .actor import Actor

+from .critic import Critic

+from .reward_model import RewardModel

+from .lm import LM

+

+__all__ = ['Actor', 'Critic', 'RewardModel', 'LM']

diff --git a/applications/ChatGPT/chatgpt/models/base/actor.py b/applications/ChatGPT/chatgpt/models/base/actor.py

new file mode 100644

index 000000000000..57db2bb11a6a

--- /dev/null

+++ b/applications/ChatGPT/chatgpt/models/base/actor.py

@@ -0,0 +1,62 @@

+from typing import Optional, Tuple, Union

+

+import torch

+import torch.nn as nn

+import torch.nn.functional as F

+

+from ..generation import generate

+from ..lora import LoRAModule

+from ..utils import log_probs_from_logits

+

+

+class Actor(LoRAModule):

+ """

+ Actor model base class.

+

+ Args:

+ model (nn.Module): Actor Model.

+ lora_rank (int): LoRA rank.

+ lora_train_bias (str): LoRA bias training mode.

+ """

+

+ def __init__(self, model: nn.Module, lora_rank: int = 0, lora_train_bias: str = 'none') -> None:

+ super().__init__(lora_rank=lora_rank, lora_train_bias=lora_train_bias)

+ self.model = model

+ self.convert_to_lora()

+

+ @torch.no_grad()

+ def generate(

+ self,

+ input_ids: torch.Tensor,

+ return_action_mask: bool = True,

+ **kwargs

+ ) -> Union[Tuple[torch.LongTensor, torch.LongTensor], Tuple[torch.LongTensor, torch.LongTensor, torch.BoolTensor]]:

+ sequences = generate(self.model, input_ids, **kwargs)

+ attention_mask = None

+ pad_token_id = kwargs.get('pad_token_id', None)

+ if pad_token_id is not None:

+ attention_mask = sequences.not_equal(pad_token_id).to(dtype=torch.long, device=sequences.device)

+ if not return_action_mask:

+ return sequences, attention_mask, None

+ input_len = input_ids.size(1)

+ eos_token_id = kwargs.get('eos_token_id', None)

+ if eos_token_id is None:

+ action_mask = torch.ones_like(sequences, dtype=torch.bool)

+ else:

+ # left padding may be applied, only mask action