diff --git a/.github/workflows/report_test_coverage.yml b/.github/workflows/report_test_coverage.yml

index d9b131fd994c..c9dc541b8a33 100644

--- a/.github/workflows/report_test_coverage.yml

+++ b/.github/workflows/report_test_coverage.yml

@@ -9,6 +9,7 @@ on:

jobs:

report-test-coverage:

runs-on: ubuntu-latest

+ if: ${{ github.event.workflow_run.conclusion == 'success' }}

steps:

- name: "Download artifact"

uses: actions/github-script@v6

diff --git a/.github/workflows/scripts/generate_leaderboard_and_send_to_lark.py b/.github/workflows/scripts/generate_leaderboard_and_send_to_lark.py

index d8f6c8fe309e..2884e38dd3dd 100644

--- a/.github/workflows/scripts/generate_leaderboard_and_send_to_lark.py

+++ b/.github/workflows/scripts/generate_leaderboard_and_send_to_lark.py

@@ -1,5 +1,4 @@

import os

-from dataclasses import dataclass

from datetime import datetime, timedelta

from typing import Any, Dict, List

@@ -10,8 +9,7 @@

from requests_toolbelt import MultipartEncoder

-@dataclass

-class Contributor:

+class Counter(dict):

"""

Dataclass for a github contributor.

@@ -19,8 +17,40 @@ class Contributor:

name (str): name of the contributor

num_commits_this_week (int): number of commits made within one week

"""

- name: str

- num_commits_this_week: int

+

+ def record(self, item: str):

+ if item in self:

+ self[item] += 1

+ else:

+ self[item] = 1

+

+ def to_sorted_list(self):

+ data = [(key, value) for key, value in self.items()]

+ data.sort(key=lambda x: x[1], reverse=True)

+ return data

+

+

+def get_utc_time_one_week_ago():

+ """

+ Get the UTC time one week ago.

+ """

+ now = datetime.utcnow()

+ start_datetime = now - timedelta(days=7)

+ return start_datetime

+

+

+def datetime2str(dt):

+ """

+ Convert datetime to string in the format of YYYY-MM-DDTHH:MM:SSZ

+ """

+ return dt.strftime("%Y-%m-%dT%H:%M:%SZ")

+

+

+def str2datetime(string):

+ """

+ Convert string in the format of YYYY-MM-DDTHH:MM:SSZ to datetime

+ """

+ return datetime.strptime(string, "%Y-%m-%dT%H:%M:%SZ")

def plot_bar_chart(x: List[Any], y: List[Any], xlabel: str, ylabel: str, title: str, output_path: str) -> None:

@@ -36,7 +66,28 @@ def plot_bar_chart(x: List[Any], y: List[Any], xlabel: str, ylabel: str, title:

plt.savefig(output_path, dpi=1200)

-def get_issue_pull_request_comments(github_token: str, since: str) -> Dict[str, int]:

+def get_organization_repositories(github_token, organization_name) -> List[str]:

+ """

+ Retrieve the public repositories under the organization.

+ """

+ url = f"https://api.github.com/orgs/{organization_name}/repos?type=public"

+

+ # prepare header

+ headers = {

+ 'Authorization': f'Bearer {github_token}',

+ 'Accept': 'application/vnd.github+json',

+ 'X-GitHub-Api-Version': '2022-11-28'

+ }

+

+ res = requests.get(url, headers=headers).json()

+ repo_list = []

+

+ for item in res:

+ repo_list.append(item['name'])

+ return repo_list

+

+

+def get_issue_pull_request_comments(github_token: str, org_name: str, repo_name: str, since: str) -> Dict[str, int]:

"""

Retrieve the issue/PR comments made by our members in the last 7 days.

@@ -56,7 +107,7 @@ def get_issue_pull_request_comments(github_token: str, since: str) -> Dict[str,

# do pagination to the API

page = 1

while True:

- comment_api = f'https://api.github.com/repos/hpcaitech/ColossalAI/issues/comments?since={since}&page={page}'

+ comment_api = f'https://api.github.com/repos/{org_name}/{repo_name}/issues/comments?since={since}&page={page}'

comment_response = requests.get(comment_api, headers=headers).json()

if len(comment_response) == 0:

@@ -70,7 +121,7 @@ def get_issue_pull_request_comments(github_token: str, since: str) -> Dict[str,

continue

issue_id = item['issue_url'].split('/')[-1]

- issue_api = f'https://api.github.com/repos/hpcaitech/ColossalAI/issues/{issue_id}'

+ issue_api = f'https://api.github.com/repos/{org_name}/{repo_name}/issues/{issue_id}'

issue_response = requests.get(issue_api, headers=headers).json()

issue_author_relationship = issue_response['author_association']

@@ -87,7 +138,7 @@ def get_issue_pull_request_comments(github_token: str, since: str) -> Dict[str,

return user_engagement_count

-def get_discussion_comments(github_token, since) -> Dict[str, int]:

+def get_discussion_comments(github_token: str, org_name: str, repo_name: str, since: str) -> Dict[str, int]:

"""

Retrieve the discussion comments made by our members in the last 7 days.

This is only available via the GitHub GraphQL API.

@@ -105,7 +156,7 @@ def _generate_discussion_query(num, cursor: str = None):

offset_str = f", after: \"{cursor}\""

query = f"""

{{

- repository(owner: "hpcaitech", name: "ColossalAI"){{

+ repository(owner: "{org_name}", name: "{repo_name}"){{

discussions(first: {num} {offset_str}){{

edges {{

cursor

@@ -134,7 +185,7 @@ def _generate_comment_reply_count_for_discussion(discussion_number, num, cursor:

offset_str = f", before: \"{cursor}\""

query = f"""

{{

- repository(owner: "hpcaitech", name: "ColossalAI"){{

+ repository(owner: "{org_name}", name: "{repo_name}"){{

discussion(number: {discussion_number}){{

title

comments(last: {num} {offset_str}){{

@@ -191,8 +242,8 @@ def _call_graphql_api(query):

for edge in edges:

# print the discussion title

discussion = edge['node']

+ discussion_updated_at = str2datetime(discussion['updatedAt'])

- discussion_updated_at = datetime.strptime(discussion['updatedAt'], "%Y-%m-%dT%H:%M:%SZ")

# check if the updatedAt is within the last 7 days

# if yes, add it to discussion_numbers

if discussion_updated_at > since:

@@ -250,6 +301,7 @@ def _call_graphql_api(query):

if reply['authorAssociation'] == 'MEMBER':

# check if the updatedAt is within the last 7 days

# if yes, add it to discussion_numbers

+

reply_updated_at = datetime.strptime(reply['updatedAt'], "%Y-%m-%dT%H:%M:%SZ")

if reply_updated_at > since:

member_name = reply['author']['login']

@@ -260,7 +312,7 @@ def _call_graphql_api(query):

return user_engagement_count

-def generate_user_engagement_leaderboard_image(github_token: str, output_path: str) -> bool:

+def generate_user_engagement_leaderboard_image(github_token: str, org_name: str, repo_list: List[str], output_path: str) -> bool:

"""

Generate the user engagement leaderboard image for stats within the last 7 days

@@ -270,23 +322,29 @@ def generate_user_engagement_leaderboard_image(github_token: str, output_path: s

"""

# request to the Github API to get the users who have replied the most in the last 7 days

- now = datetime.utcnow()

- start_datetime = now - timedelta(days=7)

- start_datetime_str = start_datetime.strftime("%Y-%m-%dT%H:%M:%SZ")

+ start_datetime = get_utc_time_one_week_ago()

+ start_datetime_str = datetime2str(start_datetime)

# get the issue/PR comments and discussion comment count

- issue_pr_engagement_count = get_issue_pull_request_comments(github_token=github_token, since=start_datetime_str)

- discussion_engagement_count = get_discussion_comments(github_token=github_token, since=start_datetime)

total_engagement_count = {}

- # update the total engagement count

- total_engagement_count.update(issue_pr_engagement_count)

- for name, count in discussion_engagement_count.items():

- if name in total_engagement_count:

- total_engagement_count[name] += count

- else:

- total_engagement_count[name] = count

+ def _update_count(counter):

+ for name, count in counter.items():

+ if name in total_engagement_count:

+ total_engagement_count[name] += count

+ else:

+ total_engagement_count[name] = count

+

+ for repo_name in repo_list:

+ print(f"Fetching user engagement count for {repo_name}/{repo_name}")

+ issue_pr_engagement_count = get_issue_pull_request_comments(github_token=github_token, org_name=org_name, repo_name=repo_name, since=start_datetime_str)

+ discussion_engagement_count = get_discussion_comments(github_token=github_token, org_name=org_name, repo_name=repo_name, since=start_datetime)

+

+ # update the total engagement count

+ _update_count(issue_pr_engagement_count)

+ _update_count(discussion_engagement_count)

+

# prepare the data for plotting

x = []

y = []

@@ -302,9 +360,6 @@ def generate_user_engagement_leaderboard_image(github_token: str, output_path: s

x.append(count)

y.append(name)

- # use Shanghai time to display on the image

- start_datetime_str = datetime.now(pytz.timezone('Asia/Shanghai')).strftime("%Y-%m-%dT%H:%M:%SZ")

-

# plot the leaderboard

xlabel = f"Number of Comments made (since {start_datetime_str})"

ylabel = "Member"

@@ -315,7 +370,7 @@ def generate_user_engagement_leaderboard_image(github_token: str, output_path: s

return False

-def generate_contributor_leaderboard_image(github_token, output_path) -> bool:

+def generate_contributor_leaderboard_image(github_token, org_name, repo_list, output_path) -> bool:

"""

Generate the contributor leaderboard image for stats within the last 7 days

@@ -324,54 +379,81 @@ def generate_contributor_leaderboard_image(github_token, output_path) -> bool:

output_path (str): the path to save the image

"""

# request to the Github API to get the users who have contributed in the last 7 days

- URL = 'https://api.github.com/repos/hpcaitech/ColossalAI/stats/contributors'

headers = {

'Authorization': f'Bearer {github_token}',

'Accept': 'application/vnd.github+json',

'X-GitHub-Api-Version': '2022-11-28'

}

- while True:

- response = requests.get(URL, headers=headers).json()

+ counter = Counter()

+ start_datetime = get_utc_time_one_week_ago()

- if len(response) != 0:

- # sometimes the Github API returns empty response for unknown reason

- # request again if the response is empty

- break

+ def _get_url(org_name, repo_name, page):

+ return f'https://api.github.com/repos/{org_name}/{repo_name}/pulls?per_page=50&page={page}&state=closed'

+

+ def _iterate_by_page(org_name, repo_name):

+ page = 1

+ stop = False

+

+ while not stop:

+ print(f"Fetching pull request data for {org_name}/{repo_name} - page{page}")

+ url = _get_url(org_name, repo_name, page)

- contributor_list = []

+ while True:

+ response = requests.get(url, headers=headers).json()

- # get number of commits for each contributor

- start_timestamp = None

- for item in response:

- num_commits_this_week = item['weeks'][-1]['c']

- name = item['author']['login']

- contributor = Contributor(name=name, num_commits_this_week=num_commits_this_week)

- contributor_list.append(contributor)

+ if isinstance(response, list):

+ # sometimes the Github API returns nothing

+ # request again if the response is not a list

+ break

+ print("Empty response, request again...")

- # update start_timestamp

- start_timestamp = item['weeks'][-1]['w']

+ if len(response) == 0:

+ # if the response is empty, stop

+ stop = True

+ break

+

+ # count the pull request and author from response

+ for pr_data in response:

+ merged_at = pr_data['merged_at']

+ author = pr_data['user']['login']

+

+ if merged_at is None:

+ continue

+

+ merge_datetime = str2datetime(merged_at)

+

+ if merge_datetime < start_datetime:

+ # if we found a pull request that is merged before the start_datetime

+ # we stop

+ stop = True

+ break

+ else:

+ # record the author1

+ counter.record(author)

+

+ # next page

+ page += 1

+

+ for repo_name in repo_list:

+ _iterate_by_page(org_name, repo_name)

# convert unix timestamp to Beijing datetime

- start_datetime = datetime.fromtimestamp(start_timestamp, tz=pytz.timezone('Asia/Shanghai'))

- start_datetime_str = start_datetime.strftime("%Y-%m-%dT%H:%M:%SZ")

+ bj_start_datetime = datetime.fromtimestamp(start_datetime.timestamp(), tz=pytz.timezone('Asia/Shanghai'))

+ bj_start_datetime_str = datetime2str(bj_start_datetime)

- # sort by number of commits

- contributor_list.sort(key=lambda x: x.num_commits_this_week, reverse=True)

+ contribution_list = counter.to_sorted_list()

# remove contributors who has zero commits

- contributor_list = [x for x in contributor_list if x.num_commits_this_week > 0]

-

- # prepare the data for plotting

- x = [x.num_commits_this_week for x in contributor_list]

- y = [x.name for x in contributor_list]

+ author_list = [x[0] for x in contribution_list]

+ num_commit_list = [x[1] for x in contribution_list]

# plot

- if len(x) > 0:

- xlabel = f"Number of Commits (since {start_datetime_str})"

+ if len(author_list) > 0:

+ xlabel = f"Number of Pull Requests (since {bj_start_datetime_str})"

ylabel = "Contributor"

title = 'Active Contributor Leaderboard'

- plot_bar_chart(x, y, xlabel=xlabel, ylabel=ylabel, title=title, output_path=output_path)

+ plot_bar_chart(num_commit_list, author_list, xlabel=xlabel, ylabel=ylabel, title=title, output_path=output_path)

return True

else:

return False

@@ -438,10 +520,14 @@ def send_message_to_lark(message: str, webhook_url: str):

GITHUB_TOKEN = os.environ['GITHUB_TOKEN']

CONTRIBUTOR_IMAGE_PATH = 'contributor_leaderboard.png'

USER_ENGAGEMENT_IMAGE_PATH = 'engagement_leaderboard.png'

+ ORG_NAME = "hpcaitech"

+

+ # get all open source repositories

+ REPO_LIST = get_organization_repositories(GITHUB_TOKEN, ORG_NAME)

# generate images

- contrib_success = generate_contributor_leaderboard_image(GITHUB_TOKEN, CONTRIBUTOR_IMAGE_PATH)

- engagement_success = generate_user_engagement_leaderboard_image(GITHUB_TOKEN, USER_ENGAGEMENT_IMAGE_PATH)

+ contrib_success = generate_contributor_leaderboard_image(GITHUB_TOKEN, ORG_NAME, REPO_LIST, CONTRIBUTOR_IMAGE_PATH)

+ engagement_success = generate_user_engagement_leaderboard_image(GITHUB_TOKEN, ORG_NAME, REPO_LIST, USER_ENGAGEMENT_IMAGE_PATH)

# upload images

APP_ID = os.environ['LARK_APP_ID']

@@ -457,8 +543,8 @@ def send_message_to_lark(message: str, webhook_url: str):

2. 用户互动榜单

注:

-- 开发贡献者测评标准为:本周由公司成员提交的commit次数

-- 用户互动榜单测评标准为:本周由公司成员在非成员创建的issue/PR/discussion中回复的次数

+- 开发贡献者测评标准为:本周由公司成员与社区在所有开源仓库提交的Pull Request次数

+- 用户互动榜单测评标准为:本周由公司成员在非成员在所有开源仓库创建的issue/PR/discussion中回复的次数

"""

send_message_to_lark(message, LARK_WEBHOOK_URL)

@@ -467,7 +553,7 @@ def send_message_to_lark(message: str, webhook_url: str):

if contrib_success:

send_image_to_lark(contributor_image_key, LARK_WEBHOOK_URL)

else:

- send_message_to_lark("本周没有成员贡献commit,无榜单图片生成。", LARK_WEBHOOK_URL)

+ send_message_to_lark("本周没有成员贡献PR,无榜单图片生成。", LARK_WEBHOOK_URL)

# send user engagement image to lark

if engagement_success:

diff --git a/applications/Chat/README.md b/applications/Chat/README.md

index 29cd581d7cc9..016272ed8c89 100644

--- a/applications/Chat/README.md

+++ b/applications/Chat/README.md

@@ -83,7 +83,7 @@ More details can be found in the latest news.

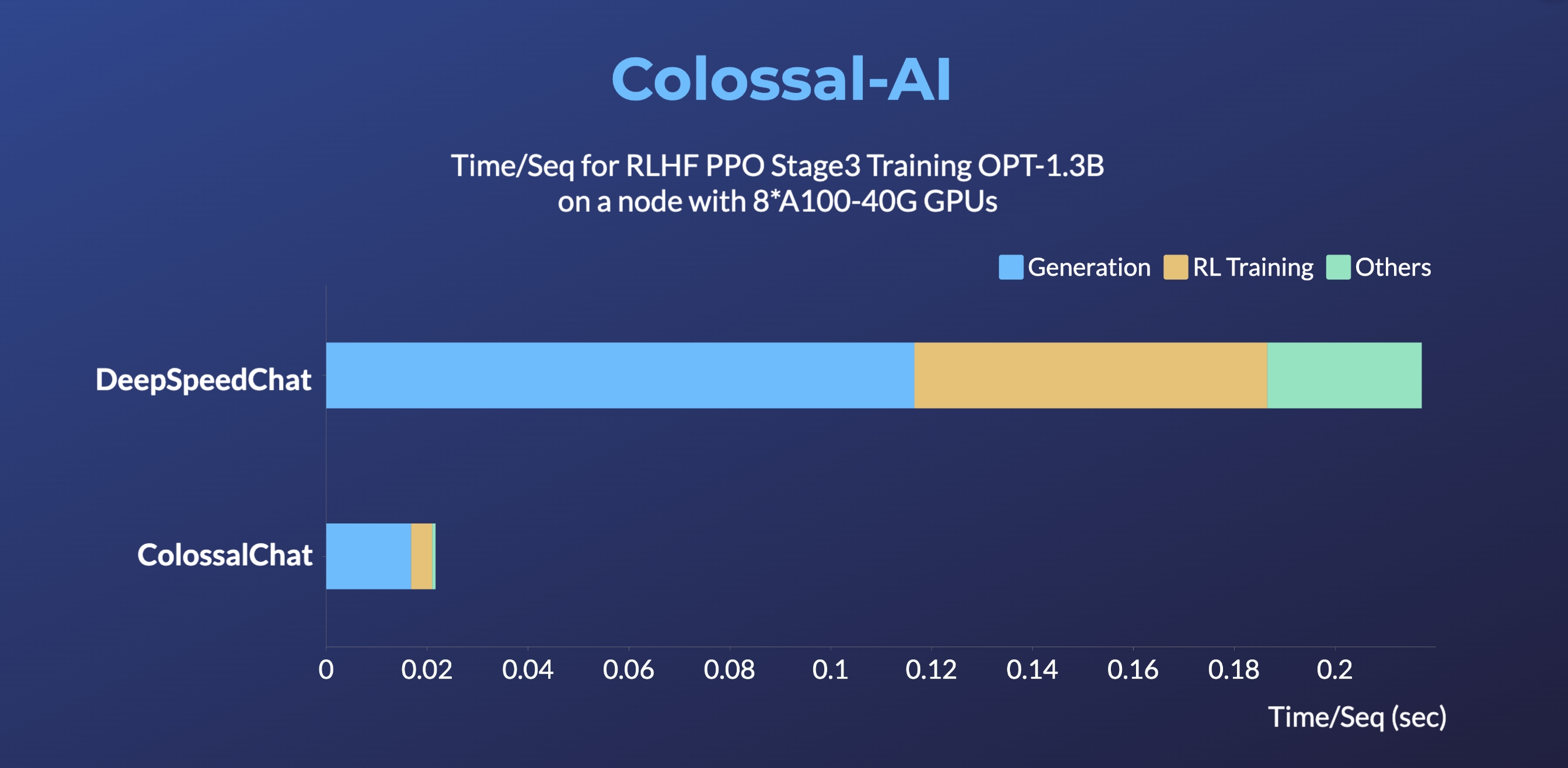

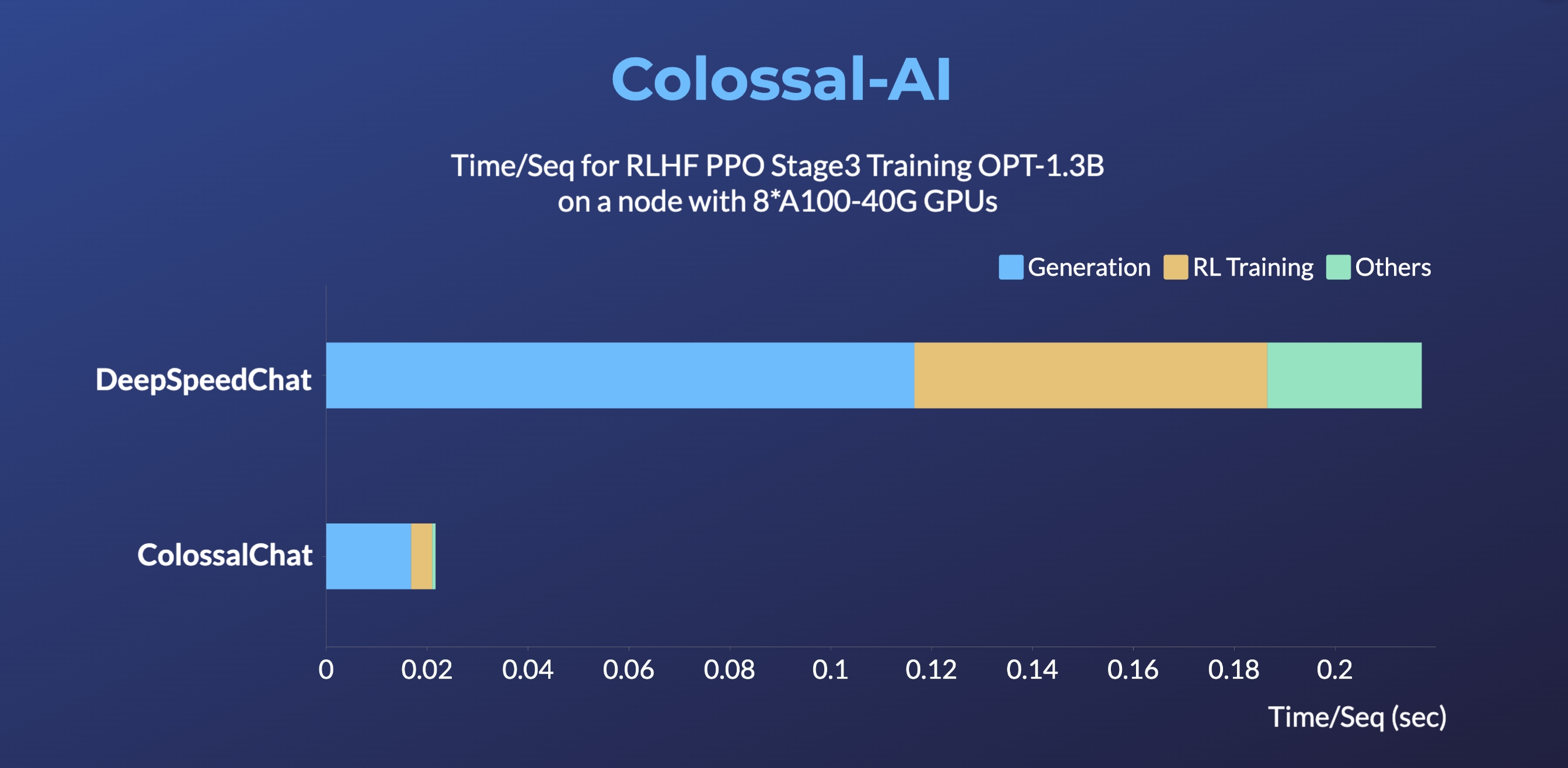

-> DeepSpeedChat performance comes from its blog on 2023 April 12, ColossalChat performance can be reproduced on an AWS p4d.24xlarge node with 8 A100-40G GPUs with the following command: torchrun --standalone --nproc_per_node 8 benchmark_opt_lora_dummy.py --max_timesteps 1 --update_timesteps 1 --use_kernels --strategy colossalai_zero2 --experience_batch_size 64 --train_batch_size 32

+> DeepSpeedChat performance comes from its blog on 2023 April 12, ColossalChat performance can be reproduced on an AWS p4d.24xlarge node with 8 A100-40G GPUs with the following command: torchrun --standalone --nproc_per_node 8 benchmark_opt_lora_dummy.py --num_collect_steps 1 --use_kernels --strategy colossalai_zero2 --experience_batch_size 64 --train_batch_size 32

## Install

@@ -287,7 +287,7 @@ If you only have a single 24G GPU, you can use the following script. `batch_size

torchrun --standalone --nproc_per_node=1 train_sft.py \

--pretrain "/path/to/LLaMa-7B/" \

--model 'llama' \

- --strategy naive \

+ --strategy ddp \

--log_interval 10 \

--save_path /path/to/Coati-7B \

--dataset /path/to/data.json \

diff --git a/applications/Chat/benchmarks/benchmark_opt_lora_dummy.py b/applications/Chat/benchmarks/benchmark_opt_lora_dummy.py

index 7a47624f74d8..90471ed727b0 100644

--- a/applications/Chat/benchmarks/benchmark_opt_lora_dummy.py

+++ b/applications/Chat/benchmarks/benchmark_opt_lora_dummy.py

@@ -8,7 +8,7 @@

from coati.models.opt import OPTActor, OPTCritic

from coati.trainer import PPOTrainer

from coati.trainer.callbacks import PerformanceEvaluator

-from coati.trainer.strategies import ColossalAIStrategy, DDPStrategy, Strategy

+from coati.trainer.strategies import DDPStrategy, GeminiStrategy, LowLevelZeroStrategy, Strategy

from torch.optim import Adam

from torch.utils.data import DataLoader

from transformers import AutoTokenizer

@@ -19,7 +19,7 @@

def get_model_numel(model: nn.Module, strategy: Strategy) -> int:

numel = sum(p.numel() for p in model.parameters())

- if isinstance(strategy, ColossalAIStrategy) and strategy.stage == 3 and strategy.shard_init:

+ if isinstance(strategy, GeminiStrategy) and strategy.shard_init:

numel *= dist.get_world_size()

return numel

@@ -76,17 +76,17 @@ def main(args):

if args.strategy == 'ddp':

strategy = DDPStrategy()

elif args.strategy == 'colossalai_gemini':

- strategy = ColossalAIStrategy(stage=3, placement_policy='cuda', initial_scale=2**5)

+ strategy = GeminiStrategy(placement_policy='cuda', initial_scale=2**5)

elif args.strategy == 'colossalai_gemini_cpu':

- strategy = ColossalAIStrategy(stage=3, placement_policy='cpu', initial_scale=2**5)

+ strategy = GeminiStrategy(placement_policy='cpu', initial_scale=2**5)

elif args.strategy == 'colossalai_zero2':

- strategy = ColossalAIStrategy(stage=2, placement_policy='cuda')

+ strategy = LowLevelZeroStrategy(stage=2, placement_policy='cuda')

elif args.strategy == 'colossalai_zero2_cpu':

- strategy = ColossalAIStrategy(stage=2, placement_policy='cpu')

+ strategy = LowLevelZeroStrategy(stage=2, placement_policy='cpu')

elif args.strategy == 'colossalai_zero1':

- strategy = ColossalAIStrategy(stage=1, placement_policy='cuda')

+ strategy = LowLevelZeroStrategy(stage=1, placement_policy='cuda')

elif args.strategy == 'colossalai_zero1_cpu':

- strategy = ColossalAIStrategy(stage=1, placement_policy='cpu')

+ strategy = LowLevelZeroStrategy(stage=1, placement_policy='cpu')

else:

raise ValueError(f'Unsupported strategy "{args.strategy}"')

@@ -135,6 +135,12 @@ def main(args):

(actor, actor_optim), (critic, critic_optim) = strategy.prepare((actor, actor_optim), (critic, critic_optim))

+ random_prompts = torch.randint(tokenizer.vocab_size, (1000, 256), device=torch.cuda.current_device())

+ dataloader = DataLoader(random_prompts,

+ batch_size=args.experience_batch_size,

+ shuffle=True,

+ collate_fn=preprocess_batch)

+

trainer = PPOTrainer(strategy,

actor,

critic,

@@ -143,7 +149,6 @@ def main(args):

actor_optim,

critic_optim,

ptx_coef=0,

- max_epochs=args.max_epochs,

train_batch_size=args.train_batch_size,

offload_inference_models=args.offload_inference_models,

max_length=512,

@@ -155,17 +160,11 @@ def main(args):

eos_token_id=tokenizer.eos_token_id,

callbacks=[performance_evaluator])

- random_prompts = torch.randint(tokenizer.vocab_size, (1000, 256), device=torch.cuda.current_device())

- dataloader = DataLoader(random_prompts,

- batch_size=args.experience_batch_size,

- shuffle=True,

- collate_fn=preprocess_batch)

-

- trainer.fit(dataloader,

- None,

+ trainer.fit(prompt_dataloader=dataloader,

+ pretrain_dataloader=None,

num_episodes=args.num_episodes,

- max_timesteps=args.max_timesteps,

- update_timesteps=args.update_timesteps)

+ num_update_steps=args.num_update_steps,

+ num_collect_steps=args.num_collect_steps)

print_rank_0(f'Peak CUDA mem: {torch.cuda.max_memory_allocated()/1024**3:.2f} GB')

@@ -181,9 +180,8 @@ def main(args):

],

default='ddp')

parser.add_argument('--num_episodes', type=int, default=3)

- parser.add_argument('--max_timesteps', type=int, default=8)

- parser.add_argument('--update_timesteps', type=int, default=8)

- parser.add_argument('--max_epochs', type=int, default=1)

+ parser.add_argument('--num_collect_steps', type=int, default=8)

+ parser.add_argument('--num_update_steps', type=int, default=1)

parser.add_argument('--train_batch_size', type=int, default=8)

parser.add_argument('--experience_batch_size', type=int, default=8)

parser.add_argument('--lora_rank', type=int, default=0)

diff --git a/applications/Chat/benchmarks/ray/1mmt_dummy.py b/applications/Chat/benchmarks/ray/1mmt_dummy.py

index 9e8f36cefc4f..7fc990448805 100644

--- a/applications/Chat/benchmarks/ray/1mmt_dummy.py

+++ b/applications/Chat/benchmarks/ray/1mmt_dummy.py

@@ -83,8 +83,8 @@ def model_fn():

env_info=env_info_maker,

kl_coef=0.1,

debug=args.debug,

- # sync_models_from_trainers=True,

- # generation kwargs:

+ # sync_models_from_trainers=True,

+ # generation kwargs:

max_length=512,

do_sample=True,

temperature=1.0,

@@ -153,10 +153,10 @@ def build_dataloader(size):

parser.add_argument('--num_trainers', type=int, default=1)

parser.add_argument('--trainer_strategy',

choices=[

- 'naive', 'ddp', 'colossalai_gemini', 'colossalai_zero2', 'colossalai_gemini_cpu',

+ 'ddp', 'colossalai_gemini', 'colossalai_zero2', 'colossalai_gemini_cpu',

'colossalai_zero2_cpu'

],

- default='naive')

+ default='ddp')

parser.add_argument('--maker_strategy', choices=['naive'], default='naive')

parser.add_argument('--model', default='gpt2', choices=['gpt2', 'bloom', 'opt', 'llama'])

parser.add_argument('--critic_model', default='gpt2', choices=['gpt2', 'bloom', 'opt', 'llama'])

diff --git a/applications/Chat/benchmarks/ray/mmmt_dummy.py b/applications/Chat/benchmarks/ray/mmmt_dummy.py

index 46a0062893b8..ca1df22070fc 100644

--- a/applications/Chat/benchmarks/ray/mmmt_dummy.py

+++ b/applications/Chat/benchmarks/ray/mmmt_dummy.py

@@ -87,8 +87,8 @@ def model_fn():

env_info=env_info_maker,

kl_coef=0.1,

debug=args.debug,

- # sync_models_from_trainers=True,

- # generation kwargs:

+ # sync_models_from_trainers=True,

+ # generation kwargs:

max_length=512,

do_sample=True,

temperature=1.0,

@@ -164,10 +164,10 @@ def build_dataloader(size):

parser.add_argument('--num_trainers', type=int, default=1)

parser.add_argument('--trainer_strategy',

choices=[

- 'naive', 'ddp', 'colossalai_gemini', 'colossalai_zero2', 'colossalai_gemini_cpu',

+ 'ddp', 'colossalai_gemini', 'colossalai_zero2', 'colossalai_gemini_cpu',

'colossalai_zero2_cpu'

],

- default='naive')

+ default='ddp')

parser.add_argument('--maker_strategy', choices=['naive'], default='naive')

parser.add_argument('--model', default='gpt2', choices=['gpt2', 'bloom', 'opt', 'llama'])

parser.add_argument('--critic_model', default='gpt2', choices=['gpt2', 'bloom', 'opt', 'llama'])

diff --git a/applications/Chat/coati/ray/detached_trainer_ppo.py b/applications/Chat/coati/ray/detached_trainer_ppo.py

index 5f0032716f93..2f2aa0e29579 100644

--- a/applications/Chat/coati/ray/detached_trainer_ppo.py

+++ b/applications/Chat/coati/ray/detached_trainer_ppo.py

@@ -6,7 +6,7 @@

from coati.models.base import Actor, Critic

from coati.models.loss import PolicyLoss, ValueLoss

from coati.trainer.callbacks import Callback

-from coati.trainer.strategies import ColossalAIStrategy, DDPStrategy, NaiveStrategy, Strategy

+from coati.trainer.strategies import DDPStrategy, GeminiStrategy, LowLevelZeroStrategy, Strategy

from torch.optim import Adam

from colossalai.nn.optimizer import HybridAdam

@@ -85,7 +85,7 @@ def __init__(

evaluator = TrainerPerformanceEvaluator(actor_numel, critic_numel)

callbacks = callbacks + [evaluator]

- if isinstance(self.strategy, ColossalAIStrategy):

+ if isinstance(self.strategy, (LowLevelZeroStrategy, GeminiStrategy)):

self.actor_optim = HybridAdam(self.actor.parameters(), lr=1e-7)

self.critic_optim = HybridAdam(self.critic.parameters(), lr=1e-7)

else:

diff --git a/applications/Chat/coati/ray/utils.py b/applications/Chat/coati/ray/utils.py

index 4361ee236771..4f8e0b8a87e9 100644

--- a/applications/Chat/coati/ray/utils.py

+++ b/applications/Chat/coati/ray/utils.py

@@ -1,6 +1,6 @@

import os

-from typing import Any, Callable, Dict, List, Optional

from collections import OrderedDict

+from typing import Any, Callable, Dict, List, Optional

import torch

import torch.distributed as dist

@@ -10,7 +10,7 @@

from coati.models.llama import LlamaActor, LlamaCritic, LlamaRM

from coati.models.opt import OPTRM, OPTActor, OPTCritic

from coati.models.roberta import RoBERTaActor, RoBERTaCritic, RoBERTaRM

-from coati.trainer.strategies import ColossalAIStrategy, DDPStrategy, NaiveStrategy

+from coati.trainer.strategies import DDPStrategy, GeminiStrategy, LowLevelZeroStrategy

from coati.utils import prepare_llama_tokenizer_and_embedding

from transformers import AutoTokenizer, BloomTokenizerFast, GPT2Tokenizer, LlamaTokenizer, RobertaTokenizer

@@ -76,18 +76,16 @@ def get_reward_model_from_args(model: str, pretrained: str = None, config=None):

def get_strategy_from_args(strategy: str):

- if strategy == 'naive':

- strategy_ = NaiveStrategy()

- elif strategy == 'ddp':

+ if strategy == 'ddp':

strategy_ = DDPStrategy()

elif strategy == 'colossalai_gemini':

- strategy_ = ColossalAIStrategy(stage=3, placement_policy='cuda', initial_scale=2**5)

+ strategy_ = GeminiStrategy(placement_policy='cuda', initial_scale=2**5)

elif strategy == 'colossalai_zero2':

- strategy_ = ColossalAIStrategy(stage=2, placement_policy='cuda')

+ strategy_ = LowLevelZeroStrategy(stage=2, placement_policy='cuda')

elif strategy == 'colossalai_gemini_cpu':

- strategy_ = ColossalAIStrategy(stage=3, placement_policy='cpu', initial_scale=2**5)

+ strategy_ = GeminiStrategy(placement_policy='cpu', initial_scale=2**5)

elif strategy == 'colossalai_zero2_cpu':

- strategy_ = ColossalAIStrategy(stage=2, placement_policy='cpu')

+ strategy_ = LowLevelZeroStrategy(stage=2, placement_policy='cpu')

else:

raise ValueError(f'Unsupported strategy "{strategy}"')

return strategy_

diff --git a/applications/Chat/coati/trainer/__init__.py b/applications/Chat/coati/trainer/__init__.py

index 525b57bf21d3..86142361f3ff 100644

--- a/applications/Chat/coati/trainer/__init__.py

+++ b/applications/Chat/coati/trainer/__init__.py

@@ -1,6 +1,10 @@

-from .base import Trainer

+from .base import OnPolicyTrainer, SLTrainer

from .ppo import PPOTrainer

from .rm import RewardModelTrainer

from .sft import SFTTrainer

-__all__ = ['Trainer', 'PPOTrainer', 'RewardModelTrainer', 'SFTTrainer']

+__all__ = [

+ 'SLTrainer', 'OnPolicyTrainer',

+ 'RewardModelTrainer', 'SFTTrainer',

+ 'PPOTrainer'

+]

diff --git a/applications/Chat/coati/trainer/base.py b/applications/Chat/coati/trainer/base.py

index ac3a878be884..13571cdcc23a 100644

--- a/applications/Chat/coati/trainer/base.py

+++ b/applications/Chat/coati/trainer/base.py

@@ -1,54 +1,108 @@

from abc import ABC, abstractmethod

-from typing import Any, Callable, Dict, List, Optional, Union

+from contextlib import contextmanager

+from typing import List

-import torch

+import torch.nn as nn

+import tqdm

from coati.experience_maker import Experience

+from coati.replay_buffer import NaiveReplayBuffer

+from torch.optim import Optimizer

+from torch.utils.data import DataLoader

from .callbacks import Callback

from .strategies import Strategy

+from .utils import CycledDataLoader, is_rank_0

-class Trainer(ABC):

+class SLTrainer(ABC):

"""

- Base class for rlhf trainers.

+ Base class for supervised learning trainers.

Args:

strategy (Strategy):the strategy to use for training

max_epochs (int, defaults to 1): the number of epochs of training process

+ model (nn.Module): the model to train

+ optim (Optimizer): the optimizer to use for training

+ """

+

+ def __init__(self,

+ strategy: Strategy,

+ max_epochs: int,

+ model: nn.Module,

+ optimizer: Optimizer,

+ ) -> None:

+ super().__init__()

+ self.strategy = strategy

+ self.max_epochs = max_epochs

+ self.model = model

+ self.optimizer = optimizer

+

+ @abstractmethod

+ def _train(self, epoch):

+ raise NotImplementedError()

+

+ @abstractmethod

+ def _eval(self, epoch):

+ raise NotImplementedError()

+

+ def _before_fit(self):

+ self.no_epoch_bar = False

+

+ def fit(self, *args, **kwargs):

+ self._before_fit(*args, **kwargs)

+ for epoch in tqdm.trange(self.max_epochs,

+ desc="Epochs",

+ disable=not is_rank_0() or self.no_epoch_bar

+ ):

+ self._train(epoch)

+ self._eval(epoch)

+

+

+class OnPolicyTrainer(ABC):

+ """

+ Base class for on-policy rl trainers, e.g. PPO.

+

+ Args:

+ strategy (Strategy):the strategy to use for training

+ buffer (NaiveReplayBuffer): the buffer to collect experiences

+ sample_buffer (bool, defaults to False): whether to sample from buffer

dataloader_pin_memory (bool, defaults to True): whether to pin memory for data loader

callbacks (List[Callback], defaults to []): the callbacks to call during training process

- generate_kwargs (dict, optional): the kwargs to use while model generating

"""

def __init__(self,

strategy: Strategy,

- max_epochs: int = 1,

- dataloader_pin_memory: bool = True,

- callbacks: List[Callback] = [],

- **generate_kwargs) -> None:

+ buffer: NaiveReplayBuffer,

+ sample_buffer: bool,

+ dataloader_pin_memory: bool,

+ callbacks: List[Callback] = []

+ ) -> None:

super().__init__()

self.strategy = strategy

- self.max_epochs = max_epochs

- self.generate_kwargs = generate_kwargs

+ self.buffer = buffer

+ self.sample_buffer = sample_buffer

self.dataloader_pin_memory = dataloader_pin_memory

self.callbacks = callbacks

- # TODO(ver217): maybe simplify these code using context

- def _on_fit_start(self) -> None:

+ @contextmanager

+ def _fit_ctx(self) -> None:

for callback in self.callbacks:

callback.on_fit_start()

-

- def _on_fit_end(self) -> None:

- for callback in self.callbacks:

- callback.on_fit_end()

-

- def _on_episode_start(self, episode: int) -> None:

+ try:

+ yield

+ finally:

+ for callback in self.callbacks:

+ callback.on_fit_end()

+

+ @contextmanager

+ def _episode_ctx(self, episode: int) -> None:

for callback in self.callbacks:

callback.on_episode_start(episode)

-

- def _on_episode_end(self, episode: int) -> None:

- for callback in self.callbacks:

- callback.on_episode_end(episode)

+ try:

+ yield

+ finally:

+ for callback in self.callbacks:

+ callback.on_episode_end(episode)

def _on_make_experience_start(self) -> None:

for callback in self.callbacks:

@@ -73,3 +127,71 @@ def _on_learn_batch_start(self) -> None:

def _on_learn_batch_end(self, metrics: dict, experience: Experience) -> None:

for callback in self.callbacks:

callback.on_learn_batch_end(metrics, experience)

+

+ @abstractmethod

+ def _make_experience(self, collect_step: int):

+ """

+ Implement this method to make experience.

+ """

+ raise NotImplementedError()

+

+ @abstractmethod

+ def _learn(self, update_step: int):

+ """

+ Implement this method to learn from experience, either

+ sample from buffer or transform buffer into dataloader.

+ """

+ raise NotImplementedError()

+

+ def _collect_phase(self, collect_step: int):

+ self._on_make_experience_start()

+ experience = self._make_experience(collect_step)

+ self._on_make_experience_end(experience)

+ self.buffer.append(experience)

+

+ def _update_phase(self, update_step: int):

+ self._on_learn_epoch_start(update_step)

+ self._learn(update_step)

+ self._on_learn_epoch_end(update_step)

+

+ def fit(self,

+ prompt_dataloader: DataLoader,

+ pretrain_dataloader: DataLoader,

+ num_episodes: int,

+ num_collect_steps: int,

+ num_update_steps: int,

+ ):

+ """

+ The main training loop of on-policy rl trainers.

+

+ Args:

+ prompt_dataloader (DataLoader): the dataloader to use for prompt data

+ pretrain_dataloader (DataLoader): the dataloader to use for pretrain data

+ num_episodes (int): the number of episodes to train

+ num_collect_steps (int): the number of collect steps per episode

+ num_update_steps (int): the number of update steps per episode

+ """

+ self.prompt_dataloader = CycledDataLoader(prompt_dataloader)

+ self.pretrain_dataloader = CycledDataLoader(pretrain_dataloader)

+

+ with self._fit_ctx():

+ for episode in tqdm.trange(num_episodes,

+ desc="Episodes",

+ disable=not is_rank_0()):

+ with self._episode_ctx(episode):

+ for collect_step in tqdm.trange(num_collect_steps,

+ desc="Collect steps",

+ disable=not is_rank_0()):

+ self._collect_phase(collect_step)

+ if not self.sample_buffer:

+ # HACK(cwher): according to the design of boost API, dataloader should also be boosted,

+ # but it is impractical to adapt this pattern in RL training. Thus, I left dataloader unboosted.

+ # I only call strategy.setup_dataloader() to setup dataloader.

+ self.dataloader = self.strategy.setup_dataloader(self.buffer,

+ self.dataloader_pin_memory)

+ for update_step in tqdm.trange(num_update_steps,

+ desc="Update steps",

+ disable=not is_rank_0()):

+ self._update_phase(update_step)

+ # NOTE: this is for on-policy algorithms

+ self.buffer.clear()

diff --git a/applications/Chat/coati/trainer/callbacks/save_checkpoint.py b/applications/Chat/coati/trainer/callbacks/save_checkpoint.py

index d2dcc0dd4c65..f0d77a191a88 100644

--- a/applications/Chat/coati/trainer/callbacks/save_checkpoint.py

+++ b/applications/Chat/coati/trainer/callbacks/save_checkpoint.py

@@ -1,7 +1,7 @@

import os

import torch.distributed as dist

-from coati.trainer.strategies import ColossalAIStrategy, Strategy

+from coati.trainer.strategies import GeminiStrategy, LowLevelZeroStrategy, Strategy

from coati.trainer.utils import is_rank_0

from torch import nn

from torch.optim import Optimizer

@@ -69,7 +69,7 @@ def on_episode_end(self, episode: int) -> None:

# save optimizer

if self.model_dict[model][1] is None:

continue

- only_rank0 = not isinstance(self.strategy, ColossalAIStrategy)

+ only_rank0 = not isinstance(self.strategy, (LowLevelZeroStrategy, GeminiStrategy))

rank = 0 if is_rank_0() else dist.get_rank()

optim_path = os.path.join(base_path, f'{model}-optim-rank-{rank}.pt')

self.strategy.save_optimizer(optimizer=self.model_dict[model][1], path=optim_path, only_rank0=only_rank0)

diff --git a/applications/Chat/coati/trainer/ppo.py b/applications/Chat/coati/trainer/ppo.py

index e2e44e62533e..4c4a1002e96d 100644

--- a/applications/Chat/coati/trainer/ppo.py

+++ b/applications/Chat/coati/trainer/ppo.py

@@ -1,6 +1,5 @@

-from typing import Any, Callable, Dict, List, Optional, Union

+from typing import Dict, List

-import torch

import torch.nn as nn

from coati.experience_maker import Experience, NaiveExperienceMaker

from coati.models.base import Actor, Critic, get_base_model

@@ -9,19 +8,32 @@

from coati.replay_buffer import NaiveReplayBuffer

from torch import Tensor

from torch.optim import Optimizer

-from torch.utils.data import DistributedSampler

+from torch.utils.data import DataLoader, DistributedSampler

from tqdm import tqdm

-from transformers.tokenization_utils_base import PreTrainedTokenizerBase

from colossalai.utils import get_current_device

-from .base import Trainer

+from .base import OnPolicyTrainer

from .callbacks import Callback

-from .strategies import Strategy

+from .strategies import GeminiStrategy, Strategy

from .utils import is_rank_0, to_device

-class PPOTrainer(Trainer):

+def _set_default_generate_kwargs(strategy: Strategy, generate_kwargs: dict, actor: Actor) -> Dict:

+ unwrapper_model = strategy.unwrap_model(actor)

+ hf_model = get_base_model(unwrapper_model)

+ new_kwargs = {**generate_kwargs}

+ # use huggingface models method directly

+ if 'prepare_inputs_fn' not in generate_kwargs and hasattr(hf_model, 'prepare_inputs_for_generation'):

+ new_kwargs['prepare_inputs_fn'] = hf_model.prepare_inputs_for_generation

+

+ if 'update_model_kwargs_fn' not in generate_kwargs and hasattr(hf_model, '_update_model_kwargs_for_generation'):

+ new_kwargs['update_model_kwargs_fn'] = hf_model._update_model_kwargs_for_generation

+

+ return new_kwargs

+

+

+class PPOTrainer(OnPolicyTrainer):

"""

Trainer for PPO algorithm.

@@ -35,14 +47,13 @@ class PPOTrainer(Trainer):

critic_optim (Optimizer): the optimizer to use for critic model

kl_coef (float, defaults to 0.1): the coefficient of kl divergence loss

train_batch_size (int, defaults to 8): the batch size to use for training

- buffer_limit (int, defaults to 0): the max_size limitation of replay buffer

- buffer_cpu_offload (bool, defaults to True): whether to offload replay buffer to cpu

+ buffer_limit (int, defaults to 0): the max_size limitation of buffer

+ buffer_cpu_offload (bool, defaults to True): whether to offload buffer to cpu

eps_clip (float, defaults to 0.2): the clip coefficient of policy loss

vf_coef (float, defaults to 1.0): the coefficient of value loss

ptx_coef (float, defaults to 0.9): the coefficient of ptx loss

value_clip (float, defaults to 0.4): the clip coefficient of value loss

- max_epochs (int, defaults to 1): the number of epochs of training process

- sample_replay_buffer (bool, defaults to False): whether to sample from replay buffer

+ sample_buffer (bool, defaults to False): whether to sample from buffer

dataloader_pin_memory (bool, defaults to True): whether to pin memory for data loader

offload_inference_models (bool, defaults to True): whether to offload inference models to cpu during training process

callbacks (List[Callback], defaults to []): the callbacks to call during training process

@@ -65,20 +76,25 @@ def __init__(self,

eps_clip: float = 0.2,

vf_coef: float = 1.0,

value_clip: float = 0.4,

- max_epochs: int = 1,

- sample_replay_buffer: bool = False,

+ sample_buffer: bool = False,

dataloader_pin_memory: bool = True,

offload_inference_models: bool = True,

callbacks: List[Callback] = [],

- **generate_kwargs) -> None:

- experience_maker = NaiveExperienceMaker(actor, critic, reward_model, initial_model, kl_coef)

- replay_buffer = NaiveReplayBuffer(train_batch_size, buffer_limit, buffer_cpu_offload)

- generate_kwargs = _set_default_generate_kwargs(strategy, generate_kwargs, actor)

- super().__init__(strategy, max_epochs, dataloader_pin_memory, callbacks, **generate_kwargs)

-

- self.experience_maker = experience_maker

- self.replay_buffer = replay_buffer

- self.sample_replay_buffer = sample_replay_buffer

+ **generate_kwargs

+ ) -> None:

+ if isinstance(strategy, GeminiStrategy):

+ assert not offload_inference_models, \

+ "GeminiPlugin is not compatible with manual model.to('cpu')"

+

+ buffer = NaiveReplayBuffer(train_batch_size, buffer_limit, buffer_cpu_offload)

+ super().__init__(

+ strategy, buffer,

+ sample_buffer, dataloader_pin_memory,

+ callbacks

+ )

+

+ self.generate_kwargs = _set_default_generate_kwargs(strategy, generate_kwargs, actor)

+ self.experience_maker = NaiveExperienceMaker(actor, critic, reward_model, initial_model, kl_coef)

self.offload_inference_models = offload_inference_models

self.actor = actor

@@ -94,74 +110,20 @@ def __init__(self,

self.device = get_current_device()

- def _make_experience(self, inputs: Union[Tensor, Dict[str, Tensor]]) -> Experience:

- if isinstance(inputs, Tensor):

- return self.experience_maker.make_experience(inputs, **self.generate_kwargs)

- elif isinstance(inputs, dict):

- return self.experience_maker.make_experience(**inputs, **self.generate_kwargs)

- else:

- raise ValueError(f'Unsupported input type "{type(inputs)}"')

-

- def _learn(self):

- # replay buffer may be empty at first, we should rebuild at each training

- if not self.sample_replay_buffer:

- dataloader = self.strategy.setup_dataloader(self.replay_buffer, self.dataloader_pin_memory)

- if self.sample_replay_buffer:

- pbar = tqdm(range(self.max_epochs), desc='Train epoch', disable=not is_rank_0())

- for _ in pbar:

- experience = self.replay_buffer.sample()

- experience.to_device(self.device)

- metrics = self.training_step(experience)

- pbar.set_postfix(metrics)

+ def _make_experience(self, collect_step: int) -> Experience:

+ prompts = self.prompt_dataloader.next()

+ if self.offload_inference_models:

+ # TODO(ver217): this may be controlled by strategy if they are prepared by strategy

+ self.experience_maker.initial_model.to(self.device)

+ self.experience_maker.reward_model.to(self.device)

+ if isinstance(prompts, Tensor):

+ return self.experience_maker.make_experience(prompts, **self.generate_kwargs)

+ elif isinstance(prompts, dict):

+ return self.experience_maker.make_experience(**prompts, **self.generate_kwargs)

else:

- for epoch in range(self.max_epochs):

- self._on_learn_epoch_start(epoch)

- if isinstance(dataloader.sampler, DistributedSampler):

- dataloader.sampler.set_epoch(epoch)

- pbar = tqdm(dataloader, desc=f'Train epoch [{epoch+1}/{self.max_epochs}]', disable=not is_rank_0())

- for experience in pbar:

- self._on_learn_batch_start()

- experience.to_device(self.device)

- metrics = self.training_step(experience)

- self._on_learn_batch_end(metrics, experience)

- pbar.set_postfix(metrics)

- self._on_learn_epoch_end(epoch)

-

- def fit(self,

- prompt_dataloader,

- pretrain_dataloader,

- num_episodes: int = 50000,

- max_timesteps: int = 500,

- update_timesteps: int = 5000) -> None:

- time = 0

- self.pretrain_dataloader = pretrain_dataloader

- self.prompt_dataloader = prompt_dataloader

- self._on_fit_start()

- for episode in range(num_episodes):

- self._on_episode_start(episode)

- for timestep in tqdm(range(max_timesteps),

- desc=f'Episode [{episode+1}/{num_episodes}]',

- disable=not is_rank_0()):

- time += 1

- prompts = next(iter(self.prompt_dataloader))

- self._on_make_experience_start()

- if self.offload_inference_models:

- # TODO(ver217): this may be controlled by strategy if they are prepared by strategy

- self.experience_maker.initial_model.to(self.device)

- self.experience_maker.reward_model.to(self.device)

- experience = self._make_experience(prompts)

- self._on_make_experience_end(experience)

- self.replay_buffer.append(experience)

- if time % update_timesteps == 0:

- if self.offload_inference_models:

- self.experience_maker.initial_model.to('cpu')

- self.experience_maker.reward_model.to('cpu')

- self._learn()

- self.replay_buffer.clear()

- self._on_episode_end(episode)

- self._on_fit_end()

-

- def training_step(self, experience: Experience) -> Dict[str, float]:

+ raise ValueError(f'Unsupported input type "{type(prompts)}"')

+

+ def _training_step(self, experience: Experience) -> Dict[str, float]:

self.actor.train()

self.critic.train()

# policy loss

@@ -175,7 +137,7 @@ def training_step(self, experience: Experience) -> Dict[str, float]:

# ptx loss

if self.ptx_coef != 0:

- batch = next(iter(self.pretrain_dataloader))

+ batch = self.pretrain_dataloader.next()

batch = to_device(batch, self.device)

ptx_log_probs = self.actor(batch['input_ids'],

attention_mask=batch['attention_mask'])['logits']

@@ -201,16 +163,29 @@ def training_step(self, experience: Experience) -> Dict[str, float]:

return {'reward': experience.reward.mean().item()}

-

-def _set_default_generate_kwargs(strategy: Strategy, generate_kwargs: dict, actor: Actor) -> Dict:

- unwrapper_model = strategy.unwrap_model(actor)

- hf_model = get_base_model(unwrapper_model)

- new_kwargs = {**generate_kwargs}

- # use huggingface models method directly

- if 'prepare_inputs_fn' not in generate_kwargs and hasattr(hf_model, 'prepare_inputs_for_generation'):

- new_kwargs['prepare_inputs_fn'] = hf_model.prepare_inputs_for_generation

-

- if 'update_model_kwargs_fn' not in generate_kwargs and hasattr(hf_model, '_update_model_kwargs_for_generation'):

- new_kwargs['update_model_kwargs_fn'] = hf_model._update_model_kwargs_for_generation

-

- return new_kwargs

+ def _learn(self, update_step: int):

+ if self.offload_inference_models:

+ self.experience_maker.initial_model.to('cpu')

+ self.experience_maker.reward_model.to('cpu')

+

+ # buffer may be empty at first, we should rebuild at each training

+ if self.sample_buffer:

+ experience = self.buffer.sample()

+ self._on_learn_batch_start()

+ experience.to_device(self.device)

+ metrics = self._training_step(experience)

+ self._on_learn_batch_end(metrics, experience)

+ else:

+ if isinstance(self.dataloader.sampler, DistributedSampler):

+ self.dataloader.sampler.set_epoch(update_step)

+ pbar = tqdm(

+ self.dataloader,

+ desc=f'Train epoch [{update_step + 1}]',

+ disable=not is_rank_0()

+ )

+ for experience in pbar:

+ self._on_learn_batch_start()

+ experience.to_device(self.device)

+ metrics = self._training_step(experience)

+ self._on_learn_batch_end(metrics, experience)

+ pbar.set_postfix(metrics)

diff --git a/applications/Chat/coati/trainer/rm.py b/applications/Chat/coati/trainer/rm.py

index cdae5108ab00..54a5d0f40dea 100644

--- a/applications/Chat/coati/trainer/rm.py

+++ b/applications/Chat/coati/trainer/rm.py

@@ -1,35 +1,29 @@

from datetime import datetime

-from typing import List, Optional

+from typing import Callable

import pandas as pd

import torch

-import torch.distributed as dist

-from torch.optim import Optimizer, lr_scheduler

-from torch.utils.data import DataLoader, Dataset, DistributedSampler

-from tqdm import tqdm

-from transformers.tokenization_utils_base import PreTrainedTokenizerBase

+import tqdm

+from torch.optim import Optimizer

+from torch.optim.lr_scheduler import _LRScheduler

+from torch.utils.data import DataLoader

-from .base import Trainer

-from .callbacks import Callback

+from .base import SLTrainer

from .strategies import Strategy

from .utils import is_rank_0

-class RewardModelTrainer(Trainer):

+class RewardModelTrainer(SLTrainer):

"""

Trainer to use while training reward model.

Args:

model (torch.nn.Module): the model to train

strategy (Strategy): the strategy to use for training

- optim(Optimizer): the optimizer to use for training

+ optim (Optimizer): the optimizer to use for training

+ lr_scheduler (_LRScheduler): the lr scheduler to use for training

loss_fn (callable): the loss function to use for training

- train_dataloader (DataLoader): the dataloader to use for training

- valid_dataloader (DataLoader): the dataloader to use for validation

- eval_dataloader (DataLoader): the dataloader to use for evaluation

- batch_size (int, defaults to 1): the batch size while training

max_epochs (int, defaults to 2): the number of epochs to train

- callbacks (List[Callback], defaults to []): the callbacks to call during training process

"""

def __init__(

@@ -37,87 +31,81 @@ def __init__(

model,

strategy: Strategy,

optim: Optimizer,

- loss_fn,

- train_dataloader: DataLoader,

- valid_dataloader: DataLoader,

- eval_dataloader: DataLoader,

+ lr_scheduler: _LRScheduler,

+ loss_fn: Callable,

max_epochs: int = 1,

- callbacks: List[Callback] = [],

) -> None:

- super().__init__(strategy, max_epochs, callbacks=callbacks)

+ super().__init__(strategy, max_epochs, model, optim)

- self.train_dataloader = train_dataloader

- self.valid_dataloader = valid_dataloader

- self.eval_dataloader = eval_dataloader

-

- self.model = model

self.loss_fn = loss_fn

- self.optimizer = optim

- self.scheduler = lr_scheduler.CosineAnnealingLR(self.optimizer, self.train_dataloader.__len__() // 100)

+ self.scheduler = lr_scheduler

- def eval_acc(self, dataloader):

- dist = 0

- on = 0

- cnt = 0

- self.model.eval()

- with torch.no_grad():

- for chosen_ids, c_mask, reject_ids, r_mask in dataloader:

- chosen_ids = chosen_ids.squeeze(1).to(torch.cuda.current_device())

- c_mask = c_mask.squeeze(1).to(torch.cuda.current_device())

- reject_ids = reject_ids.squeeze(1).to(torch.cuda.current_device())

- r_mask = r_mask.squeeze(1).to(torch.cuda.current_device())

- chosen_reward = self.model(chosen_ids, attention_mask=c_mask)

- reject_reward = self.model(reject_ids, attention_mask=r_mask)

- for i in range(len(chosen_reward)):

- cnt += 1

- if chosen_reward[i] > reject_reward[i]:

- on += 1

- dist += (chosen_reward - reject_reward).mean().item()

- dist_mean = dist / len(dataloader)

- acc = on / cnt

- self.model.train()

- return dist_mean, acc

+ def _eval(self, epoch):

+ if self.eval_dataloader is not None:

+ self.model.eval()

+ dist, on, cnt = 0, 0, 0

+ with torch.no_grad():

+ for chosen_ids, c_mask, reject_ids, r_mask in self.eval_dataloader:

+ chosen_ids = chosen_ids.squeeze(1).to(torch.cuda.current_device())

+ c_mask = c_mask.squeeze(1).to(torch.cuda.current_device())

+ reject_ids = reject_ids.squeeze(1).to(torch.cuda.current_device())

+ r_mask = r_mask.squeeze(1).to(torch.cuda.current_device())

+ chosen_reward = self.model(chosen_ids, attention_mask=c_mask)

+ reject_reward = self.model(reject_ids, attention_mask=r_mask)

+ for i in range(len(chosen_reward)):

+ cnt += 1

+ if chosen_reward[i] > reject_reward[i]:

+ on += 1

+ dist += (chosen_reward - reject_reward).mean().item()

+ self.dist = dist / len(self.eval_dataloader)

+ self.acc = on / cnt

- def fit(self):

- time = datetime.now()

- epoch_bar = tqdm(range(self.max_epochs), desc='Train epoch', disable=not is_rank_0())

- for epoch in range(self.max_epochs):

- step_bar = tqdm(range(self.train_dataloader.__len__()),

- desc='Train step of epoch %d' % epoch,

- disable=not is_rank_0())

- # train

- self.model.train()

- cnt = 0

- acc = 0

- dist = 0

- for chosen_ids, c_mask, reject_ids, r_mask in self.train_dataloader:

- chosen_ids = chosen_ids.squeeze(1).to(torch.cuda.current_device())

- c_mask = c_mask.squeeze(1).to(torch.cuda.current_device())

- reject_ids = reject_ids.squeeze(1).to(torch.cuda.current_device())

- r_mask = r_mask.squeeze(1).to(torch.cuda.current_device())

- chosen_reward = self.model(chosen_ids, attention_mask=c_mask)

- reject_reward = self.model(reject_ids, attention_mask=r_mask)

- loss = self.loss_fn(chosen_reward, reject_reward)

- self.strategy.backward(loss, self.model, self.optimizer)

- self.strategy.optimizer_step(self.optimizer)

- self.optimizer.zero_grad()

- cnt += 1

- if cnt == 100:

- self.scheduler.step()

- dist, acc = self.eval_acc(self.valid_dataloader)

- cnt = 0

- if is_rank_0():

- log = pd.DataFrame([[step_bar.n, loss.item(), dist, acc]],

- columns=['step', 'loss', 'dist', 'acc'])

- log.to_csv('log_%s.csv' % time, mode='a', header=False, index=False)

- step_bar.update()

- step_bar.set_postfix({'dist': dist, 'acc': acc})

-

- # eval

- dist, acc = self.eval_acc(self.eval_dataloader)

if is_rank_0():

- log = pd.DataFrame([[step_bar.n, loss.item(), dist, acc]], columns=['step', 'loss', 'dist', 'acc'])

+ log = pd.DataFrame(

+ [[(epoch + 1) * len(self.train_dataloader),

+ self.loss.item(), self.dist, self.acc]],

+ columns=['step', 'loss', 'dist', 'acc']

+ )

log.to_csv('log.csv', mode='a', header=False, index=False)

- epoch_bar.update()

- step_bar.set_postfix({'dist': dist, 'acc': acc})

- step_bar.close()

+

+ def _train(self, epoch):

+ self.model.train()

+ step_bar = tqdm.trange(

+ len(self.train_dataloader),

+ desc='Train step of epoch %d' % epoch,

+ disable=not is_rank_0()

+ )

+ cnt = 0

+ for chosen_ids, c_mask, reject_ids, r_mask in self.train_dataloader:

+ chosen_ids = chosen_ids.squeeze(1).to(torch.cuda.current_device())

+ c_mask = c_mask.squeeze(1).to(torch.cuda.current_device())

+ reject_ids = reject_ids.squeeze(1).to(torch.cuda.current_device())

+ r_mask = r_mask.squeeze(1).to(torch.cuda.current_device())

+ chosen_reward = self.model(chosen_ids, attention_mask=c_mask)

+ reject_reward = self.model(reject_ids, attention_mask=r_mask)

+ self.loss = self.loss_fn(chosen_reward, reject_reward)

+ self.strategy.backward(self.loss, self.model, self.optimizer)

+ self.strategy.optimizer_step(self.optimizer)

+ self.optimizer.zero_grad()

+ cnt += 1

+ if cnt % 100 == 0:

+ self.scheduler.step()

+ step_bar.update()

+ step_bar.close()

+

+ def _before_fit(self,

+ train_dataloader: DataLoader,

+ valid_dataloader: DataLoader,

+ eval_dataloader: DataLoader):

+ """

+ Args:

+ train_dataloader (DataLoader): the dataloader to use for training

+ valid_dataloader (DataLoader): the dataloader to use for validation

+ eval_dataloader (DataLoader): the dataloader to use for evaluation

+ """

+ super()._before_fit()

+ self.datetime = datetime.now().strftime('%Y-%m-%d_%H-%M-%S')

+

+ self.train_dataloader = train_dataloader

+ self.valid_dataloader = valid_dataloader

+ self.eval_dataloader = eval_dataloader

diff --git a/applications/Chat/coati/trainer/sft.py b/applications/Chat/coati/trainer/sft.py

index 63fde53956cc..0812ba165286 100644

--- a/applications/Chat/coati/trainer/sft.py

+++ b/applications/Chat/coati/trainer/sft.py

@@ -1,23 +1,22 @@

-import math

import time

-from typing import List, Optional

+from typing import Optional

import torch

import torch.distributed as dist

+import tqdm

import wandb

from torch.optim import Optimizer

+from torch.optim.lr_scheduler import _LRScheduler

from torch.utils.data import DataLoader

-from tqdm import tqdm

-from transformers.tokenization_utils_base import PreTrainedTokenizerBase

-from transformers.trainer import get_scheduler

-from .base import Trainer

-from .callbacks import Callback

-from .strategies import ColossalAIStrategy, Strategy

+from colossalai.logging import DistributedLogger

+

+from .base import SLTrainer

+from .strategies import GeminiStrategy, Strategy

from .utils import is_rank_0, to_device

-class SFTTrainer(Trainer):

+class SFTTrainer(SLTrainer):

"""

Trainer to use while training reward model.

@@ -25,12 +24,9 @@ class SFTTrainer(Trainer):

model (torch.nn.Module): the model to train

strategy (Strategy): the strategy to use for training

optim(Optimizer): the optimizer to use for training

- train_dataloader: the dataloader to use for training

- eval_dataloader: the dataloader to use for evaluation

- batch_size (int, defaults to 1): the batch size while training

+ lr_scheduler(_LRScheduler): the lr scheduler to use for training

max_epochs (int, defaults to 2): the number of epochs to train

- callbacks (List[Callback], defaults to []): the callbacks to call during training process

- optim_kwargs (dict, defaults to {'lr':1e-4}): the kwargs to use while initializing optimizer

+ accumulation_steps (int, defaults to 8): the number of steps to accumulate gradients

"""

def __init__(

@@ -38,98 +34,92 @@ def __init__(

model,

strategy: Strategy,

optim: Optimizer,

- train_dataloader: DataLoader,

- eval_dataloader: DataLoader = None,

+ lr_scheduler: _LRScheduler,

max_epochs: int = 2,

accumulation_steps: int = 8,

- callbacks: List[Callback] = [],

) -> None:

- if accumulation_steps > 1 and isinstance(strategy, ColossalAIStrategy) and strategy.stage == 3:

- raise ValueError("Accumulation steps are not supported in stage 3 of ColossalAI")

- super().__init__(strategy, max_epochs, callbacks=callbacks)

- self.train_dataloader = train_dataloader

- self.eval_dataloader = eval_dataloader

- self.model = model

- self.optimizer = optim

+ if accumulation_steps > 1:

+ assert not isinstance(strategy, GeminiStrategy), \

+ "Accumulation steps are not supported in stage 3 of ColossalAI"

- self.accumulation_steps = accumulation_steps

- num_update_steps_per_epoch = len(train_dataloader) // self.accumulation_steps

- max_steps = math.ceil(self.max_epochs * num_update_steps_per_epoch)

+ super().__init__(strategy, max_epochs, model, optim)

- self.scheduler = get_scheduler("cosine",

- self.optimizer,

- num_warmup_steps=math.ceil(max_steps * 0.03),

- num_training_steps=max_steps)

+ self.accumulation_steps = accumulation_steps

+ self.scheduler = lr_scheduler

+

+ def _train(self, epoch: int):

+ self.model.train()

+ for batch_id, batch in enumerate(self.train_dataloader):

+

+ batch = to_device(batch, torch.cuda.current_device())

+ outputs = self.model(batch["input_ids"],

+ attention_mask=batch["attention_mask"],

+ labels=batch["labels"])

+

+ loss = outputs.loss

+ loss = loss / self.accumulation_steps

+

+ self.strategy.backward(loss, self.model, self.optimizer)

+

+ self.total_loss += loss.item()

+

+ # gradient accumulation

+ if (batch_id + 1) % self.accumulation_steps == 0:

+ self.strategy.optimizer_step(self.optimizer)

+ self.optimizer.zero_grad()

+ self.scheduler.step()

+ if is_rank_0() and self.use_wandb:

+ wandb.log({

+ "loss": self.total_loss / self.accumulation_steps,

+ "lr": self.scheduler.get_last_lr()[0],

+ "epoch": epoch,

+ "batch_id": batch_id

+ })

+ self.total_loss = 0

+ self.step_bar.update()

+

+ def _eval(self, epoch: int):

+ if self.eval_dataloader is not None:

+ self.model.eval()

+ with torch.no_grad():

+ loss_sum, num_seen = 0, 0

+ for batch in self.eval_dataloader:

+ batch = to_device(batch, torch.cuda.current_device())

+ outputs = self.model(batch["input_ids"],

+ attention_mask=batch["attention_mask"],

+ labels=batch["labels"])

+ loss = outputs.loss

+

+ loss_sum += loss.item()

+ num_seen += batch["input_ids"].size(0)

+

+ loss_mean = loss_sum / num_seen

+ if dist.get_rank() == 0:

+ self.logger.info(f'Eval Epoch {epoch}/{self.max_epochs} loss {loss_mean}')

+

+ def _before_fit(self,

+ train_dataloader: DataLoader,

+ eval_dataloader: Optional[DataLoader] = None,

+ logger: Optional[DistributedLogger] = None,

+ use_wandb: bool = False):

+ """

+ Args:

+ train_dataloader: the dataloader to use for training

+ eval_dataloader: the dataloader to use for evaluation

+ """

+ self.train_dataloader = train_dataloader

+ self.eval_dataloader = eval_dataloader

- def fit(self, logger, use_wandb: bool = False):

+ self.logger = logger

+ self.use_wandb = use_wandb

if use_wandb:

wandb.init(project="Coati", name=time.strftime("%Y-%m-%d %H:%M:%S", time.localtime()))

wandb.watch(self.model)

- total_loss = 0

- # epoch_bar = tqdm(range(self.epochs), desc='Epochs', disable=not is_rank_0())

- step_bar = tqdm(range(len(self.train_dataloader) // self.accumulation_steps * self.max_epochs),

- desc=f'steps',

- disable=not is_rank_0())

- for epoch in range(self.max_epochs):

-

- # process_bar = tqdm(range(len(self.train_dataloader)), desc=f'Train process for{epoch}', disable=not is_rank_0())

- # train

- self.model.train()

- for batch_id, batch in enumerate(self.train_dataloader):

-

- batch = to_device(batch, torch.cuda.current_device())

- outputs = self.model(batch["input_ids"], attention_mask=batch["attention_mask"], labels=batch["labels"])

-

- loss = outputs.loss

-

- if loss >= 2.5 and is_rank_0():

- logger.warning(f"batch_id:{batch_id}, abnormal loss: {loss}")

-

- loss = loss / self.accumulation_steps

-

- self.strategy.backward(loss, self.model, self.optimizer)

-

- total_loss += loss.item()

-

- # gradient accumulation

- if (batch_id + 1) % self.accumulation_steps == 0:

- self.strategy.optimizer_step(self.optimizer)

- self.optimizer.zero_grad()

- self.scheduler.step()

- if is_rank_0() and use_wandb:

- wandb.log({

- "loss": total_loss / self.accumulation_steps,

- "lr": self.scheduler.get_last_lr()[0],

- "epoch": epoch,

- "batch_id": batch_id

- })

- total_loss = 0

- step_bar.update()

-

- # if batch_id % log_interval == 0:

- # logger.info(f'Train Epoch {epoch}/{self.epochs} Batch {batch_id} Rank {dist.get_rank()} loss {loss.item()}')

- # wandb.log({"loss": loss.item()})

-

- # process_bar.update()

-

- # eval

- if self.eval_dataloader is not None:

- self.model.eval()

- with torch.no_grad():

- loss_sum = 0

- num_seen = 0

- for batch in self.eval_dataloader:

- batch = to_device(batch, torch.cuda.current_device())

- outputs = self.model(batch["input_ids"],

- attention_mask=batch["attention_mask"],

- labels=batch["labels"])

- loss = outputs.loss

-

- loss_sum += loss.item()

- num_seen += batch["input_ids"].size(0)

-

- loss_mean = loss_sum / num_seen

- if dist.get_rank() == 0:

- logger.info(f'Eval Epoch {epoch}/{self.max_epochs} loss {loss_mean}')

-

- # epoch_bar.update()

+

+ self.total_loss = 0

+ self.no_epoch_bar = True

+ self.step_bar = tqdm.trange(

+ len(self.train_dataloader) // self.accumulation_steps * self.max_epochs,

+ desc=f'steps',

+ disable=not is_rank_0()

+ )

diff --git a/applications/Chat/coati/trainer/strategies/__init__.py b/applications/Chat/coati/trainer/strategies/__init__.py

index f258c9b8a873..b49a2c742db3 100644

--- a/applications/Chat/coati/trainer/strategies/__init__.py

+++ b/applications/Chat/coati/trainer/strategies/__init__.py

@@ -1,6 +1,8 @@

from .base import Strategy

-from .colossalai import ColossalAIStrategy

+from .colossalai import GeminiStrategy, LowLevelZeroStrategy

from .ddp import DDPStrategy

-from .naive import NaiveStrategy

-__all__ = ['Strategy', 'NaiveStrategy', 'DDPStrategy', 'ColossalAIStrategy']

+__all__ = [

+ 'Strategy', 'DDPStrategy',

+ 'LowLevelZeroStrategy', 'GeminiStrategy'

+]

diff --git a/applications/Chat/coati/trainer/strategies/base.py b/applications/Chat/coati/trainer/strategies/base.py

index 06f81f21ab26..80bc3272872e 100644

--- a/applications/Chat/coati/trainer/strategies/base.py

+++ b/applications/Chat/coati/trainer/strategies/base.py

@@ -1,6 +1,6 @@

from abc import ABC, abstractmethod

from contextlib import nullcontext

-from typing import Any, List, Optional, Tuple, Union

+from typing import Callable, Dict, List, Optional, Tuple, Union

import torch

import torch.nn as nn

@@ -9,10 +9,12 @@

from torch.utils.data import DataLoader

from transformers.tokenization_utils_base import PreTrainedTokenizerBase

+from colossalai.booster import Booster

+from colossalai.booster.plugin import Plugin

+

from .sampler import DistributedSampler

-ModelOptimPair = Tuple[nn.Module, Optimizer]

-ModelOrModelOptimPair = Union[nn.Module, ModelOptimPair]

+_BoostArgSpec = Union[nn.Module, Tuple[nn.Module, Optimizer], Dict]

class Strategy(ABC):

@@ -20,30 +22,28 @@ class Strategy(ABC):

Base class for training strategies.

"""

- def __init__(self) -> None:

+ def __init__(self, plugin_initializer: Callable[..., Optional[Plugin]] = lambda: None) -> None:

super().__init__()

+ # NOTE: dist must be initialized before Booster

self.setup_distributed()

+ self.plugin = plugin_initializer()

+ self.booster = Booster(plugin=self.plugin)

+ self._post_init()

@abstractmethod

- def backward(self, loss: torch.Tensor, model: nn.Module, optimizer: Optimizer, **kwargs) -> None:

+ def _post_init(self) -> None:

pass

- @abstractmethod

+ def backward(self, loss: torch.Tensor, model: nn.Module, optimizer: Optimizer, **kwargs) -> None:

+ self.booster.backward(loss, optimizer)

+

def optimizer_step(self, optimizer: Optimizer, **kwargs) -> None:

- pass

+ optimizer.step()

@abstractmethod

def setup_distributed(self) -> None:

pass

- @abstractmethod

- def setup_model(self, model: nn.Module) -> nn.Module:

- pass

-

- @abstractmethod

- def setup_optimizer(self, optimizer: Optimizer, model: nn.Module) -> Optimizer:

- pass

-

@abstractmethod

def setup_dataloader(self, replay_buffer: ReplayBuffer, pin_memory: bool = False) -> DataLoader:

pass

@@ -51,12 +51,13 @@ def setup_dataloader(self, replay_buffer: ReplayBuffer, pin_memory: bool = False

def model_init_context(self):

return nullcontext()

- def prepare(

- self, *models_or_model_optim_pairs: ModelOrModelOptimPair

- ) -> Union[List[ModelOrModelOptimPair], ModelOrModelOptimPair]:

- """Prepare models or model-optimizer-pairs based on each strategy.

+ def prepare(self, *boost_args: _BoostArgSpec) -> Union[List[_BoostArgSpec], _BoostArgSpec]:

+ """Prepare [model | (model, optimizer) | Dict] based on each strategy.

+ NOTE: the keys of Dict must be a subset of `self.booster.boost`'s arguments.

Example::

+ >>> # e.g., include lr_scheduler

+ >>> result_dict = strategy.prepare(dict(model=model, lr_scheduler=lr_scheduler))

>>> # when fine-tuning actor and critic

>>> (actor, actor_optim), (critic, critic_optim), reward_model, initial_model = strategy.prepare((actor, actor_optim), (critic, critic_optim), reward_model, initial_model)

>>> # or when training reward model

@@ -65,25 +66,39 @@ def prepare(

>>> actor, critic = strategy.prepare(actor, critic)

Returns:

- Union[List[ModelOrModelOptimPair], ModelOrModelOptimPair]: Models or model-optimizer-pairs in the original order.

+ Union[List[_BoostArgSpec], _BoostArgSpec]: [model | (model, optimizer) | Dict] in the original order.

"""

rets = []

- for arg in models_or_model_optim_pairs:

- if isinstance(arg, tuple):

- assert len(arg) == 2, f'Expect (model, optimizer) pair, got a tuple with size "{len(arg)}"'

- model, optimizer = arg

- model = self.setup_model(model)

- optimizer = self.setup_optimizer(optimizer, model)

+ for arg in boost_args:

+ if isinstance(arg, nn.Module):

+ model, *_ = self.booster.boost(arg)

+ rets.append(model)

+ elif isinstance(arg, tuple):

+ try:

+ model, optimizer = arg

+ except ValueError:

+ raise RuntimeError(f'Expect (model, optimizer) pair, got a tuple with size "{len(arg)}"')

+ model, optimizer, *_ = self.booster.boost(model=model,

+ optimizer=optimizer)

rets.append((model, optimizer))

- elif isinstance(arg, nn.Module):

- rets.append(self.setup_model(model))

+ elif isinstance(arg, Dict):

+ model, optimizer, criterion, dataloader, lr_scheduler = self.booster.boost(**arg)

+ boost_result = dict(model=model,

+ optimizer=optimizer,

+ criterion=criterion,

+ dataloader=dataloader,

+ lr_scheduler=lr_scheduler)

+ # remove None values

+ boost_result = {

+ key: value

+ for key, value in boost_result.items() if value is not None

+ }

+ rets.append(boost_result)

else:

- raise RuntimeError(f'Expect model or (model, optimizer) pair, got {type(arg)}')

+ raise RuntimeError(f'Type {type(arg)} is not supported')

- if len(rets) == 1:

- return rets[0]

- return rets

+ return rets[0] if len(rets) == 1 else rets

@staticmethod

def unwrap_model(model: nn.Module) -> nn.Module:

@@ -97,23 +112,30 @@ def unwrap_model(model: nn.Module) -> nn.Module:

"""

return model

- @abstractmethod

- def save_model(self, model: nn.Module, path: str, only_rank0: bool = True) -> None:

- pass

+ def save_model(self,

+ model: nn.Module,

+ path: str,

+ only_rank0: bool = True,

+ **kwargs

+ ) -> None:

+ self.booster.save_model(model, path, shard=not only_rank0, **kwargs)

- @abstractmethod

- def load_model(self, model: nn.Module, path: str, map_location: Any = None, strict: bool = True) -> None:

- pass

+ def load_model(self, model: nn.Module, path: str, strict: bool = True) -> None:

+ self.booster.load_model(model, path, strict)

- @abstractmethod

- def save_optimizer(self, optimizer: Optimizer, path: str, only_rank0: bool = False) -> None:

- pass

+ def save_optimizer(self,

+ optimizer: Optimizer,

+ path: str,

+ only_rank0: bool = False,

+ **kwargs

+ ) -> None:

+ self.booster.save_optimizer(optimizer, path, shard=not only_rank0, **kwargs)

- @abstractmethod

- def load_optimizer(self, optimizer: Optimizer, path: str, map_location: Any = None) -> None:

- pass

+ def load_optimizer(self, optimizer: Optimizer, path: str) -> None:

+ self.booster.load_optimizer(optimizer, path)

def setup_sampler(self, dataset) -> DistributedSampler:

+ # FIXME(cwher): this is only invoked in train_on_ray, not tested after adapt Boost API.

return DistributedSampler(dataset, 1, 0)

@abstractmethod

diff --git a/applications/Chat/coati/trainer/strategies/colossalai.py b/applications/Chat/coati/trainer/strategies/colossalai.py

index cfdab2806a25..1b59d704eec3 100644

--- a/applications/Chat/coati/trainer/strategies/colossalai.py

+++ b/applications/Chat/coati/trainer/strategies/colossalai.py

@@ -1,32 +1,113 @@

import warnings

-from typing import Optional, Union

+from typing import Optional

import torch

import torch.distributed as dist

import torch.nn as nn

-import torch.optim as optim

-from torch.optim import Optimizer

from transformers.tokenization_utils_base import PreTrainedTokenizerBase

import colossalai

-from colossalai.logging import get_dist_logger

-from colossalai.nn.optimizer import CPUAdam, HybridAdam

+from colossalai.booster.plugin import GeminiPlugin, LowLevelZeroPlugin

+from colossalai.booster.plugin.gemini_plugin import GeminiModel

+from colossalai.booster.plugin.low_level_zero_plugin import LowLevelZeroModel

from colossalai.tensor import ProcessGroup, ShardSpec

from colossalai.utils import get_current_device

-from colossalai.zero import ColoInitContext, ZeroDDP, zero_model_wrapper, zero_optim_wrapper

+from colossalai.zero import ColoInitContext

+from colossalai.zero.gemini.gemini_ddp import GeminiDDP

from .ddp import DDPStrategy

-logger = get_dist_logger(__name__)

+class LowLevelZeroStrategy(DDPStrategy):

+ """

+ The strategy for training with ColossalAI.

+

+ Args:

+ stage(int): The stage to use in ZeRO. Choose in (1, 2)

+ precision(str): The precision to use. Choose in ('fp32', 'fp16').

+ seed(int): The seed for the random number generator.

+ placement_policy(str): The placement policy for gemini. Choose in ('cpu', 'cuda')

+ If it is “cpu”, parameters, gradients and optimizer states will be offloaded to CPU,

+ If it is “cuda”, they will not be offloaded, which means max CUDA memory will be used. It is the fastest.

+ reduce_bucket_size(int): The reduce bucket size in bytes. Only for ZeRO-1 and ZeRO-2.

+ overlap_communication(bool): Whether to overlap communication and computation. Only for ZeRO-1 and ZeRO-2.

+ initial_scale(float): The initial scale for the optimizer.

+ growth_factor(float): The growth factor for the optimizer.

+ backoff_factor(float): The backoff factor for the optimizer.

+ growth_interval(int): The growth interval for the optimizer.

+ hysteresis(int): The hysteresis for the optimizer.

+ min_scale(float): The minimum scale for the optimizer.

+ max_scale(float): The maximum scale for the optimizer.

+ max_norm(float): The maximum norm for the optimizer.

+ norm_type(float): The norm type for the optimizer.

-class ColossalAIStrategy(DDPStrategy):

+ """

+

+ def __init__(self,

+ stage: int = 3,

+ precision: str = 'fp16',

+ seed: int = 42,

+ placement_policy: str = 'cuda',

+ reduce_bucket_size: int = 12 * 1024**2, # only for stage 1&2

+ overlap_communication: bool = True, # only for stage 1&2

+ initial_scale: float = 2**16,

+ growth_factor: float = 2,

+ backoff_factor: float = 0.5,

+ growth_interval: int = 1000,

+ hysteresis: int = 2,

+ min_scale: float = 1,

+ max_scale: float = 2**32,

+ max_norm: float = 0.0,

+ norm_type: float = 2.0

+ ) -> None:

+

+ assert stage in (1, 2), f'Unsupported stage "{stage}"'

+ assert placement_policy in ('cpu', 'cuda'), f'Unsupported placement policy "{placement_policy}"'

+ assert precision in ('fp32', 'fp16'), f'Unsupported precision "{precision}"'

+

+ plugin_initializer = lambda: LowLevelZeroPlugin(

+ # zero_config

+ stage=stage,

+ precision=precision,

+ # zero_optim_config