diff --git a/_posts/2007-01-03-towards-verifiable-operating-systems.html b/_posts/2007-01-03-towards-verifiable-operating-systems.html

index b8b0c60..851b7c2 100644

--- a/_posts/2007-01-03-towards-verifiable-operating-systems.html

+++ b/_posts/2007-01-03-towards-verifiable-operating-systems.html

@@ -9,4 +9,4 @@

blogger_orig_url: http://theinvisiblethings.blogspot.com/2007/01/towards-verifiable-operating-systems.html

---

-Last week I gave a presentation at the 23rd Chaos Communication Congress in Berlin. Originally the presentation was supposed to be titled "Stealth malware - can good guys win?", but in the very last moment I decided to redesign it completely and gave it a new title: "Fighting Stealth Malware – Towards Verifiable OSes". You can download it from here.

The presentation first debunks The 4 Myths About Stealth Malware Fighting that surprisingly many people believe in. Then my stealth malware classification is briefly described, presenting the malware of type 0, I and II and challenges with their detection (mainly with type II). Finally I talk about what changes into the OS design are needed to make our systems verifiable. If the OS were designed in such a way, then detection of type I and type II malware would be a trivial task...

There are only four requirements that an OS must satisfy to become easily verifiable, these are:

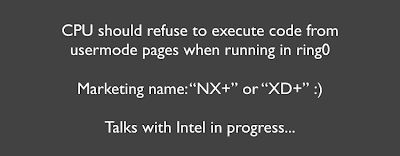

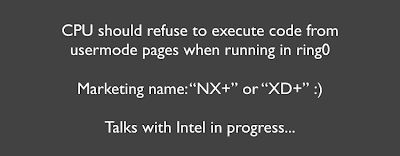

- The underlying processors must support non-executable attribute on a per-page level,

- OS design must maintain strong code and data separation on a per-page level (this could be first only in kernel and later might be extended to include sensitive applications),

- All code sections should be verifiable on a per-page level (usually this means some signing or hashing scheme implemented),

- OS must allow to safely read physical memory by a 3rd party application (kernel driver/module) and for each page allow for reliable determination whether it is executable or not.

The first three requirements are becoming more and more popular these days in various operating systems, as a side effect of introducing anti-exploitation/anti-malware technologies (which is a good thing, BTW). However, the 4th requirement presents a big challenge and it is not clear now whether it would be feasible on some architectures.

Still, I think that it's possible to redesign our systems in order to make them verifiable. If we don't do that, then we will always have to rely on a bunch of "hacks" to check for some known rootktis and we will be taking part in endless arm race with the bad guys. On the other hand, such situation is very convenient for the security vendors, as they can always improve their "Advanced Rootkit Detection Technology" and sell some updates... ;)

Happy New Year!

\ No newline at end of file

+Last week I gave a presentation at the 23rd Chaos Communication Congress in Berlin. Originally the presentation was supposed to be titled "Stealth malware - can good guys win?", but in the very last moment I decided to redesign it completely and gave it a new title: "Fighting Stealth Malware – Towards Verifiable OSes". You can download it from here.

The presentation first debunks The 4 Myths About Stealth Malware Fighting that surprisingly many people believe in. Then my stealth malware classification is briefly described, presenting the malware of type 0, I and II and challenges with their detection (mainly with type II). Finally I talk about what changes into the OS design are needed to make our systems verifiable. If the OS were designed in such a way, then detection of type I and type II malware would be a trivial task...

There are only four requirements that an OS must satisfy to become easily verifiable, these are:

- The underlying processors must support non-executable attribute on a per-page level,

- OS design must maintain strong code and data separation on a per-page level (this could be first only in kernel and later might be extended to include sensitive applications),

- All code sections should be verifiable on a per-page level (usually this means some signing or hashing scheme implemented),

- OS must allow to safely read physical memory by a 3rd party application (kernel driver/module) and for each page allow for reliable determination whether it is executable or not.

The first three requirements are becoming more and more popular these days in various operating systems, as a side effect of introducing anti-exploitation/anti-malware technologies (which is a good thing, BTW). However, the 4th requirement presents a big challenge and it is not clear now whether it would be feasible on some architectures.

Still, I think that it's possible to redesign our systems in order to make them verifiable. If we don't do that, then we will always have to rely on a bunch of "hacks" to check for some known rootktis and we will be taking part in endless arm race with the bad guys. On the other hand, such situation is very convenient for the security vendors, as they can always improve their "Advanced Rootkit Detection Technology" and sell some updates... ;)

Happy New Year!

\ No newline at end of file

diff --git a/_posts/2007-02-04-running-vista-every-day.html b/_posts/2007-02-04-running-vista-every-day.html

index e97ea65..22b4dd3 100644

--- a/_posts/2007-02-04-running-vista-every-day.html

+++ b/_posts/2007-02-04-running-vista-every-day.html

@@ -10,4 +10,4 @@

blogger_orig_url: http://theinvisiblethings.blogspot.com/2007/02/running-vista-every-day.html

---

-More then a month ago I have installed Vista RTM on my primary laptop (x86 machine) and have been running it since that time almost every day. Below are some of my reflections about the new security model introduced in Vista, its limitations, a few flaws and some practical info about how I configured my system.

UAC – The Good and The Bad

User Account Control (UAC) is a new security mechanism introduced in Vista, whose primary goal is to force users to work using restricted accounts, instead working as administrators. This is, in my opinion the most important security mechanism introduced in Vista. That doesn’t mean it can not be bypassed in many ways (due to implementation flaws), but just the fact that such a design change has been made into Windows is, without doubt, a great step towards securing consumer OSes.

When UAC is active (which is a default setting) even when user logs in as an administrator, most of her programs run as restricted processes, i.e. they have only some very limited subset of privileges in their process token. Also, they run at, so called, Medium integrity level, which, among other things, should prevent those applications from interacting with higher integrity level processes via Window messages. This mechanism also got a nice marketing acronym, UIPI, which stands for User Interface Privilege Isolation. Once the system determines that a given program (or a given action) requires administrative privileges, because e.g. the user wants to change system time, it displays a consent window to the user, asking her whether she really wants to proceed. In case the user logged in as a normal user (i.e. the account does not belong to the Administrators group), then the user is also asked to enter password for the one of the administrator's accounts. You can find more background information about UAC, e.g. at this page.

Many people complain about UAC, saying that it’s very annoying for them to see UAC consent dialog box to appear every few minutes or so, and claim that this will discourage users from using this mechanism at all (and yes, there’s an option to disable UAC). I strongly disagree with such opinion - I’ve been running Vista more then a month now and, besides the first few days when I was installing various applications, I now do not see UAC prompt more then 1-2 times per day. So, I really wonder what those people are doing that they see UAC constantly appearing every other minute…

One thing that I found particularly annoying though, is that Vista automatically assumes that all setup programs (application installers) should be run with administrator privileges. So, when you try to run such a program, you get a UAC prompt and you have only two choices: either to agree to run this application as administrator or to disallow running it at all. That means that if you downloaded some freeware Tetris game, you will have to run its installer as administrator, giving it not only full access to all your file system and registry, but also allowing e.g. to load kernel drivers! Why Tetris installer should be allowed to load kernel drivers?

How Vista recognizes installer executables? It has a compatibility database as well as uses several heuristics to do that, e.g. if the file name contains the string “setup” (Really, I’m not kidding!). Finally it looks at the executable’s manifest and most of the modern installers are expected to have such manifest embedded, which may indicate that the executable should be run as administrator.

To get around this problem, e.g. on XP, I would normally just add appropriate permissions to my normal (restricted) user account, in such a way that this account would be ale to add new directories under C:\Program Files and to add new keys under HKLM\Software (in most cases this is just enough), but still would not be able to modify any global files nor registry keys nor, heaven forbid, to load drivers. More paranoid people could chose to create a separate account, called e.g. installer and use it to install most of the applications. Of course, the real life is not that beautiful and you sometimes need to play a bit with regmon to tweak the permissions, but, in general it works for majority of applications and I have been successfully using this approach for years now on my XP box.

That approach would not work on Vista, because every time Vista detects that an executable is a setup program (and believe me Vista is really good at doing this), it will only allow running it as administrator… Even though it’s possible to disable heuristics-based installer detection via local policy settings – see picture below:

that doesn’t seem to work for those installer executables which have embedded manifest saying that they should be run as administrator.

I see the above limitation as a very severe hole in the design of UAC. After all, I would like to be offered a choice whether to fully trust given installer executable (and run it as full administrator) or just allow it to add a folder in C:\Program Files and some keys under HKLM\Software and do nothing more. I could do that under XP, but apparently I can’t under Vista, which is a bit disturbing (unless I’m missing some secret option to change that behavior).

Integrity Levels – Protect the OS but not your data!

Integrity Levels (IL) mechanism has been introduced to help implementing UAC. This mechanism is very simple – every process can be assigned one of the four possible integrity levels:

• Low

• Medium

• High

• System

Similarly, every securable object in the system, like e.g. a directory, file or registry key, can also be assigned an integrity level. Integrity level is nothing else then just an ACE of a special type assigned to the SACL list. If there’s no such ACE at all, then the integrity level of the object is assumed to be Medium. You can use icacls command to see integrity levels on file system objects:

C:\>icacls \Users\joanna\AppData\LocalLow

\Users\joanna\AppData\LocalLow silverose\joanna:(F)

silverose\joanna:(OI)(CI)(IO)(F)

NT AUTHORITY\SYSTEM:(F)

NT AUTHORITY\SYSTEM:(OI)(CI)(IO)(F)

BUILTIN\Administrators:(F)

BUILTIN\Administrators:(OI)(CI)(IO)(F)

Mandatory Label\Low Mandatory Level:(OI)(CI)(NW)

BTW, I don’t know any tool/command to see and modify integrity levels assigned to registry keys (I think I know how to do this in C though). Anybody?

Now, the whole concept behind IL is that a process can only get write-access to those objects which have the same or lower integrity level then the process itself.

Update (March 5th, 2007): This is the default behavior of IL and is indicated by the “(NW)” symbol on the picture above, which stands for NoWriteUp policy. I have just learned that one can use the chml tool by Mark Minasi to set also a different policy, i.e. NoReadUp (NR) or NoExecuteUp (NX), which would result that IL mechanism will not allow a lower integrity process to read or execute the objects marked with higher IL. See also my recent post about this tool.

UAC is implemented using IL – even if you log in as administrator, all your processes (like e.g. explorer.exe) run with Medium IL. Once you elevated to the “real admin” your process runs at High IL. System processes, like e.g. services, runs at System IL. From the security point of view High IL seems to be equivalent to System IL, because once you are allowed to execute code at High IL you can compromise the whole system.

Internet Explorer’s protected mode is implemented using the IL mechanism. The iexplore.exe process runs at Low IL and, in a system with default configuration, can only write to %USERPROFILE%\AppData\LocalLow and HKCU\Software\AppDataLow because all other objects have higher ILs (usually Medium).

If you don’t like surfing using IE, you can very easily setup your Firefox (or other browser of your choice) to run as Low integrity process (here we assume that Firefox user’s profile is in j:\config\firefox-profile):

C:\Program Files\Mozilla Firefox>icacls firefox.exe /setintegritylevel low

J:\config>icacls firefox-profile /setintegritylevel (OI)(CI)low

Because firefox.exe is now marked as a Low integrity file, Vista will also create a Low integrity process from this file, unless you are going to start this executable from a High integrity process (e.g. elevated command prompt). Also, if you, for some reason (see below), wanted to use runas or psexec to start a Low integrity process, it won’t work and will start the process as Medium, regardless that the executable is marked as Low integrity.

It should be stressed that IL, by default, protects only against modifications of higher integrity objects. It’s perfectly ok for the Low IL process to read e.g. files, even if they are marked as Medium or High IL. In other words, if somebody exploits IE running in Protected Mode (at Low IL), she will be able to read (i.e. steal) all user’s data.

This is not an implementation bug, this is a design decision and it’s cleverly called the “read-up policy”. If we think about it for a while, it should become clear why Microsoft decided to do it that way. First, we should observe, that what Microsoft is most concerned about, is malware which permanently installs itself in the system and that could later be detected by some anti-malware programs. Microsoft doesn’t like it, because it’s the source of all the complains about how insecure Windows is and also the A/V companies can publish their statistics about how many percent of computers is compromised, etc… All in all, a very uncomfortable situation, not only for Microsoft but also for all those poor users, who now need to try all the various methods (read buy A/V programs) to remove the malware, instead just focus on their work…

On the other hand, imagine a reliable exploit (i.e. not crashing a target too often) which, after exploiting e.g. IE Protected Mode process, steals all the user’s DOC and XLS files, sends them back somewhere and afterwards disappears in an elegant fashion. Our user, busy with his every day work, does not even notice anything, so he can continue working undisturbed and focus on his real job. The A/V programs do not detect the exploit (why should they? – after all there’s no signature for it nor the shellcode uses any suspicious API) so they do not report the machine as infected – because, after all it’s not infected. So, the statistics look better and everybody is generally happier. Including the competition, who now has access to stolen data ;)

User Interface Privilege Isolation and some little Fun

UAC and Integrity Levels mechanism makes it possible for processes running with different ILs to share the same desktop. This raises potential security problem, because Windows implements a mechanism to allow one process to send a “window message”, like e.g. WM_SETTEXT, to another process. Moreover, some messages, like e.g. the infamous WM_TIMER, could be used the redirect execution flow of the target thread. This has been popular a few years ago in so called “Shatter Attacks”.

UIPI, introduced in Vista, is for the rescue. UIPI basically enforces the obvious policy that lower integrity processes can not send messages to higher integrity processes.

Interestingly, UIPI implementation is a bit “unfinished” I would say… For example, in contrast to design assumption, on my system at least, it is possible for the Low integrity process to send e.g. WM_KEYDOWN to e.g. open Administrative shell (cmd.exe) running at High IL and gets arbitrary commands executed.

One simple scenario of the attack is that a malicious program, running at Low IL, can wait for the user to open elevated command prompt – it can e.g. poll the open window handles e.g. every second or so (Window enumeration is allowed even at Low IL). Once it finds the window, it can send commands to execute… Probably not that cool as the recent “Vista Speech Exploit”, but still something to play with ;)

It’s my feeling that there are more holes in UAC, but I will leave finding them all as an exercise for the readers...

Do-It-Yourself: Implementing Privilege Separation

Because of the limitations of the UAC and IL mentioned above (i.e. the read-up policy), I decided to implement a little privilege-separation policy in my system. The first thing we need, is to create a few more accounts, each for a specific type of applications or tasks. E.g. I decided that I want a separate account to run my web browser, a different one for running my email client as well as IM client (which I occasionally run) and a whole other account to deal with my super-secret projects. And, of course, I need a main account, that is, the one which I will use to log in to the system. All in all, here is the list of all the accounts on my Vista laptop:

• admin

• joanna

• joanna.web

• joanna.email

• joanna.sensitive

So, joanna is used to log into system (this is, BTW, a truly limited account, i.e. it doesn’t belong to the Administrators group) and Explorer and all applications like e.g. Picassa are started using this account. Firefox and Thunderbird run as joanna.web and joanna.email respectively. However, a little trick is needed here, if we want to start those applications as Low IL processes (and we want to do this, because we want UIPI to protect, at least in theory, other applications from web and mail clients if they got compromised somehow). As it was mentioned above, if one uses runas or psexec the created process will run as Medium IL, regardless the integrity level assigned to the executable. We can get around this, buy using this simple trick (note the nested quotations):runas /user:joanna.web "cmd /c start \"c:\Program Files\Mozilla Firefox\firefox.exe\""

c:\tools\psexec -d -e -u joanna.web -p l33tp4ssw0rd "cmd" "/c start "c:\Program Files\Mozilla Firefox\firefox.exe""

Obviously, we also need to set appropriate ACLs on the directories containing Firefox and Thunderbird user’s profiles, so that each of those two users get full access to the respective directories as well as to a \tmp folder, used to store email attachments and downloaded files. No other personal files should be accessible to joanna.web and joanna.email.

Finally, being a paranoid person as I am, I have also a special user joanna.sensitive, which is the only one granted access to my \projects directory. It may come as a surprise, but I decided to make joanna.sensitve a member of the Administrators group. The reason for that is that I need to make all the applications which run as joanna.sensitve (e.g. gvim, cmd, Visual Studio, KeePass, etc) to have their UI isolated from all other normal applications, which run as joanna at Medium IL. It seems like the only way to start a processes at High IL is to make it part of the Administrators group and then use ‘Run As Administrator’ or runas command to start it.

That way we have the highly dangerous applications, like web browser or email client, run at Low IL and as very limited users (joanna.web and joanna.email), who have access only to the necessary profile directories (the restriction, this time, applies both to read- and write- accesses, because it’s enforced by normal ACLs on file system objects and not by IL mechanism). Then we have all other applications, like Explorer, various Office applications, etc. running as joanna at Medium IL and finally the most critical programs, those running as joanna.sensitve, like KeePass and those which get access to my \projects directory, they all run at High IL.

Thunderbird, GPG and Smart Cards

Even though the above configuration might look good, there’s still a problem with it I haven’t solved yet. The problem is related to mail encryption and how to isolate email client from my PGP private keys. I use Thunderbird together with Enigmail’s OpenPGP extension. The extension is just a wrapper around gpg.exe, a GnuPG implementation of PGP. When I open encrypted email, my Thunderbird processes spawns a new gpg.exe process and passes the passphrase to it as an argument. There are two alarming things here – first Thunderbird process needs to know my passphrase (in fact I enter it into a dialog box displayed by the Enigmail’s extension) and second, the gpg.exe process runs as the same user and at the same IL level as the thunderbird.exe process. So, if thunderbird.exe gets compromised, the malicious code executing inside thunderbird.exe will not only be able to get to know my passphrase, but will also be free to read my private key from disk (because it has the same rights as gpg.exe).

Theoretically it should be possible to solve the problem with passphrase stealing by using GPG Agent, which could run in the background as a service and gpg.exe would ask the agent for the passphrase instead asking thunderbird.exe process, which will never be in possession of the passphrase. Ignoring the fact that there doesn’t seem to be a working GPG Agent implementation for Win32 environment, this still is not a good solution, because thunderbird.exe still gets access to gpg.exe process, which is its own child after all – so it’s possible for thunderbird.exe to read the contents of gpg.exe memory and to find a decrypted PGP private key there.

It would help if GPG was implemented as a service running in the background and thunderbird.exe would only communicate with it using some sort of LPC to send request to encrypt, decrypt, sign and verify buffers. Unfortunately I’m not aware of such implementation, especially for Win32.

The only practical solution seems to be to use a Smart Card, which would perform all the

necessary crypto operations using its own processor. Unfortunately, GnuPG supports only, a so called, OpenPGP smart cards, but it seems that the only two cards which implements this standard (i.e. Fellowship card and the g10 card) implement only 1024 bits RSA keys, which is definitely not enough for even a moderately paranoid person ;)

In the last hope, I turned to commercial PGP, downloaded the trial of PGP Desktop and… it turned out that it doesn’t support Vista yet (what a shame, BTW).

So, for the time being I’m defenseless like a baby against all those mean people who would try to exploit my thunderbird.exe and steal my private PGP key :(

The forgotten part: Detection

One might think that it’s a pretty secure system configuration… Well, more precisely, it could be considered as pretty secure, if UIPI was not buggy and UAC didn’t force me to run random setup programs with full administrator rights and if GPG supported Smart Cards with RSA keys > 1024 (or alternatively PGP Desktop supported Vista). But let’s not be that scrupulous and forgot about those minor problems…

Still, even though that might look like a secure configuration, this is all just an illusion of security! The whole security of the system can be compromised if attacker finds and exploits e.g. a bug in kernel driver.

It should be noted that Microsoft has also implemented several anti-exploitation techniques in Vista, the two most advertised are Address Space Layout Randomization (ASLR) and Data Execution Prevention (DEP). However, ASLR does not protect against local kernel exploitation, because it’s possible, even for the Low IL process, to query system about the list of loaded kernel modules together with their base addresses (using ZwQuerySystemInformation function). Also, hardware DEP, which works only on 64-bit processors, is not applied to the whole non-paged pool (as well as some other areas, but non-paged pool is the biggest one). In other words, the hardware NX bit is not set on all pages comprising the non-paged pool. BTW, there is a reason for Microsoft doing this and this is not due to compatibility issues (at least I believe so). I wonder who else can guess... ;)

UPDATE (see above): David Solomon, pointed out, that Hardware DEP is also available on many modern 32-bit processors (as the NX bit is implemented in PAE mode).

It’s very good that Microsoft implemented those anti-exploitation technologies (besides ASLR and NX, there are also some others). However the point is, they could be bypassed by a clever attacker under some circumstances. Now think about how many 3rd party kernel drivers are typically present in an average Windows systems – all those graphics card drivers, audio drivers, SATA drivers, A/V drivers, etc... and try answering the question how many possible bugs could be there? (BTW, it should be mentioned that Microsoft did a clever step by moving some classes of kernel drivers into user mode, like e.g. USB drivers – this is called UMDF).

When attacker successfully exploits kernel bug, then all the security scheme implemented by the OS is just worth nothing. So, what can we do? Well, we need to complement all those cool prevention technologies with effective detection technology. But has Microsoft done anything to make systematic detection possible? This is a rhetoric question of course and the negative answer applies unfortunately not only to Microsoft products but also to all other general purpose operating systems I’m aware of :(

My favorite quote of all those people who negate the value of detection is this: “once the system is compromised we can’t do anything!”. BS! Even though it might be true today – because the Operating System are not designed to be verifiable, but that doesn’t mean we can’t change this!

Bottom Line

Microsoft did a good job with securing Vista. They could do better, of course, but remember that Windows is a system for masses and also that they need to take care about compatibility issues, which sometimes can be a real pain. If you want to run Microsoft OS, then I believe that Vista is definitely a better choice then XP from a security standpoint. It has bugs, but which OS doesn't? What I wish for, is that they paid more attention to make their system verifiable...Acknowledgements

I would like to thank John Lambert and Andrew Roths, both of Microsoft, for answering my questions about UAC design.

\ No newline at end of file

+More then a month ago I have installed Vista RTM on my primary laptop (x86 machine) and have been running it since that time almost every day. Below are some of my reflections about the new security model introduced in Vista, its limitations, a few flaws and some practical info about how I configured my system.

UAC – The Good and The Bad

User Account Control (UAC) is a new security mechanism introduced in Vista, whose primary goal is to force users to work using restricted accounts, instead working as administrators. This is, in my opinion the most important security mechanism introduced in Vista. That doesn’t mean it can not be bypassed in many ways (due to implementation flaws), but just the fact that such a design change has been made into Windows is, without doubt, a great step towards securing consumer OSes.

When UAC is active (which is a default setting) even when user logs in as an administrator, most of her programs run as restricted processes, i.e. they have only some very limited subset of privileges in their process token. Also, they run at, so called, Medium integrity level, which, among other things, should prevent those applications from interacting with higher integrity level processes via Window messages. This mechanism also got a nice marketing acronym, UIPI, which stands for User Interface Privilege Isolation. Once the system determines that a given program (or a given action) requires administrative privileges, because e.g. the user wants to change system time, it displays a consent window to the user, asking her whether she really wants to proceed. In case the user logged in as a normal user (i.e. the account does not belong to the Administrators group), then the user is also asked to enter password for the one of the administrator's accounts. You can find more background information about UAC, e.g. at this page.

Many people complain about UAC, saying that it’s very annoying for them to see UAC consent dialog box to appear every few minutes or so, and claim that this will discourage users from using this mechanism at all (and yes, there’s an option to disable UAC). I strongly disagree with such opinion - I’ve been running Vista more then a month now and, besides the first few days when I was installing various applications, I now do not see UAC prompt more then 1-2 times per day. So, I really wonder what those people are doing that they see UAC constantly appearing every other minute…

One thing that I found particularly annoying though, is that Vista automatically assumes that all setup programs (application installers) should be run with administrator privileges. So, when you try to run such a program, you get a UAC prompt and you have only two choices: either to agree to run this application as administrator or to disallow running it at all. That means that if you downloaded some freeware Tetris game, you will have to run its installer as administrator, giving it not only full access to all your file system and registry, but also allowing e.g. to load kernel drivers! Why Tetris installer should be allowed to load kernel drivers?

How Vista recognizes installer executables? It has a compatibility database as well as uses several heuristics to do that, e.g. if the file name contains the string “setup” (Really, I’m not kidding!). Finally it looks at the executable’s manifest and most of the modern installers are expected to have such manifest embedded, which may indicate that the executable should be run as administrator.

To get around this problem, e.g. on XP, I would normally just add appropriate permissions to my normal (restricted) user account, in such a way that this account would be ale to add new directories under C:\Program Files and to add new keys under HKLM\Software (in most cases this is just enough), but still would not be able to modify any global files nor registry keys nor, heaven forbid, to load drivers. More paranoid people could chose to create a separate account, called e.g. installer and use it to install most of the applications. Of course, the real life is not that beautiful and you sometimes need to play a bit with regmon to tweak the permissions, but, in general it works for majority of applications and I have been successfully using this approach for years now on my XP box.

That approach would not work on Vista, because every time Vista detects that an executable is a setup program (and believe me Vista is really good at doing this), it will only allow running it as administrator… Even though it’s possible to disable heuristics-based installer detection via local policy settings – see picture below:

that doesn’t seem to work for those installer executables which have embedded manifest saying that they should be run as administrator.

I see the above limitation as a very severe hole in the design of UAC. After all, I would like to be offered a choice whether to fully trust given installer executable (and run it as full administrator) or just allow it to add a folder in C:\Program Files and some keys under HKLM\Software and do nothing more. I could do that under XP, but apparently I can’t under Vista, which is a bit disturbing (unless I’m missing some secret option to change that behavior).

Integrity Levels – Protect the OS but not your data!

Integrity Levels (IL) mechanism has been introduced to help implementing UAC. This mechanism is very simple – every process can be assigned one of the four possible integrity levels:

• Low

• Medium

• High

• System

Similarly, every securable object in the system, like e.g. a directory, file or registry key, can also be assigned an integrity level. Integrity level is nothing else then just an ACE of a special type assigned to the SACL list. If there’s no such ACE at all, then the integrity level of the object is assumed to be Medium. You can use icacls command to see integrity levels on file system objects:

C:\>icacls \Users\joanna\AppData\LocalLow

\Users\joanna\AppData\LocalLow silverose\joanna:(F)

silverose\joanna:(OI)(CI)(IO)(F)

NT AUTHORITY\SYSTEM:(F)

NT AUTHORITY\SYSTEM:(OI)(CI)(IO)(F)

BUILTIN\Administrators:(F)

BUILTIN\Administrators:(OI)(CI)(IO)(F)

Mandatory Label\Low Mandatory Level:(OI)(CI)(NW)

BTW, I don’t know any tool/command to see and modify integrity levels assigned to registry keys (I think I know how to do this in C though). Anybody?

Now, the whole concept behind IL is that a process can only get write-access to those objects which have the same or lower integrity level then the process itself.

Update (March 5th, 2007): This is the default behavior of IL and is indicated by the “(NW)” symbol on the picture above, which stands for NoWriteUp policy. I have just learned that one can use the chml tool by Mark Minasi to set also a different policy, i.e. NoReadUp (NR) or NoExecuteUp (NX), which would result that IL mechanism will not allow a lower integrity process to read or execute the objects marked with higher IL. See also my recent post about this tool.

UAC is implemented using IL – even if you log in as administrator, all your processes (like e.g. explorer.exe) run with Medium IL. Once you elevated to the “real admin” your process runs at High IL. System processes, like e.g. services, runs at System IL. From the security point of view High IL seems to be equivalent to System IL, because once you are allowed to execute code at High IL you can compromise the whole system.

Internet Explorer’s protected mode is implemented using the IL mechanism. The iexplore.exe process runs at Low IL and, in a system with default configuration, can only write to %USERPROFILE%\AppData\LocalLow and HKCU\Software\AppDataLow because all other objects have higher ILs (usually Medium).

If you don’t like surfing using IE, you can very easily setup your Firefox (or other browser of your choice) to run as Low integrity process (here we assume that Firefox user’s profile is in j:\config\firefox-profile):

C:\Program Files\Mozilla Firefox>icacls firefox.exe /setintegritylevel low

J:\config>icacls firefox-profile /setintegritylevel (OI)(CI)low

Because firefox.exe is now marked as a Low integrity file, Vista will also create a Low integrity process from this file, unless you are going to start this executable from a High integrity process (e.g. elevated command prompt). Also, if you, for some reason (see below), wanted to use runas or psexec to start a Low integrity process, it won’t work and will start the process as Medium, regardless that the executable is marked as Low integrity.

It should be stressed that IL, by default, protects only against modifications of higher integrity objects. It’s perfectly ok for the Low IL process to read e.g. files, even if they are marked as Medium or High IL. In other words, if somebody exploits IE running in Protected Mode (at Low IL), she will be able to read (i.e. steal) all user’s data.

This is not an implementation bug, this is a design decision and it’s cleverly called the “read-up policy”. If we think about it for a while, it should become clear why Microsoft decided to do it that way. First, we should observe, that what Microsoft is most concerned about, is malware which permanently installs itself in the system and that could later be detected by some anti-malware programs. Microsoft doesn’t like it, because it’s the source of all the complains about how insecure Windows is and also the A/V companies can publish their statistics about how many percent of computers is compromised, etc… All in all, a very uncomfortable situation, not only for Microsoft but also for all those poor users, who now need to try all the various methods (read buy A/V programs) to remove the malware, instead just focus on their work…

On the other hand, imagine a reliable exploit (i.e. not crashing a target too often) which, after exploiting e.g. IE Protected Mode process, steals all the user’s DOC and XLS files, sends them back somewhere and afterwards disappears in an elegant fashion. Our user, busy with his every day work, does not even notice anything, so he can continue working undisturbed and focus on his real job. The A/V programs do not detect the exploit (why should they? – after all there’s no signature for it nor the shellcode uses any suspicious API) so they do not report the machine as infected – because, after all it’s not infected. So, the statistics look better and everybody is generally happier. Including the competition, who now has access to stolen data ;)

User Interface Privilege Isolation and some little Fun

UAC and Integrity Levels mechanism makes it possible for processes running with different ILs to share the same desktop. This raises potential security problem, because Windows implements a mechanism to allow one process to send a “window message”, like e.g. WM_SETTEXT, to another process. Moreover, some messages, like e.g. the infamous WM_TIMER, could be used the redirect execution flow of the target thread. This has been popular a few years ago in so called “Shatter Attacks”.

UIPI, introduced in Vista, is for the rescue. UIPI basically enforces the obvious policy that lower integrity processes can not send messages to higher integrity processes.

Interestingly, UIPI implementation is a bit “unfinished” I would say… For example, in contrast to design assumption, on my system at least, it is possible for the Low integrity process to send e.g. WM_KEYDOWN to e.g. open Administrative shell (cmd.exe) running at High IL and gets arbitrary commands executed.

One simple scenario of the attack is that a malicious program, running at Low IL, can wait for the user to open elevated command prompt – it can e.g. poll the open window handles e.g. every second or so (Window enumeration is allowed even at Low IL). Once it finds the window, it can send commands to execute… Probably not that cool as the recent “Vista Speech Exploit”, but still something to play with ;)

It’s my feeling that there are more holes in UAC, but I will leave finding them all as an exercise for the readers...

Do-It-Yourself: Implementing Privilege Separation

Because of the limitations of the UAC and IL mentioned above (i.e. the read-up policy), I decided to implement a little privilege-separation policy in my system. The first thing we need, is to create a few more accounts, each for a specific type of applications or tasks. E.g. I decided that I want a separate account to run my web browser, a different one for running my email client as well as IM client (which I occasionally run) and a whole other account to deal with my super-secret projects. And, of course, I need a main account, that is, the one which I will use to log in to the system. All in all, here is the list of all the accounts on my Vista laptop:

• admin

• joanna

• joanna.web

• joanna.email

• joanna.sensitive

So, joanna is used to log into system (this is, BTW, a truly limited account, i.e. it doesn’t belong to the Administrators group) and Explorer and all applications like e.g. Picassa are started using this account. Firefox and Thunderbird run as joanna.web and joanna.email respectively. However, a little trick is needed here, if we want to start those applications as Low IL processes (and we want to do this, because we want UIPI to protect, at least in theory, other applications from web and mail clients if they got compromised somehow). As it was mentioned above, if one uses runas or psexec the created process will run as Medium IL, regardless the integrity level assigned to the executable. We can get around this, buy using this simple trick (note the nested quotations):runas /user:joanna.web "cmd /c start \"c:\Program Files\Mozilla Firefox\firefox.exe\""

c:\tools\psexec -d -e -u joanna.web -p l33tp4ssw0rd "cmd" "/c start "c:\Program Files\Mozilla Firefox\firefox.exe""

Obviously, we also need to set appropriate ACLs on the directories containing Firefox and Thunderbird user’s profiles, so that each of those two users get full access to the respective directories as well as to a \tmp folder, used to store email attachments and downloaded files. No other personal files should be accessible to joanna.web and joanna.email.

Finally, being a paranoid person as I am, I have also a special user joanna.sensitive, which is the only one granted access to my \projects directory. It may come as a surprise, but I decided to make joanna.sensitve a member of the Administrators group. The reason for that is that I need to make all the applications which run as joanna.sensitve (e.g. gvim, cmd, Visual Studio, KeePass, etc) to have their UI isolated from all other normal applications, which run as joanna at Medium IL. It seems like the only way to start a processes at High IL is to make it part of the Administrators group and then use ‘Run As Administrator’ or runas command to start it.

That way we have the highly dangerous applications, like web browser or email client, run at Low IL and as very limited users (joanna.web and joanna.email), who have access only to the necessary profile directories (the restriction, this time, applies both to read- and write- accesses, because it’s enforced by normal ACLs on file system objects and not by IL mechanism). Then we have all other applications, like Explorer, various Office applications, etc. running as joanna at Medium IL and finally the most critical programs, those running as joanna.sensitve, like KeePass and those which get access to my \projects directory, they all run at High IL.

Thunderbird, GPG and Smart Cards

Even though the above configuration might look good, there’s still a problem with it I haven’t solved yet. The problem is related to mail encryption and how to isolate email client from my PGP private keys. I use Thunderbird together with Enigmail’s OpenPGP extension. The extension is just a wrapper around gpg.exe, a GnuPG implementation of PGP. When I open encrypted email, my Thunderbird processes spawns a new gpg.exe process and passes the passphrase to it as an argument. There are two alarming things here – first Thunderbird process needs to know my passphrase (in fact I enter it into a dialog box displayed by the Enigmail’s extension) and second, the gpg.exe process runs as the same user and at the same IL level as the thunderbird.exe process. So, if thunderbird.exe gets compromised, the malicious code executing inside thunderbird.exe will not only be able to get to know my passphrase, but will also be free to read my private key from disk (because it has the same rights as gpg.exe).

Theoretically it should be possible to solve the problem with passphrase stealing by using GPG Agent, which could run in the background as a service and gpg.exe would ask the agent for the passphrase instead asking thunderbird.exe process, which will never be in possession of the passphrase. Ignoring the fact that there doesn’t seem to be a working GPG Agent implementation for Win32 environment, this still is not a good solution, because thunderbird.exe still gets access to gpg.exe process, which is its own child after all – so it’s possible for thunderbird.exe to read the contents of gpg.exe memory and to find a decrypted PGP private key there.

It would help if GPG was implemented as a service running in the background and thunderbird.exe would only communicate with it using some sort of LPC to send request to encrypt, decrypt, sign and verify buffers. Unfortunately I’m not aware of such implementation, especially for Win32.

The only practical solution seems to be to use a Smart Card, which would perform all the

necessary crypto operations using its own processor. Unfortunately, GnuPG supports only, a so called, OpenPGP smart cards, but it seems that the only two cards which implements this standard (i.e. Fellowship card and the g10 card) implement only 1024 bits RSA keys, which is definitely not enough for even a moderately paranoid person ;)

In the last hope, I turned to commercial PGP, downloaded the trial of PGP Desktop and… it turned out that it doesn’t support Vista yet (what a shame, BTW).

So, for the time being I’m defenseless like a baby against all those mean people who would try to exploit my thunderbird.exe and steal my private PGP key :(

The forgotten part: Detection

One might think that it’s a pretty secure system configuration… Well, more precisely, it could be considered as pretty secure, if UIPI was not buggy and UAC didn’t force me to run random setup programs with full administrator rights and if GPG supported Smart Cards with RSA keys > 1024 (or alternatively PGP Desktop supported Vista). But let’s not be that scrupulous and forgot about those minor problems…

Still, even though that might look like a secure configuration, this is all just an illusion of security! The whole security of the system can be compromised if attacker finds and exploits e.g. a bug in kernel driver.

It should be noted that Microsoft has also implemented several anti-exploitation techniques in Vista, the two most advertised are Address Space Layout Randomization (ASLR) and Data Execution Prevention (DEP). However, ASLR does not protect against local kernel exploitation, because it’s possible, even for the Low IL process, to query system about the list of loaded kernel modules together with their base addresses (using ZwQuerySystemInformation function). Also, hardware DEP, which works only on 64-bit processors, is not applied to the whole non-paged pool (as well as some other areas, but non-paged pool is the biggest one). In other words, the hardware NX bit is not set on all pages comprising the non-paged pool. BTW, there is a reason for Microsoft doing this and this is not due to compatibility issues (at least I believe so). I wonder who else can guess... ;)

UPDATE (see above): David Solomon, pointed out, that Hardware DEP is also available on many modern 32-bit processors (as the NX bit is implemented in PAE mode).

It’s very good that Microsoft implemented those anti-exploitation technologies (besides ASLR and NX, there are also some others). However the point is, they could be bypassed by a clever attacker under some circumstances. Now think about how many 3rd party kernel drivers are typically present in an average Windows systems – all those graphics card drivers, audio drivers, SATA drivers, A/V drivers, etc... and try answering the question how many possible bugs could be there? (BTW, it should be mentioned that Microsoft did a clever step by moving some classes of kernel drivers into user mode, like e.g. USB drivers – this is called UMDF).

When attacker successfully exploits kernel bug, then all the security scheme implemented by the OS is just worth nothing. So, what can we do? Well, we need to complement all those cool prevention technologies with effective detection technology. But has Microsoft done anything to make systematic detection possible? This is a rhetoric question of course and the negative answer applies unfortunately not only to Microsoft products but also to all other general purpose operating systems I’m aware of :(

My favorite quote of all those people who negate the value of detection is this: “once the system is compromised we can’t do anything!”. BS! Even though it might be true today – because the Operating System are not designed to be verifiable, but that doesn’t mean we can’t change this!

Bottom Line

Microsoft did a good job with securing Vista. They could do better, of course, but remember that Windows is a system for masses and also that they need to take care about compatibility issues, which sometimes can be a real pain. If you want to run Microsoft OS, then I believe that Vista is definitely a better choice then XP from a security standpoint. It has bugs, but which OS doesn't? What I wish for, is that they paid more attention to make their system verifiable...Acknowledgements

I would like to thank John Lambert and Andrew Roths, both of Microsoft, for answering my questions about UAC design.

\ No newline at end of file

diff --git a/_posts/2007-02-12-vista-security-model-big-joke.html b/_posts/2007-02-12-vista-security-model-big-joke.html

index a48607b..7a294de 100644

--- a/_posts/2007-02-12-vista-security-model-big-joke.html

+++ b/_posts/2007-02-12-vista-security-model-big-joke.html

@@ -9,4 +9,4 @@

blogger_orig_url: http://theinvisiblethings.blogspot.com/2007/02/vista-security-model-big-joke.html

---

-[Update: if you came here from ZDNet or Slashdot - see the post about confusion above!]

Today I saw a new post at Mark Russinovich’s blog which I take as a response to my recent musings about Vista security features, where I pointed out several problems with UAC, like e.g. the attack that allows for a low integrity process to hijack the high integrity level command prompt. Those who read the whole article undoubtedly noticed that my overall opinion of vista security changes was still very positive – after all everybody can do mistakes and the fact UAC is not perfect, doesn’t diminish the fact that it’s a step into the right direction, i.e. implementing least-privilege policy in Windows OS.

However, I now read this post by Mark Russinovich (a Microsoft employee), which says:

"It should be clear then, that neither UAC elevations nor Protected Mode IE define new Windows security boundaries. Microsoft has been communicating this but I want to make sure that the point is clearly heard. Further, as Jim Allchin pointed out in his blog post Security Features vs Convenience, Vista makes tradeoffs between security and convenience, and both UAC and Protected Mode IE have design choices that required paths to be opened in the IL wall for application compatibility and ease of use."

And then we read:

"Because elevations and ILs don’t define a security boundary, potential avenues of attack, regardless of ease or scope, are not security bugs. So if you aren’t guaranteed that your elevated processes aren’t susceptible to compromise by those running at a lower IL, why did Windows Vista go to the trouble of introducing elevations and ILs? To get us to a world where everyone runs as standard user by default and all software is written with that assumption."

Oh, excuse me, is this supposed be a joke? We all remember all those Microsoft’s statements about how serious Microsoft is about security in Vista and how all those new cool security features like UAC or Protected Mode IE will improve the world’s security. And now we hear what? That this flagship security technology (UAC) is in fact… not a security technology!

I understand that implementing UAC, UIPI and Integrity Levels mechanisms on top of the existing Windows OS infrastructure is a hard task and it would be much easier to design the whole new OS from scratch and that Microsoft can’t do this for various of reasons. I understand that all, but that doesn’t mean that once more people at Microsoft realized that too, they should turn everything into a big joke? Or maybe I’m too much of an idealist…

So, I will say this: If Microsoft won’t change their attitude soon, then in a couple of months the security of Vista (from the typical malware’s point of view) will be equal to the security of current XP systems (which means, not too impressive).

\ No newline at end of file

+[Update: if you came here from ZDNet or Slashdot - see the post about confusion above!]

Today I saw a new post at Mark Russinovich’s blog which I take as a response to my recent musings about Vista security features, where I pointed out several problems with UAC, like e.g. the attack that allows for a low integrity process to hijack the high integrity level command prompt. Those who read the whole article undoubtedly noticed that my overall opinion of vista security changes was still very positive – after all everybody can do mistakes and the fact UAC is not perfect, doesn’t diminish the fact that it’s a step into the right direction, i.e. implementing least-privilege policy in Windows OS.

However, I now read this post by Mark Russinovich (a Microsoft employee), which says:

"It should be clear then, that neither UAC elevations nor Protected Mode IE define new Windows security boundaries. Microsoft has been communicating this but I want to make sure that the point is clearly heard. Further, as Jim Allchin pointed out in his blog post Security Features vs Convenience, Vista makes tradeoffs between security and convenience, and both UAC and Protected Mode IE have design choices that required paths to be opened in the IL wall for application compatibility and ease of use."

And then we read:

"Because elevations and ILs don’t define a security boundary, potential avenues of attack, regardless of ease or scope, are not security bugs. So if you aren’t guaranteed that your elevated processes aren’t susceptible to compromise by those running at a lower IL, why did Windows Vista go to the trouble of introducing elevations and ILs? To get us to a world where everyone runs as standard user by default and all software is written with that assumption."

Oh, excuse me, is this supposed be a joke? We all remember all those Microsoft’s statements about how serious Microsoft is about security in Vista and how all those new cool security features like UAC or Protected Mode IE will improve the world’s security. And now we hear what? That this flagship security technology (UAC) is in fact… not a security technology!

I understand that implementing UAC, UIPI and Integrity Levels mechanisms on top of the existing Windows OS infrastructure is a hard task and it would be much easier to design the whole new OS from scratch and that Microsoft can’t do this for various of reasons. I understand that all, but that doesn’t mean that once more people at Microsoft realized that too, they should turn everything into a big joke? Or maybe I’m too much of an idealist…

So, I will say this: If Microsoft won’t change their attitude soon, then in a couple of months the security of Vista (from the typical malware’s point of view) will be equal to the security of current XP systems (which means, not too impressive).

\ No newline at end of file

diff --git a/_posts/2007-02-13-confiusion-about-joke.html b/_posts/2007-02-13-confiusion-about-joke.html

index 74260ea..9d2595a 100644

--- a/_posts/2007-02-13-confiusion-about-joke.html

+++ b/_posts/2007-02-13-confiusion-about-joke.html

@@ -9,4 +9,4 @@

blogger_orig_url: http://theinvisiblethings.blogspot.com/2007/02/confiusion-about-joke.html

---

-It seems that many people didn’t fully understand why I wrote the previous post – Vista Security Model – A Big Joke... There are two things which should be distinguished:

1) The fact that UAC design assumes that every setup executable should be run elevated (and that a user doesn't really have a choice to run it from a non-elevated account),

2) The fact that UAC implementation contains bug(s), like e.g. the bug I pointed out in my article, which allows a low integrity level process to send WM_KEYDOWN messages to a command prompt window running at high integrity level.

I was pissed off not because of #1, but because Microsoft employee - Mark Russinovich - declared that all implementation bugs in UAC are not to be considered as security bugs.

True, I also don't like the fact that UAC forces users to run every setup program with elevated privileges (fact #1), but I can understand such a design decision (as being a compromise between usability and security) and this was not the reason why I wrote "The Joke Post".

\ No newline at end of file

+It seems that many people didn’t fully understand why I wrote the previous post – Vista Security Model – A Big Joke... There are two things which should be distinguished:

1) The fact that UAC design assumes that every setup executable should be run elevated (and that a user doesn't really have a choice to run it from a non-elevated account),

2) The fact that UAC implementation contains bug(s), like e.g. the bug I pointed out in my article, which allows a low integrity level process to send WM_KEYDOWN messages to a command prompt window running at high integrity level.

I was pissed off not because of #1, but because Microsoft employee - Mark Russinovich - declared that all implementation bugs in UAC are not to be considered as security bugs.

True, I also don't like the fact that UAC forces users to run every setup program with elevated privileges (fact #1), but I can understand such a design decision (as being a compromise between usability and security) and this was not the reason why I wrote "The Joke Post".

\ No newline at end of file

diff --git a/_posts/2007-03-05-handy-tool-to-play-with-windows.html b/_posts/2007-03-05-handy-tool-to-play-with-windows.html

index f9c25aa..0dabf44 100644

--- a/_posts/2007-03-05-handy-tool-to-play-with-windows.html

+++ b/_posts/2007-03-05-handy-tool-to-play-with-windows.html

@@ -9,4 +9,4 @@

blogger_orig_url: http://theinvisiblethings.blogspot.com/2007/03/handy-tool-to-play-with-windows.html

---

-Mark Minasi wrote to me recently to point out that his new tool, chml, is capable of setting NoReadUp and NoExecuteUp policy on file objects, in addition to the standard NoWriteUp policy, which is used by default on Vista.

As I wrote before the default IL policy used on Vista assumes only the NoWriteUp policy. That means that all objects which do not have assigned any IL explicitly (and consequently are treated as if they were marked with Medium IL) can be read by low integrity processes (only writes are prevented). Also, the standard Windows icacls command, which allows to set IL for file objects, assumes always the NoWriteUp policy only (unless I’m missing some secret switch).

However, it’s possible, for each object, to define not only the integrity level but also the policy which will be used to access it. All this information is stored in the same SACE which also defines the IL.

There doesn’t seem to be too much documentation from Microsoft about how to set those permissions, except this paper about Protected Mode IE and the sddl.h file itself.

Anyway, it’s good to see a tool like chml as it allows to do some cool things in a very simple way. E.g. consider that you have some secret documents in the folder c:\secretes and that you don’t feel like sharing those files with anybody who can exploit your Protected Mode IE. As I pointed out in my previous article, by default all your personal files are accessible to your Protected Mode IE low integrity process, so in the event of successful exploitation the attacker is free to steal them all. However now, using Mark Minasi’s tool, you can just do this:

chml.exe c:\secrets -i:m -nr -nx

This should prevent all the low IL processes, like e.g. Protected Mode IE, from reading the contents of your secret directory.

BTW, you can use chml to also examine the SACE which was created:

chml.exe c:\secrets -showsddl

and you should get something like that as a result:

SDDL string for c:\secrets's integrity label=S:(ML;OICI;NRNX;;;ME)

Where S means that it’s an SACE (in contrast to e.g. DACE), ML says that this ACE defines mandatory label, OICI means “Object Inherit” and “Container Inherit”, NRNX defines that to access this object the NoReadUp and NoExecuteUp policies should be used (which also implies the NoWriteUp BTW) and finally the ME stands for Medium Integrity Level.

All credits go to Mark Minasi and the Windows IL team :)

As a side note: the updated slides for my recent Black Hat DC talk about cheating hardware based memory acquisition can be found here. You can also get the demo movies here.

\ No newline at end of file

+Mark Minasi wrote to me recently to point out that his new tool, chml, is capable of setting NoReadUp and NoExecuteUp policy on file objects, in addition to the standard NoWriteUp policy, which is used by default on Vista.

As I wrote before the default IL policy used on Vista assumes only the NoWriteUp policy. That means that all objects which do not have assigned any IL explicitly (and consequently are treated as if they were marked with Medium IL) can be read by low integrity processes (only writes are prevented). Also, the standard Windows icacls command, which allows to set IL for file objects, assumes always the NoWriteUp policy only (unless I’m missing some secret switch).

However, it’s possible, for each object, to define not only the integrity level but also the policy which will be used to access it. All this information is stored in the same SACE which also defines the IL.

There doesn’t seem to be too much documentation from Microsoft about how to set those permissions, except this paper about Protected Mode IE and the sddl.h file itself.

Anyway, it’s good to see a tool like chml as it allows to do some cool things in a very simple way. E.g. consider that you have some secret documents in the folder c:\secretes and that you don’t feel like sharing those files with anybody who can exploit your Protected Mode IE. As I pointed out in my previous article, by default all your personal files are accessible to your Protected Mode IE low integrity process, so in the event of successful exploitation the attacker is free to steal them all. However now, using Mark Minasi’s tool, you can just do this:

chml.exe c:\secrets -i:m -nr -nx

This should prevent all the low IL processes, like e.g. Protected Mode IE, from reading the contents of your secret directory.

BTW, you can use chml to also examine the SACE which was created:

chml.exe c:\secrets -showsddl

and you should get something like that as a result:

SDDL string for c:\secrets's integrity label=S:(ML;OICI;NRNX;;;ME)

Where S means that it’s an SACE (in contrast to e.g. DACE), ML says that this ACE defines mandatory label, OICI means “Object Inherit” and “Container Inherit”, NRNX defines that to access this object the NoReadUp and NoExecuteUp policies should be used (which also implies the NoWriteUp BTW) and finally the ME stands for Medium Integrity Level.

All credits go to Mark Minasi and the Windows IL team :)

As a side note: the updated slides for my recent Black Hat DC talk about cheating hardware based memory acquisition can be found here. You can also get the demo movies here.

\ No newline at end of file

diff --git a/_posts/2007-03-26-game-is-over.html b/_posts/2007-03-26-game-is-over.html

index ac8c977..b5a33ce 100644

--- a/_posts/2007-03-26-game-is-over.html

+++ b/_posts/2007-03-26-game-is-over.html

@@ -10,4 +10,4 @@

blogger_orig_url: http://theinvisiblethings.blogspot.com/2007/03/game-is-over.html

---

-People often say that once an attacker gets access to the kernel the game is over! That’s true indeed these days, and most of the research I have done over the past two years or so, was about proofing just that. Some people, however, go a bit further and say, that thus there is no point in researching ways to detect system compromises and, once an attacker got in, you should simply assume everything has been compromised and replace all the components, i.e. buy new machine (as the attacker might have modified the BIOS or re-flashed PCI EEPROMs), reinstall OS, all applications, etc.

However, they miss one little detail – how can they actually know that the attacker got access to the system and that the game is over indeed and we need to reinstall just now?

Well, we simply assume that the attacker had to make some mistake and that we, sooner or later, will find out. But what if she didn’t make a mistake?

There are several trends of how this problem should be addressed in a more general and elegant way though. Most of them are based on a proactive approach. Let’s have a quick look at them…

- One generic solution is to build in a prevention technology into the OS. That includes all the anti-exploitation mechanisms, like e.g. ASLR, Non Executable memory, Stack Guard/GS, and others, as well as some little design changes into OS, like e.g. implementation of least-privilege principle (think e.g. UAC in Vista) and some sort of kernel protection (e.g. securelevel in BSD, grsecurity on Linux, signed drivers in Vista, etc).

This has been undoubtedly the most popular approach for the last couple of years and recently it gets even more popular, as Microsoft implemented most of those techniques in Vista.

However, everybody who follows the security research for at least several years should know that all those clever mechanisms have all been bypassed at least once in their history. That includes attacks against Stack Guard protection presented back in 2000 by Bulba and Kil3r, several ways to bypass PaX ASLR, like those described by Nergal in 2001 and by others several months later as well as exploiting the privilege elevation bug in PaX discovered by its author in 2005. Also the Microsoft's Hardware DEP (AKA NX) has been demonstrated to be bypassable by skape and Skywing in 2005.

Similarly, kernel protection mechanisms have also been bypassed over the past years, starting e.g. with this nice attack against grsecurity /dev/(k)mem protection presented by Guillaume Pelat in 2002. In 2006 Loic Duflot demonstrated that BSD's famous securelevel mechanism can also be bypassed. And, also last year, I showed that Vista x64 kernel protection is not foolproof either.

The point is – all those hardening techniques are designed to make exploitation harder or to limit the damage after a successful exploitation, but not to be 100% foolproof. On the other hand, it must be said, that they probably represent the best prevention solutions available for us these days.

- Another approach is to dramatically redesign the whole OS in such a way that all components (like e.g. drivers and serves) are compartmentalized, e.g. run as separate processes in usermode, and consequently are isolated not only from each other but also from the OS kernel (micro kernel). The idea here is that the most critical components, i.e. the micro kernel, is very small and can be easily verified. Example of such OS is Minix3 which is still under development though.

Undoubtedly this is a very good approach to minimize impact from system or driver faults, but does not protect us against malicious system compromises. After all if an attacker exploits a bug in a web browser, she may only be interested in modifying the browser’s code. Sure, she probably would not be able to get access to the micro kernel, but why would she really need it?

Imagine, for example, the following common scenario: many online banking systems require users to use smart cards to sign all transaction requests (e.g. money transfers). This usually works by having a browser (more specifically an ActiveX control or Firefox’s plugin) to display a message to a user that he or she is about to make e.g. a wire transfer to a given account number for a given amount of money. If the user confirms that action, they should press an ‘Accept’ button, which instructs browser to send the message to the smart card for signing. The message itself is usually just some kind of formatted text message specifying the source and destination account numbers, amount of money, date and time stamp etc. Then the user is asked to insert the smart card, which contains his or her private key (issued by the bank) and to also enter the PIN code. The latter can be done either by using the same browser applet or, in slightly more secure implementations, by the smart card reader itself, if it has a pad for entering PINs.

Obviously the point here is that malware should not be able to forge the digital signature and only the legitimate user has access to the smart card and also knows the card’s PIN, so nobody else will be able to sign that message with the user’s key.

However, it’s just enough for the attacker to replace the message while it’s being send to the card, while displaying the original message in the browser’s window. This all can be done by just modifying (“hooking”) the browser’s in-memory code and/or data. No need for kernel malware, yet the system (the browser more specifically) is compromised!

Still, one good thing about such a system design is that if we don’t allow an attacker to compromise the microkernel, then, at least in theory, we can write a detector capable of finding that some (malicious) changes to the browsers memory have been introduced indeed. However, in practice, we would have to know how exactly the browser’s memory should look like, e.g. which function pointers in Firefox’s code should be verified in order to find out whether such a compromise has indeed occurred. Unfortunately we can not do that today.

- Alternative approach to the above two, which does not require any dramatic changes into OS, is to make use of so called sound static code analyzers to verify all sensitive code in OS and applications. The soundness property assures that the analyzer has been mathematically proven not to miss even a single potential run time error, which includes e.g. unintentional execution flow modifications. The catch here is that soundness doesn’t mean that the analyzer doesn’t generate false positives. It’s actually mathematically proven that we can’t have such an ideal tool (i.e. with zero false positive rate), as the problem of analyzing all possible program execution paths is incomputable. Thus, the practical analyzers always consider some superset of all possible execution flows, which is easy to compute, yet may introduce some false alarms and the whole trick is how to choose that superset so that the number of false positives is minimal.

ASTREE is an example of a sound static code analyzer for the C language (although it doesn’t support programs which make use of dynamic memory allocation) and it apparently has been used to verify the primary flight control software for Airbus A340 and A380. Unfortunately, there doesn’t seem to be any publicly available sound binary code static analyzers… (if anybody knows any links, you’re more then welcome to paste the links under this post – just please make sure you’re referring to sound analyzers).

If we had such sound and precise (i.e. with minimal rate of false alarms) binary static code analyzer that could be a big breakthrough in the OS and application security.

We could imagine, for example, a special authority for signing device drivers for various OSes and that they would first perform such a formal static validation on submitted drivers and, once passed the test, the drivers would be digitally signed. Plus, the OS kernel itself would be validated itself by the vendor and would accept only those drivers which were signed by the driver verification authority. The authority could be an OS vendor itself or a separate 3rd party organization. Additionally we could also require that the code of all security critical applications, like e.g. web browser be also signed by such an authority and set a special policy in our OS to allow e.g. only signed applications to access network.

The only one week point here is, that if the private key used by the certification authority gets compromised, then the game is over and nobody really knows that… For this reason it would be good, to have more then one certification authority and require that each driver/application be signed by at least two independent authorities.

From the above three approaches only the last one can guarantee that our system will not get compromised ever. The only problem here is that… there are no tools today for static binary code analysis that would be proved to be sound and also precise enough to be used in practice…

So, today, as far as proactive solutions are considered, we’re left only with solutions #1 and #2, which, as discussed above, can not protect OS and applications from compromises in 100%. And, to make it worse, do not offer any clue, whether the compromise actually occurred.

That’s why I’m trying so much to promote the idea of Verifiable Operating Systems, which should allow to at least find out (in a systematic way) whether the system in question has been compromised or not (but, unfortunately not to find whether the single-shot incident occurred). The point is that the number of required design changes should be fairly small. There are some problems with it too, like e.g. verifying JIT-like code, but hopefully they can be solved in the near feature. Expect me to write more on this topic in the near feature.

Special thanks to Halvar Flake for eye-opening discussions about sound code analyzers and OS security in general.

\ No newline at end of file

+People often say that once an attacker gets access to the kernel the game is over! That’s true indeed these days, and most of the research I have done over the past two years or so, was about proofing just that. Some people, however, go a bit further and say, that thus there is no point in researching ways to detect system compromises and, once an attacker got in, you should simply assume everything has been compromised and replace all the components, i.e. buy new machine (as the attacker might have modified the BIOS or re-flashed PCI EEPROMs), reinstall OS, all applications, etc.

However, they miss one little detail – how can they actually know that the attacker got access to the system and that the game is over indeed and we need to reinstall just now?

Well, we simply assume that the attacker had to make some mistake and that we, sooner or later, will find out. But what if she didn’t make a mistake?

There are several trends of how this problem should be addressed in a more general and elegant way though. Most of them are based on a proactive approach. Let’s have a quick look at them…

- One generic solution is to build in a prevention technology into the OS. That includes all the anti-exploitation mechanisms, like e.g. ASLR, Non Executable memory, Stack Guard/GS, and others, as well as some little design changes into OS, like e.g. implementation of least-privilege principle (think e.g. UAC in Vista) and some sort of kernel protection (e.g. securelevel in BSD, grsecurity on Linux, signed drivers in Vista, etc).

This has been undoubtedly the most popular approach for the last couple of years and recently it gets even more popular, as Microsoft implemented most of those techniques in Vista.

However, everybody who follows the security research for at least several years should know that all those clever mechanisms have all been bypassed at least once in their history. That includes attacks against Stack Guard protection presented back in 2000 by Bulba and Kil3r, several ways to bypass PaX ASLR, like those described by Nergal in 2001 and by others several months later as well as exploiting the privilege elevation bug in PaX discovered by its author in 2005. Also the Microsoft's Hardware DEP (AKA NX) has been demonstrated to be bypassable by skape and Skywing in 2005.

Similarly, kernel protection mechanisms have also been bypassed over the past years, starting e.g. with this nice attack against grsecurity /dev/(k)mem protection presented by Guillaume Pelat in 2002. In 2006 Loic Duflot demonstrated that BSD's famous securelevel mechanism can also be bypassed. And, also last year, I showed that Vista x64 kernel protection is not foolproof either.

The point is – all those hardening techniques are designed to make exploitation harder or to limit the damage after a successful exploitation, but not to be 100% foolproof. On the other hand, it must be said, that they probably represent the best prevention solutions available for us these days.

- Another approach is to dramatically redesign the whole OS in such a way that all components (like e.g. drivers and serves) are compartmentalized, e.g. run as separate processes in usermode, and consequently are isolated not only from each other but also from the OS kernel (micro kernel). The idea here is that the most critical components, i.e. the micro kernel, is very small and can be easily verified. Example of such OS is Minix3 which is still under development though.

Undoubtedly this is a very good approach to minimize impact from system or driver faults, but does not protect us against malicious system compromises. After all if an attacker exploits a bug in a web browser, she may only be interested in modifying the browser’s code. Sure, she probably would not be able to get access to the micro kernel, but why would she really need it?