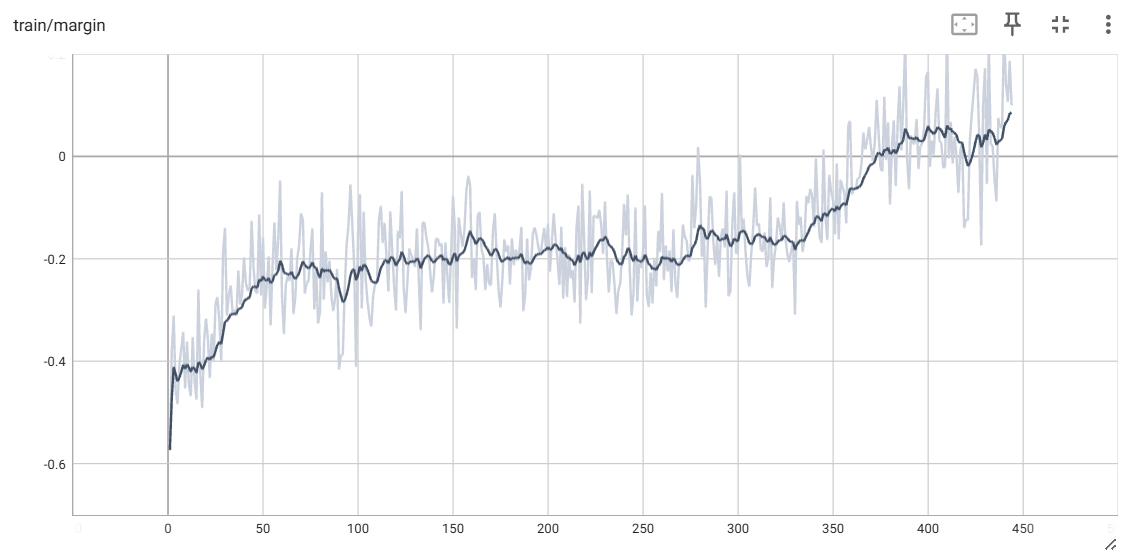

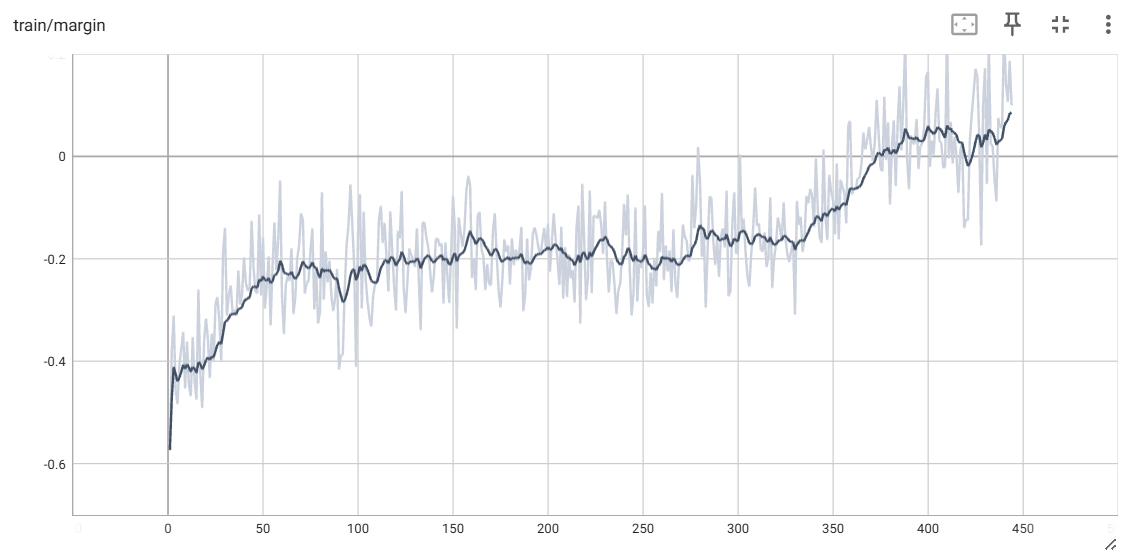

@@ -744,13 +772,50 @@ with a Reference-Free Reward](https://arxiv.org/pdf/2405.14734) (SimPO). Which i

### Alternative Option For RLHF: Odds Ratio Preference Optimization

-We support the method introduced in the paper [ORPO: Monolithic Preference Optimization without Reference Model](https://arxiv.org/abs/2403.07691) (ORPO). Which is a reference model free aligment method that mixes the SFT loss with a reinforcement learning loss that uses odds ratio as the implicit reward to enhance training stability and efficiency. Simply set the flag to disable the use of the reference model, set the reward target margin and enable length normalization in the DPO training script. To use ORPO in alignment, use the [train_orpo.sh](./examples/training_scripts/train_orpo.sh) script, You can set the value for `lambda` (which determine how strongly the reinforcement learning loss affect the training) but it is optional.

+We support the method introduced in the paper [ORPO: Monolithic Preference Optimization without Reference Model](https://arxiv.org/abs/2403.07691) (ORPO), a reference-model-free alignment method that mixes the SFT loss with a reinforcement learning loss that uses the odds ratio as the implicit reward to enhance training stability and efficiency. To use ORPO for alignment, use the [train_orpo.sh](./training_scripts/train_orpo.sh) script. You can optionally set the value of `lambda`, which determines how strongly the reinforcement learning loss affects training.

#### ORPO Result

+ +

+
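For readers unfamiliar with ORPO, the objective described above can be sketched in plain Python. This is a minimal illustration of the loss as formulated in the ORPO paper (an SFT negative log-likelihood plus `lambda` times an odds-ratio term), not the code behind `train_orpo.sh`; the function names, the per-token log-probability inputs, and the default `lam=0.1` are assumptions chosen for illustration.

```python
import math
from typing import Sequence


def mean_logprob(token_logprobs: Sequence[float]) -> float:
    """Average per-token log-probability of a response (length normalisation)."""
    return sum(token_logprobs) / len(token_logprobs)


def log_odds(logp: float) -> float:
    """log(p / (1 - p)) computed from log p; assumes p < 1 (logp < 0)."""
    return logp - math.log1p(-math.exp(logp))


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def orpo_loss(chosen: Sequence[float], rejected: Sequence[float], lam: float = 0.1) -> float:
    """Hypothetical single-pair ORPO loss from per-token log-probs.

    loss = L_SFT + lam * L_OR, where
      L_SFT = -mean log p(chosen)
      L_OR  = -log sigmoid(log_odds(chosen) - log_odds(rejected))
    """
    logp_chosen = mean_logprob(chosen)
    logp_rejected = mean_logprob(rejected)
    sft = -logp_chosen
    z = log_odds(logp_chosen) - log_odds(logp_rejected)
    odds_ratio_term = softplus(-z)  # -log(sigmoid(z)) == log(1 + exp(-z))
    return sft + lam * odds_ratio_term
```

Setting `lam` to 0 reduces the sketch to plain SFT on the chosen responses; larger values weight the preference (odds-ratio) signal more heavily.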

@@ -310,4 +310,14 @@ If you wish to cite relevant research papers, you can find the reference below.

journal={arXiv},

year={2023}

}

+

+# Distrifusion

+@InProceedings{Li_2024_CVPR,

+ author={Li, Muyang and Cai, Tianle and Cao, Jiaxin and Zhang, Qinsheng and Cai, Han and Bai, Junjie and Jia, Yangqing and Li, Kai and Han, Song},

+ title={DistriFusion: Distributed Parallel Inference for High-Resolution Diffusion Models},

+ booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

+ month={June},

+ year={2024},

+ pages={7183-7193}

+}

```

diff --git a/colossalai/inference/config.py b/colossalai/inference/config.py

index 1beb86874826..072ddbcfd298 100644

--- a/colossalai/inference/config.py

+++ b/colossalai/inference/config.py

@@ -186,6 +186,7 @@ class InferenceConfig(RPC_PARAM):

enable_streamingllm(bool): Whether to use StreamingLLM, the relevant algorithms refer to the paper at https://arxiv.org/pdf/2309.17453 for implementation.

start_token_size(int): The size of the start tokens, when using StreamingLLM.

generated_token_size(int): The size of the generated tokens, When using StreamingLLM.

+ patched_parallelism_size(int): The patch parallelism degree, i.e. the number of devices the latent is split across, when using DistriFusion.

"""

# NOTE: arrange configs according to their importance and frequency of usage

@@ -245,6 +246,11 @@ class InferenceConfig(RPC_PARAM):

start_token_size: int = 4

generated_token_size: int = 512

+ # Acceleration for diffusion models (PipeFusion or DistriFusion)

+ patched_parallelism_size: int = 1 # for distrifusion

+ # pipeFusion_m_size: int = 1 # for pipefusion

+ # pipeFusion_n_size: int = 1 # for pipefusion

+

def __post_init__(self):

self.max_context_len_to_capture = self.max_input_len + self.max_output_len

self._verify_config()

@@ -288,6 +294,14 @@ def _verify_config(self) -> None:

# Thereafter, we swap out tokens in units of blocks, and always swapping out the second block when the generated tokens exceeded the limit.

self.start_token_size = self.block_size

+ # check Distrifusion

+ # TODO(@lry89757) need more detailed check

+ if self.patched_parallelism_size > 1:

+ # self.use_patched_parallelism = True

+ self.tp_size = (

+ self.patched_parallelism_size

+ ) # not real tensor parallelism: tp_size is reused so that the existing tp_size-based checks pass with patched_parallelism_size ranks

+

# check prompt template

if self.prompt_template is None:

return

@@ -324,6 +338,7 @@ def to_model_shard_inference_config(self) -> "ModelShardInferenceConfig":

use_cuda_kernel=self.use_cuda_kernel,

use_spec_dec=self.use_spec_dec,

use_flash_attn=use_flash_attn,

+ patched_parallelism_size=self.patched_parallelism_size,

)

return model_inference_config

@@ -396,6 +411,7 @@ class ModelShardInferenceConfig:

use_cuda_kernel: bool = False

use_spec_dec: bool = False

use_flash_attn: bool = False

+ patched_parallelism_size: int = 1 # for diffusion model, Distrifusion Technique

@dataclass

diff --git a/colossalai/inference/core/diffusion_engine.py b/colossalai/inference/core/diffusion_engine.py

index 75b9889bf28d..8bed508cba55 100644

--- a/colossalai/inference/core/diffusion_engine.py

+++ b/colossalai/inference/core/diffusion_engine.py

@@ -11,7 +11,7 @@

from colossalai.accelerator import get_accelerator

from colossalai.cluster import ProcessGroupMesh

from colossalai.inference.config import DiffusionGenerationConfig, InferenceConfig, ModelShardInferenceConfig

-from colossalai.inference.modeling.models.diffusion import DiffusionPipe

+from colossalai.inference.modeling.layers.diffusion import DiffusionPipe

from colossalai.inference.modeling.policy import model_policy_map

from colossalai.inference.struct import DiffusionSequence

from colossalai.inference.utils import get_model_size, get_model_type

diff --git a/colossalai/inference/modeling/models/diffusion.py b/colossalai/inference/modeling/layers/diffusion.py

similarity index 100%

rename from colossalai/inference/modeling/models/diffusion.py

rename to colossalai/inference/modeling/layers/diffusion.py

diff --git a/colossalai/inference/modeling/layers/distrifusion.py b/colossalai/inference/modeling/layers/distrifusion.py

new file mode 100644

index 000000000000..ea97cceefac9

--- /dev/null

+++ b/colossalai/inference/modeling/layers/distrifusion.py

@@ -0,0 +1,626 @@

+# Code referenced and adapted from:

+# https://github.com/huggingface/diffusers/blob/v0.29.0-release/src/diffusers

+# https://github.com/PipeFusion/PipeFusion

+

+import inspect

+from typing import Any, Dict, List, Optional, Tuple, Union

+

+import torch

+import torch.distributed as dist

+import torch.nn.functional as F

+from diffusers.models import attention_processor

+from diffusers.models.attention import Attention

+from diffusers.models.embeddings import PatchEmbed, get_2d_sincos_pos_embed

+from diffusers.models.transformers.pixart_transformer_2d import PixArtTransformer2DModel

+from diffusers.models.transformers.transformer_sd3 import SD3Transformer2DModel

+from torch import nn

+from torch.distributed import ProcessGroup

+

+from colossalai.inference.config import ModelShardInferenceConfig

+from colossalai.logging import get_dist_logger

+from colossalai.shardformer.layer.parallel_module import ParallelModule

+from colossalai.utils import get_current_device

+

+try:

+ from flash_attn import flash_attn_func

+

+ HAS_FLASH_ATTN = True

+except ImportError:

+ HAS_FLASH_ATTN = False

+

+

+logger = get_dist_logger(__name__)

+

+

+# adapted from https://github.com/huggingface/diffusers/blob/v0.29.0-release/src/diffusers/models/transformers/transformer_2d.py

+def PixArtAlphaTransformer2DModel_forward(

+ self: PixArtTransformer2DModel,

+ hidden_states: torch.Tensor,

+ encoder_hidden_states: Optional[torch.Tensor] = None,

+ timestep: Optional[torch.LongTensor] = None,

+ added_cond_kwargs: Dict[str, torch.Tensor] = None,

+ class_labels: Optional[torch.LongTensor] = None,

+ cross_attention_kwargs: Dict[str, Any] = None,

+ attention_mask: Optional[torch.Tensor] = None,

+ encoder_attention_mask: Optional[torch.Tensor] = None,

+ return_dict: bool = True,

+):

+ assert hasattr(

+ self, "patched_parallel_size"

+ ), "Please check your policy: `PixArtTransformer2DModel` must have the attribute `patched_parallel_size`"

+

+ if cross_attention_kwargs is not None:

+ if cross_attention_kwargs.get("scale", None) is not None:

+ logger.warning("Passing `scale` to `cross_attention_kwargs` is deprecated. `scale` will be ignored.")

+ # ensure attention_mask is a bias, and give it a singleton query_tokens dimension.

+ # we may have done this conversion already, e.g. if we came here via UNet2DConditionModel#forward.

+ # we can tell by counting dims; if ndim == 2: it's a mask rather than a bias.

+ # expects mask of shape:

+ # [batch, key_tokens]

+ # adds singleton query_tokens dimension:

+ # [batch, 1, key_tokens]

+ # this helps to broadcast it as a bias over attention scores, which will be in one of the following shapes:

+ # [batch, heads, query_tokens, key_tokens] (e.g. torch sdp attn)

+ # [batch * heads, query_tokens, key_tokens] (e.g. xformers or classic attn)

+ if attention_mask is not None and attention_mask.ndim == 2:

+ # assume that mask is expressed as:

+ # (1 = keep, 0 = discard)

+ # convert mask into a bias that can be added to attention scores:

+ # (keep = +0, discard = -10000.0)

+ attention_mask = (1 - attention_mask.to(hidden_states.dtype)) * -10000.0

+ attention_mask = attention_mask.unsqueeze(1)

+

+ # convert encoder_attention_mask to a bias the same way we do for attention_mask

+ if encoder_attention_mask is not None and encoder_attention_mask.ndim == 2:

+ encoder_attention_mask = (1 - encoder_attention_mask.to(hidden_states.dtype)) * -10000.0

+ encoder_attention_mask = encoder_attention_mask.unsqueeze(1)

+

+ # 1. Input

+ batch_size = hidden_states.shape[0]

+ height, width = (

+ hidden_states.shape[-2] // self.config.patch_size,

+ hidden_states.shape[-1] // self.config.patch_size,

+ )

+ hidden_states = self.pos_embed(hidden_states)

+

+ timestep, embedded_timestep = self.adaln_single(

+ timestep, added_cond_kwargs, batch_size=batch_size, hidden_dtype=hidden_states.dtype

+ )

+

+ if self.caption_projection is not None:

+ encoder_hidden_states = self.caption_projection(encoder_hidden_states)

+ encoder_hidden_states = encoder_hidden_states.view(batch_size, -1, hidden_states.shape[-1])

+

+ # 2. Blocks

+ for block in self.transformer_blocks:

+ hidden_states = block(

+ hidden_states,

+ attention_mask=attention_mask,

+ encoder_hidden_states=encoder_hidden_states,

+ encoder_attention_mask=encoder_attention_mask,

+ timestep=timestep,

+ cross_attention_kwargs=cross_attention_kwargs,

+ class_labels=class_labels,

+ )

+

+ # 3. Output

+ shift, scale = (self.scale_shift_table[None] + embedded_timestep[:, None].to(self.scale_shift_table.device)).chunk(

+ 2, dim=1

+ )

+ hidden_states = self.norm_out(hidden_states)

+ # Modulation

+ hidden_states = hidden_states * (1 + scale.to(hidden_states.device)) + shift.to(hidden_states.device)

+ hidden_states = self.proj_out(hidden_states)

+ hidden_states = hidden_states.squeeze(1)

+

+ # unpatchify

+ hidden_states = hidden_states.reshape(

+ shape=(

+ -1,

+ height // self.patched_parallel_size,

+ width,

+ self.config.patch_size,

+ self.config.patch_size,

+ self.out_channels,

+ )

+ )

+ hidden_states = torch.einsum("nhwpqc->nchpwq", hidden_states)

+ output = hidden_states.reshape(

+ shape=(

+ -1,

+ self.out_channels,

+ height // self.patched_parallel_size * self.config.patch_size,

+ width * self.config.patch_size,

+ )

+ )

+

+ # DistriFusion: all-gather every rank's slice of the output and concatenate along the height dimension (dim=2)

+ if hasattr(self, "patched_parallel_size"):

+ if (getattr(self, "output_buffer", None) is None) or (self.output_buffer.shape != output.shape):

+ self.output_buffer = torch.empty_like(output)

+ if (getattr(self, "buffer_list", None) is None) or (self.buffer_list[0].shape != output.shape):

+ self.buffer_list = [torch.empty_like(output) for _ in range(self.patched_parallel_size)]

+ output = output.contiguous()

+ dist.all_gather(self.buffer_list, output, async_op=False)

+ torch.cat(self.buffer_list, dim=2, out=self.output_buffer)

+ output = self.output_buffer

+

+ return (output,)

+

+

+# adapted from https://github.com/huggingface/diffusers/blob/v0.29.0-release/src/diffusers/models/transformers/transformer_sd3.py

+def SD3Transformer2DModel_forward(

+ self: SD3Transformer2DModel,

+ hidden_states: torch.FloatTensor,

+ encoder_hidden_states: torch.FloatTensor = None,

+ pooled_projections: torch.FloatTensor = None,

+ timestep: torch.LongTensor = None,

+ joint_attention_kwargs: Optional[Dict[str, Any]] = None,

+ return_dict: bool = True,

+) -> Tuple[torch.FloatTensor]:

+

+ assert hasattr(

+ self, "patched_parallel_size"

+ ), "Please check your policy: `SD3Transformer2DModel` must have the attribute `patched_parallel_size`"

+

+ height, width = hidden_states.shape[-2:]

+

+ hidden_states = self.pos_embed(hidden_states) # takes care of adding positional embeddings too.

+ temb = self.time_text_embed(timestep, pooled_projections)

+ encoder_hidden_states = self.context_embedder(encoder_hidden_states)

+

+ for block in self.transformer_blocks:

+ encoder_hidden_states, hidden_states = block(

+ hidden_states=hidden_states, encoder_hidden_states=encoder_hidden_states, temb=temb

+ )

+

+ hidden_states = self.norm_out(hidden_states, temb)

+ hidden_states = self.proj_out(hidden_states)

+

+ # unpatchify

+ patch_size = self.config.patch_size

+ height = height // patch_size // self.patched_parallel_size

+ width = width // patch_size

+

+ hidden_states = hidden_states.reshape(

+ shape=(hidden_states.shape[0], height, width, patch_size, patch_size, self.out_channels)

+ )

+ hidden_states = torch.einsum("nhwpqc->nchpwq", hidden_states)

+ output = hidden_states.reshape(

+ shape=(hidden_states.shape[0], self.out_channels, height * patch_size, width * patch_size)

+ )

+

+ # DistriFusion: all-gather every rank's slice of the output and concatenate along the height dimension (dim=2)

+ if hasattr(self, "patched_parallel_size"):

+ if (getattr(self, "output_buffer", None) is None) or (self.output_buffer.shape != output.shape):

+ self.output_buffer = torch.empty_like(output)

+ if (getattr(self, "buffer_list", None) is None) or (self.buffer_list[0].shape != output.shape):

+ self.buffer_list = [torch.empty_like(output) for _ in range(self.patched_parallel_size)]

+ output = output.contiguous()

+ dist.all_gather(self.buffer_list, output, async_op=False)

+ torch.cat(self.buffer_list, dim=2, out=self.output_buffer)

+ output = self.output_buffer

+

+ return (output,)

+

+

+# Code adapted from: https://github.com/PipeFusion/PipeFusion/blob/main/pipefuser/modules/dit/patch_parallel/patchembed.py

+class DistrifusionPatchEmbed(ParallelModule):

+ def __init__(

+ self,

+ module: PatchEmbed,

+ process_group: Union[ProcessGroup, List[ProcessGroup]],

+ model_shard_infer_config: ModelShardInferenceConfig = None,

+ ):

+ super().__init__()

+ self.module = module

+ self.rank = dist.get_rank(group=process_group)

+ self.patched_parallelism_size = model_shard_infer_config.patched_parallelism_size

+

+ @staticmethod

+ def from_native_module(module: PatchEmbed, process_group: Union[ProcessGroup, List[ProcessGroup]], *args, **kwargs):

+ model_shard_infer_config = kwargs.get("model_shard_infer_config", None)

+ distrifusion_embed = DistrifusionPatchEmbed(

+ module, process_group, model_shard_infer_config=model_shard_infer_config

+ )

+ return distrifusion_embed

+

+ def forward(self, latent):

+ module = self.module

+ if module.pos_embed_max_size is not None:

+ height, width = latent.shape[-2:]

+ else:

+ height, width = latent.shape[-2] // module.patch_size, latent.shape[-1] // module.patch_size

+

+ latent = module.proj(latent)

+ if module.flatten:

+ latent = latent.flatten(2).transpose(1, 2) # BCHW -> BNC

+ if module.layer_norm:

+ latent = module.norm(latent)

+ if module.pos_embed is None:

+ return latent.to(latent.dtype)

+ # Interpolate or crop positional embeddings as needed

+ if module.pos_embed_max_size:

+ pos_embed = module.cropped_pos_embed(height, width)

+ else:

+ if module.height != height or module.width != width:

+ pos_embed = get_2d_sincos_pos_embed(

+ embed_dim=module.pos_embed.shape[-1],

+ grid_size=(height, width),

+ base_size=module.base_size,

+ interpolation_scale=module.interpolation_scale,

+ )

+ pos_embed = torch.from_numpy(pos_embed).float().unsqueeze(0).to(latent.device)

+ else:

+ pos_embed = module.pos_embed

+

+ b, c, h = pos_embed.shape

+ pos_embed = pos_embed.view(b, self.patched_parallelism_size, -1, h)[:, self.rank]

+

+ return (latent + pos_embed).to(latent.dtype)

+

+

+# Code adapted from: https://github.com/PipeFusion/PipeFusion/blob/main/pipefuser/modules/dit/patch_parallel/conv2d.py

+class DistrifusionConv2D(ParallelModule):

+

+ def __init__(

+ self,

+ module: nn.Conv2d,

+ process_group: Union[ProcessGroup, List[ProcessGroup]],

+ model_shard_infer_config: ModelShardInferenceConfig = None,

+ ):

+ super().__init__()

+ self.module = module

+ self.rank = dist.get_rank(group=process_group)

+ self.patched_parallelism_size = model_shard_infer_config.patched_parallelism_size

+

+ @staticmethod

+ def from_native_module(module: nn.Conv2d, process_group: Union[ProcessGroup, List[ProcessGroup]], *args, **kwargs):

+ model_shard_infer_config = kwargs.get("model_shard_infer_config", None)

+ distrifusion_conv = DistrifusionConv2D(module, process_group, model_shard_infer_config=model_shard_infer_config)

+ return distrifusion_conv

+

+ def sliced_forward(self, x: torch.Tensor) -> torch.Tensor:

+

+ b, c, h, w = x.shape

+

+ stride = self.module.stride[0]

+ padding = self.module.padding[0]

+

+ output_h = x.shape[2] // stride // self.patched_parallelism_size

+ idx = self.rank # rank within the patch-parallel process group, consistent with DistrifusionPatchEmbed

+ h_begin = output_h * idx * stride - padding

+ h_end = output_h * (idx + 1) * stride + padding

+ final_padding = [padding, padding, 0, 0]

+ if h_begin < 0:

+ h_begin = 0

+ final_padding[2] = padding

+ if h_end > h:

+ h_end = h

+ final_padding[3] = padding

+ sliced_input = x[:, :, h_begin:h_end, :]

+ padded_input = F.pad(sliced_input, final_padding, mode="constant")

+ return F.conv2d(

+ padded_input,

+ self.module.weight,

+ self.module.bias,

+ stride=stride,

+ padding="valid",

+ )

+

+ def forward(self, input: torch.Tensor) -> torch.Tensor:

+ output = self.sliced_forward(input)

+ return output

+

+

+# Code adapted from: https://github.com/huggingface/diffusers/blob/v0.29.0-release/src/diffusers/models/attention_processor.py

+class DistrifusionFusedAttention(ParallelModule):

+

+ def __init__(

+ self,

+ module: attention_processor.Attention,

+ process_group: Union[ProcessGroup, List[ProcessGroup]],

+ model_shard_infer_config: ModelShardInferenceConfig = None,

+ ):

+ super().__init__()

+ self.counter = 0

+ self.module = module

+ self.buffer_list = None

+ self.kv_buffer_idx = dist.get_rank(group=process_group)

+ self.patched_parallelism_size = model_shard_infer_config.patched_parallelism_size

+ self.handle = None

+ self.process_group = process_group

+ self.warm_step = 5 # for warmup

+

+ @staticmethod

+ def from_native_module(

+ module: attention_processor.Attention, process_group: Union[ProcessGroup, List[ProcessGroup]], *args, **kwargs

+ ) -> ParallelModule:

+ model_shard_infer_config = kwargs.get("model_shard_infer_config", None)

+ return DistrifusionFusedAttention(

+ module=module,

+ process_group=process_group,

+ model_shard_infer_config=model_shard_infer_config,

+ )

+

+ def _forward(

+ self,

+ attn: Attention,

+ hidden_states: torch.FloatTensor,

+ encoder_hidden_states: torch.FloatTensor = None,

+ attention_mask: Optional[torch.FloatTensor] = None,

+ *args,

+ **kwargs,

+ ) -> torch.FloatTensor:

+ residual = hidden_states

+

+ input_ndim = hidden_states.ndim

+ if input_ndim == 4:

+ batch_size, channel, height, width = hidden_states.shape

+ hidden_states = hidden_states.view(batch_size, channel, height * width).transpose(1, 2)

+ context_input_ndim = encoder_hidden_states.ndim

+ if context_input_ndim == 4:

+ batch_size, channel, height, width = encoder_hidden_states.shape

+ encoder_hidden_states = encoder_hidden_states.view(batch_size, channel, height * width).transpose(1, 2)

+

+ batch_size = encoder_hidden_states.shape[0]

+

+ # `sample` projections.

+ query = attn.to_q(hidden_states)

+ key = attn.to_k(hidden_states)

+ value = attn.to_v(hidden_states)

+

+ kv = torch.cat([key, value], dim=-1) # shape of kv now: (bs, seq_len // parallel_size, dim * 2)

+

+ if self.patched_parallelism_size == 1:

+ full_kv = kv

+ else:

+ if self.buffer_list is None: # buffer not created

+ full_kv = torch.cat([kv for _ in range(self.patched_parallelism_size)], dim=1)

+ elif self.counter <= self.warm_step:

+ # logger.info(f"warmup: {self.counter}")

+ dist.all_gather(

+ self.buffer_list,

+ kv,

+ group=self.process_group,

+ async_op=False,

+ )

+ full_kv = torch.cat(self.buffer_list, dim=1)

+ else:

+ # logger.info(f"use old kv to infer: {self.counter}")

+ self.buffer_list[self.kv_buffer_idx].copy_(kv)

+ full_kv = torch.cat(self.buffer_list, dim=1)

+ assert self.handle is None, "we should maintain the kv of last step"

+ self.handle = dist.all_gather(self.buffer_list, kv, group=self.process_group, async_op=True)

+

+ key, value = torch.split(full_kv, full_kv.shape[-1] // 2, dim=-1)

+

+ # `context` projections.

+ encoder_hidden_states_query_proj = attn.add_q_proj(encoder_hidden_states)

+ encoder_hidden_states_key_proj = attn.add_k_proj(encoder_hidden_states)

+ encoder_hidden_states_value_proj = attn.add_v_proj(encoder_hidden_states)

+

+ # attention

+ query = torch.cat([query, encoder_hidden_states_query_proj], dim=1)

+ key = torch.cat([key, encoder_hidden_states_key_proj], dim=1)

+ value = torch.cat([value, encoder_hidden_states_value_proj], dim=1)

+

+ inner_dim = key.shape[-1]

+ head_dim = inner_dim // attn.heads

+ query = query.view(batch_size, -1, attn.heads, head_dim).transpose(1, 2)

+ key = key.view(batch_size, -1, attn.heads, head_dim).transpose(1, 2)

+ value = value.view(batch_size, -1, attn.heads, head_dim).transpose(1, 2)

+

+ hidden_states = F.scaled_dot_product_attention(

+ query, key, value, dropout_p=0.0, is_causal=False

+ ) # NOTE(@lry89757) for torch >= 2.2, flash attn has been already integrated into scaled_dot_product_attention, https://pytorch.org/blog/pytorch2-2/

+ hidden_states = hidden_states.transpose(1, 2).reshape(batch_size, -1, attn.heads * head_dim)

+ hidden_states = hidden_states.to(query.dtype)

+

+ # Split the attention outputs.

+ hidden_states, encoder_hidden_states = (

+ hidden_states[:, : residual.shape[1]],

+ hidden_states[:, residual.shape[1] :],

+ )

+

+ # linear proj

+ hidden_states = attn.to_out[0](hidden_states)

+ # dropout

+ hidden_states = attn.to_out[1](hidden_states)

+ if not attn.context_pre_only:

+ encoder_hidden_states = attn.to_add_out(encoder_hidden_states)

+

+ if input_ndim == 4:

+ hidden_states = hidden_states.transpose(-1, -2).reshape(batch_size, channel, height, width)

+ if context_input_ndim == 4:

+ encoder_hidden_states = encoder_hidden_states.transpose(-1, -2).reshape(batch_size, channel, height, width)

+

+ return hidden_states, encoder_hidden_states

+

+ def forward(

+ self,

+ hidden_states: torch.Tensor,

+ encoder_hidden_states: Optional[torch.Tensor] = None,

+ attention_mask: Optional[torch.Tensor] = None,

+ **cross_attention_kwargs,

+ ) -> torch.Tensor:

+

+ if self.handle is not None:

+ self.handle.wait()

+ self.handle = None

+

+ b, l, c = hidden_states.shape

+ kv_shape = (b, l, self.module.to_k.out_features * 2)

+ if self.patched_parallelism_size > 1 and (self.buffer_list is None or self.buffer_list[0].shape != kv_shape):

+

+ self.buffer_list = [

+ torch.empty(kv_shape, dtype=hidden_states.dtype, device=get_current_device())

+ for _ in range(self.patched_parallelism_size)

+ ]

+

+ self.counter = 0

+

+ attn_parameters = set(inspect.signature(self.module.processor.__call__).parameters.keys())

+ quiet_attn_parameters = {"ip_adapter_masks"}

+ unused_kwargs = [

+ k for k, _ in cross_attention_kwargs.items() if k not in attn_parameters and k not in quiet_attn_parameters

+ ]

+ if len(unused_kwargs) > 0:

+ logger.warning(

+ f"cross_attention_kwargs {unused_kwargs} are not expected by {self.module.processor.__class__.__name__} and will be ignored."

+ )

+ cross_attention_kwargs = {k: w for k, w in cross_attention_kwargs.items() if k in attn_parameters}

+

+ output = self._forward(

+ self.module,

+ hidden_states,

+ encoder_hidden_states=encoder_hidden_states,

+ attention_mask=attention_mask,

+ **cross_attention_kwargs,

+ )

+

+ self.counter += 1

+

+ return output

+

+

+# Code adapted from: https://github.com/PipeFusion/PipeFusion/blob/main/pipefuser/modules/dit/patch_parallel/attn.py

+class DistriSelfAttention(ParallelModule):

+ def __init__(

+ self,

+ module: Attention,

+ process_group: Union[ProcessGroup, List[ProcessGroup]],

+ model_shard_infer_config: ModelShardInferenceConfig = None,

+ ):

+ super().__init__()

+ self.counter = 0

+ self.module = module

+ self.buffer_list = None

+ self.kv_buffer_idx = dist.get_rank(group=process_group)

+ self.patched_parallelism_size = model_shard_infer_config.patched_parallelism_size

+ self.handle = None

+ self.process_group = process_group

+ self.warm_step = 3 # for warmup

+

+ @staticmethod

+ def from_native_module(

+ module: Attention, process_group: Union[ProcessGroup, List[ProcessGroup]], *args, **kwargs

+ ) -> ParallelModule:

+ model_shard_infer_config = kwargs.get("model_shard_infer_config", None)

+ return DistriSelfAttention(

+ module=module,

+ process_group=process_group,

+ model_shard_infer_config=model_shard_infer_config,

+ )

+

+ def _forward(self, hidden_states: torch.FloatTensor, scale: float = 1.0):

+ attn = self.module

+ assert isinstance(attn, Attention)

+

+ residual = hidden_states

+

+ batch_size, sequence_length, _ = hidden_states.shape

+

+ query = attn.to_q(hidden_states)

+

+ encoder_hidden_states = hidden_states

+ k = self.module.to_k(encoder_hidden_states)

+ v = self.module.to_v(encoder_hidden_states)

+ kv = torch.cat([k, v], dim=-1) # shape of kv now: (bs, seq_len // parallel_size, dim * 2)

+

+ if self.patched_parallelism_size == 1:

+ full_kv = kv

+ else:

+ if self.buffer_list is None: # buffer not created

+ full_kv = torch.cat([kv for _ in range(self.patched_parallelism_size)], dim=1)

+ elif self.counter <= self.warm_step:

+ # logger.info(f"warmup: {self.counter}")

+ dist.all_gather(

+ self.buffer_list,

+ kv,

+ group=self.process_group,

+ async_op=False,

+ )

+ full_kv = torch.cat(self.buffer_list, dim=1)

+ else:

+ # logger.info(f"use old kv to infer: {self.counter}")

+ self.buffer_list[self.kv_buffer_idx].copy_(kv)

+ full_kv = torch.cat(self.buffer_list, dim=1)

+ assert self.handle is None, "we should maintain the kv of last step"

+ self.handle = dist.all_gather(self.buffer_list, kv, group=self.process_group, async_op=True)

+

+ if HAS_FLASH_ATTN:

+ # flash attn

+ key, value = torch.split(full_kv, full_kv.shape[-1] // 2, dim=-1)

+ inner_dim = key.shape[-1]

+ head_dim = inner_dim // attn.heads

+

+ query = query.view(batch_size, -1, attn.heads, head_dim)

+ key = key.view(batch_size, -1, attn.heads, head_dim)

+ value = value.view(batch_size, -1, attn.heads, head_dim)

+

+ hidden_states = flash_attn_func(query, key, value, dropout_p=0.0, causal=False)

+ hidden_states = hidden_states.reshape(batch_size, -1, attn.heads * head_dim).to(query.dtype)

+ else:

+ # naive attn

+ key, value = torch.split(full_kv, full_kv.shape[-1] // 2, dim=-1)

+

+ inner_dim = key.shape[-1]

+ head_dim = inner_dim // attn.heads

+

+ query = query.view(batch_size, -1, attn.heads, head_dim).transpose(1, 2)

+ key = key.view(batch_size, -1, attn.heads, head_dim).transpose(1, 2)

+ value = value.view(batch_size, -1, attn.heads, head_dim).transpose(1, 2)

+

+ # the output of sdp = (batch, num_heads, seq_len, head_dim)

+ # TODO: add support for attn.scale when we move to Torch 2.1

+ hidden_states = F.scaled_dot_product_attention(query, key, value, dropout_p=0.0, is_causal=False)

+

+ hidden_states = hidden_states.transpose(1, 2).reshape(batch_size, -1, attn.heads * head_dim)

+ hidden_states = hidden_states.to(query.dtype)

+

+ # linear proj

+ hidden_states = attn.to_out[0](hidden_states)

+ # dropout

+ hidden_states = attn.to_out[1](hidden_states)

+

+ if attn.residual_connection:

+ hidden_states = hidden_states + residual

+

+ hidden_states = hidden_states / attn.rescale_output_factor

+

+ return hidden_states

+

+ def forward(

+ self,

+ hidden_states: torch.FloatTensor,

+ encoder_hidden_states: Optional[torch.FloatTensor] = None,

+ scale: float = 1.0,

+ *args,

+ **kwargs,

+ ) -> torch.FloatTensor:

+

+ # wait for the async KV all-gather issued in the previous step, then (re)allocate the KV buffers if needed

+ if self.handle is not None:

+ self.handle.wait()

+ self.handle = None

+

+ b, l, c = hidden_states.shape

+ kv_shape = (b, l, self.module.to_k.out_features * 2)

+ if self.patched_parallelism_size > 1 and (self.buffer_list is None or self.buffer_list[0].shape != kv_shape):

+

+ self.buffer_list = [

+ torch.empty(kv_shape, dtype=hidden_states.dtype, device=get_current_device())

+ for _ in range(self.patched_parallelism_size)

+ ]

+

+ self.counter = 0

+

+ output = self._forward(hidden_states, scale=scale)

+

+ self.counter += 1

+ return output
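The warm-up / stale-KV logic shared by `DistriSelfAttention` and `DistrifusionFusedAttention` above is easiest to follow by tracking which denoising step each KV slice was produced at. The sketch below is a single-process simulation in plain Python (no `torch.distributed`, no tensors); it assumes each attention module is called once per denoising step, so `counter` equals the step index, and the function name `simulate_kv_staleness` is hypothetical.

```python
from typing import List


def simulate_kv_staleness(num_ranks: int, num_steps: int, warm_step: int) -> List[List[List[int]]]:
    """Return views[step][rank][slot]: the step at which the KV in `slot` was computed.

    Mirrors the buffer handling in the attention modules:
      * counter <= warm_step: blocking all_gather, every slice is fresh;
      * afterwards: the rank's own slice is overwritten with fresh KV, the other
        slices come from the async all_gather launched in the previous step.
    """
    # buffers[rank][slot] holds the step index of the KV stored in that slot
    buffers = [[-1] * num_ranks for _ in range(num_ranks)]
    views = []
    for step in range(num_steps):
        step_views = []
        for rank in range(num_ranks):
            if step <= warm_step:
                buffers[rank] = [step] * num_ranks  # synchronous gather
            else:
                buffers[rank][rank] = step  # buffer_list[kv_buffer_idx].copy_(kv)
            step_views.append(list(buffers[rank]))
        views.append(step_views)
        if step > warm_step:
            # async all_gather issued this step; forward() waits on it next step
            buffers = [[step] * num_ranks for _ in range(num_ranks)]
    return views
```

In this model, after warm-up every rank attends to fresh KV for its own patch and one-step-stale KV for the other patches, which is what lets the all-gather overlap with computation. Because the check is `counter <= warm_step`, `warm_step = 3` gives four synchronous steps.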

diff --git a/colossalai/inference/modeling/models/pixart_alpha.py b/colossalai/inference/modeling/models/pixart_alpha.py

index d5774946e365..cc2bee5efd4d 100644

--- a/colossalai/inference/modeling/models/pixart_alpha.py

+++ b/colossalai/inference/modeling/models/pixart_alpha.py

@@ -14,7 +14,7 @@

from colossalai.logging import get_dist_logger

-from .diffusion import DiffusionPipe

+from ..layers.diffusion import DiffusionPipe

logger = get_dist_logger(__name__)

diff --git a/colossalai/inference/modeling/models/stablediffusion3.py b/colossalai/inference/modeling/models/stablediffusion3.py

index d1c63a6dc665..b123164039c8 100644

--- a/colossalai/inference/modeling/models/stablediffusion3.py

+++ b/colossalai/inference/modeling/models/stablediffusion3.py

@@ -4,7 +4,7 @@

import torch

from diffusers.pipelines.stable_diffusion_3.pipeline_stable_diffusion_3 import retrieve_timesteps

-from .diffusion import DiffusionPipe

+from ..layers.diffusion import DiffusionPipe

# TODO(@lry89757) temporarily image, please support more return output

diff --git a/colossalai/inference/modeling/policy/pixart_alpha.py b/colossalai/inference/modeling/policy/pixart_alpha.py

index 356056ba73e7..1150b2432cc5 100644

--- a/colossalai/inference/modeling/policy/pixart_alpha.py

+++ b/colossalai/inference/modeling/policy/pixart_alpha.py

@@ -1,9 +1,17 @@

+from diffusers.models.attention import BasicTransformerBlock

+from diffusers.models.transformers.pixart_transformer_2d import PixArtTransformer2DModel

from torch import nn

from colossalai.inference.config import RPC_PARAM

-from colossalai.inference.modeling.models.diffusion import DiffusionPipe

+from colossalai.inference.modeling.layers.diffusion import DiffusionPipe

+from colossalai.inference.modeling.layers.distrifusion import (

+ DistrifusionConv2D,

+ DistrifusionPatchEmbed,

+ DistriSelfAttention,

+ PixArtAlphaTransformer2DModel_forward,

+)

from colossalai.inference.modeling.models.pixart_alpha import pixart_alpha_forward

-from colossalai.shardformer.policies.base_policy import Policy

+from colossalai.shardformer.policies.base_policy import ModulePolicyDescription, Policy, SubModuleReplacementDescription

class PixArtAlphaInferPolicy(Policy, RPC_PARAM):

@@ -12,9 +20,46 @@ def __init__(self) -> None:

def module_policy(self):

policy = {}

+

+ if self.shard_config.extra_kwargs["model_shard_infer_config"].patched_parallelism_size > 1:

+

+ policy[PixArtTransformer2DModel] = ModulePolicyDescription(

+ sub_module_replacement=[

+ SubModuleReplacementDescription(

+ suffix="pos_embed.proj",

+ target_module=DistrifusionConv2D,

+ kwargs={"model_shard_infer_config": self.shard_config.extra_kwargs["model_shard_infer_config"]},

+ ),

+ SubModuleReplacementDescription(

+ suffix="pos_embed",

+ target_module=DistrifusionPatchEmbed,

+ kwargs={"model_shard_infer_config": self.shard_config.extra_kwargs["model_shard_infer_config"]},

+ ),

+ ],

+ attribute_replacement={

+ "patched_parallel_size": self.shard_config.extra_kwargs[

+ "model_shard_infer_config"

+ ].patched_parallelism_size

+ },

+ method_replacement={"forward": PixArtAlphaTransformer2DModel_forward},

+ )

+

+ policy[BasicTransformerBlock] = ModulePolicyDescription(

+ sub_module_replacement=[

+ SubModuleReplacementDescription(

+ suffix="attn1",

+ target_module=DistriSelfAttention,

+ kwargs={

+ "model_shard_infer_config": self.shard_config.extra_kwargs["model_shard_infer_config"],

+ },

+ )

+ ]

+ )

+

self.append_or_create_method_replacement(

description={"forward": pixart_alpha_forward}, policy=policy, target_key=DiffusionPipe

)

+

return policy

def preprocess(self) -> nn.Module:
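The PixArt policy above registers the DistriFusion layer replacements only when `patched_parallelism_size > 1`. The sketch below reproduces that gating without any framework. It assumes plain dicts and strings stand in for `ModulePolicyDescription`, `SubModuleReplacementDescription` and the real module classes. `ModelShardInferConfig` and `build_policy` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class ModelShardInferConfig:
    # Hypothetical stand-in for shard_config.extra_kwargs["model_shard_infer_config"].
    patched_parallelism_size: int = 1


def build_policy(cfg: ModelShardInferConfig) -> dict:
    """Plain-dict mirror of PixArtAlphaInferPolicy.module_policy (strings replace classes)."""
    policy = {}
    if cfg.patched_parallelism_size > 1:
        policy["PixArtTransformer2DModel"] = {
            "sub_module_replacement": [
                ("pos_embed.proj", "DistrifusionConv2D"),
                ("pos_embed", "DistrifusionPatchEmbed"),
            ],
            "attribute_replacement": {"patched_parallel_size": cfg.patched_parallelism_size},
            "method_replacement": {"forward": "PixArtAlphaTransformer2DModel_forward"},
        }
        policy["BasicTransformerBlock"] = {
            "sub_module_replacement": [("attn1", "DistriSelfAttention")],
        }
    # Mimics append_or_create_method_replacement: the pipeline forward is
    # replaced whether or not patch parallelism is enabled.
    entry = policy.setdefault("DiffusionPipe", {})
    entry.setdefault("method_replacement", {})["forward"] = "pixart_alpha_forward"
    return policy
```

The SD3 policy has the same structure. It substitutes `SD3Transformer2DModel`, the `attn` submodule of `JointTransformerBlock`, and `DistrifusionFusedAttention` for the PixArt names.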

diff --git a/colossalai/inference/modeling/policy/stablediffusion3.py b/colossalai/inference/modeling/policy/stablediffusion3.py

index c9877f7dcae6..39b764b92887 100644

--- a/colossalai/inference/modeling/policy/stablediffusion3.py

+++ b/colossalai/inference/modeling/policy/stablediffusion3.py

@@ -1,9 +1,17 @@

+from diffusers.models.attention import JointTransformerBlock

+from diffusers.models.transformers import SD3Transformer2DModel

from torch import nn

from colossalai.inference.config import RPC_PARAM

-from colossalai.inference.modeling.models.diffusion import DiffusionPipe

+from colossalai.inference.modeling.layers.diffusion import DiffusionPipe

+from colossalai.inference.modeling.layers.distrifusion import (

+ DistrifusionConv2D,

+ DistrifusionFusedAttention,

+ DistrifusionPatchEmbed,

+ SD3Transformer2DModel_forward,

+)

from colossalai.inference.modeling.models.stablediffusion3 import sd3_forward

-from colossalai.shardformer.policies.base_policy import Policy

+from colossalai.shardformer.policies.base_policy import ModulePolicyDescription, Policy, SubModuleReplacementDescription

class StableDiffusion3InferPolicy(Policy, RPC_PARAM):

@@ -12,6 +20,42 @@ def __init__(self) -> None:

def module_policy(self):

policy = {}

+

+ if self.shard_config.extra_kwargs["model_shard_infer_config"].patched_parallelism_size > 1:

+

+ policy[SD3Transformer2DModel] = ModulePolicyDescription(

+ sub_module_replacement=[

+ SubModuleReplacementDescription(

+ suffix="pos_embed.proj",

+ target_module=DistrifusionConv2D,

+ kwargs={"model_shard_infer_config": self.shard_config.extra_kwargs["model_shard_infer_config"]},

+ ),

+ SubModuleReplacementDescription(

+ suffix="pos_embed",

+ target_module=DistrifusionPatchEmbed,

+ kwargs={"model_shard_infer_config": self.shard_config.extra_kwargs["model_shard_infer_config"]},

+ ),

+ ],

+ attribute_replacement={

+ "patched_parallel_size": self.shard_config.extra_kwargs[

+ "model_shard_infer_config"

+ ].patched_parallelism_size

+ },

+ method_replacement={"forward": SD3Transformer2DModel_forward},

+ )

+

+ policy[JointTransformerBlock] = ModulePolicyDescription(

+ sub_module_replacement=[

+ SubModuleReplacementDescription(

+ suffix="attn",

+ target_module=DistrifusionFusedAttention,

+ kwargs={

+ "model_shard_infer_config": self.shard_config.extra_kwargs["model_shard_infer_config"],

+ },

+ )

+ ]

+ )

+

self.append_or_create_method_replacement(

description={"forward": sd3_forward}, policy=policy, target_key=DiffusionPipe

)

diff --git a/colossalai/shardformer/layer/moe/__init__.py b/colossalai/legacy/moe/layer/__init__.py

similarity index 100%

rename from colossalai/shardformer/layer/moe/__init__.py

rename to colossalai/legacy/moe/layer/__init__.py

diff --git a/colossalai/shardformer/layer/moe/experts.py b/colossalai/legacy/moe/layer/experts.py

similarity index 95%

rename from colossalai/shardformer/layer/moe/experts.py

rename to colossalai/legacy/moe/layer/experts.py

index 1be7a27547ed..8088cf44e473 100644

--- a/colossalai/shardformer/layer/moe/experts.py

+++ b/colossalai/legacy/moe/layer/experts.py

@@ -5,9 +5,9 @@

import torch.nn as nn

from colossalai.kernel.triton.llama_act_combine_kernel import HAS_TRITON

-from colossalai.moe._operation import MoeInGradScaler, MoeOutGradScaler

-from colossalai.moe.manager import MOE_MANAGER

-from colossalai.moe.utils import get_activation

+from colossalai.legacy.moe.manager import MOE_MANAGER

+from colossalai.legacy.moe.utils import get_activation

+from colossalai.moe._operation import EPGradScalerIn, EPGradScalerOut

from colossalai.shardformer.layer.utils import Randomizer

from colossalai.tensor.moe_tensor.api import get_ep_rank, get_ep_size

@@ -118,7 +118,7 @@ def forward(

Returns:

torch.Tensor: The output tensor of shape (num_groups, num_experts, capacity, hidden_size)

"""

- x = MoeInGradScaler.apply(x, self.ep_size)

+ x = EPGradScalerIn.apply(x, self.ep_size)

e = x.size(1)

h = x.size(-1)

@@ -157,5 +157,5 @@ def forward(

x = torch.cat([x[i].unsqueeze(0) for i in range(e)], dim=0)

x = x.reshape(inshape)

x = x.transpose(0, 1).contiguous()

- x = MoeOutGradScaler.apply(x, self.ep_size)

+ x = EPGradScalerOut.apply(x, self.ep_size)

return x

diff --git a/colossalai/shardformer/layer/moe/layers.py b/colossalai/legacy/moe/layer/layers.py

similarity index 99%

rename from colossalai/shardformer/layer/moe/layers.py

rename to colossalai/legacy/moe/layer/layers.py

index e5b0ef97fd87..e43966f68a8c 100644

--- a/colossalai/shardformer/layer/moe/layers.py

+++ b/colossalai/legacy/moe/layer/layers.py

@@ -7,9 +7,9 @@

import torch.nn as nn

import torch.nn.functional as F

+from colossalai.legacy.moe.load_balance import LoadBalancer

+from colossalai.legacy.moe.utils import create_ep_hierarchical_group, get_noise_generator

from colossalai.moe._operation import AllGather, AllToAll, HierarchicalAllToAll, MoeCombine, MoeDispatch, ReduceScatter

-from colossalai.moe.load_balance import LoadBalancer

-from colossalai.moe.utils import create_ep_hierarchical_group, get_noise_generator

from colossalai.shardformer.layer.moe import MLPExperts

from colossalai.tensor.moe_tensor.api import get_dp_group, get_ep_group, get_ep_group_ranks, get_ep_size

diff --git a/colossalai/shardformer/layer/moe/routers.py b/colossalai/legacy/moe/layer/routers.py

similarity index 95%

rename from colossalai/shardformer/layer/moe/routers.py

rename to colossalai/legacy/moe/layer/routers.py

index 1be7a27547ed..8088cf44e473 100644

--- a/colossalai/shardformer/layer/moe/routers.py

+++ b/colossalai/legacy/moe/layer/routers.py

@@ -5,9 +5,9 @@

import torch.nn as nn

from colossalai.kernel.triton.llama_act_combine_kernel import HAS_TRITON

-from colossalai.moe._operation import MoeInGradScaler, MoeOutGradScaler

-from colossalai.moe.manager import MOE_MANAGER

-from colossalai.moe.utils import get_activation

+from colossalai.legacy.moe.manager import MOE_MANAGER

+from colossalai.legacy.moe.utils import get_activation

+from colossalai.moe._operation import EPGradScalerIn, EPGradScalerOut

from colossalai.shardformer.layer.utils import Randomizer

from colossalai.tensor.moe_tensor.api import get_ep_rank, get_ep_size

@@ -118,7 +118,7 @@ def forward(

Returns:

torch.Tensor: The output tensor of shape (num_groups, num_experts, capacity, hidden_size)

"""

- x = MoeInGradScaler.apply(x, self.ep_size)

+ x = EPGradScalerIn.apply(x, self.ep_size)

e = x.size(1)

h = x.size(-1)

@@ -157,5 +157,5 @@ def forward(

x = torch.cat([x[i].unsqueeze(0) for i in range(e)], dim=0)

x = x.reshape(inshape)

x = x.transpose(0, 1).contiguous()

- x = MoeOutGradScaler.apply(x, self.ep_size)

+ x = EPGradScalerOut.apply(x, self.ep_size)

return x

diff --git a/colossalai/moe/load_balance.py b/colossalai/legacy/moe/load_balance.py

similarity index 99%

rename from colossalai/moe/load_balance.py

rename to colossalai/legacy/moe/load_balance.py

index 3dc6c02c7445..7339b1a7b0eb 100644

--- a/colossalai/moe/load_balance.py

+++ b/colossalai/legacy/moe/load_balance.py

@@ -7,7 +7,7 @@

from torch.distributed import ProcessGroup

from colossalai.cluster import ProcessGroupMesh

-from colossalai.moe.manager import MOE_MANAGER

+from colossalai.legacy.moe.manager import MOE_MANAGER

from colossalai.shardformer.layer.moe import MLPExperts

from colossalai.zero.low_level import LowLevelZeroOptimizer

diff --git a/colossalai/moe/manager.py b/colossalai/legacy/moe/manager.py

similarity index 100%

rename from colossalai/moe/manager.py

rename to colossalai/legacy/moe/manager.py

diff --git a/examples/language/openmoe/README.md b/colossalai/legacy/moe/openmoe/README.md

similarity index 100%

rename from examples/language/openmoe/README.md

rename to colossalai/legacy/moe/openmoe/README.md

diff --git a/examples/language/openmoe/benchmark/benchmark_cai.py b/colossalai/legacy/moe/openmoe/benchmark/benchmark_cai.py

similarity index 99%

rename from examples/language/openmoe/benchmark/benchmark_cai.py

rename to colossalai/legacy/moe/openmoe/benchmark/benchmark_cai.py

index b9ef915c32a4..5f9447246ae4 100644

--- a/examples/language/openmoe/benchmark/benchmark_cai.py

+++ b/colossalai/legacy/moe/openmoe/benchmark/benchmark_cai.py

@@ -18,9 +18,9 @@

from colossalai.booster import Booster

from colossalai.booster.plugin.moe_hybrid_parallel_plugin import MoeHybridParallelPlugin

from colossalai.cluster import DistCoordinator

+from colossalai.legacy.moe.manager import MOE_MANAGER

+from colossalai.legacy.moe.utils import skip_init

from colossalai.moe.layers import apply_load_balance

-from colossalai.moe.manager import MOE_MANAGER

-from colossalai.moe.utils import skip_init

from colossalai.nn.optimizer import HybridAdam

diff --git a/examples/language/openmoe/benchmark/benchmark_cai.sh b/colossalai/legacy/moe/openmoe/benchmark/benchmark_cai.sh

similarity index 100%

rename from examples/language/openmoe/benchmark/benchmark_cai.sh

rename to colossalai/legacy/moe/openmoe/benchmark/benchmark_cai.sh

diff --git a/examples/language/openmoe/benchmark/benchmark_cai_dist.sh b/colossalai/legacy/moe/openmoe/benchmark/benchmark_cai_dist.sh

similarity index 100%

rename from examples/language/openmoe/benchmark/benchmark_cai_dist.sh

rename to colossalai/legacy/moe/openmoe/benchmark/benchmark_cai_dist.sh

diff --git a/examples/language/openmoe/benchmark/benchmark_fsdp.py b/colossalai/legacy/moe/openmoe/benchmark/benchmark_fsdp.py

similarity index 98%

rename from examples/language/openmoe/benchmark/benchmark_fsdp.py

rename to colossalai/legacy/moe/openmoe/benchmark/benchmark_fsdp.py

index b00fbd001022..1ae94dd90977 100644

--- a/examples/language/openmoe/benchmark/benchmark_fsdp.py

+++ b/colossalai/legacy/moe/openmoe/benchmark/benchmark_fsdp.py

@@ -14,7 +14,7 @@

from transformers.models.llama import LlamaConfig

from utils import PerformanceEvaluator, get_model_numel

-from colossalai.moe.manager import MOE_MANAGER

+from colossalai.legacy.moe.manager import MOE_MANAGER

class RandomDataset(Dataset):

diff --git a/examples/language/openmoe/benchmark/benchmark_fsdp.sh b/colossalai/legacy/moe/openmoe/benchmark/benchmark_fsdp.sh

similarity index 100%

rename from examples/language/openmoe/benchmark/benchmark_fsdp.sh

rename to colossalai/legacy/moe/openmoe/benchmark/benchmark_fsdp.sh

diff --git a/examples/language/openmoe/benchmark/hostfile.txt b/colossalai/legacy/moe/openmoe/benchmark/hostfile.txt

similarity index 100%

rename from examples/language/openmoe/benchmark/hostfile.txt

rename to colossalai/legacy/moe/openmoe/benchmark/hostfile.txt

diff --git a/examples/language/openmoe/benchmark/utils.py b/colossalai/legacy/moe/openmoe/benchmark/utils.py

similarity index 100%

rename from examples/language/openmoe/benchmark/utils.py

rename to colossalai/legacy/moe/openmoe/benchmark/utils.py

diff --git a/examples/language/openmoe/infer.py b/colossalai/legacy/moe/openmoe/infer.py

similarity index 100%

rename from examples/language/openmoe/infer.py

rename to colossalai/legacy/moe/openmoe/infer.py

diff --git a/examples/language/openmoe/infer.sh b/colossalai/legacy/moe/openmoe/infer.sh

similarity index 100%

rename from examples/language/openmoe/infer.sh

rename to colossalai/legacy/moe/openmoe/infer.sh

diff --git a/examples/language/openmoe/model/__init__.py b/colossalai/legacy/moe/openmoe/model/__init__.py

similarity index 100%

rename from examples/language/openmoe/model/__init__.py

rename to colossalai/legacy/moe/openmoe/model/__init__.py

diff --git a/examples/language/openmoe/model/convert_openmoe_ckpt.py b/colossalai/legacy/moe/openmoe/model/convert_openmoe_ckpt.py

similarity index 100%

rename from examples/language/openmoe/model/convert_openmoe_ckpt.py

rename to colossalai/legacy/moe/openmoe/model/convert_openmoe_ckpt.py

diff --git a/examples/language/openmoe/model/convert_openmoe_ckpt.sh b/colossalai/legacy/moe/openmoe/model/convert_openmoe_ckpt.sh

similarity index 100%

rename from examples/language/openmoe/model/convert_openmoe_ckpt.sh

rename to colossalai/legacy/moe/openmoe/model/convert_openmoe_ckpt.sh

diff --git a/examples/language/openmoe/model/modeling_openmoe.py b/colossalai/legacy/moe/openmoe/model/modeling_openmoe.py

similarity index 99%

rename from examples/language/openmoe/model/modeling_openmoe.py

rename to colossalai/legacy/moe/openmoe/model/modeling_openmoe.py

index 1febacd7d226..5d6e91765883 100644

--- a/examples/language/openmoe/model/modeling_openmoe.py

+++ b/colossalai/legacy/moe/openmoe/model/modeling_openmoe.py

@@ -50,8 +50,8 @@

except:

HAS_FLASH_ATTN = False

from colossalai.kernel.triton.llama_act_combine_kernel import HAS_TRITON

-from colossalai.moe.manager import MOE_MANAGER

-from colossalai.moe.utils import get_activation, set_moe_args

+from colossalai.legacy.moe.manager import MOE_MANAGER

+from colossalai.legacy.moe.utils import get_activation, set_moe_args

from colossalai.shardformer.layer.moe import SparseMLP

if HAS_TRITON:

diff --git a/examples/language/openmoe/model/openmoe_8b_config.json b/colossalai/legacy/moe/openmoe/model/openmoe_8b_config.json

similarity index 100%

rename from examples/language/openmoe/model/openmoe_8b_config.json

rename to colossalai/legacy/moe/openmoe/model/openmoe_8b_config.json

diff --git a/examples/language/openmoe/model/openmoe_base_config.json b/colossalai/legacy/moe/openmoe/model/openmoe_base_config.json

similarity index 100%

rename from examples/language/openmoe/model/openmoe_base_config.json

rename to colossalai/legacy/moe/openmoe/model/openmoe_base_config.json

diff --git a/examples/language/openmoe/model/openmoe_policy.py b/colossalai/legacy/moe/openmoe/model/openmoe_policy.py

similarity index 99%

rename from examples/language/openmoe/model/openmoe_policy.py

rename to colossalai/legacy/moe/openmoe/model/openmoe_policy.py

index f46062128563..ccd566b08594 100644

--- a/examples/language/openmoe/model/openmoe_policy.py

+++ b/colossalai/legacy/moe/openmoe/model/openmoe_policy.py

@@ -9,7 +9,7 @@

from transformers.modeling_outputs import CausalLMOutputWithPast

from transformers.utils import logging

-from colossalai.moe.manager import MOE_MANAGER

+from colossalai.legacy.moe.manager import MOE_MANAGER

from colossalai.pipeline.stage_manager import PipelineStageManager

from colossalai.shardformer.layer import FusedRMSNorm, Linear1D_Col

from colossalai.shardformer.policies.base_policy import ModulePolicyDescription, Policy, SubModuleReplacementDescription

diff --git a/examples/language/openmoe/requirements.txt b/colossalai/legacy/moe/openmoe/requirements.txt

similarity index 100%

rename from examples/language/openmoe/requirements.txt

rename to colossalai/legacy/moe/openmoe/requirements.txt

diff --git a/examples/language/openmoe/test_ci.sh b/colossalai/legacy/moe/openmoe/test_ci.sh

similarity index 100%

rename from examples/language/openmoe/test_ci.sh

rename to colossalai/legacy/moe/openmoe/test_ci.sh

diff --git a/examples/language/openmoe/train.py b/colossalai/legacy/moe/openmoe/train.py

similarity index 99%

rename from examples/language/openmoe/train.py

rename to colossalai/legacy/moe/openmoe/train.py

index ff0e4bad6ee3..0173f0964453 100644

--- a/examples/language/openmoe/train.py

+++ b/colossalai/legacy/moe/openmoe/train.py

@@ -19,7 +19,7 @@

from colossalai.booster import Booster

from colossalai.booster.plugin.moe_hybrid_parallel_plugin import MoeHybridParallelPlugin

from colossalai.cluster import DistCoordinator

-from colossalai.moe.utils import skip_init

+from colossalai.legacy.moe.utils import skip_init

from colossalai.nn.optimizer import HybridAdam

from colossalai.shardformer.layer.moe import apply_load_balance

diff --git a/examples/language/openmoe/train.sh b/colossalai/legacy/moe/openmoe/train.sh

similarity index 100%

rename from examples/language/openmoe/train.sh

rename to colossalai/legacy/moe/openmoe/train.sh

diff --git a/colossalai/moe/utils.py b/colossalai/legacy/moe/utils.py

similarity index 99%

rename from colossalai/moe/utils.py

rename to colossalai/legacy/moe/utils.py

index 3d08ab7dd9b0..d91c41363316 100644

--- a/colossalai/moe/utils.py

+++ b/colossalai/legacy/moe/utils.py

@@ -9,7 +9,7 @@

from torch.distributed.distributed_c10d import get_process_group_ranks

from colossalai.accelerator import get_accelerator

-from colossalai.moe.manager import MOE_MANAGER

+from colossalai.legacy.moe.manager import MOE_MANAGER

from colossalai.tensor.moe_tensor.api import is_moe_tensor

diff --git a/colossalai/moe/__init__.py b/colossalai/moe/__init__.py

index 0623d19efd5f..e69de29bb2d1 100644

--- a/colossalai/moe/__init__.py

+++ b/colossalai/moe/__init__.py

@@ -1,5 +0,0 @@

-from .manager import MOE_MANAGER

-

-__all__ = [

- "MOE_MANAGER",

-]
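This change empties `colossalai/moe/__init__.py` and moves several modules under `colossalai.legacy.moe`. Below is a minimal sketch of an import-rewriting helper for downstream code. It covers only the module moves and class renames that appear in this diff. `migrate_import`, `MODULE_MOVES` and `CLASS_RENAMES` are hypothetical names and are not part of ColossalAI.

```python
import re

# Module moves visible in this diff (old module -> new module).
MODULE_MOVES = {
    "colossalai.moe.manager": "colossalai.legacy.moe.manager",
    "colossalai.moe.utils": "colossalai.legacy.moe.utils",
    "colossalai.moe.load_balance": "colossalai.legacy.moe.load_balance",
}
# Classes renamed inside colossalai.moe._operation.
CLASS_RENAMES = {
    "MoeInGradScaler": "EPGradScalerIn",
    "MoeOutGradScaler": "EPGradScalerOut",
}


def migrate_import(line: str) -> str:
    """Rewrite one `from X import ...` line to the post-change locations."""
    # colossalai/moe/__init__.py is now empty, so the package-level re-export is gone.
    line = re.sub(
        r"^from colossalai\.moe import MOE_MANAGER\b",
        "from colossalai.legacy.moe.manager import MOE_MANAGER",
        line,
    )
    for old, new in MODULE_MOVES.items():
        # \b stops e.g. "colossalai.moe.utils_extra" from matching.
        line = re.sub(rf"^from {re.escape(old)}\b", f"from {new}", line)
    for old, new in CLASS_RENAMES.items():
        line = re.sub(rf"\b{old}\b", new, line)
    return line
```

The helper is idempotent. Lines that already use the `colossalai.legacy.moe` paths pass through unchanged.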

diff --git a/colossalai/moe/_operation.py b/colossalai/moe/_operation.py

index 01c837ee36ad..ac422a4da98f 100644

--- a/colossalai/moe/_operation.py

+++ b/colossalai/moe/_operation.py

@@ -290,7 +290,7 @@ def moe_cumsum(inputs: Tensor, use_kernel: bool = False):

return torch.cumsum(inputs, dim=0) - 1

-class MoeInGradScaler(torch.autograd.Function):

+class EPGradScalerIn(torch.autograd.Function):

"""

Scale the gradient back by the number of experts

because the batch size increases in the moe stage

@@ -298,8 +298,7 @@ class MoeInGradScaler(torch.autograd.Function):

@staticmethod

def forward(ctx: Any, inputs: Tensor, ep_size: int) -> Tensor:

- if ctx is not None:

- ctx.ep_size = ep_size

+ ctx.ep_size = ep_size

return inputs

@staticmethod

@@ -311,7 +310,7 @@ def backward(ctx: Any, *grad_outputs: Tensor) -> Tuple[Tensor, None]:

return grad, None

-class MoeOutGradScaler(torch.autograd.Function):

+class EPGradScalerOut(torch.autograd.Function):

"""

Scale the gradient by the number of experts

because the batch size increases in the moe stage

@@ -331,6 +330,50 @@ def backward(ctx: Any, *grad_outputs: Tensor) -> Tuple[Tensor, None]:

return grad, None

+class DPGradScalerIn(torch.autograd.Function):

+ """

+ Identity in forward. In backward, multiply the gradient by

+ activated_experts / moe_dp_size, undoing the scale applied by

+ DPGradScalerOut so the gradient reaching the expert's inputs is unchanged.

+ """

+

+ @staticmethod

+ def forward(ctx: Any, inputs: Tensor, moe_dp_size: int, activated_experts: int) -> Tensor:

+ assert activated_experts != 0, "shouldn't be called when no expert is activated"

+ ctx.moe_dp_size = moe_dp_size

+ ctx.activated_experts = activated_experts

+ return inputs

+

+ @staticmethod

+ def backward(ctx: Any, *grad_outputs: Tensor) -> Tuple[Tensor, None, None]:

+ assert len(grad_outputs) == 1

+ grad = grad_outputs[0]

+ if ctx.moe_dp_size != ctx.activated_experts:

+ grad.mul_(ctx.activated_experts / ctx.moe_dp_size)

+ return grad, None, None

+

+

+class DPGradScalerOut(torch.autograd.Function):

+ """

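+ Identity in forward. In backward, multiply the gradient by

+ moe_dp_size / activated_experts, so the expert's parameter gradients account for

+ only activated_experts of the moe_dp_size ranks routing tokens to this expert.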

+ """

+

+ @staticmethod

+ def forward(ctx: Any, inputs: Tensor, moe_dp_size: int, activated_experts: int) -> Tensor:

+ assert activated_experts != 0, "shouldn't be called when no expert is activated"

+ ctx.moe_dp_size = moe_dp_size

+ ctx.activated_experts = activated_experts

+ return inputs

+

+ @staticmethod

+ def backward(ctx: Any, *grad_outputs: Tensor) -> Tuple[Tensor, None, None]:

+ assert len(grad_outputs) == 1

+ grad = grad_outputs[0]

+ if ctx.moe_dp_size != ctx.activated_experts:

+ grad.mul_(ctx.moe_dp_size / ctx.activated_experts)

+ return grad, None, None

+

+

def _all_to_all(

inputs: torch.Tensor,

input_split_sizes: Optional[List[int]] = None,

@@ -393,4 +436,7 @@ def all_to_all_uneven(

group=None,

overlap: bool = False,

):

+ assert (

+ inputs.requires_grad

+ ), "Input must require grad to ensure that backward is executed; otherwise the program might hang."

return AllToAllUneven.apply(inputs, input_split_sizes, output_split_sizes, group, overlap)
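In forward, the `DPGradScalerIn`/`DPGradScalerOut` pair above is an identity. In backward, it only rescales gradients. The sketch below reproduces just that arithmetic with Python floats, without `torch`. The helper names are hypothetical. We also assume that expert gradients are later averaged over the `moe_dp` group with a mean all-reduce; this diff does not show that step. Under that assumption, the Out scaler converts the diluted mean over all `moe_dp_size` ranks into the mean over only the ranks that actually routed tokens to the expert.

```python
from typing import List, Tuple


def dp_backward_scales(moe_dp_size: int, activated_experts: int) -> Tuple[float, float]:
    """Backward multipliers of (DPGradScalerIn, DPGradScalerOut) as written in the diff."""
    assert activated_experts != 0, "shouldn't be called when no expert is activated"
    if moe_dp_size == activated_experts:
        return 1.0, 1.0
    return activated_experts / moe_dp_size, moe_dp_size / activated_experts


def synced_expert_grad(per_rank_grads: List[float], per_rank_tokens: List[int]) -> float:
    """Expert weight grad after an (assumed) mean all-reduce over the moe_dp group."""
    moe_dp_size = len(per_rank_grads)
    # Number of moe_dp ranks that routed at least one token to this expert.
    activated = sum(1 for n in per_rank_tokens if n > 0)
    _, scale_out = dp_backward_scales(moe_dp_size, activated)
    # DPGradScalerOut sits after the expert, so its backward scales the
    # upstream gradient before the expert's weight gradients are formed.
    scaled = [g * scale_out for g in per_rank_grads]
    return sum(scaled) / moe_dp_size
```

Because `scale_in * scale_out == 1`, the gradient that reaches the expert's inputs is unchanged. Only the expert's own parameter gradients carry the extra `moe_dp_size / activated_experts` factor.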

diff --git a/colossalai/shardformer/layer/attn.py b/colossalai/shardformer/layer/attn.py

index 141baf3d3770..5872c64856b9 100644

--- a/colossalai/shardformer/layer/attn.py

+++ b/colossalai/shardformer/layer/attn.py

@@ -139,12 +139,11 @@ def prepare_attn_kwargs(

# no padding

assert is_causal

outputs["attention_mask_type"] = AttnMaskType.CAUSAL

- attention_mask = torch.ones(s_q, s_kv, dtype=dtype, device=device).tril(diagonal=0).expand(b, s_q, s_kv)

+ attention_mask = torch.ones(s_q, s_kv, dtype=dtype, device=device)

+ if s_q != 1:

+ attention_mask = attention_mask.tril(diagonal=0)

+ attention_mask = attention_mask.expand(b, s_q, s_kv)

else:

- assert q_padding_mask.shape == (

- b,

- s_q,

- ), f"q_padding_mask shape {q_padding_mask.shape} should be the same. ({shape_4d})"

max_seqlen_q, cu_seqlens_q, q_indices = get_pad_info(q_padding_mask)

if kv_padding_mask is None:

# self attention

@@ -156,7 +155,7 @@ def prepare_attn_kwargs(

b,

s_kv,

), f"q_padding_mask shape {kv_padding_mask.shape} should be the same. ({shape_4d})"

- attention_mask = q_padding_mask[:, None, :].expand(b, s_kv, s_q).to(dtype=dtype, device=device)

+ attention_mask = kv_padding_mask[:, None, :].expand(b, s_q, s_kv).to(dtype=dtype, device=device)

outputs.update(

{

"cu_seqlens_q": cu_seqlens_q,

@@ -169,7 +168,8 @@ def prepare_attn_kwargs(

)

if is_causal:

outputs["attention_mask_type"] = AttnMaskType.PADDED_CAUSAL

- attention_mask = attention_mask * attention_mask.new_ones(s_q, s_kv).tril(diagonal=0)

+ if s_q != 1:

+ attention_mask = attention_mask * attention_mask.new_ones(s_q, s_kv).tril(diagonal=0)

else:

outputs["attention_mask_type"] = AttnMaskType.PADDED

attention_mask = invert_mask(attention_mask).unsqueeze(1)
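The new `s_q != 1` guard matters during incremental decoding with a KV cache. In that case the single new query must see every cached key, but `tril(diagonal=0)` applied to a `1 x s_kv` mask keeps only the first column. The list-based sketch below makes the difference visible. `causal_mask` is a hypothetical helper that does not use `torch`. Like the patch, it still uses `diagonal=0` whenever `s_q > 1`.

```python
from typing import List


def causal_mask(s_q: int, s_kv: int, skip_tril_for_single_query: bool = True) -> List[List[int]]:
    """Return an s_q x s_kv 0/1 mask (1 = may attend), mirroring the patched logic."""
    mask = [[1] * s_kv for _ in range(s_q)]
    if s_q != 1 or not skip_tril_for_single_query:
        # Equivalent of torch.tril(diagonal=0): keep column j only if j <= i.
        mask = [[1 if j <= i else 0 for j in range(s_kv)] for i in range(s_q)]
    return mask
```

Calling it with `skip_tril_for_single_query=False` reproduces the pre-patch behaviour, in which a decoding step could attend only to the first cached position.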

diff --git a/colossalai/shardformer/layer/qkv_fused_linear.py b/colossalai/shardformer/layer/qkv_fused_linear.py

index 0f6595a7c4d6..000934ad91a2 100644

--- a/colossalai/shardformer/layer/qkv_fused_linear.py

+++ b/colossalai/shardformer/layer/qkv_fused_linear.py

@@ -695,6 +695,7 @@ def from_native_module(

process_group=process_group,

weight=module.weight,

bias_=module.bias,

+ n_fused=n_fused,

*args,

**kwargs,

)

diff --git a/colossalai/shardformer/modeling/command.py b/colossalai/shardformer/modeling/command.py

index 759c8d7b8d59..5b36fc7db3b9 100644

--- a/colossalai/shardformer/modeling/command.py

+++ b/colossalai/shardformer/modeling/command.py

@@ -116,7 +116,7 @@ def command_model_forward(

# for the other stages, hidden_states is the output of the previous stage

if shard_config.enable_flash_attention:

# in this case, attention_mask is a dict rather than a tensor

- mask_shape = (batch_size, 1, seq_length_with_past, seq_length_with_past)

+ mask_shape = (batch_size, 1, seq_length, seq_length_with_past)

attention_mask = ColoAttention.prepare_attn_kwargs(

mask_shape,

hidden_states.dtype,

diff --git a/colossalai/shardformer/modeling/deepseek.py b/colossalai/shardformer/modeling/deepseek.py

index 6e79ce144cc8..a84a3097231a 100644

--- a/colossalai/shardformer/modeling/deepseek.py

+++ b/colossalai/shardformer/modeling/deepseek.py

@@ -1,21 +1,38 @@

-from typing import List, Optional, Union

+import warnings

+from typing import List, Optional, Tuple, Union

import torch

import torch.distributed as dist

import torch.nn as nn

from torch.distributed import ProcessGroup

-

-# from colossalai.tensor.moe_tensor.moe_info import MoeParallelInfo

from torch.nn import CrossEntropyLoss

-from transformers.modeling_attn_mask_utils import _prepare_4d_causal_attention_mask

-from transformers.modeling_outputs import CausalLMOutputWithPast

+from transformers.cache_utils import Cache, DynamicCache

+from transformers.modeling_attn_mask_utils import (

+ _prepare_4d_causal_attention_mask,

+ _prepare_4d_causal_attention_mask_for_sdpa,

+)

+from transformers.modeling_outputs import BaseModelOutputWithPast, CausalLMOutputWithPast

+from transformers.models.llama.modeling_llama import apply_rotary_pos_emb

from transformers.utils import is_flash_attn_2_available, logging

from colossalai.lazy import LazyInitContext

-from colossalai.moe._operation import MoeInGradScaler, MoeOutGradScaler, all_to_all_uneven

+from colossalai.moe._operation import (

+ DPGradScalerIn,

+ DPGradScalerOut,

+ EPGradScalerIn,

+ EPGradScalerOut,

+ all_to_all_uneven,

+)

from colossalai.pipeline.stage_manager import PipelineStageManager

+from colossalai.shardformer.layer._operation import (

+ all_to_all_comm,

+ gather_forward_split_backward,

+ split_forward_gather_backward,

+)

+from colossalai.shardformer.layer.linear import Linear1D_Col, Linear1D_Row

from colossalai.shardformer.shard import ShardConfig

from colossalai.shardformer.shard.utils import set_tensors_to_none

+from colossalai.tensor.moe_tensor.api import set_moe_tensor_ep_group

# copied from modeling_deepseek.py

@@ -42,30 +59,54 @@ def backward(ctx, grad_output):

class EPDeepseekMoE(nn.Module):

def __init__(self):

- super(EPDeepseekMoE, self).__init__()

+ raise RuntimeError(f"Please use `from_native_module` to create an instance of {self.__class__.__name__}")

+

+ def setup_process_groups(self, tp_group: ProcessGroup, moe_dp_group: ProcessGroup, ep_group: ProcessGroup):

+ assert tp_group is not None

+ assert moe_dp_group is not None

+ assert ep_group is not None

- def setup_ep(self, ep_group: ProcessGroup):

- ep_group = ep_group

- self.ep_size = dist.get_world_size(ep_group) if ep_group is not None else 1

- self.ep_rank = dist.get_rank(ep_group) if ep_group is not None else 0

+ self.ep_size = dist.get_world_size(ep_group)

+ self.ep_rank = dist.get_rank(ep_group)

self.num_experts = self.config.n_routed_experts

assert self.num_experts % self.ep_size == 0

+

self.ep_group = ep_group

self.num_experts_per_ep = self.num_experts // self.ep_size

self.expert_start_idx = self.ep_rank * self.num_experts_per_ep

held_experts = self.experts[self.expert_start_idx : self.expert_start_idx + self.num_experts_per_ep]

+

set_tensors_to_none(self.experts, exclude=set(held_experts))

+

+ # setup moe_dp group

+ self.moe_dp_group = moe_dp_group

+ self.moe_dp_size = moe_dp_group.size()

+

+ # setup tp group

+ self.tp_group = tp_group

+ if self.tp_group.size() > 1:

+ for expert in held_experts:

+ expert.gate_proj = Linear1D_Col.from_native_module(expert.gate_proj, self.tp_group)

+ expert.up_proj = Linear1D_Col.from_native_module(expert.up_proj, self.tp_group)

+ expert.down_proj = Linear1D_Row.from_native_module(expert.down_proj, self.tp_group)

+

for p in self.experts.parameters():

- p.ep_group = ep_group

+ set_moe_tensor_ep_group(p, ep_group)

@staticmethod

- def from_native_module(module: Union["DeepseekMoE", "DeepseekMLP"], *args, **kwargs) -> "EPDeepseekMoE":

+ def from_native_module(

+ module,

+ tp_group: ProcessGroup,

+ moe_dp_group: ProcessGroup,

+ ep_group: ProcessGroup,

+ *args,

+ **kwargs,

+ ) -> "EPDeepseekMoE":

LazyInitContext.materialize(module)

if module.__class__.__name__ == "DeepseekMLP":

return module

module.__class__ = EPDeepseekMoE

- assert "ep_group" in kwargs, "You should pass ep_group in SubModuleReplacementDescription via shard_config!!"

- module.setup_ep(kwargs["ep_group"])

+ module.setup_process_groups(tp_group, moe_dp_group, ep_group)

return module

def forward(self, hidden_states: torch.Tensor) -> torch.Tensor:

@@ -91,15 +132,24 @@ def forward(self, hidden_states: torch.Tensor) -> torch.Tensor:

# [n0, n1, n2, n3] [m0, m1, m2, m3] -> [n0, n1, m0, m1] [n2, n3, m2, m3]

dist.all_to_all_single(output_split_sizes, input_split_sizes, group=self.ep_group)

+ with torch.no_grad():

+ activate_experts = output_split_sizes[: self.num_experts_per_ep].clone()

+ for i in range(1, self.ep_size):

+ activate_experts += output_split_sizes[i * self.num_experts_per_ep : (i + 1) * self.num_experts_per_ep]

+ activate_experts = (activate_experts > 0).float()

+ dist.all_reduce(activate_experts, group=self.moe_dp_group)

+

input_split_list = input_split_sizes.view(self.ep_size, self.num_experts_per_ep).sum(dim=-1).tolist()

output_split_list = output_split_sizes.view(self.ep_size, self.num_experts_per_ep).sum(dim=-1).tolist()

output_states, _ = all_to_all_uneven(dispatch_states, input_split_list, output_split_list, self.ep_group)

- output_states = MoeInGradScaler.apply(output_states, self.ep_size)

+ output_states = EPGradScalerIn.apply(output_states, self.ep_size)

if output_states.size(0) > 0:

if self.num_experts_per_ep == 1:

expert = self.experts[self.expert_start_idx]

+ output_states = DPGradScalerIn.apply(output_states, self.moe_dp_size, activate_experts[0])

output_states = expert(output_states)

+ output_states = DPGradScalerOut.apply(output_states, self.moe_dp_size, activate_experts[0])

else:

output_states_splits = output_states.split(output_split_sizes.tolist())

output_states_list = []

@@ -107,10 +157,16 @@ def forward(self, hidden_states: torch.Tensor) -> torch.Tensor:

if split_states.size(0) == 0: # no token routed to this experts

continue

expert = self.experts[self.expert_start_idx + i % self.num_experts_per_ep]

+ split_states = DPGradScalerIn.apply(

+ split_states, self.moe_dp_size, activate_experts[i % self.num_experts_per_ep]

+ )

split_states = expert(split_states)

+ split_states = DPGradScalerOut.apply(

+ split_states, self.moe_dp_size, activate_experts[i % self.num_experts_per_ep]

+ )

output_states_list.append(split_states)

output_states = torch.cat(output_states_list)

- output_states = MoeOutGradScaler.apply(output_states, self.ep_size)

+ output_states = EPGradScalerOut.apply(output_states, self.ep_size)

dispatch_states, _ = all_to_all_uneven(output_states, output_split_list, input_split_list, self.ep_group)

recover_token_idx = torch.empty_like(flat_topk_token_idx)

recover_token_idx[flat_topk_token_idx] = torch.arange(
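In `EPDeepseekMoE`, each EP rank keeps a contiguous slice of `num_experts_per_ep` experts. Before calling the DP scalers, `forward` derives a per-expert activation count from `output_split_sizes`. The sketch below mirrors both steps with plain lists. `local_expert_range`, `local_activation` and `all_reduce_sum` are hypothetical helpers. `all_reduce_sum` only stands in for `dist.all_reduce`, whose default reduction op is `SUM`.

```python
from typing import List


def local_expert_range(num_experts: int, ep_size: int, ep_rank: int) -> range:
    """Indices of the routed experts held by one EP rank (as in setup_process_groups)."""
    assert num_experts % ep_size == 0
    per_ep = num_experts // ep_size
    start = ep_rank * per_ep
    return range(start, start + per_ep)


def local_activation(output_split_sizes: List[int], ep_size: int, per_ep: int) -> List[float]:
    """1.0 for each local expert that received tokens from any EP rank, else 0.0."""
    counts = [0] * per_ep
    for src in range(ep_size):  # chunk `src` holds the token counts sent by EP rank `src`
        for k in range(per_ep):
            counts[k] += output_split_sizes[src * per_ep + k]
    return [1.0 if c > 0 else 0.0 for c in counts]


def all_reduce_sum(per_rank_values: List[List[float]]) -> List[float]:
    """Stand-in for dist.all_reduce (default op SUM) over the moe_dp group."""
    return [sum(col) for col in zip(*per_rank_values)]
```

After the sum over the `moe_dp` group, each entry counts the data-parallel replicas whose local copy of that expert received at least one token. That count is the `activated_experts` value passed to `DPGradScalerIn`/`DPGradScalerOut`.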

@@ -310,7 +366,14 @@ def custom_forward(*inputs):

next_cache = next_decoder_cache if use_cache else None

if stage_manager.is_last_stage():

- return tuple(v for v in [hidden_states, next_cache, all_hidden_states, all_self_attns] if v is not None)

+ if not return_dict:

+ return tuple(v for v in [hidden_states, next_cache, all_hidden_states, all_self_attns] if v is not None)

+ return BaseModelOutputWithPast(

+ last_hidden_state=hidden_states,

+ past_key_values=next_cache,

+ hidden_states=all_hidden_states,

+ attentions=all_self_attns,

+ )

# always return dict for imediate stage

return {

"hidden_states": hidden_states,

@@ -427,3 +490,265 @@ def deepseek_for_causal_lm_forward(

hidden_states = outputs.get("hidden_states")

out["hidden_states"] = hidden_states

return out

+

+

+def get_deepseek_flash_attention_forward(shard_config, sp_mode=None, sp_size=None, sp_group=None):

+ logger = logging.get_logger(__name__)

+

+ def forward(

+ self,

+ hidden_states: torch.Tensor,

+ attention_mask: Optional[torch.LongTensor] = None,

+ position_ids: Optional[torch.LongTensor] = None,

+ past_key_value: Optional[Cache] = None,

+ output_attentions: bool = False,

+ use_cache: bool = False,

+ **kwargs,

+ ) -> Tuple[torch.Tensor, Optional[torch.Tensor], Optional[Tuple[torch.Tensor]]]:

+ if sp_mode is not None:

+ assert sp_mode in ["all_to_all", "split_gather", "ring"], "Invalid sp_mode"

+ assert (sp_size is not None) and (

+ sp_group is not None

+ ), "Must specify sp_size and sp_group for sequence parallel"

+

+ # DeepseekFlashAttention2 attention does not support output_attentions

+ if "padding_mask" in kwargs:

+ warnings.warn(

+ "Passing `padding_mask` is deprecated and will be removed in v4.37. Please make sure to use `attention_mask` instead."

+ )

+

+ # overwrite attention_mask with padding_mask

+ attention_mask = kwargs.pop("padding_mask")

+

+ output_attentions = False

+

+ bsz, q_len, _ = hidden_states.size()

+

+ # sp: scale q_len by sp_size when sequence parallel mode is split_gather or ring

+ if sp_mode in ["split_gather", "ring"]:

+ q_len *= sp_size

+

+ query_states = self.q_proj(hidden_states)

+ key_states = self.k_proj(hidden_states)

+ value_states = self.v_proj(hidden_states)

+

+ # sp: all-to-all communication when introducing sequence parallel

+ if sp_mode == "all_to_all":

+ query_states = all_to_all_comm(query_states, sp_group)

+ key_states = all_to_all_comm(key_states, sp_group)

+ value_states = all_to_all_comm(value_states, sp_group)

+ bsz, q_len, _ = query_states.size()
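In `all_to_all` mode, each rank starts with a slice of the sequence and all heads. After the exchange it holds the full sequence for a subset of heads, which is why `q_len` is re-read from `query_states`. The sketch below simulates the ranks in a single process with `torch.chunk`/`torch.cat`, using a hypothetical `simulated_all_to_all`; it is not the real `torch.distributed` collective. It assumes `all_to_all_comm` defaults to `scatter_dim=2, gather_dim=1` (its signature is not shown in this diff) and that `num_heads` is divisible by `sp_size`, so each contiguous hidden chunk holds whole heads.

```python
import torch
from typing import List


def simulated_all_to_all(shards: List[torch.Tensor], scatter_dim: int, gather_dim: int) -> List[torch.Tensor]:
    """Single-process stand-in for an all-to-all over len(shards) ranks.

    Rank `src` splits its tensor into world_size chunks along `scatter_dim` and sends
    chunk `dst` to rank `dst`; each rank concatenates what it receives along `gather_dim`.
    """
    world_size = len(shards)
    pieces = [torch.chunk(t, world_size, dim=scatter_dim) for t in shards]
    return [
        torch.cat([pieces[src][dst] for src in range(world_size)], dim=gather_dim)
        for dst in range(world_size)
    ]


# Toy sizes: sp_size = 2, a full sequence of 8 tokens, hidden size 4 (two heads of size 2).
sp_size, bsz, seq_len, hidden = 2, 1, 8, 4
full = torch.arange(bsz * seq_len * hidden, dtype=torch.float32).view(bsz, seq_len, hidden)

# Before attention: each rank holds a contiguous slice of the sequence.
seq_shards = list(torch.chunk(full, sp_size, dim=1))  # each [1, 4, 4]

# q/k/v exchange: scatter the hidden (head) dim, gather the sequence dim.
head_shards = simulated_all_to_all(seq_shards, scatter_dim=2, gather_dim=1)  # each [1, 8, 2]

# Output exchange: scatter the sequence dim, gather the hidden dim.
restored = simulated_all_to_all(head_shards, scatter_dim=1, gather_dim=2)  # each [1, 4, 4]
```

The second exchange is the exact inverse of the first, matching the `scatter_dim=1, gather_dim=2` call applied to `attn_output` later in this function.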

+ # Flash attention requires the input to have the shape

+ # batch_size x seq_length x num_heads x head_dim; the states are transposed to

+ # batch_size x num_heads x seq_length x head_dim here for RoPE and the KV cache, and transposed back later

+ query_states = query_states.view(bsz, q_len, self.num_heads, self.head_dim).transpose(1, 2)

+ key_states = key_states.view(bsz, q_len, self.num_key_value_heads, self.head_dim).transpose(1, 2)

+ value_states = value_states.view(bsz, q_len, self.num_key_value_heads, self.head_dim).transpose(1, 2)

+

+ kv_seq_len = key_states.shape[-2]

+ if past_key_value is not None:

+ kv_seq_len += past_key_value.get_usable_length(kv_seq_len, self.layer_idx)

+ cos, sin = self.rotary_emb(value_states, seq_len=kv_seq_len)

+ query_states, key_states = apply_rotary_pos_emb(

+ query_states, key_states, cos, sin, position_ids, unsqueeze_dim=0

+ )

+

+ if past_key_value is not None:

+ cache_kwargs = {"sin": sin, "cos": cos} # Specific to RoPE models

+ key_states, value_states = past_key_value.update(key_states, value_states, self.layer_idx, cache_kwargs)

+

+ # TODO: These transposes are quite inefficient but Flash Attention requires the layout [batch_size, sequence_length, num_heads, head_dim]. We would need to refactor the KV cache

+ # to be able to avoid many of these transpose/reshape/view.

+ query_states = query_states.transpose(1, 2)

+ key_states = key_states.transpose(1, 2)

+ value_states = value_states.transpose(1, 2)
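The projections are reshaped to `[bsz, num_heads, q_len, head_dim]` for RoPE and the KV cache. They are then transposed back to the `[bsz, q_len, num_heads, head_dim]` layout that the TODO comment says flash attention expects. The sketch below tracks only the shapes, with toy sizes rather than DeepSeek's real dimensions. For brevity it assumes `num_key_value_heads == num_heads`.

```python
import torch

bsz, q_len, num_heads, head_dim = 2, 5, 4, 8
proj = torch.randn(bsz, q_len, num_heads * head_dim)  # output of q_proj / k_proj / v_proj

# Split the hidden dim into heads, then move heads ahead of the sequence dim.
per_head = proj.view(bsz, q_len, num_heads, head_dim).transpose(1, 2)  # [bsz, num_heads, q_len, head_dim]

# Undo the transpose for the flash-attention call.
fa_layout = per_head.transpose(1, 2)  # [bsz, q_len, num_heads, head_dim]
```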

+ dropout_rate = self.attention_dropout if self.training else 0.0

+

+ # In PEFT, we usually cast the layer norms to float32 for training stability reasons,

+ # so the input hidden states get silently cast to float32. Hence, we need to

+ # cast them back to the correct dtype just to be sure everything works as expected.

+ # This might slow down training & inference, so it is recommended not to cast the LayerNorms

+ # to fp32. (DeepseekRMSNorm handles it correctly)

+

+ input_dtype = query_states.dtype

+ if input_dtype == torch.float32:

+ # Handle the case where the model is quantized

+ if hasattr(self.config, "_pre_quantization_dtype"):

+ target_dtype = self.config._pre_quantization_dtype

+ elif torch.is_autocast_enabled():

+ target_dtype = torch.get_autocast_gpu_dtype()

+ else:

+ target_dtype = self.q_proj.weight.dtype

+

+ logger.warning_once(

+ "The input hidden states seem to have been silently cast to float32; this might be related to"

+ " the fact that you have upcast embedding or layer norm layers to float32. We will cast the input back to"

+ f" {target_dtype}."

+ )

+

+ query_states = query_states.to(target_dtype)

+ key_states = key_states.to(target_dtype)

+ value_states = value_states.to(target_dtype)
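The cast-back branch picks its target dtype in a fixed order: the pre-quantization dtype if the config has one, then the autocast dtype, then the dtype of the `q_proj` weights. This sketch uses hypothetical helpers `pick_target_dtype` and `cast_back`. The config attribute lookup and the autocast query are replaced by plain arguments so the logic runs on CPU without a model.

```python
import torch
from typing import Optional, Tuple


def pick_target_dtype(
    pre_quantization_dtype: Optional[torch.dtype],
    autocast_enabled: bool,
    autocast_dtype: torch.dtype,
    weight_dtype: torch.dtype,
) -> torch.dtype:
    # Precedence: quantization config, then autocast, then the projection weights.
    if pre_quantization_dtype is not None:
        return pre_quantization_dtype
    if autocast_enabled:
        return autocast_dtype
    return weight_dtype


def cast_back(
    q: torch.Tensor, k: torch.Tensor, v: torch.Tensor, target_dtype: torch.dtype
) -> Tuple[torch.Tensor, torch.Tensor, torch.Tensor]:
    # Only act when the query states were silently upcast to float32, as in the diff.
    if q.dtype != torch.float32:
        return q, k, v
    return q.to(target_dtype), k.to(target_dtype), v.to(target_dtype)
```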

+ attn_output = self._flash_attention_forward(

+ query_states, key_states, value_states, attention_mask, q_len, dropout=dropout_rate

+ )

+ # sp: all-to-all communication when introducing sequence parallel

+ if sp_mode == "all_to_all":

+ attn_output = attn_output.reshape(bsz, q_len, self.num_heads * self.head_dim).contiguous() # e.g. (1, 8, 128): full sequence, local heads

+ attn_output = all_to_all_comm(attn_output, sp_group, scatter_dim=1, gather_dim=2) # e.g. (1, 4, 256): local sequence, all heads

+ else:

+ attn_output = attn_output.reshape(bsz, q_len, self.hidden_size)

+

+ attn_output = self.o_proj(attn_output)

+

+ if not output_attentions:

+ attn_weights = None

+

+ return attn_output, attn_weights, past_key_value

+

+ return forward

+

+

+def get_deepseek_flash_attention_model_forward(shard_config, sp_mode=None, sp_size=None, sp_group=None):

+ logger = logging.get_logger(__name__)

+

+ def forward(

+ self,

+ input_ids: torch.LongTensor = None,

+ attention_mask: Optional[torch.Tensor] = None,

+ position_ids: Optional[torch.LongTensor] = None,

+ past_key_values: Optional[List[torch.FloatTensor]] = None,

+ inputs_embeds: Optional[torch.FloatTensor] = None,

+ use_cache: Optional[bool] = None,

+ output_attentions: Optional[bool] = None,

+ output_hidden_states: Optional[bool] = None,

+ return_dict: Optional[bool] = None,

+ ) -> Union[Tuple, BaseModelOutputWithPast]:

+ output_attentions = output_attentions if output_attentions is not None else self.config.output_attentions

+ output_hidden_states = (

+ output_hidden_states if output_hidden_states is not None else self.config.output_hidden_states

+ )

+ use_cache = use_cache if use_cache is not None else self.config.use_cache

+

+ return_dict = return_dict if return_dict is not None else self.config.use_return_dict

+

+ # retrieve input_ids and inputs_embeds

+ if input_ids is not None and inputs_embeds is not None:

+ raise ValueError("You cannot specify both input_ids and inputs_embeds at the same time")

+ elif input_ids is not None:

+ batch_size, seq_length = input_ids.shape[:2]

+ elif inputs_embeds is not None:

+ batch_size, seq_length = inputs_embeds.shape[:2]

+ else:

+ raise ValueError("You have to specify either input_ids or inputs_embeds")

+

+ if self.gradient_checkpointing and self.training:

+ if use_cache:

+ logger.warning_once(

+ "`use_cache=True` is incompatible with gradient checkpointing. Setting `use_cache=False`."

+ )

+ use_cache = False

+

+ past_key_values_length = 0

+ if use_cache:

+ use_legacy_cache = not isinstance(past_key_values, Cache)

+ if use_legacy_cache:

+ past_key_values = DynamicCache.from_legacy_cache(past_key_values)

+ past_key_values_length = past_key_values.get_usable_length(seq_length)

+

+ if position_ids is None:

+ device = input_ids.device if input_ids is not None else inputs_embeds.device

+ position_ids = torch.arange(

+ past_key_values_length, seq_length + past_key_values_length, dtype=torch.long, device=device

+ )

+ position_ids = position_ids.unsqueeze(0)
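When a cache is present, `get_usable_length` supplies the number of already-cached tokens, and the new positions start from there. The sketch below covers just that arithmetic. It uses a hypothetical `make_position_ids` helper and passes the cache length in directly rather than reading it from a `DynamicCache`.

```python
import torch


def make_position_ids(seq_length: int, past_key_values_length: int = 0, device=None) -> torch.Tensor:
    # New tokens continue numbering where the cached prefix stops.
    position_ids = torch.arange(
        past_key_values_length, seq_length + past_key_values_length, dtype=torch.long, device=device
    )
    return position_ids.unsqueeze(0)  # [1, seq_length], broadcast over the batch
```

For example, a prefill of 4 tokens gets positions `[[0, 1, 2, 3]]`, and a single decoding step after 7 cached tokens gets `[[7]]`.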

+

+ if inputs_embeds is None:

+ inputs_embeds = self.embed_tokens(input_ids)

+

+ if self._use_flash_attention_2:

+ # 2d mask is passed through the layers

+ attention_mask = attention_mask if (attention_mask is not None and 0 in attention_mask) else None

+ elif self._use_sdpa and not output_attentions:

+ # output_attentions=True can not be supported when using SDPA, and we fall back on