Hello. Our production code broke after one of our data frames grew beyond 65536 rows.

For about a year, we have had code that merges a small data.frame into a big data.table with merge. Because merge dispatches on the class of its first argument, and that argument was a data.table, the call resolved to merge.data.table. I understand that mixing types is not correct; it was an oversight on our side.
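To illustrate the dispatch described above, here is a minimal sketch. The tables `df` and `dt` and their columns are made up for illustration and are not our production data.

```r
library(data.table)

# Hypothetical toy inputs: one plain data.frame and one data.table.
df <- data.frame(id = 1:3, x = c("a", "b", "c"))
dt <- data.table(id = 1:3, y = c(10, 20, 30))

# merge() is an S3 generic, so the method is picked from the class
# of the first argument only.
res_dt_first <- merge(dt, df, by = "id")  # first argument is a data.table
res_df_first <- merge(df, dt, by = "id")  # first argument is a data.frame

# The data.table-first call goes through merge.data.table and
# returns a data.table.
print(is.data.table(res_dt_first))
print(class(res_df_first))
```

This matches what happened in our code: the data.table was always the first argument, so merge.data.table was used even though the second argument was a data.frame.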

Last week our "small" data.frame got bigger, and RStudio started to crash, not only when merging with the big data.table but even with small ones. We switched to merge.data.frame and everything started working again, but our code now takes 12+ hours to run instead of 1+ hour, which is why I'm asking for help.

After some debugging, I found that everything works while the data.frame is below 65536 rows and fails once it grows past that. We tested the example on several machines: our two main machines show the error, but some others do not. I've posted the session info from one broken and one working machine below, FYI.

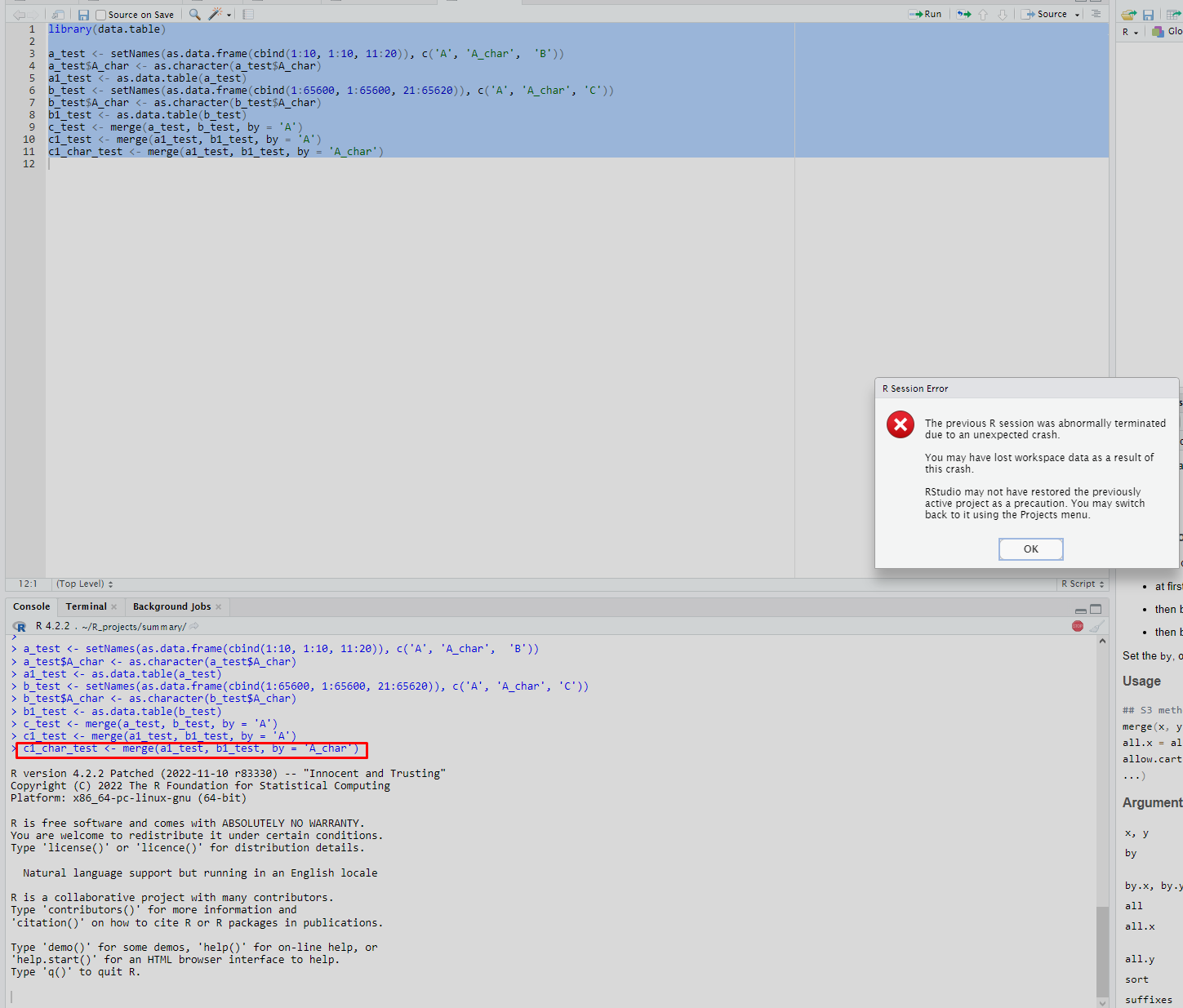

I've created an example that shows this behavior. As you can see, merge.data.frame works fine (a_test and b_test are data.frames), merge.data.table on a numeric column works fine (a1_test and b1_test are data.tables), and merge.data.table on a character column fails.
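Since the merge on a numeric column succeeds on the affected machines, one possible stopgap is to join on an integer surrogate key instead of the character column. This is only a sketch under that assumption: the tables `small` and `big` and the column `key_id` are hypothetical, and we have not confirmed that this avoids the crash.

```r
library(data.table)

# Hypothetical stand-ins for the real tables; column names follow this report.
small <- data.table(A_char = as.character(1:10), B = 11:20)
big   <- data.table(A_char = as.character(1:65600), C = 21:65620)

# Give every distinct character key one shared integer id using base R
# match(), so that the merge itself runs on an integer column.
keys <- unique(c(small$A_char, big$A_char))
small[, key_id := match(A_char, keys)]
big[,   key_id := match(A_char, keys)]

# Drop the character key from one side so it is not duplicated in the result.
big_no_char <- big[, setdiff(names(big), "A_char"), with = FALSE]

res <- merge(small, big_no_char, by = "key_id")
print(dim(res))
```

The extra match() pass costs some time, but it might still be far cheaper than falling back to merge.data.frame for the whole job.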

# Minimal reproducible example

library(data.table)

a_test <- setNames(as.data.frame(cbind(1:10, 1:10, 11:20)), c('A', 'A_char', 'B'))

a_test$A_char <- as.character(a_test$A_char)

a1_test <- as.data.table(a_test)

b_test <- setNames(as.data.frame(cbind(1:65600, 1:65600, 21:65620)), c('A', 'A_char', 'C'))

b_test$A_char <- as.character(b_test$A_char)

b1_test <- as.data.table(b_test)

c_test <- merge(a_test, b_test, by = 'A')

c1_test <- merge(a1_test, b1_test, by = 'A')

c1_char_test <- merge(a1_test, b1_test, by = 'A_char')

# Output of sessionInfo()

> sessionInfo()

R version 4.2.2 Patched (2022-11-10 r83330)

Platform: x86_64-pc-linux-gnu (64-bit)

Running under: Ubuntu 18.04.6 LTS

Matrix products: default

BLAS: /usr/lib/x86_64-linux-gnu/blas/libblas.so.3.7.1

LAPACK: /usr/lib/x86_64-linux-gnu/lapack/liblapack.so.3.7.1

locale:

[1] LC_CTYPE=en_US.UTF-8 LC_NUMERIC=C

[3] LC_TIME=en_US.UTF-8 LC_COLLATE=en_US.UTF-8

[5] LC_MONETARY=en_US.UTF-8 LC_MESSAGES=en_US.UTF-8

[7] LC_PAPER=en_US.UTF-8 LC_NAME=C

[9] LC_ADDRESS=C LC_TELEPHONE=C

[11] LC_MEASUREMENT=en_US.UTF-8 LC_IDENTIFICATION=C

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] data.table_1.14.6

loaded via a namespace (and not attached):

[1] compiler_4.2.2 tools_4.2.2

# Output of sessionInfo() from a machine where everything works

> sessionInfo()

R version 4.2.1 (2022-06-23)

Platform: x86_64-pc-linux-gnu (64-bit)

Running under: Ubuntu 18.04.6 LTS

Matrix products: default

BLAS: /usr/lib/x86_64-linux-gnu/openblas/libblas.so.3

LAPACK: /usr/lib/x86_64-linux-gnu/libopenblasp-r0.2.20.so

locale:

[1] LC_CTYPE=en_US.UTF-8 LC_NUMERIC=C LC_TIME=en_US.UTF-8 LC_COLLATE=en_US.UTF-8 LC_MONETARY=en_US.UTF-8 LC_MESSAGES=en_US.UTF-8

[7] LC_PAPER=en_US.UTF-8 LC_NAME=C LC_ADDRESS=C LC_TELEPHONE=C LC_MEASUREMENT=en_US.UTF-8 LC_IDENTIFICATION=C

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] data.table_1.14.6

loaded via a namespace (and not attached):

[1] compiler_4.2.1 tools_4.2.1

I've found a somewhat similar issue, #4733, which is why I've linked it.

I can provide any other debug information if you tell me how. We're looking for help because we cannot afford 12+ hours of analysis in production.
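As a starting point for extra debug output, this is a sketch of what we could run on an affected machine. It assumes that the thread count and data.table's verbose output are useful things to look at; we do not know whether either is related to the crash. On an affected machine the merge call may still crash the session. The names `a1`, `b1`, and `old_threads` are illustrative.

```r
library(data.table)

# Report how many threads data.table uses and where that number comes from.
getDTthreads(verbose = TRUE)

# Rebuild the failing inputs from the example: character key, > 65536 rows.
a1 <- data.table(A_char = as.character(1:10), B = 11:20)
b1 <- data.table(A_char = as.character(1:65600), C = 21:65620)

# Assumption: forcing a single thread may show whether parallel code is involved.
old_threads <- setDTthreads(1)

# Ask data.table to print internal progress messages during the merge.
options(datatable.verbose = TRUE)
res <- merge(a1, b1, by = "A_char")
options(datatable.verbose = FALSE)

# Restore the previous thread setting.
setDTthreads(old_threads)
print(nrow(res))
```

If the merge succeeds with one thread but crashes with the default setting, that would at least narrow down where to look.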
