fwrite() UTF-8 csv file #4785

| Original file line number | Diff line number | Diff line change |

|---|---|---|

|

|

@@ -19,7 +19,8 @@ fwrite(x, file = "", append = FALSE, quote = "auto", | |

| compress = c("auto", "none", "gzip"), | ||

| yaml = FALSE, | ||

| bom = FALSE, | ||

| verbose = getOption("datatable.verbose", FALSE)) | ||

| verbose = getOption("datatable.verbose", FALSE), | ||

| encoding = "") | ||

| } | ||

| \arguments{ | ||

| \item{x}{Any \code{list} of same length vectors; e.g. \code{data.frame} and \code{data.table}. If \code{matrix}, it gets internally coerced to \code{data.table} preserving col names but not row names} | ||

|

|

@@ -59,6 +60,7 @@ fwrite(x, file = "", append = FALSE, quote = "auto", | |

| \item{yaml}{If \code{TRUE}, \code{fwrite} will output a CSVY file, that is, a CSV file with metadata stored as a YAML header, using \code{\link[yaml]{as.yaml}}. See \code{Details}. } | ||

| \item{bom}{If \code{TRUE} a BOM (Byte Order Mark) sequence (EF BB BF) is added at the beginning of the file; format 'UTF-8 with BOM'.} | ||

| \item{verbose}{Be chatty and report timings?} | ||

| \item{encoding}{ The encoding of the strings written to the CSV file. Default is \code{""}, which means writing raw bytes without considering the encoding. Other possible options are \code{"UTF-8"} and \code{"native"}. } | ||

|

Member

if this matches the behavior of

Member

Author

It's a little bit different and the |

||

| } | ||

| \details{ | ||

| \code{fwrite} began as a community contribution with \href{https://github.com/Rdatatable/data.table/pull/1613}{pull request #1613} by Otto Seiskari. This gave Matt Dowle the impetus to specialize the numeric formatting and to parallelize: \url{https://www.h2o.ai/blog/fast-csv-writing-for-r/}. Final items were tracked in \href{https://github.com/Rdatatable/data.table/issues/1664}{issue #1664} such as automatic quoting, \code{bit64::integer64} support, decimal/scientific formatting exactly matching \code{write.csv} between 2.225074e-308 and 1.797693e+308 to 15 significant figures, \code{row.names}, dates (between 0000-03-01 and 9999-12-31), times and \code{sep2} for \code{list} columns where each cell can itself be a vector. | ||

|

|

||

| Original file line number | Diff line number | Diff line change |

|---|---|---|

|

|

@@ -5,18 +5,23 @@ | |

| #define DATETIMEAS_EPOCH 2 | ||

| #define DATETIMEAS_WRITECSV 3 | ||

|

|

||

| static bool utf8=false; | ||

| static bool native=false; | ||

| #define TO_UTF8(s) (utf8 && NEED2UTF8(s)) | ||

|

Member

nit: Nothing comes to mind unfortunately, worth considering some more though. |

||

| #define TO_NATIVE(s) (native && (s)!=NA_STRING && !IS_ASCII(s)) | ||

|

Member

@jangorecki I think you recommended putting |

||

| #define ENCODED_CHAR(s) (TO_UTF8(s) ? translateCharUTF8(s) : (TO_NATIVE(s) ? translateChar(s) : CHAR(s))) | ||

|

Member

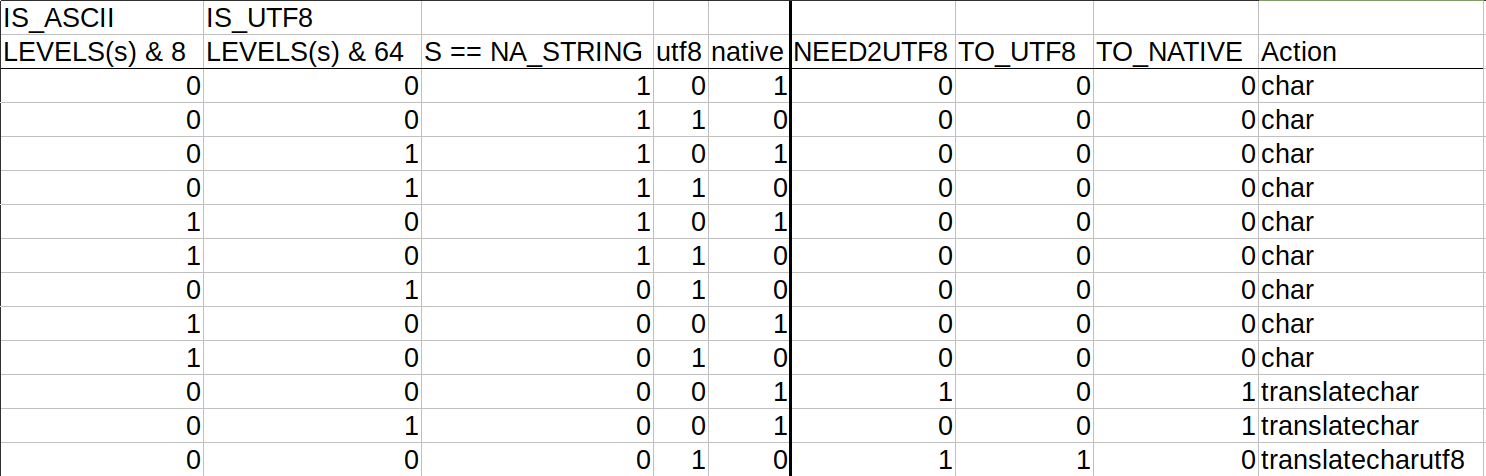

note that how about or based on this truth table (the other 22/32 combinations are impossible because

Member

Author

Yes, you're right, but on second thought I still prefer the current version. The reasons are:

|

||

|

|

||

| static char sep2; // '\0' if there are no list columns. Otherwise, the within-column separator. | ||

| static bool logical01=true; // should logicals be written as 0|1 or true|false. Needed by list column writer too in case a cell is a logical vector. | ||

| static int dateTimeAs=0; // 0=ISO(yyyy-mm-dd), 1=squash(yyyymmdd), 2=epoch, 3=write.csv | ||

| static const char *sep2start, *sep2end; | ||

| // sep2 is in main fwrite.c so that writeString can quote other fields if sep2 is present in them | ||

| // if there are no list columns, set sep2=='\0' | ||

|

|

||

| // Non-agnostic helpers ... | ||

|

|

||

| const char *getString(SEXP *col, int64_t row) { // TODO: inline for use in fwrite.c | ||

| SEXP x = col[row]; | ||

| return x==NA_STRING ? NULL : CHAR(x); | ||

| return x==NA_STRING ? NULL : ENCODED_CHAR(x); | ||

| } | ||

|

|

||

| int getStringLen(SEXP *col, int64_t row) { | ||

|

|

@@ -45,7 +50,7 @@ int getMaxCategLen(SEXP col) { | |

| const char *getCategString(SEXP col, int64_t row) { | ||

| // the only writer that needs to have the header of the SEXP column, to get to the levels | ||

| int x = INTEGER(col)[row]; | ||

| return x==NA_INTEGER ? NULL : CHAR(STRING_ELT(getAttrib(col, R_LevelsSymbol), x-1)); | ||

| return x==NA_INTEGER ? NULL : ENCODED_CHAR(STRING_ELT(getAttrib(col, R_LevelsSymbol), x-1)); | ||

| } | ||

|

|

||

| writer_fun_t funs[] = { | ||

|

|

@@ -164,10 +169,12 @@ SEXP fwriteR( | |

| SEXP is_gzip_Arg, | ||

| SEXP bom_Arg, | ||

| SEXP yaml_Arg, | ||

| SEXP verbose_Arg | ||

| SEXP verbose_Arg, | ||

| SEXP encoding_Arg | ||

| ) | ||

| { | ||

| if (!isNewList(DF)) error(_("fwrite must be passed an object of type list; e.g. data.frame, data.table")); | ||

|

|

||

| fwriteMainArgs args = {0}; // {0} to quieten valgrind's uninitialized, #4639 | ||

| args.is_gzip = LOGICAL(is_gzip_Arg)[0]; | ||

| args.bom = LOGICAL(bom_Arg)[0]; | ||

|

|

@@ -224,6 +231,8 @@ SEXP fwriteR( | |

| dateTimeAs = INTEGER(dateTimeAs_Arg)[0]; | ||

| logical01 = LOGICAL(logical01_Arg)[0]; | ||

| args.scipen = INTEGER(scipen_Arg)[0]; | ||

| utf8 = !strcmp(CHAR(STRING_ELT(encoding_Arg, 0)), "UTF-8"); | ||

| native = !strcmp(CHAR(STRING_ELT(encoding_Arg, 0)), "native"); | ||

|

|

||

| int firstListColumn = 0; | ||

| for (int j=0; j<args.ncol; j++) { | ||

|

|

||
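The diff above sets two file-level flags from `encoding_Arg` and then lets `ENCODED_CHAR` pick one of three accessors per string. As a minimal standalone sketch of that decision only, the following C code reproduces the branching without the R headers. The names `conv_t`, `enc_flags_t`, `parse_encoding` and `choose_conversion` are hypothetical and do not appear in the PR; the boolean inputs `is_na`, `is_ascii` and `need2utf8` stand in for `(s)==NA_STRING`, `IS_ASCII(s)` and `NEED2UTF8(s)`, whose definitions are not shown here.

```c
#include <stdbool.h>
#include <string.h>

// Hypothetical labels for the three accessors that ENCODED_CHAR can select.
typedef enum {
  CONV_NONE,   // CHAR(s): raw bytes, no translation
  CONV_UTF8,   // translateCharUTF8(s)
  CONV_NATIVE  // translateChar(s)
} conv_t;

// Hypothetical struct mirroring the two static flags `utf8` and `native`.
typedef struct {
  bool utf8;
  bool native;
} enc_flags_t;

// Same comparisons as in fwriteR(): exact, case-sensitive matches.
// Any other value, including "", leaves both flags false.
static enc_flags_t parse_encoding(const char *encoding) {
  enc_flags_t f;
  f.utf8 = !strcmp(encoding, "UTF-8");
  f.native = !strcmp(encoding, "native");
  return f;
}

// Mirrors the nested conditional in ENCODED_CHAR.
// The three booleans are assumed to be precomputed properties of the string.
static conv_t choose_conversion(enc_flags_t f, bool is_na, bool is_ascii, bool need2utf8) {
  if (f.utf8 && need2utf8) return CONV_UTF8;                // TO_UTF8(s)
  if (f.native && !is_na && !is_ascii) return CONV_NATIVE;  // TO_NATIVE(s)
  return CONV_NONE;                                         // fall back to CHAR(s)
}
```

With `encoding = ""` both flags stay false, so every string takes the `CHAR(s)` path, which agrees with the documented default of writing raw bytes. Because both flags come from comparing the same string against different literals, they can never be true together, so the order of the two tests does not change the result.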

nit: could have signature `encoding = c("", "UTF-8", "native")` and use `match.arg` instead of the check below


The encoding arg of `fread()` doesn't use this signature, either. The reason is that the empty string does not seem to work well with `match.arg()`:

Created on 2020-10-30 by the reprex package (v0.3.0)

Filed the bug at https://bugs.r-project.org/bugzilla/show_bug.cgi?id=17959
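The check discussed in this thread lives on the R side and is not shown on this page. Purely as an illustration of an exact-match check on the three documented values (`""`, `"UTF-8"`, `"native"`), here is a C sketch. The helper name `is_valid_encoding` is hypothetical and is not part of data.table or of this PR.

```c
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

// Hypothetical helper: true only for the three values documented for
// fwrite()'s encoding argument. Matching is exact and case-sensitive,
// and the empty string is handled like any other allowed value.
static bool is_valid_encoding(const char *encoding) {
  static const char *const allowed[] = { "", "UTF-8", "native" };
  if (encoding == NULL) return false;
  for (size_t i = 0; i < sizeof allowed / sizeof allowed[0]; i++) {
    if (strcmp(encoding, allowed[i]) == 0) return true;
  }
  return false;
}
```

An explicit comparison like this treats `""` as an ordinary candidate, which sidesteps the empty-string difficulty with `match.arg()` mentioned above.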