We want to use the Kafka indexing service for streaming events, but I'm running into a problem even in a very simple case.

- Install Druid on one machine (using imply-2.5.8.tar.gz).

- Install Kafka on the same machine.

- Create a topic and submit this supervisor spec:

{
  "type": "kafka",
  "dataSchema": {
    "dataSource": "metrics-ocean4",
    "parser": {
      "type": "string",
      "parseSpec": {
        "format": "json",
        "timestampSpec": {
          "column": "timestamp",
          "format": "auto"
        },
        "dimensionsSpec": {
          "dimensions": [],
          "dimensionExclusions": [
            "timestamp",
            "value"
          ]
        }
      }
    },
    "metricsSpec": [
      {
        "name": "count",
        "type": "count"
      },
      {
        "name": "value_sum",
        "fieldName": "value",
        "type": "doubleSum"
      },
      {
        "name": "value_min",
        "fieldName": "value",
        "type": "doubleMin"
      },
      {
        "name": "value_max",
        "fieldName": "value",
        "type": "doubleMax"
      }
    ],
    "granularitySpec": {
      "type": "uniform",
      "segmentGranularity": "MINUTE",
      "queryGranularity": "NONE"
    }
  },
  "tuningConfig": {
    "type": "kafka",
    "maxRowsPerSegment": 5000000,
    "intermediatePersistPeriod": "PT5M",
    "basePersistDirectory": "/home/qspace/data/mmgamedruiddata/tmp"
  },
  "ioConfig": {
    "topic": "oceantopic4",
    "consumerProperties": {
      "bootstrap.servers": "10.242.20.145:9092"
    },
    "taskCount": 1,
    "replicas": 1,
    "taskDuration": "PT10M",
    "completionTimeout": "PT10M"
  }
}
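For anyone trying to reproduce this, test events for the spec above need a `timestamp` field and a numeric `value` field. As far as I understand, because `dimensions` is empty, any other top-level field is picked up as a string dimension. Here is a minimal Python sketch that generates such events as JSON lines. The `host` field and the `make_event` helper are hypothetical, made up for illustration, and the lines still have to be sent to `oceantopic4` with whatever producer you use.

```python
import json
from datetime import datetime, timezone


def make_event(value, host="host-1", now=None):
    """Build one JSON line shaped for the parseSpec above.

    `host` is a hypothetical dimension; `timestamp` and `value` are the
    only field names taken from the spec itself.
    """
    now = now or datetime.now(timezone.utc)
    return json.dumps({
        # ISO 8601 in UTC, which the "auto" timestamp format should accept
        "timestamp": now.strftime("%Y-%m-%dT%H:%M:%SZ"),
        "host": host,
        # feeds the doubleSum / doubleMin / doubleMax metrics
        "value": float(value),
    })


if __name__ == "__main__":
    # Print a few sample events, one JSON object per line
    for v in (1.5, 2.0, 3.25):
        print(make_event(v))
```

Each printed line is one Kafka message body.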

Note that I set the task duration to 10 minutes (PT10M) because I want a quick test. But most of my tasks fail in the publishing state.

The failed task's log seems to stop at

"2018-04-17T11:25:33,184 INFO [task-runner-0-priority-0] io.druid.indexing.kafka.KafkaIndexTask - Pausing ingestion until resumed" and the task never resumes.

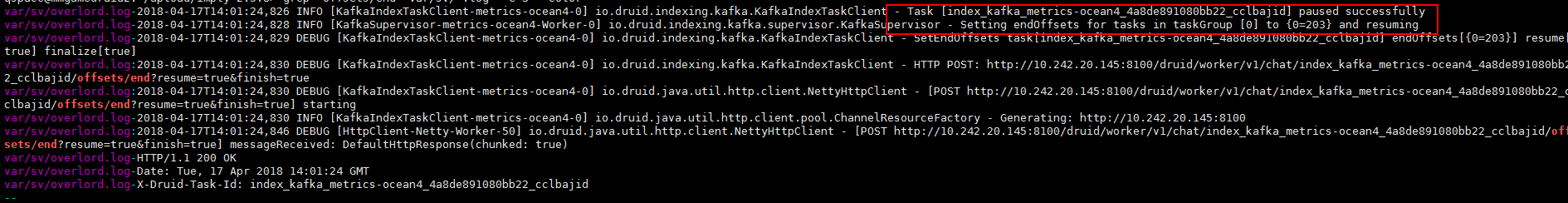

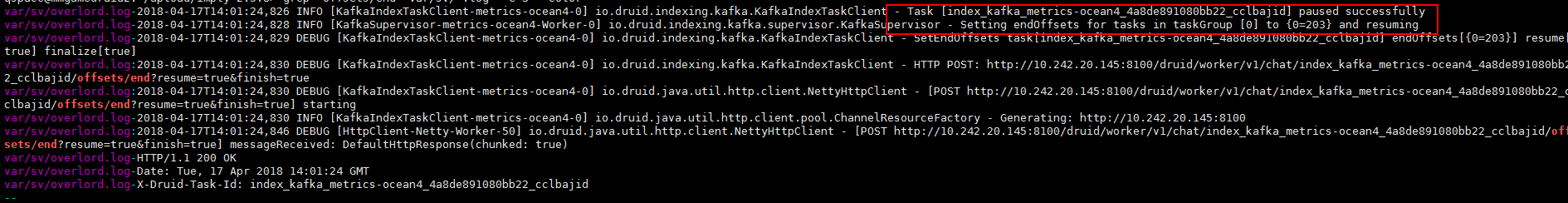

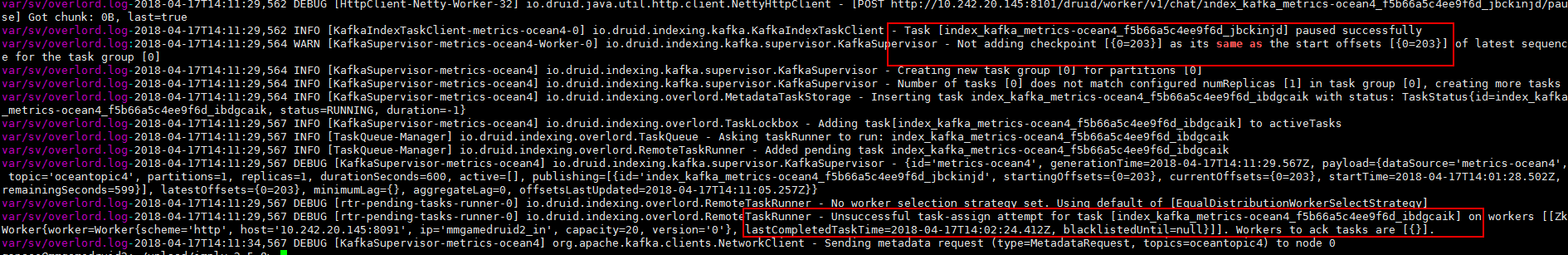

I set the Overlord process log level to debug. When the index task (on the MiddleManager) pauses, the Overlord sends offset/end to the index task so that it publishes its segments.

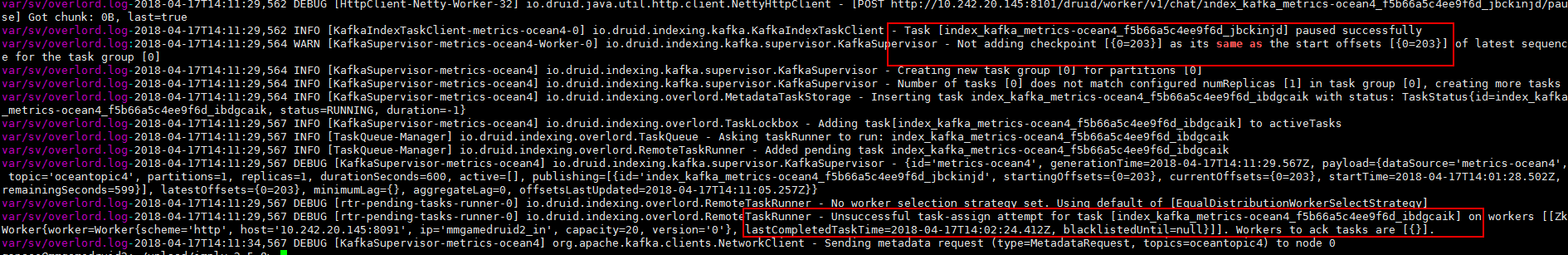

If there aren't any events, the offsets will not change. In that case, does the Overlord no longer send "offset/end"?

If the index task (on the MiddleManager) never receives a resume, it stays paused until the completion timeout (completionTimeout) expires.
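To compare what the supervisor reports with what the task log shows while a task is paused, the Overlord's supervisor status endpoint can be polled. A minimal sketch, assuming the Overlord listens on `http://localhost:8090` (adjust to your setup) and that the supervisor id equals the dataSource name `metrics-ocean4`. The helper names are made up for illustration.

```python
import json
from urllib.parse import quote
from urllib.request import urlopen

# Assumption: the Overlord is reachable here; change host and port as needed.
OVERLORD = "http://localhost:8090"


def supervisor_status_url(supervisor_id, overlord=OVERLORD):
    """Build the URL of the Overlord's supervisor status endpoint."""
    return f"{overlord}/druid/indexer/v1/supervisor/{quote(supervisor_id, safe='')}/status"


def fetch_supervisor_status(supervisor_id, overlord=OVERLORD, timeout=10):
    """GET the supervisor status report and return it as parsed JSON."""
    with urlopen(supervisor_status_url(supervisor_id, overlord), timeout=timeout) as resp:
        return json.load(resp)


if __name__ == "__main__":
    # Assumption: the supervisor id is the dataSource name from the spec.
    status = fetch_supervisor_status("metrics-ocean4")
    print(json.dumps(status, indent=2))
```

Running this while a task sits at "Pausing ingestion until resumed" shows the supervisor's view of its tasks at that moment.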

Am I missing something? Could anyone help? Thanks in advance!

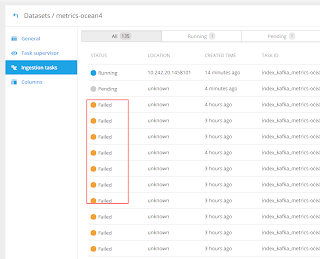

Overlord log for a successful index task:

Overlord log for a failed index task:
