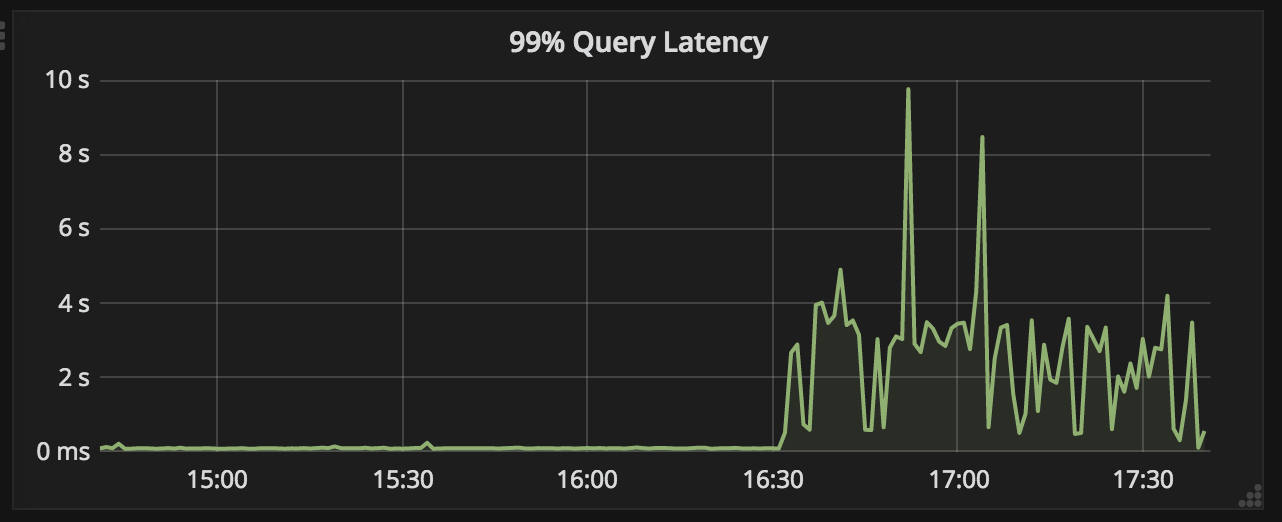

After upgrading some of our historical nodes from 0.11.0 to 0.12, we observed significant performance degradation on them. The graph below shows the 99th-percentile query latency of one node: before the upgrade it was around 100 ms, and after the upgrade (at around 16:30) it soared to almost 10 s.

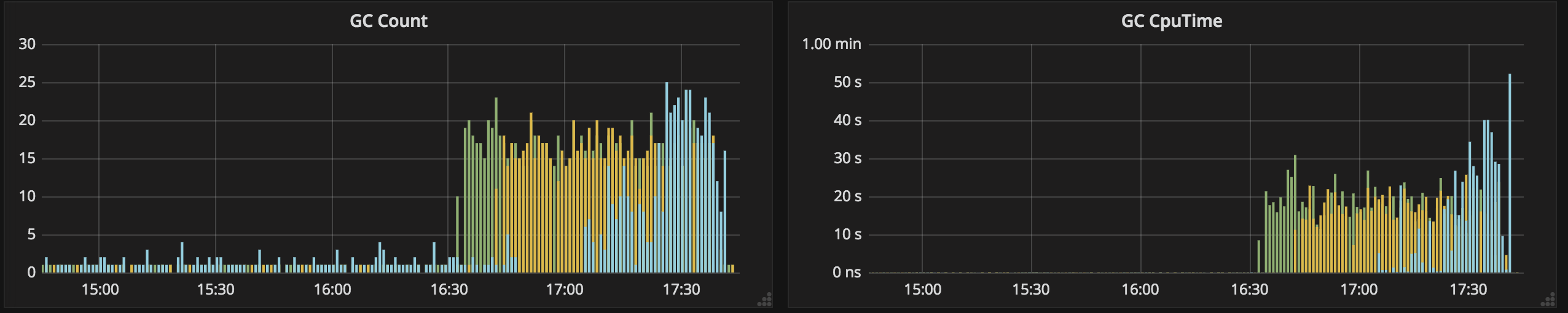

In addition to latency, GC activity also increased significantly.

The GC log shows frequent, long stop-the-world (STW) pauses, which could be the direct cause of the latency increase:

```
2018-08-27T16:34:00.400+0800: 325.630: Total time for which application threads were stopped: 3.1070312 seconds, Stopping threads took: 0.0009851 seconds
2018-08-27T16:34:03.996+0800: 329.226: Total time for which application threads were stopped: 2.8285654 seconds, Stopping threads took: 0.0032638 seconds
2018-08-27T16:34:12.182+0800: 337.412: Total time for which application threads were stopped: 1.2309673 seconds, Stopping threads took: 0.0014758 seconds
2018-08-27T16:34:16.974+0800: 342.204: Total time for which application threads were stopped: 2.2249500 seconds, Stopping threads took: 0.0005618 seconds
```
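To put numbers on an excerpt like this, here is a minimal Python sketch that parses these safepoint lines and aggregates them. It assumes the lines are the JDK 8 output of `-XX:+PrintGCApplicationStoppedTime` (which is what they look like), and the function names `parse_pauses` and `summarize` are hypothetical. For the four lines in the excerpt, the pauses add up to about 9.4 s of stopped time, logged over roughly 16.6 s of JVM uptime (325.630 s to 342.204 s), while "Stopping threads took" never exceeds about 3 ms. That suggests the time is spent inside the safepoint operations themselves rather than in bringing threads to a safepoint.

```python
import re
import sys
from typing import Dict, Iterable, List, Tuple

# Assumed format: JDK 8 safepoint lines emitted with -XX:+PrintGCApplicationStoppedTime, e.g.
# "2018-08-27T16:34:00.400+0800: 325.630: Total time for which application threads
#  were stopped: 3.1070312 seconds, Stopping threads took: 0.0009851 seconds"
STOPPED_RE = re.compile(
    r"(?P<uptime>\d+\.\d+): Total time for which application threads were stopped: "
    r"(?P<stopped>\d+\.\d+) seconds, Stopping threads took: (?P<ttsp>\d+\.\d+) seconds"
)

# (JVM uptime in s, total stopped time in s, time-to-safepoint in s)
Pause = Tuple[float, float, float]


def parse_pauses(lines: Iterable[str]) -> List[Pause]:
    """Extract one Pause per matching log line; lines that do not match are skipped."""
    pauses: List[Pause] = []
    for line in lines:
        m = STOPPED_RE.search(line)
        if m:
            pauses.append((float(m["uptime"]), float(m["stopped"]), float(m["ttsp"])))
    return pauses


def summarize(pauses: List[Pause]) -> Dict[str, float]:
    """Aggregate pause statistics; the max values default to 0.0 for an empty list."""
    stopped = [p[1] for p in pauses]
    ttsp = [p[2] for p in pauses]
    return {
        "count": len(stopped),
        "total_stopped_s": sum(stopped),
        "max_stopped_s": max(stopped, default=0.0),
        "max_ttsp_s": max(ttsp, default=0.0),
    }


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python gc_pauses.py gc.log  (script and log file names are hypothetical)
    with open(sys.argv[1]) as f:
        print(summarize(parse_pauses(f)))
```

Running it over the full GC log, rather than just this excerpt, would show whether the pattern holds for the whole post-upgrade period.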
