Affected Version

0.16, 0.15 (and technically all versions of Druid)

Description

When sampling a datasource with the data loader sampler (via the `ingestSegment` firehose), setting the interval even a little too large produces an HTTP call that never returns in time. It loads far too much data and does not respect the sampler's `numRows` or `timeoutMs` settings.
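The sketch below shows why such settings cannot help in this case. It is a hypothetical sampler loop: `SamplerLimits` and `sample` are illustrative names, not Druid's actual API. The loop checks the row limit and the deadline only between rows, so neither limit bounds any work that happens before the first row is produced.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;
import java.util.function.LongSupplier;
import java.util.stream.IntStream;

public class SamplerLimits {
    /**
     * Hypothetical sampler loop (not Druid's code): pulls rows one at a time and
     * stops once numRows rows are collected or timeoutMs has elapsed.
     * Both limits are checked only between rows.
     */
    static List<String> sample(Iterator<String> rows, int numRows, long timeoutMs, LongSupplier clockMs) {
        long deadline = clockMs.getAsLong() + timeoutMs;
        List<String> out = new ArrayList<>();
        while (out.size() < numRows && clockMs.getAsLong() < deadline && rows.hasNext()) {
            out.add(rows.next());
        }
        return out;
    }

    public static void main(String[] args) {
        // An effectively unbounded, lazily produced row source: the numRows limit stops it.
        Iterator<String> rows = IntStream.iterate(0, i -> i + 1).mapToObj(i -> "row-" + i).iterator();
        List<String> sampled = sample(rows, 500, 15_000, System::currentTimeMillis);
        System.out.println(sampled.size()); // prints 500
    }
}
```

If building `rows` (or the first `hasNext()` call) blocks for minutes, this loop never gets a chance to compare against `numRows` or the deadline.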

The root cause is the unusually large amount of work done in the ingestSegmentFirehose constructor, which stalls everything before the sampler can apply its limits.
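A minimal sketch contrasting two approaches: loading every segment up front, and loading each segment only when a row from it is requested. It uses hypothetical names (`LazySegmentRows`, `loadSegment`, `eager`, `lazy`, `take`) and an in-memory stand-in for segment reads. It is not Druid's ingestSegmentFirehose code. It only shows why a row limit such as `numRows` can cut work short in the lazy case but not in the eager one.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.Iterator;
import java.util.List;
import java.util.NoSuchElementException;

public class LazySegmentRows {
    // Hypothetical counter so the example can report how many segments were read.
    static int segmentsLoaded = 0;

    // Hypothetical stand-in for reading one segment: returns 100 rows for it.
    static List<String> loadSegment(String segmentId) {
        segmentsLoaded++;
        List<String> rows = new ArrayList<>();
        for (int i = 0; i < 100; i++) rows.add(segmentId + ":" + i);
        return rows;
    }

    // Eager pattern: every segment is loaded before the first row is returned.
    static Iterator<String> eager(List<String> segmentIds) {
        List<String> all = new ArrayList<>();
        for (String id : segmentIds) all.addAll(loadSegment(id));
        return all.iterator();
    }

    // Lazy pattern: a segment is loaded only when the caller asks for one of its rows.
    static Iterator<String> lazy(List<String> segmentIds) {
        return new Iterator<String>() {
            private final Iterator<String> ids = segmentIds.iterator();
            private Iterator<String> current = Collections.emptyIterator();

            @Override
            public boolean hasNext() {
                while (!current.hasNext() && ids.hasNext()) {
                    current = loadSegment(ids.next()).iterator();
                }
                return current.hasNext();
            }

            @Override
            public String next() {
                if (!hasNext()) throw new NoSuchElementException();
                return current.next();
            }
        };
    }

    // Consume at most n rows, mimicking a sampler's row limit; returns rows consumed.
    static int take(Iterator<String> rows, int n) {
        int count = 0;
        while (count < n && rows.hasNext()) {
            rows.next();
            count++;
        }
        return count;
    }

    public static void main(String[] args) {
        List<String> segments = new ArrayList<>();
        for (int i = 0; i < 1000; i++) segments.add("seg" + i);

        segmentsLoaded = 0;
        take(eager(segments), 150);
        System.out.println("eager: " + segmentsLoaded); // prints "eager: 1000"

        segmentsLoaded = 0;
        take(lazy(segments), 150);
        System.out.println("lazy: " + segmentsLoaded); // prints "lazy: 2"
    }
}
```

With 1,000 segments and a 150-row limit, the eager version reads every segment before returning a single row, while the lazy version reads only two.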

Here is an example request:

```json
{
  "type": "index",
  "spec": {
    "type": "index",
    "ioConfig": {
      "type": "index",
      "firehose": {
        "type": "ingestSegment",
        "interval": "2010-06-27/2020-06-27",
        "dataSource": "wikipedia"
      }
    },
    "dataSchema": {
      "dataSource": "sample",
      "parser": {
        "type": "string",
        "parseSpec": {
          "format": "regex",
          "pattern": "(.*)",
          "columns": [
            "a"
          ],
          "dimensionsSpec": {},
          "timestampSpec": {
            "column": "!!!_no_such_column_!!!",
            "missingValue": "2010-01-01T00:00:00Z"
          }
        }
      }
    }
  },
  "samplerConfig": {
    "numRows": 500,
    "timeoutMs": 15000
  }
}
```

The `interval` here spans ten years. Pray that it does not contain many segments: if it does, the request does not return within `timeoutMs`.

Impact:

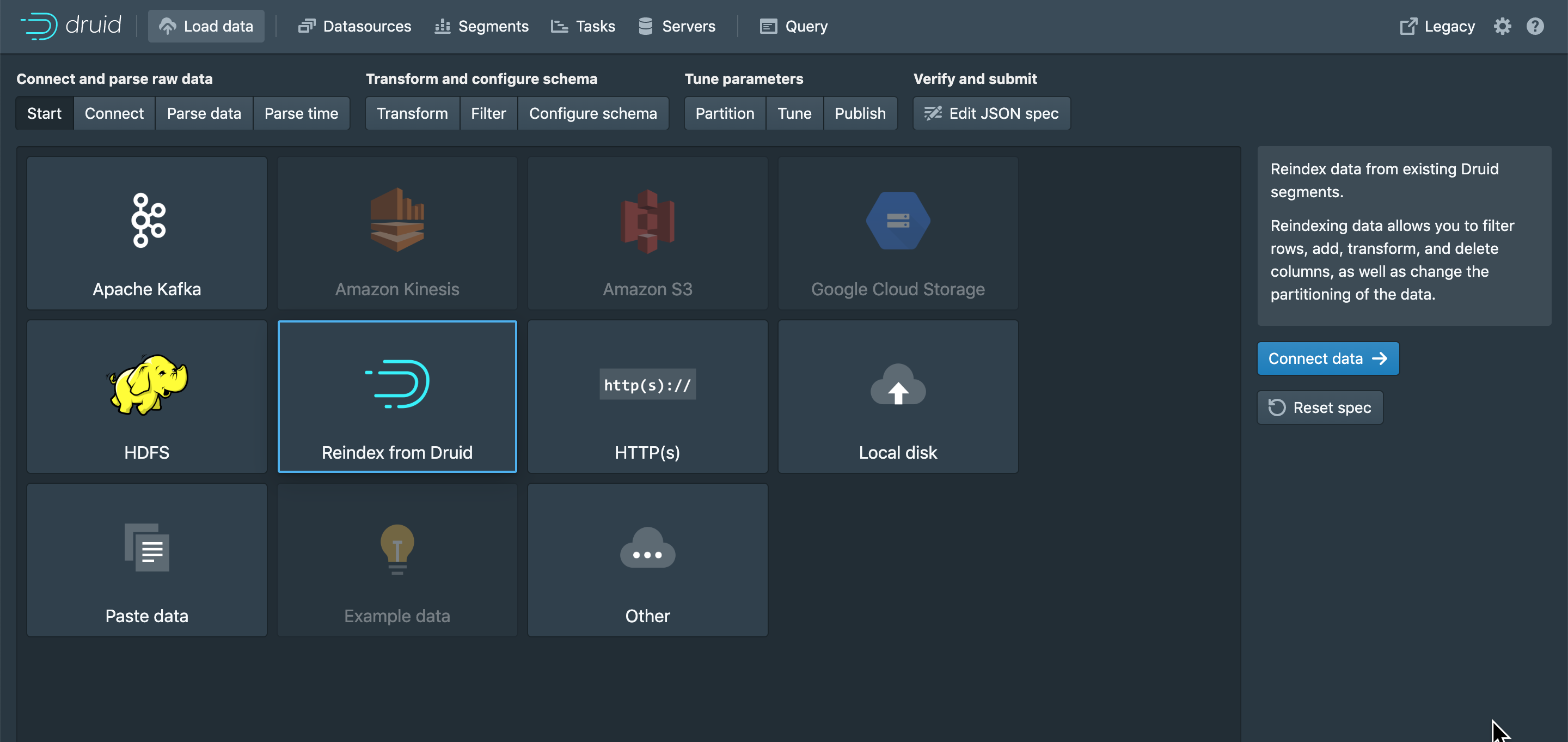

Fixing this will make the data loader work better for re-ingesting segments.
