Conversation

|

Opened now to get any comments on approach before finishing enumerating the different metrics |

|

This pull request has been marked as stale due to 60 days of inactivity. It will be closed in 4 weeks if no further activity occurs. If you think that's incorrect or this pull request should instead be reviewed, please simply write any comment. Even if closed, you can still revive the PR at any time or discuss it on the dev@druid.apache.org list. Thank you for your contributions. |

|

@michaelschiff apologize for the delayed review. I'll take a look the PR soon. I'm closing and reopening the PR to trigger the CI (Druid repository name has changed due to the deincubation and the CI failure logs have gone). |

|

@jihoonson Sure - let me know if you need anything from me |

jihoonson

left a comment

jihoonson

left a comment

There was a problem hiding this comment.

Thanks. I quickly looked through the Travis failure (Sadly, reopening this PR didn't trigger the TC 😢) and the below failure looks legit.

../docs/development/extensions-contrib/prometheus.md

39 | e port on which to expose the prometheus HTTPServer.|yes|none|

39 | hich to expose the prometheus HTTPServer.|yes|none|

41 | ed dimensions, help text, and conversionFactor for every Druid metric.|no|De

../docs/operations/metrics.md

240 | pool/count`|Bufferpool count.|bufferpoolName.|Varies.|

241 | erpool/used`|Bufferpool used.|bufferpoolName.|close to capacity|

>> 5 spelling errors found in 162 files

The CI does the spell check and this error means there are some unknown words which are not registered on the dictionary. You may want to skip them by adding some of them to the .spelling file.

e36a1ca to

88e6f24

Compare

|

Oops, @michaelschiff sorry, I forgot about this PR. Would you please resolve the conflicts? Also, Git says this PR changes 808 files, but are they all yours? Please double check this PR contains only your changes. |

|

clone this emitter and used in my test cluster |

|

@cxlRay do you see the appropriate port open by each of the druid processes? If so can you try to |

|

@jihoonson FYI @Tiaaa will be taking over this PR (I've changed jobs and can't commit to the source fork). I'll help however I can to see this over the line though. |

|

@michaelschiff thanks for the update. @Tiaaa would you please double check that this PR contains only your changes? |

… updated statsd json)

* API to verify a datasource has the latest ingested data * API to verify a datasource has the latest ingested data * API to verify a datasource has the latest ingested data * API to verify a datasource has the latest ingested data * API to verify a datasource has the latest ingested data * fix checksyle * API to verify a datasource has the latest ingested data * API to verify a datasource has the latest ingested data * API to verify a datasource has the latest ingested data * API to verify a datasource has the latest ingested data * fix spelling * address comments * fix checkstyle * update docs * fix tests * fix doc * address comments * fix typo * fix spelling * address comments * address comments * fix typo in docs

* All aggregators should use vectorization-aware column processor * All aggregators should use vectorization-aware column processor * fix canVectorize * fix canVectorize * add tests * revert back default * address comment * address comments * address comment * address comment

…e#10040) Fix Avatica based metadata queries by appending ESCAPE '\' clause to the LIKE expressions

… accesses replaceable with Collections.empty*()" (apache#9690) * IntelliJ inspection and checkstyle rule for "Collection.EMPTY_* field accesses replaceable with Collections.empty*()" * Reverted checkstyle rule * Added tests to pass CI * Codestyle

Co-authored-by: tomscut <tomscut@gmail.com>

* global table if only joinable * oops * fix style, add more tests * Update sql/src/test/java/org/apache/druid/sql/calcite/schema/DruidSchemaTest.java * better information schema columns, distinguish broadcast from joinable * fix javadoc * fix mistake Co-authored-by: Jihoon Son <jihoonson@apache.org>

…les (apache#10048) * Coordinator loadstatus API full format does not consider Broadcast rules * address comments * fix checkstyle * minor optimization * address comments

…native batch ingestion (apache#10025) * Fill in the core partition set size properly for batch ingestion with dynamic partitioning * incomplete javadoc * Address comments * fix tests * fix json serde, add tests * checkstyle * Set core partition set size for hash-partitioned segments properly in batch ingestion * test for both parallel and single-threaded task * unused variables * fix test * unused imports * add hash/range buckets * some test adjustment and missing json serde * centralized partition id allocation in parallel and simple tasks * remove string partition chunk * revive string partition chunk * fill numCorePartitions for hadoop * clean up hash stuffs * resolved todos * javadocs * Fix tests * add more tests * doc * unused imports

* Fix join filter rewrites with nested queries * Fix test, inspection, coverage * Remove clauses from group key * Fix import order Co-authored-by: Gian Merlino <gianmerlino@gmail.com>

…apache#10053) * fix topn on string columns with non-sorted or non-unique dictionaries * fix metadata tests * refactor, clarify comments and code, fix ci failures

… loadstatus API (apache#10054) * Add safeguard to make sure new Rules added are aware of Rule usuage in loadstatus API * address comments * address comments * add tests

* fix docs error: google to azure and hdfs to http * fix docs error: indexSpecForIntermediatePersists of tuningConfig in hadoop-based batch part * fix docs error: logParseExceptions of tuningConfig in hadoop-based batch part * fix docs error: maxParseExceptions of tuningConfig in hadoop-based batch part

apache#10058) * minor refactor of topn engine algorithm selection for clarity * adjust * more javadoc

* change default number of segment loading threads * fix docs * missed file * min -> max for segment loading threads Co-authored-by: Dylan <dwylie@spotx.tv>

* retry 500 and 503 errors against kinesis * add test that exercises retry logic * more branch coverage * retry 500 and 503 on getRecords request when fetching sequence numberu Co-authored-by: Harshpreet Singh <hrshpr@twitch.tv>

* Druid user permissions apply in the console * Update index.md * noting user warning in console page; some minor shuffling * noting user warning in console page; some minor shuffling 1 * touchups * link checking fixes * Updated per suggestions

…o respect output type (apache#10063) * fix return type from HyperUniquesAggregator/HyperUniquesVectorAggregator * address comments * address comments

|

@exherb |

|

This pull request has been marked as stale due to 60 days of inactivity. It will be closed in 4 weeks if no further activity occurs. If you think that's incorrect or this pull request should instead be reviewed, please simply write any comment. Even if closed, you can still revive the PR at any time or discuss it on the dev@druid.apache.org list. Thank you for your contributions. |

|

This pull request/issue has been closed due to lack of activity. If you think that is incorrect, or the pull request requires review, you can revive the PR at any time. |

|

@jihoonson @Tiaaa closed again due to inactivity :( I have been using this extension in my clusters for the last 6 months without issue (Without a push-gateway, so not collecting indexing task metrics yet). I can set this up and start validating this collection path too if that's blocking getting this merged. @Tiaaa can you bring this PR up to date? @jihoonson What do you think about getting this merged after that? |

|

@michaelschiff re-opened it here #10412 cherry-picking all relevant commits. |

|

Do we have any Milestone/Timeline to get this emitter released ? |

|

@TheRum currently is in pending review state, hopefully get it in around end of October/mid-November. |

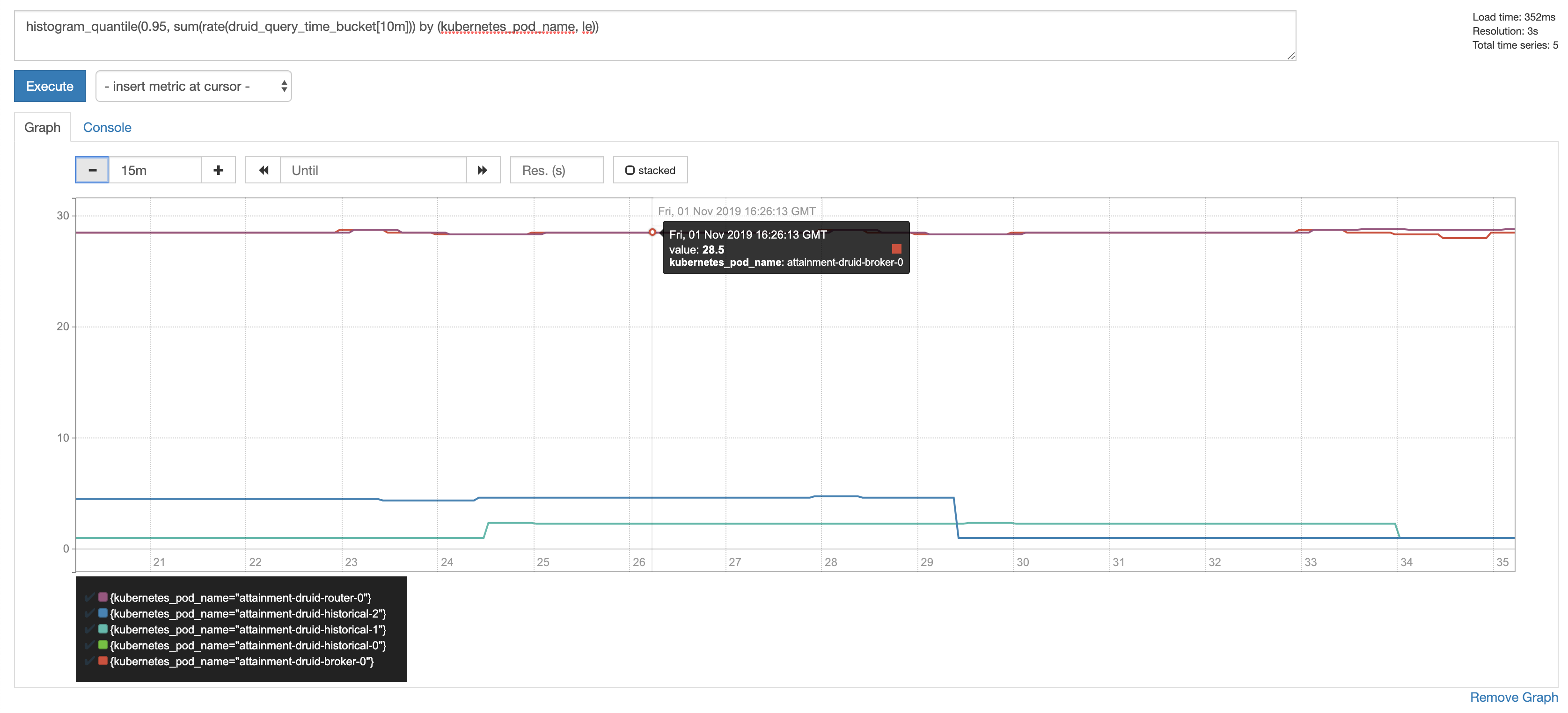

Adds a new extension

prometheus-emitterto expose Druid metrics for collection directly by a Prometheus server.This PR has:

Key changed/added classes in this PR

org.apache.druid.emitter.prometheus.*