Simulate multi-turn conversations with your AI agent. Find failures before production.

Documentation · Examples · Report a Bug

arksim_find_your_agent.s_errors.mp4

Agents fail in ways that only show up mid-conversation. They misinterpret intent three turns in, call the wrong tool, or hallucinate a policy that does not exist. Single-turn testing misses all of this.

ArkSim generates LLM-powered synthetic users that hold realistic multi-turn conversations with your agent. Each user has a distinct profile, goal, and knowledge level. They push back, ask follow-ups, and behave like real users would.

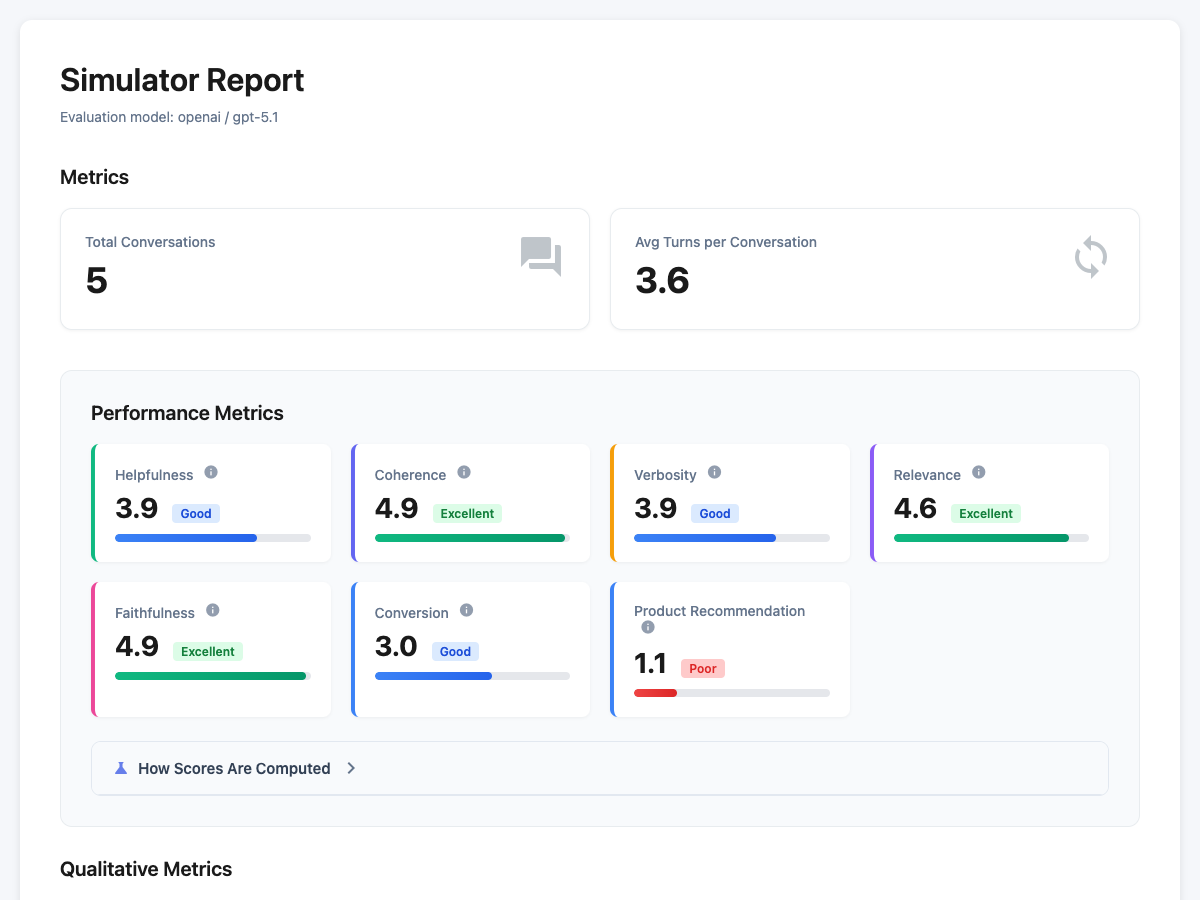

You define scenarios, ArkSim simulates conversations, then evaluates every turn across metrics like helpfulness, faithfulness, and goal completion. The output is an interactive report showing exactly where your agent broke and why.

pip install arksim

export OPENAI_API_KEY="your-key"

arksim init

# Edit my_agent.py with your agent logic, then run:

arksim simulate-evaluate config.yamlThis generates config.yaml, scenarios.json, and a starter my_agent.py.

For HTTP or A2A agents: arksim init --agent-type chat_completions or arksim init --agent-type a2a.

For Anthropic or Google as the evaluation LLM: pip install "arksim[anthropic]" or pip install "arksim[google]".

pip install arksim

export OPENAI_API_KEY="your-key"

arksim examples

cd examples/e-commerce

arksim simulate-evaluate config.yamlThe report tells you where your agent is strong and where it breaks. You get per-metric scores, categorized failures, and full conversation transcripts so you can read the exact turns where things went wrong.

arksim init generates a my_agent.py with a BaseAgent subclass. Replace the execute() body with your agent logic:

from arksim.simulation_engine.agent.base import BaseAgent

from arksim.simulation_engine.tool_types import AgentResponse

class MyAgent(BaseAgent):

async def get_chat_id(self) -> str:

return "unique-id"

async def execute(self, user_query: str, **kwargs: object) -> str | AgentResponse:

# Replace with your agent logic

return "agent response"agent_config:

agent_type: chat_completions

agent_name: my-agent

api_config:

endpoint: http://localhost:8000/v1/chat/completionsagent_config:

agent_type: a2a

agent_name: my-agent

api_config:

endpoint: http://localhost:9999/agentA2A agents can also surface tool calls for evaluation via the arksim tool call capture extension. See examples/customer-service/a2a_server/ for a runnable reference server.

Write scenarios that match your agent's domain. See the Scenarios documentation for how to define goals, user profiles, and knowledge.

- Simulation, not just evaluation. Most tools score conversations you already have. ArkSim generates them with synthetic users who push back, ask follow-ups, and behave unpredictably.

- Multi-turn by default. Every test is a full conversation, not a single prompt. Context loss, tool misuse, and contradictions only show up across turns.

- Any agent, any framework. Works with 14+ frameworks through Chat Completions, A2A, or direct Python import.

- Runs in CI. Add it as a quality gate on every PR. Exits non-zero when your agent drops below threshold.

- Fully open source. Runs on your infrastructure. Your data never leaves.

| Framework | Provider |

|---|---|

| Claude Agent SDK | Anthropic |

| OpenAI Agents SDK | OpenAI |

| Google ADK | |

| LangChain | LangChain |

| LangGraph | LangChain |

| CrewAI | CrewAI |

| Dify | Dify |

| AutoGen | Microsoft |

| LlamaIndex | LlamaIndex |

| Pydantic AI | Pydantic |

| Rasa | Rasa |

| Smolagents | Hugging Face |

| Mastra | TypeScript |

| Vercel AI SDK | TypeScript |

See examples for end-to-end projects with custom metrics and scenarios.

| Topic | |

|---|---|

| Evaluation metrics (built-in and custom) | Metrics guide |

| CI integration (pytest and GitHub Actions) | CI setup guide |

| Configuration reference (all YAML settings) | Schema reference |

| Simulation and CLI usage | Simulation guide |

| Web UI for browsing results | Overview |

git clone https://github.com/arklexai/arksim.git

cd arksim

pip install -e ".[dev]"

pytest tests/Linting and formatting:

ruff check .

ruff format .See CONTRIBUTING.md for guidelines.

Apache-2.0. See LICENSE.

@misc{shea2026sage,

title={SAGE: A Top-Down Bottom-Up Knowledge-Grounded User Simulator for Multi-turn AGent Evaluation},

author={Ryan Shea and Yunan Lu and Liang Qiu and Zhou Yu},

year={2026},

eprint={2510.11997},

archivePrefix={arXiv},

primaryClass={cs.CL},

url={https://arxiv.org/abs/2510.11997},

}