🌐 Website: clawfleet.io · 💬 Community: Discord · 📝 Blog: Dev.to

Run a fleet of AI agents on your laptop in 5 minutes — OpenClaw and Hermes in isolated containers, managed from one browser dashboard. Use your ChatGPT subscription, no cloud bills.

Imagine buying N dedicated Mac Minis, each running its own AI agent — some OpenClaw, some Hermes, all collaborating in Discord. Your own AI company — data stays on your hardware, no SaaS subscription.

ClawFleet makes that free. Each agent runs in its own Docker container, with an isolated filesystem and network, on your existing Mac or Linux box. ~500 MB RAM per OpenClaw instance, ~150 MB per Hermes instance.

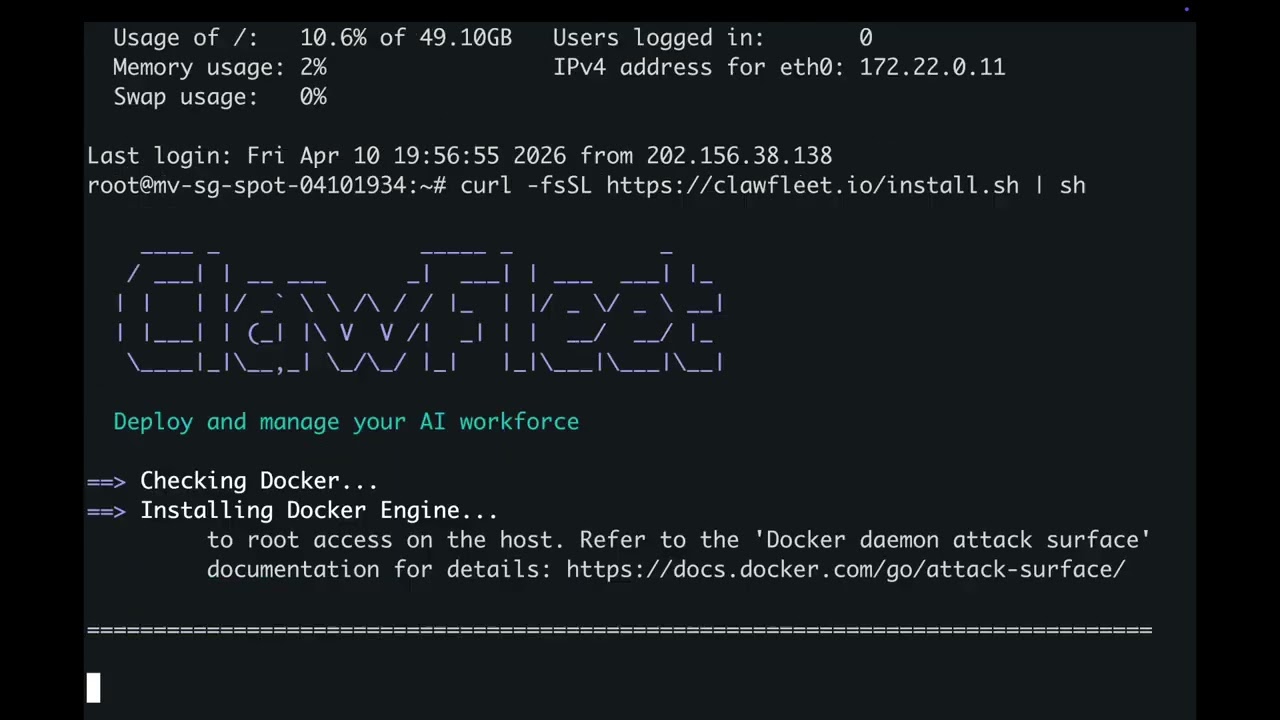

curl -fsSL https://clawfleet.io/install.sh | sh

5 minutes: Docker installed, image pulled, dashboard running at http://localhost:8080. Connect any model provider with a single API key — every instance, OpenClaw or Hermes, runs in its own Docker container with full isolation.

- Two runtimes, one Dashboard — OpenClaw and Hermes, both first-class (more ↓)

- Sandboxed instances — each agent in its own Docker container, isolated from host and peers

- Any LLM provider — OpenAI, Anthropic, Google, DeepSeek, or your ChatGPT subscription (OpenClaw)

- clawfleet shell — drop into any instance's terminal: Hermes TUI chat or OpenClaw bash shell

- Version pinning — lock tested runtime versions so upstream breaking changes don't touch you

- Character system — reusable personas (bio, backstory, style, traits) (OpenClaw)

- Skill management — 52 built-in + 13,000+ community skills via ClawHub (OpenClaw)

- Soul Archive — snapshot persona + memory + config, clone into new hires (OpenClaw)

- macOS or Linux

- Mac users: installing Docker Desktop first is strongly recommended for the best experience. Otherwise, ClawFleet automatically installs Colima as an alternative Docker runtime.

The install command above will:

- Install Docker if needed (Colima on macOS, Docker Engine on Linux)

- Download and install the clawfleet CLI

- Pull the pre-built sandbox image (~1.4 GB)

- Start the Dashboard as a background daemon

- Open http://localhost:8080 in your browser
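After the installer finishes, the steps above can be spot-checked from a terminal. The following is a minimal sketch, not part of ClawFleet: the function names `dashboard_up` and `check_install` are hypothetical, and it assumes the Dashboard answers a plain HTTP GET at its root URL with a success status.

```shell
# Return 0 if the Dashboard answers over HTTP, non-zero otherwise.
# Assumption: the root URL responds with a 2xx status once the daemon is up.
dashboard_up() {
  url=${1:-http://localhost:8080}
  curl -fsS --max-time 5 -o /dev/null "$url" 2>/dev/null
}

# Report which pieces of the install are visible from this shell.
check_install() {
  for tool in docker clawfleet; do
    if command -v "$tool" >/dev/null 2>&1; then
      echo "$tool: found"
    else
      echo "$tool: missing"
    fi
  done
  if dashboard_up "$1"; then
    echo "dashboard: up"
  else
    echo "dashboard: not reachable"
  fi
}
```

Run `check_install` with no argument to probe http://localhost:8080, or pass another URL if the Dashboard is reached through a different port.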

Linux server deployment notes

The Dashboard listens on 0.0.0.0:8080 by default on Linux. Restrict to localhost:

clawfleet dashboard stop

clawfleet dashboard start --host 127.0.0.1

SSH tunnel from your laptop:

ssh -fNL 8081:127.0.0.1:8080 user@your-server # then open http://localhost:8081

The Control Panel (OpenClaw's built-in web UI) requires a secure context — the SSH tunnel provides this. Hermes's native Dashboard does not require a secure context. All other Dashboard features work without a tunnel.
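If you manage several servers, the tunnel command can be generated rather than retyped. This is a small sketch with a hypothetical helper name, `tunnel_cmd`. It only prints the command, so you can review it before running it. The default ports mirror the example above (local 8081, remote 8080).

```shell
# Print (do not run) the ssh command that forwards a local port to the
# Dashboard bound to 127.0.0.1 on the server.
tunnel_cmd() {
  target=$1                # e.g. user@your-server
  local_port=${2:-8081}    # port to open on your laptop
  remote_port=${3:-8080}   # Dashboard port on the server
  printf 'ssh -fNL %s:127.0.0.1:%s %s\n' "$local_port" "$remote_port" "$target"
}
```

For example, `tunnel_cmd user@your-server` prints `ssh -fNL 8081:127.0.0.1:8080 user@your-server`. In `ssh`, `-L` sets up the local forward, `-N` skips running a remote command, and `-f` sends the process to the background.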

Manual install? See the Getting Started wiki page.

Think of ClawFleet as your AI company. Assets are the tools your company owns; Fleet is your team of AI employees. You assign tools to employees, then put your AI workforce into production.

Assets → Models — register LLM API keys. The "brains" your employees think with. Each model is validated before saving. Models are shared across all runtimes.

Assets → Characters — reusable personas. Think of them as "job descriptions" — a CTO, a CPO, a CMO. Bio, backstory, communication style, personality traits. Characters today are surfaced for OpenClaw instances; Hermes uses its own personality system in its native Dashboard.

Assets → Channels — connect messaging platforms. The "workstations" where your employees serve customers. Validated before saving. OpenClaw supports 24+ channels; Hermes today supports Discord / Telegram / Slack.

Fleet → Create — spin up an instance, OpenClaw or Hermes. Each one is a new employee joining your company.

Fleet → Configure — assign a model, character, and channel. Give your CTO a Claude brain and a Discord workstation. Give your CMO a GPT brain and a Slack feed. Different employees, different tools, different personalities.

Fleet → Skills — each instance has access to 52 built-in skills (weather, GitHub, coding, and more). Want more? Search 13,000+ community skills on ClawHub and install them with one click. Different employees can learn different skills. Skill Manager today is OpenClaw-specific — Hermes manages skills via its own Dashboard.
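The Create step also has a CLI form in the quick reference further down. The sketch below wraps it in a hypothetical helper, `hire`, which uses only `clawfleet create` and `clawfleet list` as they appear in that reference. The names ClawFleet assigns to new instances are not assumed here, so the helper lists the fleet afterwards for you to read them off.

```shell
# Create N instances on the chosen runtime, then list the fleet so the
# new instance names can be read off before configuring them.
hire() {
  count=${1:-1}
  runtime=${2:-openclaw}   # openclaw or hermes
  clawfleet create "$count" --runtime "$runtime" && clawfleet list
}
```

After `hire 1 openclaw`, pass the listed name to `clawfleet configure <name>` to assign a model and channel. Per the quick reference, Hermes instances are configured from the Dashboard rather than the CLI.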

Once an employee is trained and performing well, save their soul — personality, memory, model config, and conversation history — so you can clone them instantly. Soul Archive today is OpenClaw-specific.

Fleet → Save Soul — click on any configured instance to save its soul.

Fleet → Soul Archive — browse all saved souls, ready to be loaded into new hires.

Fleet → Create → Load Soul — when creating new instances, pick a soul. The new employee starts with all the knowledge and personality of the original.
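The same save-and-clone flow can be scripted with the `snapshot` and `create --from-snapshot` commands from the quick reference. This is a sketch under assumptions: the helper names are hypothetical, `snapshot list` is assumed to take no argument, and how a saved soul is named relative to its source instance is not specified here, so check the list output before cloning.

```shell
# Save the soul of a configured OpenClaw instance, then show the archive
# so the saved soul's name can be confirmed.
save_soul() {
  instance=$1
  clawfleet snapshot save "$instance" && clawfleet snapshot list
}

# Create one new OpenClaw instance preloaded with a saved soul.
hire_from_soul() {
  soul=$1
  clawfleet create 1 --from-snapshot "$soul"
}
```

A typical sequence is `save_soul <instance>`, read the soul name from the list, then `hire_from_soul <soul>`.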

Connect your fleet to messaging platforms and watch your AI employees work together. Here, an engineer, product manager, and marketer welcome a new teammate — all running autonomously in a Discord group chat.

ClawFleet manages all agent runtimes as first-class citizens in one unified Dashboard — with a shared asset pool (Models, Channels), per-instance container isolation, live stats, logs, event streams, and clawfleet shell access.

Today ClawFleet ships with two runtimes: OpenClaw and Hermes. OpenClaw has been supported longer, so more of its features are currently surfaced in the Dashboard UI. Hermes support is newer; its current Dashboard scope is container lifecycle and Configure (model + channel), with deeper features accessible via Hermes's own native Dashboard. Bringing both runtimes to full Dashboard parity is an active direction — and it's how future runtimes will be added too.

Upstream: github.com/openclaw/openclaw

OpenClaw is a local-first assistant gateway that makes a single agent addressable from 24+ messaging platforms — Telegram, Discord, Slack, Lark, WhatsApp, Signal, Matrix, and more. The bot lives inside the conversation as a participant, with pairing codes, @-mentions, and roster-aware coordination with other bots.

Pick OpenClaw when you want:

- A bot that lives as a participant inside a group chat (not a DM assistant you have to call)

- A fleet of distinct personas that collaborate (CEO + CTO + PM in one Discord) via Roster-aware @mentions

- Installable skills from a curated community catalog (ClawHub, 13,000+)

In ClawFleet today:

- Configure — assign Model + Character + Channel from the Dashboard

- Characters as reusable personas, hot-reloaded via SOUL.md

- Skills — 52 bundled + ClawHub install from the Skill Manager

- Save Soul — snapshot persona + config into the archive, clone into new hires

Upstream: github.com/NousResearch/hermes-agent

Hermes is built around a learning loop: the agent writes new skills from experience, improves them while using them, and maintains memory that compounds across sessions (FTS5 cross-session search + Honcho user modeling). The primary interface is a TUI; messaging platforms are secondary remote controls.

Pick Hermes when you want:

- One personal agent that learns you over time (not a group-chat participant, but a "my assistant")

- First-class cron / scheduled automations delivered to messaging

- Remote execution on SSH / Daytona / Modal (serverless) backends

- Long-tail LLM providers (Nous Portal, GLM, Kimi, MiMo, MiniMax, OpenRouter's 200+)

- A TUI-first workflow

In ClawFleet today:

- Configure — assign Model + Channel (Discord / Telegram / Slack) from the Dashboard, using the same Asset pool OpenClaw uses

- Hermes Dashboard — one-click link to Hermes's native Dashboard for credential pool, cron, personality, terminal backends

- clawfleet shell hermes-1 — drop into Hermes's interactive TUI

On top of either runtime, ClawFleet also exposes the instance's graphical desktop in the browser via noVNC — useful for watching what the agent does, manually steering a workflow, or demoing. Available today on OpenClaw images (which ship an XFCE desktop); bringing equivalent visibility to Hermes is on the roadmap.

See the Wiki for full documentation:

- Getting Started — prerequisites, install, first instance

- Dashboard Guide — sidebar, asset management, fleet management

- LLM Provider guides — Anthropic | OpenAI | Google | DeepSeek

- Channel guides — Telegram | Discord | Slack | Lark

- CLI Reference | FAQ

Every command supports --help:

clawfleet --help # All commands

clawfleet dashboard --help # Dashboard subcommands

Quick reference:

clawfleet create <N> [--runtime openclaw|hermes] # Create N instances (default: openclaw)

clawfleet create <N> --pull # Force re-pull image from registry

clawfleet create 1 --from-snapshot <soul> # Clone from a saved soul (OpenClaw)

clawfleet configure <name> # Configure model + channel (OpenClaw via CLI; Hermes via Dashboard)

clawfleet list # List instances and status

clawfleet shell <name> # Drop into terminal: Hermes TUI / OpenClaw bash

clawfleet desktop <name> # Open noVNC desktop (OpenClaw)

clawfleet start|stop|restart <name|all> # Lifecycle

clawfleet logs <name> [-f] # View logs

clawfleet destroy <name|all> [--purge] # Destroy (--purge also deletes data)

clawfleet snapshot save|list|delete <name> # Soul archive (OpenClaw)

clawfleet dashboard serve|stop|restart|open # Dashboard daemon

clawfleet build # Build image locally

clawfleet config | version # Config / version info

Destroy all instances (including data), stop the Dashboard, remove all build artifacts:

make reset

Idle memory, measured on M4 MacBook Air (16 GB RAM):

| Instances | OpenClaw RAM | Hermes RAM |
|---|---|---|
| 1 | ~700 MB | ~140 MB |
| 3 | ~2.1 GB | ~400 MB |
| 5 | ~3.5 GB | ~700 MB |

OpenClaw memory rises ~3× when the agent is actively browsing (Chromium loaded). Hermes stays roughly flat.
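To size a mixed fleet before creating it, the single-instance figures from the table can be scaled linearly. This is a rough sketch with hypothetical function names. It assumes memory grows linearly with instance count, which the table supports only approximately (three Hermes instances measure ~400 MB, not 420 MB), and it applies the ~3× browsing factor to every OpenClaw instance at once as a worst case.

```shell
# Rough idle-RAM estimate in MB from the single-instance figures above:
# ~700 MB per OpenClaw instance, ~140 MB per Hermes instance.
estimate_idle_mb() {
  openclaw=${1:-0}
  hermes=${2:-0}
  echo $(( openclaw * 700 + hermes * 140 ))
}

# Worst case: every OpenClaw instance is actively browsing (~3x),
# while Hermes stays at its idle figure.
estimate_browsing_mb() {
  openclaw=${1:-0}
  hermes=${2:-0}
  echo $(( openclaw * 700 * 3 + hermes * 140 ))
}
```

For example, `estimate_idle_mb 3 2` prints 2380 and `estimate_browsing_mb 3 2` prints 6580, so three OpenClaw and two Hermes instances fit within 16 GB even when all three OpenClaw agents are browsing.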

MIT · Contributions welcome — open an issue or PR. Reach out: weiyong1024@gmail.com