Description

Hello,

When I use dogstatsd to submit counts or histograms with a sample rate smaller than 1.0, the metrics end up inflated in Datadog by a factor equal to the inverse of the sample rate. For example, if I set a sample rate of 0.5, the metrics in Datadog are multiplied by 2.0.

I believe the issue is caused by the go-kit metrics code passing the sample rate to dogstatsd without actually downsampling the submitted metrics. On the other end, Datadog multiplies the metric values by the inverse of the sample rate to compensate (see Datadog docs), but since the submitted values were not downsampled by go-kit, the "compensation" ends up inflating the values on Datadog's side.
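To make the arithmetic concrete, here is a minimal sketch of the suspected mechanism. The helper names `formatHistogram` and `serverCount` are hypothetical and are not part of go-kit or Datadog; `serverCount` is only a simplified model of the compensation described above, assuming each received sample is counted as 1/rate observations.

```go
package main

import "fmt"

// formatHistogram (hypothetical helper) renders one observation in the
// DogStatsD datagram format, adding the "@rate" suffix only when rate < 1.
func formatHistogram(name string, value, rate float64) string {
	if rate < 1 {
		return fmt.Sprintf("%s:%g|h|@%g", name, value, rate)
	}
	return fmt.Sprintf("%s:%g|h", name, value)
}

// serverCount (hypothetical helper) models the server-side compensation:
// every received sample is counted as 1/rate observations.
func serverCount(received int, rate float64) float64 {
	return float64(received) / rate
}

func main() {
	// Every observation is sent, yet each one is tagged with the sample rate.
	fmt.Println(formatHistogram("throughput.sampled", 20, 0.2))

	// 10 datagrams per second arrive, and the server scales them by 1/0.2.
	fmt.Println(serverCount(10, 0.2))
}
```

With 10 observations per second all reaching the server tagged `@0.2`, this model yields roughly 50/s, which would match the inflation I am seeing.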

I used this test program:

package main

import (

"fmt"

"net"

"os"

"time"

"github.com/go-kit/kit/log"

"github.com/go-kit/kit/metrics/dogstatsd"

)

func main() {

logger := log.NewLogfmtLogger(log.NewSyncWriter(os.Stdout))

logger = log.NewContext(logger).With("timestamp", log.DefaultTimestampUTC)

addr := net.JoinHostPort(os.Getenv("DOGSTATSD_HOST"), os.Getenv("DOGSTATSD_PORT"))

logger.Log("msg", "Sending metrics to dogstatsd...", "addr", addr)

dd := dogstatsd.New("paulo.test.", log.NewNopLogger())

go dd.SendLoop(time.Tick(time.Second), "udp", addr)

fmt.Println("running...")

notSampled := dd.NewHistogram("throughput.not_sampled", 1.0)

sampled := dd.NewHistogram("throughput.sampled", 0.2)

count := 0

for {

sampled.Observe(20)

notSampled.Observe(20)

time.Sleep(100 * time.Millisecond)

count++

if count%100 == 0 {

logger.Log("count", count)

}

}

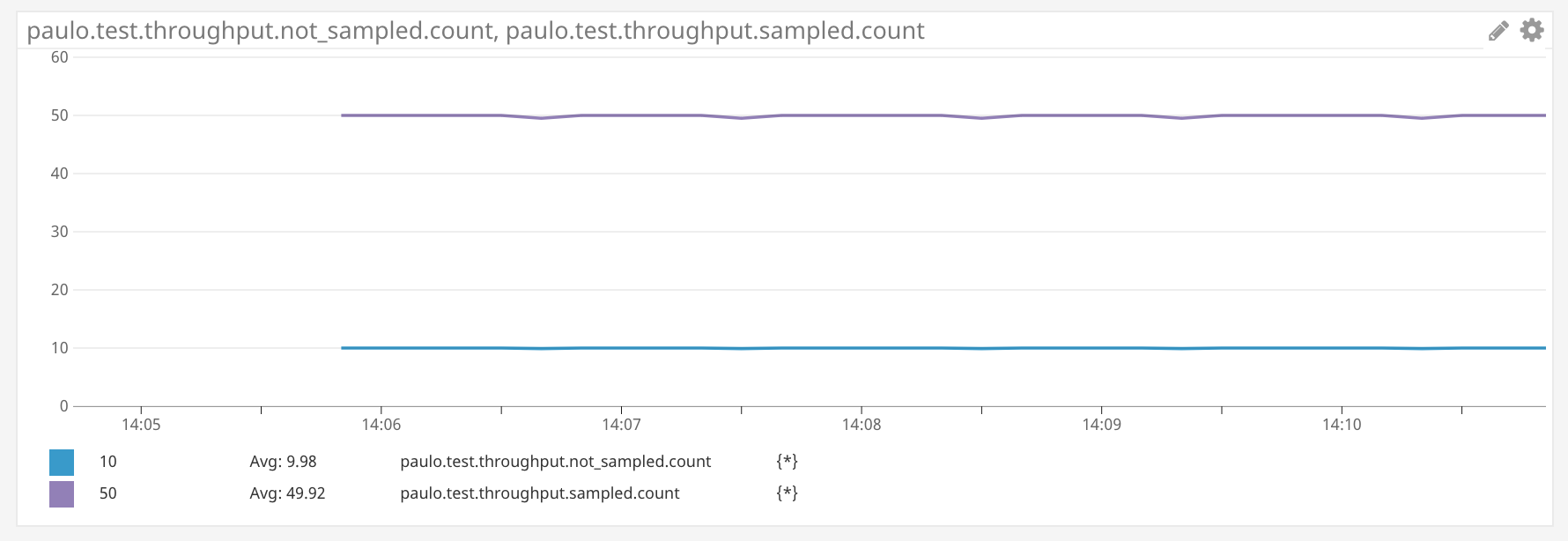

}This submits what Datadog calls a "histogram" (though not a true histogram), which contains a .count metric. In the graph below we see the rate of the counters, which are incrementing at approx. 10/sec, based on the 100ms sleep.

The expected rate of the both counters should be ~10/s, but the "sampled" counter reports ~50/s:

I am happy to submit a PR with a proposed fix. I think the idea would be to drop a random percentage of "observations", corresponding to the sample rate. This is what Datadog's statsd client does.
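As a rough illustration of that idea, here is a minimal sketch of client-side sampling. The `sampledObserver` type and the `simulate` function are hypothetical names used only for this example; they are not go-kit or Datadog APIs, and an actual fix would have to live inside the dogstatsd package itself.

```go
package main

import (
	"fmt"
	"math/rand"
)

// sampledObserver is a hypothetical wrapper (not part of go-kit) that
// forwards each observation with probability equal to rate.
type sampledObserver struct {
	rate    float64
	rnd     func() float64      // returns a value in [0, 1)
	forward func(value float64) // receives the observations that are kept
}

// Observe drops the observation unless a random draw falls below the rate.
func (s *sampledObserver) Observe(value float64) {
	if s.rate < 1 && s.rnd() >= s.rate {
		return // dropped on the client, so the server-side scaling stays valid
	}
	s.forward(value)
}

// simulate feeds n observations through the wrapper and returns how many
// were forwarded, plus the count a server would estimate as sent/rate.
func simulate(n int, rate float64, seed int64) (sent int, estimated float64) {
	r := rand.New(rand.NewSource(seed))
	o := &sampledObserver{rate: rate, rnd: r.Float64, forward: func(float64) { sent++ }}
	for i := 0; i < n; i++ {
		o.Observe(20)
	}
	return sent, float64(sent) / rate
}

func main() {
	sent, estimated := simulate(100000, 0.2, 1)
	fmt.Printf("sent=%d estimated=%.0f\n", sent, estimated)
}
```

With a rate of 0.2, only about one in five observations is forwarded, so multiplying by the inverse of the sample rate on Datadog's side should bring the estimate back to approximately the true count.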

Thanks,

Paulo