[autoparallel] Patch meta information of torch.nn.Embedding #2760

Merged

YuliangLiu0306 merged 60 commits into hpcaitech:main on Feb 17, 2023


Merge ColossalAI

Daily merge

The code coverage for the changed files is 39%.


The code coverage for the changed files is 47%.

YuliangLiu0306 approved these changes on Feb 17, 2023


📌 Checklist before creating the PR

[doc/gemini/tensor/...]: A concise description

🚨 Issue number

📝 What does this PR do?

In this PR, I patch meta information of

torch.nn.Embedding, and fix some small errors caused by several former PRs related to meta information patch.NOTE: the temp memory during the backward phase of

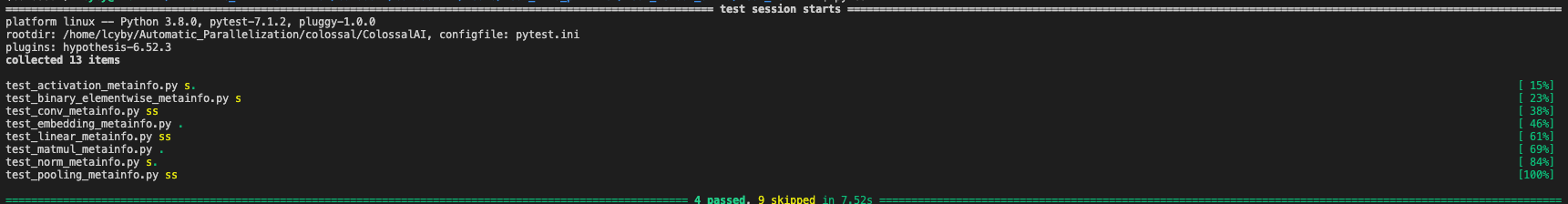

torch.nn.Embeddingis quite weird (sometimes it doesn't have temp memory) and currently I just simply set it to zero, as in NLP tasks the temp memory cost is significantly smaller than gradient memory cost.The tests will be skipped when running on torch 1.11.0, so I attach the test results here
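To illustrate why setting the backward temp memory to zero is a tolerable approximation, here is a minimal sketch of the memory accounting for an embedding layer. It is not the code in this PR and not Colossal-AI's API: `EmbeddingMemoryCost`, `embedding_memory_cost`, the field names, and the 4-byte element size are all assumptions made for illustration.

```python
from dataclasses import dataclass
from math import prod


@dataclass
class EmbeddingMemoryCost:
    """Hypothetical container for per-phase memory costs, in bytes."""
    activation: int = 0  # tensors produced and kept by the phase
    gradient: int = 0    # gradient tensors produced by the phase
    temp: int = 0        # scratch memory freed when the phase ends


def embedding_memory_cost(input_shape, num_embeddings, embedding_dim, element_size=4):
    """Estimate forward/backward memory for an embedding lookup.

    input_shape:    shape of the integer index tensor, e.g. (batch, seq_len)
    num_embeddings: number of rows in the embedding table
    embedding_dim:  width of each embedding vector
    element_size:   bytes per float element (assumed 4, i.e. float32)
    """
    num_indices = prod(input_shape)
    output_bytes = num_indices * embedding_dim * element_size
    weight_bytes = num_embeddings * embedding_dim * element_size

    # Forward: the looked-up vectors are the only new activation.
    fwd = EmbeddingMemoryCost(activation=output_bytes)

    # Backward: integer indices receive no gradient, so the only gradient
    # is for the weight table. Temp memory is set to zero, following the
    # simplification described in the note above.
    bwd = EmbeddingMemoryCost(gradient=weight_bytes, temp=0)
    return fwd, bwd


if __name__ == "__main__":
    fwd, bwd = embedding_memory_cost((4, 128), num_embeddings=30000, embedding_dim=768)
    print("forward activation bytes:", fwd.activation)
    print("backward gradient bytes: ", bwd.gradient)
    print("backward temp bytes:     ", bwd.temp)
```

With a vocabulary-sized table, the weight gradient scales with `num_embeddings * embedding_dim`, which is the gradient cost the note compares the temp memory against.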

💥 Checklist before requesting a review

⭐️ Do you enjoy contributing to Colossal-AI?

Tell us more if you don't enjoy contributing to Colossal-AI.