Getting Hosted inference API working? #8030

Closed

longenbach wants to merge 1 commit into huggingface:master


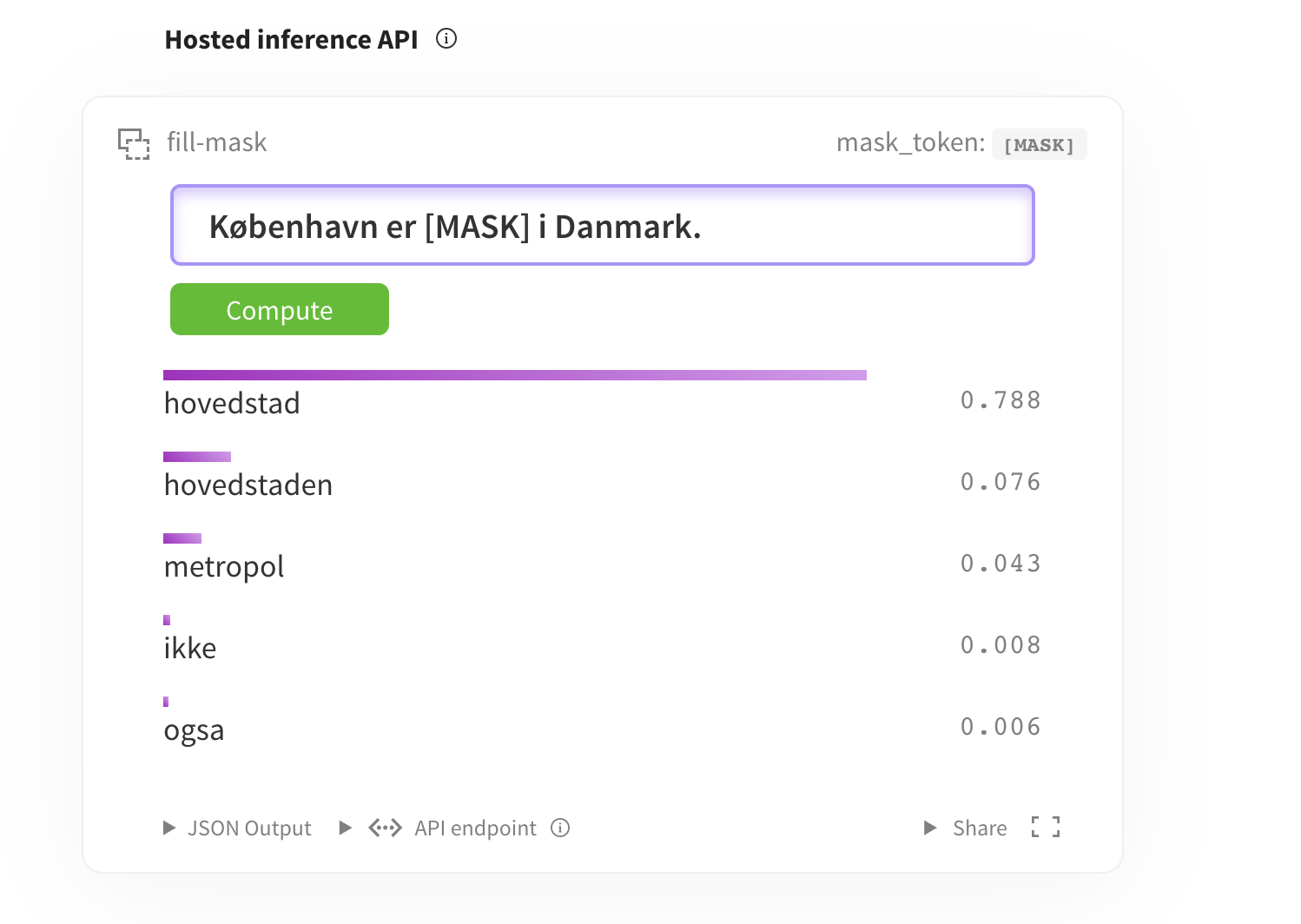

Trying to get Hosted inference API to work. Was following https://gist.github.com/julien-c/857ba86a6c6a895ecd90e7f7cab48046 ... is below the correct YAML syntax?

pipeline:
- fill-mask
widget:
- text: "København er <mask> i Danmark."

Member

@longenbach Note that you wouldn't need any of the tags or pipeline_tag (they would be detected automatically) if your config.json contained:

{
  ...
  "architectures": [
    "BertForMaskedLM"
  ],
  "model_type": "bert"
}

We'll try to make that clearer in a next iteration.
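A minimal sketch of checking this locally, assuming you have the text of the config.json you plan to upload. The helper name `has_autodetect_fields` is hypothetical, and the Hub's actual detection logic is not shown in this thread; the code only checks that the two keys quoted in the comment above are present.

```python
import json


def has_autodetect_fields(config_text: str) -> bool:
    """Return True if the config declares both keys quoted in the comment above.

    Hypothetical helper: it only checks for key presence and does not
    reproduce whatever logic the Hub uses to infer the pipeline.
    """
    config = json.loads(config_text)
    architectures = config.get("architectures")
    return (
        isinstance(architectures, list)
        and len(architectures) > 0
        and isinstance(config.get("model_type"), str)
    )


example = '{"architectures": ["BertForMaskedLM"], "model_type": "bert"}'
print(has_autodetect_fields(example))                   # True
print(has_autodetect_fields('{"model_type": "bert"}'))  # False
```

If the check fails, the explicit `pipeline_tag` in the model card remains the fallback described in this thread.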

Contributor

Author

@julien-c it works 🤗 Thanks for the insight on the documentation. So you are saying we can avoid making a model card if we include that JSON chunk in our uploaded config.json file?

In case others run into confusion with the Hosted inference API, below is the YAML section of my model card that works:

---
language: da
tags:
- bert
- masked-lm
- lm-head
license: cc-by-4.0
datasets:
- common_crawl
- wikipedia
pipeline_tag: fill-mask
widget:
- text: "København er [MASK] i Danmark."
---
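To compare the first attempt with the card that works, here is a rough sketch that lists the top-level keys of a model card's YAML front matter. It is a naive line scan, not a real YAML parser, and `top_level_keys` is a hypothetical helper. The first attempt is wrapped in `---` delimiters here as an assumption, since the original question did not show them.

```python
from typing import List


def top_level_keys(card_text: str) -> List[str]:
    """Naively collect top-level keys from the block between the first two '---' lines."""
    lines = card_text.splitlines()
    # The front matter must open on the first line of the card.
    if not lines or lines[0].strip() != "---":
        return []
    keys = []
    for line in lines[1:]:
        if line.strip() == "---":
            break
        # Top-level keys are unindented, are not list items, and contain a colon.
        if line and not line[0].isspace() and not line.startswith("-") and ":" in line:
            keys.append(line.split(":", 1)[0])
    return keys


# Assumption: delimiters added around the first attempt for illustration.
first_attempt = '---\npipeline:\n- fill-mask\nwidget:\n- text: "København er <mask> i Danmark."\n---\n'
working_card = '---\nlanguage: da\npipeline_tag: fill-mask\nwidget:\n- text: "København er [MASK] i Danmark."\n---\n'

print(top_level_keys(first_attempt))  # ['pipeline', 'widget']
print(top_level_keys(working_card))   # ['language', 'pipeline_tag', 'widget']
```

The scan makes the key difference visible: the first attempt declares `pipeline`, while the working card declares `pipeline_tag`. The working card also switches the mask token from `<mask>` to `[MASK]`; which token a model expects depends on its tokenizer.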


