tables: retry repo_uuid lookup #5593

This adds on to 1264642 (gh-5481) by adding a wait not just to the .omero/repository lookup but also to the .omero/repository/*/repo_uuid lookup, so that the 20 REPEATS don't progress within 2-3 seconds but instead take long enough for the integration server to have time to configure itself.
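The retry behaviour described above can be sketched roughly as follows. This is only an illustration under stated assumptions: the helper name `wait_for_repo_uuid`, its parameters, its defaults, and its return value are hypothetical and are not taken from the code changed in this PR. The point it shows is that both the directory lookup and the repo_uuid lookup sit behind the same wait, so a run of 20 repeats cannot finish in a couple of seconds.

```python
import glob
import os
import time


def wait_for_repo_uuid(repo_dir, repeats=20, wait=5.0, sleep=time.sleep):
    """Poll for <repo_dir>/.omero/repository/*/repo_uuid.

    Hypothetical helper: the name, defaults, and return value are
    assumptions for illustration, not the code changed by this PR.
    Returns the first matching path, or None if every attempt fails.
    """
    repo_cfg = os.path.join(repo_dir, ".omero", "repository")
    pattern = os.path.join(repo_cfg, "*", "repo_uuid")
    for attempt in range(repeats):
        # Both lookups share one loop, so a missing directory and a
        # missing repo_uuid file each cost a full wait before retrying.
        if os.path.isdir(repo_cfg):
            matches = sorted(glob.glob(pattern))
            if matches:
                return matches[0]
        # No need to wait after the final attempt.
        if attempt < repeats - 1:
            sleep(wait)
    return None
```

With these assumed defaults, a server that never creates the file would be given up on after roughly 95 seconds (19 waits of 5 seconds) rather than after 2-3 seconds.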

ef2906d to 91c94e5

I wonder why

Would certainly be worth adding some debugging to figure it out. E.g. a "starting hibernate initialize" and "finishing hibernate initialization" in the log so that we know how long. (There are certainly

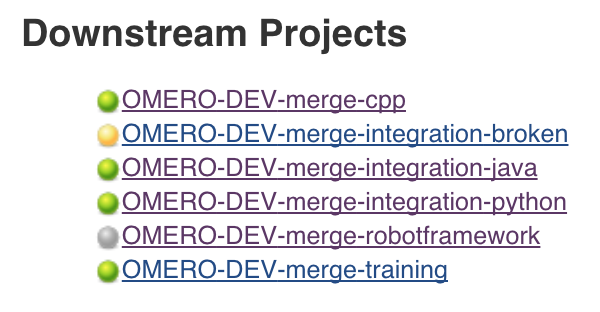

The downstream jobs from https://ci.openmicroscopy.org/job/OMERO-DEV-merge-integration/863/ were nicely green:

Looks good. And I've not seen the typical

Re. #5593 (comment) I'm happy to open a #5586-like PR if that could help, not sure which package/class(es) are best targeted.

@mtbc: likely we should take a step back and see if we can't re-architect how the repositories are acquiring their UUIDs in the first place. This strategy has proven generally brittle and prone to race conditions.

Subjobs of https://ci.openmicroscopy.org/view/Failing/job/OMERO-DEV-merge-integration/894/ still failed since the tables server didn't start:

Note: this fix wasn't sufficient. See gh-5615

This adds on to 1264642 (gh-5481) by adding a wait not just to the .omero/repository lookup but also to the .omero/repository/*/repo_uuid lookup, so that the 20 REPEATS don't progress within 2-3 seconds but instead take long enough for the integration server to have time to configure itself.

Cf. https://ci.openmicroscopy.org/view/Failing/job/OMERO-DEV-merge-training/811/

Testing this PR

Setting omero.repo.wait to a longer period (in MT) should help alleviate any issues.