Expose negative matching in silences #2471

|

Odd failure in API v1 acceptance tests, I can not reproduce even after |

|

I tried to run it locally as well and I see some timeouts when running it multiple times but I am not sure where to investigate: |

|

Great. I'll have a look ASAP (which might not be that soon in absolute terms, sorry… 😥 ). @asquare14 perhaps you might want to have a look here, too. @QuentinBisson those timeouts are usually not related to the code. We have seen the tests be sensitive to subtle things like the platform you are running on. Do you get the same problem when running against the code in the main branch? @vladimiroff the CI test failure might also just be flakiness, but I doubt it. Too many tests were failing. Do you have an idea what's going on? (I'll rerun the tests right now, and once I get to review this, I will hopefully be able to help in a more meaningful way.)

|

🎉 rerunning "fixed" the tests. So I guess we should really work on making the tests less flaky (also see recent #2461 ). |

|

I am running fedora so this might not be happening on darwin only. I tried it some more times and it is also happening on the master branch: |

|

@QuentinBisson OK, so that's a problem with the tests in your environment in general. Would be great if you could find out what's happening so that we can improve the tests. |

|

@roidelapluie raised a point, which I then explored: This PR allows the actual creation of silences with negative matching. So what happens if you update only one Alertmanager instance to a new version with this feature but leave the other cluster member on the old version? The answer: They would read the silences with negative matchers as regular (i.e. non-regexp) positive matchers. The reason is here: This code doesn't check if So what to do now? Ideas:

Of course, even with the 2nd approach, users that haven't upgraded to the bugfix release will still be in the 1st situation. Thoughts? |
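To make the mixed-cluster hazard concrete, here is a minimal sketch. It assumes the old reader only distinguishes "regexp" from "everything else"; the `MatcherType` enum, `WireMatcher`, `oldMatches`, and `newMatches` are hypothetical names for illustration, not the actual Alertmanager code.

```go
package main

import (
	"fmt"
	"regexp"
)

// MatcherType is a hypothetical wire-level enum (illustrative names only).
type MatcherType int

const (
	Equal MatcherType = iota
	Regexp
	NotEqual
	NotRegexp
)

// WireMatcher is a hypothetical stand-in for a matcher as gossiped between peers.
type WireMatcher struct {
	Type  MatcherType
	Name  string
	Value string
}

// fullMatch reports whether s matches pattern as a whole; invalid patterns never match.
func fullMatch(pattern, s string) bool {
	ok, err := regexp.MatchString("^(?:"+pattern+")$", s)
	return err == nil && ok
}

// oldMatches mimics a reader that predates negative matchers:
// anything that is not Regexp falls through to plain equality.
func oldMatches(m WireMatcher, labelValue string) bool {
	if m.Type == Regexp {
		return fullMatch(m.Value, labelValue)
	}
	return m.Value == labelValue
}

// newMatches handles all four kinds, including the negated ones.
func newMatches(m WireMatcher, labelValue string) bool {
	switch m.Type {
	case Equal:
		return m.Value == labelValue
	case NotEqual:
		return m.Value != labelValue
	case Regexp:
		return fullMatch(m.Value, labelValue)
	default: // NotRegexp
		return !fullMatch(m.Value, labelValue)
	}
}

func main() {
	m := WireMatcher{Type: NotEqual, Name: "env", Value: "prod"}
	// The same silence, evaluated by a new and an old cluster member.
	fmt.Println("new instance silences env=prod:", newMatches(m, "prod"))
	fmt.Println("old instance silences env=prod:", oldMatches(m, "prod"))
}
```

Under these assumptions the two instances disagree on the same silence: the old one silences exactly the alerts the user meant to exclude.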

|

Note that nothing bad will happen in mixed clusters as long as you don't create silences using negative matchers. In a way, we can hope that this new feature doesn't become popular too quickly to limit the damage. (o: |

f7a827f to 3c1444c

|

Latest commit actually exposes that isEqual field to the UI. The matcher format I will highly appreciate thorough review especially on that commit. While I feel fairly confident with the Go part, my experience with Elm boils down to trying to close that issue in a second PR, so far 😅 Also, think I just found another flaky acceptance test (cc: @QuentinBisson) |

|

Regarding test flakiness, I could reproduce it by running multiple instances in parallel, and the only issue I had was with tests using a port already in use on my machine. The issue could be due to the following code in the acceptance test, especially the "hope" part in the comment :D

    // freeAddress returns a new listen address not currently in use.
    func freeAddress() string {
        // Let the OS allocate a free address, close it and hope
        // it is still free when starting Alertmanager.
        l, err := net.Listen("tcp4", "localhost:0")
        if err != nil {
            panic(err)
        }
        defer func() {
            if err := l.Close(); err != nil {
                panic(err)
            }
        }()
        return l.Addr().String()
    }

@beorn7 Regarding your backporting question, I guess it depends on the alertmanager release process. Are there multiple releases maintained at the same time (i.e. if 2.22.0 is released, will there still be some work on the 2.21 branch)? If yes, I think we should backport. Otherwise, if there is an upgrade to 2.22.0 and one to 2.21.x, I think most people will upgrade to 2.22.0 and skip the 2.21.x release, so a warning is the best we could do in my opinion.
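Coming back to the `freeAddress` helper quoted above: the race exists because the port is released before the server binds it. One way to avoid that window, sketched here, is to keep the listener open and hand it to whatever serves on it. The names `reserveListener` and `addrInUse` are hypothetical. This only helps when the server runs in the same process; for a separately started binary that only takes an address flag, retrying on a bind failure would be the alternative.

```go
package main

import (
	"fmt"
	"net"
)

// reserveListener asks the OS for a free port and returns the listener
// while it is still open, so nobody else can grab the port in between.
func reserveListener() (net.Listener, error) {
	return net.Listen("tcp4", "localhost:0")
}

// addrInUse reports whether addr cannot currently be bound.
func addrInUse(addr string) bool {
	l, err := net.Listen("tcp4", addr)
	if err != nil {
		return true
	}
	l.Close()
	return false
}

func main() {
	l, err := reserveListener()
	if err != nil {
		panic(err)
	}
	defer l.Close()
	// While the listener is held, a second bind to the same address fails.
	fmt.Println("holding", l.Addr(), "in use:", addrInUse(l.Addr().String()))
}
```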

|

About the release strategy: We usually only do development on the last release branch for bugfixing. Once a new minor release has been cut, we rarely do bugfixes for the previous minor release. In this case, the fix seems to be so easy that I'm tempted to just propose it, cut 0.21.1, and then warn users if they want to upgrade a whole cluster without risk of inverting the meaning of their silences while the upgrade is happening, they should first upgrade to 0.21.1 before upgrading that one to 0.22.0. |

|

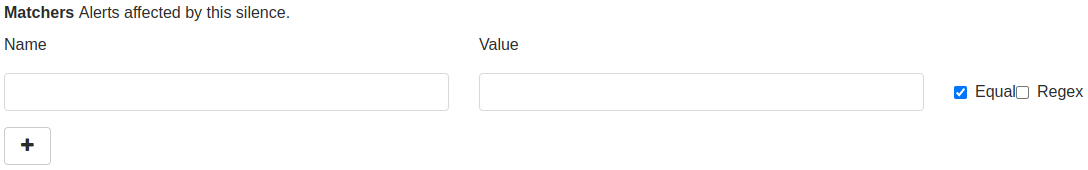

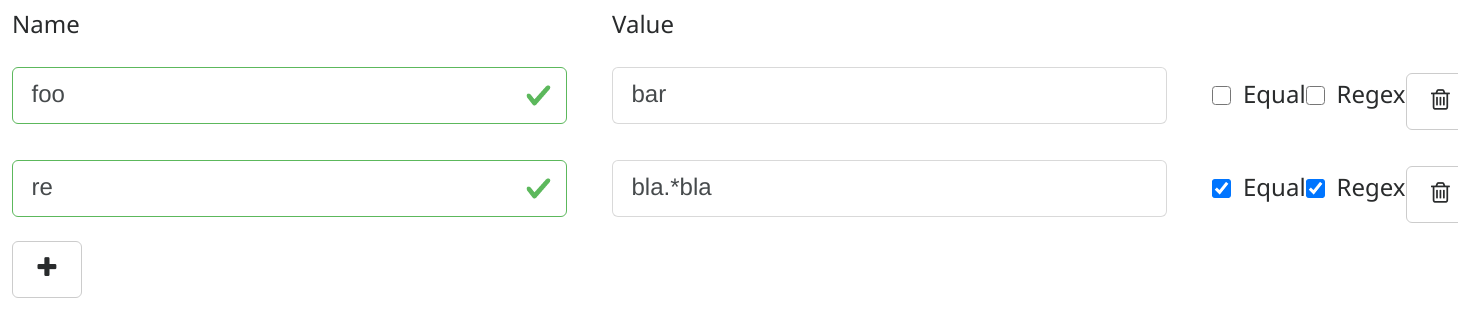

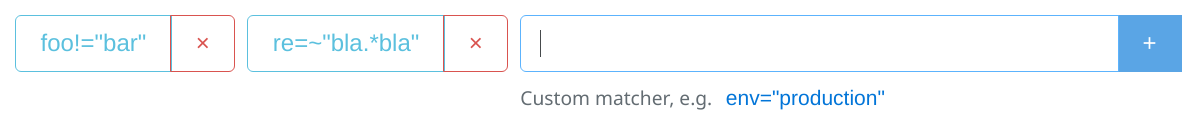

@vladimiroff Thanks for all the work. I still need to find time for a code level review, but here is a thought about the UI: My idea was to not add another checkbox to the silence form but to change it completely to the same kind of UI we have for alert filtering on the landing page. I.e. instead of this: it would look like With the basic idea that it should be easy to re-use the UI code we already have for the main alerts page. (I'm not a UI developer, either, but that's what I would naively assume. :-) I think this would provide consistency, ease of use, and be much more compact. |

|

For the 0.21 fix, I have now created #2479 |

beorn7

left a comment


I think this looks good from the general code perspective. I just had an idea how to do the formatting more consistently, see comments.

And I really think we should at least try to use the UI I have proposed. @vladimiroff do you think you have a chance implementing it? Otherwise, I can try to get support from someone fluent in Elm.

Signed-off-by: Kiril Vladimirov <kiril@vladimiroff.org>


23e3b88 to 5f0cbe2

Thanks for the review. Addressed comments in 5f0cbe2.

I would definitely prefer somebody else to properly tackle the UI part. If there's nobody available, I could have a go at it in a week or two. Does it make sense to merge this, being functionally complete, and have a separate issue for the UI before releasing v0.22?

beorn7

left a comment


One more comment to finish up the matchers formatting.

cli/format/format_extended.go

Outdated

    output := []string{}
    for _, matcher := range matchers {
        output = append(output, extendedFormatMatcher(*matcher))
        output = append(output, simpleFormatMatcher(*matcher))
    }
    return strings.Join(output, " ")


I think we should also utilize how labels.Matchers.String formats multiple matchers, i.e. {foo="bar", dings="bums"} rather than foo="bar" dings="bums" as it is done now. And I think now that you have implemented labelsMatcher, it's very easy:

    lms := labels.Matchers{}
    for _, matcher := range matchers {
        lms = append(lms, labelsMatcher(*matcher))
    }
    return lms.String()
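For readers without the `pkg/labels` source at hand, here is a self-contained sketch of the formatting this suggestion is after. `MatchType`, `Matcher`, and `Matchers` below are simplified stand-ins written for this example, not the real `pkg/labels` types, and the `", "` separator simply follows the example in the comment above.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// MatchType is an illustrative stand-in for the four matcher kinds.
type MatchType int

const (
	MatchEqual MatchType = iota
	MatchNotEqual
	MatchRegexp
	MatchNotRegexp
)

// String returns the operator used when rendering a matcher.
func (t MatchType) String() string {
	return [...]string{"=", "!=", "=~", "!~"}[t]
}

// Matcher is a simplified stand-in for a single label matcher.
type Matcher struct {
	Type  MatchType
	Name  string
	Value string
}

// String renders name, operator, and the quoted (escaped) value.
func (m Matcher) String() string {
	return m.Name + m.Type.String() + strconv.Quote(m.Value)
}

// Matchers renders as one brace-wrapped list instead of space-joined items.
type Matchers []Matcher

func (ms Matchers) String() string {
	parts := make([]string, 0, len(ms))
	for _, m := range ms {
		parts = append(parts, m.String())
	}
	return "{" + strings.Join(parts, ", ") + "}"
}

func main() {
	ms := Matchers{
		{MatchEqual, "foo", "bar"},
		{MatchNotEqual, "dings", "bums"},
	}
	fmt.Println(ms)
}
```

Delegating to one `String` method keeps quoting and operator rendering in a single place, which is the consistency argument made in the review.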

About the UI issue: I feel a bit uncomfortable changing the UI twice, even if we only cut a release after the final change. Ideally, we could get to the final (or "final-like") UI in this PR. It's a long shot, but perhaps @w0rm or @stuartnelson3 or @mxinden might find a bit of time to help out @vladimiroff? I'm pretty sure it should be easy for someone fluent in Elm.

|

@vladimiroff thanks for working on supporting negative matching in silences and even giving the Elm part a try! I agree with @beorn7 that reusing the same UI would be ideal. We might be able to reuse a lot of existing code and simplify the UI while also providing the new feature. I am happy to implement the proposed UI (ETA next Monday). Would it be possible to merge only the changes to the Go codebase, and let me open a separate PR that updates the UI?

|

@w0rm thanks a million for your help! @vladimiroff how about removing the UI changes from this PR then? Once my last comment is addressed, we can merge this, and @w0rm can do his magic.

There is non-trivial value escaping going on inside pkg/labels. Signed-off-by: Kiril Vladimirov <kiril@vladimiroff.org>

Signed-off-by: Kiril Vladimirov <kiril@vladimiroff.org>

bc627f8 to 84dd6ab

Signed-off-by: Kiril Vladimirov <kiril@vladimiroff.org>

Sure, I think I've just addressed the comment in 84dd6ab. Removing all UI changes is as easy as a simple revert, but then the build fails. What I did instead was simply remove the changes in the silence form (i.e. that 'Regex' checkbox). Thus API compliance and proper rendering of non-equal matchers are still here.

|

OK, thanks. Let's go with this. @w0rm all is set for you to do your magic. It would be great if old deep-links to create silences continued to work. (People have a lot of those out there, e.g. to provide a silence link in a notification.) |

The changes introduced in prometheus#2471 (see prometheus@2b6315f#diff-4975284c2279cb0d4dbc76c701ea7c35725245a92caaf2e8be8e5b5e56cf1ab0) allow the generated `Matcher.IsEqual` to become nil (see https://github.com/prometheus/alertmanager/blob/2b6315f399b95c4e327dcd5f5eb10866ef844252/api/v2/models/matcher.go#L35), as its default value is considered true now. Checking this field for nil and defaulting it to `true` in the `labelsMatcher()` func fixes a nil pointer dereference. Signed-off-by: Yury Evtikhov <yury@cloudflare.com>
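The nil handling described in this commit message can be sketched as follows. `apiMatcher`, `isEqualOrDefault`, and `operator` are hypothetical names used for illustration; the real generated model and `labelsMatcher()` may differ in detail.

```go
package main

import "fmt"

// apiMatcher is a hypothetical stand-in for the generated API model,
// where an optional boolean is a pointer and may be nil.
type apiMatcher struct {
	Name    string
	Value   string
	IsRegex bool
	IsEqual *bool // nil when the client omitted the field
}

// isEqualOrDefault returns IsEqual, treating a missing value as true
// instead of dereferencing a nil pointer.
func isEqualOrDefault(m apiMatcher) bool {
	if m.IsEqual == nil {
		return true
	}
	return *m.IsEqual
}

// operator maps the two flags to the matcher operator.
func operator(m apiMatcher) string {
	eq := isEqualOrDefault(m)
	switch {
	case eq && !m.IsRegex:
		return "="
	case !eq && !m.IsRegex:
		return "!="
	case eq && m.IsRegex:
		return "=~"
	default:
		return "!~"
	}
}

func main() {
	no := false
	// Field omitted: falls back to a positive matcher.
	fmt.Println(operator(apiMatcher{Name: "env", Value: "prod"}))
	// Field explicitly false: negative matcher.
	fmt.Println(operator(apiMatcher{Name: "env", Value: "prod", IsEqual: &no}))
}
```

Defaulting to `true` keeps requests from older clients, which never send the field, behaving as positive matchers.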

Closes #1682