Written in December 2020 for the Octree/Async work in napari.

Webmon is three things:

- An experimental napari shared memory client.

- A proof of concept Flask-SocketIO webserver.

- An example web app that contains two pages, Tile Viewer and Loader Stats.

See the lengthy napari PR 1909 for more information.

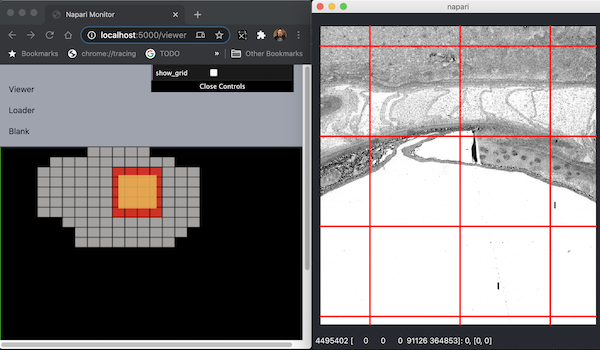

The web app has two pages: Tile Viewer and Loader Stats.

The Tile Viewer is shown on the left; napari is on the right. The Tile Viewer shows which Octree/Quadtree tiles napari is currently viewing. The yellow rectangle is the view frustum, and the red tiles are the ones napari is drawing.

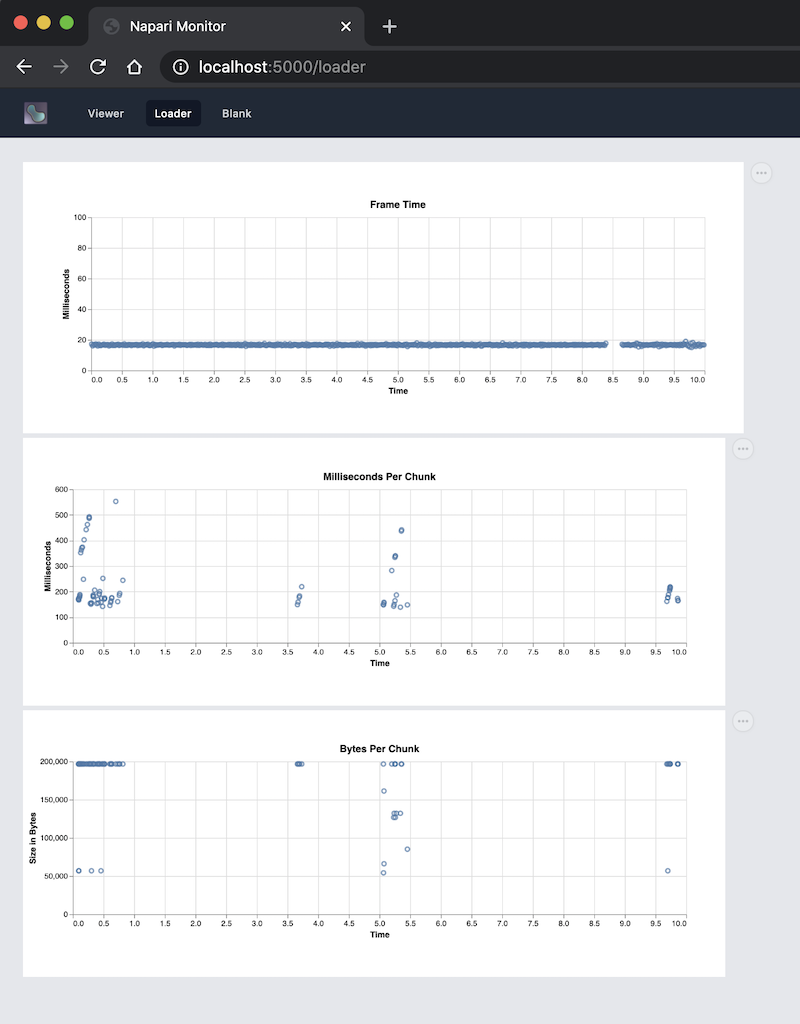

The Loader Stats page shows three 2D Vega-Lite graphs.

There is a shared memory connection between napari (Python) and the small webmon process (Python), and an always-on WebSocket connection between webmon (Python) and the web app (JavaScript). Messages can flow through both hops at 30-60Hz. There is presumably some message size beyond which things bog down; that limit is TBD.
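To make the first hop more concrete, here is a minimal sketch of passing one JSON message through a `multiprocessing.shared_memory` block. This is not webmon's actual protocol: the 4-byte length header, the helper names `write_message` and `read_message`, and the absence of any locking are assumptions made for illustration. Both ends run in one process here; in practice the reader would be a separate process attaching to the block by name.

```python
import json
import struct
from multiprocessing import shared_memory

# Hypothetical framing: a 4-byte little-endian length header, then UTF-8 JSON.
HEADER = struct.Struct("<I")


def write_message(shm: shared_memory.SharedMemory, message: dict) -> None:
    """Serialize message as JSON and copy it into the shared memory block.

    No locking: assumes a single writer and a reader that polls only after
    the write has finished.
    """
    payload = json.dumps(message).encode("utf-8")
    end = HEADER.size + len(payload)
    if end > shm.size:
        raise ValueError(f"{len(payload)}-byte message does not fit in {shm.size} bytes")
    shm.buf[HEADER.size:end] = payload
    HEADER.pack_into(shm.buf, 0, len(payload))


def read_message(shm: shared_memory.SharedMemory) -> dict:
    """Read back the message most recently stored by write_message."""
    (length,) = HEADER.unpack_from(shm.buf, 0)
    payload = bytes(shm.buf[HEADER.size:HEADER.size + length])  # copy out of shared memory
    return json.loads(payload.decode("utf-8"))


if __name__ == "__main__":
    # "napari side": create the block.
    writer = shared_memory.SharedMemory(create=True, size=4096)
    try:
        write_message(writer, {"type": "tiles", "visible": [[0, 0], [0, 1]]})
        # "webmon side": attach to the same block by name.
        reader = shared_memory.SharedMemory(name=writer.name)
        print(read_message(reader))
        reader.close()
    finally:
        writer.close()
        writer.unlink()
```

Because nothing synchronizes the two sides, a real implementation would need a lock or a sequence counter so the reader never sees a half-written message.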

- Python 3.9

  - The newest shared memory features were first added in Python 3.8.

  - However, 3.8 seemed to have bugs; 3.9 works.

  - In the webmon directory: `pip3 install -r requirements.txt`

- Install node/npm

  - Not sure of the minimum versions, but on macOS I've been using node `v14.3.0` (from `node -v`) and npm `6.14.4` (from `npm -v`).

  - In the webmon directory: `make build`

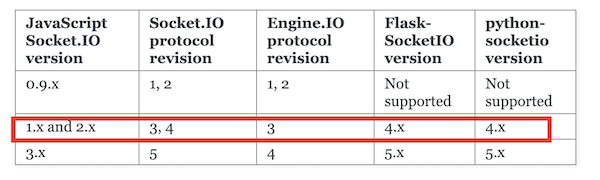

Something we are using is not compatible with the latest Socket.IO version, so we need to stay within the versions in the red box. Our npm configuration and the Python requirements.txt should set this up for you. However, if you get the error `The client is using an unsupported version of the Socket.IO or Engine.IO protocols`, the WebUI will not talk to webmon until you fix the dependencies.
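To see which Socket.IO-related Python packages are actually installed, a small check like the one below can help when comparing against the red box and requirements.txt. The package list is an assumption about what webmon depends on (check requirements.txt to be sure); `importlib.metadata.version` is the standard-library call, available since Python 3.8.

```python
from importlib import metadata
from typing import Dict, Iterable, Optional

# PyPI distribution names; assumed to be the ones relevant to webmon.
SOCKETIO_PACKAGES = ("Flask-SocketIO", "python-socketio", "python-engineio")


def installed_versions(packages: Iterable[str] = SOCKETIO_PACKAGES) -> Dict[str, Optional[str]]:
    """Return {package: version}, with None for packages that are not installed."""
    versions: Dict[str, Optional[str]] = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


if __name__ == "__main__":
    for name, version in installed_versions().items():
        print(f"{name}: {version or 'not installed'}")
```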

`webmon.py` and `napari_client.py` are pretty immature and need work.

In the long term it would be nice if the Python part were generic, so that adding a new WebUI only required modifying napari to share the data and modifying the WebUI to show the data. The Python parts would just pass data around without caring what the data is about. We are heading in that direction, but are not there yet.
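A minimal sketch of what a content-agnostic Python hop could look like, assuming each message is an envelope with a `topic` and an opaque `data` payload. The envelope format, `relay`, and the `emit` callback are hypothetical; with Flask-SocketIO, `emit` might wrap `socketio.emit`, but that wiring is not shown here.

```python
import json
from typing import Callable, Iterable, Mapping

# Hypothetical callback: emit(event_name, json_text).
Emit = Callable[[str, str], None]


def relay(messages: Iterable[Mapping], emit: Emit) -> int:
    """Forward each message without looking inside its payload.

    Each message is assumed to be {"topic": str, "data": <anything JSON-serializable>}.
    Only the topic is read; data is serialized and passed through untouched.
    Returns the number of messages forwarded.
    """
    forwarded = 0
    for message in messages:
        emit(message["topic"], json.dumps(message["data"]))
        forwarded += 1
    return forwarded


if __name__ == "__main__":
    sent = []
    relay([{"topic": "loader", "data": {"load_ms": 12.5}}], lambda t, d: sent.append((t, d)))
    print(sent)
```

With this shape, adding a page means publishing a new topic from napari and listening for it in the web app; `relay` itself stays unchanged.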

- Tailwind CSS - GitHub

  - Like Bootstrap but more modern.

  - VS Code extension: Tailwind CSS IntelliSense

- The `styles.css` file is trimmed/customized "in production".

- Do not edit `.js` files under `static`.

  - `.json` files in `static` are fair game.

- Edit `.js` files under `js`, then build as above.

- If you made a JavaScript-only change:

  - `make build`

  - Typically a hard reload (Shift-Command-R) in Chrome is enough.

  - Typically you do not need to restart napari/webmon unless you changed those.

- We are using Vega-Lite for viz.

  - You can modify the `.json` files in `static/specs`.
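For orientation, a minimal Vega-Lite line chart has the shape below. It is written as a Python dict so it can be generated or checked from a script. The field names `time` and `load_ms`, the named data source `stats`, and `write_spec` are hypothetical; the specs actually in `static/specs` may look quite different.

```python
import json
from pathlib import Path

# Minimal Vega-Lite line chart; field names and data source name are placeholders.
LINE_CHART_SPEC = {
    "$schema": "https://vega.github.io/schema/vega-lite/v4.json",
    "data": {"name": "stats"},  # named data source, filled in at runtime by the page
    "mark": "line",
    "encoding": {
        "x": {"field": "time", "type": "quantitative"},
        "y": {"field": "load_ms", "type": "quantitative"},
    },
}


def write_spec(spec: dict, path) -> Path:
    """Write a spec as pretty-printed JSON, creating parent directories if needed."""
    path = Path(path)
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(spec, indent=2) + "\n", encoding="utf-8")
    return path
```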

- Issues and PRs are needed.

- Update this README if you were stuck on something.

  - Or if you found useful resources for learning.

- Modify existing pages or create new ones.

  - Use tailwindcss and Vega-Lite more fully.

  - Use other styles/packages/modules beyond those.

- Modify napari to share more things.

  - Try sharing `numpy` data backed by a shared memory buffer.

  - Create a system so we only share data if a client is asking for it.

- Modify napari so the WebUI can control more things.
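One possible shape for the "only share data if a client is asking for it" idea is a registry of subscriptions that gates an expensive `produce()` callback. This is a hypothetical in-process sketch; the class and method names are invented, and in webmon the registry would have to be visible to napari across the process boundary (for example through shared memory), which is not shown.

```python
from collections import defaultdict
from typing import Any, Callable, DefaultDict, Set


class Subscriptions:
    """Hypothetical registry of which clients want which topics."""

    def __init__(self) -> None:
        self._clients: DefaultDict[str, Set[str]] = defaultdict(set)

    def subscribe(self, client_id: str, topic: str) -> None:
        self._clients[topic].add(client_id)

    def unsubscribe(self, client_id: str, topic: str) -> None:
        self._clients[topic].discard(client_id)

    def drop_client(self, client_id: str) -> None:
        """Remove a client from every topic, e.g. when its WebSocket disconnects."""
        for clients in self._clients.values():
            clients.discard(client_id)

    def wanted(self, topic: str) -> bool:
        return bool(self._clients.get(topic))

    def publish(self, topic: str, produce: Callable[[], Any], send: Callable[[str, Any], None]) -> bool:
        """Call produce() and send its result only if some client wants this topic."""
        if not self.wanted(topic):
            return False  # skip the (possibly expensive) produce() entirely
        send(topic, produce())
        return True
```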

To add a new page:

- In `webmon.py` add an entry to the `pages` list, like: `pages = ["viewer", "loader", "my_new_page"]`

- In `build.js` add the `.js` file for your page: `entryPoints: ['src/viewer.js', 'src/loader.js', 'src/my_new_page.js'],`

- Add your new `js/src/my_new_page.js` and `templates/my_new_page.html`.
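A hypothetical helper for double-checking the new-page steps. It assumes the layout they describe (`js/src/<page>.js` and `templates/<page>.html`) and additionally assumes `build.js` lives at `js/build.js`, which the `src/...` entry points suggest but which is not confirmed here. It does not check `webmon.py`.

```python
from pathlib import Path
from typing import Iterable, List


def missing_page_files(webmon_dir, pages: Iterable[str]) -> List[str]:
    """List what is still missing for each page, relative to webmon_dir.

    Assumed layout: js/src/<page>.js, templates/<page>.html, and an
    entry 'src/<page>.js' somewhere in js/build.js.
    """
    root = Path(webmon_dir)
    build_js = root / "js" / "build.js"
    build_text = build_js.read_text(encoding="utf-8") if build_js.is_file() else ""
    missing: List[str] = []
    for page in pages:
        for rel in (f"js/src/{page}.js", f"templates/{page}.html"):
            if not (root / rel).is_file():
                missing.append(rel)
        if f"src/{page}.js" not in build_text:
            missing.append(f"js/build.js entry for src/{page}.js")
    return missing
```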

If you are using your own script to launch napari, make sure you are using the convention:

```python
if __name__ == "__main__":
    main()
```

By default SharedMemoryManager starts its server process with the platform's default start method. On macOS that is spawn (the default since Python 3.8), which runs your script a second time. Only this time `__name__` will not be set to `"__main__"`.

Your code and napari's code should not do anything at import time that is not safe to do a second time. The main flow of the application should only come from a guarded `main()` call.
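A minimal sketch of a launch script that follows this convention. Only `SharedMemoryManager` and `ShareableList` are real standard-library APIs here; the list contents are placeholders, and a real script would also start napari inside `main()`.

```python
from multiprocessing.managers import SharedMemoryManager


def main() -> list:
    # Everything that starts processes happens inside main(), never at import time.
    with SharedMemoryManager() as smm:
        # A ShareableList lives in shared memory; another process could attach to it by name.
        shared = smm.ShareableList(["frame", 0])
        shared[1] = 42
        # Copy the values out before the manager shuts down and frees the memory.
        return [shared[i] for i in range(len(shared))]


if __name__ == "__main__":
    # Under spawn, the manager's child process re-imports this file as "__mp_main__",
    # so this guard keeps it from calling main() again.
    print(main())
```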

The SharedMemoryManager starts a second process, a little manager process that will talk to remote clients. This second process wants the same overall context as the first process, but it runs a little server of some sort; it does not want to run napari itself.

If you get the error `An attempt has been made to start a new process before the current process has finished its bootstrapping phase`, it is probably the same `__main__` problem as above: napari ran a second time, which created SharedMemoryMonitor a second time, which tried to start its manager process a second time. A fork loop, basically.

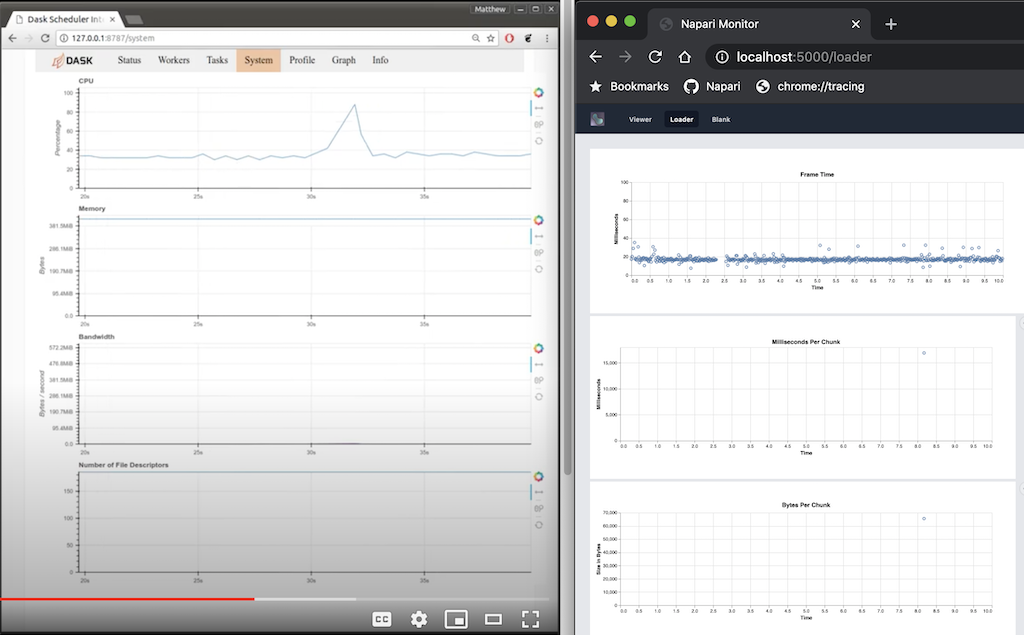

The Dask Dashboard design is very similar to webmon's. It's also a localhost website that you connect to, with tabs along the top that show graphs and other visualizations. Theirs is much more advanced. The image above shows the Dask Dashboard on the left, from this video, and webmon on the right.

They use Bokeh where we use Vega-Lite. Bokeh might be better suited to streaming data. Bokeh also offers a Python server that talks to the JavaScript front end.

Beyond messages, the Big Kahuna would be using shared memory buffers to back numpy arrays and recarrays. Then we could share large chunks of binary data. This has not been attempted yet, so throughput limits are not known; the WebSocket hop in particular might be the slow part. If it is, could an Electron process be a shared memory client and render the data directly? TBD.
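A minimal sketch of the numpy idea, which webmon has not attempted yet: `np.ndarray(..., buffer=shm.buf)` wraps a shared memory block without copying, and a second process can attach to the same block by name. The helper names and the tile record layout are hypothetical, and the shape and dtype would have to be communicated separately (for example in a message over the existing channel).

```python
from multiprocessing import shared_memory
from typing import Tuple

import numpy as np


def create_shared_array(shape: Tuple[int, ...], dtype) -> Tuple[shared_memory.SharedMemory, np.ndarray]:
    """Allocate a new shared memory block and wrap it in a numpy array (no copy)."""
    dtype = np.dtype(dtype)
    nbytes = int(np.prod(shape)) * dtype.itemsize
    shm = shared_memory.SharedMemory(create=True, size=max(nbytes, 1))
    array = np.ndarray(shape, dtype=dtype, buffer=shm.buf)
    return shm, array


def attach_shared_array(name: str, shape: Tuple[int, ...], dtype) -> Tuple[shared_memory.SharedMemory, np.ndarray]:
    """Attach to an existing block by name; shape and dtype must match the creator's."""
    shm = shared_memory.SharedMemory(name=name)
    array = np.ndarray(shape, dtype=np.dtype(dtype), buffer=shm.buf)
    return shm, array


if __name__ == "__main__":
    # Hypothetical record layout for a recarray of visible tiles.
    tile_dtype = np.dtype([("level", "i4"), ("row", "i4"), ("col", "i4")])
    shm, tiles = create_shared_array((3,), tile_dtype)
    try:
        tiles[:] = [(0, 0, 0), (0, 0, 1), (1, 2, 3)]
        peer_shm, peer = attach_shared_array(shm.name, (3,), tile_dtype)
        print(peer.view(np.recarray).col)
        del peer  # release the view before closing the block
        peer_shm.close()
    finally:
        del tiles
        shm.close()
        shm.unlink()
```

Every array view must be released before `close()`; otherwise Python raises `BufferError` because the buffer still has exported pointers.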

Please add more references if you found them useful.

- multiprocessing.shared_memory (official docs)

- Python Shared Memory in Multiprocessing (numpy recarray)

- Interview With Davin Potts (core contributor)

- FlaskTest

  - A simple demo that combines Flask, Socket-IO, and three.js/WebGL