Conversation

|

Thanks for your pull request, @JackStouffer! Bugzilla references

|

441931e to

0d1765c

Compare

|

#2991 has been closed because it will be a part of the future |

|

@JackStouffer Since

|

|

@CyberShadow heh, blast from the past. Sure, if people are interested, I'll resurrect this. |

|

There is a |

|

@CyberShadow Fixed |

int[] arr = null;

writeln(mean(arr, 5));This prints 0. Is that correct? What does the seed do, anyway? I think it could use a bit more elaboration. |

|

Yeah, the seed is mainly there to support user defined types. It doesn't really make sense when you're just using plain number types. I'll add a note in the docs. |

|

OK. Shouldn't |

|

@CyberShadow It will if you don't explicitly set the seed. Or, are you saying that there should be some |

|

Maybe I just don't understand the docs.

So far so good.

Populate in what way? What effect does it have, exactly?

The above conditions are both satisfied for my example above. So it should return

A seed is given but it is a numerical type, so this shouldn't apply to my example above. Also, is the seed value used anywhere, or just its type? |

Yep, I think nonsensical instantiations should be rejected outright to avoid confusion. |

The value is used in both the call to

Ok, changed the template constraints on the second overload and changed the docs. |

std/numeric.d

Outdated

| /// ditto | ||

| auto mean(R, T)(R r, T seed) | ||

| if (isInputRange!R && | ||

| (is(T == real) || !isNumeric!(T)) && |

There was a problem hiding this comment.

to make the first overload compile

There was a problem hiding this comment.

plus it also correctly returns nan if you do

int[] a = [];

mean(a, real(0));There was a problem hiding this comment.

Why you restrict the seed type at all?

Isn't assert((cast(int[]) null).mean(0) == 0) a valid use case?

There was a problem hiding this comment.

Isn't assert((cast(int[]) null).mean(0) == 0) a valid use case?

No. Taking the mean of an array and not giving back a FP value makes no sense from a statistical perspective.

This is why this function is in std.numeric and not std.algorithm, because it's opinionated by design.

wilzbach

left a comment

wilzbach

left a comment

There was a problem hiding this comment.

Please mind my feedback about FP precision.

Moreover,

- if it's just

meanI would search instd.algorithm.iterationfor this (after all that's wheresumis). What's your reasoning forstd.numerical? - there's quite a bunch of statistical properties that can be computed online (i.e. as an OutputRange):

at least mean, stdev, variance, skewness, kurtosis, min, and max. I wonder whether it would make sense to add aStatReportinstead. It would still be very easy to usearr.stat.mean(of course the convenience overload can still exist) and we could even make more expensive computations opt-in (e.g.arr.stat!(Stat.skewness, Stat.kurtosis))

std/numeric.d

Outdated

|

|

||

| if (r.empty) | ||

| { | ||

| return Unqual!(ElementType!R).init; |

There was a problem hiding this comment.

Even if documented, this is quite counter-intuitive. After all - the purpose of a seed is to be used for these edge cases ;-)

What's your motivation for this?

Here's how I would expect mean to work:

(cast(int[]) null).mean; // nan

(cast(int[]) null).mean(0); // 0There was a problem hiding this comment.

The problem as mentioned above is that mean should return a FP result when ever possible.

std/numeric.d

Outdated

| auto pair = reduce!((a, b) => tuple(a[0] + 1, a[1] + b)) | ||

| (tuple(size_t(0), seed), r); | ||

|

|

||

| return pair[1] / pair[0]; |

There was a problem hiding this comment.

Please let's use a more efficient algorithm here. Or to quote Jonis alonen

Do not use this method to compute variance ever. This is one of those cases where a mathematically simple approach turns out to give wrong results for being numerically unstable. In simple cases the algorithm will seem to work fine, but eventually you will find a dataset that exposes the problem with the algorithm.

To put this in code. The naive approach vs. an online.combination after Welford. I used StatReport here, it's a OutputRange for mean + variance, butSummary of dstats is nice as well.

auto arr = [10e19 + 4, 7, 10e9 + 13, 10e15 + 16];

writefln("Naive: %f", arr.mean);

writefln("Online: %f", arr.stat.mean);yields:

Naive: 25002500002500001792.000000

Online: 25002500002500000008.000000

For reference, it should be 25002500002500000010.

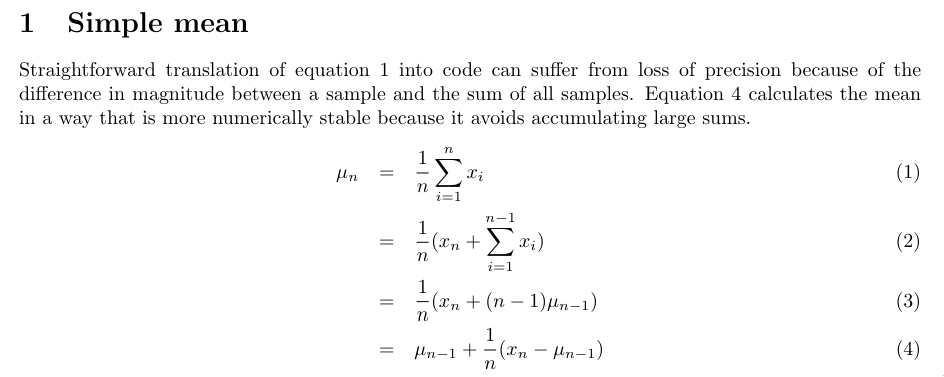

Or if you prefer to read a paper (the simple trick is equation 4 in chapter 1):

This method is more precise, but not as efficient due to division in the loop. However, I argue that most users won't care and correctness is more important than performance for a standard library.

std/numeric.d

Outdated

| assert(bigint_arr.mean(BigInt(0)) == BigInt("3")); | ||

| assert(bigint_arr2.mean(BigInt(0)) == BigInt("3")); | ||

| } | ||

|

|

There was a problem hiding this comment.

A test for floating point accuracy would be nice.

std/numeric.d

Outdated

| return real.nan; | ||

| } | ||

|

|

||

| return mean(r, real(0.0)); |

There was a problem hiding this comment.

Are you aware that real FP (in this summation) is quite slower than double?

/**

Compile with:

> ldc -release -O3 -mcpu=native -boundscheck=off

*/

import std.algorithm, std.range;

private void doNotOptimizeAway(T)(auto ref T t)

{

import core.thread : getpid;

import std.stdio : writeln;

if(getpid() == 1) {

writeln(*cast(char*)&t);

}

}

/// ditto

auto mean(R, T)(R r, T seed)

{

import std.algorithm.iteration : sum, reduce;

static if (hasLength!R)

{

if (r.length == 1)

return r.front;

return sum(r, seed) / r.length;

}

else

{

import std.typecons : tuple;

auto pair = reduce!((a, b) => tuple(a[0] + 1, a[1] + b))

(tuple(size_t(0), seed), r);

return pair[1] / pair[0];

}

}

void main() {

import std.datetime;

import std.stdio;

import std.array;

import std.conv;

import std.random;

auto arr = iota(100_000).array;

auto bench = benchmark!(

{ doNotOptimizeAway({

return arr.mean(real(0));

}());},

{ doNotOptimizeAway({

return arr.mean(double(0));

}());},

{ doNotOptimizeAway({

return arr.filter!(a => a >= 0).mean(real(0));

}());},

{ doNotOptimizeAway({

return arr.filter!(a => a >= 0).mean(double(0));

}());},

)(50_000);

string[] names = ["mean (real)", "mean (double)", "mean (real, InputRange)", "mean (double, InputRange)"];

foreach(j,r;bench)

writefln("%-15s = %s", names[j], r.to!Duration);

}> ldc -release -O3 -mcpu=native -boundscheck=off test.d && ./test

mean (real) = 5 secs, 185 ms, 845 μs, and 3 hnsecs

mean (double) = 3 secs, 869 ms, 445 μs, and 9 hnsecs

mean (real, InputRange) = 6 secs, 109 ms, 83 μs, and 8 hnsecs

mean (double, InputRange) = 5 secs, 940 ms, 972 μs, and 1 hnsec

There was a problem hiding this comment.

I assume that if you want statistical values, accuracy will be more valuable than speed.

There was a problem hiding this comment.

I assume that if you want statistical values, accuracy will be more valuable than speed.

FWIW with the test provided below double yields exactly the same inaccuracy (25002500002500001792.000000).

The point was that always picking real is a severe performance penalty with almost no neglectable accuracy improvements, so the user should at least have the choice.

Also there's another, very valid point about allowing double: it's consistent on all platforms. I purposefully ignored real on my Tinflex random project last year because the algorithm was very sensitive to small change (more details about FP behavior with dmd or as a handy list of FP issues to keep in mind).

std/numeric.d

Outdated

| BigInt("1"), BigInt("2"), BigInt("3"), BigInt("6") | ||

| ]); | ||

| assert(bigint_arr.mean(BigInt(0)) == BigInt("3")); | ||

| assert(bigint_arr2.mean(BigInt(0)) == BigInt("3")); |

There was a problem hiding this comment.

Could we test mean on an empty array of a user-defined typed (e.g. BigInt). Thanks!

edit: has been fixed.

std/numeric.d

Outdated

| } | ||

|

|

||

| static if (hasLength!R) | ||

| { |

There was a problem hiding this comment.

As mentioned below, it's more precise to do division on every step, but of course a bit slower.

std/numeric.d

Outdated

| return r.front; | ||

| } | ||

|

|

||

| return sum(r, seed) / r.length; |

There was a problem hiding this comment.

@JackStouffer Since std.las never materialized

FYI there's mir.math.sum: https://github.com/libmir/mir-algorithm/blob/master/source/mir/math/sum.d

It's well tested and highly optimized.

There was a problem hiding this comment.

If anyone is willing to port that to Phobos, I'd be willing to use it :P

|

@wilzbach I tried your algorithm and I'm still getting the same result for the test case. What do I need to change? auto mean(R, T)(R r, T seed)

{

T meanRes = seed;

size_t i = 0;

foreach (e; r) {

immutable delta = (e - meanRes);

meanRes += delta / ++i;

}

return meanRes;

}

auto arr = [10e19 + 4, 7, 10e9 + 13, 10e15 + 16];

writefln("Online: %f", arr.mean(real(0.0))); // 25002500002500001792.000000 |

Looks like your void main()

{

import std.stdio;

auto arr = [10e19 + 4, 7, 10e9 + 13, 10e15 + 16];

writefln("Online: %f", arr.mean(double(0)));

writefln("Online: %f", arr.mean(real(0)));

} |

|

Gah! Seems like this needs to be really re-worked. |

|

@wilzbach I think addressed most of the issues. |

95f96bd to

55b44d3

Compare

Behavior for empty inputI thought a bit and returning

|

wilzbach

left a comment

wilzbach

left a comment

There was a problem hiding this comment.

Looks a lot better now. Thanks for addressing my concerns!

I found some typos + had an idea for a good tests, but I think the only blocker left is the approval of the name addition.

Lastly I am still not really happy about the different behavior between an empty array of type long and a user-subtype of long. I am aware that the user will be warned by a compile error as the seed parameter is missing, but it's still not a nice behavior.

AFAICT the second overload solely exists for arbitrary-precision types like BigInt and there aren't many precedent of math* or numeric caring about BigInt. gcd is the only one I know of.

std/numeric.d

Outdated

| is used. The default result type is `real`, which is more accurate, | ||

| but far slower than `double` on many architectures. | ||

|

|

||

| For user defined types, element by element summation is used. Additionally |

There was a problem hiding this comment.

Nit: user-defined is the common spelling here

std/numeric.d

Outdated

| The first overload of this function will return `T.init` if the range | ||

| is empty. However, the second overload will return `seed` on empty ranges. | ||

|

|

||

| This function is $(BIGOH r.length). |

There was a problem hiding this comment.

big O notations are adjectives, not nouns.

std/numeric.d

Outdated

| The mean of `r` when `r` is non-empty. | ||

| */ | ||

| T mean(T = real, R)(R r) | ||

| if (isInputRange!R && |

There was a problem hiding this comment.

Nit: The if constraint should be on the same indent level as the declaration.

See also: it's part of the DStyle and will be automated soon.

std/numeric.d

Outdated

| BigInt[] bigint_arr3 = []; | ||

| assert(bigint_arr3.mean(BigInt(0)) == BigInt(0)); | ||

| } | ||

|

|

There was a problem hiding this comment.

One last idea: testing the behavior with subtypes:

struct MyFancyDouble

{

double v;

alias v this;

}(they currently use the second overload, however, intuitively as a user I would expect the first one).

There was a problem hiding this comment.

(they currently use the second overload, however, intuitively as a user I would expect the first one).

Yeah, well that's the problem with alias this and templates. alias this works great with function overloads, but with templates you have to either explicitly cast or explicitly define the template types a la the hacks in std.file

std/numeric.d

Outdated

| // inaccurate for integer division, which the user defined | ||

| // types might be representing | ||

| auto pair = reduce!((a, b) => tuple(a[0] + 1, a[1] + b)) | ||

| (tuple(size_t(0), seed), r); |

There was a problem hiding this comment.

Hmm AFAICT this only makes sense for BigInt (or similar types with arbitrary precision), for all other user-defined or subtyped types using real and the first overload makes more sense...

Also there's no need to save and compute the length is hasLength is available. Another lambda comes with a cost.

There was a problem hiding this comment.

Hmm AFAICT this only makes sense for BigInt (or similar types with arbitrary precision), for all other user-defined or subtyped types using real and the first overload makes more sense...

The problem is there's no way to know if the type doesn't use alias this. And if it does use alias this, then they can cast to real in order to get the other overload.

Also there's no need to save and compute the length is hasLength is available. Another lambda comes with a cost.

ok

There was a problem hiding this comment.

The problem is there's no way to know if the type doesn't use alias this. And if it does use alias this, then they can cast to real in order to get the other overload.

There's and that was my entire point:

import std.bigint;

import std.stdio;

void main(string[] args)

{

struct MyFancyDouble

{

double v;

alias v this;

}

pragma(msg, typeof(MyFancyDouble.init / size_t(0)));

pragma(msg, typeof(BigInt.init / size_t(0)));

}Or in other words: if the division by size_t yields a FloatingPoint type -> algorithm from overload 1 should be used.

The |

c7e18f9 to

718017e

Compare

Yet another reason for putting it into |

|

Ping @wilzbach @CyberShadow Moved it back to std.algorithm as that makes the most sense. Let's get a final decision on this and get it out of the queue. |

Thanks! I think the API is looking much better than the initial iteration. Still, math stuff is really not my area of expertise (I was off on a limb reviewing this in the first place), so deferring this to std.algorithm code owners. |

std/algorithm/iteration.d

Outdated

| Returns: | ||

| The mean of `r` when `r` is non-empty. | ||

| */ | ||

| T mean(T = real, R)(R r) |

There was a problem hiding this comment.

We should move away from the use of real as a default and treat it as a special interest type. Please use double here.

std/algorithm/iteration.d

Outdated

| if (isInputRange!R && | ||

| isNumeric!(ElementType!R) && | ||

| !isInfinite!R && | ||

| is(Unqual!(T) == T)) |

There was a problem hiding this comment.

superfluous parens, use Unqual!T

std/algorithm/iteration.d

Outdated

|

|

||

| // Knuth & Welford mean calculation | ||

| // division per element is slower, but more accurate | ||

| foreach (e; r) |

There was a problem hiding this comment.

Avoid foreach, use explicit loops

There was a problem hiding this comment.

Huh? We're moving away from foreach?

There was a problem hiding this comment.

it's a convenience engine and may copy stuff etc. Just use a loop and use r.front only once inside the function.

std/algorithm/iteration.d

Outdated

| // division per element is slower, but more accurate | ||

| foreach (e; r) | ||

| { | ||

| immutable T delta = (e - meanRes); |

std/algorithm/iteration.d

Outdated

| foreach (e; r) | ||

| { | ||

| immutable T delta = (e - meanRes); | ||

| meanRes += delta / ++i; |

There was a problem hiding this comment.

better use meanRes += delta / i++; initializing i to 1

| auto mean(R, T)(R r, T seed) | ||

| if (isInputRange!R && | ||

| !isNumeric!(ElementType!R) && | ||

| is(typeof(r.front + seed)) && |

There was a problem hiding this comment.

the type of + should be numeric

| if (isInputRange!R && | ||

| !isNumeric!(ElementType!R) && | ||

| is(typeof(r.front + seed)) && | ||

| is(typeof(r.front / size_t(1))) && |

std/algorithm/iteration.d

Outdated

|

|

||

| if (len > 0) | ||

| return sum(r, seed) / len; | ||

| else |

std/algorithm/iteration.d

Outdated

| // types might be representing | ||

| static if (hasLength!R) | ||

| { | ||

| immutable len = r.length; |

There was a problem hiding this comment.

that's overdoing it - just use r.length

In the BigInt case this isn't true, because the type of the addition is BigInt and |

|

Thanks everyone for getting this through! |

Now that #2991 has been closed, I am reintroducing this function. I believe std.numeric to be a better fit than std.algorithm.iteration, so I moved it.

See #3679 for previous discussion

Also fixes issue 14034