Reduce Task and async method overheads#9471

Conversation

|

@benaadams, would you mind giving this a go with your various test suites (microbenchmarks and ASP.NET) to see how well this does for you? |

|

Good timing; have test-bed already setup and running 😄 |

😄 |

|

Don't know how much its used but line 2330 in TaskFactory.cs could change from if (Interlocked.CompareExchange(ref m_firstTaskAlreadyCompleted, 1, 0) == 0)to? if (Interlocked.Exchange(ref m_firstTaskAlreadyCompleted, 1) == 0) |

|

Definitely hasn't regressed; peaking up to 3.3GiB/s (2 x D15 v2 - 20 Cores, 2 Numa, Win <-> Linux) I get the odd run that locks at ~1.9GiB/s but was getting that before this change. Is probably an entire server rack complaining about their noisy neighbour 😄 Will pull some traces |

|

/cc @mikeharder |

|

Plaintext Traces https://aoa.blob.core.windows.net/aspnet/HttpTest-Task.zip (97MB) Sampling trace in Will try the task and treadpool tests in morning. |

|

We'll likely merge clash in ThrowHelper #9473 |

|

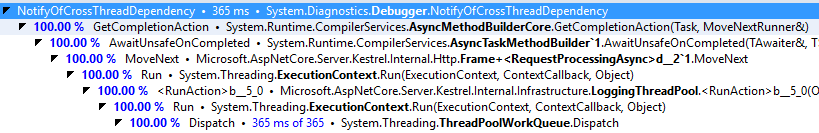

AsyncMethodBuilder.cs line 888

Have been handling this with an extra call to separate NoInline one more call away. (e.g. in ThrowHelper) As there are already two calls might be worth removing the e.g. if (Debugger.IsAttached)

Debugger.NotifyOfCrossThreadDependency(); |

|

Hmm... is public, might have to leave the inner |

|

Raised PR for Debugger #9490 |

- Slim down AsyncTaskMethodBuilder`1.Task and make it aggressively inlined. The common case we care to optimize for is synchronous completion, in which case the first access to Task will already fine m_task non-null, and we want that access inlined. - Slim down AsyncTaskMethodBuilder`1.SetResult. As with Task, we care most about the synchronous completion path, as when perf matters, most async method invocations complete synchronously. The code path is streamlined so that the synchronous completion path can mostly be inlined. - Replace some throws with ThrowHelper - Mark GetTaskForResult as aggressive inlining. It contains a lot of C# code, but for a given TResult, the JIT trims away the majority of the implementation.

- Remove some unecessary intermediate functions, e.g. Execute(), manually inlining it into its one caller - Make the common completion path (no exceptions, no cancellation, etc.) more inlineable, and avoid calling some functions (like AddExceptionsFromChildren) when we know they will be nops. - Make FinishContinuations inlineable. When there aren't any continuations, this shaves off a measurable percentage of time. When there are, we're no worse off, as the FinishContinuations entrypoint gets inlined, so we still only have the one function call to RunContinuations. - Make TaskCompletionSource.TrySetResult more inlineable. It was just on the cusp, with an extra branch / call to IsCompleted putting it over the edge. But the common path for calling TrySetResult is when the call will successfully transition, in which case we don't need the IsCompleted call; it's only necessary if/when we lose the race condition, in which case we can pay a bit more to call SpinUntilCompleted. - Avoid some duplicate logging-related calls - Remove AggressiveInlining from an ETW-related method that did not need it; the call sites can instead just check IsEnabled before calling it. - Remove some unnecessary writes, casts, locals, etc., make some fields readonly - Change CompareExchange to Exchange in Task.WhenAny completion

41c7282 to

f45a336

Compare

|

Thanks, @benaadams and @kouvel. |

|

@stephentoub will do some longer runs today with the latest merges; and the Task tests |

|

Thanks, @benaadams. |

|

Running tests on updated libs https://aoa.blob.core.windows.net/aspnet/HttpTest-Task2a.zip (106MB) Will post traces when finished; using the |

|

@stephentoub sampling traces

|

|

Thanks for running these, Ben. What am I looking at here? Are these numbers better? worse? same? |

|

Long running execution samples to see what's being executed. The workloads are definitely better :) There are some slight regressions when very many threads end up queuing batches to the global threadpool; but they aren't consistent. As discussed previously that looks like increased contention from the essentially empty tasks going even faster, rather than a practical issue. One thread creating single threadpool/Task work on 20 core VM was always an outliner (1/4 speed of rest) and that seems to have gone. 💯 ASP.NET workload looks good. |

|

LGTM |

|

Ideally we'd run our benchmarks as well before the ASP.NET workload is considered "good". @benaadams it would be good to understand what kinds of tests you're running and what spectrum of things are being covered. If you have some that we're missing, we should get them into our benchmarks. |

I've been running my own that test a spectrum of things that hit these code paths, in addition to the ASP.NET plaintext and JSON workloads. Ben's been helpful in providing additional verification. What other scenarios are you concerned this will regress, David? |

|

I don't have any problems with this change in particular. It be cool if we were all running the same set of tests. Also I'd like it if somebody from our team also ran benchmarks for changes where you think it may affect our scenarios. More eyes on it is a good thing. PS: Ben I do trust you 😁 |

I would like that, too. |

Am testing 12 different ways to call and use Tasks, with awaiting, continuations, fanout, chains and direct returns. Via both the threadpool and dedicated threads. Also calling QUWI from both dedicated threads and threadpool. These are tested being called serially and in parallel (x1, x2, x16, x64, x512) and at different recursion depths (x1, x16, x64, x512). In total 650 different tests ((12+1) x 2 x 5 x 5); which are then run in batches of 262,144 iterations (then pause for full GC) The The Though the traces are mostly for identifying the hot methods than the actual performance. I also run the Task tests without profiling on 4 core, 8(16HT) core and 20 core VM. The plaintext test on the VM which is where it is bursting up to 3.3.GiB/s for requests/sec: 2,561,367.13; though I am testing over shared networking between two VMs in two different Azure subscriptions and it does vary between runs. The ASP.NET test is particularly important because as the code paths for the global threadpool have become more efficient; it has increased contention on it for the zero workload tests, with the contention becoming stronger as the core count increases and across Numa nodes. Adding a workload to the threadpool items decreases this contention and is more real world; with the ASP.NET being a very relevant workload to use to do this. Plaintext is the shortest task workload to check the contention "relief" on; the others would mostly be in their own code and decrease the contention much more significantly. The simple plaintext server code was included in earlier bundle in thread though is https://aoa.blob.core.windows.net/aspnet/HttpTest-Code.zip The binaries for coreclr and corefx from current source, aspnet from myget and the two executables are in earlier comment #9471 (comment) (also help with correlating the traces to source) |

I'd like that too 😄 |

|

I will run Plaintext before and after this change. Are we expecting any change in throughput? |

I wouldn't expect any significant impact; plaintext doesn't use tasks much, and the impact here is mostly on the overhead associated with task execution. It's possible that the combination of this PR, #9562, and #9560 might move the needle slightly, as together they'll end up reducing the overhead associated with async methods, but I wouldn't expect anything huge. |

|

Before: 2.0.0-beta-001559-00 (2/10/17 21:38 UTC) |

|

There are a few other related changes like the no-line vs stackcrawl mark,

it may be easier to compare before to current rather than try to pickout

the individual commits

…On 14 February 2017 at 00:42, Mike Harder ***@***.***> wrote:

Before: 2.0.0-beta-001559-00 (2/10/17 21:38 UTC)

Commit: 2/12/17 00:58 UTC

After: 2.0.0-beta-001577-00 (2/13/17 19:09 UTC)

—

You are receiving this because you were mentioned.

Reply to this email directly, view it on GitHub

<#9471 (comment)>, or mute

the thread

<https://github.com/notifications/unsubscribe-auth/ABFwrl2SNuNloM5QTwQf4_inRL1XZFeWks5rcPiOgaJpZM4L8ye4>

.

|

|

1577 is currently the latest build at https://dotnet.myget.org/feed/dotnet-core/package/nuget/Microsoft.NETCore.App |

|

No significant difference between 1559 and 1577 for Plaintext. |

Reduce Task and async method overheads Commit migrated from dotnet/coreclr@2b7a76c

This PR attempts to reduce the overhead associated with executing Tasks and invoking async methods.

Microbenchmarks look pretty good:

AsyncTaskMethodBuilder<bool>and completing it "synchronously" (before accessing its Task) with a known cached value: 1.95xAsyncTaskMethodBuilder<int>and completing it "synchronously" (before accessing its Task) with a known non-cached value: 1.53xAsyncTaskMethodBuilder<int>and completing it "asynchronously" (after accessing its Task): 1.38xTask<bool>method: 1.26x(I'd like to hold off on merging though until doing some more verification on ASP.NET workloads to make sure they're at least not negatively affected.)

cc: @jkotas, @kouvel, @benaadams