Preallocate web assembly workers#9394

Conversation

…ly module is compiled. This puts the work of loading and starting up the javascript in parallel with the wasm compile, reducing overall load times.

…join a newly created thread!

…u can't join a newly created thread!" This reverts commit 9ec2778.

…u can't join a newly created thread!" This reverts commit 9ec2778. I realized that the issue was with the test - you can't join() in main() on a thread emscripten might immediately create. This can be fixed in production by proxying the main thread on a pthread, but that would elide giving access to the PThread.foo arrays in the test.

… into preallocate-workers

Preallocate web assembly workers

kripken

left a comment

kripken

left a comment

There was a problem hiding this comment.

Great work, thanks @belraquib!

I hope we can find a better name for this option, but it isn't easy...

On the other hand, maybe we don't need a new option, if we turn it on by default! Is there ever a downside? That is, why not always do the effect of setting PREWARM_PTHREAD_POOL_WORKERS_SIZE to the value of PTHREAD_POOL_SIZE? (i.e. always immediately create preallocated workers, of the number we expect to want later for the pool)

| schedPrio: 0 | ||

| }, | ||

| // Workers that have been created but uninitialized. These have already been | ||

| // parsed, but the wabassembly model has not yet been loaded, making it |

| }, | ||

| // Workers that have been created but uninitialized. These have already been | ||

| // parsed, but the wabassembly model has not yet been loaded, making it | ||

| // distinct from the unusedWorkers below. |

There was a problem hiding this comment.

also, since we do use the wasm in workers as well, please clarify that this happens before loading the wasm on the main thread.

| pthreadMainJs = locateFile(pthreadMainJs); | ||

| var workers = []; | ||

|

|

||

| var createNumNewWorkers = numWorkers; |

There was a problem hiding this comment.

how about renaming createNumNewWorkers to numWorkersToCreate?

|

|

||

| // Specifies the number of web workers that are preallocated before runtime is | ||

| // initialized. If 0, workers are created on demand. | ||

| // Specifies the number of web workers that are preallocated with the |

There was a problem hiding this comment.

Maybe best to avoid the word "preallocated" here, as we use it for the other group now in the JS.

There was a problem hiding this comment.

Done. Using the word created.

| // webassembly module is compiled. If 0, workers are created on demand. You | ||

| // likely want this to be >= PTHREAD_POOL_SIZE. Note that this starts the | ||

| // webworkers in a state where they have yet to load the webassembly module. | ||

| var PREWARM_PTHREAD_POOL_WORKERS_SIZE = 0 |

There was a problem hiding this comment.

I think we can remove _WORKERS from the name, to better match the other one.

I don't have a good idea about what's best for the prefix, though. The current PREWARM_ is reasonable, but also PREALLOCATED_ is the name used in the JS. If we prefer the former, we should rename the JS I think.

There was a problem hiding this comment.

Are there really two distinct use cases here? Why not just extend the meaning of the existing PTHREAD_POOL_SIZE option?

There was a problem hiding this comment.

Yes. Image the following timeline

Document load - (a) - Wasm Compile - (b) - Post wasm compile - (c) - wasm main running

PTHREAD_POOL_SIZE adds a step at (c) - wherein we guarantee that N threads are allocated and immediately usable by wasm main running. This necessarily waits till the workers are loaded. Note that because this both creates the workers and waits, this waits for the entire worker creation and load for all threads created.

I'm proposing adding a step at (a) that creates the workers. These are not usable yet - the load hasn't been called. This allows the client to choose to either create the thread pool early at (c) using the current PTHREAD_POOL_SIZE option or to elide doing so until their runtime has started.

There was a problem hiding this comment.

I can't really think of a reason that a client would want to use PTHREAD_POOL_SIZE and not use PREWARM_PTHREAD_POOL_WORKERS_SIZE.

There was a problem hiding this comment.

I agree. The naive change would be to ensure PREWARM_PTHREAD_POOL_WORKERS_SIZE is max(PREWARM_PTHREAD_POOL_WORKERS_SIZE , PTHREAD_POOL_SIZE).

In general, though, I don't like behaviors set by settings to be dependent on multiple settings (in this case both PREWARM_PTHREAD_POOL_WORKERS_SIZE and PTHREAD_POOL_SIZE).

Is there a place we could print a warning? I'm also happy to make a change to library_pthread to spawn the max of those two settings if either is set.

There was a problem hiding this comment.

I think like @sbc100 that perhaps we can extend the current option to just do the new useful functionality in this PR - I'm not sure I understand the responses since, maybe I'm misunderstanding them? What is the downside to not adding a new option?

| static void *thread_start(void *arg) | ||

| { | ||

| // This should be long enough for threads to pile up. | ||

| sleep(2); |

There was a problem hiding this comment.

I think this is at risk of being a flakey test. Can we loop with a sleep until some condition is true?

There was a problem hiding this comment.

Yes - will ping with a fix.

| var PTHREAD_POOL_SIZE = 0; | ||

|

|

||

| // Specifies the number of web workers that are preallocated before the | ||

| // webassembly module is compiled. If 0, workers are created on demand. You |

There was a problem hiding this comment.

compiled => compiled on the main thread.

|

Normally we use PROXY_TO_PTREAD in tests so that the main thread can indeed join with the workers. |

|

re: On the other hand, maybe we don't need a new option, if we turn it on by default! Is there ever a downside? That is, why not always do the effect of setting PREWARM_PTHREAD_POOL_WORKERS_SIZE to the value of PTHREAD_POOL_SIZE? (i.e. always immediately create preallocated workers, of the number we expect to want later for the pool) I think we want to keep it separate in order to be able to pre-allocate the workers without loading them after wasm is compiled. This allows the client to load the threads as needed on-demand. This can be advantageous if the application doesn't need threads to start, and only spawns one due to an interaction later. |

|

Ah, I didn't see this comment at the bottom before, sorry!

I still don't see what the downside is, though? If the user asked to have a pool of workers, then we are creating that pool at some point. Why not create it even earlier since it's more efficient, as this PR does? :) |

The interesting this is that the "load" portion does take some amount of time - since it explicitly waits for the runtimes to fully load before having the main runtime start (via the run dependencies). At least that's what I think Line 821 in 2346596 is doing. I measured this to be ~20ms on a pretty beefy workstation, so it's not a ton of time savings admittedly. Because of this, I'd rather punt on the load until the first time the thread is required, which decreases the first time the user can interact with the application. However, if what you are suggesting is that if PTHREAD_POOL_SIZE is defined and PREWARM_PTHREAD_POOL_WORKERS_SIZE is not (or is smaller), then always set PREWARM_PTHREAD_POOL_WORKERS_SIZE to PTHREAD_POOL_SIZE, I can almost get behind that - my only concern is that I'm wary when settings are auto-updated. That being said, check out the changes to library_pthread and settings and let me know what you think |

|

I think the other suggestion being proposed is to not add a new option at all - to not have That would be equivalent to what you said here,

(but without adding the new setting). What do you think? |

|

I would rather keep the new setting. In my case, I explicitly wanted to not

create an active pthread pool because I want the application to start

faster.

…On Tue, Sep 10, 2019, 5:10 PM Alon Zakai ***@***.***> wrote:

I think the other suggestion being proposed is to not add a new option at

all - to not have PREWARM_PTHREAD_POOL_WORKERS_SIZE. And to just see this

PR as adding an additional optimization for the existing option, basically.

That would be equivalent to what you said here,

always set PREWARM_PTHREAD_POOL_WORKERS_SIZE to PTHREAD_POOL_SIZE

(but without adding the new setting). What do you think?

—

You are receiving this because you were mentioned.

Reply to this email directly, view it on GitHub

<#9394?email_source=notifications&email_token=ABHRXCRRTBYXYXGKMBRQTU3QJAEMRA5CNFSM4IUCC72KYY3PNVWWK3TUL52HS4DFVREXG43VMVBW63LNMVXHJKTDN5WW2ZLOORPWSZGOD6MQDCA#issuecomment-530121096>,

or mute the thread

<https://github.com/notifications/unsubscribe-auth/ABHRXCSR7M5BM55B6VV6M5DQJAEMRANCNFSM4IUCC72A>

.

|

|

Ah, I'm confused, sorry. Which settings do you want for your own app? I'd be surprised if that is faster than with |

Yup, PREWARM_PTHREAD_POOL_WORKERS_SIZE without PTHREAD_POOL_SIZE. This will let me pay the cost of loading the javascript workers in parallel with compiling wasm. It'll elide paying the cost to start up the threads until i really need them [as it is serial with respect to running the wasm module]. In my particular case, I don't need the threads until after the application is responsive, so creating the actual pool doesn't help. |

|

OK, I tink I understand. If I'm right, then anyone who wants PTHREAD_POOL_SIZE will certainly want them to be pre-warmed right? Which means PTHREAD_POOL_SIZE is kind of like addition or superset of PREWARM_PTHREAD_POOL_WORKERS_SIZE? In which case how about the new option is for exactly your case and rather than setting two different pool sizes we make it a boolean and call is something like |

|

@sbc100 Yup, exactly. If you have PTHREAD_POOL_SIZE you almost definitely want PREWARM_PTHREAD_POOL_WORKERS_SIZE but not vice-versa. I like PTHREAD_POOL_ONLY_PREWARM=1, will make that change tomorrow and ping the thread. |

…o concat syntax for concat'ing arrays.

kripken

left a comment

kripken

left a comment

There was a problem hiding this comment.

Thanks! Looks good overall. Some naming questions in a comment.

Also, we need to verify this isn't a regression, as we are changing the behavior for existing users. (That you don't intend to use the new default in your own app in particular worries me, as otherwise I'd assume your extensive testing is a good argument for changing the default.) I don't know that we have great benchmarks for startup, and likely there is a lot of variability here, but checking some of the things in the test suite may be good. Let me know if I can help there.

| // PTHREAD_POOL_ONLY_PREWARM = 1, then the workers will only be created and | ||

| // have their runtimes loaded on demand after the main runtime is initialized. | ||

| var PTHREAD_POOL_SIZE = 0; | ||

| var PTHREAD_POOL_ONLY_PREWARM = 0; |

There was a problem hiding this comment.

I think we can maybe improve this flag a little. One issue is that we call it prewarm here but preallocated in the implementation. It would be better to make that uniform. How about preallocate in both places?

A second thing is ONLY_PREWARM seems less clear than PREWARM.

Overall, what do you think about having this be var PTHREAD_POOL_PREALLOCATE = 1;?

The default value of the the (newly renamed) PTHREAD_POOL_PREALLOCATE as 1 should ensure that the current behavior is mostly unchanged - the worker load will still happen prior to the module being run. I believe this should be strictly better for current users, and am happy to check numbers if you can point me to a specific test. The non-default value can only be used if the main thread will not join the worker threads (which I added to the documentation in settings). This is true for my project, and allows me to shave a few more milliseconds off of startup time. |

|

Ah, I think I am confused now...

So the new flag is not the opposite of the one before? So either the code is wrong or the name is confusing atm, I think. Or maybe I'm just confused? |

|

For benchmarking, I think it would be enough to get a hello world program that requests a large thread pool, and seeing that startup speed is the same or better with this PR. We should check at least chrome and firefox. |

That is correct.

The new option is PTHREAD_POOL_PREALLOCATE, which defaults to 1. I had read this as refering to "fully" preallocating the worker (that is to mean,calling load) before the runtime starts. To bikeshed, what if this was renamed to: PTHREAD_POOL_LOAD_BEFORE_STARTING_RUNTIME=1 ? It would have the same sense as it does now... when it == 1, we will start the workers before starting the compile and then load the workers after the compile is done but before starting the runtime. If it is 0, then we will start the workers prior to starting the compile but not load the workers before runtime starts. |

There are 2 things to measure here, I think: startup time and worker allocation time. If PTHREAD_POOL_PREALLOCATE=1, there should be no change to startup time (or it may be slightly faster) and no change to thread allocation time. If the parameter PTHREAD_POOL_PREALLOCATE=0, then startup should be slightly faster but thread allocation will be slightly slower. I'll see about how to measure both of these... |

…er set for preallocated threads. I checked this with my change and without my change. With my change, the initial allocation takes ~27.66 ms. Without my change, the initial allocation takes 28.67 ms. As we are talking about a delta of ~1ms, this isn't much of a difference. Note that this is the version of the test that checks that there are no unexpected changes when the defaults are used when allocating threads. I'm not sure how to measure startup time in a test setting...

|

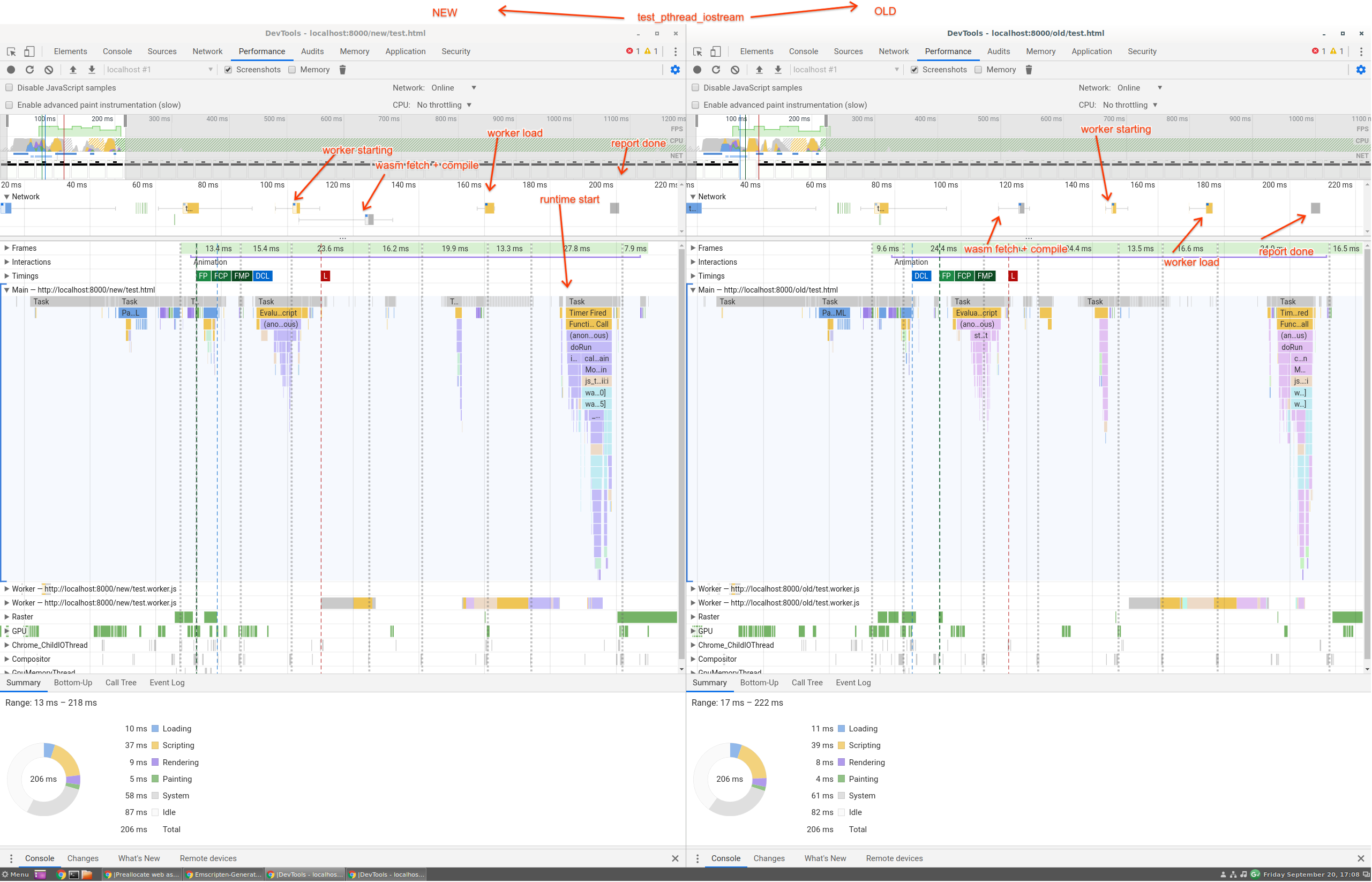

I couldn't figure out how to measure the startup time in a test, so I profiled the test_pthread_iostream test instead (see attached file) Things to note: left side is my change, right side is synced to head. I left markers on [conveniently, the network timeline has the main events] the workers starting, the workers loading, the wasm fetch and compile step, when the runtime starts, and the result is reported. Apologies for the large image. Note that this isn't highly scientific, and the startup delta is only ~20ms on my workstation. |

|

Thanks for the profiling data! Looks encouraging. |

|

How about PTHREAD_POOL_DELAY_LOAD? Defaulting to 0. |

|

I'm curious about the performance impact. Are you expecting to see higher gains with larger wasm files or more threads? |

That sounds good. I'll ping when that's done.

The biggest win will be if threading with a threadpool is enabled at all. If that's the case, then all clients will see a speed up on the order of (time to fetch + evaluate worker js). The worker(s) start up immediately and the fetch+eval happen as the webassembly starts the streaming compile phase. If the webassembly fetch + streaming compile takes longer than the fetch + evaluate worker.js files (and this is most likely), then those loads happen in parallel (in this change) and so startup is faster. The old code would explicitly wait to start until after the streaming compile step was done, which means that paralleling the work wasn't an option. This will be a constant time savings with respect to the device that the user is running on. Implicit in the above is that there is enough compute that the environment does the fetch and load of the various workers (and the streaming compile) in parallel, which may not be true in a single core/single thread environment... but I don't know if that's something to worry about given that this is the pthread implementation. When there are more threads, we'll see bigger wins as any contention between the workers loading while loading the webassembly is resolved in parallel to the streaming compile. With a larger webassembly module, the streaming compile will dominate. My project is a ~9MB wasm file; the compile is ~400ms (using unstable + wasm caching) and the worker loads (5 threads) is about 50-200ms each. Since these are done mostly in parallel; the workers and the module are ready in just over 410ms. However, as the module gets larger, it dominates the time profile and the savings from loading the workers in parallel isn't as much of a win [but hey, every little bit counts!] |

|

I updated; but merging the upstream I get an error with local tests: undefined symbol: __trunctfdf2 |

|

Can you see if |

That worked. I also had to rebuild LLVM |

|

About the perf analysis: There are 3 things to compare between,

In the analysis above, is 1 compared to 2 or 3? I definitely see the benefit of this PR when comparing 1 and 2, since as you said, it creates the workers while still doing other things like streaming. However, it's the difference between 2 and 3 that I'd like to understand more, as it is forcing us to add a flag here, and I'm worried about the fact we're having a hard time naming it, and that every time after a day or so that I return to this PR and try to read the code, I have a hard time following it. |

|

The state before this PR. The perf above is between 1 and 2. What (3) would look like would be moving the "worker load" part of the diagram and moving it to after the runtime has started; to whenever the client starts the first thread. The same constant cost will be paid, but it will be done after the runtime has started. For my project (5 workers, and note I can only do this as I don't join on the spawned threads!), this lets me defer 40ms of startup time to later. The savings will increase with the number of workers + cpu scaling. It's not a huge win, but it's something. I'm happy to remove the flag to make progress, however. EDIT - Actually, the defer'ed cost is not actually the same, it's slightly cheaper. When setting the flag to the default, we wait until the threads are fully loaded to load the runtime. When the flag is not the default, the behaviour is similar as to when there is no pool - the thread load request is sent but the runtime registers the thread to run on the worker and the main thread continues running. When the thread starts will be slightly later. Think of this as the behaviour of spawning threads without the threadpool, but without having to pay the cost of spinning up the worker. |

kripken

left a comment

kripken

left a comment

There was a problem hiding this comment.

Thanks @belraquib for the info! And sorry for the delay.

I think that's reasonable, and the new option name seems reasonable too.

| // parsed, but the wasm file has not yet been loaded, making it | ||

| // distinct from the unusedWorkers below. These workers will be created before | ||

| // loading wasm on the main thread. | ||

| preallocatedWorkers: [], |

There was a problem hiding this comment.

I think we should look at reverting/undoing this PR, the concept of preallocatedWorkers is identical to unusedWorkers, to the contrary what the comment might be stating. There is no reason why we should have two different concepts.

There was a problem hiding this comment.

We measured a speedup here, though - are you saying the measurement might have been wrong somehow? Or is there a way to achieve a similar speedup using just unusedWorkers?

There was a problem hiding this comment.

The idea of more eagerly loading up the Worker scripts and decoupling the passing of Wasm modules there is fine. Posted #10269 to remove the preallocatedWorkers construct.

Adding support for pre-allocating worker threads before the webassembly module is compiled. This puts the work of loading and starting up the javascript in parallel with the wasm compile, reducing overall load times. This works with the worker pool. The pool can either create + load the workers (default) or with `PTHREAD_POOL_DELAY_LOAD` it will only create them but leave loading for later. (That means that when pthreads are created the actual wasm will be loaded, but the work to create the Worker was already done earlier.)

Optimize end-to-end load time by allowing pthread workers to start loading prior to the webassembly compile step. This is done without a call to onmessage 'load', so these workers aren't ready yet.

This effectively puts the javascript parsing and loading of the workers in separate threads in parallel to the webassembly compile. Once the webassembly module is loaded, the normal PTHREAD_POOL_SIZE logic kicks in, and greedily uses the preallocated threads.

The test was a little tricky, as the main thread can't join() created threads.