[fx] provide a stable but not accurate enough version of profiler. #1547

Merged

FrankLeeeee merged 28 commits into hpcaitech:main on Sep 7, 2022



super-dainiu commented on Sep 6, 2022, on lines +184 to +187:

```python
@register_meta(aten.hardtanh_backward.default)
def meta_hardtanh_backward(grad_out: torch.Tensor, input: torch.Tensor, min_val: int, max_val: int):
    grad_in = torch.empty_like(input)
    return grad_in
```

super-dainiu (Contributor, Author): Only some extra registrations in this file.
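A meta registration like the one above describes an op's output (shape and dtype) without computing any values. The following is a minimal pure-Python sketch of that registry-and-dispatch pattern, for illustration only. `FakeMeta`, `META_TABLE`, and `run_meta` are hypothetical names, and this is not the actual `register_meta` used in this PR or in PyTorch.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass(frozen=True)
class FakeMeta:
    """Hypothetical stand-in for a meta tensor: shape and dtype, no data."""
    shape: Tuple[int, ...]
    dtype: str


# Hypothetical registry mapping an op name to its meta kernel.
META_TABLE: Dict[str, Callable[..., FakeMeta]] = {}


def register_meta(op_name: str):
    """Decorator that records `fn` as the meta kernel for `op_name`."""
    def wrapper(fn: Callable[..., FakeMeta]) -> Callable[..., FakeMeta]:
        META_TABLE[op_name] = fn
        return fn
    return wrapper


@register_meta("hardtanh_backward")
def meta_hardtanh_backward(grad_out: FakeMeta, inp: FakeMeta,
                           min_val: float, max_val: float) -> FakeMeta:
    # The input gradient always matches the input's shape and dtype,
    # so the output can be described without any arithmetic.
    return FakeMeta(inp.shape, inp.dtype)


def run_meta(op_name: str, *args) -> FakeMeta:
    """Dispatch to the registered meta kernel, or fail loudly if none exists."""
    if op_name not in META_TABLE:
        raise NotImplementedError(f"no meta kernel registered for {op_name!r}")
    return META_TABLE[op_name](*args)


x = FakeMeta((32, 128), "float32")
grad = run_meta("hardtanh_backward", x, x, -1.0, 1.0)
print(grad)  # FakeMeta(shape=(32, 128), dtype='float32')
```

Raising on a missing registration (rather than silently returning nothing) makes gaps in meta coverage visible, which is why "extra registrations" matter for a profiler that must handle every op it traces.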

super-dainiu commented on Sep 6, 2022, on lines +2 to +6:

```python
try:
    from . import _meta_registrations
    META_COMPATIBILITY = True
except:
    import torch
    META_COMPATIBILITY = False
```

super-dainiu (Contributor, Author): `META_COMPATIBILITY` is checked when Colossal-AI initializes.
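The excerpt above is a compatibility probe: attempt an import once at module load and record the outcome in a flag that later code can branch on. The following is a minimal standalone sketch of the same idea using `importlib`. The function name and module names are hypothetical, and it does not reproduce Colossal-AI's initialization check.

```python
import importlib


def probe_optional_feature(module_name: str) -> bool:
    """Return True if `module_name` imports cleanly, False otherwise.

    Catches Exception rather than only ImportError on the assumption that
    a feature module may also fail with other errors (for example, when an
    op it tries to register does not exist in the installed library).
    """
    try:
        importlib.import_module(module_name)
    except Exception:
        return False
    return True


# Evaluated once at import time, like a module-level compatibility flag.
HAS_JSON = probe_optional_feature("json")
HAS_FAKE = probe_optional_feature("surely_not_an_installed_module_123")
print(HAS_JSON, HAS_FAKE)  # True False
```

Unlike a bare `except:`, `except Exception:` does not swallow `KeyboardInterrupt` or `SystemExit`, while still treating any ordinary failure as "feature unavailable".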

super-dainiu commented on Sep 6, 2022, on lines +106 to +107:

```python
for param in self.module.parameters():
    param.grad = None
```

super-dainiu (Contributor, Author): We need to clear the parameters' gradients, because these gradients are meta tensors with no real data.

super-dainiu commented on Sep 6, 2022, on a new file (hunk `@@ -0,0 +1,125 @@`):

```python
from typing import Callable, Any, Dict, Tuple
```

super-dainiu (Contributor, Author): This is the old one, so I did not modify anything except the output format.

super-dainiu (Contributor, Author): The old version, for PyTorch 1.11.

super-dainiu commented on Sep 6, 2022, on lines +8 to +30:

```python
if META_COMPATIBILITY:
    aten = torch.ops.aten

    WEIRD_OPS = [
        torch.where,
    ]

    INPLACE_ATEN = [
        aten.add_.Tensor,
        aten.add.Tensor,
        aten.sub_.Tensor,
        aten.div_.Tensor,
        aten.div_.Scalar,
        aten.mul_.Tensor,
        aten.mul.Tensor,
        aten.bernoulli_.float,

        # inplace reshaping
        aten.detach.default,
        aten.t.default,
        aten.transpose.int,
        aten.view.default,
        aten._unsafe_view.default,
```

super-dainiu (Contributor, Author): These lists are only defined when `META_COMPATIBILITY` is `True`.
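One plausible use of a list like `INPLACE_ATEN` (an assumption, not confirmed by this PR) is to avoid charging a fresh activation allocation to ops that mutate or re-view existing storage. The following is a minimal sketch of that bookkeeping over a hypothetical trace of `(op_name, output_bytes)` pairs. The op set and trace format are illustrative and are not the profiler's actual data structures.

```python
from typing import Iterable, Tuple

# Hypothetical op names mirroring a few in-place and reshaping entries above.
# Ops in this set are assumed to reuse or alias their input's storage,
# so they are not charged for a new output buffer.
ALIASING_OPS = {
    "add_.Tensor",
    "mul_.Tensor",
    "detach.default",
    "t.default",
    "transpose.int",
    "view.default",
}


def allocated_bytes(trace: Iterable[Tuple[str, int]]) -> int:
    """Sum output bytes over (op_name, output_bytes) pairs,
    skipping ops that only mutate or re-view existing storage."""
    return sum(nbytes for op, nbytes in trace if op not in ALIASING_OPS)


trace = [
    ("mm.default", 4096),    # new output buffer
    ("view.default", 4096),  # same storage, different shape
    ("relu.default", 4096),  # new output buffer
    ("add_.Tensor", 4096),   # writes into its first argument
]
print(allocated_bytes(trace))  # 8192
```

Without the exclusion set, the same trace would report 16384 bytes, double-counting memory that was never newly allocated.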

FrankLeeeee approved these changes on Sep 7, 2022.


What's new?

With `MetaTensor`, we can compute the FLOPs of any autograd procedure.
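Because meta tensors carry shapes, FLOPs can be derived from shapes alone. As a worked illustration (not the PR's counting code), the sketch below applies the common 2*M*K*N matmul convention (one multiply and one add per term) to the forward and backward passes of a bias-free linear layer. The function names are hypothetical.

```python
from typing import Tuple


def matmul_flops(a_shape: Tuple[int, int], b_shape: Tuple[int, int]) -> int:
    """FLOPs of (M x K) @ (K x N), counted as 2*M*K*N."""
    m, k = a_shape
    k2, n = b_shape
    assert k == k2, "inner dimensions must match"
    return 2 * m * k * n


def linear_fwd_bwd_flops(batch: int, in_features: int, out_features: int):
    """Forward and backward FLOPs of y = x @ W^T (bias ignored).

    Forward:  y      = x        @ W^T  -> (B x in)  @ (in x out)
    Backward: grad_x = grad_y   @ W    -> (B x out) @ (out x in)
              grad_W = grad_y^T @ x    -> (out x B) @ (B x in)
    """
    fwd = matmul_flops((batch, in_features), (in_features, out_features))
    bwd = (matmul_flops((batch, out_features), (out_features, in_features))
           + matmul_flops((out_features, batch), (batch, in_features)))
    return fwd, bwd


fwd, bwd = linear_fwd_bwd_flops(batch=8, in_features=16, out_features=4)
print(fwd, bwd)  # 1024 2048
```

The backward pass costs twice the forward here because it needs two matmuls of the same size, one for the input gradient and one for the weight gradient.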

Combined with `MetaInfoProp`, every node will have the following attributes, which will facilitate research on activation checkpointing (`act_ckpt`) by providing more specific information.

And the `MetaInfoProp` results are almost accurate.

TODO

I skipped the tests for the checkpoint solvers because the solvers still need to be updated to integrate the new features.

Concerns

This profiler is still not accurate enough.

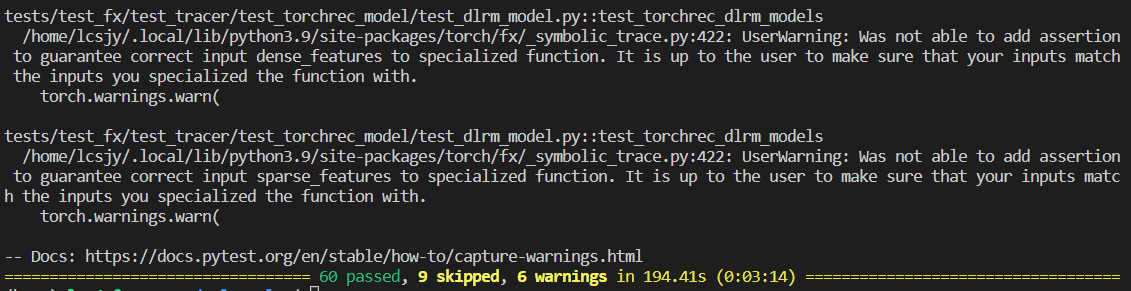

Tests

All tests passed with PyTorch 1.11 (CI) and PyTorch 1.12 (as below).