Integrated retry-handler to not having to rely on Polly for throttling handling as everyone will need it#17

Integrated retry-handler to not having to rely on Polly for throttling handling as everyone will need it#17jansenbe wants to merge 2 commits intomicrosoft:mainfrom

Conversation

… everyone will need

|

|

||

| namespace Microsoft.SemanticKernel.AI.OpenAI.Clients; | ||

|

|

||

| internal class RetryHandler: DelegatingHandler |

There was a problem hiding this comment.

Thoughts on making this something that implement IRetryMechanism and replaces dotnet\src\SemanticKernel\Reliability\PassThroughWithoutRetry.cs as the default mechanism? I worry about how this might conflict with what developers supply through SetRetryMechanism on KernelConfig.

There was a problem hiding this comment.

@lemillermicrosoft : wasn't aware of that, just got access to the code base yesterday and tried to workaround throttling in my prototype. I can have a look later to your proposed model and update if needed.

There was a problem hiding this comment.

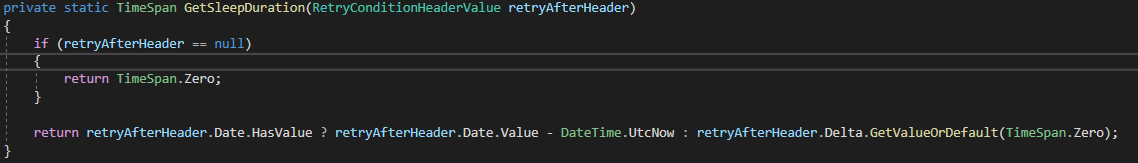

I do like how this solution is able to leverage the retry time from the response, I don't think that'd be possible currently without some extra work with IRetryMechanism. Thanks for contributing!

There was a problem hiding this comment.

@lemillermicrosoft : retrying without respecting the Retry-After header is just adding more load, so in my view using that header is a must have if you want to optimize throughput

There was a problem hiding this comment.

@lemillermicrosoft : did have a look at PassThroughWithoutRetry...but that's not really doing anything when it comes to throttling, it's simply eating some exceptions to not break the code. The code I wrote will be compatible, if an exception is thrown the current logic and settings will apply, the big benefit is that you'll not see exceptions due to 429's being returned

| // Default delay, in case the retry-after header data is corrupt | ||

| double delayInSeconds = 10; | ||

|

|

||

| if (response != null && response.Headers.TryGetValues(Retry_After, out IEnumerable<string> values)) |

There was a problem hiding this comment.

Consider using standard RetryAfter property of the HttpResponseHeaders class. E.g. response.Headers.RetryAfter

| { | ||

| // Can we use the provided retry-after header? | ||

| string retryAfter = values.First(); | ||

| if (int.TryParse(retryAfter, out int delaySeconds)) |

| internal class RetryHandler: DelegatingHandler | ||

| { | ||

| private const string Retry_After = "Retry-After"; | ||

| private const int MaxRetries = 10; |

There was a problem hiding this comment.

In the worst case, this handler will delay original call to a downstream service by 1.6 minute (10 retries * 10 seconds delay time) + 10 retries * each request latency. It's a lot and might be inacceptable for majority user facing/related operations where the overall delay should not exceed a few seconds. It's a usual practice to fail fast by doing a few retry attempts with a 500ms-1s delay between them and let the exception bubble up the call chain up to the point where there's enough information to make a decision about its handling - showing an error that some feature is temporary unavailable and ask retry later or degrade the experience until the service has recovered.

There was a problem hiding this comment.

@SergeyMenshykh : that makes sense for this world, the original code was used in the context of SharePoint

| if (retryCount >= MaxRetries) | ||

| { | ||

| throw new AIException(AIException.ErrorCodes.UnknownError, | ||

| $"Request reached it's max retry count of {retryCount}"); |

There was a problem hiding this comment.

We can return response here instead of throwing the exception. The response has more information (status code, headers, etc) than the exception which might be useful for the other potential handlers in the handlers chain if they need to act upon it. And it seems the OpenAI Service Client class can successfully handle it by checking if it’s successful or not and mapping one of the HTTP Status codes the handler handles to the AIException.

…oft#17 (microsoft#88) ### Motivation and Context Add ability to set Web App region in deployment - Fixes microsoft#17 ### Description This PR adds support to the `deploy-azure.ps1` and `deploy-azure.sh` files to pass through the region to deploy the Static Web App to. This will default to `westus2 and is set in the .PS1/SH files. This enables deploying all the resources to the same region, even though Static Web Apps is supported in only a few regions. The one region that all resource types are supported in is WestEurope. ### Contribution Checklist <!-- Before submitting this PR, please make sure: --> - [x] The code builds clean without any errors or warnings - [x] The PR follows the [Contribution Guidelines](https://github.com/microsoft/copilot-chat/blob/main/CONTRIBUTING.md) and the [pre-submission formatting script](https://github.com/microsoft/copilot-chat/blob/main/CONTRIBUTING.md#development-scripts) raises no violations - [x] All unit tests pass, and I have added new tests where possible - [x] I didn't break anyone 😄 Tests: `azure-deploy.ps1` <img width="845" alt="image" src="https://github.com/microsoft/chat-copilot/assets/7589164/dfc1412a-0d7c-4045-adc8-c07f0202adc2"> <img width="944" alt="image" src="https://github.com/microsoft/chat-copilot/assets/7589164/d6af1409-892a-44b4-a3c0-18783ae2170e"> `azure-deploy.sh` <img width="1110" alt="image" src="https://github.com/microsoft/chat-copilot/assets/7589164/4ef5abd8-6d33-4386-8254-b87af5f99aae"> <img width="969" alt="image" src="https://github.com/microsoft/chat-copilot/assets/7589164/5291a3dc-6dd3-4fc2-87ea-0da8f143cd37">

Motivation and Context

Trying to get some embeddings for a couple string already led into to throttling after a short timeframe, hence I've ported a retry-handler I use in other projects to have default retry logic in SK. Integrating with Polly for more complex scenarios makes sense, but feels wrong to make it mandatory for everyone to use Polly or external code to deal with retries, especially since our OpenAI backends throttle a lot.