-

Notifications

You must be signed in to change notification settings - Fork 4.6k

Integrated retry-handler to not having to rely on Polly for throttling handling as everyone will need it #17

New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

Changes from all commits

File filter

Filter by extension

Conversations

Jump to

Diff view

Diff view

There are no files selected for viewing

| Original file line number | Diff line number | Diff line change |

|---|---|---|

| @@ -0,0 +1,156 @@ | ||

| // Copyright (c) Microsoft. All rights reserved. | ||

|

|

||

| using System; | ||

| using System.Collections.Generic; | ||

| using System.IO; | ||

| using System.Linq; | ||

| using System.Net; | ||

| using System.Net.Http; | ||

| using System.Threading; | ||

| using System.Threading.Tasks; | ||

|

|

||

| namespace Microsoft.SemanticKernel.AI.OpenAI.Clients; | ||

|

|

||

| internal class RetryHandler: DelegatingHandler | ||

| { | ||

| private const string Retry_After = "Retry-After"; | ||

| private const int MaxRetries = 10; | ||

|

Member

There was a problem hiding this comment. Choose a reason for hiding this commentThe reason will be displayed to describe this comment to others. Learn more. In the worst case, this handler will delay original call to a downstream service by 1.6 minute (10 retries * 10 seconds delay time) + 10 retries * each request latency. It's a lot and might be inacceptable for majority user facing/related operations where the overall delay should not exceed a few seconds. It's a usual practice to fail fast by doing a few retry attempts with a 500ms-1s delay between them and let the exception bubble up the call chain up to the point where there's enough information to make a decision about its handling - showing an error that some feature is temporary unavailable and ask retry later or degrade the experience until the service has recovered.

Member

Author

There was a problem hiding this comment. Choose a reason for hiding this commentThe reason will be displayed to describe this comment to others. Learn more. @SergeyMenshykh : that makes sense for this world, the original code was used in the context of SharePoint |

||

|

|

||

| protected override async Task<HttpResponseMessage> SendAsync(HttpRequestMessage request, CancellationToken cancellationToken) | ||

| { | ||

| int retryCount = 0; | ||

|

|

||

| while (true) | ||

| { | ||

| // Throw an exception if we've requested to cancel the operation | ||

| cancellationToken.ThrowIfCancellationRequested(); | ||

|

|

||

| // Issue the request | ||

| HttpResponseMessage response = await base.SendAsync(request, cancellationToken); | ||

|

|

||

| // If the request does not require a retry then we're done | ||

| if (!ShouldRetry(response.StatusCode)) | ||

| { | ||

| return response; | ||

| } | ||

|

|

||

| // Drain response content to free connections. Need to perform this | ||

| // before retry attempt and before the TooManyRetries ServiceException. | ||

| if (response.Content != null) | ||

| { | ||

| #if NET5_0_OR_GREATER | ||

| await response.Content.ReadAsByteArrayAsync(cancellationToken).ConfigureAwait(false); | ||

| #else | ||

| await response.Content.ReadAsByteArrayAsync().ConfigureAwait(false); | ||

| #endif | ||

| } | ||

|

|

||

| // Safety measure to not keep retrying forever | ||

| if (retryCount >= MaxRetries) | ||

| { | ||

| throw new AIException(AIException.ErrorCodes.UnknownError, | ||

| $"Request reached it's max retry count of {retryCount}"); | ||

|

Member

There was a problem hiding this comment. Choose a reason for hiding this commentThe reason will be displayed to describe this comment to others. Learn more. We can return response here instead of throwing the exception. The response has more information (status code, headers, etc) than the exception which might be useful for the other potential handlers in the handlers chain if they need to act upon it. And it seems the OpenAI Service Client class can successfully handle it by checking if it’s successful or not and mapping one of the HTTP Status codes the handler handles to the AIException. |

||

| } | ||

|

|

||

| // Prepare Delay task configured with the delay time from response's Retry-After header | ||

| var waitTime = CalculateWaitTime(response); | ||

| Task delay = Task.Delay(waitTime, cancellationToken); | ||

|

|

||

| // Clone request with CloneAsync before retrying | ||

| // Do not dispose this request as that breaks the request cloning | ||

| #pragma warning disable CA2000 | ||

| request = await CloneAsync(request); | ||

| #pragma warning restore CA2000 | ||

|

|

||

| // Increase retryCount | ||

| retryCount++; | ||

|

|

||

| // Delay time based upon Retry-After header | ||

| await delay; | ||

| } | ||

| } | ||

|

|

||

| private static TimeSpan CalculateWaitTime(HttpResponseMessage response) | ||

| { | ||

| // Default delay, in case the retry-after header data is corrupt | ||

| double delayInSeconds = 10; | ||

|

|

||

| if (response != null && response.Headers.TryGetValues(Retry_After, out IEnumerable<string> values)) | ||

|

Member

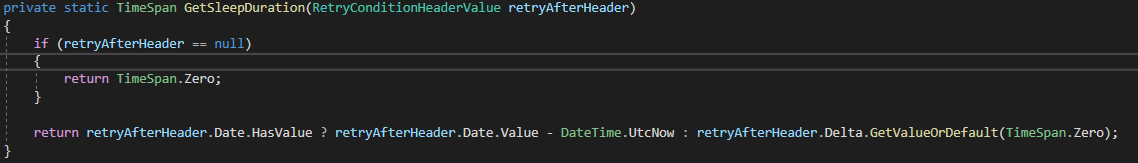

There was a problem hiding this comment. Choose a reason for hiding this commentThe reason will be displayed to describe this comment to others. Learn more. Consider using standard RetryAfter property of the HttpResponseHeaders class. E.g. response.Headers.RetryAfter |

||

| { | ||

| // Can we use the provided retry-after header? | ||

| string retryAfter = values.First(); | ||

| if (int.TryParse(retryAfter, out int delaySeconds)) | ||

|

Member

There was a problem hiding this comment. Choose a reason for hiding this commentThe reason will be displayed to describe this comment to others. Learn more. |

||

| { | ||

| delayInSeconds = delaySeconds; | ||

| } | ||

| } | ||

|

|

||

| return TimeSpan.FromSeconds(delayInSeconds); | ||

| } | ||

|

|

||

| internal static bool ShouldRetry(HttpStatusCode statusCode) | ||

| { | ||

| return (statusCode == HttpStatusCode.ServiceUnavailable || | ||

| statusCode == HttpStatusCode.GatewayTimeout || | ||

| statusCode == (HttpStatusCode)429); | ||

| } | ||

|

|

||

| /// <summary> | ||

| /// Create a new HTTP request by copying previous HTTP request's headers and properties from response's request message. | ||

| /// Copied from: https://github.com/microsoftgraph/msgraph-sdk-dotnet-core/blob/dev/src/Microsoft.Graph.Core/Extensions/HttpRequestMessageExtensions.cs | ||

| /// </summary> | ||

| /// <param name="originalRequest">The previous <see cref="HttpRequestMessage"/> needs to be copy.</param> | ||

| /// <returns>The <see cref="HttpRequestMessage"/>.</returns> | ||

| /// <remarks> | ||

| /// Re-issue a new HTTP request with the previous request's headers and properities | ||

| /// </remarks> | ||

| internal static async Task<HttpRequestMessage> CloneAsync(HttpRequestMessage originalRequest) | ||

| { | ||

| var newRequest = new HttpRequestMessage(originalRequest.Method, originalRequest.RequestUri); | ||

|

|

||

| // Copy request headers. | ||

| foreach (var header in originalRequest.Headers) | ||

| { | ||

| newRequest.Headers.TryAddWithoutValidation(header.Key, header.Value); | ||

| } | ||

|

|

||

| // Copy request properties. | ||

| #if NET5_0_OR_GREATER | ||

| #pragma warning disable CS0618 // Type or member is obsolete | ||

| #endif | ||

| foreach (var property in originalRequest.Properties) | ||

| { | ||

| newRequest.Properties.Add(property); | ||

| } | ||

| #if NET5_0_OR_GREATER | ||

| #pragma warning restore CS0618 // Type or member is obsolete | ||

| #endif | ||

|

|

||

| // Set Content if previous request had one. | ||

| if (originalRequest.Content != null) | ||

| { | ||

| // HttpClient doesn't rewind streams and we have to explicitly do so. | ||

| await originalRequest.Content.ReadAsStreamAsync().ContinueWith(t => | ||

| { | ||

| if (t.Result.CanSeek) | ||

| { | ||

| t.Result.Seek(0, SeekOrigin.Begin); | ||

| } | ||

|

|

||

| newRequest.Content = new StreamContent(t.Result); | ||

| }).ConfigureAwait(false); | ||

|

|

||

| // Copy content headers. | ||

| if (originalRequest.Content.Headers != null) | ||

| { | ||

| foreach (var contentHeader in originalRequest.Content.Headers) | ||

| { | ||

| newRequest.Content.Headers.TryAddWithoutValidation(contentHeader.Key, contentHeader.Value); | ||

| } | ||

| } | ||

| } | ||

|

|

||

| return newRequest; | ||

| } | ||

|

|

||

| } | ||

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Thoughts on making this something that implement IRetryMechanism and replaces dotnet\src\SemanticKernel\Reliability\PassThroughWithoutRetry.cs as the default mechanism? I worry about how this might conflict with what developers supply through

SetRetryMechanismon KernelConfig.There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

@lemillermicrosoft : wasn't aware of that, just got access to the code base yesterday and tried to workaround throttling in my prototype. I can have a look later to your proposed model and update if needed.

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

I do like how this solution is able to leverage the retry time from the response, I don't think that'd be possible currently without some extra work with IRetryMechanism. Thanks for contributing!

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

@lemillermicrosoft : retrying without respecting the Retry-After header is just adding more load, so in my view using that header is a must have if you want to optimize throughput

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

@lemillermicrosoft : did have a look at

PassThroughWithoutRetry...but that's not really doing anything when it comes to throttling, it's simply eating some exceptions to not break the code. The code I wrote will be compatible, if an exception is thrown the current logic and settings will apply, the big benefit is that you'll not see exceptions due to 429's being returned