update gateway to add ability to run and exec into containers#1627

func addDefaultEnvvar(env []string, k, v string) []string {

This was copied from ops/exec.go. We can move it to a common util package if desired; I was not sure where to put it.
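For readers without the diff open, here is a minimal sketch of what a helper with this signature might do. Only the signature appears in the thread; the body below is an assumption (append `k=v` only when `k` is not already set), not a copy of the code in ops/exec.go.

```go
package main

import (
	"fmt"
	"strings"
)

// addDefaultEnvvar appends k=v to env only when no entry for k exists yet.
// Assumption: the body is an illustrative guess; the thread shows only the signature.
func addDefaultEnvvar(env []string, k, v string) []string {
	for _, e := range env {
		if strings.HasPrefix(e, k+"=") {
			return env // the caller already set k; keep their value
		}
	}
	return append(env, k+"="+v)
}

func main() {
	env := []string{"HOME=/root"}
	env = addDefaultEnvvar(env, "PATH", "/usr/bin:/bin") // added: PATH was missing
	env = addDefaultEnvvar(env, "HOME", "/ignored")      // skipped: HOME already set
	fmt.Println(env)
}
```

A helper like this has no dependency on the rest of the package, so it would be easy to move into a shared util package.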

The cross compile failed 3 times with this error: … I am not sure what could be causing it.

tonistiigi left a comment

Could we get some tests for this please 🤞

For the CI error, I think it is a token expiring, which started showing up after #1622. I switched CI to v0.7.2, which should unblock this (but introduces another issue). I'll try to get a proper fix in soon, but it requires a custom containerd authorizer implementation.

hinshun left a comment

Found a few potential race conditions. I may find more, but I am submitting this for now.

@tonistiigi I am not sure how to create tests for this one. I could not find any unit tests for the gateway, and I think the integration tests (i.e. ./client/build_test.go) will require modifications to the…

bb7adb8 to 5962481

f6744d5 to 1732086

After reading Tonis' comment here, I realized I have the starting message out of order. I kept thinking the started channel was meant to indicate that IO is ready to receive, but it really means the container is ready to…

@coryb Sorry, I got confused as well. We do not need a started channel to signal whether the container is ready for exec; we know that from the…

I see, yeah. There is still an unlikely race with my code, since the server returns the StartedMessage in parallel with executor.Run. So if the client receives the StartedMessage and then immediately sends another InitMessage (which should call executor.Exec), the second InitMessage might result in "container not found" because pid1 might not have updated the…

57d512b to b90aaee

Converted to Draft until I can get the client side of this integrated, as per the discussion in #749.

ef6b41a to 14ffe33

3448537 to df7d944

Sorry for the delay; life has not gone to plan for a few weeks now. I have reset the PR to include the client code and added some tests. Here is a gist for a sample main: …

Some issues came up that I am not sure how to deal with. In the sample main, the status…

Another odd issue I am not sure how to deal with is that the integration tests fail under…

// ExitError will be returned from Run and Exec when the container process exits with
// a non-zero exit code.
type ExitError struct {

Moved ExitError to the errdefs package to avoid circular package dependencies.

Signed-off-by: Cory Bennett <cbennett@netflix.com>

df7d944 to 355e937

hinshun left a comment

I reviewed this a few times before; only a small nit for now.

lbf.ctrsMu.Lock()
// ensure we are not clobbering a dup container id request
if _, ok := lbf.ctrs[in.ContainerID]; ok {

Should we check this in the beginning of this function instead?

I think to move it up we would have to put most of the function under the mutex lock. It is isolated at the bottom, after the container object has been created, so that we can check for duplication at the same time we do the assignment, and to minimize the time and code run under the lock. If we move the dup check up, we would either have to check again inside the lock anyway (since concurrency is allowed) or lock before we create the container, which could take time when creating volumes via the cache manager.

I have tweaked the code a bit to tighten up the release and unlock handling. Does that look better?
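The pattern described above (slow setup outside the lock, then a combined duplicate check and assignment under the lock) can be sketched as follows. The `forwarder` and `container` types and the `release` method are hypothetical stand-ins, not the gateway's real types.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
)

// container is a hypothetical stand-in for the gateway's container object.
type container struct{ id string }

// release stands in for freeing mounts and other resources.
func (c *container) release() {}

type forwarder struct {
	ctrsMu sync.Mutex
	ctrs   map[string]*container
}

// newContainer does the slow setup without holding the lock, then checks for
// a duplicate ID and registers the container in a single critical section.
func (f *forwarder) newContainer(id string) (*container, error) {
	ctr := &container{id: id} // potentially slow in real code; no lock held

	f.ctrsMu.Lock()
	_, dup := f.ctrs[id]
	if !dup {
		f.ctrs[id] = ctr
	}
	f.ctrsMu.Unlock()

	if dup {
		ctr.release() // lost the race: discard our copy outside the lock
		return nil, fmt.Errorf("container %q already exists", id)
	}
	return ctr, nil
}

func main() {
	f := &forwarder{ctrs: map[string]*container{}}

	var wg sync.WaitGroup
	var created int32
	for i := 0; i < 8; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			if _, err := f.newContainer("ctr1"); err == nil {
				atomic.AddInt32(&created, 1)
			}
		}()
	}
	wg.Wait()
	fmt.Println("successful registrations:", atomic.LoadInt32(&created))
}
```

Checking earlier in the function would still need this second check, because another request with the same ID can register while the slow setup is running.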

c4223b4 to 8df17c8

@AkihiroSuda any ideas?

I haven't reviewed this yet, but one thing I noticed is that there is no new API cap. The client side should also detect a missing cap. I assume integration to…

func testClientGatewayContainerPID1Exit(t *testing.T, sb integration.Sandbox) {
	if sb.Rootless() {
		// TODO fix this
		// We get `panic: cannot statfs cgroup root` when running this test

Could you open an issue in the runc repo?


Why does it appear in exec and not in regular builds?


Created issue, with stacktrace from runc: opencontainers/runc#2573


/sys/fs/cgroup isn't mounted?


It looks like the /sys mounts are trimmed out here

It appears to work if we bind mount in /sys, but I am not sure if this is the right thing to do here. After diffing the config.json from runc spec and runc spec --rootless, I added this to the ToRootless function and it worked for my tests, but I am not sure if there are other complications:

spec.Mounts = append(spec.Mounts, specs.Mount{
	Destination: "/sys",
	Type:        "none",
	Source:      "/sys",
	Options:     []string{"rbind", "nosuid", "noexec", "nodev", "ro"},
})

Does this seem like the right solution?


Sorry I didn't notice this comment.

{"rbind", "ro"} doesn't make submounts recursively read-only and can result in container breakout.

So we should mount /sys and /sys/fs/cgroup separately as {"bind", "ro"}.

In the long term, we should fix opencontainers/runc#2573
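The suggestion above, one non-recursive read-only bind mount per path instead of a single `rbind`, could look roughly like the sketch below. `Mount` is a local stand-in that copies the field names of `specs.Mount` so the example needs no external imports, and `roBindMounts` is a hypothetical helper. Whether this is the right fix for rootless mode is left open by the thread.

```go
package main

import "fmt"

// Mount is a local stand-in with the same field names as specs.Mount from the
// OCI runtime-spec Go package (assumption: used here to avoid the import).
type Mount struct {
	Destination string
	Type        string
	Source      string
	Options     []string
}

// roBindMounts returns one non-recursive, read-only bind mount per path, so
// each submount is listed explicitly instead of relying on "rbind".
func roBindMounts(paths ...string) []Mount {
	mounts := make([]Mount, 0, len(paths))
	for _, p := range paths {
		mounts = append(mounts, Mount{
			Destination: p,
			Type:        "none",
			Source:      p,
			Options:     []string{"bind", "ro"},
		})
	}
	return mounts
}

func main() {
	// Parent first, then the submount that runc needs to stat.
	for _, m := range roBindMounts("/sys", "/sys/fs/cgroup") {
		fmt.Printf("%s %v\n", m.Destination, m.Options)
	}
}
```

Listing the parent before the submount keeps the order in which the mounts would need to be applied.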


Okay, I have updated with this: 4b51fbd

It seems to work; hopefully it does not cause other issues.

This change ended up causing several other integration tests to fail with oci-rootless, such as TestIntegration/TestDefaultEnvWithArgs, so I am going to revert it. I am leaning towards leaving my "skip rootless" hack in place for this PR and then investigating and fixing the rootless + /sys issue later.

…d.Solve Signed-off-by: Cory Bennett <cbennett@netflix.com>

c09d63d to 03e1c19

…be per process Signed-off-by: Cory Bennett <cbennett@netflix.com>

349a0fd to 095a919

5845b3c to d1a14ae

Sorry for the delays; I was out on a much-needed vacation for almost 2 weeks. I think I have most of the items fixed. 2 open issues:

Sorry I didn't notice your question; please take a look at #1627 (comment).

4b51fbd to d1a14ae

I think this PR is ready now. There are several follow-up issues that I will create to track:

},
exit.Error,
)
if exit.Code == containerd.UnknownExitStatus {

Don't quite understand this special case handling. Why isn't this just

err = grpcerrors.FromGRPC(status.ErrorProto(exit.Error))
if exit.Code != containerd.UnknownExitStatus {
	err = &errdefs.ExitError{ExitCode: exit.Code, Err: err}
}


Yeah, that makes more sense. exit.Error is an *rpc.Status so has to be converted to *spb.Status first for status.ErrorProto, unless I am missing some other helper func that already does this? Anyway, updated per your outline here: 398593b

@coryb I started to try to use this and discovered the signal part was left unimplemented. That unfortunately makes it quite unusable for anything interactive (and means we still need to wait for another buildkit release for everyone 😢). Do you have plans to finish it?

Hey @tonistiigi, I will try to look into it next week. We have been using the gateway exec functionality fairly extensively for debugging failing builds. Not having signals has caused a few annoyances, but generally it has been very usable for us. The interactive terminal allows things like ctrl-c to go through, so I am just curious where the missing signals are causing problems for you.

Hmm. It did not work for me. |

@tonistiigi I am not sure what is wrong. I have a crude client main that will exec into a container and run bash with an interactive tty here: https://gist.github.com/coryb/8d733b7f1f2398a7b85a640d1f38fdc0 If you…

@coryb That indeed seems to work in most cases. Need to test more.

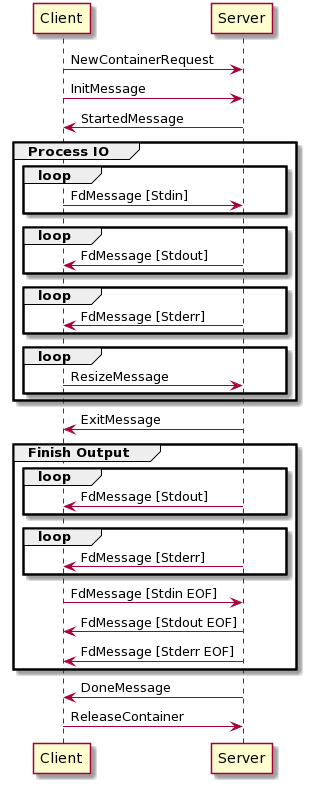

More updates for #749: this PR adds the gateway client and server implementation with proto updates.

Here is a sequence diagram for the gRPC message flow: