Add graceful shutdown timeout for Jetty#5429

Conversation

|

Failed from #2373 |

|

failing from |

|

@drcrallen With PR #4716 when I deployed it in production, I still saw channel disconnect issue. This was some time ago though, so I don't remember the issue. Let me re-deploy 4716 in production and confirm if I no longer see that issue. |

| server.setHandler(new StatisticsHandler()); | ||

| server.setConnectors(connectors); | ||

| server.setAttribute(GRACEFUL_SHUTDOWN_TIMEOUT, config.getGracefulShutdownTimeout().toStandardDuration().getMillis()); | ||

|

|

There was a problem hiding this comment.

Why doesn't this call server.setStopTimeout and or server.setStopAtShutdown which use/rely on the StatisticsHandler internally to cause graceful shutdown if set? As far as I can tell, using them and calling server.stop will have the more desirable effect of also no longer accepting new connections, which this approach seems like it will lack unless I am missing something, likely reducing the number of connections will have to be forcibly closed after the grace period is up.

further reading:

- https://github.com/eclipse/jetty.project/blob/21365234f8cf036829f7a03e5c7b89a9572444f9/jetty-server/src/main/java/org/eclipse/jetty/server/handler/StatisticsHandler.java#L204

- https://github.com/eclipse/jetty.project/blob/21365234f8cf036829f7a03e5c7b89a9572444f9/jetty-server/src/main/java/org/eclipse/jetty/server/handler/StatisticsHandler.java#L261

- https://github.com/eclipse/jetty.project/blob/385ba7d70ded912ca702ebbba2694dbcbac61256/jetty-server/src/test/java/org/eclipse/jetty/server/GracefulStopTest.java

There was a problem hiding this comment.

setStopTimeout sounds good, but setStopAtShutdown registers a shutdown hook, which should already be taken care of by the lifecycle. Shutdown hooks do not preserve any sort of order, and we want to unannounce before we shutdown jetty

|

@clintropolis I put in the changes to do the native shutdown stuff. Now the logs look something like this: on shutdown. The jetty shut down happens after unannouncing, but it probably needs to wait a short time before simply rejecting connections or else folks will get rejected connection exceptions. |

|

@drcrallen I think you are right, a delay before graceful jetty shutdown makes sense and would be more graceful than rejecting outright. That way we can handle incoming stuff for a reasonable period where we might be perceived to be announced (I'm not sure offhand what this value is), and then finish as many of those requests as possible. I guess long enough delay after unannouncing sort of does negate the need for jetty to gracefully shutdown... and we've gone full circle 😜 |

|

@clintropolis the timeframe between "unnanounce" and "reject any more connections" should be on the order of the propagation time of the unannouncement I think I'll just add a config option for setting this extra delay, with a comment that it is additive to the graceful shutdown and is related to how fast nodes can act on a zookeeper unannouncement. |

|

@clintropolis / @niketh can you check and see if the latest changes make sense to you? |

clintropolis

left a comment

clintropolis

left a comment

There was a problem hiding this comment.

Oops, sorry for the delay, LGTM 🤘

|

I'm a bit stuck here, I can't run integration tests locally on docker-for-osx, and the failures are pretty much undiagnosable |

|

Ok, I'm trying allowing it completely as an opt-in thing to see if it passes integration tests now. |

|

Looks like it has a problem in integration tests. |

|

@drcrallen what issue do you have when running the tests locally? I think @jihoonson had run into issues too but solved them somehow; maybe you have run into the same issue and he could help? |

|

@gianm / @jihoonson I'm on docker for mac and can't seem to find the magic |

|

here's the net info on the "druid-overlord" container: |

|

After some more digging I found https://serverfault.com/questions/870568/fatal-error-cant-open-and-lock-privilege-tables-table-storage-engine-for-use which was causing mysql containers to fail. I added |

|

yay! I'm getting the same errors as integration tests now @jihoonson looks like using |

|

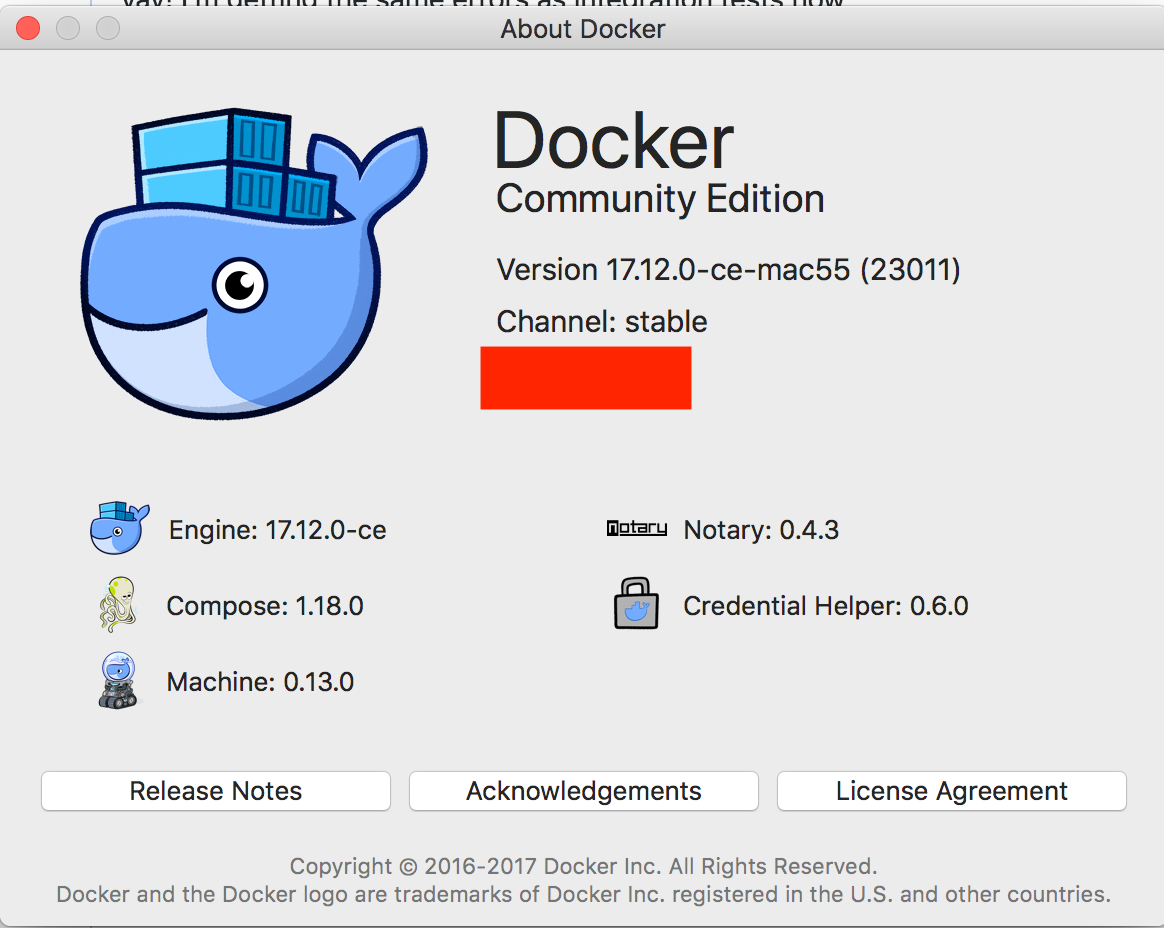

@drcrallen hmm that's odd. I'm using |

|

Underlying problem was |

|

@drcrallen Hm, I'm using overlay2 storage driver on my mac as well, haven't run into issues with running the integration tests locally: |

|

@drcrallen I'm using the same version of docker. |

|

@jihoonson it works with I didn't bother trying docker-machine commands |

|

All right. It's good if it works for you. Just wondering what steps you took before meeting the above error. |

|

I didn't do anything special, had the overlayfs enabled, then set the docker ip and ran the integration tests. But the thing would not want to finish. I checked out the containers and noticed that mysql was not running in its container. Looking in the logs I saw the error. It might be a red herring, though. So I wouldn't spend too much time on it unless others report a similar problem with integration tests. |

|

@jihoonson would you be able to review this? |

|

@drcrallen sure. I'll review soon. |

| @Override | ||

| public void lifeCycleFailure(LifeCycle event, Throwable cause) | ||

| { | ||

| log.warn(cause, "Jetty lifecycle event failed [%s]", event.getClass()); |

There was a problem hiding this comment.

We can start at error and see what happens, I'm good for that.

| } | ||

| catch (InterruptedException e) { | ||

| Thread.currentThread().interrupt(); | ||

| throw new RE(e, "Interrupted waiting for jetty shutdown."); |

There was a problem hiding this comment.

So if an InterrupedException occurs which means this thread wakes up before unannounceDelay, server won't be stopped. Is this desirable? Maybe better to stop server anyway?

There was a problem hiding this comment.

I don't think that is desired here. The reason that stop() would be called is because the lifecycle is shutting down, aka there is already an interrupt requested.

If there's ANOTHER interrupt coming in, I would think it essentially means "You are going to get a kill -9 if you don't exit right now" in which case continuing on with the lifecycle shutdowns in a best effort stopping seems like the thing to do

|

@jihoonson any other comments? |

|

A few days have passed and no other comments |

One of these is a configuration parameter (introduced in apache#5429), but it's never been in a release, so I think it's ok to rename it.

One of these is a configuration parameter (introduced in #5429), but it's never been in a release, so I think it's ok to rename it.

* Add graceful shutdown timeout * Handle interruptedException * Incorporate code review comments * Address code review comments * Poll for activeConnections to be zero * Use statistics handler to get active requests * Use native jetty shutdown gracefully * Move log line back to where it was * Add unannounce wait time * Make the default retain prior behavior * Update docs with new config defaults * Make duration handling on jetty shutdown more consistent * StatisticsHandler is a wrapper * Move jetty lifecycle error logging to error

#4716 fixed against master

I'm hoping this can go in because it would be super handy.

Adds

druid.server.http.gracefulShutdownTimeoutanddruid.server.http.unannouncePropogationDelaywhich are described in the docs.The

druid.server.http.unannouncePropogationDelayis defaulting to 0 to facilitate not delaying unit tests that may start a Jetty server, and to make the default behavior match legacy behavior of not waiting.