KAFKA-14324: Upgrade RocksDB to 7.1.2#12809

Conversation

|

Hello @ableegoldman! I am tagging you since I saw you are the release manager for 3.4.0 and this addresses https://issues.apache.org/jira/browse/KAFKA-14324 which is listed as a blocker for the release. |

|

@clolov Thank you for the PR! That seems a tricky one. Actually, we cannot simply remove methods from that class. It is true that they are not used within our code base. However, the options are directly exposed to the users as public APIs via What alternatives do we have?

What else? \cc @ableegoldman |

|

Hello @cadonna! In my opinion 1 is a clean solution and it is probably something we can contribute ourselves - I can try doing it in the upcoming days. I think 2 is similar to 3 in breaking backwards compatibility (we still change the behaviour of at least |

|

|

|

I also like option 1 best. I also agree that option 2 somehow breaks backward compatibility but at least it does not break compilation of user code. We could move to RocksDB 7.1.2 or higher in AK 4.0.0. We could deprecate the methods for 3.4.0. Usually we use longer deprecation periods. |

|

@clolov, to get option 1 rolling I think you could open an issue at https://github.com/facebook/rocksdb/issues and ask if it is possible to get a patch release of 6.29 with the zlib CVE fixed. Please also explain that for Kafka Streams it is hard to move to 7.1.2 due to backwards compatibility issues. Let me know if you need any help from our side for this. |

|

@cadonna, I wrote earlier today to https://groups.google.com/g/rocksdb/c/DWsH8Yda5gc. I will wait for a day and if there isn't a response I will also open an issue as you suggest :) |

|

Hey @cadonna, Adam Retter has been very helpful in responding to my mail, so I have updated the pull request with his suggestion. I believe this is option 2, but the reasoning behind it makes sense to me. Let me know if you disagree :) |

ableegoldman

left a comment

ableegoldman

left a comment

There was a problem hiding this comment.

Hey @clolov sorry I'm a bit late to the party here, but the current approach seems like the best we can do for now

However, the PR isn't complete because there seem to be new APIs introduced in this version for which we will need to add corresponding "Conversion" methods in the adapter class. This is why the RocksDBGenericOptionsToDbOptionsColumnFamilyOptionsAdapterTest.shouldForwardAllColumnFamilyCalls test is failing -- it uses reflection to make sure we cover all the Option-related APIs. You can use that test to figure out what's missing, might be easier than trying to gather it all from the rocksdb docs/release notes

| @Override | ||

| public void setBaseBackgroundCompactions(final int baseBackgroundCompactions) { | ||

| dbOptions.setBaseBackgroundCompactions(baseBackgroundCompactions); | ||

| // no-op |

There was a problem hiding this comment.

we should still log a warning imo (and maybe point users to the new way of doing this?)

Ditto all the others like this

|

Thank you for the review! All of these are valid suggestions, I will aim to get them done today. |

|

Hopefully the newest two commits address your comments @ableegoldman, if not just let me know :) |

| public boolean newTableReaderForCompactionInputs() { | ||

| return dbOptions.newTableReaderForCompactionInputs(); | ||

| String message = "This method has been removed from the underlying RocksDB. " + | ||

| "It is now a method which always return false."; |

There was a problem hiding this comment.

| "It is now a method which always return false."; | |

| "It is now a method which always returns false."; |

There was a problem hiding this comment.

Ah, apologies, I will get into the habit of running all of these before I upload new commits.

|

@clolov, could you please rebase to recent trunk since on trunk there is a fix for the flaky |

|

@cadonna I suspect the automatic merge from GitHub will fix things, but if not I can rebase. |

|

Yeah, you are probably right! I forgot about it! Sorry! |

ableegoldman

left a comment

ableegoldman

left a comment

There was a problem hiding this comment.

Nice, LGTM -- test failures are unrelated. Let's merge this

|

@ableegoldman Are you planning to backport this PR to 3.3, 3.2, 3.1, and maybe 3.0? Backporting to 3.3 and 3.2 should be easy since they use the same RocksDB version as trunk. If you did not plan for it, I can also do it. Just let me know. |

|

Oh I just missed that -- if you wouldn't mind backporting it yourself I would appreciate it, thanks! |

Reviewers: Bruno Cadonna <cadonna@confluent.io>, Anna Sophie Blee-Goldman <ableegoldman@apache.org>

Reviewers: Bruno Cadonna <cadonna@confluent.io>, Anna Sophie Blee-Goldman <ableegoldman@apache.org>

Reviewers: Bruno Cadonna <cadonna@confluent.io>, Anna Sophie Blee-Goldman <ableegoldman@apache.org>

Reviewers: Bruno Cadonna <cadonna@confluent.io>, Anna Sophie Blee-Goldman <ableegoldman@apache.org>

|

Backported to 3.3, 3.2, 3.1, and 3.0. For 3.1 and 3.0, I also needed to backport #11690. |

This reverts commit 876c338.

|

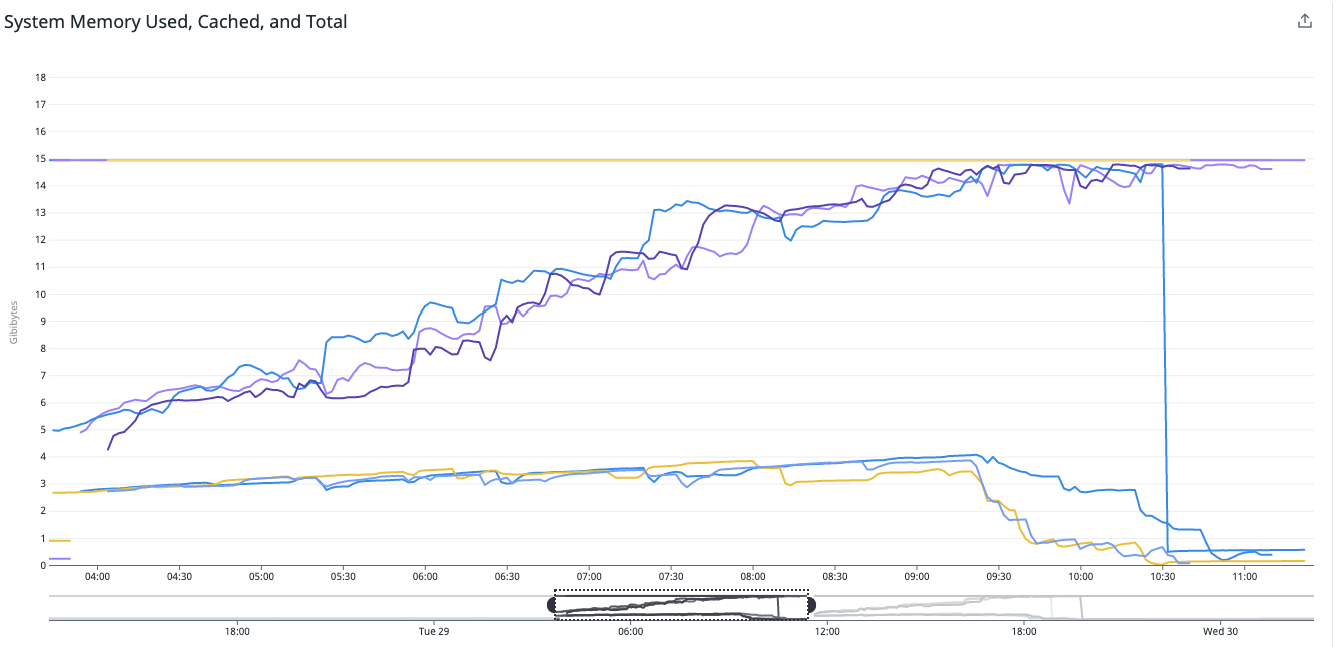

I think the new version of RocksDB, or the way it was used in this PR, is leaking memory. To the point that with this change, a stream thread will only survive a few hours without running out of memory. I don't have a native heap profile yet, but this is the heap consumption in the first 8 hours of running trunk: This is the heap consumption of trunk after reverting this change ( lucaasbru/kafka branch revert ): Can we revert this change in trunk until we have resolved this issue? @cadonna @clolov @ableegoldman The leak is in native memory. |

|

Possibly related: |

|

@lucasbru well we shouldn't be, because if we happen to be relying on finalizers then that means we have a memory leak in the Streams code itself...which is definitely possible. Actually one of the first things I worked on in Kafka was fixing a handful of memory leaks in Streams. After a particularly severe one was detected I remember we ran some kind of check for usage of objects implementing Unfortunately I don't remember how/what we did off the top of my head, but I'm sure you can just google for it |

Reviewers: Bruno Cadonna <cadonna@confluent.io>, Anna Sophie Blee-Goldman <ableegoldman@apache.org>

|

Is there any fix for this memory leak? How can we reproduce this memory leak for testing? Some of our environment is facing a memory leak but we cannot reproduce it, as we use org.rocksdb:rocksdbjni 7.1.2, I suppose this is the root cause. |

|

@vepo The memory leaks are fixed in the following PRs: Without those fixes we would have reverted this PR. There should not be any memory leaks caused by Kafka Streams anymore. If there are any, please open a ticket and/or provide a fix. A popular root cause of memory leaks is not closing iterators or other RocksDB objects. |

I upgraded RocksDB from 6.29.4.1 to 7.1.2. In the process some methods in the Kafka code base could no longer be found. I inspected where they are used and they were not used anywhere so I deleted them.