Revise PodAutoscaler's life cycle#4094

Conversation

knative-prow-robot

left a comment

knative-prow-robot

left a comment

There was a problem hiding this comment.

@hohaichi: 0 warnings.

Details

In response to this:

…eck container health

Fixes #3456

Proposed Changes

- Introduce a Requirements condition in PodAutoscaler. This condition is to capture all preconditions for a PodAutoscaler to become Ready, besides the Active condition.

- Use this Requirements condition to fix the crashlooping problem by having heathy containers as one requirement.

Release Note

Instructions for interacting with me using PR comments are available here. If you have questions or suggestions related to my behavior, please file an issue against the kubernetes/test-infra repository.

|

/hold |

| } | ||

|

|

||

| func (c *Reconciler) verifyContainer(pa *pav1alpha1.PodAutoscaler) (*apis.Condition, error) { | ||

| rev, err := c.revLister.Revisions(pa.Namespace).Get(pa.Name) |

There was a problem hiding this comment.

I don't like the layering violation that's here. Revision creates the PodAutoscaler abstraction, so this is a back-edge in the layering graph, which I've been trying to eliminate. :(

|

@mattmoor I've prototyped a fix based on our discussion. Could you please take a look? If the change is good, I'll add tests. |

|

/assign @jonjohnsonjr |

vagababov

left a comment

vagababov

left a comment

There was a problem hiding this comment.

Some superficial items, I need to read this in more detail

|

@mattmoor @vagababov @jonjohnsonjr |

|

/hold |

|

[APPROVALNOTIFIER] This PR is NOT APPROVED This pull-request has been approved by: hohaichi The full list of commands accepted by this bot can be found here. DetailsNeeds approval from an approver in each of these files:Approvers can indicate their approval by writing |

|

/hold cancel |

| // MarkResourceFailedCreation changes the "Active" condition to false to reflect that a | ||

| // critical resource of the given kind and name was unable to be created. | ||

| func (pas *PodAutoscalerStatus) MarkResourceFailedCreation(kind, name string) { | ||

| // TODO: This looks more like a bootstrap condition? |

There was a problem hiding this comment.

This is only used here when we fail to create a HorizontalPodAutoscaler.

Looking at other resources, what happens if we fail to create an owned resource?

Just return an error:

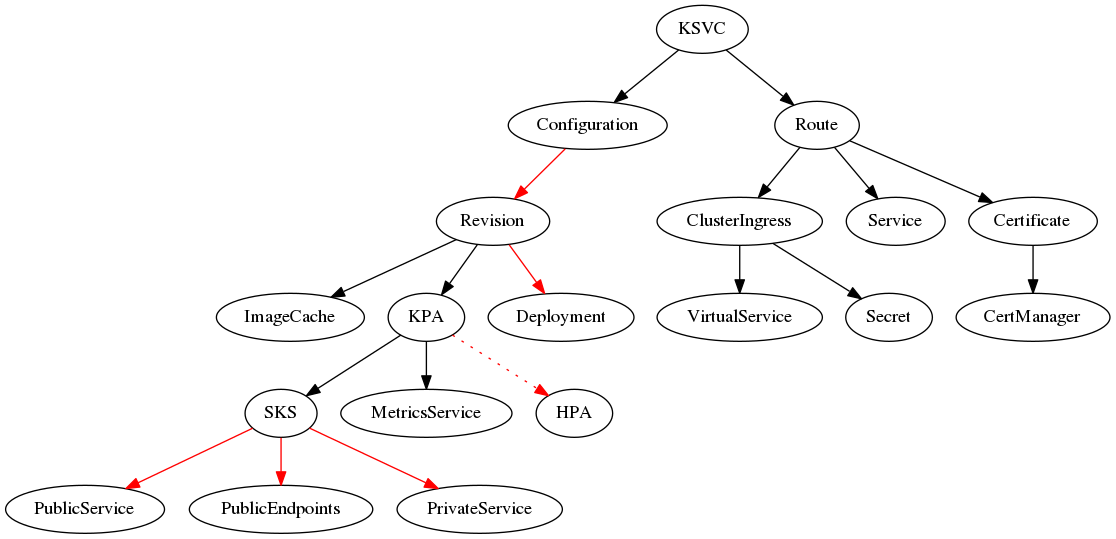

- Service -> Configuration

- Service -> Route

- Revision -> ImageCache

- Revision -> KPA

- KPA -> SKS

- KPA -> Metrics Service

- Route -> ClusterIngress

- Route -> Service

- Route -> Certificate

- Certificate -> CertManager

- ClusterIngress -> VirtualService

- ClusterIngress -> Secret

Outliers:

- Configuration -> Revision calls

MarkRevisionCreationFailedto set Ready=False. - Revision -> Deployment calls

MarkDeployingto set ResourcesAvailable=Unknown and ContainerHealthy=Unknown. - SKS -> Public Service calls

MarkEndpointsNotReadyto set EndpointsPopulated=Unknown. - SKS -> Public Endpoints calls

MarkEndpointsNotReadyto set EndpointsPopulated=Unknown. - SKS -> Private Service calls

MarkEndpointsNotReadyto set EndpointsPopulated=Unknown. - KPA -> HPA calls

MarkResourceFailedCreationto set Active=False (this one).

It seems like we should consider this entire dependency graph when we think about the lifecycle of any single resource (and how failures to reconcile get propagated)...

In this case, I think I'd just return an error and leave Ready=Unknown. This might be an issue, though, since the HPA will still be Active, but it definitely shouldn't be... should we just remove that line? I haven't entirely though through that...

There was a problem hiding this comment.

I turned the previous comment into a graph because I wanted to visualize which resources actually update their conditions based on failure to create a child resource (in red):

It seems like we should be more consistent here... cc @mattmoor

| // MarkResourceNotOwned changes the "Active" condition to false to reflect that the | ||

| // resource of the given kind and name has already been created, and we do not own it. | ||

| func (pas *PodAutoscalerStatus) MarkResourceNotOwned(kind, name string) { | ||

| // TODO: This looks more like a bootstrap condition? |

There was a problem hiding this comment.

Seems like it should set Ready=False, if we're being consistent with other reconcilers.

…ions rather active conditions.

|

Updated per our discussion yesterday--making resource-not-owned and resource-failed-creation readiness conditions. |

|

The following is the coverage report on pkg/.

|

|

@hohaichi: The following test failed, say

Full PR test history. Your PR dashboard. DetailsInstructions for interacting with me using PR comments are available here. If you have questions or suggestions related to my behavior, please file an issue against the kubernetes/test-infra repository. I understand the commands that are listed here. |

|

I think @jonjohnsonjr is going to reopen this as another PR. Going to close this to clear from gubernator. |

Currently PodAutoscaler's Ready condition is the same as Active condition. It is not only mismatched with Revision's Ready condition, where the Active condition is only an informative condition that does not define the Ready condition, but also not capturing the PodAutoscaler's status very well. For example, when everything is good but there's no traffic, the PodAutoscaler should be Ready, but Inactive.

Fixes #3456

Proposed Changes

Release Note